You are currently browsing the category archive for the ‘Asset Lifecycle Management’ category.

For those of you following my blog over the years, there is, every time after the PLM Roadmap PDT Europe conference, one or two blog posts, where the first starts with “The weekend after ….”

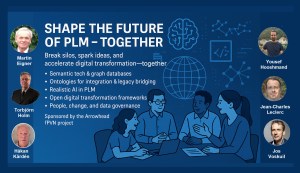

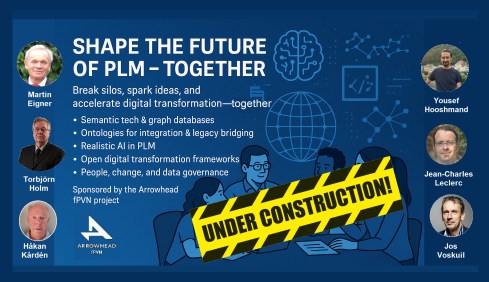

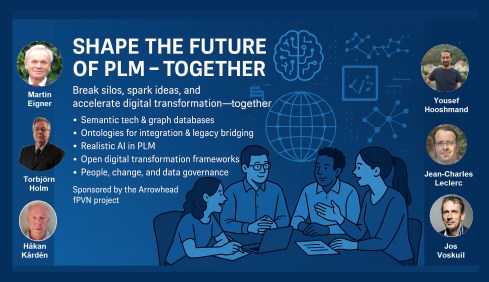

This time, November has been a hectic week for me, with first this engaging workshop “Shape the future of PLM – together” – you can read about it in my blog post or the latest post from Arrowhead fPVN, the sponsor of the workshop.

This time, November has been a hectic week for me, with first this engaging workshop “Shape the future of PLM – together” – you can read about it in my blog post or the latest post from Arrowhead fPVN, the sponsor of the workshop.

Last week, I celebrated with the core team from the PLM Green Global Alliance our 5th anniversary, during which we discussed sustainability in action. The term sustainability is currently under the radar, but if you want to learn what is happening, read this post with a link to the webinar recording.

Last week, I celebrated with the core team from the PLM Green Global Alliance our 5th anniversary, during which we discussed sustainability in action. The term sustainability is currently under the radar, but if you want to learn what is happening, read this post with a link to the webinar recording.

Last week, I was also active at the PTC/User Benelux conference, where I had many interesting discussions about PTC’s strategy and portfolio. A big and well-organized event in the town where I grew up in the world of teaching and data management.

Last week, I was also active at the PTC/User Benelux conference, where I had many interesting discussions about PTC’s strategy and portfolio. A big and well-organized event in the town where I grew up in the world of teaching and data management.

And now it is time for the PLM roadmap / PDT conference review

The conference

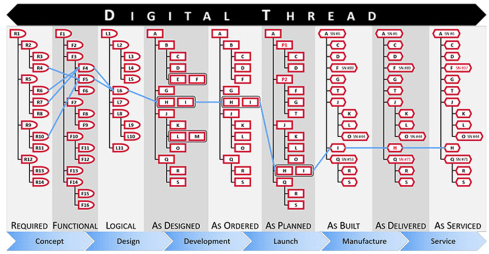

The conference is my favorite technical conference 😉 for learning what is happening in the field. Over the years, we have seen reports from the Aerospace & Defense PLM Action Groups, which systematically work on various themes related to a digital enterprise. The usage of standards, MBSE, Supplier Collaboration, Digital Thread & Digital Twin are all topics discussed.

The conference is my favorite technical conference 😉 for learning what is happening in the field. Over the years, we have seen reports from the Aerospace & Defense PLM Action Groups, which systematically work on various themes related to a digital enterprise. The usage of standards, MBSE, Supplier Collaboration, Digital Thread & Digital Twin are all topics discussed.

This time, the conference was sold out with 150+ attendees, just fitting in the conference space, and the two-day program started with a challenging day 1 of advanced topics, and on day 2 we saw more company experiences.

Combined with the traditional dinner in the middle, it was again a great networking event to charge the brain. We still need the brain besides AI. Some of the highlights of day 1 in this post.

Combined with the traditional dinner in the middle, it was again a great networking event to charge the brain. We still need the brain besides AI. Some of the highlights of day 1 in this post.

PLM’s Integral Role in Digital Transformation

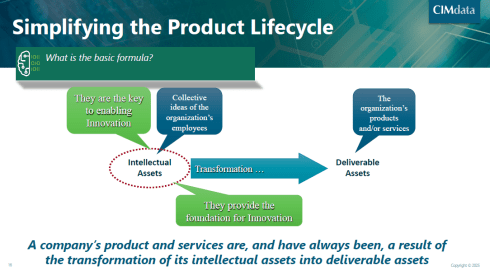

As usual, Peter Bilello, CIMdata’s President & CEO, kicked off the conference, and his message has not changed over the years. PLM should be understood as a strategic, enterprise-wide approach that manages intellectual assets and connects the entire product lifecycle.

As usual, Peter Bilello, CIMdata’s President & CEO, kicked off the conference, and his message has not changed over the years. PLM should be understood as a strategic, enterprise-wide approach that manages intellectual assets and connects the entire product lifecycle.

I like the image below explaining the WHY behind product lifecycle management.

It enables end-to-end digitalization, supports digital threads and twins, and provides the backbone for data governance, analytics, AI, and skills transformation.

Peter walked us briefly through CIMdata’s Critical Dozen (a YouTube recording is available here), all of which are relevant to the scope of digital transformation. Without strong PLM foundations and governance, digital transformation efforts will fail.

The Digital Thread as the Foundation of the Omniverse

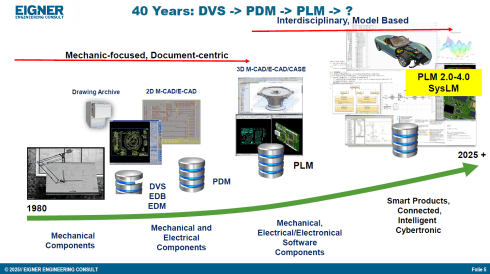

Prof. Dr.-Ing. Martin Eigner, well known for his lifetime passion and vision in product lifecycle management (PDM and PLM tools & methodology), shared insights from his 40-year journey, highlighting the growing complexity and ever-increasing fragmentation of customer solution landscapes.

Prof. Dr.-Ing. Martin Eigner, well known for his lifetime passion and vision in product lifecycle management (PDM and PLM tools & methodology), shared insights from his 40-year journey, highlighting the growing complexity and ever-increasing fragmentation of customer solution landscapes.

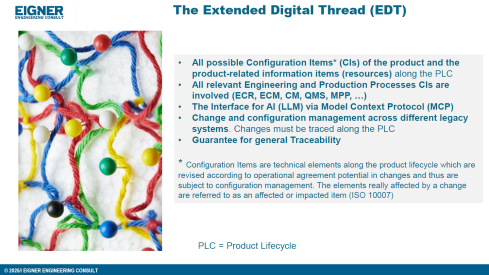

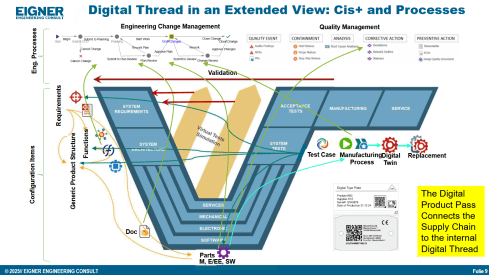

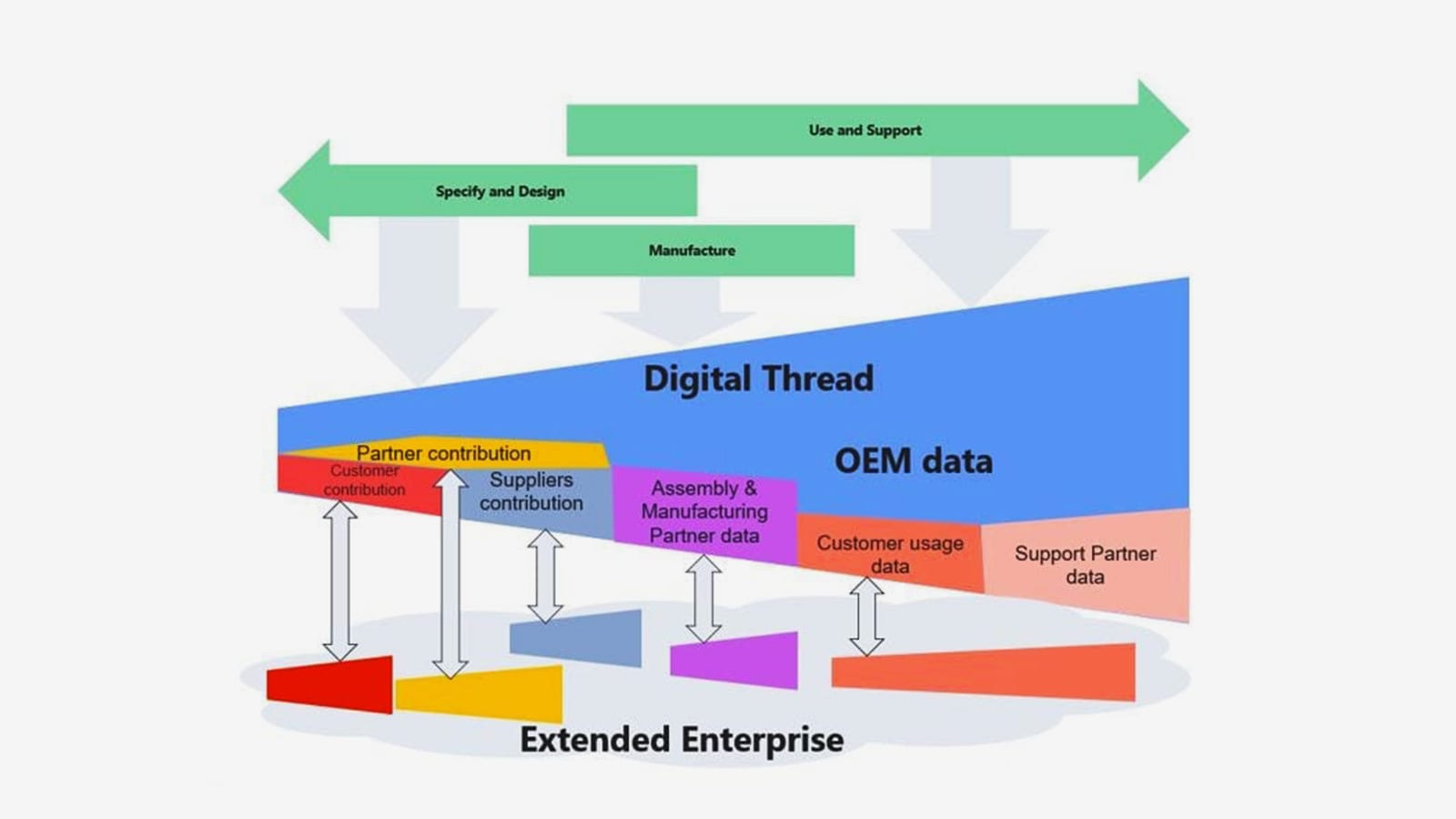

In his current eco-system, ERP (read SAP) is playing a significant role as an execution platform, complemented by PDM or ECTR capabilities. Few of his customers go for the broad PLM systems, and therefore, he stresses the importance of the so-called Extended Digital Thread.

Prof Eigner describes the EDT more precisely as an overlaying infrastructure implemented by a graph database that serves as a performant knowledge graph of the enterprise.

The EDT serves as the foundation for AI-driven applications, supporting impact analysis, change management, and natural-language interaction with product data. The presentation also provides a detailed view of Digital Twin concepts, ranging from component to system and process twins, and demonstrates how twins enhance predictive maintenance, sustainability, and process optimization.

Combined with the NVIDIA Omniverse as the next step toward immersive, real-time collaboration and simulation, enabling virtual factories and physics-accurate visualization. The outlook emphasizes that combining EDT, Digital Twin, AI, and Omniverse moves the industry closer to the original PLM vision: a unified, consistent Single Source of Truth 😮that boosts innovation, efficiency, and ROI.

![]() For me, hearing and reading the term Single Source of Truth still creates discomfort with reality and humanity, so we still have something to discuss.

For me, hearing and reading the term Single Source of Truth still creates discomfort with reality and humanity, so we still have something to discuss.

Semantic Digital Thread for Enhanced Systems Engineering in a Federated PLM Landscape

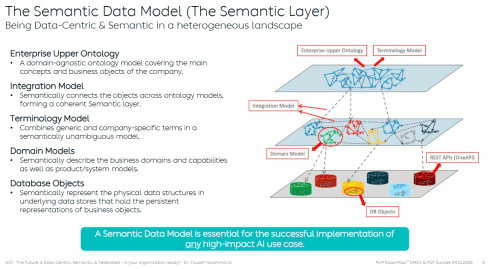

Dr. Yousef Hooshmand‘s presentation was a great continuation of the Extended Digital Thread theme discussed by Dr. Martin Eigner. Where the core of Martin’s EDT is based on traceability between artifacts and processes throughout the lifecycle, Yousef introduced a (for me) totally new concept: starting with managing and structuring the data to manage the knowledge, rather than starting from the models and tools to understand the knowledge.

Dr. Yousef Hooshmand‘s presentation was a great continuation of the Extended Digital Thread theme discussed by Dr. Martin Eigner. Where the core of Martin’s EDT is based on traceability between artifacts and processes throughout the lifecycle, Yousef introduced a (for me) totally new concept: starting with managing and structuring the data to manage the knowledge, rather than starting from the models and tools to understand the knowledge.

It is a fundamentally different approach to addressing the same problem of complexity. During our pre-conference workshop “Shape the future of PLM – together,” I already got a bit familiar with this approach, and Yousef’s recently released paper provides all the details.

All the relevant information can be found in his recent LinkedIn post here.

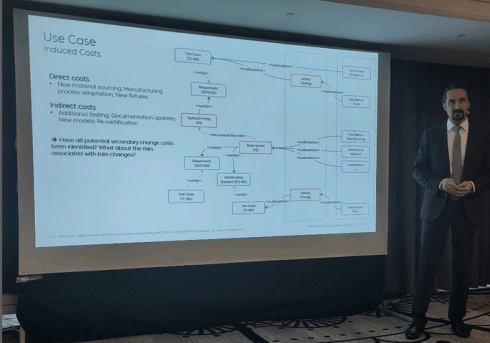

In his presentation during the conference, Yousef illustrated the value and applicability of the Semantic Digital Thread approach by presenting it in an automotive use case: Impact Analysis and Cost Estimation (image above)

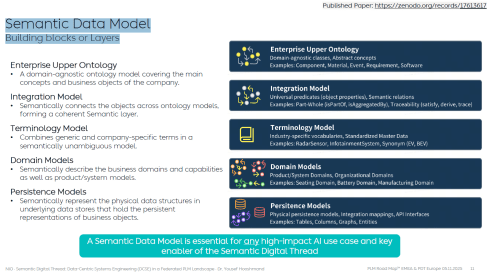

To understand the Semantic Digital Thread, it is essential to understand the Semantic Data Model and its building blocks or layers, as illustrated in the image below:

In addition, such an infrastructure is ideal for AI applications and avoids vendor- or tool lock-in, providing a significant long-term advantage.

I am sure it will take time for us to digest the content if you are entering the domain of a data-driven enterprise (the connected approach) instead of a document-driven enterprise (the coordinated approach).

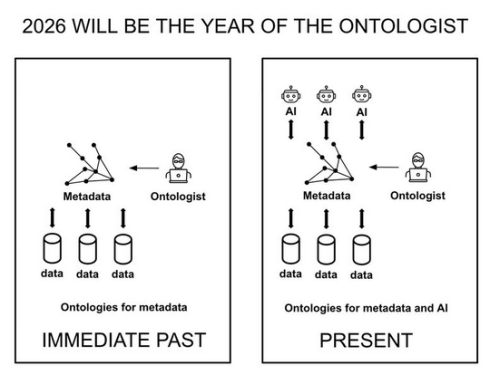

However, as many of the other presentations on day 1 also stated: “data without context is worthless – then they become just bits and bytes.” For advanced and future scenarios, you cannot avoid working with ontologies, semantic models and graph databases.

However, as many of the other presentations on day 1 also stated: “data without context is worthless – then they become just bits and bytes.” For advanced and future scenarios, you cannot avoid working with ontologies, semantic models and graph databases.

Where is your company on the path to becoming more data-driven?

Note: I just saw this post and the image above, which emphasizes the importance of the relationship between ontologies and the application of AI agents.

Evaluation of SysML v2 for use in Collaborative MBSE between OEMs and Suppliers

It was interesting to hear Chris Watkins’ speech, which presented the findings from the AD PLM Action Group MBSE Collaboration Working Group on digital collaboration based on SysML v2.

It was interesting to hear Chris Watkins’ speech, which presented the findings from the AD PLM Action Group MBSE Collaboration Working Group on digital collaboration based on SysML v2.

The topic they research is that currently there are no common methods and standards for exchanging digital model-based requirements and architecture deliverables for the design, procurement, and acceptance of aerospace systems equipment across the industry.

The action group explored the value of SysML v2 for data-driven collaboration between OEMs and suppliers, particularly in the early concept phases.

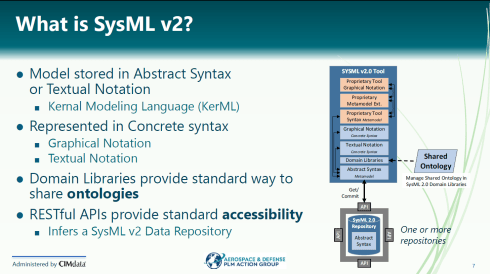

Chris started with a brief explanation of what SysXML v2 is – image below:

As the image illustrates, SysML v2-ready tools allow people to work in their proprietary interfaces while sharing results in common, defined structures and ontologies.

When analyzing various collaboration scenarios, one of the main challenges remained managing changes, the required ontologies, and working in a shared IT environment.

👉You can read the full report here: AD PAG reports: Model-Based Systems Engineering.

An interesting point of discussion here is that, in the report, participants note that, despite calling out significant gaps and concerns, a substantial majority of the industry indicated that their MBSE solution provider is a good partner. At the same time, only a small minority expressed a negative view.

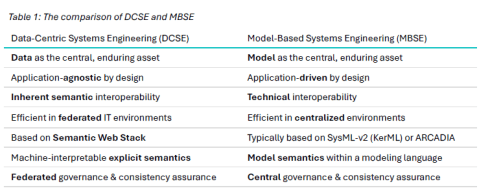

Would Data-Centric Systems Engineering change the discussion? See table 1 below from Yousef’s paper:

An illustration that there was enough food for discussion during the conference.

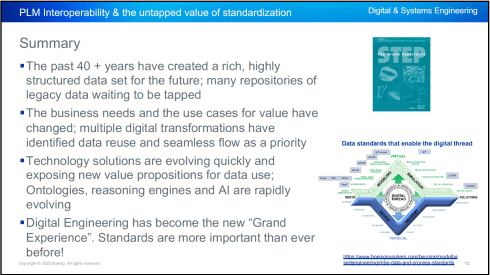

PLM Interoperability and the Untapped Value of 40 Years in Standardization

In the context of collaboration, two sessions fit together perfectly.

First, Kenny Swope from Boeing. Kenny is a longtime Boeing engineering leader and global industrial-data standards expert who oversees enterprise interoperability efforts, chairs ISO/TC 184/SC 4, and mentors youth in technology through 4-H and FIRST programs.

First, Kenny Swope from Boeing. Kenny is a longtime Boeing engineering leader and global industrial-data standards expert who oversees enterprise interoperability efforts, chairs ISO/TC 184/SC 4, and mentors youth in technology through 4-H and FIRST programs.

Kenny shared that over the past 40+ years, the understanding and value of this approach have become increasingly apparent, especially as organizations move toward a digital enterprise. In a digital enterprise, these standards are needed for efficient interoperability between various stakeholders. And the next session was an example of this.

Unlocking Enterprise Knowledge

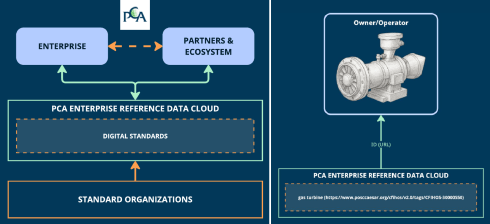

Fredrik Anthonisen, the CTO of the POSC Caesar Association (PCA), started his story about the potential value of efficient standard use.

Fredrik Anthonisen, the CTO of the POSC Caesar Association (PCA), started his story about the potential value of efficient standard use.

According to a Siemens report, “The true costs of downtime” a $1,4 trillion is lost to unplanned downtime.

The root cause is that, most of the time, the information needed to support the MRO activity is inaccessible or incomplete.

Making data available using standards can provide part of the answer, but static documents and slow consensus processes can’t keep up with the pace of change.

Therefore, PCA established the PCA enterprise reference data cloud, where all stakeholders in enterprise collaboration can relate their data to digital exposed standards, as the left side of the image shows.

Fredrik shared a use case (on the right side of the image) as an example. Also, he mentioned that the process for defining and making the digital reference data available to participants is ongoing. The reference data needs to become the trusted resource for the participants to monetize the benefits.

Summary

Day 1 had many more interesting and advanced concepts related to standards and the potential usage of AI.

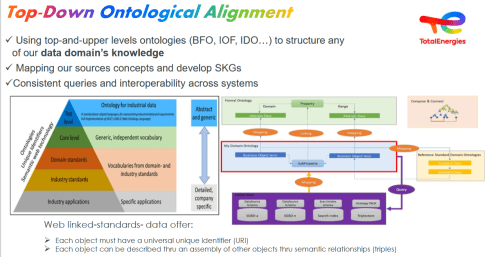

Jean-Charles Leclerc, Head of Innovation & Standards at TotalEnergies, in his session, “Bringing Meaning Back To Data,” elaborated on the need to provide data in the context of the domain for which it is intended, rather than “indexed” LLM data.

Jean-Charles Leclerc, Head of Innovation & Standards at TotalEnergies, in his session, “Bringing Meaning Back To Data,” elaborated on the need to provide data in the context of the domain for which it is intended, rather than “indexed” LLM data.

Very much aligned with Yousef’s statement that there is a need to apply semantic technologies, and especially ontologies, to turn the data into knowledge.

More details can also be found in the “Shape the future of PLM – together” post, where Jean-Charles was one of the leading voices.

The panel discussion at the end of day 1 was free of people jumping on the hype. Yes, benefits are envisioned across the product lifecycle management domain, but to be valuable, the foundation needs to be more structured than it has been in the past.

The panel discussion at the end of day 1 was free of people jumping on the hype. Yes, benefits are envisioned across the product lifecycle management domain, but to be valuable, the foundation needs to be more structured than it has been in the past.

“Reliable AI comes from a foundation that supports knowledge in its domain context.”

Conclusion

For the casual user, day 1 was tough – digital transformation in the product lifecycle domain requires skills that might not yet exist in smaller organizations. Understanding the need for ontologies (generic/domain-specific) and semantic models is essential to benefit from what AI can bring – a challenging and enjoyable journey to follow!

Together with Håkan Kårdén, we had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please, click on the image for more details.

Together with Håkan Kårdén, we had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please, click on the image for more details.

The meeting had two primary purposes.

- Firstly, we aimed to create an environment where these concepts could be discussed and presented to a broader audience, comprising academics, industrial professionals, and software developers. The group’s feedback could serve as a benchmark for them.

- The second goal was to bring people together and create a networking opportunity, either during the PLM Roadmap/PDT Europe conference, the day after, or through meetings established after this workshop.

Personally, it was a great pleasure to meet some people in person whose LinkedIn articles I had admired and read.

The meeting was sponsored by the Arrowhead fPVN project, a project I discussed in a previous blog post related to the PLM Roadmap/PDT Europe 2024 conference last year. Together with the speakers, we have begun working on a more in-depth paper that describes the similarities and the lessons learned that are relevant. This activity will take some time.

Therefore, this post only includes the abstracts from the speakers and links to their presentations. It concludes with a few observations from some attendees.

Reasoning Machines: Semantic Integration in Cyber-Physical Environments

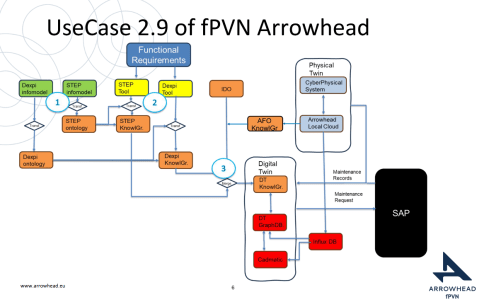

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network

The presentation outlines the phases of the product and production lifecycle, including requirements, specification, design, build-up, handover, and operations. It raises a question about unifying these phases and their associated technologies and standards, emphasizing that the most extended phase, which involves operation, maintenance, failure, and evolution until retirement, should be the primary focus.

It also discusses seamless integration, outlining a partial list of standards and technologies categorized into three sections: “Modelling & Representation Standards,” “Communication & Integration Protocols,” and “Architectural & Security Standards.” Each section contains a table listing various technology standards, their purposes, and references. Additionally, the presentation includes a “Conceptual Layer Mapping” table that details the different layers (Knowledge, Service, Communication, Security, and Data), along with examples, functions, and references.

The presentation outlines an approach for utilizing semantic technologies to ensure interoperability across heterogeneous datasets throughout a product’s lifecycle. Key strategies include using OWL 2 DL for semantic consistency, aligning domain-specific knowledge graphs with ISO 23726-3, applying W3C Alignment techniques, and leveraging Arrowhead’s microservice-based architecture and Framework Ontology for scalable and interoperable system integration.

The utilized software architecture system, including three main sections: “Functional Requirements,” “Physical Twin,” and “Digital Twin,” each containing various interconnected components, will be presented. The Architecture includes today several Knowledge Graphs (KG): A DEXPI KG, A STEP (ISO 10303) KG, An Arrowhead Framework KG and under work the CFIHOS Semantics Ontology, all aligned.

👉The presentation: W3C Major standard interoperability_Paris

Beyond Handover: Building Lifecycle-Ready Semantic Interoperability

Jean-Charles Leclerc argued that Industrial data standards must evolve beyond the narrow scope of handover and static interoperability. To truly support digital transformation, they must embrace lifecycle semantics or, at the very least, be designed for future extensibility.

This shift enables technical objects and models to be reused, orchestrated, and enriched across internal and external processes, unlocking value for all stakeholders and managing the temporal evolution of properties throughout the lifecycle. A key enabler is the “pattern of change”, a dynamic framework that connects data, knowledge, and processes over time. It allows semantic models to reflect how things evolve, not just how they are delivered.

By grounding semantic knowledge graphs (SKGs) in such rigorous logic and aligning them with W3C standards, we ensure they are both robust and adaptable. This approach supports sustainable knowledge management across domains and disciplines, bridging engineering, operations, and applications.

Ultimately, it’s not just about technology; it’s about governance.

Being Sustainab’OWL (Web Ontology Language) by Design! means building semantic ecosystems that are reliable, scalable, and lifecycle-ready by nature.

Additional Insight: From Static Models to Living Knowledge

To transition from static information to living knowledge, organizations must reassess how they model and manage technical data. Lifecycle-ready interoperability means enabling continuous alignment between evolving assets, processes, and systems. This requires not only semantic precision but also a governance framework that supports change, traceability, and reuse, turning standards into operational levers rather than compliance checkboxes.

👉The presentation: Beyond Handover – Building Lifecycle Ready Semantic Interoperability

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

How can we achieve our vision of PLM – the Single Source of Truth?

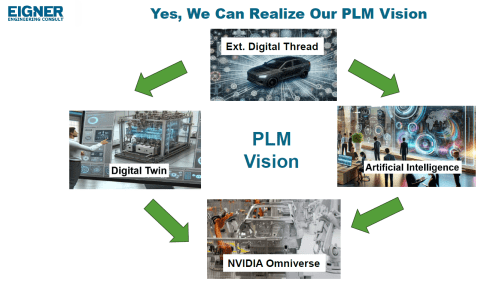

Martin Eigner stated that Product Lifecycle Management (PLM) has long promised to serve as the Single Source of Truth for organizations striving to manage product data, processes, and knowledge across their entire value chain. Yet, realizing this vision remains a complex challenge.

Achieving a unified PLM environment requires more than just implementing advanced software systems—it demands cultural alignment, organizational commitment, and seamless integration of diverse technologies. Central to this vision is data consistency: ensuring that stakeholders across engineering, manufacturing, supply chain, and service have access to accurate, up-to-date, and contextualized information along the Product Lifecycle. This involves breaking down silos, harmonizing data models, and establishing governance frameworks that enforce standards without limiting flexibility.

Emerging technologies and methodologies, such as Extended Digital Thread, Digital Twins, cloud-based platforms, and Artificial Intelligence, offer new opportunities to enhance collaboration and integrated data management.

However, their success depends on strong change management and a shared understanding of PLM as a strategic enabler rather than a purely technical solution. By fostering cross-functional collaboration, investing in interoperability, and adopting scalable architectures, organizations can move closer to a trustworthy single source of truth. Ultimately, realizing the vision of PLM requires striking a balance between innovation and discipline—ensuring trust in data while empowering agility in product development and lifecycle management.

👉The presentation: Martin – Workshop PLM Future 04_10_25

The Future is Data-Centric, Semantic, and Federated … Is your organization ready?

Yousef Hooshmand, who is currently working at NIO as PLM & R&D Toolchain Lead Architect, discussed the must-have relations between a data-centric approach, semantic models and a federated environment as the image below illustrates:

Why This Matters for the Future?

- Engineering is under unprecedented pressure: products are becoming increasingly complex, customers are demanding personalization, and development cycles must be accelerated to meet these demands. Traditional, siloed methods can no longer keep up.

- The way forward is a data-centric, semantic, and federated approach that transforms overwhelming complexity into actionable insights, reduces weeks of impact analysis to minutes, and connects fragmented silos to create a resilient ecosystem.

- This is not just an evolution, but a fundamental shift that will define the future of systems engineering. Is your organization ready to embrace it?

👉The presentation: The Future is Data-Centric, Semantic, and Federated.

Some of first impressions

👉 Bhanu Prakash Ila from Tata Consultancy Services– you can find his original comment in this LinkedIn post

Key points:

- Traditional PLM architectures struggle with the fundamental challenge of managing increasingly complex relationships between product data, process information, and enterprise systems.

- Ontology-Based Semantic Models – The Way Forward for PLM Digital Thread Integration: Ontology-based semantic models address this by providing explicit, machine-interpretable representations of domain knowledge that capture both concepts and their relationships. These lay the foundations for AI-related capabilities.

It’s clear that as AI, semantic technologies, and data intelligence mature, the way we think and talk about PLM must evolve too – from system-centric to value-driven, from managing data to enabling knowledge and decisions.

A quick & temporary conclusion

Typically, I conclude my blog posts with a summary. However, this time the conclusion is not there yet. There is work to be done to align concepts and understand for which industry they are most applicable. Using standards or avoiding standards as they move too slowly for the business is a point of ongoing discussion. The takeaway for everyone in the workshop was that data without context has no value. Ontologies, semantic models and domain-specific methodologies are mandatory for modern data-driven enterprises. You cannot avoid this learning path by just installing a graph database.

Over the last month, I have been actively engaged in the field; however, unfortunately, I have not been able to respond to all the interesting and sometimes humorous posts in my LinkedIn stream.

Over the last month, I have been actively engaged in the field; however, unfortunately, I have not been able to respond to all the interesting and sometimes humorous posts in my LinkedIn stream.

The fun started with a post from Oleg referring to a so-called BOM battle presented at Autodesk University by Gus Quade.

The image seems fake; however, the muscle power behind the BOM players looks real.

Prof. Dr. Jörg Fischer, also pictured, is advocating for rethinking PLM and BOM structures, and I share his discomfort.

Prof. Fischer wrote recently: “Forget everything you know about EBOM and MBOM. CTO+ is rewriting the rules of PLM. “

I am not a CTO expert, but I can grasp the underlying concepts and understand why it is closely associated with SAP. It aligns with the ultimate goal of maintaining a continuous flow of information throughout the company, with ERP (SAP?) at its core.

My question is, how far are we from that option?

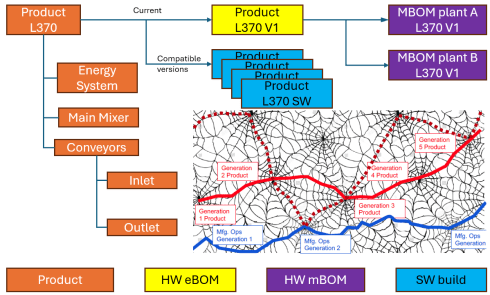

Current PLM implementations often focus on a linear process and data collection from left to right, as illustrated in the old Aras image below. I call this the coordinated approach.

During the recent Dutch PLM platform meeting, we also discussed the potential need for an eBOM, mBOM, and potentially the sBOM. A topic many mid-sized manufacturing companies have not mastered or implemented yet – illustrating the friction in current businesses.

During the recent Dutch PLM platform meeting, we also discussed the potential need for an eBOM, mBOM, and potentially the sBOM. A topic many mid-sized manufacturing companies have not mastered or implemented yet – illustrating the friction in current businesses.

Meanwhile, we discuss agentic AI, the need for data quality, ontologies and graph databases. Take a look at the upcoming workshop on the Future of PLM, scheduled for November 4th in Paris, which serves as a precursor to the PLM Roadmap/PDT Europe 2025 conference on November 5th and 6th.

The reality in the field and future capabilities seem to be so far apart, which made me think about what the next step is after BOM management to move towards the future.

The evolution of the BOM

For those active in PLM, this brief theory ensures we share a common understanding of BOMs.

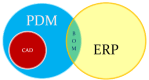

Level 0: In the beginning, there was THE BOM.

Initially, the Bill of Materials (BOM) existed only in ERP systems to support manufacturing. Together with the Bill of Process (BOP), it formed the heart of production execution. Without a BOM in ERP, product delivery would fail.

Initially, the Bill of Materials (BOM) existed only in ERP systems to support manufacturing. Together with the Bill of Process (BOP), it formed the heart of production execution. Without a BOM in ERP, product delivery would fail.

Level 1: Then came a new BOM from CAD.

With the rise of PDM systems and 3D CAD, another BOM emerged — reflecting the product’s design structure, including assemblies and parts. Often referred to as the CAD or engineering BOM, it frequently contained manufacturing details, such as supplier parts or consumables like paint and glue.

With the rise of PDM systems and 3D CAD, another BOM emerged — reflecting the product’s design structure, including assemblies and parts. Often referred to as the CAD or engineering BOM, it frequently contained manufacturing details, such as supplier parts or consumables like paint and glue.

This hybrid BOM bridged engineering and manufacturing, linking CAD/PDM with ERP. Many machine manufacturers adopted this model, as each project was customer-specific and often involved reusing data by copying similar projects.

![]() Many industrial manufacturers still use this linear approach to deliver solutions to their customers.

Many industrial manufacturers still use this linear approach to deliver solutions to their customers.

Level 2: The real eBOM and mBOM arrived.

Later, companies began distinguishing between the engineering BOM (eBOM) and manufacturing BOM (mBOM), especially as engineering became centralized and manufacturing decentralized.

The eBOM represented the stable engineering definition, while the mBOM was derived locally, adapting parts to specific suppliers or production needs.

At the same time, many organizations aimed to evolve toward a Configure-to-Order (CTO) business model — a long-term aspiration in aligning engineering and manufacturing flexibility, as noted by Prof. Jörg Fischer in his CTO+ concept.

A side step: The impact of modularity

Shifting from Engineer-to-Order (ETO) to Configure-to-Order (CTO) relies on adopting a modular product architecture. Modularity enables specific modules to remain stable while others evolve in response to ongoing innovation.

Shifting from Engineer-to-Order (ETO) to Configure-to-Order (CTO) relies on adopting a modular product architecture. Modularity enables specific modules to remain stable while others evolve in response to ongoing innovation.

It’s not just about creating a 200% eBOM or 150% mBOM but about defining modules with their own lifecycles that may span multiple product platforms. Many companies still struggle to apply these principles, as seen in discussions within the North European Modularity (NEM) network.

See one of my reports: The week after the North European Modularity network meeting.

We remain here primarily in the xBOM mindset: the eBOM defines engineering specifications, while the mBOM defines the physical realization—specific to suppliers or production sites.

Level 3: Extending to the sBOM?

To support service operations, the service BOM (sBOM) is introduced, managing serviceable parts and kits linked to the product. Managing service information in a connected manner adds complexity but also significant value, as the best margins often come from after-sales service.

To support service operations, the service BOM (sBOM) is introduced, managing serviceable parts and kits linked to the product. Managing service information in a connected manner adds complexity but also significant value, as the best margins often come from after-sales service.

Click on the image above to understand the relations between the eBOM, mBOM(s) and sBOM.

However, is the sBOM the real solution or only a theme pushed by BOM/PLM vendors to keep everything within their system? So far, this represents a linear hardware delivery model, with BOM structures tied to local ERP systems.

However, is the sBOM the real solution or only a theme pushed by BOM/PLM vendors to keep everything within their system? So far, this represents a linear hardware delivery model, with BOM structures tied to local ERP systems.

For most hardware manufacturers, the story ends here—but when software and product updates become part of the service, the lifecycle story continues.

The next levels: Software and Product Services require more than a BOM

As I mentioned earlier, during the Dutch PLM platform discussion, we had an interesting debate that began with the question of how to manage and service a product during operation. Here, we reach a new level of PLM – not only delivering products as efficiently as possible, but also maintaining them in the field – often for many years.

As I mentioned earlier, during the Dutch PLM platform discussion, we had an interesting debate that began with the question of how to manage and service a product during operation. Here, we reach a new level of PLM – not only delivering products as efficiently as possible, but also maintaining them in the field – often for many years.

There were two themes we discussed:

- The product gets physical updates and upgrades – how can we manage this with the sBOM – challenges with BOM versions or revisions ( a legacy approach)

- The product functions based on software-driven behavior, and the software can be updated on demand – how can we manage this with the sBOM (a different lifecycle)

The conclusion and answer to these two questions were:

We cannot use the sBOM anymore for this; in both cases, you need an additional (infra)structure to keep track of changes over time, I call it the logical product structure or product architecture.

The Logical Product Structure

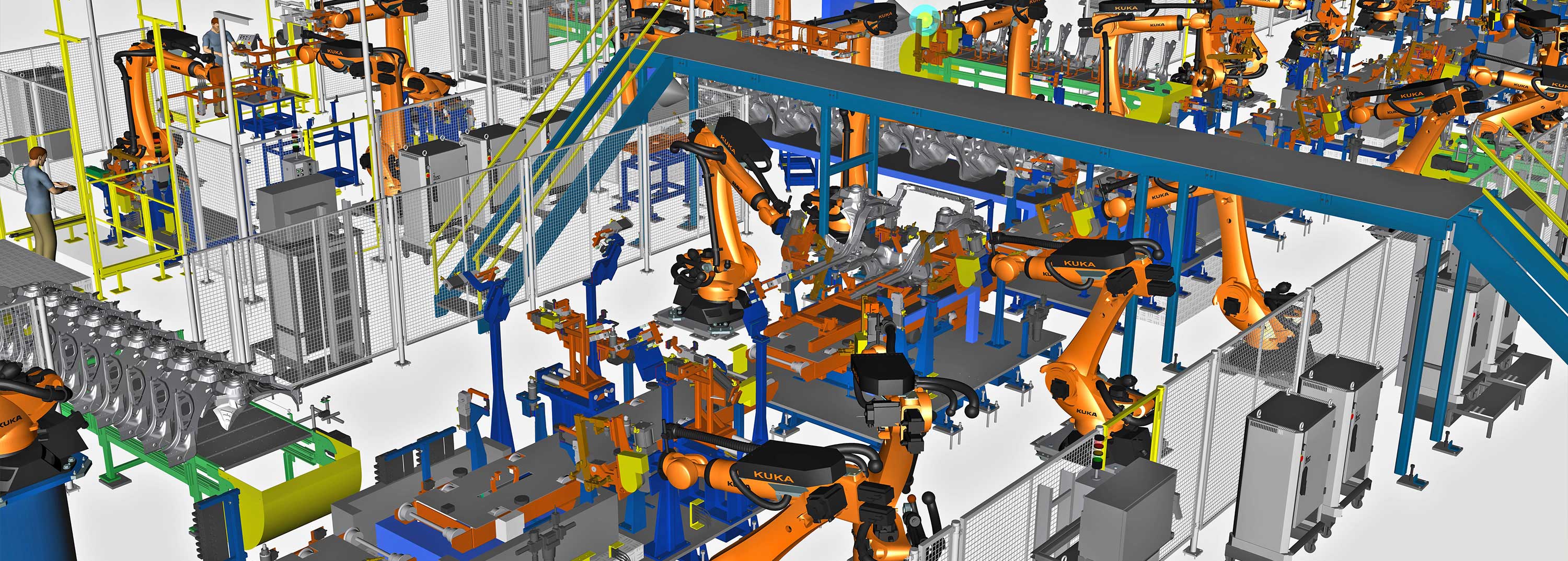

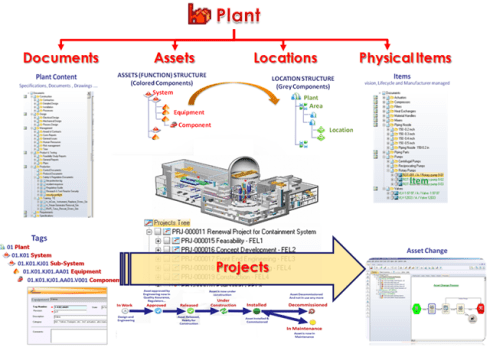

Since 2008, I have been involved in Asset Lifecycle Management projects, explaining the complementary value of PLM methodology and concepts related to an MRO environment, particularly for managing significant assets, such as those in the nuclear plants industry.

Since 2008, I have been involved in Asset Lifecycle Management projects, explaining the complementary value of PLM methodology and concepts related to an MRO environment, particularly for managing significant assets, such as those in the nuclear plants industry.

Historically, the configuration management of a plant was a human effort undertaken by individuals with extensive intrinsic knowledge.

A nuclear plant is an asset with a very long lifecycle that requires regular upgrades and services, and where safety is the top priority. However, thanks to digitization and an aging workforce, there was also a need to embed these practices within a digital infrastructure.

What I learned is that the logical product structure, also known as the plant breakdown structure (PBS), became an essential structure for combining the as-designed and as-operated structures of the plant.

In the SmarTeam image below, the plant breakdown structure was represented by the tag structure.

Coming back to our industrial products in service, it is conceptually a similar approach, albeit that the safety drivers and business margins might make it less urgent. For a product, there can also be a logical product structure that represents the logical components and their connections.

The logical structure of a product remains stable over time; however, specific modules or capabilities may be required, while the physical implementation (mBOM) and engineering definition (eBOM) may evolve over time.

Additionally, all relevant service activities, including issues and operational and maintenance data, can be linked to the logical structure. The logical structure is also the structure used for a digital twin representation.

The logical product structure and software

The logical product structure is also where hardware and software meet. The software can be managed in an ALM environment and provides traceability to the product in service through the product structure.

Note: this is a very simplified version, as you can imagine, it looks more like a web of connected datasets – the top level shows the traceability between the various artifacts – HW and SW

Where is the product structure defined?

The product structure originates from a system architect, and it depends on the tools they are using, where it is defined – historically in a document, later in an Excel file – the coordinated approach.

In a modern data-driven environment, you can find the product structure in an MBSE environment and then connect to a PLM system – the federated and connected approach.

There are also PLM vendors that have the main MBSE data elements in their core data model, reducing the need for building connectivity between the main PLM and MBSE elements. In my experience, the “all-in-one” solutions still underperform in usability and completeness.

Conclusion

I wrote this post to raise awareness that a narrow focus on BOM structures can create a potential risk for the future. Changing business models, for example, the product-service system, require a data-driven infrastructure where both hardware and software artifacts need to be managed in context. Probably not in a single system but supported by a federated infrastructure with a mix of technologies. And I feel sorry that I could not write about a model-based enterprise at this time!

I am looking forward to discussing the future of PLM with a select group of thought leaders on November 4th in Paris, as a precursor to the upcoming PLM Roadmap/PDT Europe conference. For the workshop on November 4th, we almost reached our maximum size we can accommodate, but for the conference, there is still the option to join us.

Please review the agenda and join us for engaging and educational discussions if you can.

And if you are not tired of discussing PLM as a term, a system or a strategy – watch the recording of this unique collection of PLM voices moderated by Michael Finochario.

This week there was an interesting discussion on LinkedIn initiated by Alex Bruskin from Senticore Technologies. I have known Alex for over 20 years, starting from the SmarTeam days and later through encounters in the PLM space. Alex is a real techie on the outside but also a person with a very creative mind to connect technology to business.

This week there was an interesting discussion on LinkedIn initiated by Alex Bruskin from Senticore Technologies. I have known Alex for over 20 years, starting from the SmarTeam days and later through encounters in the PLM space. Alex is a real techie on the outside but also a person with a very creative mind to connect technology to business.

You can see his LinkedIn featured posts here to get an impression.

Where is PLM @ Startups?

This time Alex shared an observation from an event organized by the Pittsburgh Robotics Network, where he spoke with several startups.

This time Alex shared an observation from an event organized by the Pittsburgh Robotics Network, where he spoke with several startups.

His point, and I quote Alex:

Then, I spoke to a number of presenters there, explaining Senticore capabilities and listening to their situation around engineering/ manufacturing.

– many startups offered an add-on to other platforms => an autonomous module for UAV/helicopter/Vehicle. Some offered robotic components or entire robots (robot-dog).

– all startups use #solidworks , and none use #catia or #nx

– none of them have a PLM system nor an MES. I am 90% certain none of them have ERP, either. They all are apparently using #excel for all these purposes.

– only a handful of them are considering getting a PLM system in the near future.

Read the full post here and the comments below to get a broader insight into the topic.

The PLM Doctor knows it all.

The point reminded me of an episode I did together with Helena Gutierrez from Share PLM last year. She asked the same question to the PLM Doctor.

Do you think PLM is only for big corporations or can startups also benefit from it?

You can see the conversation here:

Meanwhile, the PLM Doctor is unemployed due to the lack of incoming questions.

When looking at startups, I could see two paths. One is the traditional path based on historical mechanical PLM, and a second (potential) approach which is based on understanding the future complexity of the startup offering.

There are two paths – path #1

The first evolutionary path you might have seen a few times before in my blog post is the one depicted by Marc Halpern from Gartner in 2015. At that time, we started discussing Product Innovation Platforms and the new generation of PLM. You can see Marc’s slide below, which is still valid for most situations.

In the slide above, you see the startup company on the left side.

Often the main purpose of a startup company is to be visible on the market with their concept as fast as possible. Startups are often driven by a small group of multifunctional people developing a solution. In this approach, there is no place for people and reflection on processes as they are considered overhead.

Often the main purpose of a startup company is to be visible on the market with their concept as fast as possible. Startups are often driven by a small group of multifunctional people developing a solution. In this approach, there is no place for people and reflection on processes as they are considered overhead.

Only when you target your solution in a strongly regulated environment, e.g., medical devices and aerospace, you need to focus on the process too.

Therefore it is logical that most startup companies focus on the tools to develop their solution. A logical path, as what could you do without tools? Next, the choice of the tools will be, most of the time, driven by the team’s experience and available skills in the market.

Again statistics show it is not likely that advanced tools like NX or CATIA will be chosen for the design part. More likely mid-market products like SolidWorks or Autodesk products. And for data management and reporting, the logical tools are the office tools, Excel, Word and Visio.

Again statistics show it is not likely that advanced tools like NX or CATIA will be chosen for the design part. More likely mid-market products like SolidWorks or Autodesk products. And for data management and reporting, the logical tools are the office tools, Excel, Word and Visio.

And don’t forget PowerPoint to sell the solution.

The role of investors is often also here to question investments that are not clearly understood or relevant at that time.

How a startup scales up very much depends on the choices they make for Repeatable business. This is the moment that a company starts to create its legacy. Processes and best practices need to be established and why you often see is that seasoned people join the company. These people have proven their skills in the past, and most likely, they are willing to repeat this.

And here comes the risk – experienced people come with a much better holistic overview of the product lifecycle aspects. They know what critical steps are needed to move the company to an Integrated business. These experiences are crucial; however, they should not become the new single standard.

Implementing the past is not a guarantee for success in a digital and connected future.

Implementing their past experiences would focus too much on creating a System of Record (PLM 1.0), which is crucial for configuration management, change management and compliance. However, it would also create a productivity dip for those developing the product or solution.

This is the same dilemma that very small and medium enterprises face. They function reasonably well in a Repeatable business. How much should they invest in an Integrated or Collaborating business approach?

This is the same dilemma that very small and medium enterprises face. They function reasonably well in a Repeatable business. How much should they invest in an Integrated or Collaborating business approach?

Following the evolution path described by Marc Halpern always brings you to the point where technology changes from Coordinated to Connected. This is a challenging and immature topic, which I have discussed in my blog posts and during conferences.

See: The Challenges of a connected ecosystem for PLM or this full series of posts: The road to model-based and connected PLM.

There are two paths – path #2

Another path that startups could follow is a more forward-looking path, understanding that you need a coordinated and connected approach in the long term. For the fastest execution, you would like to work in a multidisciplinary mode in real time, exactly the characteristic of a startup.

However, in path #2, the startup should have a longer-term vision. Instead of choosing the obvious tools, they should focus on their company’s most important value streams. They have the opportunity to select integrated domains that are based on a connected, often model-based approach. Some examples of these integrated domains:

However, in path #2, the startup should have a longer-term vision. Instead of choosing the obvious tools, they should focus on their company’s most important value streams. They have the opportunity to select integrated domains that are based on a connected, often model-based approach. Some examples of these integrated domains:

- An MBSE environment focusing on real-time interaction related to product architecture and solution components(RFLP)

- A connected product design environment, where in real-time a virtual product can be created, analyzed, and optimized – connected software might be relevant here.

- A connected product realization environment where product engineering and suppliers work together in real time.

All three examples are typical Systems of Engagement. The big difference with individual tools is that they already focus on multidisciplinary collaboration on a data-driven, model-based approach.

In addition, having these systems in place allows the startup company to invest separately in a System of Record(s) environment when scaling up. This could be a traditional PLM system combined with a Configuration Management System or an Asset Management System.

System of Record choices, of course, depends on the industry needs and the usage of the product in the field. We should not consider one system that serves all; it is an infrastructure.

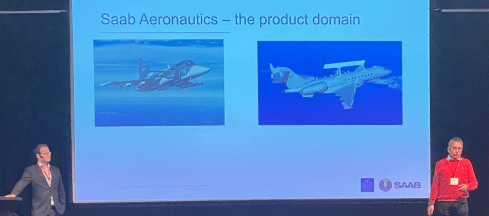

In the image below, you see the concept of this approach described by Erik Herzog from SAAB Aeronautics during the recent PLM Roadmap / PDT Europe conference. You can read more details of this approach in this post: The Week after PLM Roadmap PDT Europe.

![]() Note: SAAB is not a startup; therefore, they must deal with their legacy. They are now working on business sustainable concepts for the future: Heterogeneous and federated PLM.

Note: SAAB is not a startup; therefore, they must deal with their legacy. They are now working on business sustainable concepts for the future: Heterogeneous and federated PLM.

My opinion: The heterogeneous and federated approach is the ultimate target for any enterprise. I already mentioned the importance of connected environments regarding digital twins and sustainability. Material properties, process environmental impacts and product behavior coming from the field will all work only efficiently if dealt with in a connected and federated manner.

Conclusion

The challenge for startups is that they often start without the knowledge and experience that multidisciplinary collaboration within a value stream is crucial for a connected future. This a topic that I would like to explore further with startups and peers in my ecosystem. What do you think? What are your questions? Join the conversation.

I hope you all remained curious after last week’s report from day 1 of the PLM Roadmap / PDT Europe 2022 conference in Gothenburg. The networking dinner after day 1 and the Share PLM after-party allowed us to discuss and compare our businesses. Now the highlights of day 2

I hope you all remained curious after last week’s report from day 1 of the PLM Roadmap / PDT Europe 2022 conference in Gothenburg. The networking dinner after day 1 and the Share PLM after-party allowed us to discuss and compare our businesses. Now the highlights of day 2

The Power of Curiosity

We started with a keynote speech from Stefaan van Hooydonk, Founder of the Global Curiosity Institute. It was a well-received opener of the day and an interesting theme concerning PLM.

According to Stefaan, in the previous century, curiosity had a negative connotation. Curiosity killing the cat is one of these expressions confirming the mindset. It was all about conformity to the majority, the company, and curiosity was non-conformant.

According to Stefaan, in the previous century, curiosity had a negative connotation. Curiosity killing the cat is one of these expressions confirming the mindset. It was all about conformity to the majority, the company, and curiosity was non-conformant.

The same mindset I would say we have with traditional PLM; we all have to work the same way with the same processes.

In the 21st century, modern enterprises stimulate curiosity as we understand that throughout history, curiosity has been the engine of individual, organizational, and societal progress. And in particular, in modern, unpredictable times, curiosity becomes important, for the world, the others around us and ourselves.

As Stefaan describes in his book, the Curiosity Manifesto, organizations and individuals can develop curiosity. Stefaan pushed us to reflect on our personal curiosity behavior.

- Are we really interested in the person, the topic I do not know or do not like?

- Are we avoiding curious steps out of fear? Fear for failing, judgment?

After Stefaan’s curiosity storm, you could see that the audience was inspired to apply it to themselves and their PLM mission(s).

I hope the latter – as here there is a lot to discover.

Digital Transformation – Time to roll up your sleeves

In his presentation, Torbjörn Holm, co-founder of Eurostep, addressed one of the bigger elephants in the modern enterprise: how to deal with data?

Thanks to digitization, companies are gathering ad storing data, and there seem to be no limits. However, data centers compete for electricity from the grid with civilians.

Torbjörn also introduced the term “Dark data – the dirty secret of the ICT sector. We store too much data; some research mentions that only 12 % of the data stored is critical, and the rest clogs up on some file servers. Storing unstructured and unused data generates millions of greenhouse gasses yearly.

Torbjörn also introduced the term “Dark data – the dirty secret of the ICT sector. We store too much data; some research mentions that only 12 % of the data stored is critical, and the rest clogs up on some file servers. Storing unstructured and unused data generates millions of greenhouse gasses yearly.

It is time for a data cleanup day, and inspired by Torbjörn’s story, I have already started to clean up my cloud storage. However, I did not touch my backup hard disks as they do not use energy when switched off.

Further, Torbjörn elaborated that companies need to have end-to-end data policies. Which data is required? And in the case of contracted work or suppliers, data is crucial.

Ultimately companies that want to benefit from a virtual twin of their asset in operation need to have processes in place to acquire the correct data and maintain the valid data. Digital twins do not run on documents; as mentioned in some of my blog posts, they need accurate data.

Torbjörn once more reminded us that the PLCS objective is designed for that.

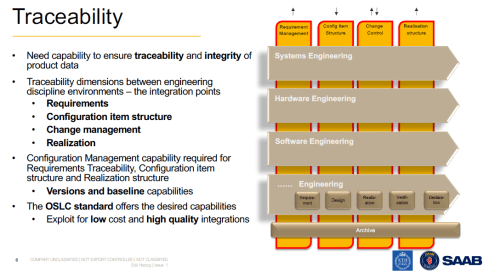

Heterogeneous and federated PLM – is it feasible?

One of the sessions that upfront had most of my attention was the presentation from Erik Herzog, Technical Fellow at Saab Aeronautics and Jad El-Khoury, Researcher at the KTH/Royal Institute of Technology.

Their presentation was closely related to the pre-conference workshop we had organized by Erik and Eurostep. More about this topic in the future.

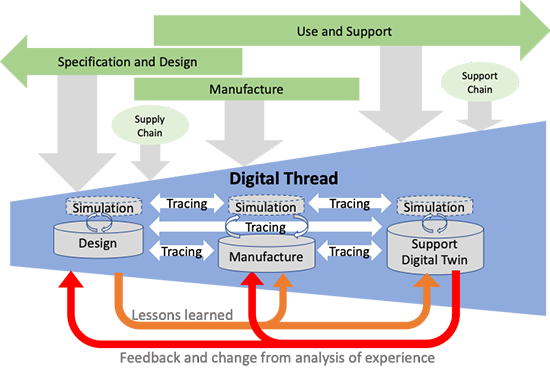

Saab, Eurostep and KTH conducted a research project named Helipe to analyze and test a federated PLM architecture. The concept was strongly driven by engineering. The idea is shown in the images below.

First are the four main modular engineering environments; in the image, we see mechanical, electrical, software and engineering environments. The target is to keep these environments as standard as possible towards the outside world so that later, an environment could be swapped for a better environment. Inside an environment, automation should provide optimal performance for the users.

In my terminology, these environments serve as systems of engagement.

The second dimension of this architecture is the traceability layer(s) – the requirements management layer, the configuration item structures, change control and realization structures.

These traceability structures look much like what we have been doing with traditional PLM, CM and ERP systems. In my terminology, they are the systems or record, not mentioned to directly serve end-users but to provide traceability, baselines for configuration, compliance and more.

The team chose the OSLC standard to realize these capabilities. One of the main reasons because OSLC is an existing open standard based on linked data, not replicating data. In this way, a federated environment would be created with designated connections between datasets.

Jad El-Koury demonstrated how to link an existing requirement in Siemens Polarion to a Defect in IBM ELM and then create a new requirement in Polarion and link this requirement to the same defect. I never get excited from technical demos; more important to learn is the effort to build such integration and its stability over time. Click on the image for the details

Jad El-Koury demonstrated how to link an existing requirement in Siemens Polarion to a Defect in IBM ELM and then create a new requirement in Polarion and link this requirement to the same defect. I never get excited from technical demos; more important to learn is the effort to build such integration and its stability over time. Click on the image for the details

The conclusions from the team below give the right indicators where the last two points seem feasible.

Still, we need more benchmarking in other environments to learn.

Still, we need more benchmarking in other environments to learn.

I remain curious about this approach as I believe it is heading toward what is necessary for the future, the mix of systems of record and systems of engagement connected through a digital web.

The bold part of the last sentence may be used by marketers.

Sustainability and Data-driven PLM – the perfect storm

For those familiar with my blog (virtualdutchman.com) and my contribution to the PLM Global Green Alliance, it will be no surprise that I am currently combining new ways of working for the PLM domain (digitization) with an even more hot topic, sustainability.

For those familiar with my blog (virtualdutchman.com) and my contribution to the PLM Global Green Alliance, it will be no surprise that I am currently combining new ways of working for the PLM domain (digitization) with an even more hot topic, sustainability.

More hot is perhaps a cynical remark.

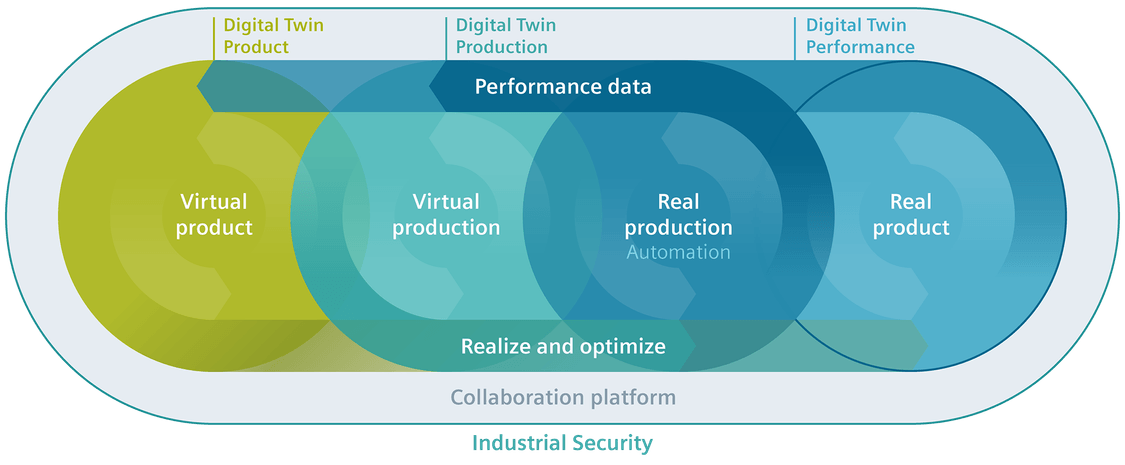

In my presentation, I explained that a model-based, data-driven enterprise will be able to use digital twins during the design phase, the manufacturing process planning and twins of products in operation. Each twin has a different purpose.

The virtual product during the design phase does not have a real physical twin yet, so some might say it is not a twin at this stage. The virtual product/twin allows companies to perform trade-offs, verification and validation relatively fast and inexpensively. The power of analyzing this virtual twin will enable companies to design products not only at the best price/performance range but even as important, with the lowest environmental impact during manufacturing and usage in the field.

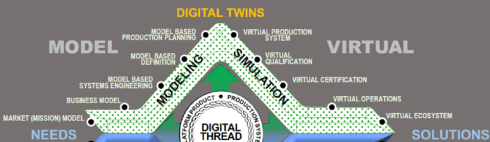

As the Boeing diamond nicely shows, there is a whole virtual world for digital twins. The manufacturing digital twin allows companies to analyze their manufacturing process and virtually analyze the most effective manufacturing process, preferably with the lowest environmental impact.

For digital twins from a product in the field, we can analyze its behavior and optimize performance, hopefully with environmental performance indicators in mind.

For a sustainable future, it is clear that we need to implement concepts of the circular economy as the earth does not have enough resources and renewables to support our current consumption behavior and ways of living.

Note: not for everybody on the globe, a quote from the European Environment Agency below:

Europe consumes more resources than most other regions. An average European citizen uses approximately four times more resources than one in Africa and three times more than one in Asia, but half of that of a citizen of the USA, Canada, or Australia

To reduce consumption, one of the recommendations is to switch the business model from owning products to products as a service. In the case of products as a service, the manufacturer becomes the owner of the full product lifecycle. Therefore, the manufacturer will have business reasons to make the products repairable, upgradeable, recyclable and using energy efficiently, preferably with renewables. If not, the product might become too expensive; fossil energy will be too expensive as carbon taxes will increase, and virgin materials might become too expensive.

It is a business change; however, sustainability will push organizations to change faster than we are used to. For example, we learned this week that the peeking energy prices and Russia’s current war in Ukraine have led to strong investments in renewables.

It is a business change; however, sustainability will push organizations to change faster than we are used to. For example, we learned this week that the peeking energy prices and Russia’s current war in Ukraine have led to strong investments in renewables.

As a result, many countries no longer want to depend on Russian energy. The peak of carbon emissions for the world is now expected in 2025.

(Although we had a very bad year so far)

Therefore, my presentation concluded that we should use sustainability as an additional driver for our digital transformation in the PLM domain. The planet cannot wait until we slowly change our traditional working methods.

Therefore, my presentation concluded that we should use sustainability as an additional driver for our digital transformation in the PLM domain. The planet cannot wait until we slowly change our traditional working methods.

Therefore, the need for digital twins to support sustainability and systems thinking are the perfect storm to speed up our digitization projects.

You can find my presentation as usual, here on SlideShare and a “spoken” version on our PGGA YouTube channel here

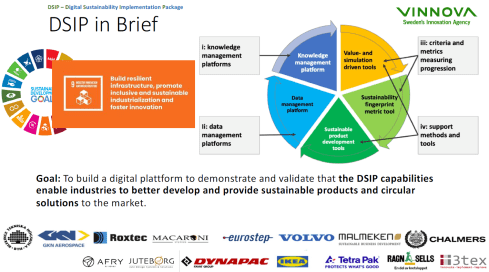

Digitalization for the Development and Industrialization of Innovative and Sustainable Solutions

This session, given by Ola Isaksson, Professor, Product Development & Systems Engineering Design Research Group Leader at Chalmers University, was a great continuation on my part of sustainability. Ola went deeper into the aspects of sustainable products and sustainable business models.

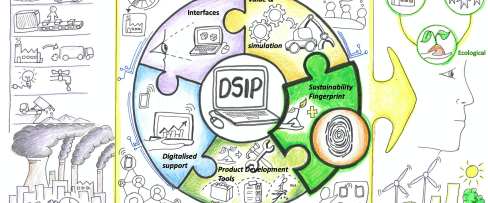

The DSIP project (Digital Sustainability Implementation Package – image above) aims to help companies understand all aspects of sustainable development. Ola mentioned that today’s products’ evolution is insufficient to ensure a sustainable outcome. Currently, not products nor product development practices are adequate enough as we do not understand all the aspects.

For example, Ola used the electrification process, taking the Lithium raw material needed for the batteries. If we take the Nissan Leaf car as the point of measure, we would have used all Lithium resources within 50 years.

Therefore, other business models are also required, where the product ownership is transferred to the manufacturer. This is one of the 9Rs (or 10), as the image shows moving from a linear economy towards a circular economy.

Also, as I mentioned in my session, Ola referred to the upcoming regulations forcing manufacturers to change their business model or product design. All these aspects are discussed in the DSIP project, and I look forward to learning the impact this project had on educating and supporting companies in their sustainability journey.

A day 2 summary

We had Bernd Feldvoss, Value Stream Leader PLM Interoperability Standards at Airbus, reporting on the progress of the A&D action group focusing on Collaboration. At this stage, the project team has developed an open-service Collaboration Management System (CMS) web application, providing navigation through the eight-step guidelines and offering the potential to improve OEM-supplier collaboration consistency and efficiency within the A&D community.

We had Bernd Feldvoss, Value Stream Leader PLM Interoperability Standards at Airbus, reporting on the progress of the A&D action group focusing on Collaboration. At this stage, the project team has developed an open-service Collaboration Management System (CMS) web application, providing navigation through the eight-step guidelines and offering the potential to improve OEM-supplier collaboration consistency and efficiency within the A&D community.

We had Henrik Lindblad, Group Leader PLM & Process Support at the European Spallation Source, building and soon operating the world’s most powerful neutron source, enabling scientific breakthroughs in research related to materials, energy, health and the environment. Besides a scientific breakthrough, this project is also an example of starting with building a virtual twin of the facility from the start providing a multidisciplinary collaboration space.

We had Henrik Lindblad, Group Leader PLM & Process Support at the European Spallation Source, building and soon operating the world’s most powerful neutron source, enabling scientific breakthroughs in research related to materials, energy, health and the environment. Besides a scientific breakthrough, this project is also an example of starting with building a virtual twin of the facility from the start providing a multidisciplinary collaboration space.

Conclusion

I left the conference with a lot of positive energy. The Curiosity session from Stefaan van Hooydonk energized us all, but as important for our PLM domain, I saw the trend towards more federated PLM environments, more discussions related to sustainability, and people in 3D again. So far, my takeaways this time. Enough to explore till the next event.

Another episode of “The PLM Doctor is IN“. This time a question from Ilan Madjar, partner and co-founder of XLM Solutions. Ilan is my co-moderator at the PLM Global Green Alliance for sustainability topics.

All these activities resulted in the following question(s) related to the Digital Twin. Now sit back and enjoy.

PLM and the Digital Twin

Is it a new concept? How to implement and certify the result?

Relevant topics discussed in this video

- An article about the introduction of the Digital Twin concept by Michael Grieves: The history and creation of the digital twin concept discussing Michael Grieves

- A presentation from Don Farr explaining the digital value chain with the “famous” Boeing diamond model – PDF HERE

- My Slideshare presentation discussing the difference between Coordinated and Connected PLM

- My Slideshare presentation from the Digital Twin conference Eindhoven Nov 2020: Digital Twins do not run on documents

Conclusion

I hope you enjoyed the answer and look forward to your questions and comments. Let me know if you want to be an actor in one of the episodes.

The main rule: A (single) open question that is puzzling you related to PLM.

After “The PLM Doctor is IN #2,” now again a written post in the category of PLM and complementary practices/domains.

After PLM and Configuration Lifecycle ManagementCLM (January 2021) and PLM and Configuration Management CM (February 2021), now it is time to address the third interesting topic:

PLM and Supply Chain collaboration.

In this post, I am speaking with Magnus Färneland from Eurostep, a company well known in my PLM ecosystem, through their involvement in standards (STEP and PLCS), the PDT conferences, and their PLM collaboration hub, ShareAspace.

Supply Chain collaboration

The interaction between OEMs and their suppliers has been a topic of particular interest to me. As a warming-up, read my post after CIMdata/PDT Roadmap 2020: PLM and the Supply Chain. In this post, I briefly touched on the Eurostep approach – having a Supply Chain Collaboration Hub. Below an image from that post – in this case, the Collaboration Hub is positioned between two OEMs.

Recently Eurostep shared a blog post in the same context: 3 Steps to remove data silos from your supply chain addressing the dreams of many companies: moving from disconnected information silos towards a logical flow of data. This topic is well suited for all companies in the digital transformation process with their supply chain. So, let us hear it from Eurostep.

Eurostep – the company / the mission

First of all, can you give a short introduction to Eurostep as a company and the unique value you are offering to your clients?

First of all, can you give a short introduction to Eurostep as a company and the unique value you are offering to your clients?

Eurostep was founded in 1994 by several world-class experts on product data and information management. In the year 2000, we started developing ShareAspace. We took all the experience we had from working with collaboration in the extended enterprise, mixed it with our standards knowledge, and selected Microsoft as the technology for our software platform.

Eurostep was founded in 1994 by several world-class experts on product data and information management. In the year 2000, we started developing ShareAspace. We took all the experience we had from working with collaboration in the extended enterprise, mixed it with our standards knowledge, and selected Microsoft as the technology for our software platform.

We now offer ShareAspace as a solution for product information collaboration in all three industry verticals where we are active: Manufacturing, Defense and AEC & Plant.

ShareAspace is based on an information standard called PLCS (ISO 10300-239). This means we have a data model covering the complete life cycle of a product from requirements and conceptual design to an existing installed base. We have added things needed, such as consolidation and security. Our partnership with Microsoft has also resulted in ShareAspace being available in Azure as a service (our Design to Manufacturing software).

ShareAspace is based on an information standard called PLCS (ISO 10300-239). This means we have a data model covering the complete life cycle of a product from requirements and conceptual design to an existing installed base. We have added things needed, such as consolidation and security. Our partnership with Microsoft has also resulted in ShareAspace being available in Azure as a service (our Design to Manufacturing software).

Why a supply chain collaboration hub?

Currently, most suppliers work in a disconnected manner with their clients – sending files up and down or the need to work inside the OEM environment. What are the reasons to consider a supply chain collaboration hub or, as you call it, a product information collaboration solution?

Currently, most suppliers work in a disconnected manner with their clients – sending files up and down or the need to work inside the OEM environment. What are the reasons to consider a supply chain collaboration hub or, as you call it, a product information collaboration solution?

The hub concept is not new per se. There are plenty of examples of file sharing hubs. Once you realize that sending files back and forth by email is a disaster for keeping control of your information being shared with suppliers, you would probably try out one of the available file-sharing alternatives.

The hub concept is not new per se. There are plenty of examples of file sharing hubs. Once you realize that sending files back and forth by email is a disaster for keeping control of your information being shared with suppliers, you would probably try out one of the available file-sharing alternatives.

However, after a while, you begin to realize that a file share can be quite time consuming to keep up to date. Files are being changed. Files are being removed! Some files are enormous, and you realize that you only need a fraction of what is in the file. References within one file to another file becomes corrupt because the other file is of a new version. Etc. Etc.

![]() This is about the time when you realize that you need similar control of the data you share with suppliers as you have in your internal systems. If not better.

This is about the time when you realize that you need similar control of the data you share with suppliers as you have in your internal systems. If not better.

A hub allows all partners to continue to use their internal tools and processes. It is also a more secure way of collaboration as the suppliers and partners are not let into the internal systems of the OEM.

Another significant side effect of this is that you only expose the data in the hub intended for external sharing and avoid sharing too much or exposing internal sensitive data.

A hub is also suitable for business flexibility as partners are not hardwired with the OEM. Partners can change, and IT systems in the value chain can change without impacting more than the single system’s connecting to the hub.

Should every company implement a supply chain collaboration hub?

Based on your experience, what types of companies should implement a supply chain collaboration hub and what are the expected benefits?

Based on your experience, what types of companies should implement a supply chain collaboration hub and what are the expected benefits?

The large OEMs and 1st tier suppliers certainly benefit from this since they can incorporate hundreds, if not thousands, of suppliers. Sharing technical data across the supply chain from a dedicated hub will remove confusions, improve control of the shared data, and build trust with their partners.

The large OEMs and 1st tier suppliers certainly benefit from this since they can incorporate hundreds, if not thousands, of suppliers. Sharing technical data across the supply chain from a dedicated hub will remove confusions, improve control of the shared data, and build trust with their partners.

With our cloud-based offering, we now also make it possible for at least mid-sized companies (like 200+ employees) to use ShareAspace. They may not have a well-adopted PLM system or the issues of communicating complex specifications originating from several internal sources. However still, they need to be professional in dealing with suppliers.

![]() The smallest client we have is a manufacturer of pool cleaners, a complex product with many suppliers. The company Weda [www.weda.se] has less than 10 employees, and they use ShareAspace as SaaS. With ShareAspace, they have improved their collaboration process with suppliers and cut costs and lowered inventory levels.

The smallest client we have is a manufacturer of pool cleaners, a complex product with many suppliers. The company Weda [www.weda.se] has less than 10 employees, and they use ShareAspace as SaaS. With ShareAspace, they have improved their collaboration process with suppliers and cut costs and lowered inventory levels.

ShareAspace can really scale big. It serves as a collaboration solution for the two new Aircraft carriers in the UK, the QUEEN ELIZABETH class. The aircraft carriers were built by a consortium that was closed in early 2020.

ShareAspace can really scale big. It serves as a collaboration solution for the two new Aircraft carriers in the UK, the QUEEN ELIZABETH class. The aircraft carriers were built by a consortium that was closed in early 2020.

ShareAspace is being used to hold the design data and other documentation from the consortium to be available to the multiple organizations (both inside and outside of the Ministry of Defence) that need controlled access.

What is the dependency on standards?

I always associate Eurostep with the PLCS (ISO 10303-239) standard, providing an information model for “hardware” products along the lifecycle. How important is this standard for you in the context of your ShareAspace offering?

I always associate Eurostep with the PLCS (ISO 10303-239) standard, providing an information model for “hardware” products along the lifecycle. How important is this standard for you in the context of your ShareAspace offering?

Should everyone adapt to this standard?

We have used PLCS to define the internal data schema in ShareAspace. This is an excellent starting point for capturing information from different systems and domains and still getting it to fit together. Why invent something new?

We have used PLCS to define the internal data schema in ShareAspace. This is an excellent starting point for capturing information from different systems and domains and still getting it to fit together. Why invent something new?

However, we can import data in most formats, and it does not have to be according to a standard. When connecting to Teamcenter, Windchill, Enovia, SAP, Oracle, Maximo etc., it is more often in a proprietary format than according to any standards.

On the other hand, in some industries like Defense, standards-based data exchange is required and put into contracts. Sometimes it prescribes PLCS. For the plant industry, it could be CFIHOS or ISO15926.

Supply Chain Collaboration and digital transformation

As stated at the beginning of this post, digital transformation is about connecting the information siloes through a digital thread. How important is this related to the supply chain?

As stated at the beginning of this post, digital transformation is about connecting the information siloes through a digital thread. How important is this related to the supply chain?

Many companies have come a long way in improving their internal management of product data. But still, the exchange and sharing of data with the external world has considerable potential for improvement. Just look at the chaos everyone has experienced with emails, still used a lot, in finding the latest Word document or PowerPoint file. Imagine if you collaborate on a ship, a truck, a power plant, or a piece of complex infrastructure. FTP is not the answer, and for product data, Dropbox is not doing the trick.

Many companies have come a long way in improving their internal management of product data. But still, the exchange and sharing of data with the external world has considerable potential for improvement. Just look at the chaos everyone has experienced with emails, still used a lot, in finding the latest Word document or PowerPoint file. Imagine if you collaborate on a ship, a truck, a power plant, or a piece of complex infrastructure. FTP is not the answer, and for product data, Dropbox is not doing the trick.

A Digital Thread must support versions and changes in all directions, as changes are natural with reasonably advanced products. Much of the information created about or around a product is generated within the supply chain, like production parameters, test and inspection protocols, certifications, and more. Without an intelligent way of capturing this data, companies will continue to spend a fortune on administration trying to manage this manually.

As the Digital Thread extends across the value chain, a useful sharing tool is needed to allow for configuration management across the complete chain – ShareAspace is designed for this. The great thing with PLCS is that it gives a standard model for the Digital Thread covering several Digital Twins. PLCS adds the life cycle component, which is essential, and there is no alternative. Therefore, we are welcome with ShareAspace and PLCS to add capabilities to snapshot standards like IFC etc., that are outside the STEP series of standards.

Learning more

We discussed that a supply chain collaboration hub can have specific value to a company. Where can readers learn more?

There is a lot of information available. Of course, on our Eurostep website, you will find information under the tab Resources or on the ShareAspace website under the tab News.

Other sources are:

What I have learned

- I am surprised to see that the type of Supplier Collaboration Platform delivered by Eurostep is not a booming market. Where Time to Market is significantly impacted by how companies work with their suppliers, most companies still rely on the exchange of data packages.