I am writing this post because one of my PLM peers recently asked me this question: “Is the BOM losing its position?” He was in discussion with another colleague who told him:

“If you own the BOM, you own the Product Lifecycle”.

This statement made me think of a recent post from Jan Bosch: Product Development fallacy #8: the bill of materials has the highest priority.

Software is becoming an increasingly essential part of the final product, and Jan, drawing on his expertise in software development, wrote this article about it. I recommend reading the full post (4 min read) and then browsing through the comments.

If you cannot afford these 10 minutes, here is my favorite quote from the article:

An excessive focus on the bill of materials leads to significant challenges for companies that are undergoing a digital transformation and adopting continuous value delivery. The lack of headroom, high coupling and versioning hell may easily cause an explosion of R&D expenditure over time.

Where did the BOM focus come from? A historical overview related to the rise (and fall) of the BOM.

In the beginning, there was the drawing.

Before the era of computers, there was “THE drawing”, describing assemblies, subassemblies or parts. On the drawing, you could find the parts list, if relevant. This parts list was the first Bill of Material, describing the parts/materials shown on the drawing.

Next came MRP/ERP

The introduction of MRP (Material Requirements Planning) systems was the first step: using computers, people could collect the material requirements for a system as data and process them.

Entering new materials/parts described on drawings was still a manual process, as was referring to existing parts on the drawing. Reuse of parts was a manual process based on individual knowledge.

In the nineties, MRP evolved into ERP (Enterprise Resource Planning), which included the MRP part and added resource and manufacturing planning and financial reporting.

The ERP system became the most significant IT system, the execution system of the company. As it was the first enterprise system to be implemented, it was also the first time we learned about implementation challenges: getting people to change, and budget overruns. However, as the ERP system brought visibility to the company’s execution, it became a “must-have” system for management.

The introduction of mainstream 2D CAD did not affect the company’s culture so much. Drawings became electronic drawings, and the methodology of the parts list on the drawing remained.

Sometimes the interaction with the MRP/ERP system was enhanced by an interface – sending the drawing BOM to ERP. The advantage of the interface: no manual transfer of data, which reduced typos and BOM errors. The disadvantages at that time: it was relatively expensive (connectivity between systems was a challenge) and mostly one-directional.

And then there was PDM.

In parallel with the introduction of ERP systems, mainstream 3D CAD systems became affordable, particularly SolidWorks, Solid Edge and Inventor. These 3D CAD systems allowed parts and assemblies to be shared across different products, and the PDM database was the first aid to support part reuse, versioning and standardization.

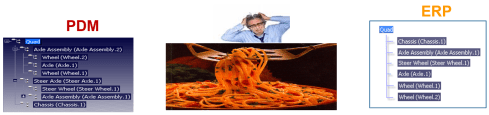

By extracting the parts from the assemblies and subassemblies, it was possible to generate a BOM structure in the PDM system to be transferred or typed into the ERP system. We did not talk about EBOM or MBOM then, as there was only one BOM in the ERP system, and the PDM system was a tool to feed the ERP system.
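As a minimal sketch of what extracting the parts from assemblies and subassemblies can mean, the Python below walks a multi-level assembly tree and rolls it up into a flat, summarized parts list. The part numbers, the `ASSEMBLY` dictionary and `flatten_bom` are hypothetical, and the sketch assumes the receiving ERP system wants a summarized quantity per part; a real PDM-to-ERP transfer usually keeps more structure than this.

```python
from collections import Counter
from typing import Optional

# Hypothetical CAD assembly structure: each assembly maps to its direct
# children and the quantity used. Leaf parts do not appear as keys.
ASSEMBLY = {
    "BIKE-100": [("FRAME-10", 1), ("WHEEL-20", 2)],
    "WHEEL-20": [("RIM-21", 1), ("SPOKE-22", 32), ("HUB-23", 1)],
    "HUB-23": [("BEARING-24", 2)],
}


def flatten_bom(item: str, qty: int = 1, totals: Optional[Counter] = None) -> Counter:
    """Walk the assembly tree depth-first and roll up leaf-part quantities."""
    if totals is None:
        totals = Counter()
    children = ASSEMBLY.get(item)
    if not children:
        # A leaf part: add the quantity needed for this occurrence.
        totals[item] += qty
        return totals
    for child, child_qty in children:
        # Multiply quantities down the tree (2 wheels x 32 spokes = 64 spokes).
        flatten_bom(child, qty * child_qty, totals)
    return totals


if __name__ == "__main__":
    # Emit a flat "part;quantity" list that could be typed or imported into ERP.
    for part, qty in sorted(flatten_bom("BIKE-100").items()):
        print(f"{part};{qty}")
```

Running it prints `BEARING-24;4`, `FRAME-10;1`, `RIM-21;2` and `SPOKE-22;64`. The quantities are multiplied down the tree, which is exactly the bookkeeping that was error-prone when it was done by hand.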

Many companies still base their processes on this approach. ERP (read SAP nowadays) is the central execution system, and PDM is an external system. You might remember the story and image from my previous post about people, processes and tools. The bad practice example: asking the ERP system to provide a part number when starting to design a part.

And then products started to change.

In the early 2000s, I worked with SmarTeam to define the E&E (Electronics and Electrical) template. One of the new concepts was to synchronize all design data coming from different disciplines to a single BOM structure.

This was when we started to talk about the EBOM: a type of BOM, based on parts, serving as the structure to consolidate all the design data.

Most of the time, the EBOM reflects the design intent in logical groups, and sending the relevant parts in the correct order to the ERP system was a favorite (and expensive) customization for service providers. How do you transfer an engineering BOM view to an ERP system that only understands the manufacturing view?

Note: not all ERP systems have the data model to differentiate between engineering parts and manufacturing parts.

The image below illustrates the challenge and the customer’s perception.

The automated link between the design side (EBOM) and manufacturing side (MBOM) was a mission impossible – too many exceptions for the (spaghetti) code.

And then came the MBOM.

The issues identified in connecting PDM and ERP led to the concept of implementing the MBOM in the PLM system. Having the MBOM in PLM is one of the characteristics that distinguish a PLM implementation from a PDM implementation. In a traditional PLM system, there is an interaction and connection between the EBOM and MBOM: EBOM parts should end up as MBOM parts. This interaction can be supported by automation; however, as both structures are in the same system, manual changes remain possible.
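The rule that EBOM parts should end up as MBOM parts lends itself to an automated check. A minimal sketch, assuming both structures have already been flattened to part quantities (the data and the `reconcile` helper are hypothetical): it reports what the MBOM does not consume and what it adds.

```python
from collections import Counter

# Hypothetical flattened quantities for the same product in both structures.
EBOM = Counter({"FRAME-10": 1, "RIM-21": 2, "SPOKE-22": 64, "PAINT-90": 1})
MBOM = Counter({"FRAME-10": 1, "RIM-21": 2, "SPOKE-22": 60, "GLUE-95": 1})


def reconcile(ebom: Counter, mbom: Counter) -> dict[str, dict[str, int]]:
    """Compare EBOM and MBOM part quantities.

    "missing": EBOM quantity not (fully) consumed by the MBOM.
    "extra":   MBOM quantity without an EBOM source, for example a process
               material added by manufacturing engineering.
    """
    return {
        # Counter subtraction keeps only positive differences.
        "missing": dict(ebom - mbom),
        "extra": dict(mbom - ebom),
    }


if __name__ == "__main__":
    print(reconcile(EBOM, MBOM))
```

Here the check reports 4 spokes and the paint as missing and the glue as extra. Not every difference is an error, since manufacturing engineering legitimately adds process materials, so a report like this supports the manual decisions rather than replacing them.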

The MBOM structure in PLM could then be the information structure to transfer to the ERP system; however, there is more, as Jörg W. Fischer wrote in his provocative post Die MBOM muss weg (The MBOM must go). He rightly points out (in German) that the MBOM is not a structure on its own but a combination of different views based on Assembly Drawings, Process Planning and Material Requirements.

His conclusion:

Calling these structures MBOM is trying to squeeze all three structures into one. That usually doesn’t work and then leads to much more emotional discussions in the project. It also costs a lot of money. It is, therefore, better not to use the term MBOM at all.

And indeed, just having an MBOM in your PLM system might help you to prepare some of the manufacturing steps, the needed resources and parts. The MBOM result still has to be localized at the plant where the manufacturing takes place, and there, the systems used are the ERP system and the MES system.

The main advantage of having the MBOM in the PLM system is the direct relation between specification and manufacturing intent, allowing manufacturing engineering to work collaboratively with engineering in the same environment.

- The first benefit is fewer iterations and a shorter time to production, thanks to early interaction and manufacturing involvement in the engineering process.

- The second benefit is that product knowledge is centralized in a single system. Consolidating your product knowledge in ERP does not make sense due to global localization and the missing capabilities to manage the iterative engineering processes on parts that do not exist yet.

And then came the SBOM, the xBOM

Traditional PLM vendors and implementations kept using xBOM structures as placeholders for related specification data (mechanical designs, electrical, software deliverables, serialized products), most of the time as related files.

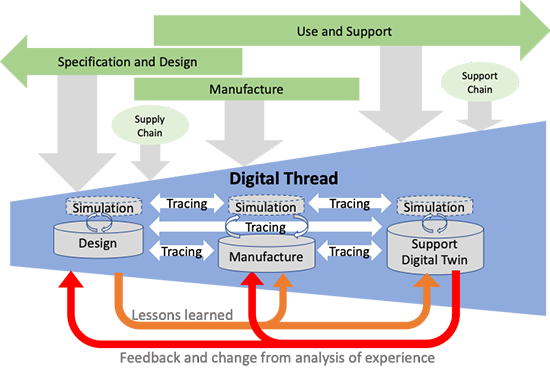

With this approach, and in the context of the digital thread, PLM systems also touch on the concepts of Configuration Management.

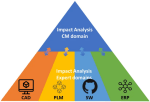

I will not go into the details here, but look at the two images and notice the similar mindset.

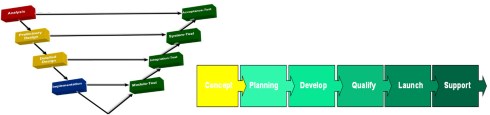

It is about the traceability of information in structures and systems. These structures work well in a relatively static and linear product development and delivery environment, as illustrated below:

Engineering change and release processes are based on managing the changes in different structures from the left to the right.

And then came software!

Modern connected products are no longer purely mechanical products. The product’s functionality no longer depends on its mechanical properties but mainly on the embedded electronics and software. For example, look at the mechanical design of a telecom transmission tower – its behavior mainly comes from non-mechanical components, and they can change over time. Still, the Bill of Material contains a lot of concrete and steel parts.

The ultimate example is comparing a Tesla (software on wheels) with a traditional car. For modern connected products, electronics and software need to be part of the solution. Software and electronics allow the product to be upgraded over time. Managing these products in the same manner as mechanical products is inefficient, if not impossible, and therefore threatens your company’s future business.

I requote Jan Bosch:

An excessive focus on the bill of materials leads to significant challenges for companies that are undergoing a digital transformation and adopting continuous value delivery. The lack of headroom, high coupling and versioning hell may easily cause an explosion of R&D expenditure over time.

The model-based, connected enterprise

I will not solve the puzzle of the future in this post. You can read my observations in my series: The road to model-based and connected PLM. We need a new infrastructure with at least two modes. One that still serves as a System of Record, storing information in a traditional manner, like a Bill of Materials for the static parts, as not everyone and everything can be connected.

In addition, we need various Systems of Engagement that enable close to real-time interaction between products (systems) and the relevant stakeholders for the engagement scope (multidisciplinary / consumers).

Digital twins are examples of such environments. Currently, these Systems of Engagement often work disconnected from the System of Record due to the lack of understanding of how to connect. (standard connectors? / OSLC?)

Exploring this is our mission, as I wrote in my post Time to split PLM and drop our mechanical mindset.

And while I was finalizing this post, I read another motivating post from Jan Bosch, for all of you working on understanding and pushing the digital transformation in your ecosystem.

The title: Be the protagonist of your life: 15 rules. A starting point for more to come.

Conclusion

The BOM is no longer the master of the product lifecycle when it comes to managing connected products, where functionality mainly depends on software. BOM structures with related documents are just one of the extracted baselines from a data-driven, connected enterprise. This traditional PLM infrastructure requires other, non-BOM-driven structures to represent the actual status of a virtual or physical product.

The BOM is not dead, but there is more ………

Your thoughts?

In March 2018, I started a series of blog posts related to model-based approaches. The first post was: Model-Based – an introduction. The reactions to this series of posts can be summarized in two bullets:

- Readers believed that the term model-based focused on the 3D CAD model. A logical association, as PLM is often associated with 3D CAD-model data management (actually PDM), and in many companies, the 3D CAD model is not (yet) a major information carrier.

- Readers told me that a model-based approach was too far from their day-to-day life. I have to agree here. I was active in some advanced projects where the product’s behavior depends on a combination of hardware and software. However, most companies still work in a document-driven manner, with siloed disciplines merging all their deliverables in a BOM.

More than 3 years later, I feel that model-based approaches have become more and more visible for companies. One of the primary reasons is that companies are starting to collaborate in the cloud and realize the difference between working in a coordinated and in a connected manner.

Initiatives such as Industry 4.0 or concepts like the Digital Twin demand a model-based approach. This post is a follow-up to my recent post, The Future of PLM.

History has shown that it is difficult for companies to change engineering concepts. So let’s first look back at how concepts slowly changed.

The age of paper drawings

In the sixties of the previous century, the drawing board was the primary “tool” to specify a mechanical product. The drawing on its own was often a masterpiece drawn on special paper, with perspectives, details, cross-sections.

All these details were needed to transfer the part or assembly information to manufacturing. The drawing set had to contain all the information, as there were no computers.

Making a prototype was, depending on the complexity of the product, the interpretation of the drawings and manufacturability of a product, not always that easy. After a first release, further modifications to the product definition were often marked on the manufacturing drawings using a red pencil. Terms like blueprint and redlining come from the age of paper drawings.

There are still people talking nostalgically about these days as creating and interpreting drawings was an important skill. However, the inefficiencies with this approach were significant.

- First, drawings were often not updated after redlining in manufacturing – too much work.

- Second, drawing reuse was almost impossible; you had to start from scratch.

- Third, and most importantly, you needed to be very skilled in interpreting a drawing set, particularly when dealing with suppliers that might not have the same skill set or know which drawing version was current.

However, paper was and still is the cheapest neutral format to distribute designs. The last time I saw companies still working with paper drawings was at the end of the previous century.

Curious to learn if they are now extinct?

The age of electronic drawings (CAD)

With the introduction of AutoCAD and personal computers around 1982, more companies started to look into drafting with the computer. There was already the IBM drafting system in 1965, but it was Autodesk that pushed the 2D drafting business with their slogan:

“80 percent of the functionality for 20 percent of the price (Autodesk 1982)”

A little later, I started to work for an Autodesk distributor/reseller. People would come to the showroom to see how a computer drawing could be plotted in the finest quality at the end. But, of course, the original draftsman did not like the computer as the screen was too small.

However, the enormous value came from the ease of making changes, sharing drawings and reusing them. The picture on the left is me in 1989, demonstrating AutoCAD with a custom-defined tablet and a PS/2 computer.

The introduction of electronic drawings was not a disruption but rather an optimization of the previous ways of working.

The exchange with suppliers and manufacturing could still be based on plotted drawings – the most neutral format. And thanks to the filename, there was better control of versions between all stakeholders.

Aren’t we all happy?

The introduction of mainstream 3D CAD

In 1995, 3D CAD became available for the mid-market, thanks to SolidWorks, Solid Edge and, a little later, Inventor. Before that, working with 3D CAD was only possible for companies that could afford expensive graphics workstations, provided by IBM, Silicon Graphics, DEC and SUN. Where are they nowadays? The PC is an example of disruptive innovation, purely based on technology. See Clayton Christensen’s famous book: The Innovator’s Dilemma.

The introduction of 3D CAD on PCs in the mid-market did not lead directly to new ways of working. Designing a product in 3D was much more efficient if you mastered the skills. 3D brought a better understanding of the product dimensions and shape, reducing the number of interpretation errors.

Still, (electronic) drawings were the contractual deliverable when interacting with suppliers and manufacturing. As students were more and more trained with the 3D CAD tools, the traditional art of the draftsman disappeared.

3D CAD introduced some new topics to solve.

- First of all, a 3D CAD Assembly in the system was a collection of separate files, subassemblies, parts, and drawings that relate to each other with a specific version. So how to ensure the final assembly drawings were based on the correct part revisions? Companies were solving this by either using intelligent filenames (with revisions) or by using a PDM system where the database of the PDM system managed all the relations and their status.

- The second point was that the 3D CAD assembly also introduced a new feature, the product structure, or the “Bill of Materials”. This logical structure of the assembly closely resembled the Bill of Material of the product. You could even browse deeper levels, which was not possible with the traditional Bill of Material on a drawing.

Note: The concept of EBOM and MBOM was not known in most companies. People were talking about the BOM as a one-level definition of parts or subassemblies in the assembly. See my post Where is the MBOM? from July 2008, when this topic was still under discussion.

- The third point, which would have a more significant impact later, is that parts and assemblies could be reused in other products. This introduced the complexity of configuration management. For example, a 3D CAD part or assembly file could contain several configurations, where only one configuration would be valid for a given product. Managing this in the 3D CAD system led to higher designer productivity; however, downstream, when it came to data management with PDM systems, it became a nightmare.
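The first point above, making sure an assembly is based on the correct part revisions, is the kind of check a PDM database can perform because it knows every relation and its status. A minimal sketch, assuming a hypothetical registry of released revisions (`RELEASED`), a hypothetical `Reference` record, and a rule that an assembly should reference the latest released revision of each part:

```python
from dataclasses import dataclass

# Hypothetical PDM registry: the released revisions per part, oldest first.
RELEASED = {
    "BRACKET-1": ["A", "B"],
    "SHAFT-2": ["A"],
    "COVER-3": ["A", "B", "C"],
}


@dataclass(frozen=True)
class Reference:
    """One assembly-to-part link, pinned to a specific revision."""
    part: str
    revision: str


def stale_references(refs: list[Reference]) -> list[str]:
    """Return messages for references that are unreleased or not the latest release."""
    problems = []
    for ref in refs:
        released = RELEASED.get(ref.part, [])
        if ref.revision not in released:
            problems.append(f"{ref.part} rev {ref.revision}: not released")
        elif ref.revision != released[-1]:
            problems.append(f"{ref.part} rev {ref.revision}: latest is {released[-1]}")
    return problems


if __name__ == "__main__":
    assembly = [Reference("BRACKET-1", "B"), Reference("SHAFT-2", "B"), Reference("COVER-3", "A")]
    for msg in stale_references(assembly):
        print(msg)
```

With intelligent filenames, the same check has to be done by eye; with a PDM database, it becomes a query over the stored relations.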

I experienced these issues a lot when discussing with companies and implementers, mainly in implementations of SmarTeam combined with SolidWorks and Inventor. Where should the configuration constraints be managed? In the PDM system or inside the 3D CAD system?

These environments were not friends (image above), and even if they came from the same vendor, it felt like discussing with tribes.

The third point also covered another topic. So far, CAD had been the first step for the detailed design of a product. However, companies now had an existing Bill of Material in the system thanks to the PDM systems. It could be a Bill of Material of a sub-assembly that is used in many other products.

Configuring a product no longer started from CAD; it started from a Product or Bill of Material structure. Sales and Engineers identified the changes needed on the BoM, keeping as much as possible released information untouched. This led to a new best practice.

The item-centric approach

Around 2005, five years after introducing the term Product Lifecycle Management, slowly, a new approach became the standard. Product Lifecycle Management was initially introduced to connect engineering and manufacturing, driven by the automotive and aerospace industry.

It was with PLM that concepts such as EBOM and MBOM became visible.

In particular, the EBOM was closely linked to engineering practices, i.e., modularity and reuse. The EBOM and its related information represented the product as it was specified. It is essential to realize that the parts in the EBOM could be generically specified purchase parts, to be resolved when producing the product, or Make-parts specified by drawings.
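To illustrate how a generically specified purchase part might be resolved when producing the product, here is a minimal sketch assuming a hypothetical approved-manufacturer list per generic part, recording the sites where each source is approved. The part numbers, vendors and the `resolve` function are invented for illustration.

```python
# Hypothetical approved-manufacturer list: generic EBOM part -> candidate
# manufacturer parts, each tagged with the sites where it is approved.
APPROVED = {
    "RES-10K-0603": [("Vendor-A RC0603-10K", {"NL", "US"}), ("Vendor-B 0603R10K", {"CN"})],
    "SCREW-M4x10": [("Vendor-C M4x10-A2", {"NL", "US", "CN"})],
}


def resolve(generic_parts: list[str], site: str) -> dict[str, str]:
    """Pick the first approved manufacturer part for each generic part at a site.

    Raises KeyError when no approved source exists for that site, which in
    practice would become an issue for sourcing to resolve.
    """
    resolved = {}
    for part in generic_parts:
        candidates = [mpn for mpn, sites in APPROVED.get(part, []) if site in sites]
        if not candidates:
            raise KeyError(f"no approved source for {part} at site {site}")
        resolved[part] = candidates[0]
    return resolved


if __name__ == "__main__":
    print(resolve(["RES-10K-0603", "SCREW-M4x10"], "CN"))
```

For site `CN` the resistor resolves to the Vendor-B part, and for `NL` to the Vendor-A part; a site without an approved source raises an error. Keeping the EBOM generic and resolving late is what allows the same specification to be produced at different sites.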

At that time, the EBOM was often used as the foundation for the ERP system – see image above. The BOM was restructured and organized according to the manufacturing process specifying materials and resources needed in the ERP system. Therefore, although it was an item-like structure, this BOM (the MBOM) always had a close relation to the Bill of Process.

For companies with a single manufacturing site, the distinction between EBOM and MBOM did not matter much, as the ERP system would be the source of the MBOM. However, the complexity came when companies had several manufacturing sites. That was when a generic MBOM in the PLM system made more sense, centralizing all product information in a single system.

The EBOM-MBOM approach has become more and more a standard practice since 2010. As a result, even small and medium-sized enterprises realized a need to manage the EBOM and the MBOM.

There were two disadvantages introduced with this EBOM-MBOM approach.

- First, the EBOM and the MBOM as information structures require a lot of administrative maintenance if information needs to be always correct (and that is the CM target). Some try to simplify this by keeping the EBOM part the same as the MBOM part, meaning the EBOM specification already targets a single supplier or manufacturer.

- The second disadvantage is that making every item in the BOM behave like a part creates inefficiencies in modern environments. Products are a mix of hardware (parts) and software (models/behavior). This BOM-centric view does not provide the proper infrastructure for a data-driven approach, as part specifications are still done in drawings. We need 3D annotated models related to all kinds of other behavioral and physical models to specify a product that contains hardware and software.

A new paradigm is needed to manage this mix efficiently. As the enabling foundation for Industry 4.0 and efficient Digital Twins, we need a model-based approach based on connected data elements.

More next week.

Conclusion

| Approach | Timeline |
|---|---|
| The age of paper drawings | 1960 – now dead |
| The age of electronic drawings | 1982 – potentially dead in 2030 |
| Mainstream 3D CAD | 1995 – evolving through MBD and MBSE into the future; not dead any time soon |
| Item-centric approach | 2005 – evolving into a connected model-based approach; not dead any time soon |

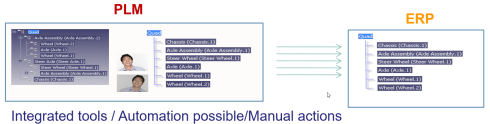

I believe we are almost at the end of learning from the past. We have seen how, from an initial serial CAD-driven approach with PDM, we evolved to PLM-managed structures, the EBOM and the MBOM. Or to illustrate this statement, look at the image below, where I use a Tech-Clarity image from Jim Brown.

The image on the left shows the typical PDM-approach: PDM feeding ERP in a linear process. The image on the right, I believe from 2004, perfectly describes the complementary roles of PLM and ERP and shows the best practice before digital transformation: PLM supports product innovation in an iterative approach, pushing released information to ERP for execution.

As I think in images, I like the concept of a circle for PLM and an arrow for ERP. I am always using those two images in discussions with my customers when we want to understand if a particular activity should be in the PLM or ERP-domain.

Ten years ago, the PLM-domain was conceptually further extended by introducing support for products in operations and service. Similar to the EBOM (engineering) and the MBOM (manufacturing), the SBOM (service) was introduced to support product information for products in operation. In theory, a fully connected circle.

Asset Lifecycle Management

At the same time, I was promoting PLM-practices for owners/operators to enhance Asset Lifecycle Management. My first post on this topic, from June 2010, PLM for Asset Lifecycle Management and Asset Development, introduces this approach.

Conceptually, the SBOM and Asset Lifecycle Management have a lot in common. There is a designed product, in this case an asset (plant, machine) running in the field, and we need to make sure operators have the latest information about the asset. And in case of asset changes, which can be a maintenance operation, a repair or a complete overhaul, we need to be sure the changes are based on the correct information from the as-built environment. This requires full configuration management.

Asset changes can be based on extensive projects that need to be treated like new product development projects, with a staged approach that can take weeks, months, sometimes years. These activities are typical activities performed in PLM-systems, not in MRO-systems that are designed to manage the actual operation. Again here we see the complementary roles of PLM (iterative) and MRO (execution).

Since 2008, I have worked a lot in this environment, mainly in the nuclear and process industry. If you want to learn more about this aspect of PLM, I recommend looking at the PLMpartner website, where Bjørn Fidjeland, in cooperation with SharePLM, published a course on Plant Information Management. We worked together in several projects, and Bjørn has made a great effort to describe the logical model to be used instead of a function-feature story.

Ten years ago, we were not calling this concept the “Digital Twin,” as the aim was to provide end-to-end support of asset information from engineering, procurement and construction towards operation in a coordinated manner. The breaking point in the relation between the EPCs and Owner/Operators is the data handover: how much of your IP can or will you expose, and what is needed? Nowadays, we would call striving for end-to-end data continuity the Digital Thread.

Hot from the press in this context, CIMdata just published a commentary, Managing the Digital Thread in Global Value Chains, describing Eurostep’s ShareAspace capabilities and experiences in managing an end-to-end information flow (Digital Thread) in a heterogeneous environment based on exchange standards like ISO 10303-239 PLCS. Their solution is based on what I consider a more modern approach to managing digital continuity than the traditional approach I described before. Compare the two images in this paragraph: the first represents the old/current way with a disconnected handover, the second represents ShareAspace’s connected approach based on a real digital thread.

The Service BOM

As discussed for Asset Lifecycle Management, from the point of view of an asset owner/operator there is a disconnect between the engineering disciplines and operations in the field.

Now when we look from the perspective of a manufacturing company that produces assets to be serviced, we can identify a different dataflow and a new structure, the Service BOM (SBOM).

The SBOM provides information on how a product needs to be serviced. Which parts require service, and which service kits are possible for that product? For that reason, service engineering should be done in parallel to product engineering. When designing a product, the engineer needs to identify which parts are wearing parts (always requiring service over time) and which parts might be serviceable.

There are different ways to look at the SBOM. Conceptually, the SBOM could be created in close relation with the EBOM: at the moment you define your product, you should also specify how the product will be serviced. See the image below.
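
A minimal sketch of deriving a first SBOM from an EBOM, assuming engineering flags each part as wearing or serviceable while designing the product. The `BomLine` structure, the flags and the part IDs are hypothetical; a real SBOM would also hold service kits and links to service documents.

```python
from dataclasses import dataclass, field


@dataclass
class BomLine:
    # Hypothetical EBOM line with service flags set by engineering.
    part_id: str
    description: str
    qty: int = 1
    wearing: bool = False      # always requires service over time
    serviceable: bool = False  # might be serviced or replaced in the field
    children: list["BomLine"] = field(default_factory=list)


def derive_sbom(line: BomLine) -> list[tuple[str, str, str]]:
    """Depth-first walk of the EBOM, collecting (part_id, description,
    service class) for every wearing or serviceable line."""
    found = []
    if line.wearing:
        found.append((line.part_id, line.description, "wearing"))
    elif line.serviceable:
        found.append((line.part_id, line.description, "serviceable"))
    for child in line.children:  # children are checked even below a serviceable assembly
        found.extend(derive_sbom(child))
    return found


pump = BomLine("P-100", "Pump", children=[
    BomLine("P-110", "Housing"),
    BomLine("P-120", "Impeller", serviceable=True),
    BomLine("P-130", "Shaft seal", qty=2, wearing=True),
])
print(derive_sbom(pump))
```

Because the flags live on the EBOM lines, the SBOM stays traceable to the engineering definition instead of being retyped later by the service department.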

From this example, it is clear that part standardization and modularization have a considerable benefit for downstream services. What if you have only one serviceable part that applies to many products? The number of parts to keep in stock drops sharply compared with having many similar parts that each fit only a single product.
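
The stock argument is simple counting. Assuming four hypothetical products that each need one filter and one seal, this sketch compares product-specific service parts with standardized ones shared by all products:

```python
def stock_items(service_parts_per_product: dict[str, set[str]]) -> int:
    """Count the distinct service part numbers needed to support all products."""
    return len(set().union(*service_parts_per_product.values()))


# Hypothetical product lines: each product uses its own filter and seal variant...
without_standardization = {f"PRODUCT-{i}": {f"FILTER-{i}", f"SEAL-{i}"} for i in range(1, 5)}
# ...versus one standardized filter and seal shared by all four products.
with_standardization = {f"PRODUCT-{i}": {"FILTER-STD", "SEAL-STD"} for i in range(1, 5)}

print(stock_items(without_standardization))  # 8
print(stock_items(with_standardization))     # 2
```

The number of stocked items grows with the number of products in the first case and stays constant in the second.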

Depending on the type of product, the SBOM can be generic, serving many products in the field. In that case, the company has to deal with catalogs, to be defined in PLM. Or the SBOM can be aligned with the As-Built of a capital product in the field. In that case, the concepts of Asset Lifecycle Management apply.

In such an environment, the SBOM itself will link to specific documents, such as service instructions and operating manuals.

If your PLM-system allows it, extending the EBOM and MBOM with an SBOM is not a complex effort. What is crucial to understand is that the SBOM has its own lifecycle, which can last even longer than the period in which the product is actively sold. Therefore, manufacturing specifications related to service parts sometimes need to be maintained too, creating a link between the SBOM and potential MBOM(s).

ECM = Enterprise Change Management

When I discussed ECM in my previous post in the context of Engineering Change Management, I got the feedback that nowadays, everyone talks about Enterprise Change Management. Engineering Change Management is old school.

In the past, and even in a 2014 benchmark, a customer had two change management systems, one in PLM and one in ERP, and companies were looking into connecting these two processes. As with the BOM interaction between PLM and ERP, technology-wise this was never a real problem.

The real problem in such situations was to come to a logical flow of events. Many times, the company insisted that every change should start from the ERP-system, as “we like to standardize.” This meant that even an engineering change had to be registered first in the ERP-system.

Luckily the reach of PLM has grown. PLM is no longer the engineering tool (IT-system thinking). PLM has become the information backbone for product information all along the product lifecycle. Having the MBOM and SBOM available through a PLM-infrastructure allows organizations to streamline their processes.

And in this modern environment, enterprise change management might take place mostly in a PLM-infrastructure. A PLM-infrastructure that provides a digital thread, as the Aras picture above illustrates, offers the full traceability needed to support configuration management.

However, we still have to remember that configuration management and engineering change management are, first of all, based on methodology and processes. The combination of tools used comes second and will vary.

I would like to conclude this topic with a quote from Lee Perrin’s comment on my previous blog post:

I would add that aerospace companies implemented CM, to avoid fatal consequences to their companies, but also to their flying customers.

PLM provides the framework within which to carry out Configuration Management. CM can indeed be carried out without PLM, as was done in the old paper-based days. As you have stated, PLM makes the whole CM process much more efficient. I think more transparent too.

Conclusion

In nine posts around the theme Learning from the past to understand the future, I walked through the history of CAD, PDM and PLM at a fast pace, pointing out practices and friction points. This information is hard to find in the blogging space, as most blog posts come from software vendors explaining why their tool is needed. Hopefully, this series has helped many of you understand the broader context. In my upcoming blog posts, I want to focus on the future again.

Still, feel free to contact me and discuss methodology topics.

For those who have followed my blog over the years, it must be clear that I am advocating for a digital enterprise, explaining the benefits of a data-driven approach where possible. In the past month, an old topic came to my attention with new insights: intelligent Part Numbers, yes or no? Or do we mean Product Numbers?

What’s the difference between a Part and a Product?

In a PLM data model, you need to support both Parts and Products, and there is a significant difference between these two types of business objects. A Product is an object facing the outside world, which can be a company (B2B) or a consumer (B2C). Examples of B2C products are the Apple iPhone 8, the famous IKEA Billy, my Garmin 810 and my Dell OptiPlex 3050 MFXX8. Examples of B2B products are the ABB synchronous motor AMZ 2500 and the FESTO standard cylinder DSBG. Products have a name and, if there are variants of the product, an additional identifier.

A Part represents a physical object that can be purchased or manufactured. A combination of Parts appears in a BOM. In case these Parts are not yet resolved for manufacturing, this BOM might be the Engineering BOM or a generic Manufacturing BOM. In case the Parts are resolved for a specific manufacturing plant, we talk about the MBOM.

I have discussed the relation between Parts and Products in an earlier post, Products, BOMs and Parts, which was a follow-up to my LinkedIn post, the importance of a PLM data model. Although both posts were written more than two years ago, the content is still valid. In the upcoming year, I will address this topic of products further, including software and services moving to solutions / experiences.

Intelligent numbers for Parts?

As parts are company-internal business objects, I would state that if a company is serious about becoming a digital enterprise, parts should have meaningless unique identifiers. Unique identifiers are the link between discipline- or application-specific data sets. See, for example, the image below, where I imagined attribute sets for a part based on engineering and manufacturing data sets.

Apart from the unique ID, there might be a common set of attributes that will be exposed in every connected system. For example, a description, a classification and one or more status attributes might be needed.

Note 1: A revision number is not needed when you create a new unique ID for every new version of the part. This practice is already common in the electronics industry. In the old mechanical domain, we are used to revisions, in particular for make parts, based on Form-Fit-Function rules.

Note 2: The description might be generated automatically based on a concatenation of some key attributes.
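
The ideas in this section and its two notes can be sketched as a small data model. This is an illustration under assumptions, not a PLM vendor’s schema: the ID is a random UUID, the status value and the attribute keys (`material`, `size`) are hypothetical, and the description rule (classification plus two engineering attributes) is just one possible concatenation.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Part:
    # Meaningless unique ID: the only key used to connect systems.
    part_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    # Common attributes exposed in every connected system.
    classification: str = ""
    status: str = "In Work"  # hypothetical status value
    # Discipline-specific attribute sets, all keyed by the same part_id.
    engineering: dict[str, str] = field(default_factory=dict)
    manufacturing: dict[str, str] = field(default_factory=dict)

    @property
    def description(self) -> str:
        """Note 2: generated by concatenating key attributes (assumed rule)."""
        values = [self.classification] + [
            self.engineering[k] for k in ("material", "size") if k in self.engineering
        ]
        return " ".join(v for v in values if v)

    def new_version(self) -> "Part":
        """Note 1: a new version gets a new unique ID instead of a revision."""
        return Part(classification=self.classification,
                    engineering=dict(self.engineering),
                    manufacturing=dict(self.manufacturing))


# Hypothetical example part.
bolt = Part(classification="Bolt", engineering={"material": "A2", "size": "M8x20"},
            manufacturing={"make_or_buy": "buy"})
bolt_v2 = bolt.new_version()
print(bolt.description)                 # Bolt A2 M8x20
print(bolt.part_id != bolt_v2.part_id)  # True
```

Nothing in the ID carries meaning; the human-readable information lives in attributes that can change without breaking any link.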

Of course, if you are aiming for a full digital enterprise, and I think you should, do not waste time fixing the past. In some situations, I learned that an external consultant had recommended that the company renumber its old meaningful part numbers to the new non-intelligent part numbering scheme. There are two mistakes here. First, renumbering is too costly, as all referenced information must be updated. Second, as long as the old part numbers are unique IDs within the enterprise, there is no need to change them. The connectivity of information should not depend on how the unique ID is formatted.
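
The point that connectivity should not depend on the ID format can be illustrated with a hypothetical registry that only enforces uniqueness and never parses the ID. Legacy meaningful numbers and new meaningless ones then coexist, and links resolve either way:

```python
class PartRegistry:
    """Hypothetical enterprise registry: a part ID is an opaque, unique key."""

    def __init__(self) -> None:
        self._parts: dict[str, dict] = {}

    def register(self, part_id: str, attributes: dict) -> None:
        # Only uniqueness is enforced; the ID's format is never parsed.
        if part_id in self._parts:
            raise ValueError(f"duplicate part ID: {part_id}")
        self._parts[part_id] = attributes

    def resolve(self, part_id: str) -> dict:
        return self._parts[part_id]


registry = PartRegistry()
# Hypothetical IDs: one legacy meaningful number, one new meaningless number.
registry.register("BRK-220-STL-A", {"description": "Bracket (legacy number)"})
registry.register("7f3c9a2e", {"description": "Bracket (new number)"})

# A document can link to either part without knowing which numbering scheme was used.
for part_id in ["BRK-220-STL-A", "7f3c9a2e"]:
    print(part_id, "->", registry.resolve(part_id)["description"])
```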

If you want to read more, see: The impact of Non-Intelligent Part Numbers

Intelligent numbers for Products?

If the world were 100% digital and connected, we could work with non-intelligent product numbers. However, this is a stage beyond my current imagination. For products, we will still need a number that customers can refer to when they communicate with their supplier, vendor or service provider. For many high-tech products, the product name and type might be enough. When I talk about the Samsung S5 G900F 16G, the vendor knows which configuration I am referring to. Still, it is important to realize that behind these specifications, different MBOMs might exist due to different manufacturing locations or times.

However, when I refer to the IKEA Billy, there are too many options to describe the right one consistently in words; therefore, you will find a part number on the website, e.g. 002.638.50. This unique ID connects directly to a single sellable configuration. Behind this unique ID, different MBOMs might also exist, for the same reason as for the Samsung telephone. The number is a connection to the sales configuration and should not be too complicated, as people need to be able to read and recognize it when they go to a warehouse.
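
A minimal sketch of the relation described here: a short, readable product number resolves to one sellable configuration, which in turn can be realized by different MBOMs per plant. The product number, plant names and MBOM IDs are hypothetical, not real IKEA data.

```python
from dataclasses import dataclass


@dataclass
class SalesConfiguration:
    product_number: str            # short, readable number a customer can quote
    description: str
    mbom_by_plant: dict[str, str]  # plant -> MBOM that realizes this configuration


# Hypothetical catalog entry.
catalog = {
    "100.200.30": SalesConfiguration(
        "100.200.30", "Bookcase, white (illustrative)",
        {"PLANT-EU": "MBOM-7001", "PLANT-US": "MBOM-7002"}),
}


def mbom_for(product_number: str, plant: str) -> str:
    """Resolve what a customer ordered to how a given plant builds it."""
    return catalog[product_number].mbom_by_plant[plant]


print(mbom_for("100.200.30", "PLANT-US"))  # MBOM-7002
```

The customer only ever sees the product number; the plant-specific MBOMs behind it can change without the number changing.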

Conclusion

There is a big difference between Product and Part numbers because of the intended scope of these business objects. Parts will soon exist in connected, digital enterprises and therefore no longer need a meaningful number. Products need to be identified by consumers all around the world who are not yet able or willing to have a digital connection with their vendors. Therefore, short and understandable numbers will remain necessary to support exact communication between consumer and vendor.

In my series describing the best practices related to a (PLM) data model, I described the general principles, the need for products and parts, the relation between CAD documents and the EBOM, the topic of classification and now the sensitive relation between EBOM and MBOM.

First some statements to set the scene:

- The EBOM represents the engineering (design) view of a product, structured in a way that it represents the multidisciplinary view of the functional definition of the product. The EBOM combined with its related specification documents, models, drawings, annotations should give a 100 % clear definition of the product.

- The MBOM represents the manufacturing view of a product, structured in a way that represents how the product is manufactured. This structure is usually not the same as the EBOM, due to the manufacturing process and the purchasing of parts.

A (very) simplified picture illustrating the difference between an EBOM and an MBOM. If the car were a diesel, there would also be embedded software in both BOMs (currently hidden).

For many years, ERP systems have claimed ownership of the MBOM for two reasons:

- Historically, the MBOM was the starting point for production. Whereas the engineering department often worked with a set of tools, the ERP system was where data was connected and used for manufacturing planning and real-time execution

- To accommodate a more advanced integration with PDM systems, ERP vendors also began to offer EBOM capabilities in their systems, as PDM systems often worked around the EBOM.

These two approaches made it hard to implement “real” PLM, where (BOM) data flows through an organization instead of being stored in a single system.

ERP’s claim to ownership of the BOM created some problems:

- A disconnect between the iterative engineering domain and the execution-driven ERP domain. The EBOM is under continuous change (unless you have a simple or the ultimate product), and these changes are all related to upstream information: specifications, requirements, engineering changes and design changes. An ERP system is not intended for handling iterative processes, which forces users to work in a complex environment or leads to attempts to fix the issue through heavy customization on the ERP side.

- Global manufacturing and outsourced manufacturing introduced a new challenge for ERP-centric implementations. They would require all manufacturing sites, including the outsourced manufacturers, to have the same capabilities to transfer an EBOM into a local MBOM. And how do you capitalize on the IP of your products when information is handled in a dispersed environment?

The solution to this problem is to extend your PDM implementation towards a “real” PLM implementation that supports the EBOM, the MBOM and potential plant-specific MBOMs, all in a single system / user experience designed to manage change and to allow all users to work in a global, collaborative way around the product. MBOM information will then be pushed, when needed, to the (local) ERP system, which manages the execution.

Note 1: Pushing the MBOM to ERP does not mean a one-time big bang. When manufacturing parts are defined and sourced, there will already be a part definition in the ERP system too, as logistical information must come from ERP. The final push to ERP is, therefore, more a release to ERP combined with execution information (when / related to which order).

In this scenario, the MBOM will already be in ERP, containing engineering data complemented with manufacturing data. Therefore, from the PLM side, we talk more about sharing BOM information than about owning it. Certain disciplines are responsible for particular properties of the BOM, but there is no single owner.
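
Sharing instead of owning can be made concrete by assigning each BOM property to a responsible discipline. A minimal sketch, assuming a hypothetical responsibility table; a real PLM/ERP setup would enforce this through access rules and workflows rather than a Python dictionary:

```python
# Hypothetical assignment of BOM properties to the discipline responsible for them.
RESPONSIBLE = {
    "material": "engineering",
    "make_or_buy": "manufacturing",
    "work_center": "manufacturing",
    "lead_time_days": "purchasing",
}


def update_bom_line(line: dict, attribute: str, value: object, discipline: str) -> dict:
    """Return an updated copy of a shared BOM line, allowing the change only
    for the discipline responsible for that attribute."""
    owner = RESPONSIBLE.get(attribute)
    if owner is None:
        raise KeyError(f"no responsible discipline defined for {attribute!r}")
    if owner != discipline:
        raise PermissionError(f"{discipline} cannot change {attribute!r} (responsible: {owner})")
    return {**line, attribute: value}


line = {"part_id": "a41f", "material": "AlMg3", "make_or_buy": "buy"}
line = update_bom_line(line, "lead_time_days", 15, "purchasing")
print(line)

try:
    update_bom_line(line, "material", "S235", "purchasing")
except PermissionError as err:
    print("rejected:", err)
```

One shared line, several responsible disciplines: nobody owns the whole record, yet every property has exactly one party allowed to change it.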

Note 2: The whole concept of EBOM and MBOM only makes sense if you deliver repetitive products. For a one-off product, which is more like a project, the engineering process will already have manufacturing in mind. There is no need for a transition between EBOM and MBOM; it would only slow down delivery.

Now let’s look at some EBOM-MBOM specifics.

EBOM phantom assemblies

When extracting an EBOM directly from a 3D CAD structure, there might be subassemblies in the EBOM due to a logical grouping of certain items. You do not want to see these phantom assemblies in the MBOM as they only complicate the structuring of the MBOM or lead to phantom activities. In an EBOM-MBOM transition these phantom assemblies should disappear and the underlying end items should be linked to the higher level.
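
The phantom removal step can be sketched as a recursive transformation. This assumes phantom assemblies are flagged in the EBOM and that quantities multiply through the dissolved level; the item IDs are hypothetical, and items that end up twice under the same parent are not merged here, which a real transition would likely do.

```python
from dataclasses import dataclass, field


@dataclass
class BomNode:
    item_id: str
    qty: int = 1
    phantom: bool = False  # logical CAD grouping only, never built as such
    children: list["BomNode"] = field(default_factory=list)


def remove_phantoms(node: BomNode) -> BomNode:
    """Return a copy in which every phantom subassembly is dissolved and its
    children are attached to the next non-phantom parent, with quantities
    multiplied by the phantom's quantity."""
    new_children: list[BomNode] = []
    for child in node.children:
        resolved = remove_phantoms(child)  # its own phantoms are already gone
        if resolved.phantom:
            for grandchild in resolved.children:
                new_children.append(BomNode(grandchild.item_id,
                                            grandchild.qty * resolved.qty,
                                            grandchild.phantom,
                                            grandchild.children))
        else:
            new_children.append(resolved)
    return BomNode(node.item_id, node.qty, node.phantom, new_children)


# Hypothetical EBOM with a fastener group created only for CAD convenience.
ebom = BomNode("FRAME-ASSY", children=[
    BomNode("GRP-FASTENERS", phantom=True, children=[BomNode("BOLT-M8", 4), BomNode("NUT-M8", 4)]),
    BomNode("SIDE-PANEL", 2),
])
print([(c.item_id, c.qty) for c in remove_phantoms(ebom).children])
```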

EBOM materials

In the EBOM, there might be materials like a rubber tube with a certain length, a strip with a certain length, etc. These materials cannot be purchased in these exact dimensions. Part of the EBOM to MBOM transition is to translate these EBOM items (specifying the exact material) into purchasable MBOM items combined with a fitting operation.
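
A sketch of translating an exact-length EBOM material into a purchasable MBOM item plus a fitting (cutting) operation. The item IDs, the 10 m roll and the cut-only operation are assumptions for illustration; the roll calculation ignores cutting loss and the reuse of offcuts.

```python
import math
from dataclasses import dataclass


@dataclass
class EbomMaterialLine:
    item_id: str
    material: str
    length_mm: float  # exact length specified by engineering
    qty: int          # number of pieces per product


@dataclass
class MbomMaterialLine:
    purchased_item: str        # what purchasing actually buys (here: a roll)
    consumed_length_mm: float  # material consumed per product
    operation: str             # fitting operation added in the MBOM


def to_mbom(line: EbomMaterialLine, stock_item: str) -> MbomMaterialLine:
    """Translate an exact-length EBOM item into a purchasable item plus a cut operation."""
    return MbomMaterialLine(
        purchased_item=stock_item,
        consumed_length_mm=line.length_mm * line.qty,
        operation=f"Cut {line.qty} x {line.length_mm:g} mm of {line.material}",
    )


def rolls_needed(length_mm: float, pieces: int, roll_length_mm: float) -> int:
    """Whole stock rolls to buy for a number of pieces (no cutting loss assumed)."""
    per_roll = int(roll_length_mm // length_mm)
    if per_roll == 0:
        raise ValueError("piece is longer than the stock roll")
    return math.ceil(pieces / per_roll)


# Hypothetical EBOM line: two pieces of 350 mm rubber tube per product.
tube = EbomMaterialLine("EB-5521", "rubber tube 8 mm", length_mm=350, qty=2)
print(to_mbom(tube, "ROLL-RUBBER-8MM-10M"))
print(rolls_needed(350, pieces=40, roll_length_mm=10_000))  # 2
```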

EBOM end-items (make)

For make end-items, approved manufacturers are usually defined, and it is desirable to have multiple manufacturers (certified through the AML) for make end-items, depending on cost, capacity and where the product needs to be manufactured. Therefore, a make end-item in the EBOM will not appear as such in a resolved MBOM.

EBOM end-items (buy)

For buy end-items, there is usually a combination of approved manufacturers (AML) and approved vendors (AVL). The approved manufacturers are defined by engineering, based on part specifications. Approved vendors are defined by manufacturing together with purchasing, based on the approved manufacturers and logistical or commercial conditions.
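
The interplay of AML and AVL can be sketched as a two-step lookup: engineering’s approved manufacturer parts first, then the vendors approved for a given plant. All identifiers below are hypothetical:

```python
# Hypothetical AML: engineering item -> approved manufacturer parts (defined by engineering).
AML = {
    "EB-1001": ["MFR-A:X-55", "MFR-B:Q-12"],
}
# Hypothetical AVL: (manufacturer part, plant) -> approved vendors (manufacturing + purchasing).
AVL = {
    ("MFR-A:X-55", "PLANT-NL"): ["VENDOR-1"],
    ("MFR-B:Q-12", "PLANT-NL"): ["VENDOR-2", "VENDOR-3"],
    ("MFR-B:Q-12", "PLANT-CN"): ["VENDOR-4"],
}


def sourcing_options(ebom_item: str, plant: str) -> list[tuple[str, str]]:
    """All (manufacturer part, vendor) combinations a plant may use for an EBOM item."""
    return [(mfr_part, vendor)
            for mfr_part in AML.get(ebom_item, [])
            for vendor in AVL.get((mfr_part, plant), [])]


print(sourcing_options("EB-1001", "PLANT-NL"))
print(sourcing_options("EB-1001", "PLANT-CN"))
```

A resolved MBOM for a plant would reference one of these options rather than `EB-1001` itself, which is why EBOM items disappear from the MBOM in case of multiple sourcing.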

Are EBOM items and MBOM items different?

There is a debate whether EBOM items should/could appear in an MBOM, or whether EBOM items only live in the EBOM and are connected to resolved items in the MBOM. Based on the previous descriptions of the various EBOM items, you can conclude that a resolved MBOM no longer contains EBOM items in case of multiple sourcing. Only when you have a single manufacturer for an EBOM item could the EBOM item appear in the MBOM. Perhaps this is the current situation in your company, but will it stay the same in the future?

It is up to your business process and type of product which direction you choose. Coming back to one-off products: here, it does not make sense to have multiple manufacturers. In that case, you will see that the EBOM item behaves at the same time as an MBOM item.

What about part numbering?

Luckily, I have reached 1000 words, so let’s keep this debate short. If you want an automated flow of information between PLM and ERP, it is important that shared data is connected through a unique identifier.

Automation does not need intelligent numbering. Therefore, by giving parts in the PLM system and the ERP system a unique, meaningless number, you guarantee digital connectivity.

If you want additional attributes on the PLM or ERP side that describe the part with a number relevant for human identification, on the engineering side or later on the manufacturing side (labeling), this can all be solved.

An interesting result of this approach is that a revision of a part is no longer visible on the ERP side (unless you insist). Each version of an MBOM part in PLM points to a unique MBOM part in ERP, providing error-free sharing of data.
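
A minimal sketch of the "each version points to a unique ERP part" idea, assuming a hypothetical link table in which every released PLM part version gets its own meaningless ERP ID. ERP therefore never sees a revision, and releasing the same version twice returns the same ID:

```python
import uuid


class PlmErpLink:
    """Hypothetical link table: each released PLM part version maps to exactly
    one meaningless ERP part ID, so ERP never needs to see revisions."""

    def __init__(self) -> None:
        self._erp_id: dict[tuple[str, int], str] = {}

    def release(self, plm_part_id: str, version: int) -> str:
        key = (plm_part_id, version)
        if key not in self._erp_id:  # releasing the same version again is idempotent
            self._erp_id[key] = uuid.uuid4().hex
        return self._erp_id[key]


link = PlmErpLink()
v1 = link.release("4be0c1d2", 1)  # hypothetical PLM part ID
v2 = link.release("4be0c1d2", 2)
print(v1 != v2, link.release("4be0c1d2", 1) == v1)  # True True
```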

Conclusion

Life can be simple if you generalize, and if there were no past, no legacy and no data-ownership thinking. The transition from EBOM to MBOM is the crucial point where the real PLM vision is applied. If there is no data sharing at the MBOM level, there are two silos, characteristic of the old linear past.

(See also: From a linear world to a circular and fast)

What do you think? Is more complexity needed?

I will soon be discussing these topics at PDT2015 in Stockholm on October 13-14. Will you be there?

And for Dutch/Belgian readers – October 8th in Bunnik:

On October 8, I will be at the BIM Open 2015 Congres in Bunnik, where I will discuss the similarities between PLM and BIM and what the construction industry can learn from PLM.

This time, I would like to receive some feedback from my readers, as I believe the topic I am discussing here might be similar to a PLM / ERP discussion: a discussion between religions. For the past two years, I have preached a more data-centric approach for PLM instead of file management, and related to this data-centric approach, the concept of a PLM platform (called a Business Platform by CIMdata and an Innovation Platform by Gartner) becomes clear.

What’s the issue?

As I wrote in my earlier post (random PLM future thoughts), I realized that talking about platforms is not that straightforward when meeting companies with their own history and terminology. Some claim they are already using a business platform; others have no clue what makes a platform different from their current PLM implementation. Therefore, I will summarize the different approaches I have seen in my network and give a non-academic opinion as a base for discussion. I am looking forward to your opinion.

The platform approach

My definition of a PLM platform:

- A central repository of data based on a core data model. Information is stored as data in a unique way

- On top of this repository, applications can run, using a subset of the overall data elements and providing dedicated functionality and a user interface to a particular user / role

- Access to the platform is provided through web technology. Storage could be in the cloud.

- External applications and data can be connected, embedded or federated, through an open (standardized?) API

- The PLM platform can be a collection of services and functionality coming from various vendors / suppliers – the app store concept

- The platform approach is THE DREAM for business, being flexible to combine and edit data in any desired context in dedicated apps / environments

In the PLM world, Dassault Systèmes, with its 3DExperience approach, is following this trend, although here you might argue about how easy it is to add external apps to this platform: is it open? Aras and Autodesk might also claim they have a PLM platform, where you might ask the same question, and also whether the depth of the data model and the solutions provided on top of it are mature enough. Finally, SAP can also be considered a platform, but I would not call it a PLM platform at this moment in time. An important question for me would be: how can we achieve openness of a PLM platform?

Your thoughts?

The PLM backbone approach

My definition of a PLM backbone:

- The core PLM functionality is provided by a single, proprietary PLM system

- Additional functionality that is not part of the core development (acquisitions) is connected to the backbone through proprietary interfaces

- External authoring tools are linked to the backbone through integrations or interfaces which could be developed by third parties

- External systems can interface with the PLM backbone through open interfaces

- The PLM backbone is THE DREAM for engineering, as historically this was the domain where PLM started to be implemented

I would consider Siemens and PTC (see picture) the best examples of a PLM backbone approach with their PLM portfolios. Teamcenter and Windchill are both rich PLM systems, further connected to several systems covering the product lifecycle. I am not expert enough to state that the same conclusion is valid for Oracle’s Agile, where I believe the backbone is bigger than the PLM system. What do you think? Will these PLM vendors also move to a platform approach? And what will be the platform?

The Service Bus approach

My understanding of the Service Bus (I am not an IT-expert):

- The Service Bus has a standardized interface to request data or to post data that needs to be stored in other systems

- The Service Bus approach reduces the amount of (custom) interfaces between systems by requiring standardized inputs and outputs per system

- To provide a user with information that is not entirely available in a single system, the service bus needs to acquire the data from other systems, which might not deliver the performance business people expect

- The Service Bus is the IT DREAM as it simplifies the complexity for IT to manage point-to-point solutions between systems and makes an upgrade strategy easier to support.
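
The interface reduction claimed for the service bus is easy to quantify under one simplifying assumption: in the worst case, every pair of systems needs its own point-to-point interface, whereas with a bus each system connects once through standardized inputs and outputs.

```python
def point_to_point_interfaces(n_systems: int) -> int:
    """Worst case: every pair of systems gets its own custom interface."""
    return n_systems * (n_systems - 1) // 2


def service_bus_connections(n_systems: int) -> int:
    """Each system connects once, through standardized inputs and outputs."""
    return n_systems


for n in (4, 8, 12):
    print(n, point_to_point_interfaces(n), service_bus_connections(n))
```

For 8 systems, that is 28 custom interfaces versus 8 bus connections; the gap grows quadratically with the number of systems. In practice not every pair exchanges data, so the real saving is smaller than this upper bound.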

From a very high-level view, the service bus approach has some similarities to a platform. The service bus concept allows the business to select the systems they like most (provided they connect to the service bus) – Image property of IBM.com

The main difference would be the persistence of information: where is the real data stored? I came across the service bus approach more often in the past, where the target was most of the time to integrate PDM functionality (PLM as an enterprise solution was never in scope here).

For the Service Bus approach, I am curious to learn its relevance for future PLM implementations as the challenge would be to provide any user in the company with the relevant information in context. Is the service bus going to be replaced by the platform? Who would be the major players here?

The Business Intelligence approach

I discovered this method in project-centric companies (Oil & Gas companies, EPCs, Construction companies), but strangely enough also at some manufacturing companies, where I would assume integration of systems would bring large benefits.

- Each type of information is managed only in one single system avoiding interfaces or duplication of data.

- Only where needed, data will be pushed from one system to other systems

- Business Intelligence applications extract information from the relevant system and present it in context to the user, giving him/her a better understanding

- Business users will have to work in multiple systems to complete their tasks

- The BI approach is the ULTIMATE IT DREAM as it simplifies their works dramatically and shuts down business demands.
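The BI pattern in the bullets can be sketched as a read-only view: each information type is owned by exactly one system, and the BI layer only reads and combines. A minimal Python sketch, where the system names, the item number `P-100` and all field values are hypothetical placeholders rather than any real product's data model:

```python
from typing import Any

# Hypothetical landscape: each information type lives in exactly one system,
# keyed by item number. No record is duplicated across systems.
SYSTEMS: dict[str, dict[str, dict[str, Any]]] = {
    "document_management": {"P-100": {"spec": "SPEC-7", "spec_rev": "C"}},
    "cad": {"P-100": {"model": "P-100.prt", "cad_rev": "B"}},
    "erp": {"P-100": {"stock": 42, "cost_eur": 12.5}},
}


def bi_context_view(item: str) -> dict[str, Any]:
    """Build a read-only view of everything the owning systems know about one item.

    Nothing is written back to the source systems; the view is rebuilt on every
    request, so it is only as current as the systems it reads from.
    """
    view: dict[str, Any] = {"item": item}
    for system_name, records in SYSTEMS.items():
        for field, value in records.get(item, {}).items():
            view[f"{system_name}.{field}"] = value
    return view


print(bi_context_view("P-100"))
```

The user gets the item in context, but any change still has to be made in the owning system, which is exactly the trade-off the bullets describe.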

I have seen an example where IT dictated that for document management we use product ABC (a well-known Content Management system). Next, for internal documents we use SharePoint. For CAD, we use product PQR as much as possible (heavily adapted) or AutoCAD 2D (to support the minimum). For ERP, the standard system is XYZ (a famous ERP system; you do not lose your job by selecting them), and of course everyone uses Excel as the common interface for exchanging information between people.


It was impossible in this company to have a business view on the solution landscape. As you can imagine, this company’s margins are not (yet) under pressure as their industry is very conservative.

What do you think?

Is the future for PLM in platforms? If yes, what about openness? Who are the candidates to offer such a platform? Or will the lack of industry standards and openness block wider adoption? If no, will there be a massive PLM system in the future, connected to other enterprise systems (ERP/CRM)? Or will PLM be implemented as a collection of smaller systems communicating through an enterprise service bus?

I am looking forward to discussing the topic here and soon during the upcoming Product Innovation conference in Düsseldorf.

Some weeks ago PLMJEN asked me my opinion on Peter Schroer's post and invitation to an ARAS webinar called: Change Management: One Size Will Never Fit All. Change Management is a compelling topic, and I realized I had never written a dedicated post on such an essential topic. The introduction from Peter was excellent:


Change management is the toughest thing inside of PLM. It’s also the most important.

For the rest, the post elaborated on software capabilities and the value of having template processes for various industry practices. I share that opinion when talking to companies that are starting to establish their processes. It is extremely rare that an existing company will change its processes towards the more standard processes delivered by the PLM system when implementing a new system. The rule of thumb is People, Processes and Tools. This is all nicely explained by Stephen Porter in his latest blog post Beware the quick fix successful plm deployment strategies. As I was not able to attend the webinar, here are my more general thoughts on change management and why it is essential for PLM.

Change Management has always been there

It is not that PLM invented change management. Before companies started to use ERP and PDM systems, every company had to deal with managing changes. At that time, their business was mostly local and, compared with today, slow. "Time to market" was more a "Time to Region" issue. Engineering and Manufacturing operated from the same location. Change management was a personal responsibility supported by (paper) documents and individuals. Only with the growing complexity of products, growing global customer demands and increasing regulatory constraints did it become impossible to manage change in an unstructured manner.

Survival of the fittest change organization

I have worked with several companies where change management was a running Excel business. Running can be interpreted in two ways: the current operation could not stop, step back and look into an improvement cycle; and a lot of people were running around to collect, check and validate information in order to make change estimates and decisions based on the collected data.


When a lot of people are running, your business is at risk. A lot of people means your costs for data (re)search and handling are higher than those of a competitor who can do this automatically. Even in countries with low labor costs, a lot of people running becomes a threat at a certain moment. In addition, running people can make mistakes or provide insufficient information, which leads to wrong decisions.

Wrong decisions can be costly. Your product may become too expensive; your project may be delayed significantly because decisions were based on conflicting information between disciplines or suppliers. Additional iterations to fix these issues lead to a longer time to market. Late discoveries can lead to severely high costs, and once the product has been released to the market, the cost can be tremendous.

On the other hand, if making changes becomes difficult because the data has to be collected from various sources through human intervention, organizations might try to avoid making changes.


Somehow this is also an indirect death penalty. The future is for companies that are able to react quickly at any time and implement changes.

The analogy is with a commercial aircraft and a fighter plane. Take the Airbus A380 and a modern fighter jet, the Joint Strike Fighter (JSF). The Airbus A380 brings you comfortably from A to B, as long as A and B are well-prepared places to land. The flight is comfortable because the plane is extremely stable. It is a well-planned trip with an aversion to changes in trajectory.

The JSF, by design, is an unstable plane. Only its computer flight control makes it behave stably in the air. This built-in instability makes it possible to react as quickly as possible to unforeseen situations, preferably faster than the competition. This is a solution designed for change.

Depending on your business, you should admire the JSF concept and try to understand where it is needed in your organization.

Why is change management integrated in PLM so important?

If we consider where changes appear the most, it is evident that most changes occur early in the product lifecycle. As long as they are in the virtual world, with no costs committed to the product, they are relatively cheap. To my surprise, many engineering companies and engineering departments apply change management only outside their own environment. Historically, this is because outside their environment, where prototyping and production are involved, the costs of change are the highest. And our existing ERP system has an Engineering Change process, so let's use that.

Meanwhile, engineering is used to working with best-so-far information. At any moment, every discipline stores its data in a central repository. This could be a directory structure or a PDM system. Everyone is looking at the latest data. Files are overwritten with the latest versions. Data in the PDM system shows the latest version to all users. Hallelujah


And this is where it goes wrong. A mechanical engineer has overlooked a requirement in the specification that has been changed. Yes, the latest version of the 20-page document is there. An electrical engineer has defined a new control system for the engine, but has not noticed that the operating parameters of the motor have been changed. These are typical examples where a best-so-far environment creates visibility, but the individual user can no longer understand the impact of a change (especially when additional sites perform the engineering work).

Here comes the value of change management in PLM. Change Management in PLM can be lightweight in the early design phases, providing checks on changes (baselines) and notifications to the disciplines involved. Approval processes are more like agreements on which changes to implement and their impact on all disciplines.
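A lightweight early-phase check of this kind boils down to comparing the current state against a baseline and telling each affected discipline what has moved. A minimal Python sketch that replays the mechanical/electrical example; the item names, the integer versions and the `used_by` field are hypothetical stand-ins for whatever a PLM system actually stores:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    name: str
    version: int
    used_by: frozenset[str]  # hypothetical: disciplines that depend on this item


def changes_since_baseline(baseline: dict[str, int],
                           current: list[Item]) -> dict[str, list[str]]:
    """Return, per discipline, the items whose version differs from the baseline.

    Items missing from the baseline are treated as changed.
    """
    notifications: dict[str, list[str]] = {}
    for item in current:
        if baseline.get(item.name) != item.version:
            for discipline in sorted(item.used_by):
                notifications.setdefault(discipline, []).append(item.name)
    return notifications


baseline = {"requirements-spec": 3, "motor-parameters": 1, "housing": 5}
current = [
    Item("requirements-spec", 4, frozenset({"mechanical", "electrical"})),
    Item("motor-parameters", 2, frozenset({"electrical"})),
    Item("housing", 5, frozenset({"mechanical"})),
]
print(changes_since_baseline(baseline, current))
```

Unlike a best-so-far folder, the baseline makes the difference explicit: the mechanical engineer is told the specification moved, and the electrical engineer is told about both the specification and the motor parameters.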

Because PLM supports the product definition through the whole product lifecycle, change management can have its own behavior at each stage: in the early stages, a focus on notifications and visibility of change; later, checking the impact based on the maturity of the various disciplines; and finally, when moving into production with materials committed, a strict and organized change mechanism. Only a PLM system can support this gradual flow seamlessly.
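The gradual tightening described here can be expressed as a rule that becomes stricter as the product matures. A minimal Python sketch, assuming three hypothetical maturity levels and illustrative step names; real PLM systems define their own lifecycle states and workflows:

```python
from enum import Enum


class Maturity(Enum):
    CONCEPT = 1      # early design: virtual, costs not yet committed
    DEVELOPMENT = 2  # disciplines maturing their definitions
    PRODUCTION = 3   # materials and production committed


def change_process(maturity: Maturity) -> list[str]:
    """Hypothetical mapping from product maturity to the steps a change must pass."""
    steps = ["notify affected disciplines"]
    if maturity.value >= Maturity.DEVELOPMENT.value:
        steps.append("assess impact per discipline")
    if maturity is Maturity.PRODUCTION:
        steps += ["formal approval by change board", "release change to production"]
    return steps


for stage in Maturity:
    print(stage.name, "->", change_process(stage))
```

The point of the design is that the same change object flows through one system, and only the required steps grow with maturity, instead of switching tools when the product enters production.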


Change Management and ERP

As mentioned before, most manufacturing companies have implemented change management in ERP, as the costs of change are the highest once the product capabilities are committed. However, the ERP system is not the place to explore and iterate towards improved solutions. The ERP system can be the trigger for a change process based on production issues. However, the full implementation of the change requires a change in the product definition, the area where PLM is strong.

NOTE: I am on purpose not mentioning a change in the engineering definition, as in some cases the engineering definition might remain the same and only the manufacturing process or materials need to be adapted. Iterating on the product definition is something PLM supports; it is not an ERP execution matter.

Change Management and Configuration Management

So far we have been discussing how the manufacturing system would be able to offer products based on the right engineering definition. As the definition of each individual product cannot be checked at every moment, there is a need for configuration management (CM). Properly implemented configuration management ensures a consistent relationship between how the product is specified and defined and the way it is produced. Read a refined and precise explanation on Wikipedia.
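One way to picture the consistency that configuration management guards is to compare what a produced unit contains with the released definition it claims to follow. A minimal Python sketch, where the configuration id `PUMP-A/rev2`, the part names and the revision letters are all hypothetical:

```python
from typing import Optional

# Hypothetical released definitions: configuration id -> required part revisions.
RELEASED: dict[str, dict[str, str]] = {
    "PUMP-A/rev2": {"impeller": "C", "motor": "B"},
}


def deviations(config_id: str,
               as_built: dict[str, str]) -> dict[str, tuple[Optional[str], Optional[str]]]:
    """Compare one produced unit against the released definition it claims to follow.

    Returns {part: (expected_revision, built_revision)} for every mismatch,
    including parts missing on either side (shown as None).
    """
    expected = RELEASED[config_id]
    result: dict[str, tuple[Optional[str], Optional[str]]] = {}
    for part in sorted(expected.keys() | as_built.keys()):
        want, got = expected.get(part), as_built.get(part)
        if want != got:
            result[part] = (want, got)
    return result


# A hypothetical unit built with an older motor revision.
print(deviations("PUMP-A/rev2", {"impeller": "C", "motor": "A"}))
```

An empty result means the unit matches its definition; any entry is a deviation that either needs a change or a concession.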


In one of my following posts, I will focus on configuration management practices and why PLM systems and Configuration Management are like Siamese twins.

Conclusion:

Storing your data in a (PLM) system only has value if you are able to keep the actual status of the information and its context. Only then can a person make the right decisions immediately and with the right accuracy. The more systems or manual data handling involved, the less competitive your company will be. Integrated and lean change management means survival!

Last year, I read Clayton Christensen's book "The Innovator's Dilemma: When New Technologies Cause Great Firms to Fail". I was intrigued by how his theory also applies to PLM and wrote about it in a blog post.

Recently, I attended an HBR Webinar "Innovating over the Horizon: How to Survive Disruption and Thrive", which has serious implications for PLM. Listening to Clayton Christensen and Max Wessel, both professors at Harvard Business School, I foresaw numerous consequences demanding attention.


I’d like to highlight some observations for you:

- Disruptive innovation will hit any domain, including the PLM domain

- You are less impacted if your products/services are targeting a job to be done

- ERP has a well-defined job, so not much discussion there

- PLM does not have a clear job, so it is vulnerable to disruption

- Will PLM disappear?

Disruption explained

The above diagram explains it all. Products often come into the market with a performance below customer expectations. The product improves over time, and at a certain moment it reaches that expectation level. Through sustaining innovation, the company keeps improving its product(s) to attract more customers, and eventually starts delivering more than a single customer is asking for.

This is certainly the case in PLM. All the PLM vendors are now able to deliver a lot of functionality around global collaboration, covering the whole product lifecycle. Companies that implement PLM implement just a fraction of these capabilities and still have additional demands. Yet the known PLM vendors nearly always win when a company is searching for a new PLM solution.