Let me start with a confession: as a kid, I was a classic nerd, drawn to soccer and exact sciences. Math and physics weren’t just subjects—they were my playground.

During my education to become a teacher in Physics and Mathematics, I discovered something even more captivating: programming. It started with my first Apple IIe, where I tackled the challenge of programming with limited memory using machine language, analogue/digital interfaces, Pascal, and C.

Later, I turned to Visual Basic and C++, writing programs to simulate math scenarios, automate AutoCAD tasks, and eventually develop solutions on top of SmarTeam.

It was not just work; it was how I relaxed and structured my thinking. Would we now call it vibe coding?

The upside of this experience? Technical and physical concepts never intimidated me – they helped me to see the bigger picture. I was wired to think deeply, patiently, and persistently—skills that have stayed with me ever since.

The switch to the human side

But then I became involved in training and mediation during PLM implementations, where I discovered that technical skills were needed, but that understanding human behavior (not software), communication, and PLM methodology skills mattered even more.

Many implementations at that time stalled: everyone started with great enthusiasm until the results failed to materialize. The solution was not what people expected, was too unstable, or was simply not possible. And from the users’ point of view, it was too complex and frustrating. You can read about one of my experiences from that time: Where is my ROI, Mister Voskuil?

One of those many interesting discussions

By then, the budget was often spent and the enthusiasm gone. One of my favorite quotes at that time was:

“You never get a second first impression.”

In other words: from the start, you need to anticipate user acceptance, avoid a big-bang approach, and begin by understanding and agreeing on the big picture before diving into the details.

How many of you have been in this situation?

Although most people in the PLM community agree that human behavior can make or break a PLM implementation, most discussions still focus on tools and technologies.

Organizational Change Management is often considered too soft to address, particularly in so-called result-driven organizations. Shut up and do the work!

Recently, some PLM software vendors have mentioned OCM (Organizational Change Management) as an important activity, sometimes even offering it themselves. Still, their business model is to sell as many software licenses as possible; therefore, they promise best-case scenarios and full coverage of business scenarios.

Would you buy your PLM software from a company that says:

“Our software is great; however, you also need to address a business change program.”

Or would you buy from a company that says: “We are a market leader in your business, and thousands of users are currently working happily with our software.”

Based on my experience as a PLM coach, I believe every PLM implementation should be a people and business discussion first, preferably sponsored at C-level, before jumping to solutions.

The challenge is that a human-centric approach depends on people and is therefore hard to scale: it is a people business, not a software tools business.

Digital Transformation is failing

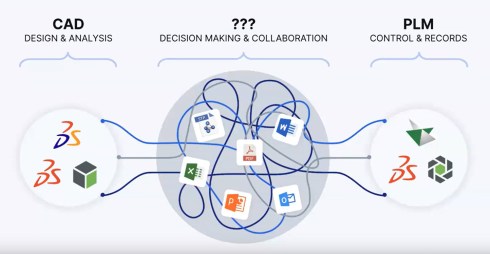

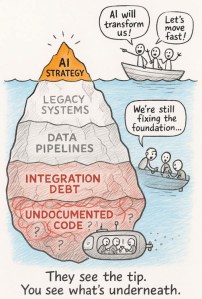

While preparing for the upcoming Share PLM Summit in Jerez on May 19-20, I looked back at why real digital transformation in the PLM domain is still failing: we mostly keep working in a linear, document-driven operating model.

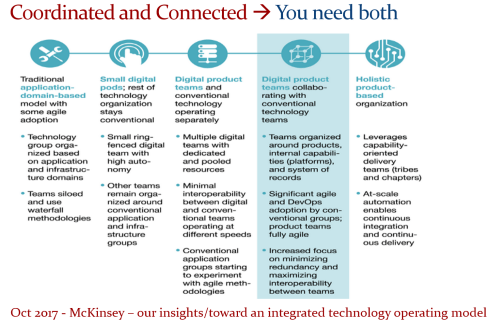

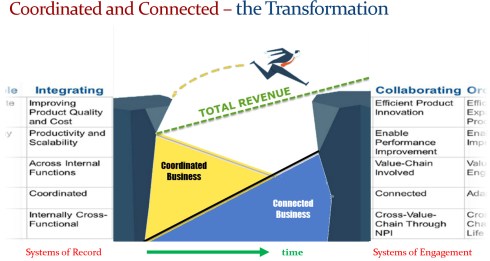

My opinion at this moment: For existing organizations, the move from coordinated to coordinated and connected is too complex for humans.

Despite a great McKinsey white paper on how organizations could move away from a linear, often document-driven structure toward working in multidisciplinary product teams, most organizations have made no real progress.

Changing the organizational structure appears to be very difficult. This relates to Conway’s Law, which states that systems mirror the structure of the organization that builds them, making it a challenge to determine where to start.

Not starting means not failing. And failing is the worst thing you can do at the C-level.

And now there is “product memory.”

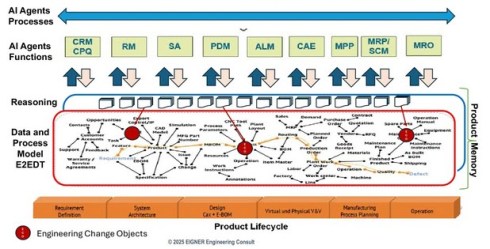

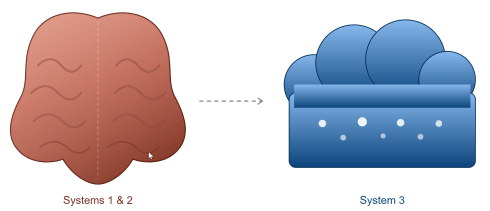

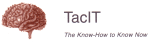

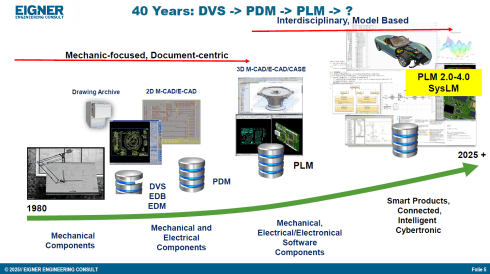

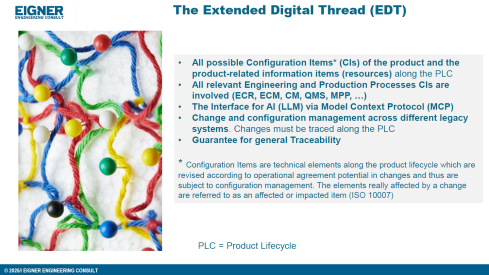

Is “product memory”, based on an agentic AI layer and an underlying ontology, the next big thing after the connected digital enterprise? The concept was initially formulated by Benedict Smith and later translated into a more PLM-specific scope by Martin Eigner and Oleg Shilovitsky. With it, we are trying to combine the (boring) systems-of-record data with all the reasoning and decision-making, because that is where the knowledge sits.

You can follow the thought experiments when reading the True Intelligence newsletters from the start.

A theme that also came up in other “future of PLM” discussions was that traditional PLM only stores the results of the development and delivery process; the reasoning behind them is missing.

In my opinion, Colab Software was one of the first startups complementary to PLM, focusing on capturing the discussions and decisions made during a design review, as the older image below shows. Today, Colab Software is much more advanced, with an AI-supported infrastructure.

Still, the image shows the value: the reasoning captured from the communication between different stakeholders in the product development process during design reviews.

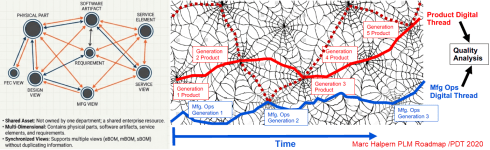

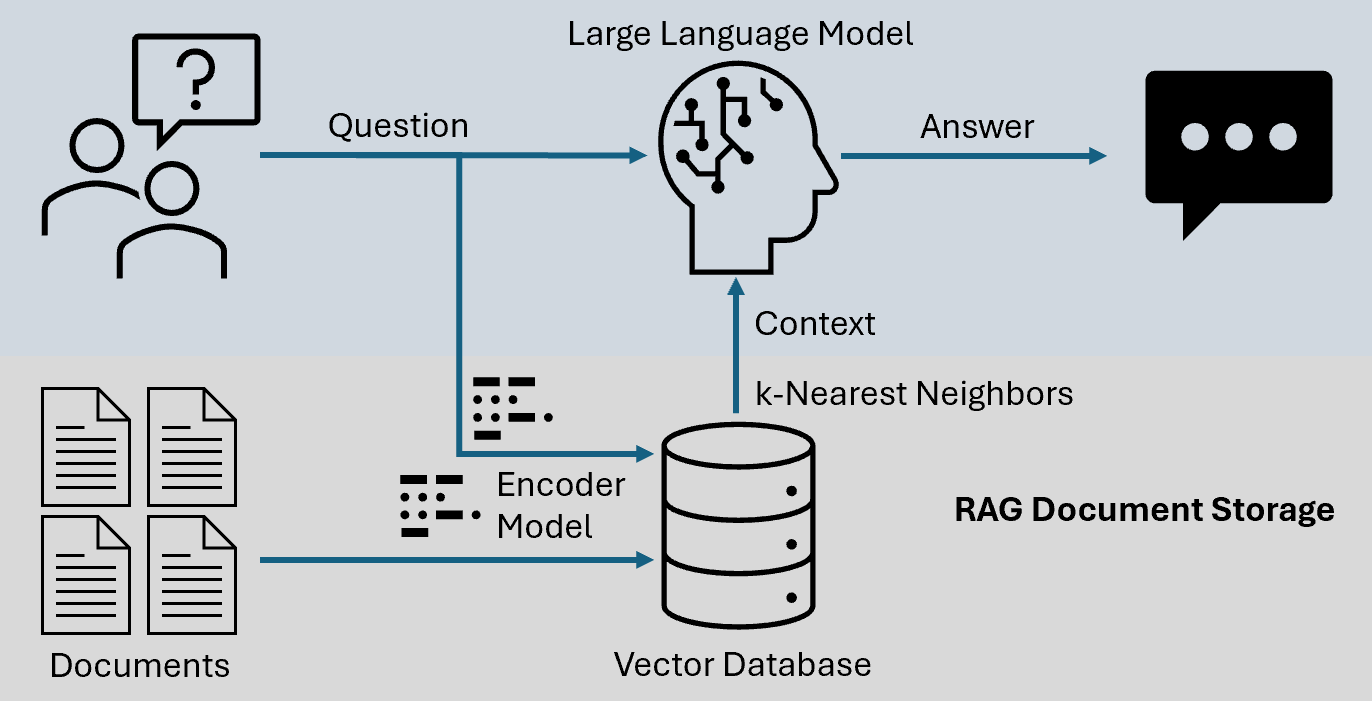

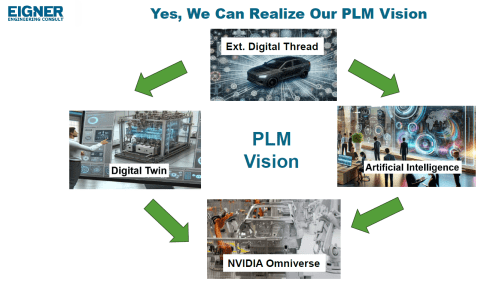

Closer to the traditional PLM domain, Martin and Oleg started developing the concept of an agentic AI enterprise driven by a graph-based layer on top of existing enterprise systems, as Martin’s image below illustrates.

Oleg stays (in my view) closer to the traditional PLM enterprise world, e.g., in his post Product Memory Architecture: How PLM Loses Engineering Knowledge and What Comes Next.

Martin, on the other hand, zoomed in on his day-to-day customer base in Germany when writing this post: The Actual Concept of Product Memory based on a Digital Thread, with a vision for the upcoming five years.

In addition, Jan Bosch, less PLM-focused but very data-driven, wrote a complementary post on his blog about the agentic AI approach: From Copilot to Colleague – the rise of agentic AI.

An interesting quote from this post, valid for us all:

Agent systems require investment in data architecture, workflow mapping, governance frameworks and operational monitoring. Those investments compound. The organization that has deployed agents across its revenue cycle, supply chain and finance operations simultaneously develops deep operational expertise in running agentic systems, which is itself a form of competitive advantage.

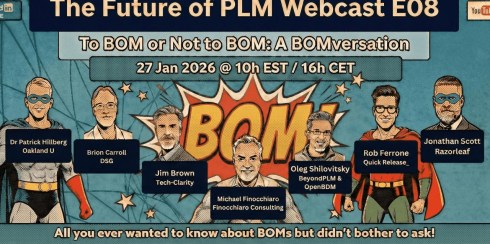

While I was finalizing this post, an interesting discussion about product memory took place at The Future of PLM: Introducing Product Memory, organized by Fino, also known as Michael Finocchiaro.

As a “techie,” I enjoyed following the discussion about a future infrastructure for product knowledge. The term “product memory” seems a little overhyped, as if information that is not directly accessible through agents were a cause of failure. The elephant in the room is where and how to start.

Enjoy the dialogue here:

What about a product memory trauma?

In the past, when discussing knowledge graphs, I already posed the question:

“How can knowledge graphs unlearn?”

In the techie world, there has always been a hypothetical answer to this question, but will unlearning actually happen in a product memory environment where not everything is 100 percent exact and correct? Patrick Hillberg, one of the few PLM teachers, can educate you all about seemingly small mistakes with a big impact.

During the product memory discussion, I heard a statement that only validated data is allowed to be part of the memory.

Has anyone considered how utopian this statement is?

The ambitious claim that product memory would lead to a single source of truth is, for me, also utopian. Data that is 100 percent correct does not exist, nor will 100 percent accurate decisions. At best, it will be the most likely truth for the moment.

Now compare this with the human brain: after a serious accident, the person involved may be left with trauma. Then you need a psychiatrist to treat the trauma, which means creating other memory constructs, in effect rewiring the brain.

While watching this interesting dialogue with Rob Ferrone (the original Product Data PLuMber) about how Quick Release became a significant consultancy firm with a pragmatic focus on making data flow (old image below), I had a new thought.

With his entrepreneurial skills, Rob might soon start a new company fixing product memory traumas, as data governance becomes a commodity.

Will the product data plumber become the first product memory shrink?

Conclusion

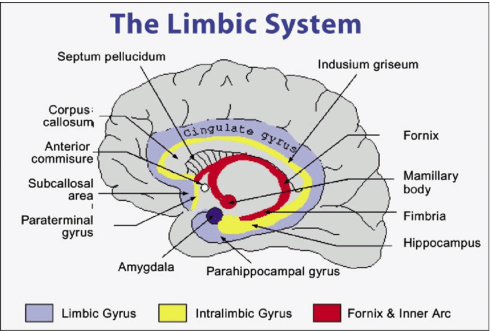

We are experiencing a fast-moving convergence of future PLM concepts, and the image from Martin Eigner nicely represents a possible architecture based on “product memory.” The challenge I see is whether we can implement such an architecture so that it is reliable and supported by humans. Humans still run on their old hardware, the limbic brain, which will try to escape from a perfect world with a single source of truth. They like their own truth.

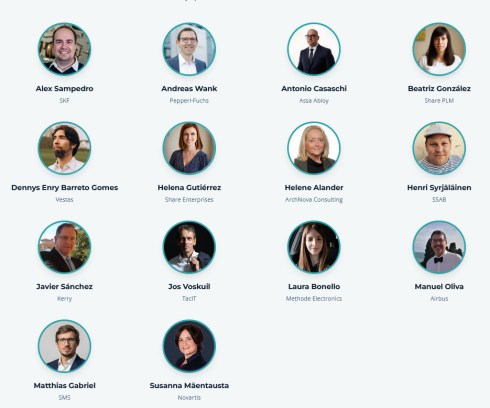

This was 2025. This year: the same atmosphere, more experienced, bigger, and with more to discuss.

Do you ever think about where we’ll be ten years from now? I’ve noticed I ask that question more and more these days, probably because I now have the time, no longer being involved in day-to-day business and alerts.

Interestingly, we tend to assume that long-term thinking is someone else’s job — left to business management and governments. Roadmaps, strategies, and vision stories have always been part of my work with companies.

And yet, the dominant reality right now is a dramatic focus on the short term — driven by populism on one side and quarterly profit targets on the other. The result is a collective inability to make decisions that matter for the next decade, let alone the next generation.

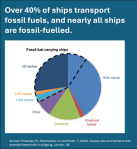

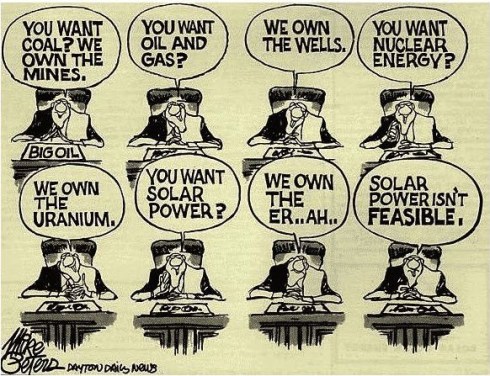

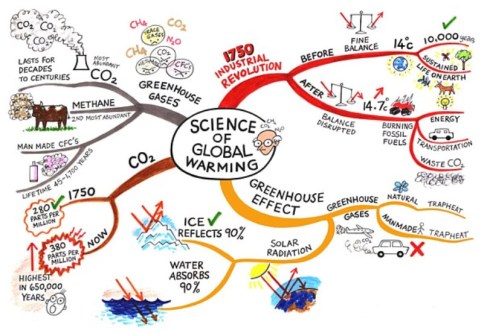

The current war in the Middle East has made something painfully visible that many of us already knew: we are dangerously dependent on fossil fuels.

Around 40 percent of global shipping is tied to fossil fuel supply chains. Countries that have not invested in energy independence are now feeling that vulnerability acutely.

The energy transition is not just an environmental ambition — it is a geopolitical necessity.

- China understood this years ago and has been investing accordingly.

- AI data centers are now one of the fastest-growing sources of electricity demand, and even in Texas, they are building wind and solar parks to keep that energy demand under their own control.

- And Cuba — pushed by American sanctions — has been forced to innovate into wind and solar energy, with Chinese support. These are not coincidences.

They are signals that working on an energy transition makes you less vulnerable!

A real “burning platform”!

While we see burning platforms in the Middle East, we are also in a classic “burning platform” situation — a phrase from the world of change management that captures a simple truth: people only change when staying the same becomes more costly than changing.

It’s a depressing observation about human nature — and one I keep coming back to whenever I see exciting possibilities on the horizon that we simply refuse to act on.

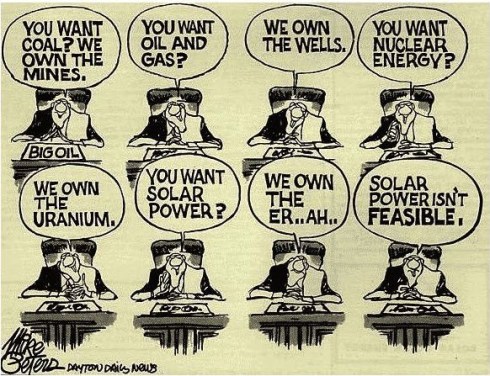

Fossil fuel dependency is one burning platform, currently exploited by those countries and companies that benefit from this industry.

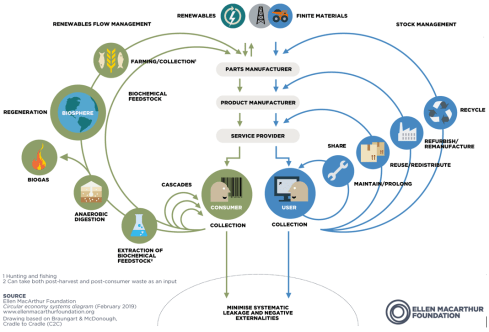

The downside is that the path towards a more circular economy — reducing waste, rethinking production, designing for longevity — is equally urgent and equally neglected.

This is precisely why the PLM Green Global Alliance (PGGA) exists — to keep these conversations alive and focus on the topics that support a sustainable future.

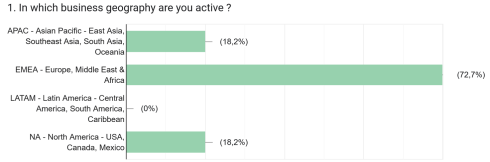

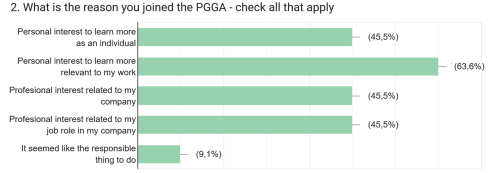

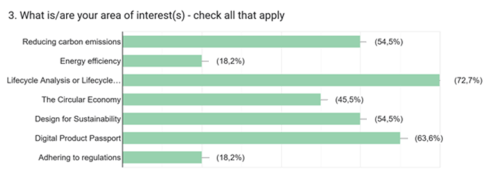

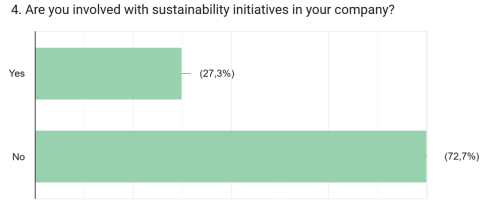

Four weeks ago, I launched a survey among our new LinkedIn group members. Due to a low response rate, I extended it to the whole group two weeks later.

The takeaway? Even within this community, the energy transition and sustainability don’t appear to feel like a burning platform — something demanding urgent action.

PLM Green Global Alliance survey

A quick overview of the responses — given the low number of replies, treat this as an indication rather than a statistically solid survey.

Although we launched the PGGA as a truly GLOBAL alliance — with core team members from both the US and Europe — the membership skews heavily toward the EMEA region. The political climate and culture of each region explain a lot about that.

It’s encouraging to see that most people joined out of personal interest, with professional motivations also playing a role. That tells us the PGGA needs to keep its focus on sharing real experiences — not just theory.

LCA (Life Cycle Assessment, also called Life Cycle Analysis) stands out as a strong area of interest, and the good news is that several of our core team members are actively working on it. Don’t hesitate to post your questions to the group.

On the Digital Product Passport (DPP), we’re planning an interview and/or webinar. The DPP is a great example of a topic that’s as much about digitizing product information as it is about methodology.

As you may have seen in last week’s post “The show must go on – but will it be sustainable?”, Erik Rieger and Matthew Sullivan, the Design for Sustainability team, are actively looking for more participants to help shape guidance in this area.

The answers illustrate that for most people, working on sustainability activities is (still) not part of their daily mission.

Question 5 allowed the participants to vote for topics of interest, and we can summarize the answers as follows:

- Understand what PLM solution providers are offering (we continue with our interviews)

- Discuss how to determine the carbon impact/LCA across the full scope, not only the design scope, and how various platforms contribute to it in the different lifecycle stages.

- Design for Sustainability guidance and info

- The role of PLM and AI in the context of sustainability

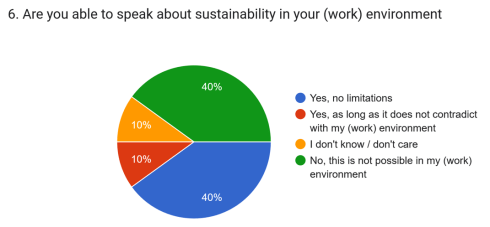

Since the survey was anonymous, we can’t link answers to specific regions. But we’re aware that in some countries, polarization has made certain topics off-limits — either by mandate or out of fear of a difficult working atmosphere.

The last two questions asked about potential involvement in the PGGA. Three people responded positively, offering to support the PGGA actively.

Within the PGGA, everyone is welcome to share their perspective — with respect for those who see it differently. It’s not about being right or wrong. It’s about the dialogue, and about finding paths forward to a future that’s sustainable not just for the planet, but for businesses and the people within them.

A low response or apathy?

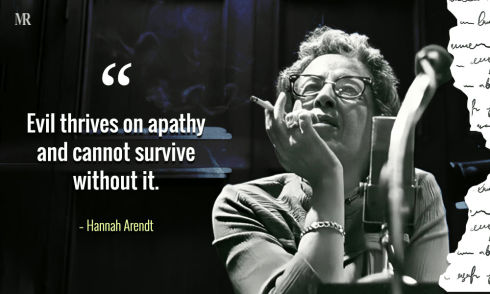

The survey results are interesting on their own — but when you combine them with the low response rate, they say something more: even in communities that care, mobilizing action is hard.

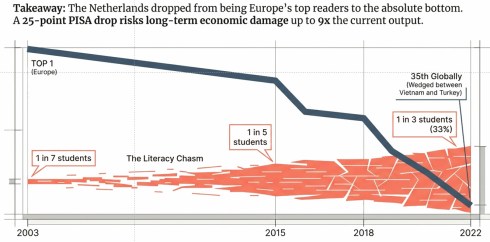

Are we too busy with the short term, or have we become apathetic to what is happening around us and have the feeling our efforts do not matter?

On that last point, I keep thinking of Hannah Arendt, the German-American historian and philosopher who lived from October 1906 until December 1975.

Her famous book, published after the Second World War, is The Origins of Totalitarianism (1951), an alarming book if you read it in today’s context.

My favorite quote from this book:

Written in the context of the Holocaust, it explained how the indifference of ordinary people allowed atrocities to unfold. Arendt warns against moral detachment. Staying informed and engaged takes effort — but it’s the effort that matters.

Today, she might write:

“Evil thrives on social media, and cannot exist without it.”

To conclude

So what can we do? The conclusion is simple, even if the execution is not: don’t just watch it burn. Every one of us has a sphere of influence: in our companies, in our communities, and in our professional networks. The energy transition, the circular economy, and the push for longer-term thinking will not happen because a government issued a directive or a CEO signed a strategy paper. They happen because individuals decide to make them happen within their sphere of influence.

Where are you standing?

Respond with a “like” if you care!

Recently, I have been reading some interesting posts beyond all the technical discussions related to PLM and AI. Is PLM becoming obsolete? Are we heading toward a new type of infrastructure based on MCP agents? Are these agents an example of new ways of collaboration?

Collaboration – it pops up everywhere!

Here is a quote from the article that triggered me:

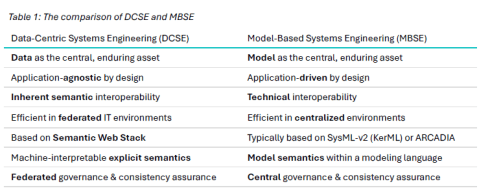

The **number one reason** organizations deploy MBSE is not simulation or architecture development. It is **enhanced collaboration and communication**, at 67%. But here is the uncomfortable part.

Only 24% reported actually achieving collaboration as a business outcome. That is a 43-point gap between intent and result. Traceability is even worse — 48% deploy MBSE for it, 9% say they have realized it.

What if the problem is not that MBSE fails to deliver collaboration, but that most organizations **never define what better collaboration looks like** in measurable terms?

Chad Jackson’s article aligns with many discussions I have had with companies about PLM (and MBSE), and it inspired me to focus on collaboration this time.

How do we measure collaboration?

My 2015 blog post has the same title: How do you measure collaboration? It was written at a time when PLM collaboration had to compete with ERP execution stories. According to some ERP vendors, engineering collaboration was often an inefficient process to be fixed in the future.

ERP always had a strong voice at the management level—boxes on an org chart, reporting lines, clear ownership and KPIs flowing upward. You could see how the company was performing.

From the management side, accountability flows downward. The architecture of the organization mirrors the architecture of the product, and the architecture of the product mirrors the architecture of the organization.

We have known this for decades; it is Conway’s Law. Yet we are still surprised when silos emerge exactly where we designed them.

The Management Dilemma

In many of my engagements, management struggles to understand the value of collaboration because there is no direct line between collaboration and immediate performance. Revenue can be measured. Cycle times can be measured. Defects can be measured. Even employee turnover can be measured.

But collaboration? What is the KPI?

It is a fair question. If something cannot be quantified, it becomes subjective and depends on gut feelings. And if it cannot be tied directly to quarterly results, it often becomes optional.

The problem is not that collaboration has no impact on performance – look at the introduction of email in companies. Did your company make a business case for that?

Still, email improved collaboration a lot, although it sometimes became a burden with all the CC messages and epistles exchanged.

Collaboration has a deep and systematic impact, but that impact is indirect, delayed, and distributed. It reduces friction, can improve shared understanding, and can prevent expensive rework.

The return on investment of collaboration is real, but it does not show up as a clean, linear metric.

For a hierarchical and linearly structured organization, horizontal collaboration is often hard to “sell.”

Back to Conway’s Law

Organizational structure shapes communication patterns. Communication patterns shape systems.

If your organization is vertical, your product will be vertical. If your incentives are local, your decisions will be local. If your teams are isolated, your solutions will be fragmented.

You cannot expect horizontal behavior from a vertically optimized structure without friction.

Disconnected collaboration initiatives fail because they try to overlay horizontal tools on top of vertical incentives.

Typical examples are introducing a new collaboration platform or shared workspace technology in the hope of stimulating collaboration.

But the underlying structure remains untouched. People are still measured on local performance. Budgets are still allocated per department. Promotions still reward vertical success.

First question to ask in your company: Who is responsible for your PLM/collaboration infrastructure for non-transactional information?

Most likely, it is in the IT or Engineering silo, rarely on a higher organizational level.

And then we are surprised when collaboration stalls?

The Myth of the Tool

Whenever collaboration becomes a pain, people look for IT tools as a cure.

“We need better platforms.”

“We need integrated systems.”

and now:

“We need AI – the AI agents will do the collaboration for us.”

Tools matter, but they are amplifiers. They amplify existing behavior; they do not create it. While finalizing this article, I came across a post from Dr. Sebastian Wernicke containing this quote:

Agents are software. Maturity is culture. And culture, inconveniently, doesn’t come with an install package.

If trust is low, a collaboration platform becomes a battlefield. If incentives are misaligned, shared dashboards become weapons. If fear dominates, transparency becomes a threat.

Collaboration is not a software problem. It is a human problem. Which brings us to something rarely discussed in boardrooms: the intrinsic motivation of employees.

The Limbic Brain Is Always There

Beneath the rational layer of strategy and planning sits something older: the limbic system. The part of us that cares about belonging, safety, recognition, autonomy, and purpose.

Collaboration thrives when the limbic brain’s needs are met. It collapses when they are threatened.

- If people feel unsafe, they protect information!

- If they feel undervalued, they withdraw effort!

- If they feel controlled, they resist alignment!

You cannot mandate collaboration if the emotional system of the organization is defensive.

The question is not “How do we force collaboration?”

The question is “How do we create conditions where collaboration feels natural?”

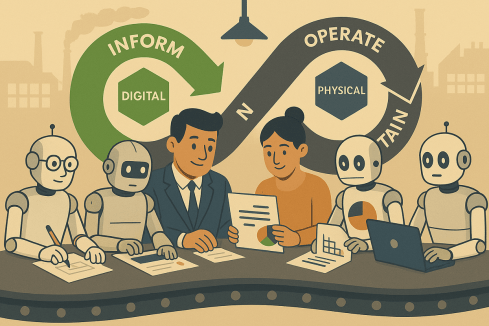

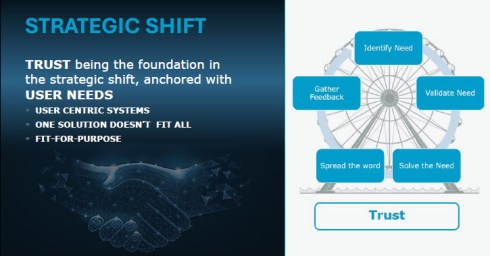

And that requires leaders to connect to the human, not just to the role or an artificial intelligence solution. They should be inspired by this iconic image from Share PLM:

Besides a difficult-to-quantify ROI, there is another reason why collaboration struggles to gain executive traction: it rarely creates immediate success.

It prevents future failure, and we humans generally do not prioritize prevention; think of our environmental, financial, and even health behavior. Although prevention usually has the lowest cost, fixing the damage afterward seems to lie in our nature.

For companies, it is easier to celebrate the hero who fixes a late-stage integration disaster than the quiet team that prevented it months earlier through cross-functional dialogue.

For me, the firefighters are the biggest challenge to successfully implementing a PLM infrastructure. The image to the left comes from a 2014 presentation when discussing potential resistance to a successful PLM implementation.

In vertical systems, firefighting is visible. Prevention is silent, and therefore collaboration activities feel like a cost center rather than a strategic lever.

Where to Push, Where to Invest?

If you cannot directly measure collaboration, where should you push? Not in tools alone, slogans or one-off workshops. Invest in shared experiences.

When people meet outside their vertical silos, something subtle shifts. They see faces instead of functions. They understand constraints instead of assuming incompetence. They replace narratives with conversations.

Note: shared experiences are not the same as the scheduled online meetings that became popular during and after COVID. In many companies, these follow a rigid regime of enforced collaboration, often back-to-back, and usually lack the typical “coffee machine” moments.

Also, among events where people share experiences, there is a difference between a traditional, vertical PLM/CM/IT/ERP conference, where specialists focus on one discipline, and a human-centric conference, where people share their experiences of working in an organization.

The Share PLM Summit in May last year was an eye-opener for me. Starting from the human perspective brought a lot of energy and willingness to discuss various insights – collaboration at its best.

Events, summits, workshops—when done well—create human connection. They remind participants that behind every deliverable sits a person trying to do meaningful work.

The focus on the human perspective is not soft. It is strategic because collaboration is not primarily about information exchange. It is about relationship quality and trust.

The Real Question

The question is not whether collaboration is valuable. The question is whether we are willing to adjust our vertical incentives to make it possible.

Collaboration is not free. It requires time. It requires emotional energy. It requires psychological safety. It sometimes requires giving up local control for global benefit.

In systems terms, it requires shifting from local optimization to whole-system optimization.

That is uncomfortable.

But if our products are complex, interconnected, and rapidly evolving—as most are today—then vertical thinking alone is no longer sufficient. The world has become horizontal, even if our org charts have not.

And perhaps the real challenge is not how to measure collaboration, but how to design organizations where collaboration is no longer something we need to sell at all. A McKinsey article might inspire you for this transition; it did for me: Toward an integrated technology operating model.

Beyond AI

While everyone talks and writes about AI, I do not believe AI will solve the collaboration issue. Collaboration with AI agents will certainly increase personal and organizational effectiveness, but it never touches our limbic brain, the irreplaceable part that makes us human and unique.

There will always be a need for that, unless we become numb and addicted to AI environments. Various studies are emerging on how AI may “untrain” our brain muscles, reducing patience and deep thinking. Finding a new human balance is crucial.

Conclusion

Triggered by Chad Jackson’s post about MBSE and collaboration, I took the time to deep-dive into the aspects of collaboration in the PLM domain. How do you manage collaboration?

Come and share your experiences at the upcoming Share PLM 2026 summit, 19-20 May in Jerez. The title of my keynote: Are Humans Still Resources? Agentic AI and the Future of Work and PLM.

This blog post is especially written for our PLM Green Global Alliance LinkedIn members, a message from a “boomer” to the next generation of PLM enthusiasts.

If you belong to that next generation, please read until the end and share your thoughts.

With last week’s announcement that the US government no longer treats greenhouse gas emissions as a threat to the planet or climate, we see a push to remove regulations that prevent companies from continuing or expanding business without considering the broader consequences for other countries and future generations.

It feels like a short-term, greedy decision, largely influenced by those who benefit from fossil-carbon economies. Decisions like this make the energy transition harder, because people tend to follow the path of least resistance.

Transitions are never simple. But when science is ignored, data is removed, and opinions replace facts, we are no longer supporting a transition — we are actively working against it.

My Story

When I started working in the PLM domain in 1999, climate change was already a topic in the background of society. The 1972 Limits to Growth report by the Club of Rome had created waves long before, encouraging some people to rethink business and lifestyle choices.

For me, however, it stayed outside my daily focus. I was at the beginning of my career, excited about the new challenges.

It is also important to note that connecting to the internet with a 28k modem was the standard, in a world without social media constantly reminding us of global issues.

I enjoyed my role as the “Flying Dutchman,” travelling around the world to support PLM implementations and discussions. Flying was simply part of the job. Real communication meant being in the same room; early phone and video calls were expensive, awkward, and often ineffective. PLM was — and still is — a human business.

Back then, the effects of carbon emissions and global warming felt distant, almost abstract. Only around 2014 did the conversation become more mainstream for me, helped by social media, before algorithms and bots began driving polarization.

In 2015, while writing about PLM and global warming, I realized something that still resonates today: even when we understand change is needed, we often stick to familiar habits, because investments in the future rarely deliver immediate ROI for ourselves or our shareholders.

The PLM Green Global Alliance

When Rich McFall approached me in 2019 with the idea of creating an alliance where people and companies could share ideas and experiences around sustainability in the PLM domain, I was immediately interested — for two reasons.

- First, there was a certain sense of responsibility related to my past activities as the Flying Dutchman. Not guilt — life is about learning and gaining insight — but awareness that I needed to change, even if the past could not be changed.

- Second, and more importantly, the PLM Green Global Alliance offered a way to contribute. It gave me a reason to act — for personal peace of mind and for future generations. Not only for my children or grandchildren, but for all those who will share this planet with them.

In the first years of the PGGA, we saw strong engagement from younger professionals. Over time, however, we noticed that career priorities often came first — which is understandable.

Like me at the start of my career, many focus first on building their future. Career and sustainability can coexist, but investing extra time in long-term change is not easy when daily responsibilities already demand so much.

Your Chance to Work on the Future

The real challenge lies with those willing to go the extra mile — staying focused on today’s business while also investing energy in the long-term future.

At the same time, I understand that not everyone is in a position to speak out or dedicate time to sustainability initiatives. Circumstances differ. For many, current responsibilities leave little space for additional commitments.

Still, for those willing to join us, we have two requests to better understand your expectations.

Two weeks ago, I connected with our 40 newest members of the PLM Green Global Alliance. We are now close to 1,600 members — up from around 1,500 in September 2025, as mentioned in Working on the Long Term.

That post was a gentle call to action. Seeing our PGGA membership continue to grow is encouraging — and naturally raises a question:

1. What motivates people to join the PGGA LinkedIn group?

So far, only a small number of the recent new members have completed a survey sent specifically to them to explore changing priorities. Due to the low response, we extended the invitation to all members. We are curious about your expectations, and quietly hopeful about your involvement.

If you haven’t filled in the survey yet, please click here and share your feedback. The survey is anonymous unless you choose to leave your details for follow-up. We will share the results in about two weeks.

2. Design for Sustainability – your contribution?

Last year, Erik Rieger and Matthew Sullivan launched a new workgroup within the PLM Green Global Alliance focused on Design for Sustainability. While the initial energy was strong, changes in personal priorities meant the team could not continue at the pace they hoped. Since many new members have joined since last May, we decided to relaunch the initiative.

If you are interested in contributing to the revival of Design for Sustainability, please take five minutes to complete the short survey. Your input will help shape the direction of the DfS working group and frame future discussions.

Note: If you are worried about clicking on the survey links, you can always contact us directly (in private) to share your ambition.

Conclusion

The outside world often pushes us to focus only on daily business. In some places, there is even active pressure to avoid long-term sustainability investments. Remember that pressure often comes from those invested in keeping the current system unchanged.

If you care about the future — your generation and those that follow — stay engaged. Small actions by millions of people can create meaningful change.

We look forward to your input and participation.

— says the boomer who still cares 😉

Over the last month, it seems that people in my ecosystem are incredibly focused on “THE BOM” combined with AI agents working around the clock. One reason for this impression is, of course, my irregular participation in the Future of PLM panel discussions, moderated and organized by Michael Finocchiaro.

Yesterday, the continuously growing Future of PLM team held another interesting discussion: “A BOMversation”. You can watch the replay and the comments during the debate here: To BOM or Not to BOM: A BOMversation

On the other hand, there is Prof. Jorg Fischer with his provocative post: 📌 2026 – The year we have to unlearn BOMs!

Sounds like a dramatic opening, but when you read his post and my post below, you will learn that there is a lot of (conceptual) alignment.

Then there are PLM vendors who announce “next-generation BOM management,” startup companies that promise AI-powered configuration engines, and consultants who explain how the BOM has become the foundation of digital transformation (I do not think so).

And as Anup Karumanchi states, BOMs can be the reason production keeps breaking.

I must confess that I also have a strong opinion about the various BOMs and their application in multiple industries.

My 2019 blog post, The importance of EBOM and MBOM, is among my three most-read posts. BOM discussions, single BOM, multiview BOM, etc., always attract an audience.

I continuously observe a big challenge at the companies I am working with – the difference between theory and reality.

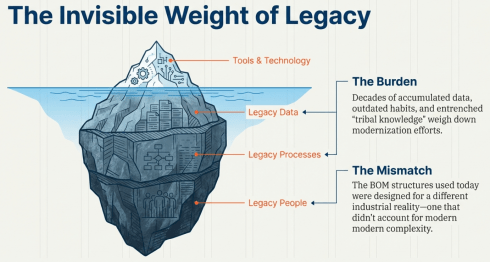

If the BOM is so important, why do so many organizations still struggle to make it work across engineering, manufacturing, supply chain, and service?

The answer is two-fold: LEGACY DATA, PROCESSES and PEOPLE, and the understanding that the BOM we are using today was designed for a different industrial reality.

Let me share my experiences, which take longer to digest than an entertaining webinar.

Some BOM history and theory

Historically, the BOM was a production artifact. It described what was needed to build something and in what quantities. When PLM systems emerged, the 3D CAD model structure became the authoritative structure representing product definition, driven mainly by the PLM vendors with dominant 3D CAD tools in their portfolio.

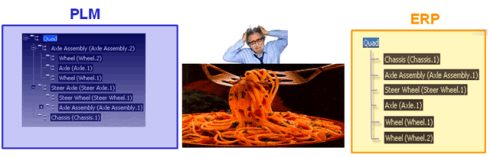

As the various disciplines in the company were not integrated at all, the BOM structure derived from the 3D CAD model was often a simplified way to prepare a BOM for ERP. The transfer to ERP was done manually (retyping the structure in ERP), semi-automatically (using Excel export and import with some manipulation) or automatically through an “intelligent” interface.

There are still a lot of companies working this way, probably because, in a siloed organization, no one owns or drives a smooth flow of information through the company.

The need for an eBOM and mBOM

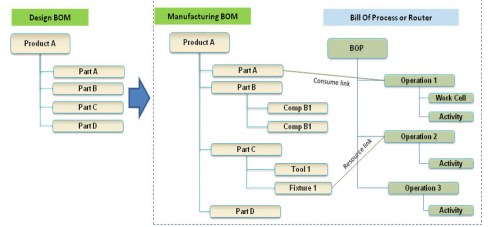

When companies become more mature and start to implement a PLM system, they will discover, depending on their core business processes, that it makes sense to split the BOM concept into a specification structure, the eBOM, and a manufacturing structure for ERP, the mBOM.

The advantage of this split is that the engineering specification can remain stable over time, as it provides a functional view of the product with its functional assemblies and part definitions.

This definition needs to be resolved and adapted for a specific plant with its local suppliers and resources. PLM systems often support the transformation from the eBOM to a proposed mBOM and, when done more completely, to a Bill of Process.

The advantages of a split in an eBOM and an mBOM are:

- Fewer engineering changes when supplier parts change

- Centralized control of all product IP related to its specifications (eBOM/3D CAD)

- Efficient support for modularity, as each module has its own lifecycle and can be used in multiple products.
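To make the eBOM/mBOM split more tangible, here is a minimal Python sketch under simplifying assumptions: the eBOM is a nested structure of part definitions, and each plant has a hypothetical sourcing table (`SOURCING`) that resolves a part definition to the supplier part it buys. Real mBOMs are also restructured by assembly sequence and plant resources (the Bill of Process), which this sketch ignores. It only illustrates why a supplier change touches the plant resolution and not the engineering specification.

```python
from dataclasses import dataclass, field


@dataclass
class EbomItem:
    """A functional part definition in the engineering specification (eBOM)."""
    part_id: str
    description: str
    quantity: int  # quantity per parent
    children: list["EbomItem"] = field(default_factory=list)


# Hypothetical plant-specific sourcing: eBOM part definition -> purchased supplier part.
SOURCING: dict[str, dict[str, str]] = {
    "plant_nl": {"P-100": "SUP-A-100", "P-200": "SUP-B-200"},
    "plant_us": {"P-100": "SUP-C-100", "P-200": "SUP-B-200"},
}


def derive_mbom(item: EbomItem, plant: str, level: int = 0) -> list[tuple[int, str, int]]:
    """Resolve the eBOM for one plant as indented (level, part number, quantity) rows.

    Parts without a sourcing entry (e.g. assemblies made in-house) keep their eBOM id.
    """
    part = SOURCING[plant].get(item.part_id, item.part_id)
    rows = [(level, part, item.quantity)]
    for child in item.children:
        rows.extend(derive_mbom(child, plant, level + 1))
    return rows


pump = EbomItem("A-1", "Pump assembly", 1, [
    EbomItem("P-100", "Motor", 1),
    EbomItem("P-200", "Seal kit", 2),
])

before = derive_mbom(pump, "plant_nl")
SOURCING["plant_nl"]["P-100"] = "SUP-D-100"  # supplier switch in one plant only
after = derive_mbom(pump, "plant_nl")

print(before)  # [(0, 'A-1', 1), (1, 'SUP-A-100', 1), (1, 'SUP-B-200', 2)]
print(after)   # [(0, 'A-1', 1), (1, 'SUP-D-100', 1), (1, 'SUP-B-200', 2)]
```

The supplier switch updates a single sourcing entry; the `pump` eBOM object is never modified, which is the stability argument made above.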

Implementing an eBOM/mBOM concept

The theory, the methodology and implementation are clear, and you can ask ChatGPT and others to support you in this step.

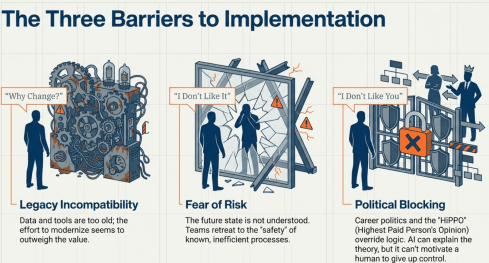

However, where ChatGPT and service providers often fail is in motivating a company to take these next steps, because either their legacy data and tools are incompatible (WHY CHANGE?), the future is not understood and feels risky (I DON’T LIKE IT), or a change is blocked for political or career reasons (I DON’T LIKE YOU, or the HIPPO says differently).

Extending to the sBOM

Companies that sell products in large volumes, like cars or consumer products, have discovered and organized a well-established service business, as the margins are high there.

Companies that sell almost unique solutions, batch size 1 or small series, are also discovering this opportunity, or are being asked by their customers, to come up with service plans and related pricing.

The challenge for these companies is that there is a lot of guesswork to be done, as the service business was not planned in their legacy business. A quick and dirty solution was to use the mBOM in ERP as the source of information. However, the ERP system usually does not provide any context information, such as where the part is located and what potential other parts need to be replaced—a challenging job for service engineers.

A less quick, and still a little dirty, solution was to create a new structure in the PLM system that provided the service kits and service parts for the defined product, preferably based on the eBOM, if an eBOM exists.

The ideal solution would be that service engineers are working in parallel and in the same environment as the other engineers, but this requires an organisational change.
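As an illustration of an eBOM-based service structure, the following Python sketch groups parts flagged as serviceable into service kits while keeping their position in the product, the context an ERP mBOM usually lacks. The `serviceable` and `service_kit` attributes and all part numbers are hypothetical; in practice, service engineering would own these decisions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """An eBOM node; serviceable and service_kit are hypothetical service attributes."""
    part_id: str
    serviceable: bool = False
    service_kit: Optional[str] = None
    children: list["Node"] = field(default_factory=list)


def derive_service_structure(root: Node) -> dict[str, list[tuple[str, str]]]:
    """Group serviceable parts into kits, recording where each part sits in the product."""
    kits: dict[str, list[tuple[str, str]]] = {}

    def walk(node: Node, path: tuple[str, ...]) -> None:
        if node.serviceable:
            kit = node.service_kit or node.part_id  # an unkitted part is its own service item
            kits.setdefault(kit, []).append((node.part_id, "/".join(path)))
        for child in node.children:
            walk(child, path + (node.part_id,))

    walk(root, ())
    return kits


machine = Node("M-1", children=[
    Node("A-10", children=[
        Node("P-100", serviceable=True, service_kit="KIT-BEARINGS"),
        Node("P-101", serviceable=True, service_kit="KIT-BEARINGS"),
    ]),
    Node("A-20", children=[Node("P-200", serviceable=True)]),
])

for kit, parts in derive_service_structure(machine).items():
    print(kit, parts)
```

Each service item carries the assembly path (for example `M-1/A-10`), so a service engineer can see where a part sits and which neighbouring parts belong to the same kit.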

The organization often becomes the blocker.

As long as the PLM system is considered a tool for engineering, advanced extensions to other disciplines will be hard to achieve.

A linear organization aligned with a traditional release process will have difficulties changing to work with a common PLM backbone that satisfies engineering, manufacturing engineering and service engineering at the same time.

Now the term PLM truly becomes Product Lifecycle MANAGEMENT, and this brings us to the core issue: the BOM is too often reduced to a parts list, without the broader product context needed for service or operational support, where the artifacts in a system can be both hardware and software.

What is really needed is an extended data model with at least a logical product structure that can represent multiple views of the same product: engineering intent, manufacturing reality, service configuration, software composition, and operational context. These views should not be separate silos connected by fragile integrations. They should be derived from a shared, consistent digital infrastructure. This is what I take from Prof. Jorg Fischer’s post, although he comes from a strong SAP background with a focus on CTO+.

Most companies are still organized around linear processes with a focus on mechanical products: engineering hands over to manufacturing, manufacturing hands over to service, and feedback loops are weak or nonexistent.

Changing the BOM without changing the organization is like repainting a house with structural cracks. It may look better, but the underlying issues remain.

Listen to this snippet from the BOMversation, where Patrick Hilberg touches on this point too.

With this approach, the digital thread becomes more than a buzzword. A digital thread must provide digital continuity: changes propagate across domains, data is contextualized, and lifecycle feedback flows back into product development. Without this continuity, digital twin concepts remain isolated models rather than living representations of real products.

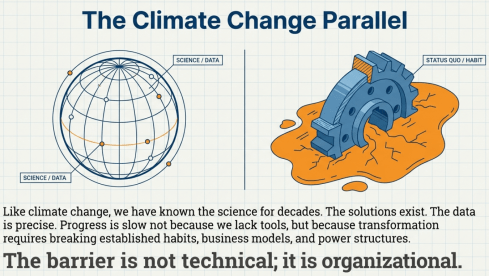
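The digital-continuity idea, with changes propagating across domains and feedback flowing back into development, can be sketched as a traversal over trace links. In this minimal Python example, the artifact identifiers and the `LINKS` table are hypothetical; a real digital thread would run on a PLM or graph platform with typed, versioned relationships.

```python
from collections import deque

# Hypothetical trace links: each artifact lists the artifacts derived from it.
LINKS: dict[str, list[str]] = {
    "REQ-7": ["EBOM:P-100", "SW:motor-ctrl"],
    "EBOM:P-100": ["MBOM:plant_nl:SUP-A-100", "SBOM:KIT-BEARINGS"],
    "SW:motor-ctrl": ["SBOM:firmware-update"],
    "MBOM:plant_nl:SUP-A-100": [],
    "SBOM:KIT-BEARINGS": [],
    "SBOM:firmware-update": [],
}


def reachable(start: str, links: dict[str, list[str]]) -> list[str]:
    """Breadth-first list of every artifact reachable from start (start excluded)."""
    seen = {start}
    order: list[str] = []
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in links.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def reverse(links: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert the links so field feedback can be traced back to its origins."""
    rev: dict[str, list[str]] = {node: [] for node in links}
    for source, targets in links.items():
        for target in targets:
            rev.setdefault(target, []).append(source)
    return rev


# Downstream impact of an engineering change:
print(reachable("EBOM:P-100", LINKS))
# Upstream trace of a field issue with a firmware update:
print(reachable("SBOM:firmware-update", reverse(LINKS)))
```

The same traversal serves both directions: forward for change impact, and over the reversed links for feedback from operation back to requirements.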

However, the most significant barrier is not technical. It is organizational. There is an interesting parallel with how we address climate change and are willing to take action against it.

For decades, we have known what needs to change. The science is precise. The solutions exist. Yet progress is slow because transformation requires breaking established habits, business models, and power structures.

Digital transformation in product lifecycle management follows a similar pattern. Everyone agrees that data silos are a problem. Everyone wants “end-to-end visibility.” Yet few organizations are willing to rethink ownership of product data and processes fundamentally.

So what does the future BOM look like?

It is not a single hierarchical tree. It is part of a maze; some will say it is a graph. It is a connected network of product-related information: physical components, software artifacts, service elements, configurations, requirements, and operational data. It supports multiple synchronized views without duplicating information. It evolves as products change when operated in the field.
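One way to read “multiple synchronized views without duplicating information” is a graph where each item is stored once and each relationship records the views it belongs to. The Python sketch below assumes a hypothetical in-memory store (`ITEMS`, `EDGES`) and hypothetical view names; it is not any vendor's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: str
    kind: str  # "hardware", "software" or "service"


# Each item is stored exactly once in this hypothetical store.
ITEMS: dict[str, Item] = {item.item_id: item for item in [
    Item("PUMP", "hardware"),
    Item("MOTOR", "hardware"),
    Item("HOUSING", "hardware"),
    Item("FW-2.1", "software"),
    Item("KIT-SEALS", "service"),
]}

# Relationships record which views they belong to; the items themselves are shared.
EDGES: list[tuple[str, str, set[str]]] = [
    ("PUMP", "MOTOR", {"engineering", "manufacturing", "service"}),
    ("PUMP", "HOUSING", {"engineering", "manufacturing"}),
    ("MOTOR", "FW-2.1", {"engineering", "service"}),
    ("PUMP", "KIT-SEALS", {"service"}),
]


def view(root: str, name: str) -> list[tuple[int, Item]]:
    """Depth-first listing of the product structure as seen in one view."""
    result: list[tuple[int, Item]] = []

    def walk(node_id: str, depth: int) -> None:
        result.append((depth, ITEMS[node_id]))
        for parent, child, views in EDGES:
            if parent == node_id and name in views:
                walk(child, depth + 1)

    walk(root, 0)
    return result


for view_name in ("engineering", "manufacturing", "service"):
    print(view_name, [(depth, item.item_id) for depth, item in view("PUMP", view_name)])
```

Because a view is only a filter over shared items, an update to `MOTOR` is visible in every view at once; the trade-off is that view membership has to be maintained on every relationship.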

Most importantly, it is not owned by one department. It becomes a shared enterprise asset – with shared accountability for various datasets. But we should not abandon the BOM concept. On the contrary, the BOM remains essential and managing BOMs consistently is already a challenge.

But its role must shift from being a collection of static structures to becoming part of the digital product definition infrastructure, extended by a logical product structure and beyond – the MBSE question.

The BOM is not dead. But the traditional BOM mindset is no longer sufficient. The question is not whether the BOM will change. It already is. The real question is whether organizations are ready to change with it.

Conclusion

Inspired by various BOMversations and AI graphical support, I tried to reflect the business reality I have observed for well over 10 years. Technology and academic truth alone do not create breakthroughs in organisations, because of the large legacy and the fear of failure. Will AI fix this gap, as many software vendors believe, or do we need a new generation with no legacy PLM experience, as some others suggest? Your thoughts?

p.s. My trick to join the BOMversation without being thrown from the balcony 🙃

This post is for the 1,564 members of the PLM Green Global Alliance LinkedIn group—and for those wondering why they should join.

Since October 2019, when Rich McFall launched the group alongside the PLM Green Global Alliance website, we’ve been building a shared space to consolidate insights, discussions, and lessons learned.

Today, the core team—Jos Voskuil, Klaus Brettschneider, Mark Reisig, Evgeniya Burimskaya, and Erik Rieger—continues to contribute deep expertise across sustainability, LCA, energy, circular economy, and design.

In November 2025, we marked our fifth anniversary with a webinar reflecting on past learnings and looking ahead to 2026. The conversation is still worth revisiting.

Now, as 2026 unfolds, the mood may feel mixed. Progress is never automatic. There are ups, downs, and work to be done.

Rich McFall stepping back!

In early December, it became clear that Rich would no longer be able to support the PGGA for personal reasons. We respect his decision and thank Rich for the energy and private money he has put into setting up the website, pushing the moderators to remain active and publishing the newsletter every month. From the frequency of the newsletter over the last year, you might have noticed Rich struggled to be active.

Rich, we all wish you a happy and healthy continuation of your life and hope we will see you back once in a while as a contributor.

Meanwhile, Klaus Brettschneider is working on taking over and upgrading the PGGA website to bring our mission to the next level.

PGGA – the challenge is in the name

When you launch a product or start an alliance, the name can be excellent at the start, but later it might work against you. I believe we are facing this situation with our PGGA (PLM Green Global Alliance) too.

Let’s analyze the name.

The P stands for PLM.

Whether a business delivers products or services, most of the environmental impact is locked in during the design phase, often quoted at close to 80%. That makes design a strategic responsibility, not only an engineering one.

Any company with a long-term vision—call it sustainable in the broad sense—must understand the environmental footprint of its offerings across manufacturing, operation, and repurposing.

Today’s “we don’t care” wave may be loud and egoistic, but it lacks durability. Forward-looking countries and companies already know this.

Moving toward a circular, fossil-free economy demands innovation. In that future, waste and recycling are costly options, pushed out by regulation and resource scarcity alike. Better to change your product or service delivery to align with a circular economy where possible. You can read more in my 2024 post: The Product Service System and a Circular Economy

The fact that changing business models is only possible by changing your PLM approach is often overlooked at board level. PLM as a strategy shapes outcomes and ultimately drives business success.

Therefore, the P remains our primary focus area.

The G for Green

Green has gradually acquired a negative connotation, weakened by early marketing hype and repeated greenwashing exposures. For many, green has lost its attractiveness.

After the 2015 United Nations Climate Change Conference, countries began exploring how to contribute to a healthier planet, increasingly at risk from rising carbon emissions caused by fossil fuels. This momentum led Europe, in 2020, to launch the Green Deal.

The spirit was right, but as with every new direction, failures followed. Lessons were learned, and perhaps too much academic work was done without involving businesses or the populations affected.

It resembles old, failing PLM projects, where management launches a big-bang implementation without grasping the friction and complexity of a fundamental transformation. Behavioral change, as always, is hard, as the iconic Share PLM image illustrates – click on the image for the details.

Meanwhile, threatened industries stirred resistance, science came under pressure, and a brown movement began pushing the green one aside.

The good news is that sustainability is still alive, progressing quietly. Long-term sustainability means reducing dependence on fossil fuels, while a new battle emerges over scarce materials for the energy transition. Those who understand this are already acting—now calling it future risk avoidance.

Green has gone, replaced by proactive business sustainability.

The G from Global

When reading or listening to the news, it seems that globalization is over and imperialism is back with a primary focus on economic control. For some countries, this means even control over people’s information and thoughts, by restricting access to information, deleting scientific data and meanwhile dividing humanity into good and bad people.

And while these countries might control 25 % of their population, the majority of people still have the opportunity to fight climate change within their reach, work on a more sustainable economy and world.

As a global community, we have to fight to ensure that what we do is based on science; sharing facts and findings is crucial. By coincidence, while I was writing this post, I came across an article by my fellow Dutchman Robert Dijkgraaf, a leader in research and policy in many roles, including as minister of Education, Culture, and Science of the Netherlands (2022-2024) and director of the Institute for Advanced Study in Princeton (2012-2022).

His article: The fight to keep science global fits the mindset of our PGGA – take the time to read it and reflect on it.

Global remains our goal, and given the global population, we are looking for more voices from Asia and Africa.

The A from Alliance

With more than 1500 members in our LinkedIn group, we are curious why you joined the PLM Green Global Alliance. Assuming your positive intent to contribute to a sustainable future, where can we help you best?

There are contributions from the leading PLM software vendors (PTC, Dassault Systèmes, SAP, Siemens, Aras, Autodesk), solution providers (Sustaira, Makersite, aPriori, Direktin) and service partners (CIMPA, ecoPLM, CIMdata) that focus on providing capabilities, methodologies and consultancy to support the transition to a more sustainable economy and a bearable climate.

Let us know your questions, struggles, and intentions, either through personalized or anonymized interactions on LinkedIn – together we are an alliance.

Conclusion

Although the political climate is currently in the same condition as the planetary climate, there is still work to be done. Being an observer is the worst option, and changing the world as an individual is also a mission impossible.

However, with our niche group, The PLM Green Global Alliance, we can be part of the generation that actively pursues a sustainable future for ourselves and the next generations.

And as you can learn from this podcast, you are not alone.

Last week, I wrote about the first day of the crowded PLM Roadmap/PDT Europe conference.

You can still read my post here in case you missed it: A very long week after PLM Roadmap / PDT Europe 2025

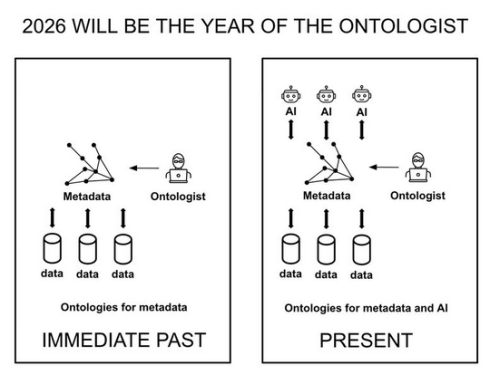

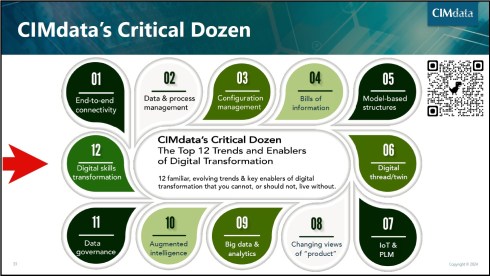

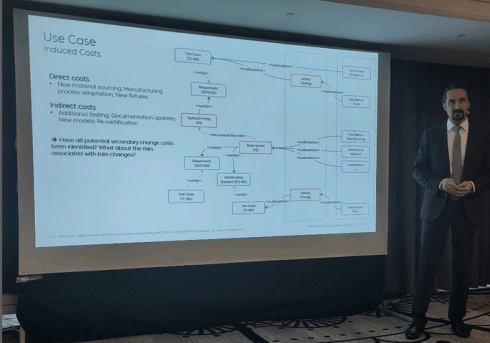

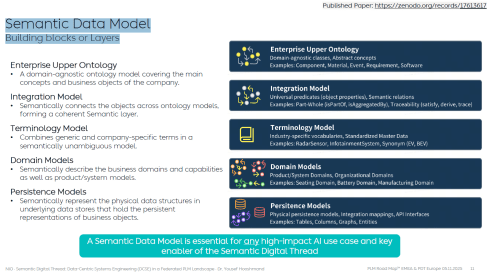

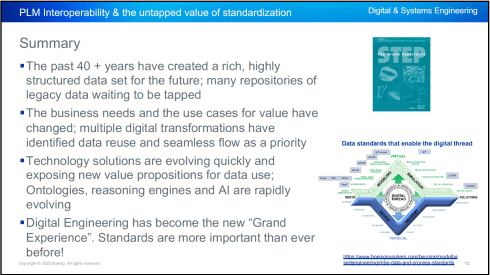

My conclusion from that post was that day 1 was a challenging day if you are a newbie in the domain of PLM and data-driven practices. We discussed and learned about relevant standards that support a digital enterprise, as well as the need for ontologies and semantic models to give data meaning and serve as a foundation for potential AI tools and use cases.

This post will focus on the other aspects of product lifecycle management – the evolving methodologies and the human side.

Note: I try to avoid the abbreviation PLM, as many of us in the field associate PLM with a system. For me, the system is an IT solution, whereas the strategy and practices are best named product lifecycle management.

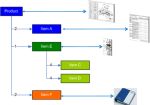

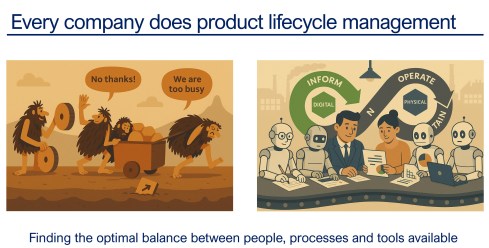

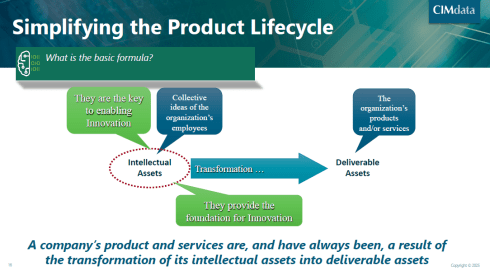

As a reminder, I have used the image above in other conversations. Every company does product lifecycle management; only the number of people, their processes, or their tools might differ. As Peter Bilello mentioned in his opening speech, products are why the company exists.

Unlocking Efficiency with Model-Based Definition

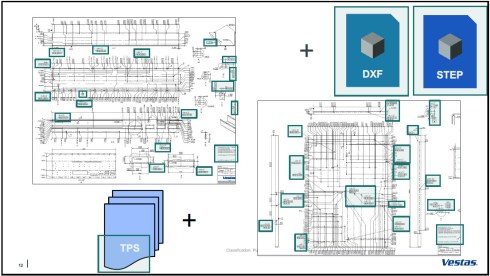

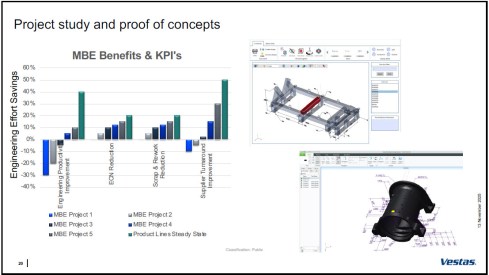

Day 2 started energetically with Dennys Gomes’ keynote, which introduced model-based definition (MBD) at Vestas, a world-leading OEM of wind turbines.

I consider MBD one of the stepping stones toward learning and mastering a model-based enterprise, but do not be confused by the term “model”. In MBD, we use the 3D CAD model as the source to manage and support a data-driven connection among engineering, manufacturing, and suppliers. The business benefits are clear, as reported by companies that follow this approach.

However, it also involves changes in technology, methodology, skills, and even contractual relations.

Dennys started by sharing the analysis they conducted on the amount of information in current manufacturing drawings. The image below shows that only the information marked in green was actually used, meaning much of the time and effort spent creating the drawings was wasted.

It was an opportunity to explore model-based definition, and the team ran several pilots to learn how to handle MBD, improve their skills, methodologies, and tool usage. As mentioned before, it is a profound change to move from coordinated to connected ways of working; it does not happen by simply installing a new tool.

The image above shows the learning phases and the ultimate benefits accomplished. Besides moving to a model-based definition of the information, Dennys mentioned they used the opportunity to simplify and automate the generation of the information.

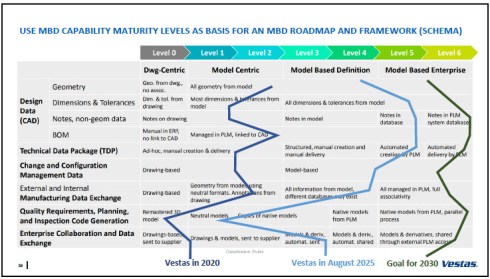

Vestas is on a clear path, and it is interesting to see their ambition in the MBD roadmap below.

An inspirational story that will hopefully motivate other companies to take this first step toward a model-based enterprise. It may be difficult at the beginning from the people’s perspective, but as a business, it is a profitable and necessary direction.

Bridging The Gap Between IT and Business

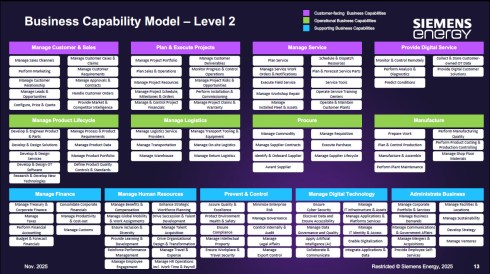

It was a great pleasure to listen again to Peter Vind from Siemens Energy, who first explained how to position the role of an enterprise architect in a company by drawing a comparison with society. He mentioned he has to deal with the unicorns at the C-level who, like politicians in a city, sometimes have the most “innovative” ideas. Can they be realized?

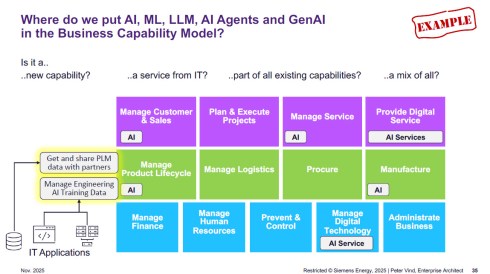

To answer this question, Peter refers to the Business Capability Model (BCM) he uses as an enterprise architect.

Business capabilities define ‘what’ a company needs to do to execute its strategy. They are structured into logical clusters and should be the foundation for the enterprise, on which both IT and business can agree on a common approach.

The detailed image above is worth studying if you are interested in the levels and mappings of the capabilities. The BCM approach proved beneficial when the company separated from Siemens AG, as it enabled the rationalization of its application portfolio.

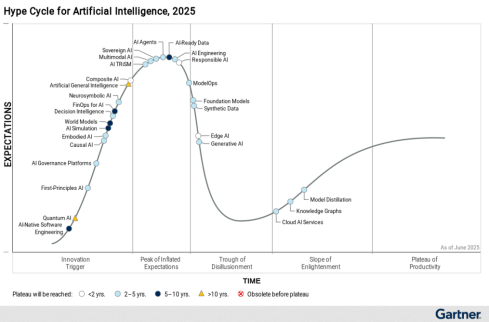

Next, Peter zoomed in on some examples of how a BCM and structured application portfolio management can help rationalize the AI hype and demand: where is AI applicable, and where does it have an impact? As he illustrated, it is not that simple, but with the BCM, you have a base for further analysis.

Other future-relevant topics he shared included how to address the introduction of the digital product passport and how the BCM methodology supports the shift in business models toward a modern “Power-as-a-Service” model.

He concluded that having a Business Capability Model gives you a stable foundation for managing your enterprise architecture now and into the future. The BCM complements other methodologies that connect business strategy to (IT) execution. See also my 2024 post: Don’t use the P** word! – 5 lessons learned.

Holistic PLM in Action

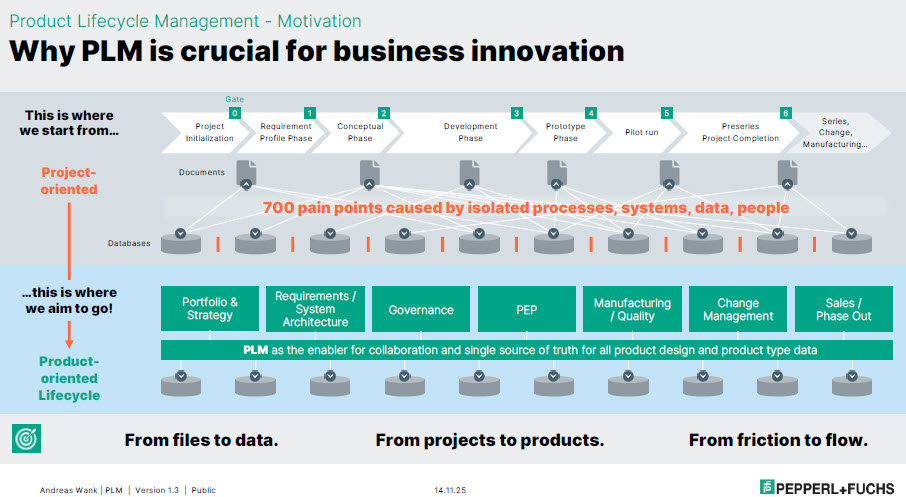

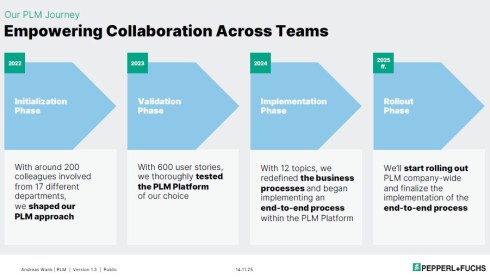

For companies struggling with their digital transformation in the PLM domain, Andreas Wank, Head of Smart Innovation at Pepperl+Fuchs SE, shared his journey so far, touching on all the essential aspects of such a transformation. Pepperl+Fuchs has a portfolio of approximately 15,000 products that combine hardware and software.

It started with the WHY. With such a massive portfolio, business innovation is under pressure without a PLM infrastructure. Too many changes, fragmented data, no single source of truth, and siloed ways of working lead to much rework, errors, and iterations that keep the company busy while missing the global value drivers.

Next, the journey!

The above image is an excellent way to communicate the why, what, and how to a broader audience. All the main messages are in the image, which helps people align with them.

The first phase of the project, creating digital continuity, is also an excellent example of digital transformation in traditional document-driven enterprises. Moving from files to data aligns with the From Coordinated to Connected theme.

Next, the focus was on describing these new ways of working with all stakeholders involved before starting the selection and implementation of PLM tools. This approach is crucial, as one of my big lessons learned from the past is: “Never start a PLM implementation in R&D.”

If you start in R&D, the priority shifts away from the easy flow of data between all stakeholders; it becomes an R&D System that others will have to live with.

You never get a second first impression!

Pepperl+Fuchs spent a long time validating its PLM selection, something you might only see in privately owned companies that are not driven by shareholder demands and can take the time to prepare and understand their next move.

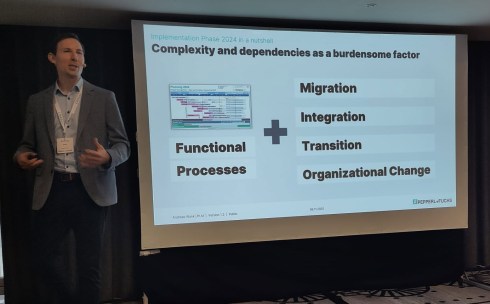

As Andreas also explained, it is not only about the functional processes. As the image shows, migration (often the elephant in the room) and integration with the other enterprise systems also need to be considered. And all of this is combined with managing the transition and the necessary organizational change.

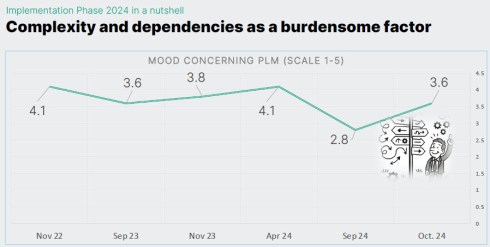

Andreas shared some best practices illustrating the focus on the transition and the human aspects. They have implemented a regular survey to measure the PLM mood in the company, and when the mood dropped sharply on Sept 24, from 4.1 to 2.8 on a scale of 1 to 5, it was time to act.

They spent one week at a separate location, where 30 of his colleagues worked together in one room on the reported issues, resulting in 70 decisions that week. The result was measurable, as shown in the image below.

Andreas’s story was such a perfect fit for the discussions we have in the Share PLM podcast series that we asked him to tell it in more detail, also for those who have missed it. Subscribe and stay tuned for the podcast, coming soon.

Trust, Small Changes, and Transformation

Ashwath Sooriyanarayanan and Sofia Lindgren, both active at the corporate level in the PLM domain at Assa Abloy, came with an interesting story about their PLM lessons learned.

To understand their story, it is essential to understand what makes Assa Abloy a special company, as the image below explains. With over 1000 sites, 200 production facilities, and last year a new acquisition on average every two weeks, it is hard to standardize the company from a corporate organization.

However, this was precisely what Assa Abloy had been trying to do over the past few years. Working towards a single PLM system with generic processes for all, while spending a lot of time integrating and migrating data from the different entities, became mission impossible.

To increase user acceptance, they fell into the trap of customizing the system ever more to meet many user demands: a dead end, as many other companies have probably experienced as well.

Then came a strategic shift. Instead of holding on to the past and the money invested in technology, they shifted their focus to the human side.

The PLM group became a trusted organization supporting the individual entities. Instead of telling them what to do (top-down), they talked with the local businesses and provided standardized PLM knowledge and capabilities where needed (bottom-up).

This “modular” approach made the PLM group the trusted partner of the individual businesses. A unique approach, making us realize that the human aspect remains an essential part of implementing PLM.

Humans cannot be transformed

Given the length of this blog post, I will not spend too much text on my closing presentation at the conference. After a technical start on Day 1, we gradually moved to broader, human-related topics in the latter part.

You can find my presentation here on SlideShare as usual, and perhaps the best summary from my session was given in this post from Paul Comis. Enjoy his conclusion.

Conclusion

Two and a half intensive days in Paris again at the PLM Roadmap / PDT Europe conference, where some of the crucial aspects of PLM were shared in detail. The value of the conference lies in the stories and the discussions with the participants. Slides alone do not provide enough education; you need to be curious and active to discover the best perspective.

For those celebrating: Wishing you a wonderful Thanksgiving!

Those of you who have followed my blog over the years know that after every PLM Roadmap / PDT Europe conference, I write one or two blog posts, the first of which starts with “The weekend after ….”

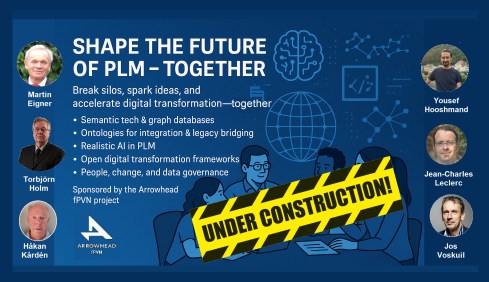

This time, November has been a hectic month for me, starting with this engaging workshop “Shape the future of PLM – together”; you can read about it in my blog post or the latest post from Arrowhead fPVN, the sponsor of the workshop.

Last week, I celebrated our 5th anniversary with the core team of the PLM Green Global Alliance, during which we discussed sustainability in action. The term sustainability is currently under the radar, but if you want to learn what is happening, read this post with a link to the webinar recording.

Last week, I was also active at the PTC/User Benelux conference, where I had many interesting discussions about PTC’s strategy and portfolio. A big and well-organized event in the town where I grew up in the world of teaching and data management.

And now it is time for the PLM Roadmap / PDT Europe conference review.

The conference

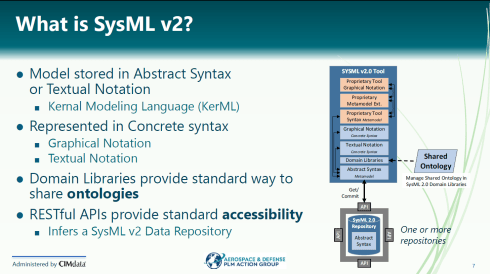

The conference is my favorite technical conference 😉 for learning what is happening in the field. Over the years, we have seen reports from the Aerospace & Defense PLM Action Groups, which systematically work on various themes related to a digital enterprise. The use of standards, MBSE, supplier collaboration, digital thread, and digital twin are among the topics discussed.
