In the last two weeks, I have had mixed discussions related to PLM, where I realized the two different ways people can look at PLM. Is implementing PLM capabilities driven by a cost-benefit analysis and a business case? Or is implementing PLM capabilities driven by strategy providing business value for a company?

Most companies I am working with focus on the first option – there needs to be a business case.

This observation is a pleasant passageway into a broader discussion started by Rob Ferrone recently with his article Money for nothing and PLM for free. He explains the PDM cost of doing business, which goes beyond the software’s cost. Often, companies consider the other expenses inescapable.

At the same time, Benedict Smith wrote some visionary posts about the potential power of an AI-driven PLM strategy, the most recent article being PLM augmentation – Panning for Gold.

It is a visionary article about what is possible in the PLM space (if there were no legacy ☹), based on Robust Reasoning and how you could even start with LLM Augmentation for PLM “Micro-Tasks”.

Interestingly, the articles from both Rob and Benedict were supported by AI-generated images – I believe this is the future: Creating an AI image of the message you have in mind.

When you have digested their articles, it is time to dive deeper into the different perspectives of value and costs for PLM.

From a system to a strategy

The biggest obstacle I have discovered is that people relate PLM to a system or, even worse, to an engineering tool. This 20-year-old misunderstanding probably comes from the fact that in the past, implementing PLM was more an IT activity – providing the best support for engineers and their data – than a business-driven set of capabilities needed to support the product lifecycle.

The System approach

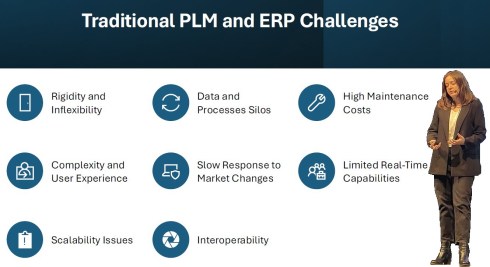

Traditional organizations are siloed, and from the start, PLM has had the challenge of supporting product information shared throughout the whole lifecycle. No discipline had a natural incentive to invest in sharing – every discipline has its own P&L – and sharing comes with a cost.

At the management level, the financial data coming from the ERP system drives the business. ERP systems are transactional and can provide real-time data about the company’s performance. C-level management wants to be sure they can see what is happening, so there is a massive focus on implementing the best ERP system.

In some cases, I noticed that the investment in ERP was twenty times more than the PLM investment.

Why would you invest in PLM? Although the ERP engine will slow down without proper PLM, the complexity of PLM compared to ERP is a reason for management to look at the costs, as the PLM benefits are hard to grasp and depend on so much more than just execution.

See also my old 2015 article: How do you measure collaboration?

As I mentioned, the Cost of Non-Quality, too many iterations, time lost by searching, material scrap, manufacturing delays or customer complaints are often considered inescapable parts of doing business (like everyone else) – it happens all the time.

The strategy approach

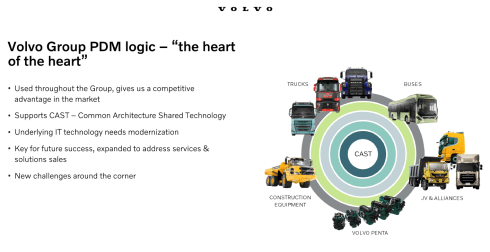

It is clear that when we accept the modern definition of PLM, we should be considering product lifecycle management as the management of the product lifecycle (as Patrick Hillberg says eloquently in our Share PLM podcast – see the image at the bottom of this post, too).

When you implement a strategy, it is evident that there should be a long(er) term vision behind it, which can be challenging for companies. Also, please read my previous article: The importance of a (PLM) vision.

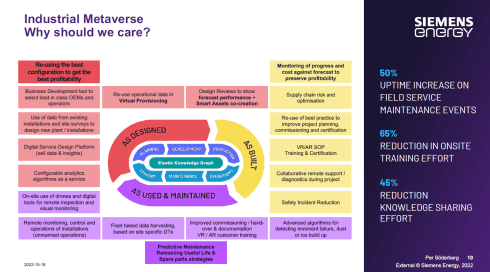

I cannot imagine that the importance of a data-driven approach, although perhaps not fully understood, will not be discussed at many strategic board meetings. A data-driven approach is needed to implement a digital thread as the foundation for enhanced business models based on digital twins and to ensure data quality and governance supporting AI initiatives.

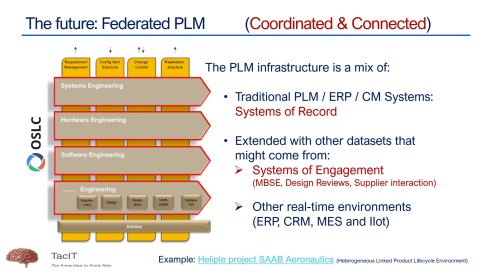

It is a process I have been preaching: From Coordinated to Coordinated and Connected.

We can be sure that at the board level, strategy discussions should be about value creation, not about reducing costs or avoiding risks as the future strategy.

Understanding the (PLM) value

The biggest challenge for companies is to understand how to modernize their PLM infrastructure to bring value.

* Step 1 is obvious. Stop considering PLM as a system with capabilities, but investigate how you transform your infrastructure from a collection of systems and (document) interfaces towards a federated infrastructure of connected tools.

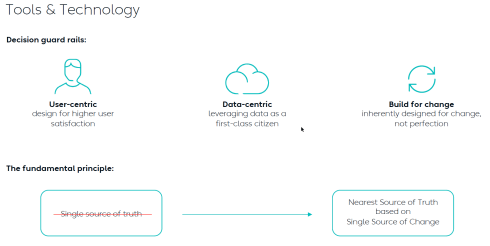

Note: the paradigm shift from a Single Source of Truth (in my system) towards a Nearest Source of Truth and a Single Source of Change.

* Step 2 is education. A data-driven approach creates new opportunities and impacts how companies should run their business. Different skills are needed, and other organizational structures are required, from disciplines working in siloes to hybrid organizations where people can work in domain-driven environments (the Systems of Record) and product-centric teams (the System of Engagement). AI tools and capabilities will likely create an effortless flow of information within the enterprise.

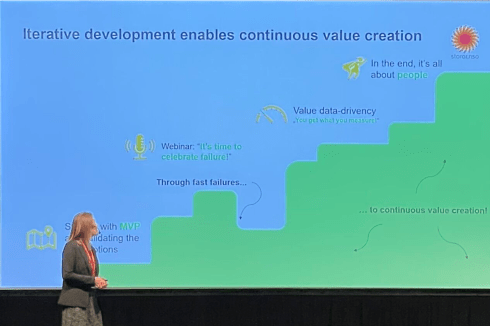

* Step 3 is building a compelling story to implement the vision. Implementing new ways of working based on new technical capabilities also requires organizational change. If your organization keeps working the same way, you might gain only a few percent in efficiency improvements.

The real benefits come from doing things differently, and technology allows you to do it differently. However, this requires people to work differently, too, and overlooking that is the most common mistake in transformational projects.

Companies understand the WHY and WHAT but leave the HOW to the middle management.

People are squeezed into an ideal performance without taking them on the journey. For that reason, it is essential to build a compelling story that motivates individuals to join the transformation. Assisting companies in building compelling story lines is one of the areas where I specialize.

Feel free to contact me to explore the opportunity for your business.

It is not the technology!

With the upcoming availability of AI tools, implementing a PLM strategy will no longer depend on how IT understands the technology, the systems and the interfaces needed.

As Yousef Hooshmand’s above image describes, a federated infrastructure of connected (SaaS) solutions will enable companies to focus on accurate data (priority #1) and people creating and using accurate data (priority #1). As you can see, people and data in modern PLM are the highest priority.

Therefore, I look forward to participating in the upcoming Share PLM Summit on 27-28 May in Jerez.

It will be a breakthrough: where traditional PLM conferences focus on technology and best practices, this conference will focus on how we can involve and motivate people. Regardless of which industry you are active in, it is a universal topic for any company that wants to transform.

Conclusion

Returning to this article’s introduction, modern PLM is an opportunity to transform the business and make it future-proof. It certainly needs to be done, now or in the near future. Therefore, PLM initiatives should be considered from the value point of view first instead of focusing on the costs. How well are you connected to your management’s vision to make PLM a value discussion?

Enjoy the podcast – several topics discussed relate to this post.

This year, I will celebrate 25 years since I started my company, TacIT, to focus on knowledge management. However, quickly, I was back in the domain of engineering data management, which became a broader topic, which we now call PLM.

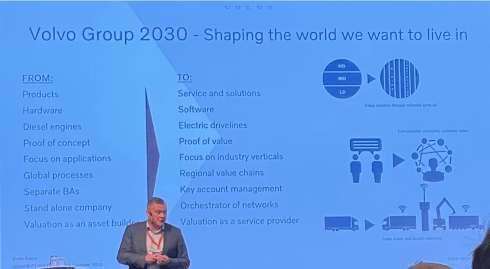

Looking back, there have been significant changes in these 25 years, from systems to strategy, from documents to data, from linear to iterative. However, in this post, I want to look at my 2024 observations to see where we can progress. This brings me to the first observation.

PLM is human

Despite many academic and marketing arguments describing WHAT and WHY companies need specific business or software capabilities, there is, above all, the need for people to be personally inspired and connected. We want to belong to a successful group of people, teams and companies because we are humans, not resources.

It is all about people, which was also the title of my session during the March 2024 3DEXPERIENCE User Conference in Eindhoven (NL). I led a panel discussion on the importance of people with Dr. Cara Antoine, Daniel Schöpf, and Florens Wolters, each of whom actively led transformational initiatives within their companies.

Through Dr. Cara Antoine of Capgemini, a key voice for women in tech, I learned about her book Make It Personal. The book inspired me and motivated me to continue using a human-centric approach. Give this book to your leadership and read it yourself. It is practical, easy to read, and encouraging.

Recently, in my post “PLM in real life and Gen AI”, I shared insights related to PLM blogs and Gen AI: original content is becoming increasingly the same, and the human touch is disappearing, while more and longer blogs are being generated.

I propose keeping Gen AI-generated text for the boring part of PLM and exploring the human side of PLM engagements in blogs. What does this mean? In the post, I also shared the highlights of the Series 2 podcast I did together with Helena Gutierrez from Share PLM. Every recording had its unique human touch and knowledge.

We are now in full preparation for Series 3—let us know who your hero is and who should be our guest in 2025!

PLM is business

One of the most significant changes I noticed in my PLM-related projects was that many of the activities connected the PLM activities to the company’s business objectives. Not surprisingly, it was mostly a bottom-up activity, explaining to the upper management that a modern, data-driven PLM strategy is crucial to achieving business or sustainability goals.

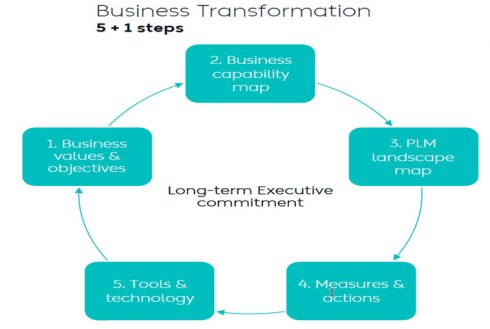

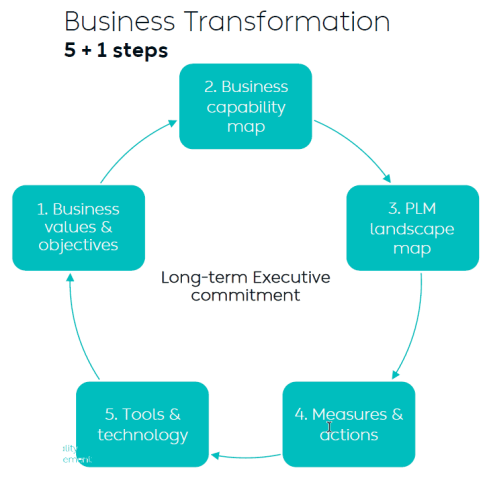

I wrote two long posts about these experiences. The first one, “PLM – business first,” zooms in on the changing mindset that PLM is not an engineering system anymore but part of a digital infrastructure that supports companies in achieving their business goals. The image below from Dr. Yousef Hooshmand is one of my favorites in this context. It shows the 5 + 1 steps, where the extra step is crucial: Long-term Executive Commitment.

So, to get an executive commitment, you need to explain and address business challenges.

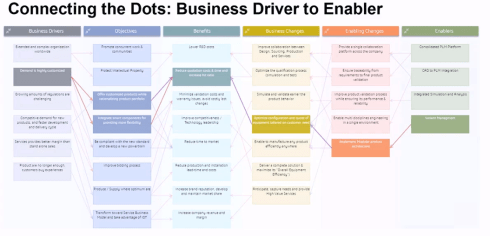

Executive commitment and participation can be achieved through a Benefits Dependency Network approach, as illustrated in this webinar I did with the Heliple-2 team, where we were justifying the business needs for Federated PLM. More about the Federated PLM part in the next paragraph.

Another point to consider is that when the PLM team is part of the IT organization (the costs side), they have a big challenge in leading or even participating in business discussions. In this context, read (again) Jan Bosch’s post: Structure Eats Strategy.

The second post, more recent, summarized the experiences I had with several customer engagements. The title says it all: “Don’t use the P**-word! – 5 lessons learned”, with an overlap in content with the first post.

Conclusion: A successful PLM strategy starts with the business and needs storytelling to align all stakeholders with a shared vision or goal.

PLM is technology

This year has seen the maturation of PLM technology concepts. We are moving away from a monolithic PLM system and exploring federated and connected infrastructures, preferably a mix of Systems of Record (the old PLMs/ERPs) and Systems of Engagement (the new ways of domain collaboration). The Heliple project manifests such an approach, where the vertical layers are Systems of Record, and the horizontal modules could be Systems of Engagement.

I had several discussions with typical System of Engagement vendors, like Colab (“Where traditional PLM fails”) and Partful (“Connected Digital Thread for Lower and Mid-market OEMs”), but I also had broader discussions during the PLM Roadmap PDT Europe conference – see: R-evolutionizing PLM and ERP and Heliple.

I also follow Dr. Jörg Fischer, who lectures about digital transformation concepts in the manufacturing business domain. Unfortunately for a broader audience, Jörg publishes a lot in German, and typically, his references for PLM and ERP are based on SAP and Teamcenter. His blog posts are always interesting to follow – have a look at his recent blog in English: 7 keys to solve PLM & ERP.

Of course, Oleg Shilovitsky’s continuous flow of posts related to modern PLM concepts is impressive. Just browse through his Beyond PLM home page to read about the current topics in his PLM ecosystem or, for example, read about modern technology concepts in this recent OpenBOM article.

Conceptually, we are making progress. What all future concepts have in common is a focus on data rather than on managing documents, which brings me to the next observation.

PLM needs accurate data

In a data-driven environment, apps or systems will use a collection of datasets to provide a user with a working environment, either a dashboard or an interactive real-time environment. Below is my AI (Artist Impression) of a digital enterprise.

It seems logical that the data must be accurate, as you no longer have control over access to the data in a data-driven environment. You remain accountable for the data you created, but others can consume it without checking its accuracy with you.

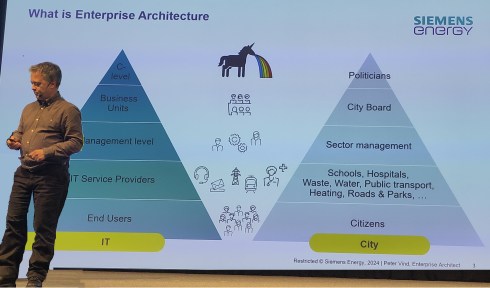

Therefore, data governance and an excellent enterprise architecture are crucial to support the new paradigm:

The nearest source of truth supported by a single source of change

Quote: Yousef Hooshmand

Forget the Single Source of Truth idea, a previous century paradigm.

With data comes Artificial intelligence and algorithms that can play an essential role in your business, providing solutions or insights that support decision-making.

In 2024, most of us have been exploring the benefits of ChatGPT and Generative AI. You can describe examples of where AI could assist in every aspect of the product lifecycle. I saw great examples from Eaton, Ocado, and others at the PLM Roadmap/PDT Europe conference.

See my review here: A long week after the PLM Roadmap / PDT Europe conference.

Still, before benefiting from AI in your organization, it remains essential that the AI runs on top of accurate data.

Sustainability needs (digital) PLM

This paragraph is the only reverse dependency towards PLM and probably the one that is less in people’s minds, perhaps because PLM is already complex enough. In 2024, with the PLM Green Global Alliance, we had good conversations with PLM-related software vendors or service partners (aPriori, Configit, Makersite, PTC, SAP, Siemens and Transition Technologies PSC) where we discussed their solutions and how they are used in the field by companies.

We discovered here that most activities are driven by regulations, like ESG reporting, the new CSRD directive for Europe and the implementation of the Digital Product Passport. What is clear from all these activities is that companies need to have a data-driven PLM infrastructure to connect product data to environmental impacts, like carbon emissions equivalents.

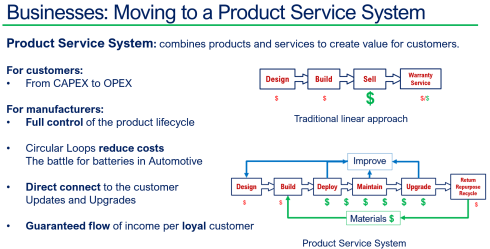

Besides complying with regulations, I have been discussing the topic of Product-As-A-Service, or the Product Service System, this year, with excellent feedback from Dave Duncan. You can find a link to his speech: Improving Product Sustainability – PTC with PGGA.

Also, during the PLM Roadmap / PDT Europe conference, I discussed this topic, explaining that achieving a circular economy is a long-term vision, and the starting point is to establish a connected infrastructure within your organizations and with your customers/users in the field.

Sustainability should be on everyone’s agenda. From the interactions on LinkedIn, you can see that we prefer to discuss terms like PDM/PLM or eBOM/mBOM in the PLM domain. Very few connect PLM to sustainability.

Sustainability is a long-term mission; however, as we have seen from long-term missions, they can be overwhelmed by the day’s madness and short-term needs.

PLM is Politics

You might not expect this paragraph in my blog, as most PLM discussions are about the WHAT and the WHY of a PLM solution or infrastructure. However, the most challenging part of PLM is the HOW, and this is the area that I am still focused on.

In the early days of mediating mainly in SmarTeam implementations, it became clear that the technology was not the issue. A crisis was often due to a lack of (technical) skills or methodology and misplaced expectations.

When the way out became clear, politics often started. Sometimes, there was the HIPPO (HIghest Paid Person’s Opinion) in the company, as Peter Vind explained, or there was the blame game, which I described in my 2019 post “The PLM blame game”.

What makes it even more difficult is that people’s opinions in PLM discussions are often influenced by their friendly relations or history with a particular vendor or implementer from the past, which obstructs the path to a proper solution.

These aspects are challenging to discuss, and nobody wants to discuss them openly. A company (and a country) must promote curiosity instead of adhering to mainstream thinking and working methods. In our latest Share PLM podcast, Brian Berger, a VP at Metso, mentions the importance of diversity within an organization.

“It is a constant element of working in a global business, and the importance cannot be overstated.”

This observation should make us think again when we want to simplify everything and dim the colors.

Conclusion

Initially, I thought this would be a shorter post, but again, it became a long read – therefore, perhaps ideal when closing 2024 and looking forward to activities and focus for 2025. Use this time to read books and educate yourself beyond the social media posts (even my blogs are limited 😉)

In addition, I noticed the build-up of this post was unconsciously influenced by Martijn Dullaart‘s series of messages titled “Configuration Management is ……”. Thanks, Martijn, for your continuous contributions to our joint passion – a digital enterprise where PLM and CM flawlessly interact based on methodology and accurate data.

Recently, I noticed I reduced my blogging activities as many topics have already been discussed and repeatedly published without new content.

With the rise of Gen AI and ChatGPT, I believe my PLM feeds are flooded by AI-generated blog posts.

The ChatGPT option

Most companies are not frontrunners in using extremely modern PLM concepts, so you can type risk-free questions and get common-sense answers.

I just tried these five questions:

- Why do we need an MBOM in PLM, and which industries benefit the most?

- What is the difference between a PLM system and a PLM strategy?

- Why do so many PLM projects fail?

- Why do so many ERP projects fail?

- What are the changes and benefits of a model-based approach to product lifecycle management?

Note: Questions 3 and 4 have almost similar causes and impacts, although slightly different, which is to be expected given the scope of the domain.

All these questions provided enough information for a blog post based on the answer. This illustrates that if you are writing about current best practices in the field – stop writing – the knowledge is there.

PLM in the real life

Recently, I had several discussions about which skills a PLM expert should have or which topics a PLM project should address.

PLM for the individual

For the individual, there are often certifications to obtain. Roger Tempest has been fighting for PLM professional recognition through certification – a challenge due to the broad scope and possibilities. Read more about Roger’s work in this post: PLM is complex (and we have to accept it?)

PLM vendors and system integrators often certify their staff or resellers to guarantee the quality of their solution delivery. Potential topics will be missed as they do not fulfill the vendor’s or integrator’s business purpose.

Asking ChatGPT about the required skills for a PLM expert, these were the top 5 answers:

- Technical skills

- Domain Knowledge

- Analytical and Problem-Solving Skills

- Interpersonal and Management Skills

- Strategic Thinking

It was interesting to see the order proposed by ChatGPT. First the tools (technology), then the processes (domain knowledge / analytical thinking), and last the people and business (strategy and interpersonal and management skills). It is hard to find all these skills in a single person.

Although we want people to be that broad in their skills, job offerings mainly look for the expert in one domain, be it strategy, communication, industry or technology. To get an impression of the skills, read my PLM and Education concluding blog post.

Now, let’s see what it means for an organization.

PLM for the organization

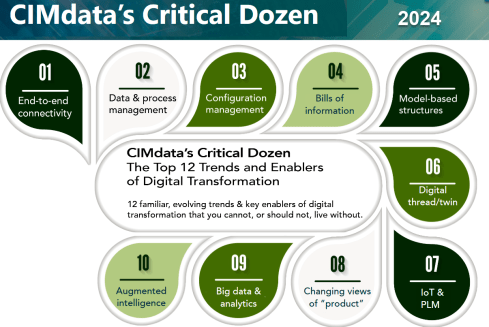

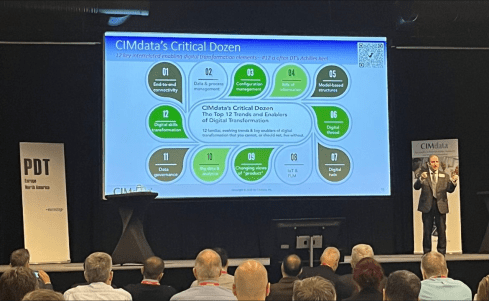

In this area, one of the most consistent frameworks I have seen over time is CIMdata’s Critical Dozen. Although it refers less to skills and more to trends and enablers, these are the areas a company should invest in (educate people and build skills) to support a successful digital transformation in the PLM domain.

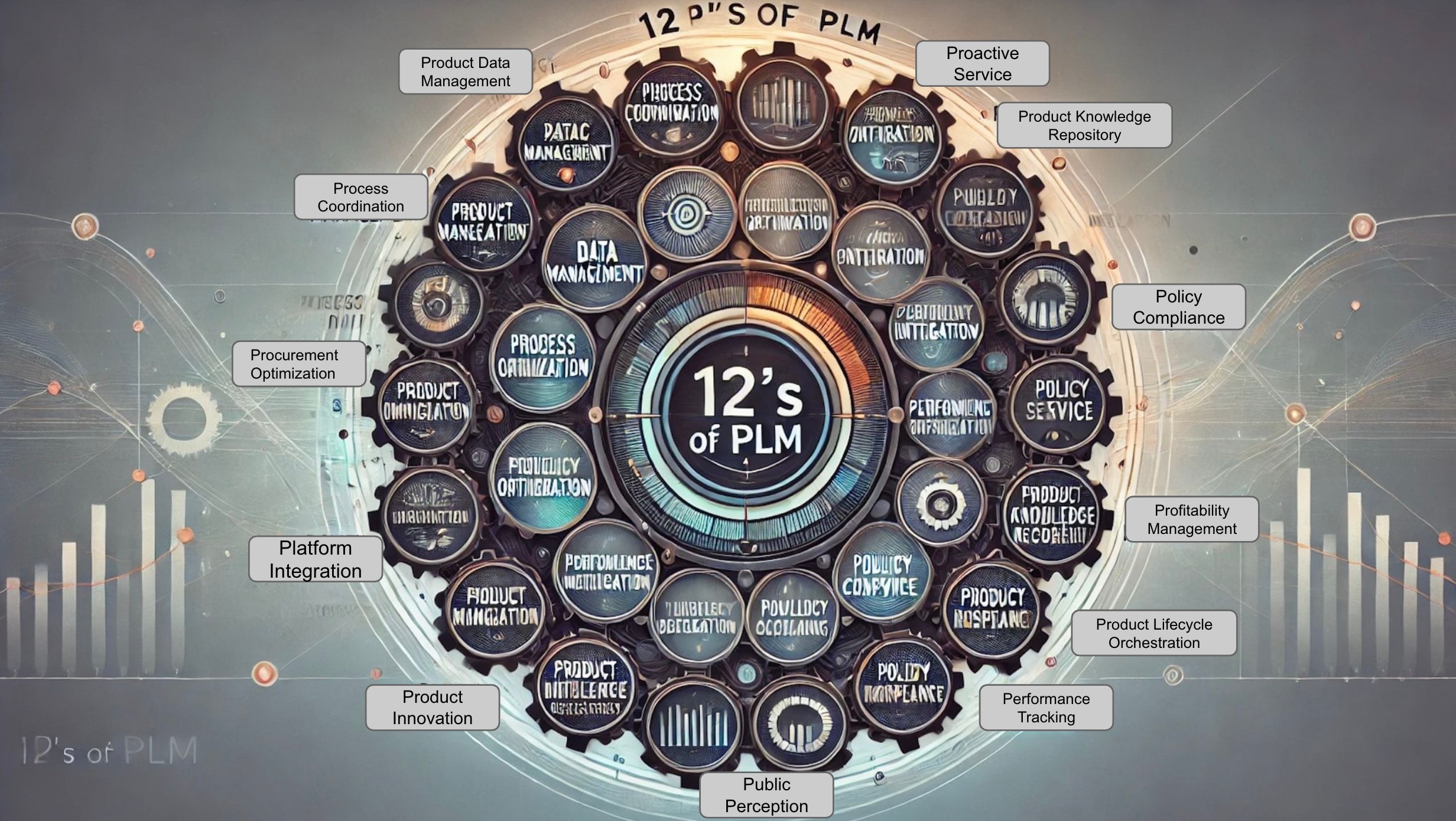

Oleg Shilovitsky’s recent blog post, The 12 “P”s of PLM Explained by Role: How to Make PLM More Than Just a Buzzword, describes in an AI manner the various aspects of the term PLM, using 12 P**-words, reacting to Lionel Grealou’s post: Making PLM Great Again.

The challenge I see with these types of posts is: “OK, what to do now? Where to start?”

I believe there is common agreement on where to start in the first place.

Everything starts from having a purpose and a vision. And this vision should be supported by a motivating story about the WHY that inspires everyone.

It is teamwork to define such a strategy, communicate it through a compelling story and make it personal. An excellent book to read is Make It Personal by Dr. Cara Antoine – click on the image to discover the content and find my review explaining why I believe this book is so compelling.

An important reason why we have to make transformations personal is that we are dealing first of all with human beings. And human beings are driven by emotions first, even before reason kicks in. We see it everywhere and unfortunately also in politics.

The HOW from real-life

This question cannot be answered by external PLM vendors, consultants or system integrators. Forget the Out-of-the-Box templates or the industry best practices (from the past), but start from your company’s culture and vision, introducing step-by-step new technologies, ways of working and business models to move towards the company’s vision target.

Building the HOW is not an easy journey, and to illustrate the variety of skills needed to be successful, I worked with Share PLM on their Series 2 podcast. You can find the complete overview here. There is one more to come to conclude this year.

Our focus was to speak only with PLM experts from the field, understanding their day-to-day challenges with a focus on HOW they did it and WHAT they learned.

And this is what we learned:

Unveiling FLSmidth’s Industrial Equipment PLM Transformation: From Projects to Products

It was our first episode of Series 2, and we spoke with Johan Mikkelä, Head of the PLM Solution Architecture at FLSmidth.

FLSmidth provides the global mining and cement industries with equipment and services, which is very much an ETO business moving towards CTO.

We discussed their Industrial Equipment PLM Transformation and the impact it has made.

Start With People: ABB’s Engineering Approach to Digital Transformation

We spoke with Issam Darraj, who shared his thoughts on human-centric digitalization. Issam talks us through ABB’s engineering perspective on driving transformation and discusses the importance of focusing on your people. Our favorite quote:

To grow, you need to focus on your people. If your people are happy, you will automatically grow. If your people are unhappy, they will leave you or work against you.

Enabling change: Exploring the human side of digital transformations

We spoke with Antonio Casaschi as he shared his thoughts on the human side of digital transformation. When discussing the PLM expert, he agrees it is difficult. Our favorite part here:

“I see a PLM expert as someone with a lot of experience in organizational change management. Of course, maybe people with a different background can see a PLM expert with someone with a lot of knowledge of how you develop products, all the best practices around products, etc. We first need to agree on what a PLM expert is, and then we can agree on how you become an expert in such a domain.”

Revolutionizing PLM: Insights from Yousef Hooshmand

With Dr. Yousef Hooshmand, author of the paper From a Monolithic PLM Landscape to a Federated Domain and Data Mesh, who has over 15 years of experience in the PLM domain and is currently PLM Lead at NIO, we discussed the complexity of digital transformation in the PLM domain and how to deal with legacy while implementing a user-centric, data-driven future.

My favorite quote: The End of Single Source of Truth, now it is about The nearest Source of Truth and Single Source of Change.

Steadfast Consistency: Delving into Configuration Management with Martijn Dullaart

Martijn Dullaart, the man behind the blog MDUX: The Future of CM and author of the book The Essential Guide to Part Re-Identification: Unleash the Power of Interchangeability and Traceability, has been active in both the PLM and CM domains. With Martijn, we discussed the similarities and differences between PLM and CM and why organizations need to be educated on the topic of CM.

The ROI of Digitalization: A Deep Dive into Business Value with Susanna Maëntausta

With Susanna Maëntausta, we discussed how to implement PLM in non-traditional manufacturing industries, such as the chemical and pharmaceutical industries.

Susanna teaches us to ensure PLM projects are value-driven, connecting business objectives and KPIs to the implementation and execution steps in the field. Susanna is highly skilled in connecting people at any level of the organization.

Narratives of Change: Grundfos Transformation Tales with Björn Axling

As Head of PLM and part of the Group Innovation management team at Grundfos, Björn Axling aims to drive a Group-wide, cross-functional transformation into more innovative, more efficient, and data-driven ways of working through the product lifecycle from ideation to end-of-life.

In this episode, you will learn all the various aspects that come together when leading such a transformation in terms of culture, people, communication, and modern technology.

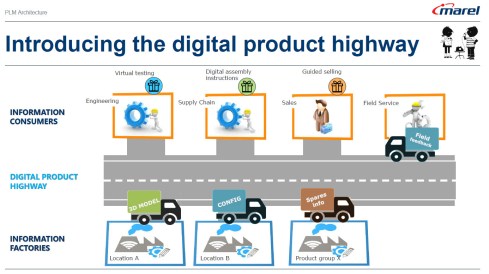

The Next Lane: Marel and the Digital Product Highway with Roger Kabo

With Roger Kabo, we discussed the steps needed to replace a legacy PLM environment and be open to a modern, federated, and data-driven future.

Step 1: Start with the end in mind. Every successful business starts with a clear and compelling vision. Your vision should be specific, inspiring, and something your team can rally behind.

Next, build on value and do it step by step.

How do you manage technology and data when you have a diverse product portfolio?

We talked with Jim van Oss, the former CIO of Moog Inc., for a deep dive into the fascinating world of technology transformations.

Key Takeaway: Evolving technology requires a clear strategy!

Jim underscores the importance of having a north star to guide your technological advancements, ensuring you remain focused and adaptable in an ever-changing landscape.

Diverse Products, Unified Systems: MBSE Insights with Max Gravel from Moog

We discussed the future of the Model-Based approaches with Max Gravel – MBD at Gulfstream and MBSE at Moog.

Max Gravel, Manager of Model-Based Engineering at Moog Inc., who is also active in modern CM, emphasizes that understanding your company’s goals with MBD is crucial.

There’s no one-size-fits-all solution: it’s about tailoring the strategy to drive real value for your business. The tools are available, but the key lies in addressing the right questions and focusing on what matters most. A great, motivating story containing all the aspects of digital transformation in the PLM domain.

Customer-First PLM: Insights on Digital Transformation and Leadership

With Helene Arlander, who has been involved in big transformation projects in the telecom industry, starting from a complex legacy environment and implementing new data-driven approaches, we discussed the importance of managing product portfolios end-to-end and the leadership strategies needed for engaging people in charge.

We also discussed the role of AI in shaping the future of PLM and the importance of vision, diverse skill sets, and teamwork in transformations.

Conclusion

I believe the time of traditional blogging is over – current PLM concepts and issues can be easily queried by using ChatGPT-like solutions. The fundamental understanding of what you can do now comes from learning and listening to people, not as fast as a TikTok video or Insta message. For me, a podcast is a comfortable method of holistic learning.

Let us know what you think and who should be in Season 3

And for my friends in the United States – Happy Thanksgiving and think about the day after ……..

Recently, I attended several events related to the various aspects of product lifecycle management; most of them were tool-centric, explaining the benefits and values of their products.

In parallel, I am working with several companies, assisting their PLM teams to make their plans understood by the upper management, which has always been my mission in the past.

However, nowadays, people working in the business feel more and more challenged and pained because they cannot respond adequately to upcoming business demands.

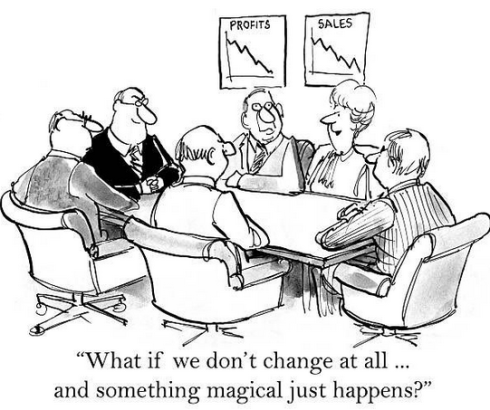

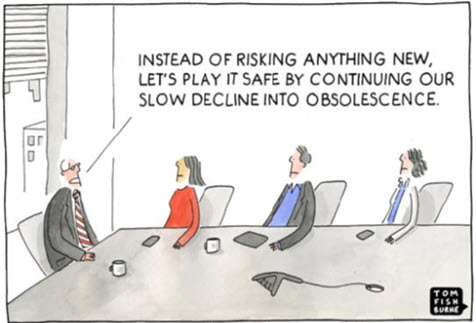

The image below has been shown so many times, and every time, the context becomes more relevant.

Too often, an evolutionary mindset with small steps is considered instead of looking toward the future and reasoning back for what needs to be done.

Let me share some experiences and potential solutions.

Don’t use the P** word!

The title of this post is one of the most essential points to consider. By using the term PLM, the discussion is most of the time framed in a debate related to the purchase or installation of a system, the PLM system, which is an engineering tool.

PLM vendors, like Dassault Systèmes and Siemens, have recognized this, and the word PLM is no longer on their home pages.

They are now delivering experiences or digital industries software.

Other companies, such as PTC and Aras, broadened the discussion by naming other domains, such as manufacturing and services, all connected through a digital thread.

The challenge for all these software vendors is why a company would consider buying their products. A growing issue for them is also why a company would want to replace its existing PLM system with another one, as there is so much legacy.

For all of these vendors, success can come if champions inside the targeted company understand the technology and can translate its needs into their daily work.

Here, we meet the internal PLM team, which is motivated by the technology and wants to spread the message to the organization, often with no or limited success, as the value and the context they are considering are not understood or felt as urgent.

Lesson 1:

Don’t use the word PLM in your management messaging.

In some of the current projects I have seen, people talk about the digital highway or a digital infrastructure to take this hurdle. For example, listen to the SharePLM podcast with Roger Kabo from Marel, who talks about their vision and digital product highway.

As soon as you use the word PLM, most people think about a (costly) system, as this is how PLM is framed. Engineering, like IT, is often considered a cost center, as money is made by manufacturing and selling products.

According to experts (CIMdata/Gartner), Product Lifecycle Management is considered a strategic approach. However, the majority of people talk about a PLM system. Of course, vendors and system integrators will speak about their PLM offerings.

To avoid this framing, first of all, try to explain what you want to establish for the business. The terms Digital Product Highway or Digital Infrastructure, for example, avoid thinking in systems.

Lesson 2:

Don’t tell your management why they need to reward your project – they should tell you what they need.

This might seem like a bit of strange advice; however, you have to realize that most of the time, people do not talk about the details at the management level. At the management level, there are strategies and business objectives, and you will only get attention when your proposal addresses the business needs. At the management level, there should be an understanding of the business need and its potential value for the organization. Next, analyzing the business changes and required tools will lead to an understanding of what value the PLM team can bring.

Yousef Hooshmand’s 5 + 1 approach illustrates this perfectly. It is crucial to note that long-term executive commitment is needed to have a serious project, and therefore, the connection to their business objective is vital.

Therefore, if you can connect your project to the business objectives of someone in management, you have the opportunity to get executive sponsorship: crucial advice you hear all the time when discussing successful PLM projects.

Lesson 3:

Alignment must come from within the organization.

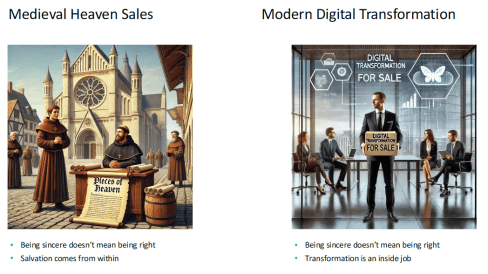

Last week, at the 20th anniversary of the Dutch PLM platform, Yousef Hooshmand gave the keynote speech starting with the images below:

On the left side, we see the medieval Catholic church sincerely selling salvation through indulgences, where the legend says Luther bought the hell, demonstrating salvation comes from inside, not from external activities – read the legend here.

On the right side, we see the Digital Transformation expert sincerely selling digital transformation to companies. According to LinkedIn, there are about 1,170,000 people with the term Digital Transformation in their profile.

As Yousef mentioned, the intentions of these people can be sincere, but also, here, the transformation must come from inside (the company).

When I work with companies, I use the Benefits Dependency Network (BDN) methodology to create a storyboard for the company. The BDN then serves as a base for creating storylines that help people in the organization have a connected view starting from their perspective.

Companies might hire strategic consultancy firms to help them formulate their long-term strategy. This can be very helpful where, in the best case, the consultancy firm educates the company, but the company should decide on the direction.

In an older blog post, I wrote about this methodology, presented by Johannes Storvik at the Technia Innovation forum, and how it defines a value-driven implementation.

Dassault Systèmes and its partners use this methodology in their Value Engagement process, which is tuned to their solution portfolio.

You can also watch the webinar Federated PLM Webinar 5 – The Business Case for the Federated PLM, in which I explained the methodology used.

Lesson 4:

PLM is a business need, not an IT service

This lesson is essential for those who believe that PLM is still a system or an IT service. In some companies, I have seen that the (understaffed) PLM team is part of a larger IT organization. In this type of organization, the PLM team, as part of IT, is purely considered a cost center that is available to support the demand from the business.

The business usually focuses on incremental and economic profitability, less on transformational ways of working.

In this context, it is relevant to read Chris Seiler’s post How to escape the vicious circle in times of transformation? In it, he reflects on his 2002 MBA study, which is still valid for many big corporate organizations.

It is a long read, but it is gratifying if you are interested. It shows that PLM concepts should be discussed and executed at the business level. Of course, I read the article with my PLM-twisted brain.

The image above from Chris’s post could be a starting point for a Benefits Dependency Network diagram, expanded with Objectives, Business Changes and Benefits to fight this vicious downturn.

As PLM is no longer a system but a business strategy, the PLM team should be integrated into the business, potentially overseen by the CIO or CDO, as a CEO is usually not able to give this long-term executive commitment.

Lesson 5:

Educate yourselves and your management

The last lesson is crucial, as due to improving technologies like AI and, earlier, the concepts of the digital twin, traditional ways of coordinated working will become inefficient and redundant.

However, before jumping on these new technologies, everyone, at every level in the organization, should be aware of:

WHY will this be relevant for our business? Is it to cut costs – being more efficient as fewer humans are in the process? Is it to be able to comply with new upcoming (sustainability) regulations? Is it because the aging workforce leaves a knowledge gap?

WHAT will our business need in the next 5 to 10 years? Are there new ways of working that we want to introduce, but we lack the technology and the tools? Do we have skills in-house? Remember, digital transformation must come from the inside.

HOW are we going to adapt our business? Can we do it in a learning mode, as the end target is not clear yet—the MVP (Minimum Viable Product) approach? Are we moving from selling products to providing a Product Service System?

My lesson: Get inspired by the software vendors who will show you what might be possible. Get educated on the topic and understand what it would mean for your organization. Start from the people and the business needs before jumping on the tools.

In the upcoming PLM Roadmap/PDT Europe conference on 23-24 October, we will be meeting again with a group of P** experts to discuss our experiences and progress in this domain. I will give a lecture here about what it takes to move to a sustainable economy based on a Product-as-a-service concept.

If you want to learn more – join us – here is the link to the agenda.

Conclusion

I hope you enjoyed reading a blog post not generated by ChatGPT, although I am using bullet points. With the overflow of information, it remains crucial to keep a holistic overview. I hope that with this post, I have helped the P** teams in their mission, and I look forward to learning from your experiences in this domain.

I attended the PDSVISION forum for the first time, a two-day PLM event in Gothenburg organized by PTC’s largest implementer in the Nordics, also active in North America, the UK, and Germany.

The theme of the conference: Master your Digital Thread – a hot topic, as it has been discussed in various events, like the recent PLM Roadmap/PDT Europe conference in November 2023.

The event drew over 200 attendees, showing the commitment of participants, primarily from the Nordics, to knowledge sharing and learning.

The diverse representation included industry leaders like Vestas, pioneers in Sustainable Energy, and innovative startups like CorPower Ocean, who are dedicated to making wave energy reliable and competitive. Notably, the common thread among these diverse participants was their focus on sustainability, a growing theme in PLM conferences and an essential item on every board’s strategic agenda.

I enjoyed the structure and agenda of the conference. The first day was filled with lectures and inspiring keynotes. The second day was a day of interactive workshops divided into four tracks, which were of decent length so we could really dive into the topics. As you can imagine, I followed the sustainability track.

Here are some of my highlights of this conference.

Catching the Wind: A Digital Thread From Design to Service

Simon Saandvig Storbjerg, unfortunately remote, gave an overview of the PLM-related challenges that Vestas is addressing. Vestas, the undisputed market leader in wind energy, is indirectly responsible for 231 million tonnes of CO2 per year.

One of the challenges of wind power energy is the growing complexity and need for variants. With continuous innovation and the size of the wind turbine, it is challenging to achieve economies of scale.

As an example, Simon shared data related to the Lost Production Factor, which was around 5% in 2009 and reduced to 2% in 2017 and is now growing again. This trend is valid not only for Vestas but also for all wind turbine manufacturers, as variability is increasing.

Vestas is introducing modularity to address these challenges. I reported last year about their modularity journey related to the North European Modularity biannual meeting held at Vestas in Ringkøbing – you can read the post here.

Simon also addressed the importance of Model-Based Definition (MBD), which is crucial if you want to achieve digital continuity between engineering and manufacturing. In this industry in particular, involving the entire value chain in MBD is a challenge, despite the fact that the benefits are proven and known. Changing people’s skills and processes remains a challenge.

The Future of Product Design and Development

The PTC session, led by Mark Lobo, General Manager for the PLM Segment, and Brian Thompson, General Manager of the CAD Segment, brought clarity to the audience on the joint roadmap of Windchill and Creo.

Mark and Brian highlighted the benefits of a Model-Based Enterprise and Model-Based Definition, which are musts if you want to be more efficient in your company and value chain.

The WHY is known (see the benefits described in the image), but it requires new ways of working, something organizations need to implement anyway when aiming to realize a digital thread or digital twin.

In addition, Mark addressed PTC’s focus on Design for Sustainability and their partner network. In relation to materials science, the partnership with Ansys Granta MI is essential. It was presented later by Ansys and discussed on day two during one of the sustainability workshops.

Mark and Brian elaborated on the PTC SaaS journey – the future Atlas platform and the current status of Windchill+ and Creo+, addressing a smooth transition for existing customers to a new future architecture.

And, of course, there was the topic of Artificial Intelligence.

Mark explained that PTC is exploring AI in various areas of the product lifecycle. On the design side, this includes validating requirements, optimizing CAD models and streamlining change processes. Downstream activities, like quality and maintenance predictions, improved operations, and streamlined field services and service parts, are also part of the PTC Copilot strategy.

PLM combined with AI is for sure a topic where the applicability and benefits can be high to improve decision-making.

PLM Data Merge in the PTC Cloud: The Why & The How

Mikael Gustafson from Xylem, a leading Global Water Solutions provider, described their recently completed project: merging their on-premise Windchill instance TAPIR and their cloud Windchill XGV into a single environment.

TAPIR stands for Technical Administration, Part Information Repository and is very much part-centric and used in one organization. XGV stands for Xylem Global Vault, and it is used in 28 organizations with more of a focus on CAD data (Creo and AutoCAD). Two different silos are to be joined in one instance to build a modern, connected, data-driven future or, as Mikael phrased it: “A step towards a more manageable Virtual Product”.

It was a serious project involving a lot of resources and time, again showing the challenges of migrations. I am planning to publish a blog post with the draft title “Migration Migraine,” as this type of migration is prevalent in many places because companies want to implement a single PLM backbone beyond (mechanical) engineering.

What I liked about the approach was its focus on assessing the risks and prioritizing a mitigation strategy if necessary. As the list below shows, even the COVID-19 pandemic was challenging the project.

Often, big migration projects fail due to optimism or by assessing some of the risks at the start and then giving it a go.

When failures happen, there is often the blame game: Was it the software, the implementer, or the customer (past or present) that caused the troubles? Mediating in such environments has long been my mission as the “Flying Dutchman,” and from my experience, it is not about the blame game; most of the time, it is about too-high expectations and not enough time or resources to fully control this journey.

As Mikael said, Xylem was successful, and during the go-live, only a few non-critical issues popped up.

When asked what he would do differently with the project’s hindsight, Mikael mentioned he would do the migrations not as a big project but as smaller projects.

I can relate a lot to this answer as, in my experience, the “one-time” migration projects have created a lot of stress for the company, and only a few of them were successful.

Starting being coordinated and then connected

Several sessions were held where companies shared their PLM journey, to be mapped along the maturity slide (slide 8) I shared in my session: The Why, What and How of Digital Transformation in the PLM domain. You can review the content here on SlideShare.

There was Evolabel, a company starting its PLM journey because they are suffering from ineffective work procedures, information islands and the increasing complexity of its products.

Evolabel realized it needed PLM to realize its market ambition: To be a market leader within five years. For Evolabel, PLM is a must that is repeatable and integrated internally.

They shared how they first defined the required understanding and mindset for the needed capabilities before implementing them. In my terminology, they started to implement a coordinated PLM approach.

Teddy Svenson from JBT, a well-known manufacturer of food-tech solutions, described their next step in PLM: moving from an old AS/400 system with very little integration to PDM to a complete PLM system with parts, configurations, and change management.

It is not an easy task but a vital stepping stone for future development and a complete digital thread, from sales to customer care. In my terminology, they were upgrading their technology to improve their coordinated approach to be ready for the next digital evolution.

There were several other presentations on Day One – see the agenda here. I cannot cover them all given the limited size of this blog post.

The Workshops

As I followed the Sustainability track, I cannot comment much on the other tracks; however, given the presenters and the topics, they all appeared to be very pragmatic and interactive – given the format.

Achieving sustainability goals by integrating material intelligence into the design process

In the sustainability track, we started with Manuelle Clavel from Ansys Granta, who explained in detail how material data and its management are crucial for designing better-performing, more sustainable, and compliant products.

Compliance with (upcoming) regulations, the use of material characteristics in the context of more sustainable products, and the ability to perform a Life Cycle Assessment all make it crucial to have material information digitally available, both in the CAD design environment and in the PLM environment.

For me, a dataset of material properties is an excellent example of data used in a connected enterprise. You do not want to copy the information from system to system; it needs to be connected and available in real-time.

How can we design more sustainable products?

Together with Martin Lundqvist from QCM, I conducted an interactive session. We started with the need for digitalization, then looked at RoHS and REACH compliance and discussed the upcoming requirements of the Digital Product Passport.

We closed the session with a dialogue on the circular economy.

From the audience, we learned that many companies are still early in understanding the implementation of sustainability requirements and new processes. However, some were already quite advanced and acting. In particular, it is essential to know if your company is involved with batteries (DPP #1) or is close to consumers.

Conclusion

The PDSFORUM was for me an interesting experience, meeting companies at all different stages of their PLM journey. All sessions I attended were realistic, and the solutions were often pragmatic. In my day-to-day life, inspiring companies to understand a digital and sustainable future, I sometimes forget the journey everyone is going through.

Thanks, PDVISION, for inviting me to speak and learn at this conference.

and some sad news …..

I was sorry to learn that last week, Dr. Ken Versprille suddenly passed away. I knew Ken as shown in the picture: a passionate moderator and timekeeper of the PLM Roadmap / PDT conferences, well prepared for the details. May his spirit live on through the future conferences, the next one already on May 8-9th in Washington, DC.

Today I read Rhiannon Gallagher’s LinkedIn post: If Murray Isn’t Happy, No One Is Happy: Value Your Social Nodes. The story reminded me of a complementary blog post I wrote in 2014, although with a slightly different perspective.

After reviewing my post, I discovered that nine years later, we are still having the same challenges of how to involve people in a business transformation.

People are their most important assets, companies claim, but where do they focus their spending and efforts?

Probably more on building the ideal processes and having the best IT solution.

Organisational Change Management is not in their comfort zone. People like Rhiannon Gallagher and, in my direct network, the team from Share PLM are focusing on this blind spot. Don’t forget this part of your digital transformation efforts.

And just for fun, the rest of the post below is the article from 2014. At that time, I was not yet focusing on digital transformation in the PLM domain. That started at the end of 2014 and the beginning of 2015.

PLM and Blockers

(read it with 2014 in mind – where were you?)

In the past month (April 2014), I had several discussions related to the complexity of PLM.

- Why is PLM perceived as complex?

- Why is it hard to sell PLM internally into an organization?

- Or, to phrase it differently: “What makes PLM so difficult for normal human beings, as conceptually it is not so complex?”

(2023 addition: PLM is complex (and we have to accept it?) )

So what makes it complex? What is behind PLM?

The main concept behind PLM is that people need to share data. It can be around a project, a product, or a plant through the whole lifecycle. In particular, during the early lifecycle phases, there is a lot of information that is not yet 100 percent mature.

You could decide to wait till everything is mature before sharing it with others (the classical sequential manner). However, the chances of doing it right the first time are low. Several iterations between disciplines will be required before the data is approved.

The more a company works sequentially, the higher the costs of changes and the longer the time to market. Due to the rigidity of this sequential approach, it becomes difficult to respond rapidly to changing customer or market demands.

Therefore, in theory (and it is not only a PLM theory), concurrent engineering should reduce the number of iterations and the total time to market by working in parallel on not yet approved data.

PLM goes further. It is about the sharing of data, and as it originally started in the early phases of the product lifecycle, the concept of PLM was considered something related to engineering. And to be fair, most of the PLM (CAD-related) vendors have a high focus on the early stages of the lifecycle and have strengthened this idea.

However, sharing can go much further, e.g., early involvement of suppliers (still engineering) or downstream support for after-sales/services (the new acronym SLM – Service Lifecycle Management).

In my recent (2014) blog posts, I discussed the concepts of SLM and the required data model for that.

Anticipated sharing

The complexity lies in the word “sharing”. What does sharing mean for an organization where, historically, every person was rewarded for their knowledge instead of being rewarded for sharing and spreading knowledge? Guarding your knowledge was job protection.

Many so-called PLM implementations have failed to reach the sharing target as the implementation focus was on storing data per discipline and not necessarily storing data to become shareable and used by others. This is a huge difference.

(2023 addition: At that time, all PLM systems were Systems of Record)

Some famous (ERP) vendors claim if you store everything in their system, you have a “single version of the truth”.

Sounds attractive. However, my garbage bin at home is also a place where everything ends up in a single place, but a garbage bin has not been designed for sharing. Another person has no clue or time to analyze what is inside.

Even data stored in the same system can be hidden from others as the way to find data is not anticipated.

Data sharing instead of document deliverables

The complexity of PLM is that data should be created and shared in a manner that is not necessarily the most efficient for a single purpose. With some extra effort, you can make the information usable and searchable for others. Typical examples are drawings and document management, where the whole process for a person is focused on delivering a specific document on time. That is OK for that purpose, but this document becomes a legacy for the long term, as you need to know (or remember) what is inside the document.

A logical implication of data sharing is that, instead of managing documents, organizations start to collect and share data elements (a 3D model, functional properties, requirements, physical properties, logistical properties, etc.). Data can be connected and restructured easily through reports and dashboards, thereby providing specific views for different roles in the organization. Sharing has become possible, and it can be done online. Nobody needs to consolidate and extract data from documents (Excels?).

(2023 addition: The data-driven PLM infrastructure talking about datasets)

This does not fit older generations and departmental-managed business units that are rewarded only for their individual efficiency.

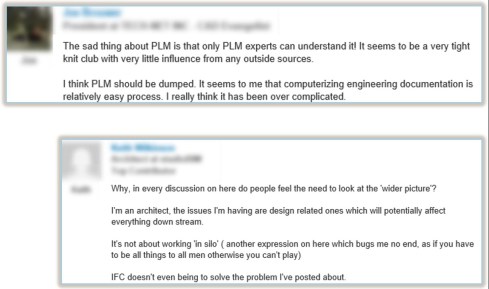

Here is an extract of a LinkedIn discussion from 2014, where the two extremes are visible. Unfortunately (or perhaps fortunately), LinkedIn does not keep everything online. There is already so much “dark data” on the internet.

Joe stated:

“The sad thing about PLM is that only PLM experts can understand it! It seems to be a very tight knit club with very little influence from any outside sources.

I think PLM should be dumped. It seems to me that computerizing engineering documentation is relatively easy process. I really think it has been over complicated. Of course we need to get the CAD vendors out of the way. Yes it was an obvious solution, but if anyone took the time to look down the road they would see that they were destroying a well established standard that were so cost effective and simple. But it seems that there is no money in simple”

And on the other side, Kais stated:

“If we want to be able to use state-of-the art technology to support the whole enterprise, and not just engineering, and through-life; then product information, in its totality, must be readily accessible and usable at all times and not locked in any perishable CAD, ERP or other systems. The Data Centric Approach that we introduced in the Datamation PLM Model is built on these concepts”

Readers of my blog will understand I am very much aligned with Kais, and PLM guys have a hard time convincing Joe of the benefits of PLM (I did not try).

Making the change happen

Besides this LinkedIn discussion, I had discussions with several companies where my audience understood the data-centric approach. It was nice to be in the room together, sharing ideas of what would be possible. However, the outside world is hard to convince, and here the challenge is organizational change management. Who will support you, and who will work against you?

BLOCKERS: I read an interesting article in IndustryWeek from John Dyer with the title: What Motivates Blockers to Resist Change?

John describes the various types of blockers, and when reading the article combined with my PLM twisted brain, I understood again that this is one of the reasons why PLM is perceived as complex – you need to change, and there are blockers:

Blocker (noun) – Someone who purposefully opposes any change (improvement) to a process for personal reasons

“Blockers” can occupy any position in a company. They can be any age, gender, education level or pay rate. We tend to think of blockers as older, more experienced workers who have been with the company for a long time, and they don’t want to consider any other way to do things. While that may be true in some cases, don’t be surprised to find blockers who are young, well-educated and fairly new to the company.”

The problem with blockers

The combination of business change and the existence of blockers is one of the biggest risks for companies going through a business transformation. By the way, this is not only related to PLM; it is related to any required change in business.

Some examples:

A company I worked with was eager to study its path to the future, which required more global collaboration, a competitive business model and a more customer-centric approach. After a long evaluation phase, they decided they needed PLM, which was new for most of the people in the company. Although the project team was enthusiastic, they were not able to get past the blockers of change, so no PLM. Ironically enough, they lost a significant part of their business to companies that have implemented PLM. Defending the past is not a guarantee for the future.
