The past two weeks have been a fascinating journey, delving into the intersection of Curiosity, Innovation, and modern PLM. Where many PLM-related posts are about the best products and the best architectures, there is also the “soft” angle – people and culture – which I believe is the most important to start from. Without the right people and the right mindset, every PLM implementation is ready to fail.

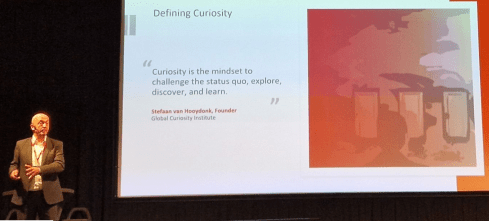

First, I worked with Stefaan van Hooydonk, the founder of the Global Curiosity Institute and author of the bestselling book The Workplace Curiosity Manifesto, on the article Curiosity as Guiding Principle for PLM Change, which explained the importance of Curiosity in the context of sustainable product development (PLM).

The intersection between Curiosity and modern PLM is Systems Thinking.

Systems Thinking: A Crucial 21st Century Skill for Sustainable Product Development, Driven by Curiosity.

Last week, I had the privilege of attending the CADCAM Lab conference in Ljubljana. In addition to my keynote, I was inspired by several presentations on the various aspects of digital transformation: the tools, possible enablement, and the needed mindset.

One of the highlights was the talk by Tanja Mohorič, the director for innovation culture and European projects in Slovene corporation Hidria and director of Slovene Automotive Cluster ACS. Tanja shared her insights on fostering Innovation, a crucial driver for a sustainable business as companies need to innovate in order to remain significant.

One of the intersections between Innovation and modern PLM is Curiosity.

Innovation is defined as the process of bringing about new ideas, methods, products, services, or solutions that have significant positive impact and value.

Let’s zoom in on these two themes.

Curiosity

I knew Stefaan from his keynote at the PLM Road Map / PDT Europe 2022 conference; you can read my review of his session here: The week after PLM Roadmap / PDT Europe 2022.

It was an eye-opener for many of us focusing on the PLM domain. Stefaan’s message is that Curiosity is not only a personal skill; it is also something of a company’s culture. And in this age of rapid change, companies that embrace a culture of openness are outperforming their peers.

This time, on Earth Day (April 22nd), Stefaan organized an interactive webinar titled “Curiosity and the Planet,” which addressed the need for new technologies and approaches to living in a sustainable future. With my Green PLM-twisted mind, I immediately saw the overlap and intersection between our missions.

We decided to write an article together on this topic, in which we described a pathway for companies that want to develop more sustainable products or solutions, using Curiosity as one of the means.

As companies need to find their path to the digitization of their PLM infrastructure due to regulations, ESG reporting, and potentially the introduction of digital product passports and the circular economy, they need to act fast in an area not familiar to them.

Here, a curious organization will outperform the traditional, controlled enterprise.

You can read the full article here: Curiosity as Guiding Principle for PLM Change.

I know that in our hasty society not everyone will read the article, although I think you should. For those who do not read the details, I close this topic with a quote from the article:

We define Curiosity as the mindset to challenge the status quo, explore, discover and learn.

Curiosity is often considered a trait linked to an individual, as exemplified by the constant questions of children or scientists. Groups of people or organizations can also be curious collectively. Research from INSEAD studying the level of Curiosity across the executive team uncovered that these teams are superior in two distinct ways: first, they are better at future Innovation, and second, they are better at optimizing their current operations. Curiosity on the executive team leads not only to future success but also to better short-term business results. Such teams create the perfect environment for their teams to thrive.

Change, however, is hard, and people are often left to their own devices; they prefer to perpetuate the known past rather than invite an unknown future. Curiosity helps us lean into uncertainty. It encourages us to slow down and observe whether the status quo we hold dear is still relevant. Curiosity is the prime catalyst for change. It invites open questions.

Innovation

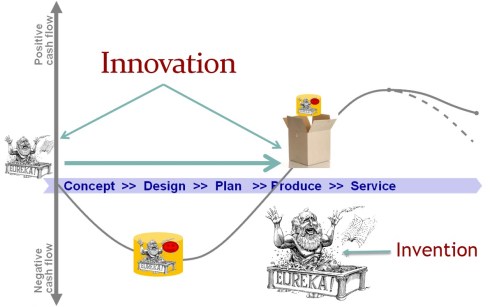

There is often confusion between Invention and Innovation. Where invention is the “Eureka” moment where a new idea gets its shape, Innovation is the process of bringing new ideas, methods, products, services, or solutions to the market.

I presented this topic at the 2013 Product Innovation Conference in Berlin. The title of the presentation was PLM Loves Innovation, and you can find it here on SlideShare.

Looking back at the presentation, I realized we were thinking linearly.

Concepts of an iterative approach, DevOps and a Minimum Viable Product (MVP) were not yet there. Meanwhile, thanks to digitization, bringing Innovation to the market has changed, which made Tanja Mohorič’s presentation a significant refresh of the mind.

Tanja’s lecture was illustrated by various quotes, which you can find in her presentation. Here are a few examples:

If you really look closely, most overnight successes took a long time. (Steve Jobs)

If you read Steve Jobs’s history at Apple, you will discover it has been a long journey. Although we like to praise the hero, there were many other, less visible people and patents involved in bringing Apple’s Innovation to the market.

Innovation is the ability to convert ideas into invoices (Lewis Duncan)

What I like about this quote is that it also shows the importance of having a positive financial outcome. Bringing Innovation to the market is a matter of timing. If you are too early, there is no market for your product (yet), and if you are too late, the market share or margin is gone.

Minds are like parachutes – they only function when open (Thomas Dewar)

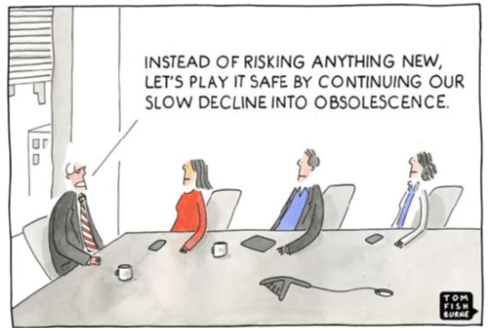

Curiosity and an open mind remain needed. The parachute quote is a quote to remember, mainly if you work in a traditional, established company. The risk of conformance is high, and a “we know the best” attitude might be killing the company, as we have seen from some management examples, like Kodak, NOKIA, and others.

Tanja’s presentation addressing the elements that support Innovation and those that kill Innovation can be found here: INNOVATION AS A PRECONDITION TO SUCCESS_Tanja Mohorič.

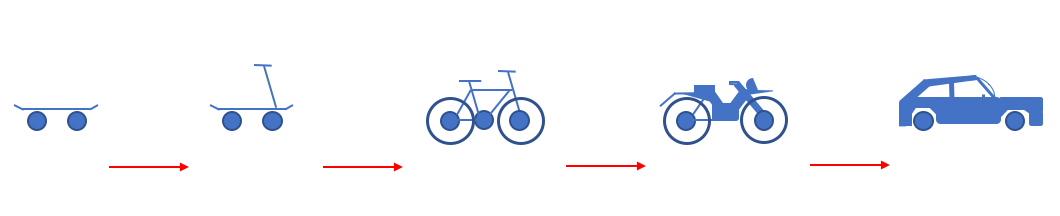

I want to close with one of the essential images that she shared, which is very aligned with how I think companies should consider their future: not as an evolutionary path to survive but as a journey to be inspired.

Coaching

As the CADCAM Group is a significant implementer of the Dassault Systèmes portfolio, my presentation about digital transformation in the PLM Domain was focused on their terminology and capabilities. You can find my presentation on SlideShare here.

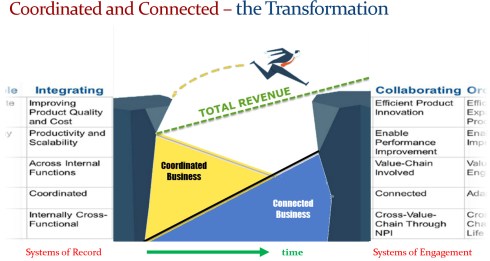

However, the HOW part of digital transformation is more or less independent of the software. Here, it is about people, digital skills and new ways of working, which can be challenging for an existing enterprise as the linear business must continue. You might have seen the diagram below from previous blog posts/presentations.

The challenge I discussed with a few companies was how to apply it to your company.

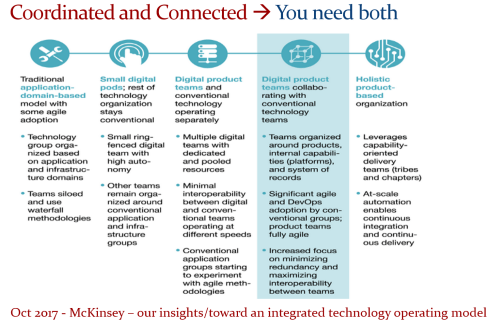

First of all, I am still promoting McKinsey’s approach described in their 2017 article Toward an integrated technology operating model, which might not directly mention PLM at first glance. The way you work in your business should reflect the way you work with PLM and vice versa.

Where the traditional application-domain-based model reflects the existing coordinated business, the transformation takes place by learning to work first in small pods and later in digital product teams.

It seems evident that these new teams will be staffed with young, digital-native people. However, it remains crucial that these teams are coached by experienced people who help the team benefit from their vast experience.

It is like in soccer. Having eleven highly skilled young players does not make a team successful. Success depends on the combination of the trainer and the coach, and it is a continuous interaction throughout the season.

Therefore, a question for your organization: “Where are your coaches and trainers?”

I addressed this topic in my post: PLM 2020- The next decade (4 challenges), where the topic of changing organizations and retiring people became apparent.

As a rule of thumb, I would claim that you should try to give somebody with unique knowledge who will be retiring in 2 – 3 years the role of coach instead of an operational mission. It may look less effective; however, it will contribute to a smooth knowledge transition from a coordinated to a coordinated and connected enterprise.

Conclusion

It was great to be inspired by some of the “soft” topics related to modern PLM. We like to discuss the usage of drawings, intelligent part numbers, the EBOM, MBOM, and SBOM or a cloud infrastructure. However, I enjoyed discussing perhaps the most essential parts of a successful PLM implementation: the people, their motivation and their attitude to Curiosity and Innovation – their willingness to get inspired by the future.

What do you see as the most important topic to address in the future?

In the past few weeks, together with Share PLM, we recorded and prepared a few podcasts to be published soon. As you might have noticed, for Season 2, our target is to discuss the human side of PLM and PLM best practices and less the technology side. Meaning:

- How to align and motivate people around a PLM initiative?

- What are the best practices when running a PLM initiative?

- What are the crucial skills you need to have as a PLM lead?

And as there are always many success stories to learn from on the internet, we also challenged our guests to share the moments where they gained experience.

As the famous quote says:

Experience is what you get when you don’t get what you expect!

We recently published our podcast with Antonio Casaschi from Assa Abloy, a Swedish company you might have never noticed, although their products and services are a part of your daily life.

It was a discussion close to my heart. We discussed the various aspects of PLM. What makes a person a PLM professional? And if you have no time to listen to these 35 minutes, read and scan the recording transcript on the transcription tab.

At 0:24:00, Antonio mentioned the concept of Proof of Concept, as he had good experiences with them in the past. The remark triggered me to share some observations that a Proof of Concept (POC) is an old-fashioned way to drive change within organizations. Although we did not discuss it in this podcast, in my experience companies have been using Proofs of Concept to win time, as they were afraid to make a decision.

A POC to gain time?

Company A

When working with a well-known company in 2014, I learned they were planning approximately ten POCs per year to explore new ways of working or new technologies. As each POC was based on an annual time scheme, the evaluation at the end of the year was often very discouraging.

Most of the time, the conclusion was: “Interesting, we should explore this further” /“What are the next POCs for the upcoming year?”

There was no commitment to follow-up; it was more of a learning exercise not connected to any follow-up.

Company B

During one of the PDT events, a company presented their two-year POC with the three leading PLM vendors, exploring supplier collaboration. I understood the PLM vendors had invested much time and resources to support this POC, expecting a big deal. However, the team mentioned it was an interesting exercise, and they learned a lot about supplier collaboration.

And nothing happened afterward ………

In 2019

At the 2019 Product Innovation Conference in London, when discussing Digital Transformation within the PLM domain, I shared in my conclusion that the POC was mainly a waste of time as it does not push you to transform; it is an option to win time but is uncommitted.

My main reason for not pushing a POC is that it is more of a limited feasibility study.

- First, it is often used to push people and processes into the technical capabilities of the systems used. A focus starting from technology is the opposite of what I have been pushing for a long time: First, focus on the value stream – people and processes – and then study which tools and technologies support these demands.

- Second, the POC approach often blocks innovation as the incumbent system providers will claim the desired capabilities will come (soon) within their systems—a safe bet.

The Minimum Viable Product approach (MVP)

With the awareness that we need to work differently and benefit from digital capabilities also came the term Minimum Viable Product or MVP.

The abbreviation MVP is not to be confused with the minimum valuable products or most valuable players.

There are two significant differences with the POC approach:

- You admit the solution does not exist anywhere – so it cannot be purchased or copied.

- You commit to the fact that this new approach will be the right direction to take and agree that a perfect fit solution is not blocking you from starting for real.

These two differences highlight the main challenges of digital transformation in the PLM domain. Digital Transformation is a learning process – it takes time for organizations to acquire and master the needed skills. And secondly, it cannot be a big bang, and I have often referred to the 2017 article from McKinsey: Toward an integrated technology operating model. Image below.

We will soon hear more about digital transformation within the PLM domain during the next episode of our SharePLM podcast. We spoke with Yousef Hooshmand, currently working for NIO, a Chinese multinational automobile manufacturer specializing in designing and developing electric vehicles, as their PLM data lead.

You might have discovered Yousef earlier when he published his paper: “From a Monolithic PLM Landscape to a Federated Domain and Data Mesh”. It is highly recommended to read the paper if you are interested in a potential PLM future infrastructure. I wrote about this whitepaper in 2022: A new PLM paradigm discussing the upcoming Systems of Engagement on top of a Systems of Record infrastructure.

To align our terminology with Yousef’s wording, his domains align with the Systems of Engagement definition.

As we discovered and discussed with Yousef, technology is not the blocking issue to start. You must understand the target infrastructure well and where each domain’s activities fit. Yousef mentions that there is enough literature about this topic, and I can refer to the SAAB conference paper: Genesis – an Architectural Pattern for Federated PLM.

For a less academic impression, read my blog post, The week after PLM Roadmap / PDT Europe 2022, where I share the highlights of Erik Herzog’s presentation: Heterogeneous and Federated PLM – is it feasible?

There is much to learn and discover which standards will be relevant, as both Yousef and Erik mention the importance of standards.

The podcast with Yousef (soon to be found HERE) was not so much about organizational change management and people.

However, Yousef mentioned the most crucial success factor for the transformation project he supported at Daimler. It was C-level support, trust and understanding of the approach, knowing it will be many years, an unavoidable journey if you want to remain competitive.

And with the journey aspect comes the importance of the Minimal Viable Product. You are starting a journey with an end goal in mind (top-of-the-mountain), and step by step (from base camp to base camp), people will be better covered in their day-to-day activities thanks to digitization.

A POC would not help you make the journey; perhaps a small POC would help you understand what it takes to cross a barrier.

Conclusion

The concept of POCs is outdated in a fast-changing environment where technology is not necessarily the blocking issue. Developing practices, new architectures and using the best-fit standards is the future. Embrace the Minimal Viable Product approach. Are you?

Last week I enjoyed visiting LiveWorx 2023 on behalf of the PLM Global Green Alliance. PTC had invited us to understand their sustainability ambitions and meet with the relevant people from PTC, partners, customers and several of my analyst friends. It felt like a reunion.

In addition, I used the opportunity to better understand their Velocity SaaS offering with OnShape and Arena. The almost four-day event, with approximately 5000 attendees, was massive and well-organized.

So many people were excited that this was again an in-person event after four years.

With PTC’s broad product portfolio, you could easily have a full agenda for the whole event, depending on your interests.

I was personally motivated that I had a relatively full schedule focusing purely on Sustainability, leaving all these other beautiful end-to-end concepts for another time.

Here are some of my observations.

Jim Heppelmann’s keynote

The primary presentation of such an event is the keynote from PTC’s CEO. This session allows you to understand the company’s key focus areas.

My takeaways:

- Need for Speed: Software-driven innovation, or as Jim said, Software is eating the BOM, reminding me of my recent blog post: The Rise and Fall of the BOM. Here Jim was referring to the integration with ALM (CodeBeamer) and IoT to have full traceability of products. However, including Software also requires agile ways of working.

- Need for Speed: Agile ways of working – the OnShape and Arena offerings are examples of agile working methods. A SaaS solution is easy to extend with suppliers or other stakeholders. PTC calls this their Velocity offering, typical Systems of Engagement, and I spoke later with people working on this topic. More in the future.

- Need for Speed: Model-based digital continuity – a theme I have discussed in my blog post too. Here Jim explains the interaction between Windchill and ServiceMax, both Systems of Record for product definition and Operation.

- Environmental Sustainability: introducing Catherine Kniker, PTC’s Chief Strategy and Sustainability Officer, announcing that PTC has committed to Science Based Targets, pledging near-term emissions reductions and long-term net-zero targets – see image below and more on Sustainability in the next section.

- A further investment in a SaaS architecture, announcing CREO+ as a SaaS solution supporting dynamic multi-user collaboration (a System of Engagement)

- A further investment in the partnership with Ansys fits the needs of a model-based future where modeling and simulation go hand in hand.

You can watch the full session Path to the Future: Products in the Age of Transformation here.

Sustainability

The PGGA spoke with Dave Duncan and James Norman last year about PTC’s sustainability initiatives. Remember: PLM and Sustainability: talking with PTC. Therefore, Klaus Brettschneider and I were happy to meet Dave and James in person just before the event and align on understanding what’s coming at PTC.

We agreed there is no “sustainability super app”; it is more about providing an open, digital infrastructure to connect data sources at any time of the product lifecycle, supporting decision-making and analysis. It is all about reliable data.

Product Sustainability 101

On Tuesday, Dave Duncan gave a great introductory session, Product Sustainability 101, addressing Business Drivers and Technical Opportunities. Dave started by explaining the business context aiming at greenhouse gas (GHG) reduction based on science-based targets, describing the content of Scope 1, Scope 2 and Scope 3 emissions.

The image above, which came back in several presentations later that week, nicely describes the mapping of lifecycle decisions and operations in the context of the GHG protocol.

Design for Sustainability (DfS)

On Wednesday, I started with a session moderated by James Norman titled Design for Sustainability: Harnessing Innovation for a Resilient Future. The panel consisted of Neil D’Souza (CEO Makersite), Tim Greiner (MD Pure Strategies), Francois Lamy (SVP Product Management PTC) and Asheen Phansey (Director ESG & Sustainability at PagerDuty). You can find the topic discussed below:

Some of the notes I took:

- No specific PLM modules are needed, LCA needs to become an additional practice for companies, and they rely on a connected infrastructure.

- Where to start? First, understand the current baseline based on data collection – what is your environmental impact? Next, decide where to start

- The importance of Design for Service – many companies design products for easy delivery, not for service. Being able to service products better will extend their lifetime, therefore reducing their environmental impact (manufacturing/decommissioning)

- There is a value chain for carbon data. In addition, suppliers significantly impact reaching net zero, as many OEMs have an Assembly To Order process, and most of the emissions occur during part manufacturing.

DfS: an example from Cummins

Next, on Wednesday, I attended the session from David Genter from Cummins, who presented their Design for Sustainability (DfS) project.

Dave started by sharing their 2030 sustainability goals:

- On Facilities and Operations: A reduction of 50% of GHG emissions, reducing water usage by 30%, reducing waste by 25% and reducing organic compound emissions by 50%

- Reducing Scope 3 emissions for new products by 25%

- In general, reducing Scope 3 emissions by 55M metric tons.

The benefits for products were documented using a standardized scorecard (example below) to ensure the benefits are real and not based on wishful thinking.

Many motivated people wanted to participate in the project, and the ultimate result demonstrated that DfS has both business value for Cummins and the environment.

The project has been very well described in this whitepaper: How Cummins Made Changes to Optimize Product Designs for the Environment – a recommended case study to read.

Tangible Strategies for Improving Product Sustainability

The session was a dialogue between Catherine Kniker and Dave Duncan, discussing the strategies to move forward with Sustainability.

They reiterated the three areas where we as a PLM community can improve: Material choice and usage, Addressing Energy Emissions and Reducing Waste. And it is worth addressing them all, as you can see below – it is not only about carbon reduction.

It was an informative dialogue going through the different aspects of where we, as an engineering/ PLM community, can contribute. You can watch their full dialog here: Tangible Strategies for Improving Product Sustainability.

Conclusion

It was encouraging to see that at such an event as LiveWorx, you could learn about Sustainability and discuss Sustainability with the audience and PTC partners. And as I mentioned before, we need to learn to measure (data-driven / reliable data), and we need to be able to work in a connected infrastructure (digital thread) to allow design, simulation, validation and feedback to go hand in hand. It requires adapting a business strategy, not just a tactical solution. With the PLM Global Green Alliance, we are looking forward to following up on these.

NOTE: PTC covered the expenses associated with my participation in this event but did not in any way influence the content of this post – I toured the conference fully independently and was encouraged by all the conversations I had.

Happy New Year to all of you, and may this year be a year of progress in understanding and addressing the challenges ahead of us.

To help us focus, I selected three major domains I will explore further this year. These domains are connected – of course – as nothing is isolated in a world of System Thinking. Also, I wrote about these domains in the past, as usual, since nothing happens out of the blue.

Meanwhile, there are a lot of discussions related to Artificial Intelligence (AI), in particular ChatGPT (OpenAI). But can AI provide the answers? I believe not, as AI is mainly about explicit knowledge, the knowledge you can define by (learning) algorithms.

Expert knowledge, often called Tacit knowledge, is the knowledge of the expert, combining information from different domains into innovative solutions.

I started my company, TacIT, in 1999 because I thought (and still think) that Tacit knowledge is the holy grail for companies.

Let’s see with OpenAI how far we get ……

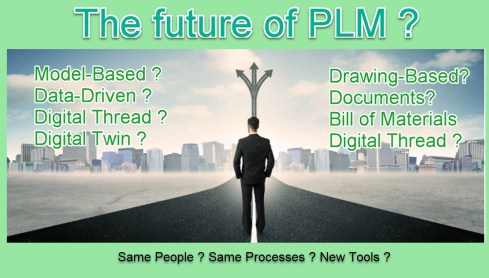

Digitization of the PLM domain

The PLM domain is suffering from its legacy data (documents), legacy processes (linear – mechanical focus) and legacy people (siloed). The statement is a generalization.

More details can be found in my blog series: The road to model-based and connected PLM.

So why should companies move to a model-based and connected approach for their PLM infrastructure?

There are several reasons why companies may want to move to a model-based and connected approach for their Product Lifecycle Management (PLM) infrastructure:

- Increased efficiency: A model-based approach allows for creating a digital twin of the product, which can be used to simulate and test various design scenarios, reducing the need for physical prototypes and testing. This can lead to faster and more efficient product development.

- Improved collaboration: A connected PLM infrastructure allows for better collaboration between different teams and departments, as all product-related information is stored in a central location and can be accessed by authorized personnel. This can improve communication and decision-making within the organization.

- Enhanced visibility: A model-based PLM system provides a single source of truth for all product-related data, giving management a clear and comprehensive view of the product development process. This can help identify bottlenecks and areas for improvement.

- Reduced risk: By keeping all product-related information in a centralized location, the risk of data loss or inconsistencies is reduced. This can help ensure that the product is developed in accordance with regulatory requirements and company standards.

- Increased competitiveness: A model-based and connected PLM infrastructure can help companies bring new products to market faster and with fewer errors, giving them a competitive advantage in their industry.

The text in italics was created by ChatGPT. After three learning cycles, this was the best answer I got. What we are missing in this answer is the innovative and transformative part that modern PLM can bring. Where is the concept of different ways of working, and new business models, both drivers for digitalization in many businesses?

Expert knowledge related to Federated PLM (or Killing the PLM Monolith) is something you will not find through AI. This is, for me, the most interesting part to explore.

We see the need but lack a common understanding of the HOW.

Algorithms will not innovate; for that, you need Tacit intelligence & Curiosity instead of Artificial Intelligence. More exploration of Federated PLM this year.

PLM and Sustainability

Last year as part of the PLM Global Green Alliance, we spoke with six different PLM solution providers to understand their sustainability goals, targets, and planned support for Sustainability. All of them confirmed Sustainability has become an important issue for their customers in 2022. Sustainability is on everyone’s agenda.

Why is PLM important for Sustainability?

PLM is important for Sustainability because a PLM helps organizations manage the entire lifecycle of a product, from its conception and design to its manufacture, distribution, use, and disposal. PLM can be important for Sustainability because it can help organizations make more informed decisions about the environmental impacts of their products and take steps to minimize those impacts throughout the product’s lifecycle.

For example, using PLM, an organization can consider the environmental impacts of the materials that are used in a product, the energy consumption of the manufacturing process, the product’s end-of-life disposal, and other factors that may affect its overall Sustainability. By considering these factors early in the design process, organizations can make more sustainable choices that reduce the environmental impact of their products over their lifecycle.

In addition, PLM can help organizations track and measure the Sustainability of their products over time, allowing them to continuously improve and optimize their products for Sustainability. This can be particularly important for organizations that are looking to meet regulatory requirements or consumer demand for more sustainable products.

Again not a wrong answer, but there is no mention of supporting the concepts of the circular economy, the potential of using digital twins at the various lifecycle stages of the products to perform trade-off studies in the virtual world, or to analyze the product behavior from the physical world in the virtual world for optimization.

This is (again), for me, the most interesting part to explore.

We see the need but lack the sense of urgency on the NOW.

More on Sustainability this year.

PLM Education at all levels

Historically, PLM was framed as an “engineering thing” or a system needed for R&D/Engineering. Modern PLM is much broader and, unfortunately, harder to grasp. I support the CIMdata definition:

CIMdata defines PLM as:

- A strategic business approach that applies a consistent set of business solutions that support the collaborative creation, management, dissemination, and use of product definition information

- Supporting the extended enterprise (customers, design and supply partners, etc.)

- Spanning from concept to end of life of a product or plant

- Integrating people, processes, business systems, and information

And even here, I would say this definition is challenging as the scope is extensive and could touch a lot of activities inside an enterprise. As I wrote last year in my series of posts related to PLM and Education, there is:

- Strategy education (what are the trends/potential of digital transformation in the business domain related to the company’s products)

- Methodology/Academic Education (independent from tools, what are the best practices, where they reside, potential lessons learned and pitfalls to avoid)

- Organisational Change Management (the Education and support needed to enable a business transformation – modern PLM is a transformation enabler)

By asking ChatGPT for its opinion, we learn the following:

Product Lifecycle Management (PLM) education refers to training and development programs that focus on teaching individuals how to effectively manage the lifecycle of a product within an organization. This can include a wide range of topics, such as product design, development, and engineering; supply chain management; quality control and assurance; and product retirement and disposal.

Some specific types of PLM education that a company may need to provide to its employees include:

- Introduction to PLM: This type of training is designed to provide a general overview of PLM concepts and practices and may be suitable for employees who are new to the field or who have limited experience with PLM.

- PLM software training: Many companies use specialized PLM software to manage their product lifecycle processes. Employees who will be using this software will need training on how to use it effectively.

- Industry-specific PLM training: Depending on the industry in which a company operates, there may be specific PLM practices and regulations that employees need to be familiar with. For example, a company in the aerospace industry may need to provide training on PLM practices that are specific to the aerospace industry.

- Leadership and management training: For employees who are responsible for leading and managing PLM projects or teams, additional training in leadership and project management may be necessary.

Overall, the types of PLM education that a company needs will depend on the specific needs and goals of the organization, as well as the roles and responsibilities of its employees.

In general, we see from the answer that strategy and organizational change management beyond the tool are not considered.

This is precisely the area where a PLM Expert can help.

We see the need for Education, but we lack the willingness to invest in it.

Conclusion

It was an exciting exercise to combine my blogging thoughts with the answers from OpenAI. I am impressed by the given answers, knowing that the topics discussed about PLM are not obvious. On the other hand, I am not worried that AI will take over the job of the PLM consultant. As I mentioned before, the difference between Explicit Knowledge and Tacit Knowledge is clear, and business transformations will largely depend on the usage of Tacit knowledge.

I am curious about your experiences and will follow the topics mentioned in this post and write about them with great interest.

With great pleasure, I am writing this post, part of a tradition that started for me in 2014: posts starting with “The weekend after ….”, describing what happened during a PDT conference. Later, the event merged with CIMdata, becoming THE PLM event for discussions beyond marketing.

For many of us, this conference was the first time after COVID-19 in 2020. It was a 3D (In person) conference instead of a 2D (digital) conference. With approximately 160 participants, this conference showed that we wanted to meet and network in person and the enthusiasm and interaction were great.

The conference’s theme, Digital Transformation and PLM – a call for PLM Professionals to redefine and re-position the benefits and value of PLM, was quite open.

There are many areas where digitization affects the way to implement a modern PLM Strategy.

Now some of my highlights from day one. I needed to filter to remain around max 1500 words. As all the other sessions, including the sponsor vignettes, were informative, they increased the value of this conference.

Digital Skills Transformation – Often Forgotten Critical Element of Digital Transformation

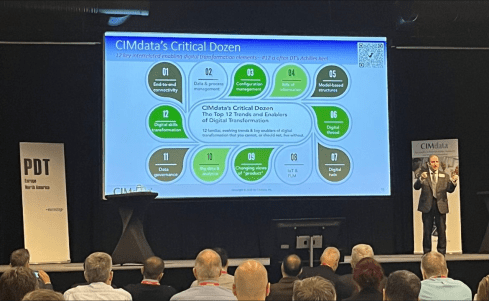

Day 1 started traditionally with the keynote from Peter Bilello, CIMdata’s president and CEO. In recent conferences, Peter has focused on explaining CIMdata’s critical dozen (image below). If you are unfamiliar with them, there is a webinar on November 10 where you can learn more about them.

All twelve are equally important; it is not a sequence of priorities. This time Peter spent more time on Organisational Change Management (OCM), number 12 of the critical dozen – or, as stated, the Digital Transformation’s Achilles heel. Although we always mention that people are important, in our implementation projects, they often seem to be the topic that gets the least focus.

We all agree on the statement: People, Process, Tools & Data. Often the reality is that we start with the tools, try to build the processes and push the people in these processes. Is it a coincidence that even CIMdata puts Digital Skills transformation as number 12? An unconscious bias?

This time, the people’s focus got full attention. Peter explained the need for a digital skills transformation framework to educate, guide and support people during a transformation. The concluding slide below says it all.

Transformation Journey and PLM & PDM Modernization to the Digital Future

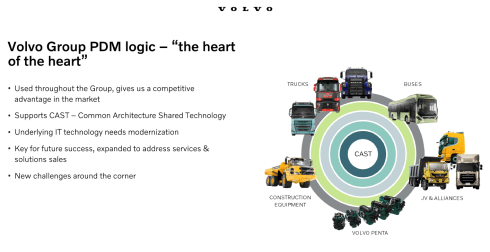

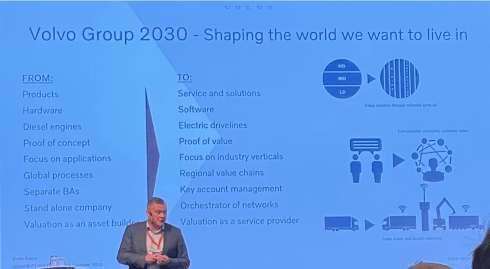

The second keynote of the day was from Josef Schiöler, Head of Core Platform Area PLM/PDM from the Volvo Group. Josef and his team have a huge challenge as they are working on a foundation for the future of the Volvo Group.

The challenge is that it will provide the foundation for new business processes and the various group members, as the image shows below:

As Josef said, it is really the heart of the heart, crucial for the future. Peter Bilello referred to this project as open-heart surgery while the person is still active, as the current business must go on too.

The picture below gives an impression of the size of the operation.

And like any big transformation project, the Volvo Group also has many questions to explore, as there is no existing blueprint to use.

To give you an impression:

- How to manage complex documentation with existing and new technology and solutions co-existing? (My take: the hybrid approach)

- How to realize benefits and user adoption with user experience principles in mind? (My take: Understand the difference between a system of engagement and a system of record)

- How to avoid seeing modernization as purely an IT initiative and secure that end-user value creation is visible while still keeping a focus on finalizing the technology transformation? (My take: think hybrid and focus first on the new systems of engagement that can grow)

- How to efficiently partner with software vendors to ensure vendor solutions fit well in the overall PLM/PDM enterprise landscape without heavy customization? (My take: push for standards and collaboration with other similar companies – they can influence a vendor)

Note: My takes are just a starting point of the conversation. There is a discussion in the PLM domain, which I described in my blog post: A new PLM paradigm.

The day before the conference, we had a ½ day workshop initiated by SAAB and Eurostep where we discussed the various angles of the so-called Federated PLM.

I will return to that topic soon after some consolidation with the key members of that workshop.

Steering future Engineering Processes with System Lifecycle Management

Patrick Schäfer’s presentation was different from what the title would suggest. Patrick is the IT Architect Engineering IT from ThyssenKrupp Presta AG. The company provides steering systems for the automotive industry, which is transforming from mechanical to autonomous driving, e-mobility, car-to-car connectivity, stricter safety, and environmental requirements.

The steering system becomes a system depending on hardware and software. And as the company currently uses Agile PLM, the old Eigner PLM software, you can feel Martin Eigner’s spirit in the project.

I briefly discussed Martin’s latest book on System Lifecycle Management in my blog post, The road to model-based and connected PLM (part 5).

Martin has always been fighting for a new term for modern PLM, and you can see how conservative we are – for sometimes good reasons.

Still, ThyssenKrupp Presta has the vision to implement a new environment to support systems instead of hardware products. And in addition, they had to work fast to upgrade their current almost obsolete PLM environment to a new supported environment.

The wise path they chose was first focusing on a traditional upgrade, meaning making sure their PLM legacy data became part of a modern (Teamcenter) PLM backbone. Meanwhile, they started exploring the connection between requirements management for products and software, as shown below.

From my perspective, I would characterize this implementation as the coordinated approach creating a future option for the connected approach when the organization and future processes are more mature and known.

A good example of a pragmatic approach.

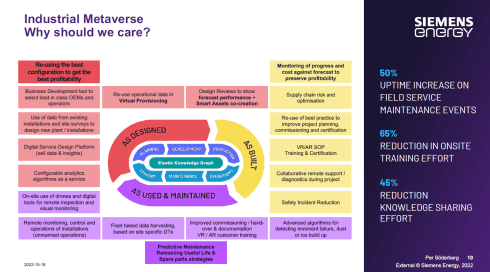

Digital Transformation in the Domain of Products and Plants at Siemens Energy

Per Soderberg, Head of Digital PLM at Siemens Energy, talked about their digital transformation project that started 6 – 7 years ago. Knowing the world of gas- and steam turbines, it is a domain where a lot of design and manufacturing information is managed in drawings.

The ultimate vision from Siemens Energy is to create an Industrial Metaverse for its solutions as the benefits are significant.

Is this target too ambitious, like GE’s 2014 Industrial Transformation with Predix? Time will tell. I am sure you will soon hear more from Siemens Energy; therefore, I will keep it short. It is an interesting and ambitious program to follow.

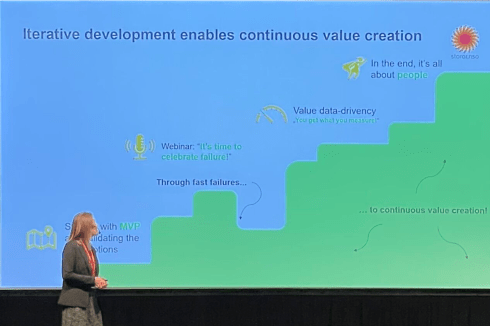

Accelerating Digitalization at Stora Enso

Stora Enso is a Finnish company, a leading global provider of renewable solutions in packaging, biomaterials, wooden construction and paper. Their director of Innovation Services, Kaisa Suutari, shared Stora Enso’s digital transformation program that started six years ago with a 10 million/year budget (some people started dreaming too). Great to have a budget, but then where to start?

In a very systematic manner using an ideas funnel and always starting from the business need, they spend the budget in two paths, shown in the image below.

Their interesting approach was in the upper path, which Kaisa focused on. Instead of starting with an analysis of how the problem could be addressed, they start by doing and then analyze the outcome and improve.

I am a great fan of this approach as it will significantly reduce the time to maturity. After all, how much time is often wasted in conducting the perfect analysis?

Their Digi Fund process is a fast process to quickly go from idea to concept, to POC and to pilot, the left side of the funnel. After a successful pilot, an implementation process starts small and scales up.

There were so many positive takeaways from this session. Start with an MVP (Minimum Viable Product) to create value from the start. Next, celebrate failure when it happens, as this is the moment you learn. Finally, keep creating measurable value through people – the picture below says it all.

It was the second time I was impressed by Stora Enso’s innovative approach. During PI PLMx London 2020, Samuli Savo, Chief Digital Officer at Stora Enso, gave us insights into their innovation process. At that time, the focus was a little bit more on open innovation with startups. See my post: The weekend after PI PLMx London 2020. It is an interesting approach for other businesses to make their digital transformation business-driven and fun for the people.

A day-one summary

There was Kyle Hall, who talked about MoSSEC and the importance of this standard in a connected enterprise. MoSSEC (Modelling and Simulation information in a collaborative Systems Engineering Context) is the published ISO standard (ISO 10303-243) for improving the decision-making process for complex products. Standards are a regular topic for this conference; more about MoSSEC here.

There was Robert Rencher, Sr. Systems Engineer, Associate Technical Fellow at Boeing, talking about the progress that the A&D action group is making related to Digital Thread and Digital Twins. Sometimes they raise more questions than answers as they try to make sense of the marketing definition and what it means for their businesses. You can find their latest report here.

There was Samrat Chatterjee, Business Process Manager PLM at the ABB Process Automation division. Their businesses are already quite data-driven; however, by embedding PLM into the organization’s fabric, they aim to improve effectiveness, manage a broad portfolio, and be more modular and efficient.

The day was closed with a CEO Spotlight with Peter Bilello. This time the CEOs were not coming from the big PLM vendors but from complementary companies with their unique value in the PLM domain. Henrik Reif Andersen, co-founder of Configit; Dr. Mattias Johansson, CEO of Eurostep; Helena Gutierrez, co-founder of Share PLM; Javier Garcia, CEO of The Reuse Company; and Karl Wachtel, CEO of XPLM, discussed their various perspectives on the PLM domain.

Conclusion

Already so much to say; sorry, I reached the 1500 words target; you should have been there. Combined with the networking dinner after day one, it was a great start to the conference. Are you curious about day 2? Stay tuned, and your curiosity will be rewarded.

Thanks to Ewa Hutmacher, Sumanth Madala and Ashish Kulkarni, who shared their pictures of the event on LinkedIn. Clicking on their names will lead you to the relevant posts.

One of my favorite conferences is the PLM Road Map & PDT conference. Probably because in the pre-COVID days, it was the best PLM conference to network with peers focusing on PLM practices, standards, and sustainability topics. Now the conference is virtual, and hopefully, after the pandemic, we will meet again in the conference space to elaborate on our experiences further.

Last year’s fall conference was special because we had three days filled with a generic PLM update and several A&D (Aerospace & Defense) working group updates, reporting their progress and findings. There were sessions related to the Multiview BOM research, Global Collaboration, and several aspects of Model-Based practices: Model-Based Definition, Model-Based Engineering & Model-Based Systems Engineering.

All topics that I will elaborate on soon. You can refresh your memory through these two links:

- The weekend after PLM Roadmap / PDT 2020 – part 1

- The next weekend after PLM Roadmap / PDT 2020 – part 2

This year, it was a two-day conference with approximately 200 attendees discussing how emerging technologies can disrupt the current PLM landscape and reshape the PLM Value Equation. During the first day of the conference, we focused on technology.

On the second day, we also looked at the impact new technology has on people and organizations.

Today’s Emerging Trends & Disrupters

Peter Bilello, CIMdata’s President & CEO, kicked off the conference by providing CIMdata observations of the market. There is an increasing number of technology capabilities, like cloud, additive manufacturing, platforms, digital thread, and digital twin, all with the potential of realizing a connected vision. Meanwhile, companies evolve at their own pace, and the gap between the leaders and followers becomes bigger and bigger.

Where is your company? Can you afford to be a follower? Is your PLM ready for the future? Probably not, Peter states.

Next, Peter walked us through some technology trends and their applicability for a future PLM, like topological data analytics (TDA), the Graph Database, Low-Code/No-Code platforms, Additive Manufacturing, DevOps, and Agile ways of working during product development. All capabilities should be related to new ways of working and updated individual skills.

I fully agreed with Peter’s final slide – we have to actively rethink and reshape PLM – not by calling it something different but by learning, experimenting, and discussing in the field.

Digital Transformation Supporting Army Modernization

An interesting viewpoint related to modern PLM came from Dr. Raj Iyer, Chief Information Officer for IT Reform from the US Army. Raj walked us through some of the US Army’s challenges, and he gave us some fantastic statements to think about. Although an Army cannot be compared with a commercial business, its target remains to always be ahead of, and aware of, the competition.

Where we would say “data is the new oil”, Raj Iyer said: “Data is the ammunition of the future fight – as fights will more and more take place in cyberspace.”

The US Army is using a lot of modern technology – as the image below shows. The big difference here with regular businesses is that it is not about ROI but about winning fights.

Also, for the US Army, the cloud becomes the platform of the future. Due to the wide range of assets the US Army has to manage, the importance of product data standards is evident. Raj mentioned their contribution and adherence to the ISO 10303 STEP standard, which is crucial for interoperability. It was an exciting insight into the US Army’s current and future challenges. Their primary mission remains to stay ahead of the competition.

Joining up Engineering Data without losing the M in PLM

Nigel Shaw’s (Eurostep) presentation was somewhat philosophical but precisely to the point about the current dilemma in the PLM domain. Through an analogy with the internet, he explained that we live in a world of HTTP(s) linking, in which we create new ways of connecting information. The link becomes an essential artifact in our information model.

While it is apparent that links are crucial for managing engineering data, Nigel pointed out some of the significant challenges of this approach, as you can see from his (compiled) image below.

I will not discuss this topic further here as I am planning to come back to this topic when explaining the challenges of the future of PLM.

As Nigel said, they have a debate with one of their customers about whether to replace or enhance the existing PLM tools. The challenge of moving from coordinated information towards connected data is a topic that we as a community should study.

Integration is about more than Model Format.

This was the presentation I had been waiting for. Mark Williams from Boeing had built the story together with Adrian Burton from Airbus. Nigel Shaw, in the previous session, already pointed to the challenge of managing linked information. Mark elaborated further on the model-based approach for system definition.

All content was related to the understanding that we need a model-based information infrastructure for the future because storing information in documents (the coordinated approach) is no longer viable for complex systems. Mark’s slide below says it all.

Mark stressed the importance of managing model information in context, and it has become a challenge.

Mark mentioned that 20 years ago, the IDC (International Data Corporation) measured Boeing’s performance and estimated that each employee spent 2 ½ hours per day searching for information. In 2018, the IDC estimated that this number had grown to 30 % of the employee’s time and could go up to 50 % when adding the effort of reusing and duplicating data.

The consequence of this would be that a full-service enterprise, having engineering, manufacturing and services connected, probably loses 70 % of its information because people cannot find it. That is an impressive number, asking for “clever” ways to find the correct information in context.

It is not about just a full indexed search of the data, as some technology geeks might think. It is also about describing and standardizing metadata that describes the models. In that context, Mark walked through a list of existing standards, all with their pros and cons, ending up with the recommendation to use the ISO 10303-243 – MoSSEC standard.

MoSSEC stands for Modelling and Simulation information in a collaborative Systems Engineering Context. Its purpose is to manage and connect the relationships between models.
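To make the difference between indexed search and information "in context" a bit more tangible, here is a minimal Python sketch. It is my own illustration and not the MoSSEC schema: the names `ModelRecord`, `ModelCatalog`, `find_in_context` and `related_to` are hypothetical. The only point is that each model carries descriptive metadata and that the relationships between models are stored explicitly.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelRecord:
    """Descriptive metadata for one model (hypothetical fields, not MoSSEC)."""
    model_id: str
    discipline: str  # e.g. "aerodynamics" or "structures"
    study: str       # the study (context) in which the model is used


@dataclass
class ModelCatalog:
    """Holds model metadata plus explicit relationships between models."""
    records: dict = field(default_factory=dict)
    links: list = field(default_factory=list)  # (source_id, relation, target_id)

    def add(self, record):
        self.records[record.model_id] = record

    def link(self, source_id, relation, target_id):
        # Refuse links to unknown models so relationships never dangle.
        for model_id in (source_id, target_id):
            if model_id not in self.records:
                raise KeyError(model_id)
        self.links.append((source_id, relation, target_id))

    def find_in_context(self, study):
        """Return the ids of all models that belong to one study."""
        return sorted(r.model_id for r in self.records.values() if r.study == study)

    def related_to(self, model_id):
        """Return (relation, other_id) pairs for links starting at model_id."""
        return [(rel, tgt) for src, rel, tgt in self.links if src == model_id]


catalog = ModelCatalog()
catalog.add(ModelRecord("wing-cfd-01", "aerodynamics", "wing-study-A"))
catalog.add(ModelRecord("wing-fem-01", "structures", "wing-study-A"))
catalog.add(ModelRecord("gear-fem-07", "structures", "gear-study-B"))
catalog.link("wing-cfd-01", "provides loads for", "wing-fem-01")
```

With this in place, `catalog.find_in_context("wing-study-A")` returns only the two wing models, and `catalog.related_to("wing-cfd-01")` shows which model consumes its results. A plain text index would not give you that second answer.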

MoSSEC and its implication for future digital enterprises are interesting, considering the importance of a model-based future. I am curious how PLM Vendors and tools will support and enable the standard for future interoperability and collaboration.

Additive Manufacturing – not as simple as paper printing – yet

Andreas Graichen from Siemens Energy closed the day, coming back to the topic of new technologies: Additive Manufacturing or, in common language, 3D printing. Andreas shared their Additive Manufacturing experiences, which match the famous Gartner Hype Cycle. His image shows that real work needs to be done to understand the technology and its use cases once the first excitement of the hype is over.

Material knowledge was one of the important topics to study when applying additive manufacturing. It is probably a new area for most companies to understand the material behaviors and properties in an Additive Manufacturing process.

The ultimate goal for Siemens Energy is to reach an “autonomous” workshop anywhere in the world where gas turbines could order their spare parts by themselves through digital warehouses. It is a grand vision, and Andreas confirmed that the scalability of Additive Manufacturing is still a challenge.

For rapid prototyping or small series of spare parts, Additive Manufacturing might be the right solution. The success of your Additive Manufacturing process depends a lot on whether your company’s management has realistic expectations and on the budget available to explore this direction.

Conclusion

Day 1 was enjoyable and educational, starting and ending with a focus on disruptive technologies. The middle part, related to the data management concepts needed for a digital enterprise, offered the most exciting topics to follow up on, in my opinion.

Next week, I will follow up with a review of day 2 and share my conclusions. The PLM Road Map & PDT Spring 2021 conference confirmed that there is work to do to understand the future (of PLM).

This time in the series of complementary practices to PLM, I am happy to discuss product modularity. In my previous post related to Virtual Events, I mentioned I had finished reading the book “The Modular Way”, written by Björn Eriksson & Daniel Strandhammar, founders of the consulting company Brick Strategy.

I first became aware of Brick Strategy precisely a year ago during the Technia Innovation Forum, the first virtual event I attended since COVID-19. Daniel’s presentation at that event was one of the four highlights that I shared about the conference. See My four picks from PLMIF.

As I wrote in my last post:

Modularity is a popular topic in many board meetings. How often have you heard: “We want to move from Engineering To Order (ETO) to more Configure To Order (CTO)”? Or another related incentive: “We need to be cleverer with our product offering and reduce the number of different parts”.

Next, the company buys a product that supports modularity, and management believes the work has been done. Of course not. Modularity requires a thoughtful strategy.

I am now happy to have a dialogue with Daniel to learn and understand Brick Strategy’s view on PLM and Modularization. Are these topics connected? Can one live without the other? If you still have questions, stay tuned till the end for a pleasant surprise.

The Modular Way

Daniel, first of all, can you give us some background and intentions of the book “The Modular Way”?

Let me start by putting the book in perspective. In today’s globalized business, competition among industrial companies has become increasingly challenging with rapidly evolving technology, quickly changing customer behavior, and accelerated product lifecycles. Many companies struggle with low profitability.

To survive, companies need to master product customizations, launch great products quickly, and be cost-efficient – all at the same time. Modularization is a good solution for industrial companies with ambitions to improve their competitiveness significantly.

The aim of modularization is to create a module system. It is a collection of pre-defined modules with standardized interfaces. From this, you can build products to cater to individual customer needs while keeping costs low. The main difference from traditional product development is that you develop a set of building blocks or modules rather than specific products.
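As a rough illustration of this idea, and not a method taken from the book, the following Python sketch models a tiny module system. The names `Module` and `configure`, the slot names and the interface labels are all hypothetical. Each product is assembled by picking one module per slot, and a module only fits a slot if it uses that slot's standardized interface.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Module:
    name: str
    slot: str       # position in the product architecture, e.g. "motor"
    interface: str  # standardized interface the module connects through


def configure(slots, modules):
    """Return every product variant: one interface-compatible module per slot."""
    options = []
    for slot, interface in slots.items():
        fitting = [m for m in modules if m.slot == slot and m.interface == interface]
        if not fitting:
            raise ValueError(f"no module fits slot {slot!r}")
        options.append(fitting)
    # The Cartesian product combines the per-slot choices into full products.
    return [tuple(m.name for m in combo) for combo in product(*options)]


# Hypothetical architecture: two slots, each with one standardized interface.
slots = {"motor": "M-flange", "housing": "H-rail"}
modules = [
    Module("motor-small", "motor", "M-flange"),
    Module("motor-large", "motor", "M-flange"),
    Module("motor-legacy", "motor", "old-flange"),  # non-standard, never selected
    Module("housing-steel", "housing", "H-rail"),
    Module("housing-plastic", "housing", "H-rail"),
]

variants = configure(slots, modules)
print(len(variants))  # 4 variants from 4 compatible modules
```

Adding a third compatible motor would raise the number of variants from four to six without touching the housings, which is the leverage you get from developing building blocks instead of individual products.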

The Modular Way explains the concept of modularization and the ”how-to.” It is a comprehensive and practical guidebook, providing you with inspiration, a framework, and essential details to succeed with your journey. The book is based on our experience and insights from some of the world’s leading companies.

Björn and I have long thought about writing a book to share our combined modularization experience and learnings. Until recently, we have been fully busy supporting our client companies, but the halted activities during the peak of the COVID-19 pandemic gave us the perfect opportunity.

PLM and Modularity

Did you have PLM in mind when writing the book?

Yes, definitely. We believe that modularization and a modular way of working make product lifecycle management more efficient. Here we are talking foremost about processes, roles, product structure, decision-making, and so on. Companies often need only minor adjustments to their IT systems to support and sustain the new way of working.

Companies benefit the most from modularization when the contents, or foremost the products, are well structured for configuration in streamlined processes.

Many times, this means “thinking ahead” and preparing your products for more configuration and less engineering in the sales process, i.e., going from ETO to CTO.

Modularity for Everybody?

It seems like the modularity concept is prevalent in the Scandinavian countries, with famous examples of Scania, LEGO, IKEA, and Electrolux mentioned in your book. These examples come from different industries. Does it mean that all companies could pursue modularity, or are there some constraints?

We believe that companies designing and manufacturing products fulfilling different customer needs within a defined scope could benefit from modularization. Off-the-shelf content, commonality and reuse increase efficiency. However, the focus, approach and benefits are different among different types of companies.

We have, for example, seen low-volume companies expecting the same benefits as high-volume consumer companies. This is unfortunately not the case.

Companies can improve their ability and reduce the efforts to configure products to individual needs, i.e., customization. And when it comes to cost and efficiency improvements, high-volume companies can reduce product and operational costs.

Low-volume companies can shorten lead time and increase efficiency in R&D and product maintenance. Project solution companies can shorten the delivery time through reduced engineering efforts.

As an example, Electrolux managed to reduce part costs by 20 percent. Half of the reduction came from volume effects and the rest from design for manufacturing and assembly.

All in all, Electrolux has estimated its operating cost savings at approximately SEK 4 bn per year with full effect, or around 3.5 percentage points of total costs, compared to doing nothing from 2010–2017. Note: SEK 4 bn is approximately EUR 400 million.
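For readers who like to see the arithmetic behind these Electrolux figures, here is a small Python sketch. It only rearranges the numbers quoted above. The total cost base and the exchange rate of 10 SEK per euro are not stated in the interview; they are implied values that follow if the quoted figures are taken at face value.

```python
# Part cost reduction: 20 percent in total, half of it from volume effects.
part_cost_reduction = 0.20
volume_effect = part_cost_reduction * 0.5             # 10 percentage points
design_effect = part_cost_reduction - volume_effect   # remaining 10 points

# Operating cost savings: SEK 4 bn equals about 3.5 points of total costs.
savings_sek_bn = 4.0
savings_share_of_total = 0.035
# Implied, not quoted: the total cost base these two figures assume.
implied_total_costs_sek_bn = savings_sek_bn / savings_share_of_total

# Assumption: 10 SEK per EUR, the rate implied by "SEK 4 bn is about EUR 400 million".
sek_per_eur = 10.0
savings_eur_million = savings_sek_bn * 1000 / sek_per_eur

print(f"volume effect: {volume_effect:.0%}, design effect: {design_effect:.0%}")
print(f"implied total costs: about SEK {implied_total_costs_sek_bn:.0f} bn")
print(f"savings: about EUR {savings_eur_million:.0f} million")
```

The sketch shows that the savings relate to a cost base in the order of SEK 114 bn per year, which puts the 3.5 percentage points into perspective.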

Where to start?

Thanks to your answer, I understand my company will benefit from modularity. To whom should I talk in my company to get started? And if you would recommend an executive sponsor in my company, whom would you recommend to lead this initiative?

Defining a modular system and implementing a modular way of working is a business-strategic undertaking. It is complex and has enterprise-wide implications that will affect most parts of the organization. Therefore, your management team needs to be aligned and engaged, and it needs to prioritize the initiative.

The implementation requires a cross-functional team to ensure that you do it from a market and value chain perspective. Modularization is not something that your engineering or IT organization can solve on its own.

We recommend that the CTO or CEO owns the initiative as it requires horizontal coordination and agreement.

Modularity and Digital Transformation

The experiences you are sharing started before digital transformation became a buzzword and practice in many companies. In particular, in the PLM domain, companies are still implementing past practices. Is modularization applicable for the current (coordinated) and for the (connected) future? And if yes, is there a difference?

Modularization means that your products have a uniform design based on common concepts and standardized interfaces. To the market, the end products are unique, and your processes are consistent. Thus, modularization plays a role independently of where you are on the digital transformation journey.
