This blog post is written especially for our PLM Green Global Alliance LinkedIn members: a message from a “boomer” to the next generation of PLM enthusiasts.

If you belong to that next generation, please read until the end and share your thoughts.

With last week’s announcement that the US government will no longer treat greenhouse gas emissions as a threat to the planet or climate, we see a push to remove regulations that limit companies from continuing or expanding business without considering the broader consequences for other countries and future generations.

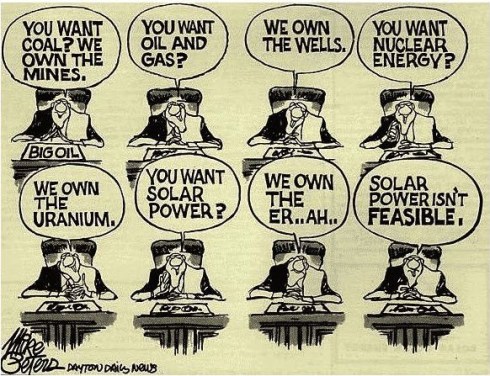

It feels like a short-term, greedy decision, largely influenced by those who benefit from fossil-carbon economies. Decisions like this make the energy transition harder, because the path of least resistance is always the easiest to follow.

Transitions are never simple. But when science is ignored, data is removed, and opinions replace facts, we are no longer supporting a transition — we are actively working against it.

My Story

When I started working in the PLM domain in 1999, climate change already existed in the background of society. The 1972 Limits to Growth report by the Club of Rome had created waves long before, encouraging some people to rethink business and lifestyle choices.

For me, however, it stayed outside my daily focus. I was at the beginning of my career, excited about the new challenges.

It is also important to note that connecting to the internet with a 28k modem was the standard then; it was a world without social media constantly reminding us of global issues.

I enjoyed my role as the “Flying Dutchman,” travelling around the world to support PLM implementations and discussions. Flying was simply part of the job. Real communication meant being in the same room; early phone and video calls were expensive, awkward, and often ineffective. PLM was — and still is — a human business.

Back then, the effects of carbon emissions and global warming felt distant, almost abstract. Only around 2014 did the conversation become more mainstream for me, helped by social media, before algorithms and bots began driving polarization.

In 2015, while writing about PLM and global warming, I realized something that still resonates today: even when we understand change is needed, we often stick to familiar habits, because investments in the future rarely deliver immediate ROI for ourselves or our shareholders.

The PLM Green Global Alliance

When Rich McFall approached me in 2019 with the idea of creating an alliance where people and companies could share ideas and experiences around sustainability in the PLM domain, I was immediately interested — for two reasons.

- First, there was a certain sense of responsibility related to my past activities as the Flying Dutchman. Not guilt — life is about learning and gaining insight — but awareness that I needed to change, even if the past could not be changed.

- Second, and more importantly, the PLM Green Global Alliance offered a way to contribute. It gave me a reason to act — for personal peace of mind and for future generations. Not only for my children or grandchildren, but for all those who will share this planet with them.

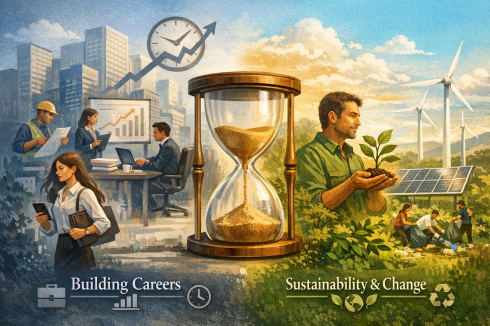

In the first years of the PGGA, we saw strong engagement from younger professionals. Over time, however, we noticed that career priorities often came first — which is understandable.

Like me at the start of my career, many focus first on building their future. Career and sustainability can coexist, but investing extra time in long-term change is not easy when daily responsibilities already demand so much.

Your Chance to Work on the Future

The real challenge lies with those willing to go the extra mile — staying focused on today’s business while also investing energy in the long-term future.

At the same time, I understand that not everyone is in a position to speak out or dedicate time to sustainability initiatives. Circumstances differ. For many, current responsibilities leave little space for additional commitments.

Still, for those willing to join us, we have two requests to better understand your expectations.

Two weeks ago, I connected with our 40 newest members of the PLM Green Global Alliance. We are now close to 1,600 members — up from around 1,500 in September 2025, as mentioned in Working on the Long Term.

That post was a gentle call to action. Seeing our PGGA membership continue to grow is encouraging — and naturally raises a question:

1. What motivates people to join the PGGA LinkedIn group?

So far, only a small number of our newest members have completed the survey that was sent specifically to them to explore changing priorities. Due to the low response, we extended the invitation to all members. We are curious about your expectations, and quietly hopeful about your involvement.

If you haven’t filled in the survey yet, please click here and share your feedback. The survey is anonymous unless you choose to leave your details for follow-up. We will share the results in approximately 2 weeks from now.

2. Design for Sustainability – your contribution?

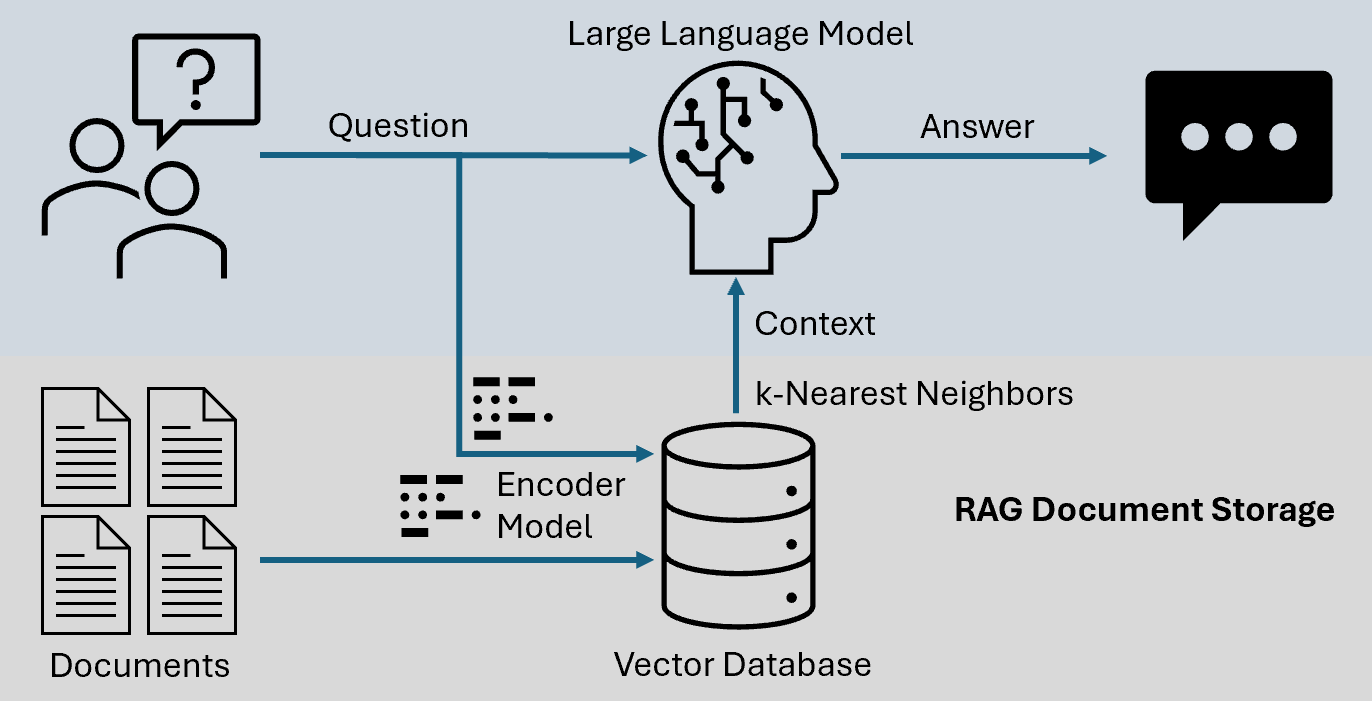

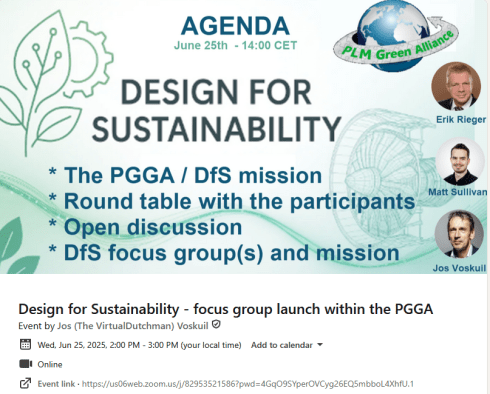

Last year, Erik Rieger and Matthew Sullivan launched a new workgroup within the PLM Green Global Alliance focused on Design for Sustainability. While the initial energy was strong, changes in personal priorities meant the team could not continue at the pace they hoped. Since many new members have joined since last May, we decided to relaunch the initiative.

If you are interested in contributing to the revival of Design for Sustainability, please take five minutes to complete the short survey. Your input will help shape the direction of the DfS working group and frame future discussions.

Note: If you are worried about clicking on the survey links, you can always contact us directly (in private) to share your ambition.

Conclusion

The outside world often pushes us to focus only on daily business. In some places, there is even active pressure to avoid long-term sustainability investments. Remember that pressure often comes from those invested in keeping the current system unchanged.

If you care about the future — your generation and those that follow — stay engaged. Small actions by millions of people can create meaningful change.

We look forward to your input and participation.

— says the boomer who still cares 😉

This week is busy for me as I am finalizing several essential activities related to my favorite hobby, product lifecycle management or is it PLM😉?

And most of these activities will result in lengthy blog posts, starting with:

“The week(end) after <<fill in the event>>”.

Here are the upcoming actions:

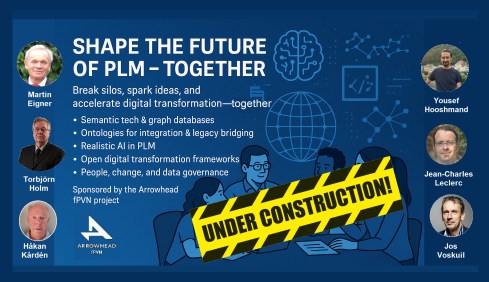

Click on each image if you want to see the details:

In this Future of PLM Podcast series, moderated by Michael Finocchiaro, we will continue the debate on how to position PLM (as a system or a strategy) and move away from an engineering framing. Personally, I never saw PLM as a system and started talking more and more about product lifecycle management (the strategy) versus PLM/PDM (the systems).

Note: the intention is to be interactive with the audience, so feel free to post questions/remarks in the comments, either upfront or during the event.

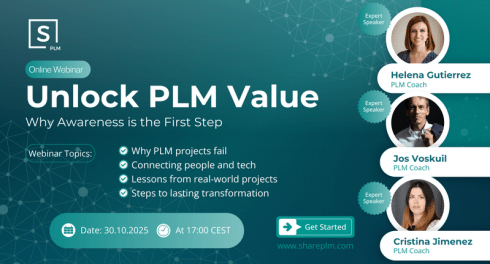

You might have seen in the past two weeks some posts and discussions I had with the Share PLM team about a unique offering we are preparing: the PLM Awareness program. From our field experience, PLM is too often treated as a technical issue, handled by a (too) small team.

We believe every PLM program should start by fostering awareness of what people can expect nowadays, given the technology, experiences, and possibilities available. If you want to work with motivated people, you have to involve them and give them all the proper understanding to start with.

Join us for the online event to understand the value and ask your questions. We are looking forward to your participation.

This is another event related to the future of PLM; however, this time it is an in-person workshop, where, inspired by four PLM thought leaders, we will discuss and work on a common understanding of what is required for a modern PLM framework. The workshop, sponsored by the Arrowhead fPVN project, will be held in Paris on November 4th, preceding the PLM Roadmap/PDT Europe conference.

We will not discuss the term PLM; we will discuss business drivers, supporting technologies and more. My role as a moderator of this event is to assist with the workshop, and I will share its findings with a broader audience that wasn’t able to attend.

Be ready to learn more in the near future!

If you have followed my blog posts for the past 10 years, you know this conference is always a place to get inspired, whether by leading companies across industries or by innovative and engaging new developments. This conference has always inspired me and helped me gain a better understanding of digital transformation in the PLM domain and how larger enterprises are addressing their challenges.

This time, I will conclude the conference with a lecture focusing on the challenging side of digital transformation and AI: we humans cannot transform ourselves, so we need help.

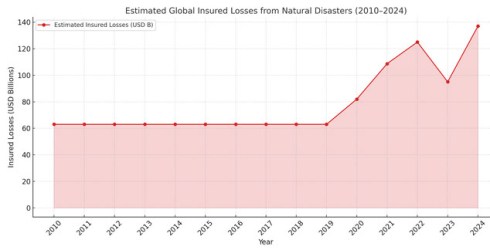

At the end of this year, we will “celebrate” our fifth anniversary of the PLM Green Global Alliance. When we started the PGGA in 2020, there was an initial focus on the impact of carbon emissions on the climate, and in the years that followed, climate disasters around the world caused serious damage to countries and people.

How could we, as a PLM community, support each other in developing and sharing best practices for innovative, lower-carbon products and processes?

In parallel, driven by regulations, there was also a need to improve current PLM practices to efficiently support ESG reporting, lifecycle analysis, and, soon, the Digital Product Passport. These regulations push for a modern data-driven infrastructure, and we discussed this with the major PLM vendors and related software or solution partners. See our YouTube channel @PLM_Green_Global_Alliance.

In this online Zoom event, we invite you to join us to discuss the topics mentioned in the announcement. Join us in this event and help us celebrate!

I am closing that week at the PTC/User Benelux event in Eindhoven, the Netherlands, with a keynote speech about digital transformation in the PLM domain. Eindhoven is the city where I grew up, completed my amateur soccer career, ran my first and only marathon, and started my career in PLM with SmarTeam. The city and location feel like home. I am looking forward to discussing and meeting with the PTC user community to learn how they experience product lifecycle management, or is it PLM😉?

With all these upcoming events, I did not have the time to focus on a new blog post; luckily, however, in the 10x PLM discussion started by Oleg Shilovitsky, there was an interesting comment from Rob Ferrone that triggered my mind. Quote:

The big breakthrough will come from 1. advances in human-machine interface and 2. less % of work executed by human in the loop. Copy/paste, typing, voice recognition are all significant limits right now. It’s like trying to empty a bucket of water through a drinking straw. When tech becomes more intelligent and proactive then we will see at least 10x.

This remark reminded me of one of my first blog posts in 2008, when I was trying to predict what PLM would look like in 2050. I thought it was a nice moment to read it (again). Enjoy!

PLM in 2050

As the year ends, I decided to take my crystal ball to see what would happen with PLM in the future. It felt like a virtual experience, and this is what I saw:

- Data is no longer replicated – every piece of information will have a Universal Unique ID, also known as a UUID. In 2020, this initiative became mature, thanks to the merger of some big PLM and ERP vendors, who brought this initiative to reality. This initiative dramatically reduced exchange costs in supply chains and led to bankruptcy for many companies that provided translation and exchange software.

- Companies store their data in ‘the cloud’ based on the concept outlined above. Only some old-fashioned companies still handle their own data storage and exchange, as they fear someone will access their data. Analysts compare this behavior with the situation in the year 1950, when people kept their money under a mattress, not trusting banks (and they were not always wrong)

- After 3D, a complete virtual world based on holography became the next step in product development and understanding. Thanks to the revolutionary quantum-3D technology, this concept could even be applied to life sciences. Before ordering a product, customers could first experience and describe their needs in a virtual environment.

- Finally, the cumbersome keyboard and mouse were replaced by voice and eye recognition. Initially, voice recognition and eye tracking were cumbersome. Information was captured by talking to the system and by recording eye movements during hologram analysis. This made the life of engineers so much easier, as while researching and talking, their knowledge was stored and tagged for reuse. No need for designers to send old-fashioned emails or type their design decisions for future reuse.

- Due to the hologram technology, the world became greener. People did not need to travel around the world, and the standard became virtual meetings with global teams (airlines discontinued business class). Even holidays can be experienced in the virtual world thanks to a Dutch initiative inspired by coffee. The whole IT infrastructure was powered by efficient solar energy, drastically reducing the amount of carbon dioxide.

- Then, with a shock, I noticed PLM no longer existed. Companies were focusing on their core business processes. Systems/terms like PLM, ERP, and CRM no longer existed. Some older people still remembered the battle between those systems over data ownership and the political discomfort this caused within companies.

- As people were working so efficiently, there was no need to work all week. There were community time slots when everyone was active, but 50 per cent of the time, people had time to recreate (to re-create or recreate was the question). Some older French and German designers remembered the days when they had only 10 weeks holiday per year, unimaginable nowadays.

As we still have more than 40 years to reach this future, I wish you all a successful and excellent 2009.

I am looking forward to being part of the green future next year.

In recent months, I’ve noticed a decline in momentum around sustainability discussions, both in my professional network and personal life. With current global crises—like the Middle East conflict and the erosion of democratic institutions—dominating our attention, long-term topics like sustainability seem to have taken a back seat.

But don’t stop reading yet—there is good news, though we’ll start with the bad.

The Convenient Truth

Human behavior is primarily emotional, a lesson that is valuable in the PLM domain and was discussed during the Share PLM Summit. As Share PLM notes in their change management approach, we rely on our “gator brain”, our limbic system (call it System 1 and System 2, or Thinking, Fast and Slow). Faced with uncomfortable truths, we often seek out comforting alternatives.

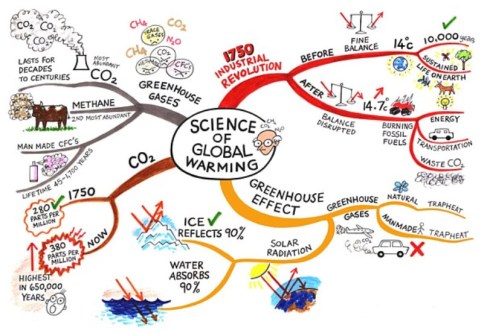

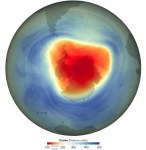

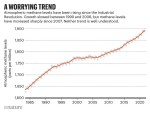

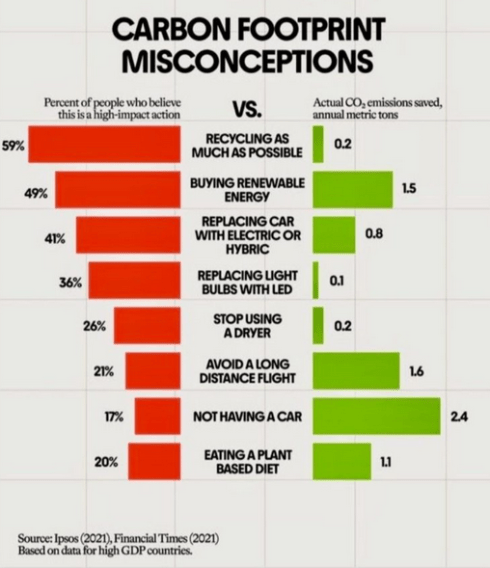

The film Don’t Look Up humorously captures this tendency. It mirrors real-life responses to climate change: “CO₂ levels were high before, so it’s nothing new.” Yet the data tells a different story. For 800,000 years, CO₂ ranged between 170–300 ppm. Today’s level is ~420 ppm—an unprecedented spike in just 150 years as illustrated below.

Frustratingly, some of this scientific data is no longer prominently published. The narrative has become inconvenient, particularly for the fossil fuel industry.

Persistent Myths

Then there is the pseudo-scientific claim that fossil fuels are infinite because the Earth’s core continually generates them. The Abiogenic Petroleum Origin theory is a fringe theory, sometimes revived from old Soviet science, and lacks credible evidence. See image below

Oil remains a finite, biologically sourced resource. Yet such myths persist, often supported by overly complex jargon designed to impress rather than inform.

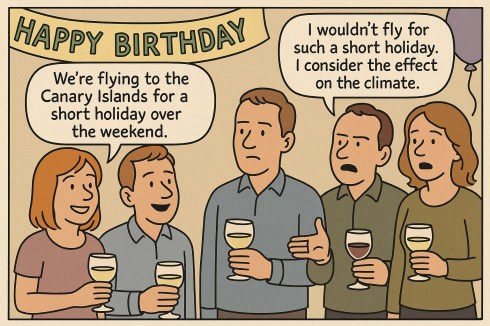

The Dissonance of Daily Life

At a recent birthday party, a young couple casually mentioned flying to the Canary Islands for a weekend. When someone objected on climate grounds, they simply replied, “But the climate is so nice there!”

“Great climate on the Canary Islands”

This reflects a common divide among young people—some are deeply concerned about the climate, while many prioritize enjoying life now. And that’s understandable. The sustainability transition is hard because it challenges our comfort, habits, and current economic models.

The Cost of Transition

Companies now face regulatory pressure such as CSRD (Corporate Sustainability Reporting Directive), DPP (Digital Product Passport), ESG, and more, especially when selling in or to the European market. These shifts aren’t usually driven by passion but by obligation. Transitioning to sustainable business models comes at a cost—learning curves and overheads that don’t align with most corporations’ short-term, profit-driven strategies.

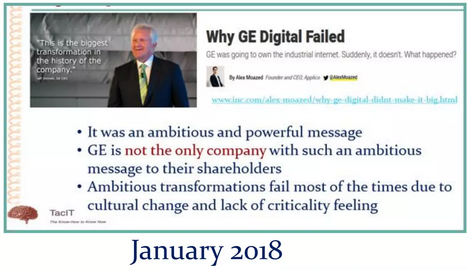

However, we have also seen how long-term visions can be crushed by shareholder demands:

- Xerox (1970s–1980s) pioneered GUI, the mouse, and Ethernet, but failed to commercialize them. Apple and Microsoft reaped the benefits instead.

- General Electric under Jeff Immelt tried to pivot to renewables and tech-driven industries. Shareholders, frustrated by slow returns, dismantled many initiatives.

- Despite ambitious sustainability goals, Siemens faced similar investor pressure, leading to spin-offs like Siemens Energy and Gamesa.

The lesson?

Transforming a business sustainably requires vision, compelling leadership, and patience—qualities often at odds with quarterly profit expectations. I explored these tensions again in my presentation at the PLM Roadmap/PDT Europe 2024 conference, read more here: Model-Based: The Digital Twin.

I noticed discomfort in smaller, closed-company sessions, where some attendees said, “We’re far from that vision.”

My response: “That’s okay. Sustainability is a generational journey, but it must start now”.

Signs of Hope

Now for the good news. In our recent PGGA (PLM Green Global Alliance) meeting, we asked: “Are we tired?” Surprisingly, the mood was optimistic.

Yes, some companies are downscaling their green initiatives or engaging in superficial greenwashing. But other developments give hope:

- China is now the global leader in clean energy investments, responsible for ~37% of the world’s total. In 2023 alone, it installed over 216 GW of solar PV—more than the rest of the world combined—and leads in wind power too. With over 1,400 GW of renewable capacity, China demonstrates that a centralized strategy can overcome investor hesitation.

- Long-term-focused companies like Iberdrola (Spain), Ørsted (Denmark), Tesla (US), BYD, and CATL (China) continue to invest heavily in EVs and batteries—critical to our shared future.

A Call to Engineers: Design for Sustainability

We may be small at the PLM Green Global Alliance, but we’re committed to educating and supporting the Product Lifecycle Management (PLM) community on sustainability.

That’s why I’m excited to announce the launch of our Design for Sustainability initiative on June 25th.

Led by Erik Rieger and Matthew Sullivan, this initiative will bring together engineers to collaborate and explore sustainable design practices. Whether or not you can attend live, we encourage everyone to engage with the recording afterward.

Conclusion

Sustainability might not dominate headlines today. In fact, there’s a rising tide of misinformation, offering people a “convenient truth” that avoids hard choices. But our work remains urgent. Building a livable planet for future generations requires long-term vision and commitment, even when it is difficult or unpopular.

So, are you tired—or ready to shape the future?

In the last two weeks, I have had mixed discussions related to PLM, in which I realized there are two different ways people can look at PLM. Is implementing PLM capabilities driven by a cost-benefit analysis and a business case? Or is implementing PLM capabilities driven by a strategy providing business value for a company?

Most companies I am working with focus on the first option – there needs to be a business case.

This observation is a pleasant passageway into a broader discussion started by Rob Ferrone recently with his article Money for nothing and PLM for free. He explains the PDM cost of doing business, which goes beyond the software’s cost. Often, companies consider the other expenses inescapable.

At the same time, Benedict Smith wrote some visionary posts about the potential power of an AI-driven PLM strategy, the most recent article being PLM augmentation – Panning for Gold.

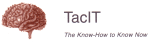

It is a visionary article about what is possible in the PLM space (if there were no legacy ☹), based on Robust Reasoning and how you could even start with LLM Augmentation for PLM “Micro-Tasks”.

Interestingly, the articles from both Rob and Benedict were supported by AI-generated images – I believe this is the future: Creating an AI image of the message you have in mind.

When you have digested their articles, it is time to dive deeper into the different perspectives of value and costs for PLM.

From a system to a strategy

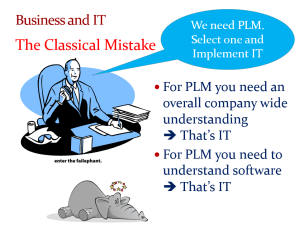

The biggest obstacle I have discovered is that people relate PLM to a system or, even worse, to an engineering tool. This 20-year-old misunderstanding probably comes from the fact that in the past, implementing PLM was more an IT activity – providing the best support for engineers and their data – than a business-driven set of capabilities needed to support the product lifecycle.

The System approach

Traditional organizations are siloed. From the start, PLM faced the challenge of supporting product information shared throughout the whole lifecycle, while no discipline traditionally had a reason to invest in sharing: every discipline has its own P&L, and sharing comes with a cost.

At the management level, the financial data coming from the ERP system drives the business. ERP systems are transactional and can provide real-time data about the company’s performance. C-level management wants to be sure they can see what is happening, so there is a massive focus on implementing the best ERP system.

In some cases, I noticed that the investment in ERP was twenty times more than the PLM investment.

Why would you invest in PLM? Although the ERP engine will slow down without proper PLM, the complexity of PLM compared to ERP is a reason for management to look at the costs, as the PLM benefits are hard to grasp and depend on so much more than just execution.

See also my old 2015 article: How do you measure collaboration?

As I mentioned, the Cost of Non-Quality, too many iterations, time lost by searching, material scrap, manufacturing delays, or customer complaints are often considered inescapable parts of doing business (like everyone else): it happens all the time.

The strategy approach

It is clear that when we accept the modern definition of PLM, we should be considering product lifecycle management as the management of the product lifecycle (as Patrick Hillberg says eloquently in our Share PLM podcast – see the image at the bottom of this post, too).

When you implement a strategy, it is evident that there should be a long(er) term vision behind it, which can be challenging for companies. Also, please read my previous article: The importance of a (PLM) vision.

I cannot believe that the importance of a data-driven approach, although perhaps not fully understood, will not be discussed at many strategic board meetings. A data-driven approach is needed to implement a digital thread as the foundation for enhanced business models based on digital twins and to ensure data quality and governance supporting AI initiatives.

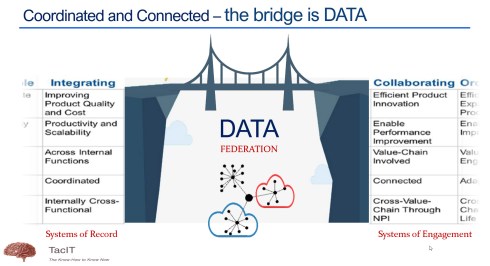

It is a process I have been preaching: From Coordinated to Coordinated and Connected.

We can be sure that at the board level, strategy discussions should be about value creation, not about reducing costs or avoiding risks as the future strategy.

Understanding the (PLM) value

The biggest challenge for companies is to understand how to modernize their PLM infrastructure to bring value.

* Step 1 is obvious. Stop considering PLM as a system with capabilities, but investigate how you transform your infrastructure from a collection of systems and (document) interfaces towards a federated infrastructure of connected tools.

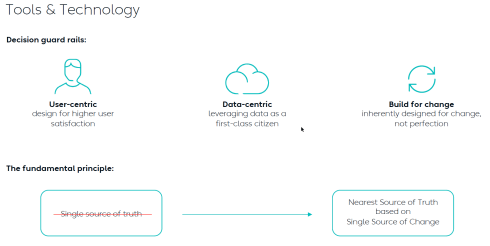

Note: the paradigm shift from a Single Source of Truth (in my system) towards a Nearest Source of Truth and a Single Source of Change.

* Step 2 is education. A data-driven approach creates new opportunities and impacts how companies should run their business. Different skills are needed, and other organizational structures are required, from disciplines working in siloes to hybrid organizations where people can work in domain-driven environments (the Systems of Record) and product-centric teams (the System of Engagement). AI tools and capabilities will likely create an effortless flow of information within the enterprise.

* Step 3 is building a compelling story to implement the vision. Implementing new ways of working based on new technical capabilities also requires organizational change. If your organization keeps working in the same way, you might gain only a few percent in efficiency.

The real benefits come from doing things differently, and technology allows you to do it differently. However, this requires people to work differently, too, and this is the most common mistake in transformational projects.

Companies understand the WHY and WHAT but leave the HOW to the middle management.

People are squeezed into an ideal performance without taking them on the journey. For that reason, it is essential to build a compelling story that motivates individuals to join the transformation. Assisting companies in building compelling story lines is one of the areas where I specialize.

Feel free to contact me to explore the opportunity for your business.

It is not the technology!

With the upcoming availability of AI tools, implementing a PLM strategy will no longer depend on how IT understands the technology, the systems and the interfaces needed.

As Yousef Hooshmand’s image above describes, a federated infrastructure of connected (SaaS) solutions will enable companies to focus on accurate data (priority #1) and people creating and using accurate data (priority #1). As you can see, people and data in modern PLM are the highest priority.

Therefore, I look forward to participating in the upcoming Share PLM Summit on 27-28 May in Jerez.

It will be a breakthrough: where traditional PLM conferences focus on technology and best practices, this conference will focus on how we can involve and motivate people. Regardless of which industry you are active in, it is a universal topic for any company that wants to transform.

Conclusion

Returning to this article’s introduction, modern PLM is an opportunity to transform the business and make it future-proof. It needs to be done for sure now or in the near future. Therefore PLM initiatives should be considered from the value point first instead of focusing on the costs. How well are you connected to your management’s vision to make PLM a value discussion?

Enjoy the podcast – several topics discussed relate to this post.

Recently, I noticed I reduced my blogging activities as many topics have already been discussed and repeatedly published without new content.

With the rise of Gen AI and ChatGPT, I believe my PLM feeds are flooded by AI-generated blog posts.

The ChatGPT option

Most companies are not frontrunners in using extremely modern PLM concepts, so you can type risk-free questions and get common-sense answers.

I just tried these five questions:

- Why do we need an MBOM in PLM, and which industries benefit the most?

- What is the difference between a PLM system and a PLM strategy?

- Why do so many PLM projects fail?

- Why do so many ERP projects fail?

- What are the changes and benefits of a model-based approach to product lifecycle management?

Note: Questions 3 and 4 have almost similar causes and impacts, although slightly different, which is to be expected given the scope of the domain.

All these questions provided enough information for a blog post based on the answer. This illustrates that if you are writing about current best practices in the field – stop writing – the knowledge is there.

PLM in real life

Recently, I had several discussions about which skills a PLM expert should have or which topics a PLM project should address.

PLM for the individual

For the individual, there are often certifications to obtain. Roger Tempest has been fighting for PLM professional recognition through certification – a challenge due to the broad scope and possibilities. Read more about Roger’s work in this post: PLM is complex (and we have to accept it?)

PLM vendors and system integrators often certify their staff or resellers to guarantee the quality of their solution delivery. Potential topics will be missed as they do not fulfill the vendor’s or integrator’s business purpose.

Asking ChatGPT about the required skills for a PLM expert, these were the top 5 answers:

- Technical skills

- Domain Knowledge

- Analytical and Problem-Solving Skills

- Interpersonal and Management Skills

- Strategic Thinking

It was interesting to see the order proposed by ChatGPT. First the tools (technology), then the processes (domain knowledge / analytical thinking), and last the people and business (strategy and interpersonal and management skills). It is hard to find all these skills in a single person.

Although we want people to be that broad in their skills, job offerings are mainly looking for the expert in one domain, be it strategy, communication, industry or technology. To get an impression of the skills read my PLM and Education concluding blog post.

Now, let’s see what it means for an organization.

PLM for the organization

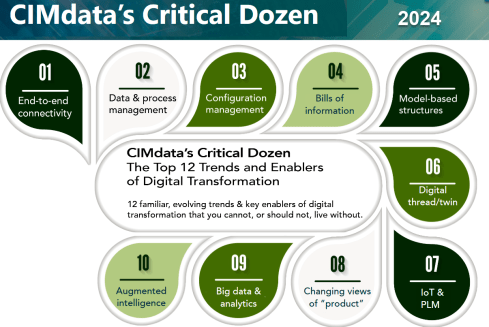

In this area, one of the most consistent frameworks I have seen over time is CIMdata’s Critical Dozen. Although it refers less to skills and more to trends and enablers, these are the areas a company should invest in (educate people and build skills) to support a successful digital transformation in the PLM domain.

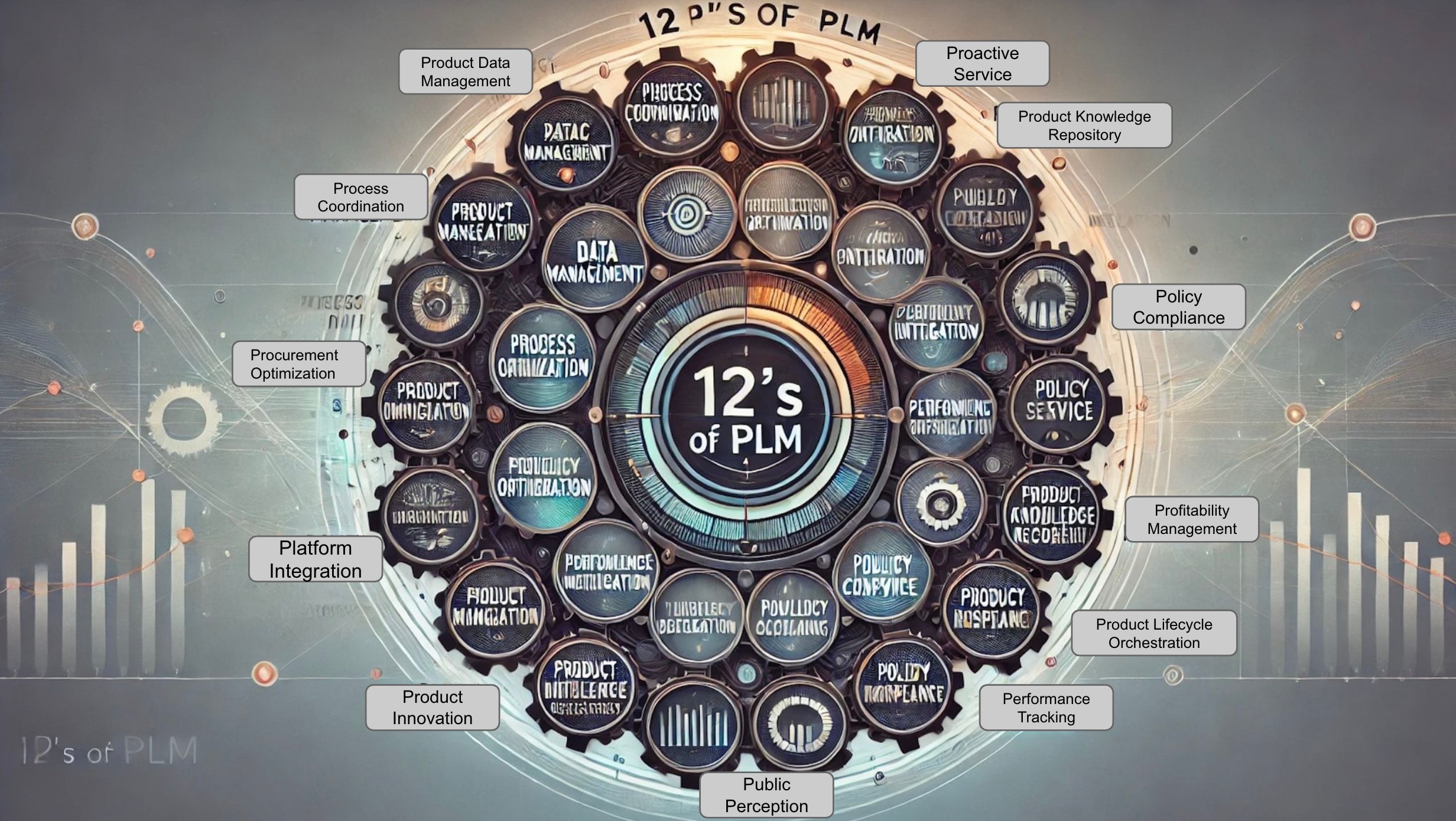

Oleg Shilovitsky’s recent blog post, The 12 “P”s of PLM Explained by Role: How to Make PLM More Than Just a Buzzword, describes in an AI manner the various aspects of the term PLM, using 12 P-words, reacting to Lionel Grealou’s post: Making PLM Great Again.

The challenge I see with these types of posts is: “OK, what to do now? Where to start?”

I believe where to start in the first place is a commonly agreed topic.

Everything starts from having a purpose and a vision. And this vision should be supported by a motivating story about the WHY that inspires everyone.

It is teamwork to define such a strategy, communicate it through a compelling story and make it personal. An excellent book to read is Make it personal by Dr. Cara Antoine – click on the image to discover the content and find my review of why I believe this book is so compelling.

An important reason why we have to make transformations personal is that we are dealing first of all with human beings. And human beings are driven by emotions first, even before reason kicks in. We see it everywhere and unfortunately also in politics.

The HOW from real-life

This question cannot be answered by external PLM vendors, consultants or system integrators. Forget the Out-of-the-Box templates or the industry best practices (from the past), but start from your company’s culture and vision, introducing step-by-step new technologies, ways of working and business models to move towards the company’s vision target.

Building the HOW is not an easy journey, and to illustrate the variety of skills needed to be successful, I worked with Share PLM on their Series 2 podcast. You can find the complete overview here. There is one more to come to conclude this year.

Our focus was to speak only with PLM experts from the field, understanding their day-to-day challenges with a focus on HOW they did it and WHAT they learned.

And this is what we learned:

Unveiling FLSmidth’s Industrial Equipment PLM Transformation: From Projects to Products

It was our first episode of Series 2, and we spoke with Johan Mikkelä, Head of the PLM Solution Architecture at FLSmidth.

FLSmidth provides the global mining and cement industries with equipment and services, which is very much an ETO business moving towards CTO.

We discussed their Industrial Equipment PLM Transformation and the impact it has made.

Start With People: ABB’s Engineering Approach to Digital Transformation

We spoke with Issam Darraj, who shared his thoughts on human-centric digitalization. Issam talks us through ABB’s engineering perspective on driving transformation and discusses the importance of focusing on your people. Our favorite quote:

To grow, you need to focus on your people. If your people are happy, you will automatically grow. If your people are unhappy, they will leave you or work against you.

Enabling change: Exploring the human side of digital transformations

We spoke with Antonio Casaschi as he shared his thoughts on the human side of digital transformation. When discussing the PLM expert, he agrees it is difficult. Our favorite part here:

“I see a PLM expert as someone with a lot of experience in organizational change management. Of course, maybe people with a different background can see a PLM expert with someone with a lot of knowledge of how you develop products, all the best practices around products, etc. We first need to agree on what a PLM expert is, and then we can agree on how you become an expert in such a domain.”

Revolutionizing PLM: Insights from Yousef Hooshmand

With Dr. Yousef Hooshmand, author of the paper From a Monolithic PLM Landscape to a Federated Domain and Data Mesh, who has over 15 years of experience in the PLM domain and is currently PLM Lead at NIO, we discussed the complexity of digital transformation in the PLM domain and how to deal with legacy while implementing a user-centric, data-driven future.

My favorite quote: The End of Single Source of Truth, now it is about The nearest Source of Truth and Single Source of Change.

Steadfast Consistency: Delving into Configuration Management with Martijn Dullaart

Martijn Dullaart, the man behind the blog MDUX: The Future of CM and author of the book The Essential Guide to Part Re-Identification: Unleash the Power of Interchangeability and Traceability, has been active in both the PLM and CM domains. With Martijn, we discussed the similarities and differences between PLM and CM and why organizations need to be educated on the topic of CM.

The ROI of Digitalization: A Deep Dive into Business Value with Susanna Maëntausta

With Susanna Maëntausta, we discussed how to implement PLM in non-traditional manufacturing industries, such as the chemical and pharmaceutical industries.

Susanna teaches us to ensure PLM projects are value-driven, connecting business objectives and KPIs to the implementation and execution steps in the field. Susanna is highly skilled in connecting people at any level of the organization.

Narratives of Change: Grundfos Transformation Tales with Björn Axling

As Head of PLM and part of the Group Innovation management team at Grundfos, Björn Axling aims to drive a Group-wide, cross-functional transformation into more innovative, more efficient, and data-driven ways of working through the product lifecycle from ideation to end-of-life.

In this episode, you will learn all the various aspects that come together when leading such a transformation in terms of culture, people, communication, and modern technology.

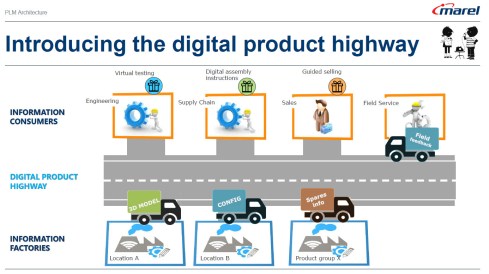

The Next Lane: Marel and the Digital Product Highway with Roger Kabo

With Roger Kabo, we discussed the steps needed to replace a legacy PLM environment and be open to a modern, federated, and data-driven future.

Step 1: Start with the end in mind. Every successful business starts with a clear and compelling vision. Your vision should be specific, inspiring, and something your team can rally behind.

Next, build on value and do it step by step.

How do you manage technology and data when you have a diverse product portfolio?

We talked with Jim van Oss, the former CIO of Moog Inc., for a deep dive into the fascinating world of technology transformations.

Key Takeaway: Evolving technology requires a clear strategy!

Jim underscores the importance of having a north star to guide your technological advancements, ensuring you remain focused and adaptable in an ever-changing landscape.

Diverse Products, Unified Systems: MBSE Insights with Max Gravel from Moog

We discussed the future of the Model-Based approaches with Max Gravel – MBD at Gulfstream and MBSE at Moog.

Max Gravel, Manager of Model-Based Engineering at Moog Inc., who is also active in modern CM, emphasizes that understanding your company’s goals with MBD is crucial.

There’s no one-size-fits-all solution: it’s about tailoring the strategy to drive real value for your business. The tools are available, but the key lies in addressing the right questions and focusing on what matters most. A great, motivating story containing all the aspects of digital transformation in the PLM domain.

Customer-First PLM: Insights on Digital Transformation and Leadership

With Helene Arlander, who has been involved in big transformation projects in the telecom industry, starting from a complex legacy environment and implementing new data-driven approaches, we discussed the importance of managing product portfolios end-to-end and the leadership strategies needed for engaging people in charge.

We also discussed the role of AI in shaping the future of PLM and the importance of vision, diverse skill sets, and teamwork in transformations.

Conclusion

I believe the time of traditional blogging is over – current PLM concepts and issues can be easily queried by using ChatGPT-like solutions. The fundamental understanding of what you can do now comes from learning and listening to people, not as fast as a TikTok video or Insta message. For me, a podcast is a comfortable method of holistic learning.

Let us know what you think and who should be in Season 3

And for my friends in the United States – Happy Thanksgiving and think about the day after ……..

Recently, I attended several events related to the various aspects of product lifecycle management; most of them were tool-centric, explaining the benefits and values of their products.

In parallel, I am working with several companies, assisting their PLM teams to make their plans understood by the upper management, which has always been my mission in the past.

However, nowadays, people working in the business are feeling more and more challenged and pained by not acting adequately to the upcoming business demands.

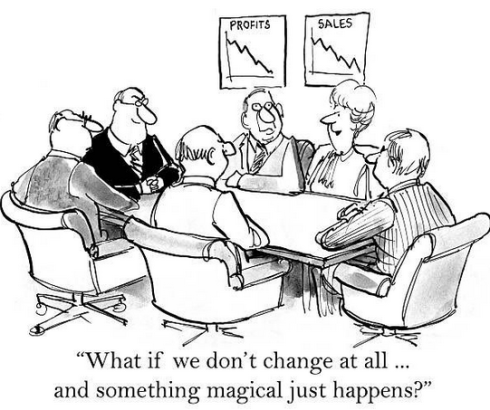

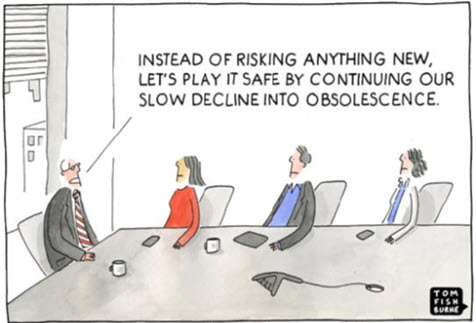

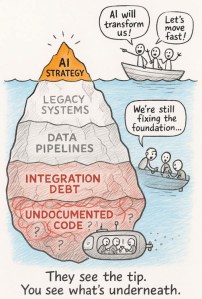

The image below has been shown so many times, and every time, the context becomes more relevant.

Too often, an evolutionary mindset with small steps is considered instead of looking toward the future and reasoning back for what needs to be done.

Let me share some experiences and potential solutions.

Don’t use the P** word!

The title of this post is one of the most essential points to consider. By using the term PLM, the discussion is most of the time framed in a debate related to the purchase or installation of a system, the PLM system, which is an engineering tool.

PLM vendors, like Dassault Systèmes and Siemens, have recognized this, and the word PLM is no longer on their home pages.

They are now delivering experiences or digital industries software.

Other companies, such as PTC and Aras, broadened the discussion by naming other domains, such as manufacturing and services, all connected through a digital thread.

The challenge for all these software vendors is why a company would consider buying their products. A growing issue for them is also why a company would want to change its existing PLM system to another one, as there is so much legacy.

For all of these vendors, success can come if champions inside the targeted company understand the technology and can translate its needs into their daily work.

Here, we meet the internal PLM team, which is motivated by the technology and wants to spread the message to the organization. Often, with no or limited success, as the value and the context they are considering are not understood or felt as urgent.

Lesson 1:

Don’t use the word PLM in your management messaging.

In some of the current projects I have seen, people talk about the digital highway or a digital infrastructure to take this hurdle. For example, listen to the SharePLM podcast with Roger Kabo from Marel, who talks about their vision and digital product highway.

As soon as you use the word PLM, most people think about a (costly) system, as this is how PLM is framed. Engineering, like IT, is often considered a cost center, as money is made by manufacturing and selling products.

According to experts (CIMdata/Gartner), Product Lifecycle Management is considered a strategic approach. However, the majority of people talk about a PLM system. Of course, vendors and system integrators will speak about their PLM offerings.

To avoid this framing, first of all, try to explain what you want to establish for the business. The terms Digital Product Highway or Digital Infrastructure, for example, avoid thinking in systems.

Lesson 2:

Don’t tell your management why they need to reward your project – they should tell you what they need.

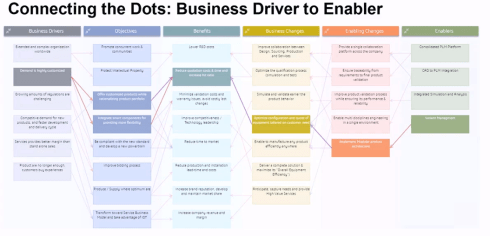

This might seem like a bit of strange advice; however, you have to realize that most of the time, people do not talk about the details at the management level. At the management level, there are strategies and business objectives, and you will only get attention when your proposal addresses the business needs. At the management level, there should be an understanding of the business need and its potential value for the organization. Next, analyzing the business changes and required tools will lead to an understanding of what value the PLM team can bring.

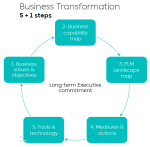

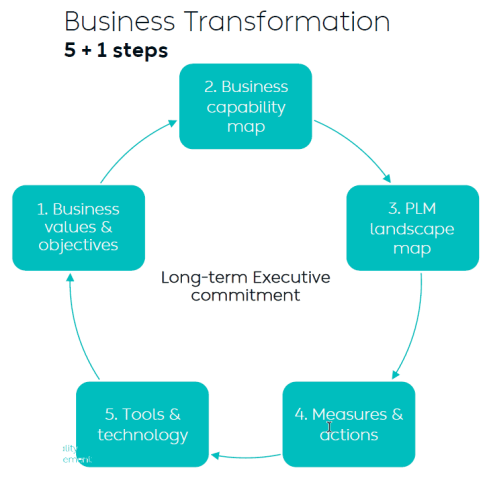

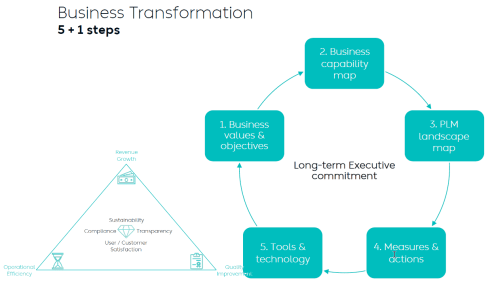

Yousef Hooshmand’s 5 + 1 approach illustrates this perfectly. It is crucial to note that long-term executive commitment is needed to have a serious project, and therefore, the connection to their business objective is vital.

Therefore, if you can connect your project to the business objectives of someone in management, you have the opportunity to get executive sponsorship. It is a crucial piece of advice you hear all the time when discussing successful PLM projects.

Lesson 3:

Alignment must come from within the organization.

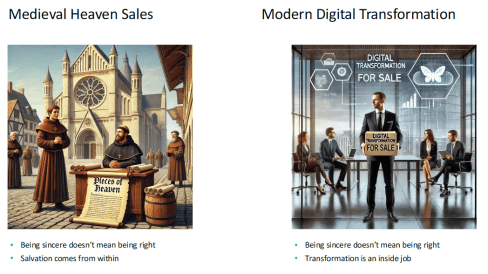

Last week, at the 20th anniversary of the Dutch PLM platform, Yousef Hooshmand gave the keynote speech starting with the images below:

On the left side, we see the medieval Catholic church sincerely selling salvation through indulgences, where the legend says Luther bought hell, demonstrating that salvation comes from inside, not from external activities – read the legend here.

On the right side, we see the Digital Transformation expert sincerely selling digital transformation to companies. According to LinkedIn, there are about 1.170.000 people with the term Digital Transformation in their profile.

As Yousef mentioned, the intentions of these people can be sincere, but here, too, the transformation must come from inside (the company).

When I work with companies, I use the Benefits Dependency Network methodology to create a storyboard for the company. The BDN network then serves as a base for creating storylines that help people in the organization have a connected view starting from their perspective.

Companies might hire strategic consultancy firms to help them formulate their long-term strategy. This can be very helpful where, in the best case, the consultancy firm educates the company, but the company should decide on the direction.

In an older blog post, I wrote about this methodology, presented by Johannes Storvik at the Technia Innovation forum, and how it defines a value-driven implementation.

Dassault Systèmes and its partners use this methodology in their Value Engagement process, which is tuned to their solution portfolio.

You can also watch the webinar Federated PLM Webinar 5 – The Business Case for the Federated PLM, in which I explained the methodology used.

Lesson 4:

PLM is a business need not an IT service

This lesson is essential for those who believe that PLM is still a system or an IT service. In some companies, I have seen that the (understaffed) PLM team is part of a larger IT organization. In this type of organization, the PLM team, as part of IT, is purely considered a cost center that is available to support the demand from the business.

The business usually focuses on incremental and economic profitability, less on transformational ways of working.

In this context, it is relevant to read Chris Seiler’s post: How to escape the vicious circle in times of transformation? In it, he reflects on his 2002 MBA study, which is still valid for many big corporate organizations.

It is a long read, but it is gratifying if you are interested. It shows that PLM concepts should be discussed and executed at the business level. Of course, I read the article with my PLM-twisted brain.

The image above from Chris’s post could be a starting point for a Benefits Dependency Network diagram, expanded with Objectives, Business Changes and Benefits to fight this vicious downturn.

As PLM is no longer a system but a business strategy, the PLM team should be integrated into the business, potentially overseen by the CIO or CDO, as a CEO is usually not able to give this long-term executive commitment.

Lesson 5:

Educate yourselves and your management

The last lesson is crucial, as due to improving technologies like AI and, earlier, the concepts of the digital twin, traditional ways of coordinated working will become inefficient and redundant.

However, before jumping on these new technologies, everyone, at every level in the organization, should be aware of:

WHY will this be relevant for our business? Is it to cut costs – being more efficient as fewer humans are in the process? Is it to be able to comply with new upcoming (sustainability) regulations? Is it because the aging workforce leaves a knowledge gap?

WHAT will our business need in the next 5 to 10 years? Are there new ways of working that we want to introduce, but we lack the technology and the tools? Do we have skills in-house? Remember, digital transformation must come from the inside.

HOW are we going to adapt our business? Can we do it in a learning mode, as the end target is not clear yet—the MVP (Minimum Viable Product) approach? Are we moving from selling products to providing a Product Service System?

My lesson: Get inspired by the software vendors who will show you what might be possible. Get educated on the topic and understand what it would mean for your organization. Start from the people and the business needs before jumping on the tools.

In the upcoming PLM Roadmap/PDT Europe conference on 23-24 October, we will be meeting again with a group of P** experts to discuss our experiences and progress in this domain. I will give a lecture here about what it takes to move to a sustainable economy based on a Product-as-a-service concept.

If you want to learn more – join us – here is the link to the agenda.

Conclusion

I hope you enjoyed reading a blog post not generated by ChatGPT, although I am using bullet points. With the overflow of information, it remains crucial to keep a holistic overview. I hope that with this post, I have helped the P** teams in their mission, and I look forward to learning from your experiences in this domain.

I have not been writing much new content recently as I feel that from the conceptual side, so much has already been said and written. A way to confuse people is to overload them with information. We see it in our daily lives and our PLM domain.

With so much information, people become apathetic, and you will hear only the loudest and most straightforward solutions.

One desire may be that we should go back to the past when everything was easier to understand—are you sure about that?

This attitude has often led to companies doing nothing, not taking any risks, and just providing plasters and stitches when things become painful. Strategic decision-making is the key to avoiding this trap.

I just read this article in the Guardian: The German problem? It is an analog country in a digital world.

The article also describes the lessons learned from the UK (quote):

Britain was the dominant economic power in the 19th century on the back of the technologies of the first Industrial Revolution and found it hard to break with the old ways even when it should have been obvious that its coal and textile industries were in long-term decline.

As a result, Britain lagged behind its competitors. One of these was Germany, which excelled in advanced manufacturing and precision engineering.

Many technology concepts originated from Germany in the past and even now we are talking about Industrie 4.0 and Catena-X as advanced concepts. But are they implemented? Did companies change their culture and ways of working required for a connected and digital enterprise?

Technology is not the issue.

The current PLM concepts, which discuss a federated PLM infrastructure based on connected data, have become increasingly stable.

Perhaps people are using different terminologies and focusing on specific aspects of a business; however, all these (technical) discussions talk about similar business concepts:

- Prof. Dr. Jorg W. Fischer, managing partner at Steinbeis – Reshape Information Management (STZ-RIM), writes a lot about a modern data-driven infrastructure, mainly in the context of PLM and ERP. His recent article: The Freeway from PLM to ERP.

- Oleg Shilovitsky, CEO of OpenBOM, has a never-ending flow of information about data and infrastructure concepts and an understandable focus on BOMs. One of his recent articles: PLM 2030: Challenges and Opportunities of Data Lifecycle Management.

- Matthias Ahrens, enterprise architect at Forvia / Hella, often shares interesting concepts related to enterprise architecture relevant to PLM. His latest share: Think PLM beyond a chain of tools!

- Dr. Yousef Hooshmand, PLM lead at NIO, shared his academic white paper and experiences at Daimler and NIO through various presentations. His publication can be found here: From a Monolithic PLM Landscape to a Federated Domain and Data Mesh.

- Erik Herzog, technical fellow at SAAB Aeronautics, has been active for the past two years, sharing the concept of federated PLM applied in the Heliple project. His latest post: Heliple Federated PLM at the INCOSE International Symposium in Dublin.

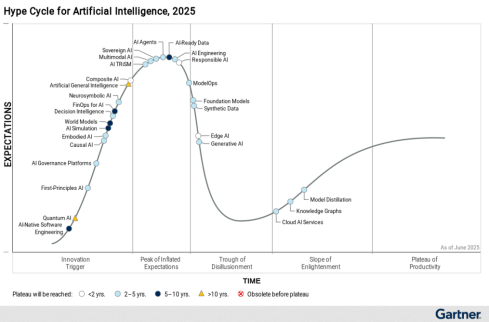

Several more people are sharing their knowledge and experience in the domain of modern PLM concepts, and you will see that technology is not the issue. The hype of AI may become an issue.

From IT focus to Business focus

One issue I observed at several companies I worked with is that the PLM’s responsibility is inside the IT organization – click on the image to get the mindset.

This situation is a historical one, as in the traditional PLM mode, the focus was on the on-premise installation and maintenance of a PLM system. Topics like stability, performance and security are typical IT topics.

IT departments have often been considered cost centers, and their primary purpose is to keep costs low.

Does the slogan ONE CAD, ONE PLM or ONE ERP resonate in your company?

It is all a result of trying to standardize a company’s tools. That is not a bad thing in a coordinated enterprise where information is exchanged in documents and BOMs, although I already wrote in 2011 about the tension between business and IT in my post “PLM and IT—love/hate relation?”

Now, modern PLM is about a connected infrastructure where accurate data is the #1 priority.

Most of the new processes will be implemented in value streams, where the data is created in SaaS solutions running in the cloud. In such environments, business should be leading, and of course, where needed, IT should support the overall architecture concepts.

In this context, I recommend an older but still valid article: The Changing Role of IT: From Gatekeeper to Business Partner.

This changing role for IT should come in parallel to the changing role for the PLM team. The PLM team needs to first focus on enabling the new types of businesses and value streams, not on features and capabilities. This change in focus means they become part of the value creation teams instead of a cost center.

In successful PLM implementations, I have seen the PLM team report directly to the CEO, CTO or CIO, no longer as a subdivision of the larger IT organization.

Where is your PLM team?

Is it a cost center or a value-creation engine?

The role of business leaders

As mentioned before, with a PLM team reporting to the business, communication should transition from discussing technology and capabilities to focusing on business value.

I recently wrote about this need for a change in attitude in my post: PLM business first. The recommended flow is nicely represented in the section “Starting from the business.”

Image: Yousef Hooshmand.

Business leaders must realize that a change is needed due to upcoming regulations, like ESG and CSRD reporting, the Digital Product Passport and the need for product Life Cycle Analysis (LCA), and that this change is more than just a change of tools.

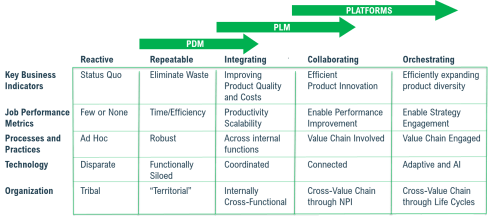

I have often referred to the diagram created by Mark Halpern from Gartner in 2015. Below you can see an adjusted diagram for 2024, including AI.

It looks like we are moving from Coordinated technology toward Connected technology. This seems easy to frame. However, my experience discussing this step in the past four to five years has led to the following four lessons learned:

- It is not a transition from Coordinated to Connected.

At this step, a company has to start in a hybrid mode – there will always remain Coordinated ways of working connected to Connected ways of working. This is the current discussion related to Federated PLM and the introduction of the terms Systems of Record (traditional systems supporting linear ways of working) and Systems of Engagement (connected environments targeting real-time collaboration in their value chain).

- It is not a matter of buying or deploying new tools.

Digital transformation is a change in ways of working and the skills needed. In traditional environments, where people work in a coordinated approach, they can work in their discipline and deliver when needed. People working in the connected approach have different skills. They work data-driven in a multidisciplinary mode. These ways of working require modern skills. Companies that are investing in new tools often hesitate to change their organization, which leads to frustration and failure.

- There is no blueprint for your company.

Digital transformation in a company is a learning process, and therefore, the idea of a digital transformation project is a utopia. It will be a learning journey where you have to start small with a Minimum Viable Product approach. Proofs of Concept are a waste of time as they do not commit to implementing the solution.

- The time is now!

The role of management is to secure the company’s future, which means having a long-term vision. And as it is a learning journey, the time is now to invest and learn using connected technology to be connected to coordinated technology. Can you avoid waiting to learn?

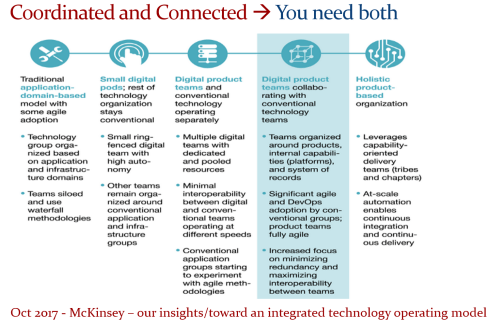

I have shared the image below several times as it is one of the best blueprints for describing the needed business transition. It originates from a McKinsey article that does not explicitly refer to PLM, again demonstrating it is first about a business strategy.

It is up to the management to master this process and apply it to their business in a timely manner. If not, the company and all its employees will be at risk for a sustainable business. Here, the word Sustainable has a double meaning – for the company and its employees/shareholders and the outside world – the planet.

Want to learn and discuss more?

Currently, I am preparing my session for the upcoming PLM Roadmap/PDT Europe conference on 23 and 24 October in Gothenburg. As I mentioned in previous years, this conference is my preferred event of the year as it is vendor-independent, and all participants are active in the various phases of a PLM implementation.

If you want to attend the conference, look here for the agenda and registration. I look forward to discussing modern PLM and its relation to sustainability with you. More in my upcoming posts till the conference.

Conclusion

Digital transformation in the PLM domain is going slowly in many companies because it is complex. It is not an easy next step, as companies have to deal with different types of processes and skills. Therefore, a different organizational structure is needed. A decision to start with a different business structure always begins at the management level, driven by business goals. The technology is there, waiting for the business to lead.

In recent years, I have assisted several companies in defining their PLM strategy. The good news is that these companies are talking first about a PLM strategy and not immediately about a PLM system selection.

In addition, a PLM strategy should not be defined in isolation but rather as an integral part of a broader business strategy. One of my favorite one-liners is:

“Are we implementing the past, or are we implementing the future?”

When companies implement the past, it feels like they modernize their current ways of working with new technology and capabilities. The new environment is more straightforward to explain to everybody in the company, and even the topic of migration can be addressed as migration might be manageable.

Note: Migration should always be considered – the elephant in the room.

I wrote about Migration Migraine in two posts earlier this year, one describing the basics and the second describing the lessons learned and the path to a digital future.

Implementing PLM now should be part of your business strategy.

Threats from different types of competitors, necessary sustainability-related regulations (e.g., CSRD reporting) and, on the positive side, new opportunities (e.g., Product as a Service) all require your company to be adaptable to changes in products, services and even business models.

If your company wants to benefit from concepts like the Digital Twin and AI, it needs a data-driven infrastructure: Digital Twins do not run on documents, and algorithms need reliable data.

Digital Transformation in the PLM domain means combining Coordinated and Connected working methods. In other words, you need to build an infrastructure based on Systems of Record and Systems of Engagement. Followers of my blog should be familiar with these terms.

PLM is not an R&D and Engineering solution (any more)

One of the biggest misconceptions still made is that PLM is implemented by a single system mainly used by R&D and Engineering. These disciplines are considered the traditional creators of product data—a logical assumption at the time when PLM was more of a silo, Managing Projects with CAD and BOM data.

However, this misconception steers many discussions toward the question of which system is best for my discipline, more or less strengthening the silos in an organization. Being able to break the silos is one of the technical capabilities digitization brings.

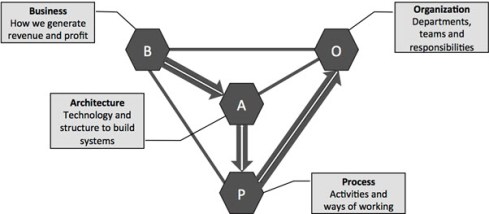

Business and IT architecture are closely related. Perhaps you have heard about Conway’s law (from 1967):

“Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization’s communication structure.”

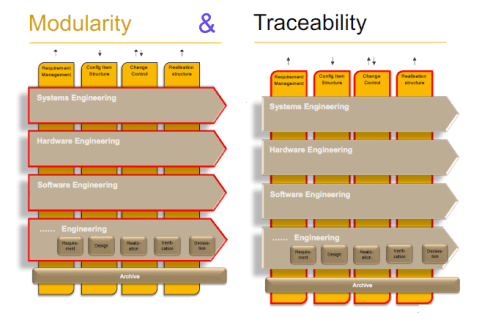

This means that if you plan to implement or improve a PLM infrastructure without considering an organizational change, you will be locked again into your traditional ways of working – the coordinated approach, which is reflected on the left side of the image (click on it to enlarge it).

An organizational change impacts middle management, a significant category we often neglect. There is the C-level vision and the voice of the end user. Middle management has to connect them and still feel their jobs are not at risk. I wrote about it some years ago: The Middle Management Dilemma.

How do we adapt the business?

The biggest challenge of a business transformation is that it starts with the WHY and should be understood and supported at all organizational levels.

If there is no clear vision for change but a continuous push to be more efficient, your company is at risk!

For over 60 years, companies have been used to working in a coordinated approach, from paper-based to electronic deliverables.

- How do you motivate your organization to move in a relatively unknown direction?

- Who in your organization are the people who can build a digital vision and strategy?

These two questions are fundamental, and you cannot outsource ownership of them.

People in the transformation teams need to be digitally skilled (not geeks), communicators (storytellers), and, very importantly, connected to the business.
