First, an important announcement. In the last two weeks, I have finalized preparations for the upcoming Share PLM Summit in Jerez on 27-28 May. With the Share PLM team, we have been working on a non-typical PLM agenda. Share PLM, like me, focuses on organizational change management and the HOW of PLM implementations; there will be more emphasis on the people side.

Often, PLM implementations are either IT-driven or business-driven to implement a need, and yes, the people who need to work with it come up as the closing topic. Time and budget are spent on technology and process definitions, and people get trained. Often, this is only train-the-trainer, as there is no more budget or time to let the organization adapt, and rapid ROI is expected.

This approach neglects that PLM implementations are enablers for business transformation. Instead of doing things slightly more efficiently, significant gains can be made by doing things differently, starting with the people and their optimal new way of working, and then providing the best tools.

The conference aims to start with the people, sharing human-related experiences and enabling networking between people – not only about the industry practices (there will be sessions and discussions on this topic too).

If you are curious about the details, listen to the podcast recording we published last week to understand the difference – click on the image on the left.

And if you are interested and have the opportunity, join us and meet some great thought leaders and others with this shared interest.

Why is modern PLM a dream?

If you are connected to the LinkedIn posts in my PLM feed, you might have the impression that everyone is gearing up for modern PLM. Articles often created with AI support spark vivid discussions. Before diving into them with my perspective, I want to set the scene by explaining what I mean by modern PLM and traditional PLM.

Traditional PLM

Traditional PLM is often associated with implementing a PLM system, mainly serving engineering. Downstream engineering data usage is usually pushed manually or through interfaces to other enterprise systems, like ERP, MES and service systems.

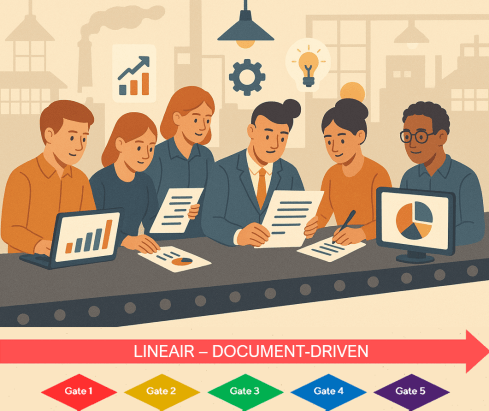

Traditional PLM is closely connected to the coordinated way of working: a linear process based on passing documents (drawings) and datasets (BOMs). Historically, CAD integrations have been the most significant characteristic of these systems.

The coordinated approach fits people working within their authoring tools and, through integrations, sharing data. The PLM system becomes a system of record, and working in a system of record is not designed to be user-friendly.

Unfortunately, most PLM implementations in the field are based on this approach and are sometimes characterized as advanced PDM.

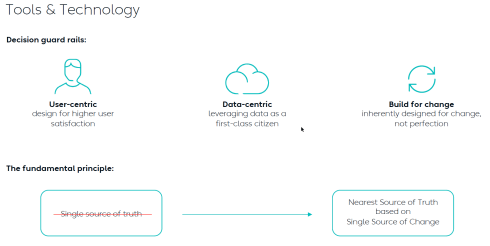

You recognize traditional PLM thinking when people talk about the single source of truth.

Modern PLM

When I talk about modern PLM, it is no longer about a single system. Modern PLM starts from a business strategy implemented by a data-driven infrastructure. The strategy part remains a challenge at the board level: how do you translate PLM capabilities into business benefits – the WHY?

More on this challenge will be discussed later, as in our PLM community, most discussions are IT-driven: architectures, ontologies, and technologies – the WHAT.

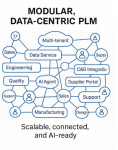

For the WHAT, there seems to be a consensus that modern PLM is based on a federated

I think this article from Oleg Shilovitsky, “Rethinking PLM: Is It Time to Move Beyond the Monolith?”, AND the discussion thread in this post are must-reads. I will not quote the content here again.

After reading Oleg’s post and the comments, come back here.

The reason for this approach: It is a perfect example of the connected approach. Instead of collecting all the information inside one post (book?), the information can be accessed by following digital threads. It also illustrates that in a connected environment, you do not own the data; the data comes from accountable people.

Building such a modern infrastructure is challenging when your company depends mainly on its legacy—the people, processes and systems. Where to change, how to change and when to change are questions that should be answered at the top and require a vision and evolutionary implementation strategy.

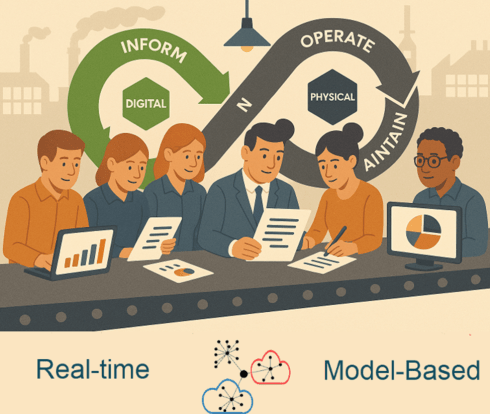

A company should build a layer of connected data on top of the coordinated infrastructure to support users in their new business roles. Implementing a digital twin has significant business benefits if the twin is used to connect with real-time stakeholders from both the virtual and physical worlds.

But there is more than digital threads with real-time data. On top of this infrastructure, a company can run all kinds of modeling tools, automation and analytics. I noticed that in our PLM community, we might focus too much on the data and not enough on the importance of combining it with a model-based business approach. For more details, read my recent post: Model-based: the elephant in the room.

Again, there are no quotes from the article; you know how to dive deeper into the connected topic.

Despite the considerable legacy pressure, there are already companies implementing a coordinated and connected approach. An excellent description of a potential approach comes from Yousef Hooshmand’s paper: From a Monolithic PLM Landscape to a Federated Domain and Data Mesh.

You might recognize modern PLM thinking when people talk about the nearest source of truth and the single source of change.

Is Intelligent PLM the next step?

So far in this article, I have not mentioned AI as the solution to all our challenges. I see an analogy here with the introduction of the smartphone. 2008 was the moment that platforms were introduced, mainly for consumers. Airbnb, Uber, Amazon, Spotify, and Netflix appeared and disrupted the traditional ways of selling products and services.

The advantage of these platforms is that they were all built data-driven from the start and do not suffer from legacy issues.

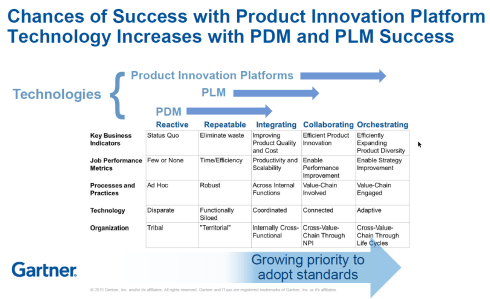

In our PLM domain, it took more than 10 years for platforms to become a topic of discussion for businesses. The 2015 PLM Roadmap/PDT conference was the first step in discussing the Product Innovation Platform – see my The Weekend after PDT 2015 post.

At that time, Peter Bilello shared the CIMdata perspective, Marc Halpern (Gartner) showed my favorite positioning slide (below), and Martin Eigner presented, according to my notes, this digital trend in PLM in his session: “What becomes different for PLM/SysLM?”

2015 Marc Halpern – the Product Innovation Platform (PIP)

While concepts started to become clearer, businesses mainly remained the same. The coordinated approach is the most convenient, as you do not need to reshape your organization. And then came the LLMs that changed everything.

Suddenly, it became possible for organizations to unlock knowledge hidden in their company and make it accessible to people.

Without drastically changing the organization, companies could now improve people’s performance and output (theoretically); therefore, it became a topic of interest for management. One big challenge for reaping the benefits is the quality of the data and information accessed.

I will not dive deeper into this topic today, as Benedict Smith, in his article Intelligent PLM – CFO’s 2025 Vision, did all the work, and I am very much aligned with his statements. It is a long read (7000 words) and a great starting point for discovering the aspects of Intelligent PLM and the connection to the CFO.

You might recognize intelligent PLM thinking when people and AI agents talk about the most likely truth.

Conclusion

Are you interested in these topics and their meaning for your business and career? Join me at the Share PLM conference, where I will discuss “The dilemma: Humans cannot transform—help them!” Time to work on your dreams!

In the last two weeks, I have had mixed discussions related to PLM, where I realized the two different ways people can look at PLM. Is implementing PLM capabilities driven by a cost-benefit analysis and a business case? Or is implementing PLM capabilities driven by strategy providing business value for a company?

Most companies I am working with focus on the first option – there needs to be a business case.

This observation is a pleasant passageway into a broader discussion started by Rob Ferrone recently with his article Money for nothing and PLM for free. He explains the PDM cost of doing business, which goes beyond the software’s cost. Often, companies consider the other expenses inescapable.

At the same time, Benedict Smith wrote some visionary posts about the potential power of an AI-driven PLM strategy, the most recent article being PLM augmentation – Panning for Gold.

It is a visionary article about what is possible in the PLM space (if there was no legacy ☹), based on Robust Reasoning and how you could even start with LLM Augmentation for PLM “Micro-Tasks.”

Interestingly, the articles from both Rob and Benedict were supported by AI-generated images – I believe this is the future: Creating an AI image of the message you have in mind.

When you have digested their articles, it is time to dive deeper into the different perspectives of value and costs for PLM.

From a system to a strategy

The biggest obstacle I have discovered is that people relate PLM to a system or, even worse, to an engineering tool. This 20-year-old misunderstanding probably comes from the fact that in the past, implementing PLM was more an IT activity – providing the best support for engineers and their data – than a business-driven set of capabilities needed to support the product lifecycle.

The System approach

Traditional organizations are siloed. From the start, PLM had the challenge of supporting product information shared throughout the whole lifecycle, while no discipline had a natural incentive to invest in sharing: every discipline has its own P&L, and sharing comes with a cost.

At the management level, the financial data coming from the ERP system drives the business. ERP systems are transactional and can provide real-time data about the company’s performance. C-level management wants to be sure they can see what is happening, so there is a massive focus on implementing the best ERP system.

In some cases, I noticed that the investment in ERP was twenty times more than the PLM investment.

Why would you invest in PLM? Although the ERP engine will slow down without proper PLM, the complexity of PLM compared to ERP is a reason for management to look at the costs, as the PLM benefits are hard to grasp and depend on so much more than just execution.

See also my old 2015 article: How do you measure collaboration?

As I mentioned, the Cost of Non-Quality (too many iterations, time lost by searching, material scrap, manufacturing delays or customer complaints) is often considered an inescapable part of doing business: it happens to everyone else too, all the time.

The strategy approach

It is clear that when we accept the modern definition of PLM, we should be considering product lifecycle management as the management of the product lifecycle (as Patrick Hillberg says eloquently in our Share PLM podcast – see the image at the bottom of this post, too).

When you implement a strategy, it is evident that there should be a long(er) term vision behind it, which can be challenging for companies. Also, please read my previous article: The importance of a (PLM) vision.

I cannot believe that the importance of a data-driven approach, although perhaps not fully understood, will not be discussed at many strategic board meetings. A data-driven approach is needed to implement a digital thread as the foundation for enhanced business models based on digital twins and to ensure data quality and governance supporting AI initiatives.

It is a process I have been preaching: From Coordinated to Coordinated and Connected.

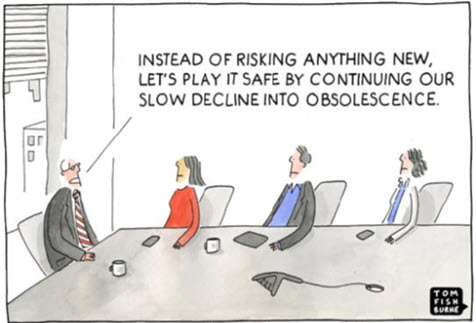

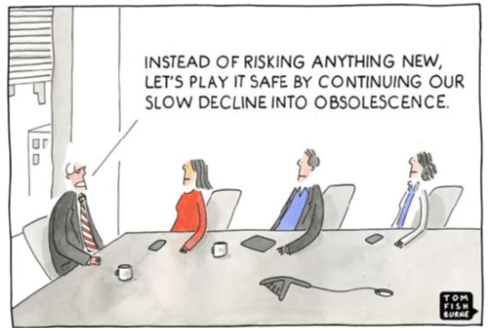

We can be sure that at the board level, strategy discussions should be about value creation, not about reducing costs or avoiding risks as the future strategy.

Understanding the (PLM) value

The biggest challenge for companies is to understand how to modernize their PLM infrastructure to bring value.

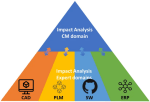

* Step 1 is obvious. Stop considering PLM as a system with capabilities; instead, investigate how to transform your infrastructure from a collection of systems and (document) interfaces towards a federated infrastructure of connected tools.

Note: the paradigm shift from a Single Source of Truth (in my system) towards a Nearest Source of Truth and a Single Source of Change.

* Step 2 is education. A data-driven approach creates new opportunities and impacts how companies should run their business. Different skills are needed, and other organizational structures are required, from disciplines working in silos to hybrid organizations where people can work in domain-driven environments (the Systems of Record) and product-centric teams (the System of Engagement). AI tools and capabilities will likely create an effortless flow of information within the enterprise.

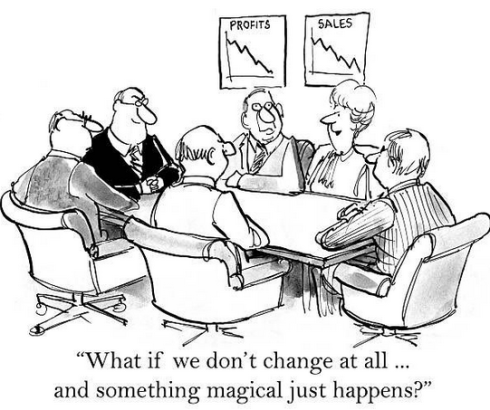

* Step 3 is building a compelling story to implement the vision. Implementing new ways of working based on new technical capabilities also requires organizational change. If your organization keeps working similarly, you might gain a few percent in efficiency.

The real benefits come from doing things differently, and technology allows you to do it differently. However, this requires people to work differently, too, and overlooking this is the most common mistake in transformational projects.

Companies understand the WHY and WHAT but leave the HOW to the middle management.

People are squeezed into an ideal performance without taking them on the journey. For that reason, it is essential to build a compelling story that motivates individuals to join the transformation. Assisting companies in building compelling story lines is one of the areas where I specialize.

Feel free to contact me to explore the opportunity for your business.

It is not the technology!

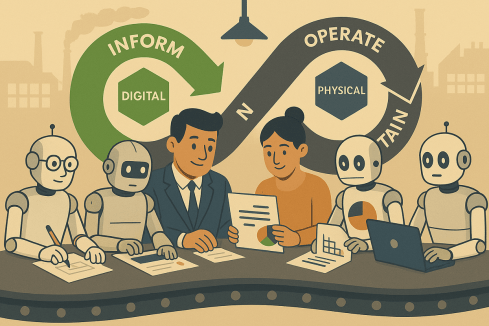

With the upcoming availability of AI tools, implementing a PLM strategy will no longer depend on how IT understands the technology, the systems and the interfaces needed.

As Yousef Hooshmand’s image above describes, a federated infrastructure of connected (SaaS) solutions will enable companies to focus on accurate data (priority #1) and people creating and using accurate data (priority #1). As you can see, people and data in modern PLM are the highest priority.

Therefore, I look forward to participating in the upcoming Share PLM Summit on 27-28 May in Jerez.

It will be a breakthrough: where traditional PLM conferences focus on technology and best practices, this conference will focus on how we can involve and motivate people. Regardless of which industry you are active in, it is a universal topic for any company that wants to transform.

Conclusion

Returning to this article’s introduction, modern PLM is an opportunity to transform the business and make it future-proof. It certainly needs to be done, now or in the near future. Therefore, PLM initiatives should be considered from the value point of view first instead of focusing on the costs. How well are you connected to your management’s vision to make PLM a value discussion?

Enjoy the podcast – several topics discussed relate to this post.

Recently, I noticed I reduced my blogging activities as many topics have already been discussed and repeatedly published without new content.

With the rise of Gen AI and ChatGPT, I believe my PLM feeds are flooded by AI-generated blog posts.

The ChatGPT option

Most companies are not frontrunners in using extremely modern PLM concepts, so you can type risk-free questions and get common-sense answers.

I just tried these five questions:

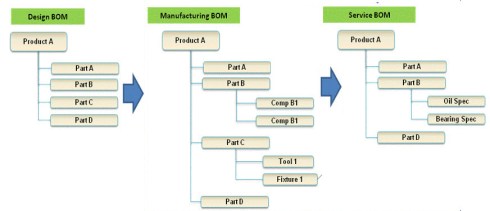

- Why do we need an MBOM in PLM, and which industries benefit the most?

- What is the difference between a PLM system and a PLM strategy?

- Why do so many PLM projects fail?

- Why do so many ERP projects fail?

- What are the changes and benefits of a model-based approach to product lifecycle management?

Note: Questions 3 and 4 have almost similar causes and impacts, although slightly different, which is to be expected given the scope of the domain.

All these questions provided enough information for a blog post based on the answer. This illustrates that if you are writing about current best practices in the field – stop writing – the knowledge is there.

PLM in real life

Recently, I had several discussions about which skills a PLM expert should have or which topics a PLM project should address.

PLM for the individual

For the individual, there are often certifications to obtain. Roger Tempest has been fighting for PLM professional recognition through certification – a challenge due to the broad scope and possibilities. Read more about Roger’s work in this post: PLM is complex (and we have to accept it?)

PLM vendors and system integrators often certify their staff or resellers to guarantee the quality of their solution delivery. Potential topics will be missed as they do not fulfill the vendor’s or integrator’s business purpose.

When I asked ChatGPT about the required skills for a PLM expert, these were the top 5 answers:

- Technical skills

- Domain Knowledge

- Analytical and Problem-Solving Skills

- Interpersonal and Management Skills

- Strategic Thinking

It was interesting to see the order proposed by ChatGPT. First the tools (technology), then the processes (domain knowledge / analytical thinking), and last the people and business (strategy and interpersonal and management skills). It is hard to find all these skills in a single person.

Although we want people to be that broad in their skills, job offerings are mainly looking for the expert in one domain, be it strategy, communication, industry or technology. To get an impression of the skills, read my PLM and Education concluding blog post.

Now, let’s see what it means for an organization.

PLM for the organization

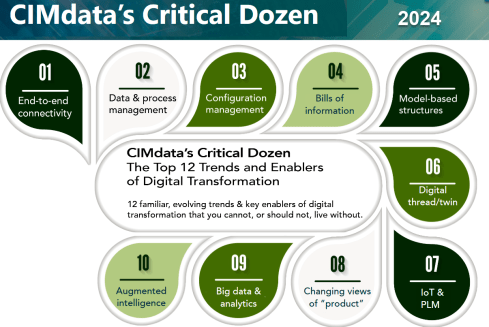

In this area, one of the most consistent frameworks I have seen over time is CIMdata’s Critical Dozen. Although they refer less to skills and more to trends and enablers, these are the areas a company should invest in (educate people and build skills) to support a successful digital transformation in the PLM domain.

Oleg Shilovitsky’s recent blog post, The 12 “P”s of PLM Explained by Role: How to Make PLM More Than Just a Buzzword, describes in an AI manner the various aspects of the term PLM, using 12 P-words, reacting to Lionel Grealou’s post: Making PLM Great Again.

The challenge I see with these types of posts is: “OK, what to do now? Where to start?”

I believe where to start in the first place is commonly agreed upon.

Everything starts from having a purpose and a vision. And this vision should be supported by a motivating story about the WHY that inspires everyone.

It is teamwork to define such a strategy, communicate it through a compelling story and make it personal. An excellent book to read is Make it personal by Dr. Cara Antoine – click on the image to discover the content and find my review explaining why I believe this book is so compelling.

An important reason why we have to make transformations personal is that we are dealing first of all with human beings. And human beings are driven by emotions first, even before reason kicks in. We see it everywhere and unfortunately also in politics.

The HOW from real-life

This question cannot be answered by external PLM vendors, consultants or system integrators. Forget the Out-of-the-Box templates or the industry best practices (from the past); instead, start from your company’s culture and vision, introducing new technologies, ways of working and business models step by step to move towards the company’s vision target.

Building the HOW is not an easy journey, and to illustrate the variety of skills needed to be successful, I worked with Share PLM on their Series 2 podcast. You can find the complete overview here. There is one more to come to conclude this year.

Our focus was to speak only with PLM experts from the field, understanding their day-to-day challenges with a focus on HOW they did it and WHAT they learned.

And this is what we learned:

Unveiling FLSmidth’s Industrial Equipment PLM Transformation: From Projects to Products

It was our first episode of Series 2, and we spoke with Johan Mikkelä, Head of the PLM Solution Architecture at FLSmidth.

FLSmidth provides the global mining and cement industries with equipment and services, which is very much an ETO business moving towards CTO.

We discussed their Industrial Equipment PLM Transformation and the impact it has made.

Start With People: ABB’s Engineering Approach to Digital Transformation

We spoke with Issam Darraj, who shared his thoughts on human-centric digitalization. Issam talks us through ABB’s engineering perspective on driving transformation and discusses the importance of focusing on your people. Our favorite quote:

To grow, you need to focus on your people. If your people are happy, you will automatically grow. If your people are unhappy, they will leave you or work against you.

Enabling change: Exploring the human side of digital transformations

We spoke with Antonio Casaschi as he shared his thoughts on the human side of digital transformation. When discussing the PLM expert, he agrees it is difficult. Our favorite part here:

“I see a PLM expert as someone with a lot of experience in organizational change management. Of course, maybe people with a different background can see a PLM expert with someone with a lot of knowledge of how you develop products, all the best practices around products, etc. We first need to agree on what a PLM expert is, and then we can agree on how you become an expert in such a domain.”

Revolutionizing PLM: Insights from Yousef Hooshmand

With Dr. Yousef Hooshmand, author of the paper From a Monolithic PLM Landscape to a Federated Domain and Data Mesh, who has over 15 years of experience in the PLM domain and is currently PLM Lead at NIO, we discussed the complexity of digital transformation in the PLM domain and how to deal with legacy while implementing a user-centric, data-driven future.

My favorite quote: The End of Single Source of Truth, now it is about The nearest Source of Truth and Single Source of Change.

Steadfast Consistency: Delving into Configuration Management with Martijn Dullaart

Martijn Dullaart, the man behind the blog MDUX: The Future of CM and author of the book The Essential Guide to Part Re-Identification: Unleash the Power of Interchangeability and Traceability, has been active in both the PLM and CM domains. With Martijn, we discussed the similarities and differences between PLM and CM and why organizations need to be educated on the topic of CM.

The ROI of Digitalization: A Deep Dive into Business Value with Susanna Maëntausta

With Susanna Maëntausta, we discussed how to implement PLM in non-traditional manufacturing industries, such as the chemical and pharmaceutical industries.

Susanna teaches us to ensure PLM projects are value-driven, connecting business objectives and KPIs to the implementation and execution steps in the field. Susanna is highly skilled in connecting people at any level of the organization.

Narratives of Change: Grundfos Transformation Tales with Björn Axling

As Head of PLM and part of the Group Innovation management team at Grundfos, Björn Axling aims to drive a Group-wide, cross-functional transformation into more innovative, more efficient, and data-driven ways of working through the product lifecycle from ideation to end-of-life.

In this episode, you will learn all the various aspects that come together when leading such a transformation in terms of culture, people, communication, and modern technology.

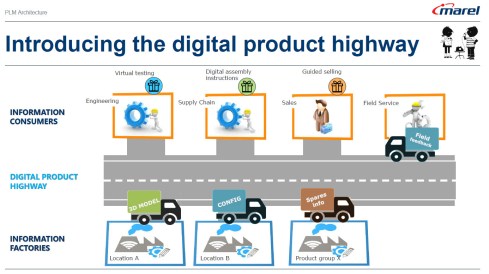

The Next Lane: Marel and the Digital Product Highway with Roger Kabo

With Roger Kabo, we discussed the steps needed to replace a legacy PLM environment and be open to a modern, federated, and data-driven future.

Step 1: Start with the end in mind. Every successful business starts with a clear and compelling vision. Your vision should be specific, inspiring, and something your team can rally behind.

Next, build on value and do it step by step.

How do you manage technology and data when you have a diverse product portfolio?

We talked with Jim van Oss, the former CIO of Moog Inc., for a deep dive into the fascinating world of technology transformations.

Key Takeaway: Evolving technology requires a clear strategy!

Jim underscores the importance of having a north star to guide your technological advancements, ensuring you remain focused and adaptable in an ever-changing landscape.

Diverse Products, Unified Systems: MBSE Insights with Max Gravel from Moog

We discussed the future of the Model-Based approaches with Max Gravel – MBD at Gulfstream and MBSE at Moog.

Max Gravel, Manager of Model-Based Engineering at Moog Inc., who is also active in modern CM, emphasizes that understanding your company’s goals with MBD is crucial.

There’s no one-size-fits-all solution: it’s about tailoring the strategy to drive real value for your business. The tools are available, but the key lies in addressing the right questions and focusing on what matters most. A great, motivating story containing all the aspects of digital transformation in the PLM domain.

Customer-First PLM: Insights on Digital Transformation and Leadership

With Helene Arlander, who has been involved in big transformation projects in the telecom industry, starting from a complex legacy environment and implementing new data-driven approaches, we discussed the importance of managing product portfolios end-to-end and the leadership strategies needed for engaging people in charge.

We also discussed the role of AI in shaping the future of PLM and the importance of vision, diverse skill sets, and teamwork in transformations.

Conclusion

I believe the time of traditional blogging is over – current PLM concepts and issues can be easily queried by using ChatGPT-like solutions. The fundamental understanding of what you can do now comes from learning and listening to people, not as fast as a TikTok video or Insta message. For me, a podcast is a comfortable method of holistic learning.

Let us know what you think and who should be in Season 3.

And for my friends in the United States – Happy Thanksgiving and think about the day after ……..

Recently, I attended several events related to the various aspects of product lifecycle management; most of them were tool-centric, explaining the benefits and values of their products.

In parallel, I am working with several companies, assisting their PLM teams to make their plans understood by the upper management, which has always been my mission in the past.

However, nowadays, people working in the business feel more and more challenged and pained by not responding adequately to the upcoming business demands.

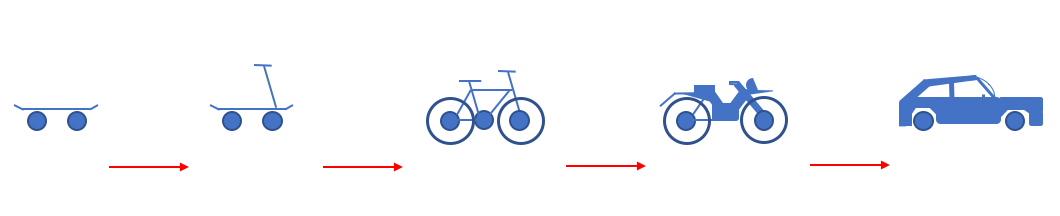

The image below has been shown so many times, and every time, the context becomes more relevant.

Too often, an evolutionary mindset with small steps is considered instead of looking toward the future and reasoning back for what needs to be done.

Let me share some experiences and potential solutions.

Don’t use the P** word!

The title of this post is one of the most essential points to consider. By using the term PLM, the discussion is most of the time framed in a debate related to the purchase or installation of a system, the PLM system, which is an engineering tool.

PLM vendors, like Dassault Systèmes and Siemens, have recognized this, and the word PLM is no longer on their home pages.

They are now delivering experiences or digital industries software.

Other companies, such as PTC and Aras, broadened the discussion by naming other domains, such as manufacturing and services, all connected through a digital thread.

The challenge for all these software vendors is why a company would consider buying their products. A growing issue for them is also why a company would want to replace its existing PLM system with another one, as there is so much legacy.

For all of these vendors, success can come if champions inside the targeted company understand the technology and can translate its needs into their daily work.

Here, we meet the internal PLM team, which is motivated by the technology and wants to spread the message to the organization. Often, with no or limited success, as the value and the context they are considering are not understood or felt as urgent.

Lesson 1:

Don’t use the word PLM in your management messaging.

In some of the current projects I have seen, people talk about the digital highway or a digital infrastructure to take this hurdle. For example, listen to the SharePLM podcast with Roger Kabo from Marel, who talks about their vision and digital product highway.

As soon as you use the word PLM, most people think about a (costly) system, as this is how PLM is framed. Engineering, like IT, is often considered a cost center, as money is made by manufacturing and selling products.

According to experts (CIMdata/Gartner), Product Lifecycle Management is considered a strategic approach. However, the majority of people talk about a PLM system. Of course, vendors and system integrators will speak about their PLM offerings.

To avoid this framing, first of all, try to explain what you want to establish for the business. The terms Digital Product Highway or Digital Infrastructure, for example, avoid thinking in systems.

Lesson 2:

Don’t tell your management why they need to reward your project – they should tell you what they need.

This might seem like a bit of strange advice; however, you have to realize that most of the time, people do not talk about the details at the management level. At the management level, there are strategies and business objectives, and you will only get attention when your proposal addresses the business needs. At the management level, there should be an understanding of the business need and its potential value for the organization. Next, analyzing the business changes and required tools will lead to an understanding of what value the PLM team can bring.

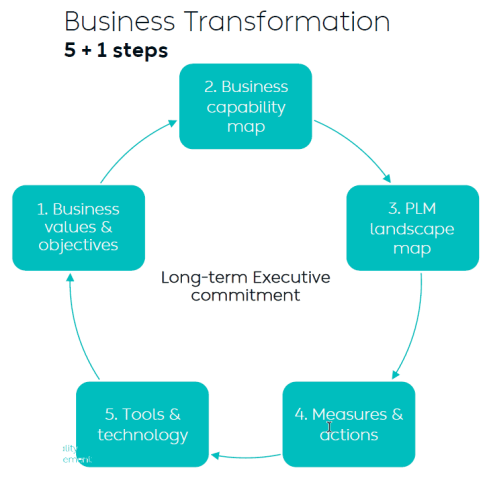

Yousef Hooshmand’s 5 + 1 approach illustrates this perfectly. It is crucial to note that long-term executive commitment is needed to have a serious project, and therefore, the connection to their business objective is vital.

Therefore, if you can connect your project to the business objectives of someone in management, you have the opportunity to get executive sponsorship. This is crucial advice you hear all the time when discussing successful PLM projects.

Lesson 3:

Alignment must come from within the organization.

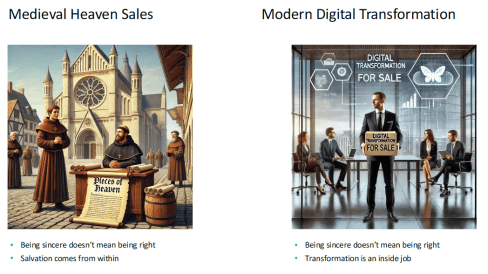

Last week, at the 20th anniversary of the Dutch PLM platform, Yousef Hooshmand gave the keynote speech starting with the images below:

On the left side, we see the medieval Catholic church sincerely selling salvation through indulgences, where the legend says Luther bought the hell, demonstrating salvation comes from inside, not from external activities – read the legend here.

On the right side, we see the Digital Transformation expert sincerely selling digital transformation to companies. According to LinkedIn, there are about 1,170,000 people with the term Digital Transformation in their profile.

As Yousef mentioned, the intentions of these people can be sincere, but also, here, the transformation must come from inside (the company).

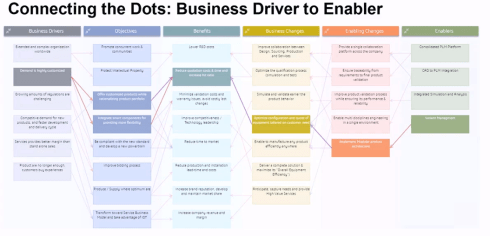

When I work with companies, I use the Benefits Dependency Network methodology to create a storyboard for the company. The BDN network then serves as a base for creating storylines that help people in the organization have a connected view starting from their perspective.

Companies might hire strategic consultancy firms to help them formulate their long-term strategy. This can be very helpful where, in the best case, the consultancy firm educates the company, but the company should decide on the direction.

In an older blog post, I wrote about this methodology, presented by Johannes Storvik at the Technia Innovation forum, and how it defines a value-driven implementation.

Dassault Systèmes and its partners use this methodology in their Value Engagement process, which is tuned to their solution portfolio.

You can also watch the webinar Federated PLM Webinar 5 – The Business Case for the Federated PLM, in which I explained the methodology used.

Lesson 4:

PLM is a business need, not an IT service

This lesson is essential for those who believe that PLM is still a system or an IT service. In some companies, I have seen that the (understaffed) PLM team is part of a larger IT organization. In this type of organization, the PLM team, as part of IT, is purely considered a cost center that is available to support the demand from the business.

The business usually focuses on incremental and economic profitability, less on transformational ways of working.

In this context, it is relevant to read Chris Seiler’s post: How to escape the vicious circle in times of transformation? In it, he reflects on his 2002 MBA study, which is still valid for many big corporate organizations.

It is a long read, but it is gratifying if you are interested. It shows that PLM concepts should be discussed and executed at the business level. Of course, I read the article with my PLM-twisted brain.

The image above from Chris’s post could be a starting point for a Benefits Dependency Network diagram, expanded with Objectives, Business Changes and Benefits to fight this vicious downturn.

As PLM is no longer a system but a business strategy, the PLM team should be integrated into the business, potentially overseen by the CIO or CDO, as a CEO is usually not able to give this long-term executive commitment.

Lesson 5:

Educate yourselves and your management

The last lesson is crucial, as due to improving technologies like AI and, earlier, the concepts of the digital twin, traditional ways of coordinated working will become inefficient and redundant.

However, before jumping on these new technologies, everyone, at every level in the organization, should be aware of:

WHY will this be relevant for our business? Is it to cut costs – being more efficient as fewer humans are in the process? Is it to be able to comply with new upcoming (sustainability) regulations? Is it because the aging workforce leaves a knowledge gap?

WHAT will our business need in the next 5 to 10 years? Are there new ways of working that we want to introduce, but we lack the technology and the tools? Do we have skills in-house? Remember, digital transformation must come from the inside.

HOW are we going to adapt our business? Can we do it in a learning mode, as the end target is not clear yet—the MVP (Minimum Viable Product) approach? Are we moving from selling products to providing a Product Service System?

My lesson: Get inspired by the software vendors who will show you what might be possible. Get educated on the topic and understand what it would mean for your organization. Start from the people and the business needs before jumping on the tools.

In the upcoming PLM Roadmap/PDT Europe conference on 23-24 October, we will be meeting again with a group of P** experts to discuss our experiences and progress in this domain. I will give a lecture here about what it takes to move to a sustainable economy based on a Product-as-a-service concept.

If you want to learn more – join us – here is the link to the agenda.

Conclusion

I hope you enjoyed reading a blog post not generated by ChatGPT, although I am using bullet points. With the overflow of information, it remains crucial to keep a holistic overview. I hope that with this post, I have helped the P** teams in their mission, and I look forward to learning from your experiences in this domain.

In the past few weeks, together with Share PLM, we recorded and prepared a few podcasts to be published soon. As you might have noticed, for Season 2, our target is to discuss the human side of PLM and PLM best practices and less the technology side. Meaning:

- How to align and motivate people around a PLM initiative?

- What are the best practices when running a PLM initiative?

- What are the crucial skills you need to have as a PLM lead?

And as there are always many success stories to learn on the internet, we also challenged our guests to share the moments where they got experienced.

As the famous quote says:

Experience is what you get when you don’t get what you expect!

We recently published our podcast with Antonio Casaschi from Assa Abloy, a Swedish company you might never have noticed, although their products and services are a part of your daily life.

It was a discussion close to my heart. We discussed the various aspects of PLM. What makes a person a PLM professional? And if you have no time to listen for these 35 minutes, read and scan the recording transcript on the transcription tab.

At 0:24:00, Antonio mentioned the concept of Proof of Concept, as he had good experiences with them in the past. The remark triggered me to share some observations that a Proof of Concept (POC) is an old-fashioned way to drive change within organizations. Not discussed in this podcast but based on my experience, companies have been using Proofs of Concept to win time, as they were afraid to make a decision.

A POC to gain time?

Company A

When working with a well-known company in 2014, I learned they were planning approximately ten POCs per year to explore new ways of working or new technologies. As each was a POC based on an annual time scheme, the evaluation at the end of the year was often very discouraging.

Most of the time, the conclusion was: “Interesting, we should explore this further” /“What are the next POCs for the upcoming year?”

There was no commitment to follow-up; it was more of a learning exercise not connected to any follow-up.

Company B

During one of the PDT events, a company presented their two-year POC with the three leading PLM vendors, exploring supplier collaboration. I understood the PLM vendors had invested much time and resources to support this POC, expecting a big deal. However, the team mentioned it was an interesting exercise, and they learned a lot about supplier collaboration.

And nothing happened afterward ………

In 2019

At the 2019 Product Innovation Conference in London, when discussing Digital Transformation within the PLM domain, I shared in my conclusion that the POC was mainly a waste of time as it does not push you to transform; it is an option to win time but is uncommitted.

My main reason for not pushing a POC is that it is more of a limited feasibility study.

- Often to push people and processes into the technical capabilities of the systems used. A focus starting from technology is the opposite of what I have been pushing for longer: First, focus on the value stream – people and processes- and then study which tools and technologies support these demands.

- Second, the POC approach often blocks innovation as the incumbent system providers will claim the desired capabilities will come (soon) within their systems—a safe bet.

The Minimum Viable Product approach (MVP)

With the awareness that we need to work differently and benefit from digital capabilities also came the term Minimum Viable Product or MVP.

The abbreviation MVP is not to be confused with the minimum valuable products or most valuable players.

There are two significant differences with the POC approach:

- You admit the solution does not exist anywhere – so it cannot be purchased or copied.

- You commit to the fact that this new approach will be the right direction to take and agree that a perfect fit solution is not blocking you from starting for real.

These two differences highlight the main challenges of digital transformation in the PLM domain. Digital Transformation is a learning process – it takes time for organizations to acquire and master the needed skills. And secondly, it cannot be a big bang, and I have often referred to the 2017 article from McKinsey: Toward an integrated technology operating model. Image below.

We will soon hear more about digital transformation within the PLM domain during the next episode of our SharePLM podcast. We spoke with Yousef Hooshmand, currently working for NIO, a Chinese multinational automobile manufacturer specializing in designing and developing electric vehicles, as their PLM data lead.

You might have discovered Yousef earlier when he published his paper: “From a Monolithic PLM Landscape to a Federated Domain and Data Mesh”. It is highly recommended to read the paper if you are interested in a potential future PLM infrastructure. I wrote about this whitepaper in 2022: A new PLM paradigm discussing the upcoming Systems of Engagement on top of a Systems of Record infrastructure.

To align our terminology with Yousef’s wording, his domains align with the Systems of Engagement definition.

As we discovered and discussed with Yousef, technology is not the blocking issue to start. You must understand the target infrastructure well and where each domain’s activities fit. Yousef mentions that there is enough literature about this topic, and I can refer to the SAAB conference paper: Genesis – an Architectural Pattern for Federated PLM.

For a less academic impression, read my blog post, The week after PLM Roadmap / PDT Europe 2022, where I share the highlights of Erik Herzog’s presentation: Heterogeneous and Federated PLM – is it feasible?

There is much to learn and discover which standards will be relevant, as both Yousef and Erik mention the importance of standards.

The podcast with Yousef (soon to be found HERE) was not so much about organizational change management and people.

However, Yousef mentioned the most crucial success factor for the transformation project he supported at Daimler. It was C-level support, trust and understanding of the approach, knowing it will be many years, an unavoidable journey if you want to remain competitive.

And with the journey aspect comes the importance of the Minimum Viable Product. You are starting a journey with an end goal in mind (top-of-the-mountain), and step by step (from base camp to base camp), people will be better covered in their day-to-day activities thanks to digitization.

A POC would not help you make the journey; perhaps a small POC would help you understand what it takes to cross a barrier.

Conclusion

The concept of POCs is outdated in a fast-changing environment where technology is not necessarily the blocking issue. Developing practices, new architectures and using the best-fit standards is the future. Embrace the Minimum Viable Product approach. Are you?

In the past two weeks, I had several discussions with peers in the PLM domain about their experiences.

I met some of them face-to-face again after a long time at the LiveWorx 2023 event. See my review of the event here: The Weekend after LiveWorx 2023.

And there were several interactions on LinkedIn, leading to a more extended discussion thread (an example of a digital thread?) or a Zoom discussion (a so-called 2D conversation).

To complete the story, I also participated in two PLM podcasts from Share PLM, where we interviewed Johan Mikkelä (currently working at FLSmidth) and, in the second episode, Issam Darraj (presently working at ABB) about their PLM experiences. Less a discussion, more a dialogue, trying to grasp the non-documented aspects of PLM. We are looking for your feedback on these podcasts too.

All these discussions led to a reconfirmation that if you are a PLM practitioner, you need a broad skillset to address the business needs, translate them into people and process activities relevant to the industry and ultimately implement the proper collection of tools.

As a sneak preview of the podcast sessions, we asked both Johan and Issam about the importance of the tools. I will not disclose their answers here; you have to listen.

Let’s look at some of the discussions.

NOTE: Just before pushing the Publish button, Oleg Shilovitsky published this blog article PLM Project Failures and Unstoppable PLM Playbook. I will comment on his points at the end of this post. It is all part of the extensive discussion.

PLM, LinkedIn and complexity

The most popular discussions on LinkedIn are often related to the various types of Bills of Materials (eBOM, mBOM, sBOM), Part numbering schemes (intelligent or not), version and revision management and the famous FFF discussions.

This post: PLM and Configuration Management Best Practices: Working with Revisions, from Andreas Lindenthal, was a recent example that triggered others to react.

I had some offline discussions on this topic last week, and I noticed Frédéric Zeller wrote his post with the title PLM, LinkedIn and complexity, starting his post with (quote):

I am stunned by the average level of posts on the PLM on LinkedIn.

I’m sorry, but in 2023 :

- Part Number management (significant, non-significant) should no longer be a problem.

- Revision management should no longer be a question.

- Configuration management theory should no longer be a question.

- Notions of EBOMs, MBOMs … should no longer be a question.

So why are there still problems on these topics?

You can see from the 40+ comments that this statement created a lot of reactions, including mine. Apparently, these topics touch many people worldwide, and there is no simple, single answer to each of them. And there are so many other topics relevant to PLM.

Talking later with Frédéric for one hour in a Zoom session, we discussed the importance of the right PLM data model.

I also wrote a series about the (traditional) PLM data model: The importance of a (PLM) data model.

Frédéric is more of a PLM architect; we even discussed the wording related to the EBOM and the MBOM. A topic that I feel comfortable discussing after many years of experience seeing the attempts that failed and the dreams people had. And this was only one aspect of PLM.

You also find the discussion related to a PLM certification in the same thread. How would you certify a person as a PLM expert?

There are so many dimensions to PLM. Even more important, the PLM from 10-15 years ago (more of a system discussion) is no longer the PLM of today (a strategy and an infrastructure).

This is a crucial difference. Learning to use a PLM tool and implement it is not the same as building a PLM strategy for your company. It is Tools, Process, People versus Process, People, Tools and Data.

Time for Methodology workshops?

I recently discussed with several peers what we could do to assist people looking for best-practice discussions and lessons learned. There is a need, but how do we organize this when we cannot expect it to be voluntary work?

In the past, I suggested MarketKey, the organizer of the PI DX events, extend its theme workshops. For example, instead of a 45-min Focus group with a short introduction to a theme (e.g., eBOM-mBOM, PLM-ERP interfaces), make these sessions last at least half a day and be independent of the PLM vendors.

Apparently, it did not fit in the PI DX programming; half a day would potentially stretch the duration of the conference, and more and more we see two days of meetings as the maximum. Anything longer becomes difficult to justify, even if the content might have high value for the participants.

I observed a similar situation last year in combination with the PLM Roadmap/PDT Europe conference in Gothenburg. Here we had a half-day workshop before the conference, led by Erik Herzog (SAAB Aeronautics) and Judith Crockford (Eurostep), to discuss concepts related to federated PLM – read more in this post: The week after PLM Roadmap/PDT Europe 2022.

It reminded me of an MDM workshop before the 2015 Event, led by Marc Halpern from Gartner. Unfortunately, the federated PLM discussion remained a pretty Swedish initiative, and the follow-up did not reach a wider audience.

And then there are the Aerospace and Defense PLM action groups, moderated by CIMdata. It is great that they published their findings (look here), although the best lessons are learned during the workshops.

However, I also believe the A&D industry cannot be compared to a mid-market machinery manufacturing company. Therefore, it is helpful for a smaller audience only.

And here, I inserted a paragraph dedicated to Oleg’s recent post, PLM Project Failures and Unstoppable PLM Playbook – starting with a quote:

How to learn to implement PLM? I wrote about it in my earlier article – PLM playbook: how to learn about PLM? While I’m still happy to share my knowledge and experience, I think there is a bigger need in helping manufacturing companies and, especially PLM professionals, with the methodology of how to achieve the right goal when implementing PLM. Which made me think about the Unstoppable PLM playbook ©.

I found a similar passion for helping companies to adopt PLM while talking to Helena Gutierrez. Over many conversations during the last few months, we talked about how to help manufacturing companies with PLM adoption. The unstoppable PLM playbook is still a work in progress, but we want to start talking about it to get your feedback and start the conversation.

It is an excellent confirmation of the fact that there is a need for education and that the education related to PLM on the Internet is not good enough.

As a former teacher in Physics, I do not believe in the Unstoppable PLM Playbook, even if it is a branded name. Many books are written by specific authors, giving their perspectives based on their (academic) knowledge.

Are they useful? I believe only in the context of a classroom discussion where the applicability can be discussed.

Therefore, my question to vendor-neutral global players like CIMdata, Eurostep, Prostep, SharePLM, TCS and others: are you willing to pick up this request? Or are there other entities that I missed? Please leave your thoughts in the comments. I will be happy to assist in organizing these workshops.

There are many more future topics to discuss and document too.

- What about the potential split of a PLM infrastructure between Systems of Record & Systems of Engagement?

- What about the Digital Thread, a more and more accepted theme in discussions, but what is the standard definition?

- Is it traceability as some vendors promote it, or is it the continuity of data, directly usable in various contexts – the DevOps approach?

Who likes to discuss methodology?

When asking myself this question, I see the analogy with standards. So let’s look at the various players in the PLM domain – sorry for the immense generalization.

Strategic consultants: standards are essential, but spare me the details.

Vendors: standards are limiting the unique capabilities of my products.

Implementers: two types – those who understand and use standards as they see the long-term benefits, and those who avoid standards as they introduce complexity.

Companies: they love standards if they can be implemented seamlessly.

Universities: they love to explore standards and help to set the standards even if they are not scalable.

Just replace standards with methodology, and you see the analogy.

We like to discuss the methodology.

As I mentioned in the introduction, I started to work with Share PLM on a series of podcasts where we interview PLM experts in the field that have experience with the people, the process, the tools and the data side. Through these interviews, you will realize PLM is complex and has become even more complicated when you consider PLM a strategy instead of a tool.

We hope these podcasts might be a starting point for further discussion – either through direct interactions or through contributions to the podcast. If you have PLM experts in your network who have the experience and can explain the complexity of PLM from various angles, please let us know – it is time to share.

Conclusion

By switching gears, I noticed that PLM has become complex. Too complex for a single person to master. With an aging traditional PLM workforce (like me), it is time to consolidate the best practices of the past and discuss the best practices for the future. There are no simple answers, as every industry is different. Help us to energize the PLM community – your thoughts/contributions?

After the first episode of “The PLM Doctor is IN“, this time a question from Helena Gutierrez. Helena is one of the founders of SharePLM, a young and dynamic company focusing on providing education services based on your company’s needs, instead of leaving it to function-feature training.

I might come back to this topic later this year in the context of PLM and complementary domains/services.

Now sit back and enjoy.

Note: Due to a technical mistake, Helena’s facial expressions might give you a “CNN-like” impression, as the recording of her doctor visit was too short to cover the full response.

PLM and Startups – is this a good match?

Relevant links discussed in this video

Marc Halpern (Gartner): The PLM maturity table

VirtualDutchman: Digital PLM requires a Model-Based Enterprise

Conclusion

I hope you enjoyed the answer and look forward to your questions and comments. Let me know if you want to be an actor in one of the episodes.

The main rule: A single open question that is puzzling you related to PLM.

For those living in the Northern Hemisphere: This week, we had the shortest day, or if you like the dark, the longest night. This period has always been a moment of reflection. What have we done this year?

Rob Ferrone (Quick Release), the Santa on the left (the leftist), and Jos Voskuil (TacIT), the Santa on the right (the rightist), share in a dialogue their highlights from 2020

Wishing you all a great moment of reflection and a smooth path into a Corona-proof future.

It will be different; let’s make it better.

In the last two weeks, three events led to this post.

First, I read John Stark’s recent book Products2019. A must-read for anyone who wants to understand the full reach of product lifecycle related activities. See my recent post: Products2019, a must-read if you are new to PLM

Afterwards, I talked with John, discussing the lack of knowledge and teaching of PLM, not to be confused with PLM capabilities and features.

Second, I participated in an exciting PI DX USA 2020 event. Some of the sessions and most of the roundtables provided insights to me and, hopefully, many other participants. You can get an impression in the post: The Weekend after PI DX 2020 USA.

A small disappointment in that event was the closing session with six vendors, as I wrote. I know it is evident when you put a group of vendors in the arena, it will be about scoring points instead of finding alignment. Still, having criticism does not mean blaming, and I am always open to having a dialogue. For that reason, I am grateful for their sponsorship and contribution.

Oleg Shilovitsky mentioned cleverly that this statement is a contradiction.

“How can you accuse PLM vendors of having a limited view on PLM and thanking them for their contribution?”

I hope the above explanation says it all, combined with the fact that I grew up in a Dutch culture of not hiding friction while being respectful to others.

We cannot simplify PLM by just a better tool or technology or by 3D for everybody. Many more people and processes related to product lifecycle management are involved in this domain, and a real conference would need to cover them; however, many of them will not sponsor events.

It is well illustrated in John Stark’s book. Many disciplines are involved in the product lifecycle. Therefore, if you only focus on what you can do with your tool, it will lead to an incomplete understanding.

If your tool is a hammer, you hope to see nails everywhere around you to demonstrate your value.

The third event was a LinkedIn post from John Stark – 16 groups needing Product Lifecycle Knowledge, which for me was a logical follow-up on the previous two events. I promised John to go through these 16 groups and provide my thoughts.

Please read his post first as I will not rewrite what has been said by John already.

CEOs and CTOs

John suggested that they should read his book, which might take more than eight hours. CEOs and CTOs, most of the time, do not read this type of book with so many details, so probably mission impossible.

They want to keep up with the significant trends and need to think about future business (model).

New digital and technical capabilities allow companies to move from a linear, coordinated business towards a resilient, connected business. This requires exploring future business models and working methods by experimenting in real life, not in a Proof of Concept. Creating a learning culture and allowing experiments to fail is crucial, as you only learn by failing.

CDO, CIOs and Digital Transformation Executives