Last week, I shared my first impressions from my favorite conference, in the post: The weekend after PLM Roadmap/PDT Europe 2023, where most impressions could be classified as traditional PLM and model-based.

There is nothing wrong with conventional PLM, as there is still much to do within this scope. A model-based approach for MBSE (Model-Based Systems Engineering) and MBD (Model-Based Definition) and efficient supplier collaboration are not topics you solve by implementing a new system.

Ultimately, to have a business-sustainable PLM infrastructure, you need to structure your company internally and connect to the outside world with a focus on standards to avoid a vendor lock-in or a dead end.

In short, this is what I described so far in The weekend after ….part 1.

Now, let’s look at the relatively new topics for this audience.

Enabling the Marketing, Engineering & Manufacturing Digital Thread

Cyril Bouillard, the PLM & CAD Tools Referent at the Mersen Electrical Protection (EP) business unit, shared his experience implementing an end-to-end digital backbone from marketing through engineering and manufacturing.

Cyril showed the benefits of a modern PLM infrastructure that is neither CAD-centric nor focused on engineering only. The main advantage of this approach is a seamless, integrated flow between PLM and PIM (Product Information Management).

I wrote about this topic in 2019: PLM and PIM – the complementary value in a digital enterprise. Combining the concepts of PLM and PIM in an integrated, connected environment could also provide a serious benefit when collaborating with external parties.

Another benefit Cyril demonstrated was the integration of RoHS compliance to the BOM as an integrated environment. In my session, I also addressed integrated RoHS compliance as a stepping stone to efficiency in future compliance needs.
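To make the idea of RoHS compliance as part of the BOM more concrete, here is a minimal sketch of how a compliance status could roll up through a product structure. It is not how Mersen implemented it; the `BomItem` class, the part numbers and the three-state status (compliant, non-compliant, unknown) are all assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BomItem:
    """Hypothetical BOM node; names and fields are illustrative only."""
    part_number: str
    # True/False when a supplier declaration exists; None when unknown.
    rohs_declared: Optional[bool] = None
    children: list["BomItem"] = field(default_factory=list)


def rohs_status(item: BomItem) -> Optional[bool]:
    """Roll up compliance: False wins over unknown, unknown wins over True."""
    if not item.children:
        return item.rohs_declared
    statuses = [rohs_status(child) for child in item.children]
    if any(status is False for status in statuses):
        return False
    if any(status is None for status in statuses):
        return None
    return True


# Hypothetical product structure with one missing declaration.
solder = BomItem("SOLDER-01")  # no declaration received yet
end_cap = BomItem("CAP-10", rohs_declared=True)
body = BomItem("BODY-20", rohs_declared=True)
assembly = BomItem("FUSE-100", children=[body, end_cap, solder])

status_with_gap = rohs_status(assembly)   # unknown: one declaration is missing

solder.rohs_declared = True
status_complete = rohs_status(assembly)   # all declarations are positive

end_cap.rohs_declared = False
status_failed = rohs_status(assembly)     # one non-compliant part fails the assembly
```

The point of keeping the status on the BOM items themselves is that a missing or negative declaration becomes visible at the product level immediately, which is also where the dependency on data quality shows up.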

Read more later or in this post: Material Compliance – as a stepping-stone towards Life Cycle Assessment (LCA)

Cyril concluded with some lessons learned.

Data quality is essential in such an environment, and there are significant time savings implementing the connected Digital Thread.

Meeting the Challenges of Sustainability in Critical Transport Infrastructures

Etienne Pansart, head of digital engineering for construction at SYSTRA, explained how they address digital continuity with PLM throughout the built assets’ lifecycle.

Etienne’s story was related to the complexity of managing a railway infrastructure, which is a linear and vertical distribution at multiple scales; it needs to be predictable and under constant monitoring; it is a typical system-of-systems network, and on top of that, maintenance and operational conditions need to be kept continuously up to date.

Regarding railway assets – a railway needs renewal every two years, bridges are designed to last a hundred years, and train stations should support everyday use.

When complaining about disturbances, you might have a little more respect now (depending on your country). However, on top of these challenges, Etienne also talked about the additional difficulties expected due to climate change: floods, fire, earth movements, and droughts, all of which will influence the availability of the rail infrastructure.

In that context, Etienne talked about the MINERVE project – see image below:

As you can see from the main challenges, there is an effort of digitalization for both the assets and a need to provide digital continuity over the entire asset lifecycle. This is not typically done in an environment with many different partners and suppliers delivering a part of the information.

Etienne explained in more detail how they aim to establish digital twins and MBSE practices to build and maintain a data-driven, model-based environment.

Having digital twins allows much more granular monitoring and making accurate design decisions, mainly related to sustainability, without the need to study the physical world.

His presentation was again a proof point that through digitalization and digital twins, the traditional worlds of Product Lifecycle Management and Asset Information Management become part of the same infrastructure.

And it may be clear that in such a collaboration environment, standards are crucial to connect the various stakeholders’ data sources – Etienne mentioned ISO 16739 (IFC), IFC Rail, and ISO 19650 (BIM) as obvious standards but also ISO 10303-239 (PLCS) to support the digital thread leveraged by OSLC.

I am curious to learn more about the progress of such a challenging project – having worked with the high-speed railway project in the Netherlands in 1995 – no standards at that time (BIM did not exist) – mainly a location reference structure with documents. Nothing digital.

The connected Digital Thread

The theme of the conference was The Digital Thread in a Heterogeneous, Extended Enterprise Reality, and in the next section, I will zoom in on some of the inspiring sessions for the future, where collaboration or information sharing is all based on a connected Digital Thread – a term I will explain in more depth in my next blog post.

Transforming the PLM Landscape: The Gateway to Business Transformation

Yousef Hooshmand‘s presentation was the highlight of this conference for me.

Yousef is the PLM Architect and Lead for the Modernization of the PLM Landscape at NIO, and he has been active before in the IT-landscape transformation at Daimler, on which he published the paper: From a monolithic PLM landscape to a federated domain and data mesh.

If you read my blog or follow Share PLM, you might have seen references to Yousef’s work before; more recently, you can hear the full story on the Share PLM Podcast: Episode 6: Revolutionizing PLM: Insights.

It was the first time I met Yousef in 3D after several virtual meetings, and his passion for the topic made it hard to fit in the assigned 30 minutes.

There is so much to share on this topic, and part of it we already did before the conference in a half-day workshop related to Federated PLM (more on this in the following review).

First, Yousef started with the five steps of the business transformation at NIO, where long-term executive commitment is a must.

His statement: “If you don’t report directly to the board, your project is not important”, caused some discomfort in the audience.

As the image shows, a business transformation should start with a systematic description and analysis of which business values and objectives should be targeted, where they fit in the business and IT landscape, what are the measures and how they can be tracked or assessed and ultimately, what we need as tools and technology.

In his paper From a Monolithic PLM Landscape to a Federated Domain and Data Mesh, Yousef described the targeted federated landscape in the image below.

And now some vendors might say, we have all these domains in our product portfolio (or we have slides for that) – so buy our software, and you are good.

And here Yousef added his essential message, illustrated by the image below.

Start by delivering the best user-centric solutions (in an MVP manner – days/weeks – not months/years). Next, be data-centric in all your choices and ultimately build an environment ready for change. As Yousef mentioned: “Make sure you own the data – people and tools can leave!”

And to conclude reporting about his passionate plea for Federated PLM:

“Stop talking about the Single Source of Truth, start Thinking of the Nearest Source of Truth based on the Single Source of Change”.

Heliple-2 PLM Federation: A Call for Action & Contributions

A great follow-up on Yousef’s session was Erik Herzog‘s presentation about the final findings of the Heliple 2 project, where SAAB Aeronautics, together with Volvo, Eurostep, KTH, IBM and Lynxwork, are investigating a new way of federated PLM, by using an OSLC-based, heterogeneous linked product lifecycle environment.

Heliple stands for HEterogeneous LInked Product Lifecycle Environment

The image below, which I shared several times before, illustrates the mindset of the project.

Last year, during the previous conference in Gothenburg, Erik introduced the concept of federated PLM – read more in my post: The week after PLM Roadmap / PDT Europe 2022, mentioning two open issues to be investigated: Operational feasibility (is it maintainable over time) and Realisation effectivity (is it affordable and maintainable at a reasonable cost)

As you can see from the slide below, the results were positive and encouraged SAAB to continue on this path.

One of the points to mention was that during this project, Lynxwork was used to speed up the development of the OSLC adapter, reducing costs, time and needed skills.

After this successful effort, Erik and several others who joined us at the pre-conference workshop agreed that this initiative is valid to be tested, discussed and exposed outside Sweden.

Therefore, the Federated PLM Interest Group was launched to join people worldwide who want to contribute to this concept with their experiences and tools.

A first webinar from the group is already scheduled for December 12th at 4 PM CET – you can join and register here.

More to come

Given the length of this blog post, I want to stop here.

Topics to share in the next post are related to my contribution at the conference, The Need for a Governance Digital Thread, where I addressed the need for federated PLM capabilities with the upcoming regulations and practices related to sustainability, which require a connected Digital Thread.

I want to combine this post with the findings that Mattias Johansson, CEO of Eurostep, shared in his session: Why a Digital Thread makes a lot of sense, goes beyond manufacturing, and should be standards-based.

There are some interesting findings in these two presentations.

And there was the introduction of AI at the conference, with some experts’ talks and thoughts. Perhaps at this stage, it is too high on Gartner’s hype cycle to go into details. As you must have noticed, it is surely THE topic of discussion and interest right now.

The recent turmoil at OpenAI is an example of that. More to come for sure in the future.

Conclusion

The PLM Roadmap/PDT Europe conference was significant for me because I discovered that companies are working on concepts for a data-driven infrastructure for PLM and are (working on) implementing them. The end of monolithic PLM is visible, and companies need to learn to master data using ontologies, standards and connected digital threads.

I hope you all remained curious after last week’s report from day 1 of the PLM Roadmap / PDT Europe 2022 conference in Gothenburg. The networking dinner after day 1 and the Share PLM after-party allowed us to discuss and compare our businesses. Now the highlights of day 2.

The Power of Curiosity

We started with a keynote speech from Stefaan van Hooydonk, Founder of the Global Curiosity Institute. It was a well-received opener of the day and an interesting theme concerning PLM.

According to Stefaan, in the previous century, curiosity had a negative connotation. Curiosity killing the cat is one of these expressions confirming the mindset. It was all about conformity to the majority, the company, and curiosity was non-conformant.

The same mindset I would say we have with traditional PLM; we all have to work the same way with the same processes.

In the 21st century, modern enterprises stimulate curiosity as we understand that throughout history, curiosity has been the engine of individual, organizational, and societal progress. And in particular, in modern, unpredictable times, curiosity becomes important, for the world, the others around us and ourselves.

As Stefaan describes in his book, the Curiosity Manifesto, organizations and individuals can develop curiosity. Stefaan pushed us to reflect on our personal curiosity behavior.

- Are we really interested in the person or the topic we do not know or do not like?

- Are we avoiding curious steps out of fear? Fear of failing, fear of judgment?

After Stefaan’s curiosity storm, you could see that the audience was inspired to apply it to themselves and their PLM mission(s).

I hope the latter – as here there is a lot to discover.

Digital Transformation – Time to roll up your sleeves

In his presentation, Torbjörn Holm, co-founder of Eurostep, addressed one of the bigger elephants in the modern enterprise: how to deal with data?

Thanks to digitization, companies are gathering and storing data, and there seem to be no limits. However, data centers compete for electricity from the grid with civilians.

Torbjörn also introduced the term “Dark data” – the dirty secret of the ICT sector. We store too much data; some research mentions that only 12 % of the data stored is critical, and the rest clogs up some file servers. Storing unstructured and unused data generates millions of greenhouse gasses yearly.

It is time for a data cleanup day, and inspired by Torbjörn’s story, I have already started to clean up my cloud storage. However, I did not touch my backup hard disks as they do not use energy when switched off.

Further, Torbjörn elaborated that companies need to have end-to-end data policies. Which data is required? And in the case of contracted work or suppliers, data is crucial.

Ultimately companies that want to benefit from a virtual twin of their asset in operation need to have processes in place to acquire the correct data and maintain the valid data. Digital twins do not run on documents; as mentioned in some of my blog posts, they need accurate data.

Torbjörn once more reminded us that the PLCS objective is designed for that.

Heterogeneous and federated PLM – is it feasible?

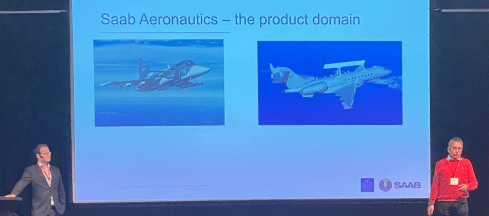

One of the sessions that upfront had most of my attention was the presentation from Erik Herzog, Technical Fellow at Saab Aeronautics and Jad El-Khoury, Researcher at the KTH/Royal Institute of Technology.

Their presentation was closely related to the pre-conference workshop we had organized by Erik and Eurostep. More about this topic in the future.

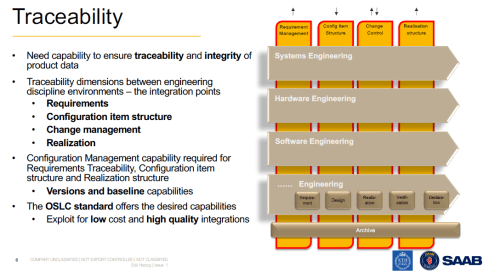

Saab, Eurostep and KTH conducted a research project named Heliple to analyze and test a federated PLM architecture. The concept was strongly driven by engineering. The idea is shown in the images below.

First are the four main modular engineering environments; in the image, we see mechanical, electrical, software and engineering environments. The target is to keep these environments as standard as possible towards the outside world so that later, an environment could be swapped for a better environment. Inside an environment, automation should provide optimal performance for the users.

In my terminology, these environments serve as systems of engagement.

The second dimension of this architecture is the traceability layer(s) – the requirements management layer, the configuration item structures, change control and realization structures.

These traceability structures look much like what we have been doing with traditional PLM, CM and ERP systems. In my terminology, they are the systems of record, not meant to directly serve end-users but to provide traceability, baselines for configuration, compliance and more.

The team chose the OSLC standard to realize these capabilities. One of the main reasons is that OSLC is an existing open standard based on linked data, not replicating data. In this way, a federated environment would be created with designated connections between datasets.
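For readers less familiar with linked data, the difference with replicating data can be sketched in a few lines. The sketch below is not the Heliple implementation and does not use any real OSLC, Polarion or IBM ELM API; the `ToolStore` class, the example URIs and the `affected_by` link name are hypothetical. It only shows the principle: a requirement stores the address (URI) of a defect, while the defect data stays in the tool that owns it.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Resource:
    uri: str
    title: str
    # Link type -> list of target URIs; only addresses, never copied data.
    links: dict[str, list[str]] = field(default_factory=dict)


class ToolStore:
    """Stands in for one tool's repository; each tool owns its own data."""

    def __init__(self, base_uri: str) -> None:
        self.base_uri = base_uri
        self._resources: dict[str, Resource] = {}

    def create(self, local_id: str, title: str) -> Resource:
        resource = Resource(uri=f"{self.base_uri}/{local_id}", title=title)
        self._resources[resource.uri] = resource
        return resource

    def get(self, uri: str) -> Optional[Resource]:
        return self._resources.get(uri)


def add_link(source: Resource, link_type: str, target_uri: str) -> None:
    """Record only the target's URI on the source resource."""
    source.links.setdefault(link_type, []).append(target_uri)


def resolve(uri: str, stores: list[ToolStore]) -> Optional[Resource]:
    """Fetch a linked resource from whichever tool owns that URI."""
    for store in stores:
        if uri.startswith(store.base_uri + "/"):
            return store.get(uri)
    return None


# Two independent tools with hypothetical addresses.
requirements_tool = ToolStore("https://rm.example.com/requirements")
defects_tool = ToolStore("https://cm.example.com/defects")
stores = [requirements_tool, defects_tool]

defect = defects_tool.create("D-1", "Actuator overheats")
existing_req = requirements_tool.create("R-1", "Actuator shall tolerate 85 C")
new_req = requirements_tool.create("R-2", "Actuator shall report temperature")

# Link an existing and a newly created requirement to the same defect.
add_link(existing_req, "affected_by", defect.uri)
add_link(new_req, "affected_by", defect.uri)

# The defect changes in its own tool; nothing needs to be synchronized.
defect.title = "Actuator overheats under sustained load"
linked_titles = [resolve(uri, stores).title for uri in new_req.links["affected_by"]]
```

Because the requirement only holds a URI, a change to the defect in its own tool is visible the next time the link is followed. The price is that the link must stay resolvable over time, which is exactly the maintainability question the project set out to answer.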

Jad El-Khoury demonstrated how to link an existing requirement in Siemens Polarion to a Defect in IBM ELM and then create a new requirement in Polarion and link this requirement to the same defect. I never get excited by technical demos; more important to learn is the effort needed to build such an integration and its stability over time.

The conclusions from the team below give the right indicators where the last two points seem feasible.

Still, we need more benchmarking in other environments to learn.

I remain curious about this approach as I believe it is heading toward what is necessary for the future, the mix of systems of record and systems of engagement connected through a digital web.

The bold part of the last sentence may be used by marketers.

Sustainability and Data-driven PLM – the perfect storm

For those familiar with my blog (virtualdutchman.com) and my contribution to the PLM Global Green Alliance, it will be no surprise that I am currently combining new ways of working for the PLM domain (digitization) with an even more hot topic, sustainability.

More hot is perhaps a cynical remark.

In my presentation, I explained that a model-based, data-driven enterprise will be able to use digital twins during the design phase, the manufacturing process planning and twins of products in operation. Each twin has a different purpose.

The virtual product during the design phase does not have a real physical twin yet, so some might say it is not a twin at this stage. The virtual product/twin allows companies to perform trade-offs, verification and validation relatively fast and inexpensively. The power of analyzing this virtual twin will enable companies to design products not only at the best price/performance range but even as important, with the lowest environmental impact during manufacturing and usage in the field.

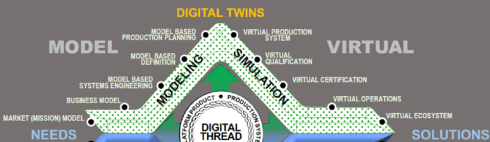

As the Boeing diamond nicely shows, there is a whole virtual world for digital twins. The manufacturing digital twin allows companies to analyze their manufacturing process and virtually analyze the most effective manufacturing process, preferably with the lowest environmental impact.

For digital twins from a product in the field, we can analyze its behavior and optimize performance, hopefully with environmental performance indicators in mind.

For a sustainable future, it is clear that we need to implement concepts of the circular economy as the earth does not have enough resources and renewables to support our current consumption behavior and ways of living.

Note: not for everybody on the globe, a quote from the European Environment Agency below:

Europe consumes more resources than most other regions. An average European citizen uses approximately four times more resources than one in Africa and three times more than one in Asia, but half of that of a citizen of the USA, Canada, or Australia

To reduce consumption, one of the recommendations is to switch the business model from owning products to products as a service. In the case of products as a service, the manufacturer becomes the owner of the full product lifecycle. Therefore, the manufacturer will have business reasons to make the products repairable, upgradeable, recyclable and using energy efficiently, preferably with renewables. If not, the product might become too expensive; fossil energy will be too expensive as carbon taxes will increase, and virgin materials might become too expensive.

It is a business change; however, sustainability will push organizations to change faster than we are used to. For example, we learned this week that the peaking energy prices and Russia’s current war in Ukraine have led to strong investments in renewables.

As a result, many countries no longer want to depend on Russian energy. The peak of carbon emissions for the world is now expected in 2025.

(Although we had a very bad year so far)

Therefore, my presentation concluded that we should use sustainability as an additional driver for our digital transformation in the PLM domain. The planet cannot wait until we slowly change our traditional working methods.

Therefore, the need for digital twins to support sustainability and systems thinking are the perfect storm to speed up our digitization projects.

You can find my presentation as usual, here on SlideShare and a “spoken” version on our PGGA YouTube channel here

Digitalization for the Development and Industrialization of Innovative and Sustainable Solutions

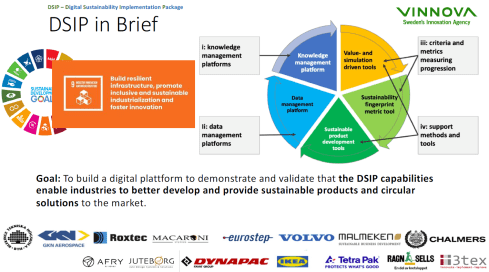

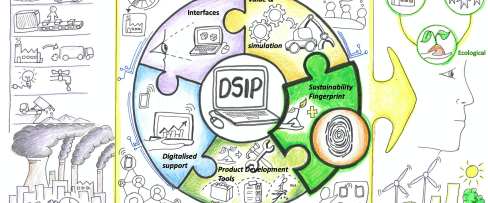

This session, given by Ola Isaksson, Professor, Product Development & Systems Engineering Design Research Group Leader at Chalmers University, was a great continuation on my part of sustainability. Ola went deeper into the aspects of sustainable products and sustainable business models.

The DSIP project (Digital Sustainability Implementation Package – image above) aims to help companies understand all aspects of sustainable development. Ola mentioned that today’s products’ evolution is insufficient to ensure a sustainable outcome. Currently, neither products nor product development practices are adequate, as we do not understand all the aspects.

For example, Ola used the electrification process, taking the Lithium raw material needed for the batteries. If we take the Nissan Leaf car as the point of measure, we would have used all Lithium resources within 50 years.

Therefore, other business models are also required, where the product ownership is transferred to the manufacturer. This is one of the 9Rs (or 10), as the image shows moving from a linear economy towards a circular economy.

Also, as I mentioned in my session, Ola referred to the upcoming regulations forcing manufacturers to change their business model or product design. All these aspects are discussed in the DSIP project, and I look forward to learning the impact this project had on educating and supporting companies in their sustainability journey.

A day 2 summary

We had Bernd Feldvoss, Value Stream Leader PLM Interoperability Standards at Airbus, reporting on the progress of the A&D action group focusing on Collaboration. At this stage, the project team has developed an open-service Collaboration Management System (CMS) web application, providing navigation through the eight-step guidelines and offering the potential to improve OEM-supplier collaboration consistency and efficiency within the A&D community.

We had Henrik Lindblad, Group Leader PLM & Process Support at the European Spallation Source, building and soon operating the world’s most powerful neutron source, enabling scientific breakthroughs in research related to materials, energy, health and the environment. Besides a scientific breakthrough, this project is also an example of starting with building a virtual twin of the facility from the start providing a multidisciplinary collaboration space.

Conclusion

I left the conference with a lot of positive energy. The Curiosity session from Stefaan van Hooydonk energized us all, but as important for our PLM domain, I saw the trend towards more federated PLM environments, more discussions related to sustainability, and people in 3D again. So far, my takeaways this time. Enough to explore till the next event.

Last week I shared the first impression from my favorite conference, the PLM Roadmap / PDT conference organized by CIMdata and Eurostep. You can read some of the highlights here: The weekend after PLM Roadmap / PDT 2019 Day 1.

In this post, I will focus on some of the highlights of day 2.

Chernobyl, The megaproject with the New Arch

Christophe Portenseigne from the Bouygues Construction Group shared with us his personal story about this megaproject, called Novarka. 33 years ago, in 1986, reactor #4 exploded and was confined with an object shelter within six months. This was done with heroic speed, and it was anticipated that the shelter would only last for 20 – 30 years. You can read about this project here.

The Novarka project was about creating a shelter for the confinement of the radioactive dust and the protection of the existing structures against external actions (wind, water, snow…) for the next 100 years!

And, equally necessary, the inside of the arch would be a plant where people could work safely on the process of decommissioning the existing contaminated structures. You can read about the full project here at the Novarka website.

What impressed me the most were Christophe’s personal stories, taking us through some of the massive challenges that needed to be solved with innovative thinking. The project, with its high complexity, vast number of requirements, and many parties and stakeholders involved, closed in June 2019. As Christophe mentioned, this was a project to be proud of as it creates a kind of optimism that no matter how big the challenges are, with human ingenuity and effort, we can solve them.

A Model Factory for the Efficient Development of High Performing Vehicles

Eric Landel, expert leader for Numerical Modeling and Simulation at Renault, gave us an interesting insight into an aspect of digitalization that has become very valuable, the connection between design and simulation to develop products, in this case, the Renault CLIO V, as much as possible in the virtual world. You need excellent simulation models to match future reality (and tests). The target of simulation was to get the highest safety test results in the Europe NCAP rating – 5 stars.

The Renault modeling factory implemented a digital loop (below) to ensure that at the end of the design/simulation, a robust design would exist. Eric mentioned that for the Clio, they did not build a prototype anymore. The first physical tests were done on cars coming from the plant. Despite the investment in simulation software, there was a considerable saving in crash part over cost before TGA (Tooling Go Ahead).

Combined with the savings, the process has been much faster than before. From 10 weeks for a simulation loop towards 4 weeks. The next target is to reduce this time to 1 week. A real example of digitization and a connected model-based approach.

From virtual prototype to hybrid twin

ESI's sponsor session, Evolving from Virtual Prototype Testing to Hybrid Twin: Challenges & Benefits, was an excellent complement to the presentation from Renault.

PLM, MBSE and Supply chain – challenges and opportunities

Nigel Shaw’s presentation was one of my favorite presentations, as Nigel addressed the same topics that I have been discussing in the past years. His focus was on collaboration between the OEM and supplier with the various aspects of requirements management, configuration management, simulation and the different speeds of PLM (focus on mechanical) and ALM (focus on software).

How can such activities work in a digitally-connected environment instead of a document-based approach? Nigel looked into the various aspects of existing standards in their domains and their future. There is a direction to MBE (Model-Based Everything) but still topics to consider. See below:

I agree with Nigel – the future is model-based – the “when” will be the issue for the market leaders.

The ISO AP239 ed3 Project and the Through Life Cycle Interoperability Challenge

Yves Baudier is from AFNET, a reference association in France for industry digitization, digital threads, and digital processes for the Extended Enterprise/Supply chain. It is all about a digital future, and Yves’ presentation was about the interoperability challenge, mentioning three of my favorite points to consider:

- Data becoming more and more a strategic asset – as digitalization of Industry and Services, new services enabled by data analytics

- All engineering domains (from concept design to system end of life) need to develop a data-centric approach (not only model-centric) – An opportunity for PLM to cover the full life-cycle

- Effectivity and efficiency of data interoperability through the life-cycle is now an essential industry requirement – e.g., “virtual product” and “digital twin” concepts

All the points are crucial for the domain of PLM.

In that context, Yves discussed the evolution of the ISO 10303-239 standard, also known as PLCS. The target with ISO AP239 ed3 is to become the standard for Aerospace and Defense for the full product lifecycle and, through this convergence, to be able to push IT/PLM vendors to comply – crucial for a digital enterprise.

Time for the construction / civil industry

Christophe Castaing, director of digital engineering at Egis, shared with us their solution framework to manage large infrastructure projects by focusing on both the Asset Information (BIM-based) and the collaborative processes between the stakeholders, all based on standards. It was a broad and in-depth presentation – too much to share in a blog post. To conclude (see also Christophe’s slide below): in the construction industry, there is more and more the desire to have a digital twin of a given asset (building/construction), creating the need for standard information models.

Pierre Benning, IT director from Bouygues Public Works, gave us an update on the MINnD project. MINnD stands for Modeling INteroperable INformation for sustainable INfrastructures in xD, a French research project dedicated to the deployment of BIM and digital engineering in the infrastructure sector. Whereas BIM started in the construction industry, there is a need for a similar digital modeling approach for civil infrastructure. In 2014, Christophe Castaing already reported on the activities of the MINnD project – see The weekend after PDT 2014. Now Pierre updated us on the activities for MINnD Season 2 – see below:

As you can see, again, the interest in digital twins for operations and maintenance. Perhaps here, the civil infrastructure industry will be faster than traditional industries because of its enormous value. BIM and GIS reconciliation is a specific topic as many civil infrastructures have a GIS aspect – road/train infrastructure, for example. The third bullet is evident to me. With digitization and the integration of contractors and suppliers, BIM and PLM will become conceptually more and more alike. The big difference at this moment: BIM has one standard framework, whereas PLM standards are still not in a consolidation stage.

Digital Transformation for PLM is not an evolution

If you have been following my blog in the past two years, you may have noticed that I am exploring ways to solve the transition from traditional, coordinated PLM processes towards future, connected PLM. In this session, I shared with the audience that digital transformation is disruptive for PLM and requires thinking in two modes.

Thinking in two modes is not what people like; however, organizations can run in two modes. Also, I shared some examples of digital transformation stories that illustrate there was no transformation: either failure, or smoke and mirrors. You can download my presentation via SlideShare here.

Fireplace discussion: Bringing all the Trends Together, What’s next

We closed the day and the conference with a fireplace chat moderated by Dr. Ken Versprille from CIMdata, where we discussed, among other things, the increasing complexity of products and products as a service. We have seen during the sessions from BAE Systems Maritime and Bouygues Construction Group that we can do complex projects. However, when there is competition and time-to-deliver pressure, we do not manage the project so much as try to contain the potential risk. It was an interactive fireplace session, giving us enough thoughts for next year.

Conclusion

Nothing to add to Håkan Kårdén’s closing tweet – I hope to see you next year.

A week ago I attended the joint CIMdata Roadmap and PDT Europe conference in Stuttgart, as you can recall from last week’s post: The weekend after CIMdata Roadmap / PDT Europe 2018. As there was so much information to share, I had to split the report into two posts. This time the focus is on PDT Europe. In general, the PDT conferences have always focused on sharing experiences and developments related to standards, a topic you will not see at PLM vendor conferences. Therefore, this is your chance to learn and take part if you believe in standards.

This year’s theme: Collaboration in the Engineering and Manufacturing Supply Chain – the Extended Digital Thread and Smart Manufacturing. Industry 4.0 plays a significant role here.

Model-based X: What is it and what is the status?

I have seen Peter Bilello presenting this topic several times now, and every time there is a little more progress. The fact that there is still an acronym war illustrates that the various aspects of a model-based approach are not yet defined. Some critics will state that this is because we do not need model-based and it is only a vendor marketing trick again. Two comments here:

- If you want to implement an end-to-end model-based approach including your customers and supply chain, you cannot avoid standards. More will become clear when you read the rest of this post. Vendors will not promote standards as it reduces their ability to deliver something unique. So standards must come from the market, not from the marketing.

- In 2007 Carl Bass, at that time CEO of Autodesk, made his statement: “There are only three customers in the world that have a PLM problem; Dassault, PTC, and UGS. There are no other companies that say I have a PLM problem”. Have a look here. PLM is understood by now, even by Autodesk. The statement illustrates that in the beginning the PLM target was not clear and people thought PLM was a system instead of a strategic approach. Model-based ways of working have to go through the same learning path, hopefully faster.

Peter’s presentation was a good walk-through pointing out what exists, where we focus and that there is still work to be done. Not by vendors but by companies. Therefore I wholeheartedly agree with Peter’s closing remarks – no time to sit back and watch if you want to benefit from model-based approaches.

Smart Manufacturing

Kenny Swope, known from his presentations related to Boeing, now spoke to us as the Chair of the ISO/TC 184/SC 4 workgroup related to Industrial Data. To say it in decoded mode: Kenny is heading Sub-committee 4 with a focus on Industrial Data. SC4 is part of a broader theme, Automation Systems and Integration, identified by TC 184, all as part of the ISO framework. The scope:

Standardization of the content, meaning, structure, representation and quality management of the information required to define an engineered product and its characteristics at any required level of detail at any part of its lifecycle from conception through disposal, together with the interfaces required to deliver and collect the information necessary to support any business or technical process or service related to that engineered product during its lifecycle.

Perhaps boring to read if you think about all the demos you have seen at trade shows related to Smart Manufacturing. If you want these demos to become true in a vendor-independent environment, you will need to agree on a common framework of definitions to ensure future continuity beyond the demo. And here lies the business excitement: the real competitive advantages companies can have by implementing Smart Manufacturing in a scalable, future-oriented way.

One of the often-heard statements is that standards are too slow or incomplete. Incompleteness is not a problem: when there is a need, the standard will follow. Compare it with language, where we will always invent new words for new concepts.

Being slow might have been the case in the past. Kenny showed the relatively fast convergence from country-specific Smart Manufacturing standards into a joint ISO/IEC framework – all within three years. ISO and IEC have already been teaming up to build Smart Manufacturing reference models.

This is already a considerable effort, as the local reference models need to be studied and mapped to a common architecture. The target is to have a first Technical Specification for a joint standard by the end of 2020 – quite fast!

Meinolf Gröpper from the German VDMA presented what they are doing to support Smart Manufacturing / Industrie 4.0. The VDMA is a well-known engineering federation with 3200 member companies, 85 % of which are Small and Medium Enterprises – the power of the German economy.

The VDMA provides networking capabilities and readiness assessments for its members, acting as the enabler for companies to transform. As Meinolf stated, Industrie 4.0 is not about technology; it is about cross-border services and international cooperation. It is a strategy that every company has to develop and, if possible, implement at its own pace. Standards will accelerate the implementation of Industrie 4.0.

The Smart Manufacturing session was concluded by Gunilla Sivard, Professor at KTH in Stockholm and Hampus Wranér, Consultant at Eurostep. They presented the work done on the DIgln project, targeting an infrastructure for Smart Manufacturing.

The presentation showed the implementation of the testbed using twittering bus communication and the ISO 10303-239 PLCS information standard as the persistent layer. The results were promising to further build capabilities on top of the infrastructure below:

The conclusion from the Smart Manufacturing session was that emerging and available standards can accelerate the deployment.

Enabling digital continuity in the Factory of the Future

Alcibiades Gonzalez-Noval from Airbus shared challenges and the strategy for Airbus’s factory of the future based on digital continuity from the virtual world towards the physical world, connecting with PLM, ERP, and MOM. Concepts many companies are currently working on with various maturity stages.

I agree with his lessons learned. We cannot think in silos anymore in a digital future – everything is connected. And please forget the PoC: to gain time, start piloting and fail or succeed fast. Companies have lost years because of just doing PoCs and not going into action. The last point, network segregation, is for sure an issue, relevant for plant operations. I experienced this also in the past when promoting PLM concepts for (nuclear) owners/operators of plants. Network security is for sure an issue to resolve.

Cross-Discipline Lifecycle Collaboration Forum

Setting up the digital thread across engineering and the value chain.

Peter Gerber, Chairman of the CDLC Forum and Data Exchange & Integration Leader at Schaeffler, and Pierre Bodin, Senior Manager at Mews Partners, presented their findings related to the challenge of managing complex products (mechanical, electrical, software, using systems engineering methodology) so that they work properly at an affordable cost, in a real-time mode, with multidisciplinary coordination across the whole value chain. Something you might expect could be done when reviewing all PLM vendors’ marketing materials; something you might expect to be hard to do when remembering Martin Eigner’s statement that 95 % of the companies have not solved mechatronics collaboration yet. (See: The weekend after CIMdata PLM Roadmap and PDT Europe)

A demonstrator was defined, and various vendors participated in building a demonstrator based on their Out-Of-The-Box capabilities. The result showed that for all participants there were still gaps to resolve for full collaboration. A new version of the demonstrator is now planned for the middle of next year – curious to learn the results at that time. Multi-disciplinary collaboration is a (conceptual) pillar for future digital business – it needs to be possible.

A Digital Thread based on the PLCS standard.

Nigel Shaw, Eurostep’s managing director in the UK, took us through the evolution of PLCS (Product Life Cycle Support), an extension of the ISO 10303 STEP standard (STEP: Standard for the Exchange of Product model data). Nigel mentioned how over all these years, millions (and a lot of brain power) have been invested in PLCS to bring it to where it is now.

PLCS has been extremely useful as an interface standard for contracting, providing product data in a neutral way. As an example, last year the Swedish Defense organization (FMV) and France’s DGA made PLCS DEXs part of the contractual conditions. It would be too costly to have all product data for all defense systems in proprietary vendor formats, and this over the whole product lifecycle.

Those following the standards in the process industry will rely on ISO 15926 / CFIHOS, as this standard’s dictionary and data model are more geared to process data, and in particular to the exchange of data from the various contractors with the owner/operator.

Coming back to PLCS and the Digital Twin – it is all about digital continuity of information. Otherwise, if we have to recreate information in every lifecycle stage of a product (design / manufacturing / operations), it will be too costly and not digitally connected. This illustrates the growing need for standards. I had nothing to add to Nigel’s conclusions:

It is interesting to note that product management has moved a long way over the last 10-20 years. However, as we include more and more into PLM, there are always new concepts to be solved. The cases we discuss today in our PLM communities were most of the time visions 10 years ago. Nowadays we want to include Model-Based Systems Engineering, 3D Modeling and simulation, electronics and software, and even aftermarket and product support in true PLM. This was not the case 20 years ago. The people involved in the development of PLCS were for sure visionaries, as product data connectivity along the whole lifecycle is needed and enabled by the standard.

Investing in Industry 4.0?

Hard Realities of the Grand Vision.

Marc Halpern from Gartner is one of the regular speakers at the PDT conference. Unfortunately, he could not be with us that day. However, through a labor-intensive connection (mobile phone close to the speaker and Nigel Shaw trying to stay in sync with the presented slides) we could hear Marc speak about what we wanted to achieve too – digital continuity.

Marc restated the massive potential of Industrie 4.0 when it comes to scalability, agility, flexibility, and efficiency.

Although technologies are evolving rapidly, it is the existing legacy that inhibits fast adoption. A topic that was also central in my presentation. It is not just a change in technology, there is much more connected.

Marc recommends a changing role for IT, where they should focus more on business priorities and business leadership strategies. This as opposed to the classical role of the IT organization where IT needed to support the business, now they will be part of leading the business too.

To orchestrate such an IT evolution, Marc recommends a “systems of systems” planning and execution across IT and Business. One of my recent blog posts: Moving to a model-based enterprise: The business (information) model can be seen in that context.

How to deal with the incompatible future?

I was happy to conclude the sessions with the topic that concerns me the most at this time. Companies in their current business are already struggling to get aligned and coordinated between disciplines and external stakeholders. The gap towards being connected is vast, as it requires a master data management approach, an enterprise data model and model-based ways of working. Read my posts from the past half year starting here, and you get the picture.

Note: This image is based on Marc Halpern’s (Gartner) Technology/Maturity diagram from PDT 2015

I concluded by explaining that companies need to learn to work in two modes. One mode will be the traditional way of working, which I call the coordinated approach; next to it, there will be a growing focus on operating in a connected mode. You can see my full presentation here on SlideShare: How to deal with the incompatible future.

Conclusion

The conference was closed with a panel discussion where we shared our concerns related to the challenges companies face in changing their traditional ways of working while entering a digital era. The positive points are there – baby steps – PLM is becoming understood, and the significance of standards is becoming clearer. The need: a long-term vision.

This concludes my review of an excellent conference – again I learned a lot, and I hope to see you next year too. Thanks again to CIMdata and Eurostep for organizing this event.

Last week I attended the long-awaited joint conference from CIMdata and Eurostep in Stuttgart. As I mentioned in earlier blog posts, I like this conference because it is a relatively small conference with a focused audience related to a chosen theme.

Instead of parallel sessions, all attendees follow the same tracks, and after two days there is a common understanding for all. This time there were about 70 people discussing the themes: Digitalizing Reality – PLM’s role in enabling the digital revolution (CIMdata) and Collaboration in the Engineering and Manufacturing Supply Chain – the Extended Digital Thread and Smart Manufacturing (Eurostep).

As you can see, it was all about Digital. Here are my comments:

The State of the PLM Industry:

The Digital Revolution

Peter Bilello kicked off by providing an overview of the PLM industry. The PLM market showed an overall growth of 7.3 % toward 43.6 Billion dollars. Zooming in on the details, cPDM grew by 2.9 %. The significant growth came from the PLM tools (7.7 %). The Digital Manufacturing sector grew by 6.2 %. These numbers show, in my opinion, that managing collaboration in particular remains the challenging part for PLM. It is easier to buy tools than to invest in cPDM.
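
As a quick sanity check on these numbers, here is a minimal sketch that derives the implied market size of the year before. It assumes that 43.6 billion dollars is the market size after the 7.3 % growth, which is my reading of the statement and not something Peter stated explicitly. The function name `previous_market_size` is illustrative only.

```python
def previous_market_size(current_size: float, growth_pct: float) -> float:
    """Back out last year's size from this year's size and the growth rate."""
    if growth_pct <= -100:
        raise ValueError("growth_pct must be greater than -100")
    return current_size / (1 + growth_pct / 100)


# Assumption: 43.6 (billion dollars) is the size AFTER 7.3 % growth.
prior = previous_market_size(43.6, 7.3)
print(f"Implied prior-year PLM market: {prior:.1f} billion dollars")
print(f"Implied absolute growth: {43.6 - prior:.1f} billion dollars")
```

Under that assumption, the same function could be applied per segment if the segment sizes were known. They were not part of what I noted down, so I leave them out.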

Peter mentioned that at the board level you cannot sell PLM, as this acronym is too much framed as an engineering tool. Also, people at the board have been trained to interpret transactional data and build strategies on that. They might embrace Digital Transformation. However, the product innovation domain is hard to define in numbers. What is the value of collaboration? How do you measure and value innovation coming from R&D? Recently we have seen more simplified approaches to getting more value from PLM. I agree with Peter: we need to avoid the PLM framing and find better consumable value statements.

Nothing to add to Peter’s closing remarks:

An Alternative View of the Systems Engineering “V”

For me, the most interesting presentation of Day 1 was Don Farr’s presentation. Don and his Boeing team worked on depicting the Systems Engineering process for a Model-Based environment. The original “V” looks like a linear process and does not reflect the multi-dimensional iterations at various stages, the concept of a virtual twin and the various business domains that need to be supported.

The result was the diamond symbol above. Don and his team have created a consistent story related to the depicted diamond, which goes too far for this blog post. Currently the diamond concept is copyrighted by Boeing, but I expect we will see more of this in the future, as the classical systems engineering “V” was not designed for our model-based view of the virtual and physical products to design AND maintain.

Sponsor vignette sessions

The vignette sponsors of the conference, Aras, ESI Group, Granta Design, HCL, Oracle and TCS, all got a ten-minute slot to introduce themselves and the topics they believed were relevant for the audience. These slots served as a teaser to come to their booth during a break. Interesting for me was Granta Design, who are bringing a complementary data service related to materials along the product lifecycle, providing digital continuity for material information. See below.

The PLM – CLM Axis vital for Digitalization of Product Process

Mikko Jokela, Head of Engineering Applications CoE, from ABB, completed the morning sessions and left me with a lot of questions. Mikko’s mission is to provide the ABB companies with an information infrastructure that is providing end-to-end digital services for the future, based on apps and platform thinking.

Apparently, the digital continuity will be provided by all kinds of BOM structures, as you can see below. In my post, Coordinated or Connected, related to a model-based enterprise, I call this a coordinated approach, which is a current best practice, not an approach for the future. There we want a model-based enterprise instead of a BOM-centric approach to ensure a digital thread. See also Don Farr’s diamond. When I asked Mikko which data standard(s) ABB will use to implement their enterprise data model, it became clear there was no concept in place yet. Perhaps an excellent opportunity to look at PLCS for the product-related schema.

A general comment: Many companies are thinking about building their own platform. Not all will build their platform from scratch. Those starting from scratch should have a look at existing standards for their industry. And to manage the quality of data, you will need to implement Master Data Management, where for the product part the PLM system can play a significant role. See Master Data Management and PLM.

Systems of Systems Approach to Product Design

Professor Martin Eigner’s keynote presentation was about how new products and markets need a Systems of Systems approach combined with Model-Based Systems Engineering (MBSE) and Product Line Engineering (PLE), where the PLM system can be the backbone to support the MBSE artifacts in context. All these concepts require new ways of working, as stated below:

And this is a challenge. A quick survey in the room (coherent with my observations from the field) showed that most companies (95 %) haven’t even managed to work in an integrated way for mechatronics products. You can imagine the challenge of also incorporating Software, Simulation, and other business disciplines. Martin’s presentations are always an excellent conceptual framework for those who want to dive deeper, and a starting point for discussion and learning.

Additive Manufacturing (Enabled Supply) at Moog

Moog Inc, a manufacturer of precision motion controls for various industries, has made a strategic move towards Additive Manufacturing. Peter Kerl, Moog’s Engineering Systems Manager, gave a good introduction to what is meant by Additive Manufacturing and how Moog is introducing it in their organization to create more value for their customer base and attract new customers in a less commoditized domain. As you can imagine, delivering products through Additive Manufacturing requires new skills (Design / Materials), new processes and a new organizational structure. And of course a new PLM infrastructure.

Jim van Oss, Moog’s PLM Architect and Strategist, explained how they have been involved in a technology solution for digital-enabled parts leveraging blockchain technology. Have a look at their VeriPart trademark. It was interesting to learn from Peter and Jim that they are actively working in a space that, according to Gartner’s hype curve, is in the early transform phase. Peter and Jim’s presentations were very educational for the audience.

For me, it was also interesting to learn from Jim that at Moog they were really practicing the two modes for PLM in their company. Two PLM implementations: one with the legacy data, which is the wrong data for the future, and one with the new data model for the future. Both implementations are built on the same PLM vendor’s release. A great illustration showing that the past and the future data for PLM are not compatible.

Value Creation through Synergies between PLM & Digital Transformation

Daniel Dubreuil, Safran’s CDO for Products and Services, gave an entertaining lecture on Safran’s PLM journey and the introduction of new digital capabilities, moving from an inward-looking PLM system towards a digital infrastructure that supports internal collaboration (model-based systems engineering / multiple BOMs) and external collaboration with customers and suppliers, introducing new business capabilities. Daniel gave a very precise walk-through with examples from the real world. The concluding slide, KEY SUCCESS FACTORS, was one we have seen many times at PLM events.

Apparently, the key success factors are known. However, most of the time, one or more of these points cannot be addressed for various reasons. Then the question is: how do you mitigate this risk, as there will be issues ahead?

Bringing all the digital trends together. What’s next?

The day ended with a virtual Fire Place session between Peter Bilello and Martin Eigner. The audience did not see a fireplace; however, my augmented Twitter feed did it for me:

Some interesting observations from this dialogue:

Peter: “Having studied physics is a good base for understanding PLM as you have to model things you cannot see” – As I studied physics I can agree.

Martin: “Germany is the center of knowledge for Mechanical, the US for Electronics and now China becoming the center for Electronics and Software.” An interesting observation illustrating where the innovation will come from.

Both Peter and Martin spent serious time on the importance of multidisciplinary education. We are teaching people in silos, and faculties work in silos. We all believe these silos must be broken down. It is hard to learn and experiment with skills for the future. Where to start and lead?

Conclusion:

The PLM Roadmap had some exciting presentations which, combined with CIMdata’s PLM update, offered an excellent opportunity to learn and discuss reality, in particular for new methodologies and technologies beyond the hype. I want to thank CIMdata for the superb organization and for allowing me to take part. Next week I will follow up with a review of the PDT Europe conference part (Day 2).

In my earlier post The weekend after PDT Europe I wrote about the first day of this interesting conference. We ended that day with some food for thought related to a bimodal PLM approach. Now I will take you through the highlights of day 2.

Interoperability and openness in the air (aerospace)

I believe Airbus and Boeing are among the most challenged companies when it comes to PLM. They have to cope with their stakeholders and the massive number of suppliers involved, constrained by a strong focus on safety and quality. And as airplanes have a long lifetime, the need to keep data accessible and available for over 75 years is a massive challenge. The morning was opened by presentations from Anders Romare (Airbus) and Brian Chiesi (Boeing), in which they confirmed they could switch the presenter’s role between them, as the situations at Airbus and Boeing are so alike.

Anders Romare started with a presentation called Digital Transformation through an e2e PLM backbone, where he explained the concept of extracting data from the various silo systems in the company (CRM, PLM, MES, ERP) to make data available across the enterprise. In particular, in their business transformation towards digital capabilities, Airbus needed and created a new architecture on top of the existing business systems, focusing on data (“Data is the new oil”).

To create a data-driven environment, Airbus extracts and normalizes data from their business systems and provides a data lake with integrated data, on top of which various apps can run to offer digital services to existing and new stakeholders on any type of device. The data-driven environment allows people to have information in context and available in almost real time to make the right decisions. Currently, these apps run on top of this data layer.
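
To make the extract-and-normalize idea a bit more concrete, here is a minimal Python sketch. It is not how Airbus built their data layer; the system names, field names and the `PartRecord` schema are all hypothetical and only illustrate mapping records from different silo systems onto one common view.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PartRecord:
    """Common, normalized view of a part, independent of the source system."""
    part_number: str
    description: str
    source: str


# Hypothetical field names per source system; real systems will differ.
FIELD_MAPS = {
    "PLM": {"part_number": "item_id", "description": "title"},
    "ERP": {"part_number": "material_no", "description": "material_text"},
}


def normalize(source: str, raw: dict) -> PartRecord:
    """Map one raw record from a source system onto the common schema."""
    fields = FIELD_MAPS[source]
    return PartRecord(
        part_number=str(raw[fields["part_number"]]).strip().upper(),
        description=str(raw[fields["description"]]).strip(),
        source=source,
    )


def build_data_layer(extracts: dict) -> dict:
    """Group normalized records by part number so apps see one part in context."""
    layer: dict = {}
    for source, rows in extracts.items():
        for raw in rows:
            record = normalize(source, raw)
            layer.setdefault(record.part_number, []).append(record)
    return layer


# Two systems describing the same part with different field names and formatting.
extracts = {
    "PLM": [{"item_id": " ab-100 ", "title": "Bracket"}],
    "ERP": [{"material_no": "AB-100", "material_text": "Bracket, steel"}],
}
layer = build_data_layer(extracts)
```

After normalization, both source records end up under the same key, which is what allows an app on top of the data layer to show PLM and ERP information about one part side by side.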

Now imagine that information captured by these apps could be stored or directed back into the original architecture supporting the standard processes. This would be a real example of the bimodal approach as discussed on day 1. As a closing remark, Anders also stated that three years ago digital transformation was not really visible at Airbus; now it is a must.

Next, Brian Chiesi from Boeing talked about Data Standards: A strategic lever for Boeing Commercial Airplanes. Brian described the complex landscape at Boeing: 2,500 applications / 5,000 servers / 900 changes annually (3 per day) impacting 40,000 users. There is a lot of data replication because many systems need their own proprietary format. Brian estimated that where 12 copies exist now, 2 or 3 would do in an ideal world. Brian presented a future concept similar to that of Airbus, where the traditional business systems (Systems Engineering, PLM, MRP, ERP, MES) are all connected through a service backbone. This new architecture is needed to address modern technology capabilities (social / mobile / analytics / cloud / IoT / automation / ...).

An interesting part of this architecture is that Boeing aims to exchange data with the outside world (customers / regulatory / supply chain / analytics / manufacturing) through industry-standard interfaces to have an optimal flow of information. Standardization would lead to a reduction of customized applications, minimize the costs of integration and migration, break the obsolescence cycle and enable future technologies. Brian knows that when companies pull for standards, vendors will deliver. Boeing will be pushing for standards in their contracts and will actively work together with five major Aerospace & Defense companies to define required PLM capabilities and have a unified voice towards PLM solution providers.

My conclusion on these two aerospace giants is that they express the need to adapt and move to modern digital businesses, away from the linear approach of the classic airplane programs. Incremental innovation in various domains is the future. The existing systems need to be there to support their current fleet for many, many years to come. The new data-driven layer needs to be connected through normalization and standardization of data. For the future, a focus on standards is a must.

Simon Floyd from Microsoft presented The Impact of Digital Transformation in the Manufacturing Enterprise, where he talked us through Digital Transformation, IoT, and analytics in the product lifecycle, clarified by examples from the Rolls-Royce turbine engine. A good and compelling story which could be used by any vendor explaining digital transformation and its relation to IoT. Next, Simon walked through the Microsoft portfolio and solution components to support a modern digital enterprise based on various platform services. At the end, Simon articulated how, for example, ShareAspace, based on Microsoft infrastructure and technology, can be an interface between various PLM environments through the product lifecycle.

Simon’s presentation was followed by a panel discussion with the theme: When are history and legacy an asset and a barrier to entry, and when do they become a burden and an invitation to future competitors?

Mark Halpern (Gartner) again mentioned bimodal thinking here. Aras is bimodal. The classical PLM vendors running in mode 1 will not change radically, and the new vendors, the mode 2 types, will need time to create credibility. Other companies mentioned here, such as PropelPLM (PLM on the Salesforce platform) or OnShape, will battle over the next five years to become significant and might disrupt.

Simon Floyd (Microsoft) mentioned that, in order to keep innovation within Microsoft, they allow for startups within the company that are, in the beginning, not constrained by Microsoft. This keeps disruption inside your company instead of being disrupted from outside. Another point mentioned was that Tesla did not want to wait until COTS software would be available for their product development and support platform. Therefore they develop parts themselves. Are we going back to the early days of IT?

An interesting trend, I believe, provided the building blocks for such a solution architecture are based on open (standardized?) services.

Data Quality

After lunch, the conference was split into three streams, and I participated in the “Creating and managing information quality” stream. As I discussed in my presentation on day 1, there is a need for accurate data, starting as soon as possible, as the future of our businesses will run on data, as we learned from all speakers (this is not a secret, yet many companies still do not act).

In the context of data quality, Jean Brange from Boost presented the ISO 8000 framework for data and information quality management. This standard is now under development and will help companies to address their digital needs. The challenge of data quality is that we need to store data with the right syntax and semantics to be usable, and in addition it needs to be pragmatic: what are we going to store that will have value? And then there is the challenge of evaluating the content. Empty fields can be discovered; however, how do you assess the quality of a field that has a value? ISO 8000 is a framework, like ISO 9000 (product quality), that allows companies to work in a methodical way towards acceptable and needed data quality.
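
The difference between finding empty fields and judging fields that do have a value can be illustrated with a small Python sketch. The rules below (part number pattern, allowed units, positive mass) are hypothetical examples of my own and are not taken from ISO 8000, which describes a framework rather than concrete checks like these.

```python
import re

# Hypothetical rules for illustration; ISO 8000 does not prescribe these.
PART_NUMBER_PATTERN = re.compile(r"^[A-Z]{2}-\d{3}$")
ALLOWED_UNITS = {"kg", "g"}


def check_record(record: dict) -> list:
    """Return a list of data quality findings for one record."""
    findings = []

    # Completeness: empty fields are the easy case to detect.
    for field in ("part_number", "mass", "unit"):
        if record.get(field) in (None, ""):
            findings.append(f"{field}: missing")

    # Syntax: a filled field must have the right form.
    part_number = record.get("part_number") or ""
    if part_number and not PART_NUMBER_PATTERN.match(part_number):
        findings.append("part_number: wrong format")

    # Semantics: a filled field must be meaningful in its context.
    unit = record.get("unit")
    if unit and unit not in ALLOWED_UNITS:
        findings.append("unit: unknown unit")
    mass = record.get("mass")
    if isinstance(mass, (int, float)) and mass <= 0:
        findings.append("mass: must be positive")

    return findings


good = check_record({"part_number": "AB-100", "mass": 1.5, "unit": "kg"})
bad = check_record({"part_number": "ab100", "mass": -1, "unit": ""})
```

Even this toy version shows the hard part: the rules for a filled field have to come from somewhere, and deciding which rules are worth maintaining is the pragmatic question.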

Magnus Färneland from Eurostep addressed the topic of data quality and the foundation for automation, based on the latest developments by Eurostep on top of their already rich PLCS data model. The PLCS data model is impressive, as it already supports all facets of the product lifecycle, from design through development and operations. By introducing soft typing, Eurostep allows a more detailed tuning of the data model to ensure configuration management. At which stage of the lifecycle is certain information required (and does it become mandatory)? Consistent data quality is enforced through business process logic.
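
The question of which information becomes mandatory at which lifecycle stage can be sketched in a few lines of Python. This does not reproduce PLCS soft typing or Eurostep's software; the stage names and attributes are hypothetical and only illustrate a stage-dependent rule enforced by process logic.

```python
# Hypothetical lifecycle stages and attribute names, for illustration only;
# they are not taken from PLCS or from Eurostep's software.
STAGES = ["design", "development", "operations"]

# Attributes that become mandatory when a stage is reached.
REQUIRED_FROM_STAGE = {
    "design": {"part_number", "description"},
    "development": {"material"},
    "operations": {"serial_number"},
}


def required_attributes(stage: str) -> set:
    """All attributes mandatory at this stage, including those of earlier stages."""
    required = set()
    for reached in STAGES[: STAGES.index(stage) + 1]:
        required |= REQUIRED_FROM_STAGE[reached]
    return required


def missing_attributes(item: dict, stage: str) -> set:
    """Attributes that must be filled before the item may enter the given stage."""
    return {name for name in required_attributes(stage) if item.get(name) in (None, "")}


item = {"part_number": "AB-100", "description": "Bracket", "material": ""}
```

A workflow step could call `missing_attributes(item, "development")` and refuse the promotion as long as the result is not empty, which is one simple way to let business process logic enforce data quality.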