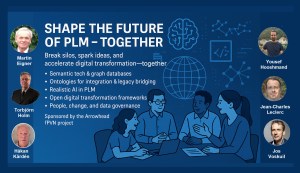

Together with Håkan Kårdén, I had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please click on the image for more details.

The meeting had two primary purposes.

- Firstly, we aimed to create an environment where these concepts could be discussed and presented to a broader audience, comprising academics, industrial professionals, and software developers. The group’s feedback could serve as a benchmark for them.

- The second goal was to bring people together and create a networking opportunity, either during the PLM Roadmap/PDT Europe conference, the day after, or through meetings established after this workshop.

Personally, it was a great pleasure to meet some people in person whose LinkedIn articles I had admired and read.

The meeting was sponsored by the Arrowhead fPVN project, a project I discussed in a previous blog post related to the PLM Roadmap/PDT Europe 2024 conference last year. Together with the speakers, we have begun working on a more in-depth paper that describes the similarities and the lessons learned that are relevant. This activity will take some time.

Therefore, this post only includes the abstracts from the speakers and links to their presentations. It concludes with a few observations from some attendees.

Reasoning Machines: Semantic Integration in Cyber-Physical Environments

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network.

The presentation outlines the phases of the product and production lifecycle, including requirements, specification, design, build-up, handover, and operations. It raises a question about unifying these phases and their associated technologies and standards, emphasizing that the most extended phase, which involves operation, maintenance, failure, and evolution until retirement, should be the primary focus.

It also discusses seamless integration, outlining a partial list of standards and technologies categorized into three sections: “Modelling & Representation Standards,” “Communication & Integration Protocols,” and “Architectural & Security Standards.” Each section contains a table listing various technology standards, their purposes, and references. Additionally, the presentation includes a “Conceptual Layer Mapping” table that details the different layers (Knowledge, Service, Communication, Security, and Data), along with examples, functions, and references.

The presentation outlines an approach for utilizing semantic technologies to ensure interoperability across heterogeneous datasets throughout a product’s lifecycle. Key strategies include using OWL 2 DL for semantic consistency, aligning domain-specific knowledge graphs with ISO 23726-3, applying W3C Alignment techniques, and leveraging Arrowhead’s microservice-based architecture and Framework Ontology for scalable and interoperable system integration.

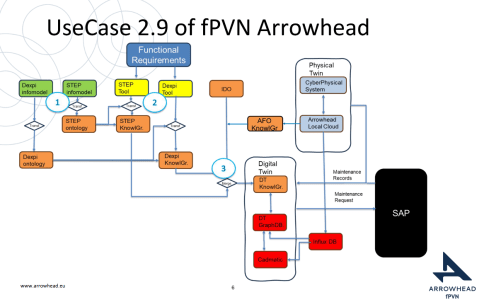

The presentation also showed the software architecture in use, which consists of three main sections, “Functional Requirements,” “Physical Twin,” and “Digital Twin,” each containing various interconnected components. Today, the architecture includes several Knowledge Graphs (KGs), all aligned: a DEXPI KG, a STEP (ISO 10303) KG, an Arrowhead Framework KG, and, as work in progress, the CFIHOS Semantics Ontology.
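To make the idea of aligned knowledge graphs a bit more tangible, here is a minimal sketch in plain Python. It is not the Arrowhead implementation and it does not use OWL tooling: the two tiny graphs, all identifiers (`dexpi:P-101`, `step:Item-42`, and so on), and the `align` and `describe` helpers are assumptions made up for illustration. It only shows the principle that an alignment lets statements from different standards end up describing the same object.

```python
from typing import Dict, Set, Tuple

Triple = Tuple[str, str, str]

# Two tiny, made-up knowledge graphs that describe the same pump
# with different vocabularies (all identifiers are hypothetical).
dexpi_kg: Set[Triple] = {
    ("dexpi:P-101", "rdf:type", "dexpi:Pump"),
    ("dexpi:P-101", "dexpi:designPressure", "16 bar"),
}
step_kg: Set[Triple] = {
    ("step:Item-42", "rdf:type", "step:Pump"),
    ("step:Item-42", "step:manufacturer", "ACME"),
}

# Alignment: which terms in the STEP graph correspond to DEXPI terms.
alignment: Dict[str, str] = {
    "step:Item-42": "dexpi:P-101",
    "step:Pump": "dexpi:Pump",
}


def align(graph: Set[Triple], mapping: Dict[str, str]) -> Set[Triple]:
    """Rewrite every term of every triple through the mapping."""
    return {
        tuple(mapping.get(term, term) for term in triple)
        for triple in graph
    }


def describe(graph: Set[Triple], subject: str) -> Dict[str, str]:
    """Collect all predicate/object pairs known about one subject."""
    return {p: o for s, p, o in graph if s == subject}


# After alignment, both graphs talk about the same pump.
merged = dexpi_kg | align(step_kg, alignment)
pump = describe(merged, "dexpi:P-101")
```

In this toy example, the manufacturer known only in the STEP graph becomes available next to the design pressure known only in the DEXPI graph. A real alignment would of course be expressed in the ontologies themselves and checked by a reasoner.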

👉The presentation: W3C Major standard interoperability_Paris

Beyond Handover: Building Lifecycle-Ready Semantic Interoperability

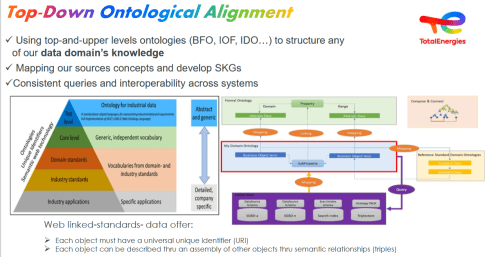

Jean-Charles Leclerc argued that industrial data standards must evolve beyond the narrow scope of handover and static interoperability. To truly support digital transformation, they must embrace lifecycle semantics or, at the very least, be designed for future extensibility.

This shift enables technical objects and models to be reused, orchestrated, and enriched across internal and external processes, unlocking value for all stakeholders and managing the temporal evolution of properties throughout the lifecycle. A key enabler is the “pattern of change”, a dynamic framework that connects data, knowledge, and processes over time. It allows semantic models to reflect how things evolve, not just how they are delivered.

By grounding semantic knowledge graphs (SKGs) in such rigorous logic and aligning them with W3C standards, we ensure they are both robust and adaptable. This approach supports sustainable knowledge management across domains and disciplines, bridging engineering, operations, and applications.

Ultimately, it’s not just about technology; it’s about governance.

Being Sustainab’OWL (Web Ontology Language) by Design! means building semantic ecosystems that are reliable, scalable, and lifecycle-ready by nature.

Additional Insight: From Static Models to Living Knowledge

To transition from static information to living knowledge, organizations must reassess how they model and manage technical data. Lifecycle-ready interoperability means enabling continuous alignment between evolving assets, processes, and systems. This requires not only semantic precision but also a governance framework that supports change, traceability, and reuse, turning standards into operational levers rather than compliance checkboxes.
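As an illustration of what managing the temporal evolution of properties can mean in practice, here is a minimal Python sketch. It is my own simplification, not the “pattern of change” model from the presentation: the `PropertyHistory` class, its method names, and the example values are all hypothetical. The point is only that a property keeps its history and can be queried for any moment in the lifecycle.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class PropertyHistory:
    """Time-stamped values of one property of one technical object."""
    name: str
    changes: List[Tuple[date, str, str]] = field(default_factory=list)

    def record(self, valid_from: date, value: str, reason: str) -> None:
        """Add a change and keep the history ordered by date."""
        self.changes.append((valid_from, value, reason))
        self.changes.sort(key=lambda change: change[0])

    def value_at(self, when: date) -> Optional[str]:
        """Return the value that was valid on the given date, if any."""
        dates = [change[0] for change in self.changes]
        index = bisect_right(dates, when)
        return self.changes[index - 1][1] if index else None


# Hypothetical example: a design pressure that is derated during operations.
pressure = PropertyHistory("design_pressure")
pressure.record(date(2020, 1, 15), "16 bar", "as designed")
pressure.record(date(2023, 6, 1), "12 bar", "derated after inspection")

as_delivered = pressure.value_at(date(2021, 1, 1))
in_operation = pressure.value_at(date(2024, 1, 1))
```

A handover-only view would store a single value. Here the delivered value and the value in operation are both retrievable, together with the reason for the change.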

👉The presentation: Beyond Handover – Building Lifecycle Ready Semantic Interoperability

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

How can we achieve our vision of PLM – the Single Source of Truth?

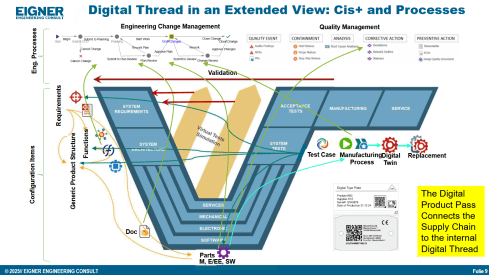

Martin Eigner stated that Product Lifecycle Management (PLM) has long promised to serve as the Single Source of Truth for organizations striving to manage product data, processes, and knowledge across their entire value chain. Yet, realizing this vision remains a complex challenge.

Achieving a unified PLM environment requires more than just implementing advanced software systems—it demands cultural alignment, organizational commitment, and seamless integration of diverse technologies. Central to this vision is data consistency: ensuring that stakeholders across engineering, manufacturing, supply chain, and service have access to accurate, up-to-date, and contextualized information along the Product Lifecycle. This involves breaking down silos, harmonizing data models, and establishing governance frameworks that enforce standards without limiting flexibility.

Emerging technologies and methodologies, such as Extended Digital Thread, Digital Twins, cloud-based platforms, and Artificial Intelligence, offer new opportunities to enhance collaboration and integrated data management.

However, their success depends on strong change management and a shared understanding of PLM as a strategic enabler rather than a purely technical solution. By fostering cross-functional collaboration, investing in interoperability, and adopting scalable architectures, organizations can move closer to a trustworthy single source of truth. Ultimately, realizing the vision of PLM requires striking a balance between innovation and discipline—ensuring trust in data while empowering agility in product development and lifecycle management.

👉The presentation: Martin – Workshop PLM Future 04_10_25

The Future is Data-Centric, Semantic, and Federated … Is your organization ready?

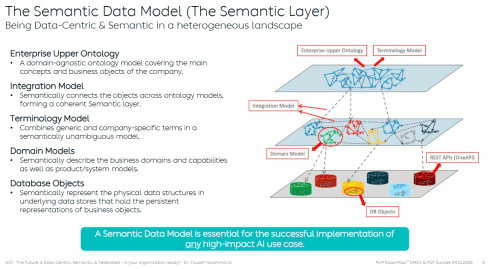

Yousef Hooshmand, who is currently working at NIO as PLM & R&D Toolchain Lead Architect, discussed the must-have relations between a data-centric approach, semantic models and a federated environment as the image below illustrates:

Why This Matters for the Future?

- Engineering is under unprecedented pressure: products are becoming increasingly complex, customers are demanding personalization, and development cycles must be accelerated to meet these demands. Traditional, siloed methods can no longer keep up.

- The way forward is a data-centric, semantic, and federated approach that transforms overwhelming complexity into actionable insights, reduces weeks of impact analysis to minutes, and connects fragmented silos to create a resilient ecosystem.

- This is not just an evolution, but a fundamental shift that will define the future of systems engineering. Is your organization ready to embrace it?

👉The presentation: The Future is Data-Centric, Semantic, and Federated.

Some first impressions

👉 Bhanu Prakash Ila from Tata Consultancy Services – you can find his original comment in this LinkedIn post

Key points:

- Traditional PLM architectures struggle with the fundamental challenge of managing increasingly complex relationships between product data, process information, and enterprise systems.

- Ontology-Based Semantic Models – The Way Forward for PLM Digital Thread Integration: Ontology-based semantic models address this by providing explicit, machine-interpretable representations of domain knowledge that capture both concepts and their relationships. These lay the foundations for AI-related capabilities.

It’s clear that as AI, semantic technologies, and data intelligence mature, the way we think and talk about PLM must evolve too – from system-centric to value-driven, from managing data to enabling knowledge and decisions.

A quick & temporary conclusion

Typically, I conclude my blog posts with a summary. However, this time the conclusion is not there yet. There is work to be done to align concepts and understand for which industry they are most applicable. Using standards or avoiding standards as they move too slowly for the business is a point of ongoing discussion. The takeaway for everyone in the workshop was that data without context has no value. Ontologies, semantic models and domain-specific methodologies are mandatory for modern data-driven enterprises. You cannot avoid this learning path by just installing a graph database.

Due to other activities, I could not immediately share the second part of the review related to the PLM Roadmap / PDT Europe conference, held on 23-24 October in Gothenburg. You can read my first post, mainly about Day 1, here: The weekend after PLM Roadmap/PDT Europe 2024.

There were several interesting sessions which I will not mention here as I want to focus on forward-looking topics with a mix of (federated) data-driven PLM environments and the applicability of AI, staying around 1500 words.

R-evolutionizing PLM and ERP and Heliple

Cristina Paniagua from the Luleå University of Technology closed the first day of the conference, giving us food for thought to discuss over dinner. Her session, describing the Arrowhead fPVN project, fitted nicely with the concepts of the Federated PLM Heliple project presented by Erik Herzog on Day 2.

They are both research projects related to the future state of a digital enterprise. Therefore, it makes sense to treat them together.

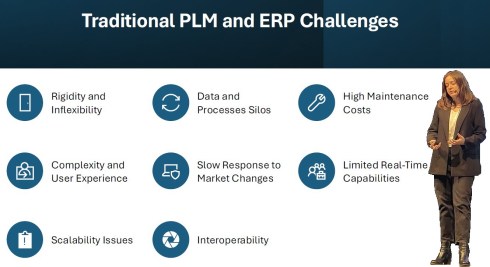

Cristina’s session started with sharing the challenges of traditional PLM and ERP systems:

These statements align with the drivers of the Heliple project. The PLM and ERP systems—Systems of Record—provide baselines and traceability. However, Systems of Record have not historically been designed to support real-time collaboration or to create an attractive user experience.

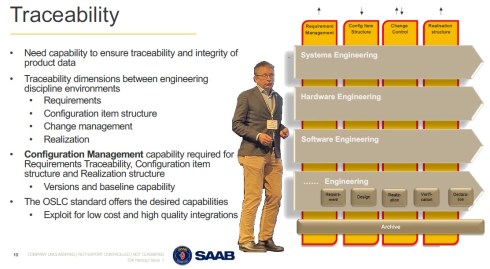

The Heliple project focuses on connecting various modules—the horizontal bars—for systems engineering, hardware engineering, etc., as real-time collaboration environments that can be highly customized and replaceable if needed. The Heliple project explored the usage of OSLC to connect these modules, the Systems of Engagement, with the Systems of Record.

By using Lynxwork as a low-code wrapper to develop the OSLC connections and map them to the needed business scenarios, the team concluded that this approach is affordable for businesses.
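The underlying idea of linking Systems of Engagement to Systems of Record, rather than copying data between them, can be sketched without any OSLC tooling. The snippet below is only an illustration of that linking principle: the URIs, the two example systems, the `satisfied_by` link name, and the `resolve` and `linked_parts` helpers are hypothetical, and none of this represents the OSLC vocabulary, Lynxwork, or the Heliple implementation.

```python
from typing import Dict, List

# Each system owns its own resources, addressed by URI (all hypothetical).
plm_system: Dict[str, dict] = {
    "https://plm.example.com/parts/100": {
        "title": "Hydraulic valve",
        "revision": "B",
    },
}
requirements_tool: Dict[str, dict] = {
    "https://req.example.com/reqs/7": {
        "title": "Valve shall close within 2 s",
        # A link to the authoritative record, not a copy of it.
        "satisfied_by": ["https://plm.example.com/parts/100"],
    },
}


def resolve(uri: str, systems: List[Dict[str, dict]]) -> dict:
    """Fetch a resource from whichever system owns the URI."""
    for system in systems:
        if uri in system:
            return system[uri]
    raise KeyError(uri)


def linked_parts(requirement_uri: str, systems: List[Dict[str, dict]]) -> List[str]:
    """Follow the links from a requirement to the current part records."""
    requirement = resolve(requirement_uri, systems)
    parts = [resolve(uri, systems) for uri in requirement["satisfied_by"]]
    return [f"{part['title']} (rev {part['revision']})" for part in parts]


systems = [plm_system, requirements_tool]
parts = linked_parts("https://req.example.com/reqs/7", systems)
```

Because the requirements tool stores only the link, a new revision in the System of Record is visible immediately in the engagement environment, without any synchronization step.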

Now, the Heliple team is aiming to expand their research with industry-scale validation through the Demoiple project (validating that the Heliple-2 technology can be implemented and accredited in Saab Aeronautics’ operational IT), combined with the Nextiple project, where they will investigate the role of heterogeneous information models/ontologies for heterogeneous analysis.

If you are interested in participating in Nextiple, don’t hesitate to contact Erik Herzog.

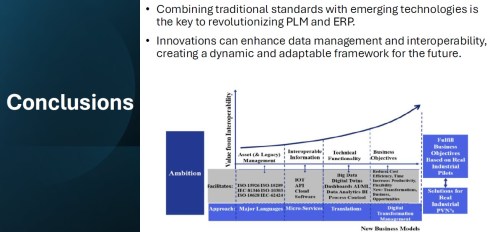

Cristina’s Arrowhead flexible Production Value Network (fPVN) project aims to provide autonomous and evolvable information interoperability through machine-interpretable content for fPVN stakeholders. In less academic words, building a digital data-driven infrastructure.

The resulting technology is projected to impact manufacturing productivity and flexibility substantially.

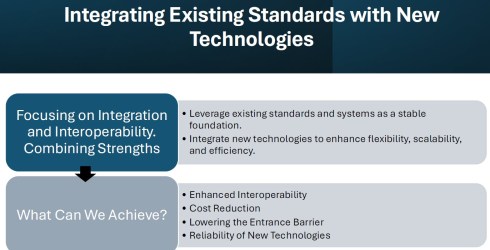

The exciting starting point of the Arrowhead project is that it wants to use existing standards and systems as a foundation and, on top of that, create a business and user-oriented layer, using modern technologies such as micro-services to support real-time processing and semantic technologies, ontologies, system modeling, and AI for data translations and learning. This is a much broader and more ambitious scope than the Heliple project.

I believe that in our PLM domain, this resonates with actual discussions you will find on LinkedIn, too. @Oleg Shilovitsky, @Dr. Yousef Hooshmand, @Prof. Dr. Jörg W. Fischer and Martin Eigner are a few of those steering these discussions. I consider it a perfect match for one of the images I shared about the future of the digital enterprise.

Potentially, there are five platforms with their own internal ways of working, a mix of systems of record and systems of engagement, supported by an overlay of several Systems of Engagement environments.

I previously described these dedicated environments, e.g., OpenBOM, Colab, Partful, and Authentise. These solutions could also be dedicated apps supporting a specific ecosystem role.

See below my artist’s impression of how a Service Engineer would work in their app connected to CRM, PLM and ERP platform datasets:

The exciting part of the Arrowhead fPVN project is that it wants to explore the interactions between systems and user roles based on existing mature standards instead of leaving the connections to software developers.

Cristina mentioned some of these standards below:

I greatly support this approach as, historically, much knowledge and effort has been put into developing standards to support interoperability. Maybe not in real-time, but the embedded knowledge in these standards will speed up the broader usage. Therefore, I concur with the concluding slide:

A final comment: Industrial users must push for these standards if they do not want a future vendor lock-in. Vendors will do what the majority of their customers ask for but will also keep their customers’ data in proprietary formats to prevent them from switching to another system.

Accelerated Product Development Enabled by Digitalization

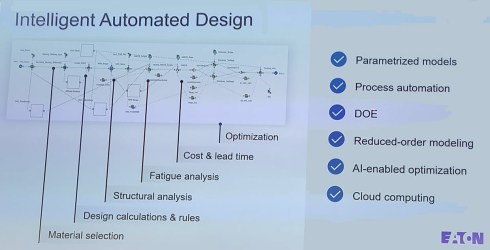

The keynote session on Day 2, delivered by Uyiosa Abusomwan, Ph.D., Senior Global Technology Manager – Digital Engineering at Eaton, was a visionary story about the future of engineering.

With its broad range of products, Eaton is exploring new, innovative ways to accelerate product design by modeling the design process and applying AI to narrow design decisions and customer-specific engineering work. The picture below shows the areas of attention needed to model the design processes. Uyiosa mentioned the significant beneficial results that have already been reached.

Together with generative design, Eaton works towards modern digital engineering processes built on models and knowledge. His session was complementary to the Heliple and Arrowhead story. To reach such a contemporary design engineering environment, it must be data-driven and built upon open PLM and software components to fully use AI and automation.

“Next Gen” Life Cycle Management in Next-Gen Nuclear Power and LTO Legacy Plants

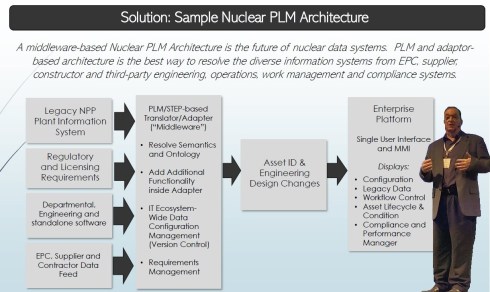

Kent Freeland’s presentation was a trip down memory lane when he discussed the issues with Long Term Operations of legacy nuclear plants.

I spent several years in Ringhals (Sweden) discussing and piloting the setup of a PLM front-end next to the MRO (Maintenance Repair Overhaul) system. As nuclear plants developed in the sixties turned out to need a longer lifecycle than anticipated, maintenance and upgrade changes in the plant needed to be planned and controlled with access to the right design and operational data. The design data is often lacking; it resides at the EPC or has been stored in a document management system with limited retrieval capabilities.

See also my 2019 post: How PLM, ALM, and BIM converge thanks to the digital twin.

Kent described the same challenges I experienced (we must have worked in parallel universes) and concluded that, for the future, we need a digitally connected infrastructure for both plant design and maintenance artifacts, as envisioned below:

The solution reminded me of a lecture I saw at the PI PLMx 2019 conference, where the Swedish ESS facility demonstrated its Asset Lifecycle Data Management solution based on the 3DEXPERIENCE platform.

You can still find the presentation here: Henrik Lindblad Ola Nanzell ESS – Enabling Predictive Maintenance Through PLM & IIOT.

Kent also focused on the relevant standards to support a “Single Source of Truth” concept. After all the federated PLM discussions, I would instead go for:

“The nearest source of truth and a single source of Change”

assuming this makes more sense in a digitally connected enterprise.

Why do you need to be SMART when contracting for information?

Rob Bodington’s presentation was complementary to Kent Freeland’s presentation. Rob, a technical fellow at Eurostep, described the challenge of information acquisition when working with large assets that require access to the correct data once the asset is in operation. The large asset could be a nuclear plant or an aircraft carrier.

In the ideal world, the asset owner wants to have a digital twin of the asset fed by different data sources through a digital thread. Of course, this environment will only be reliable when accurate data is used and presented.

Getting accurate data starts with the information acquisition process, and Rob explained that this needed to be done SMARTly – see the image below:

Rob zoomed in on the SMART keywords and the challenge the various standards provide to make the information SMARTly accessible, like the ISO 10303 / PLCS standard, the CFIHOS exchange standard and more. And then there is the ISO 8000 standard about data quality.

Click on the image to get smart.

Rob believes that AI might be the silver bullet as it might help understand the data quality, ontology and context of the data and even improve contracting, generating data clauses for contracting….

And there was a lot of AI ….

There was a dazzling presentation from Gary Langridge, engineering manager at Ocado, explaining their Ocado Smart Platform (OSP), which leverages AI, robotics, and automation to tackle the challenges of online grocery and allow their clients to excel in performance and customer responsiveness.

There was a significant AI component in his presentation, and if you are tired of reading, watch this video

But there was more AI – of the 25 sessions in this conference, 19 mentioned the potential or usage of AI somewhere in their speech – this is more than 75 %!

There was a dedicated closing panel discussion related to the real business value of Artificial Intelligence in the PLM domain, moderated by Peter Bilello and answered by selected speakers from the conference, Sandeep Natu (CIMdata), Lars Fossum (SAP), Diana Goenage (Dassault Systemes) and Uyiosa Abusomwan (Eaton).

The discussion was realistic and helpful for the audience. It is clear that to reap the benefits, companies must explore the technology and use it to create valuable business scenarios. One could argue that many AI tools are already available, but the challenge remains that they have to run on reliable data. The data foundation is crucial for a successful outcome.

An interesting point in the discussion was the statement from Diana Goenage, who repeatedly warned that using LLM-based solutions has an environmental impact due to the amount of energy they consume.

We have a similar debate in the Netherlands – do we want the wind energy consumed by data centers (the big tech companies with a minimal workforce in the Netherlands), or should the Dutch citizens benefit from renewable energy resources?

Conclusion

There were even more interesting presentations during these two days, and you might have noticed that I did not advertise my content. This is because I have already reached 1600 words, but I also want to spend more time on the content separately.

It was about PLM and Sustainability, a topic often covered in this conference. Unfortunately, only 25 % of the presentations touched on sustainability, and the AI hype overshadows the topic.

Hopefully, it is not a sign of the times?

This week I attended the PLM Roadmap & PDT Fall 2021 with great expectations based on my enthusiasm last year. Unfortunately, the excitement was less this time, and I will explain in my conclusions why. Unfortunately, it was again a virtual event, which makes it hard to be interactive, something I realize I am missing a lot.

Over two hundred attendees connected for the two days, and you can find the agenda here. Typically I would discuss the relevant sessions; now, I want to group some of them related to a theme, as there was complementary information in these sessions.

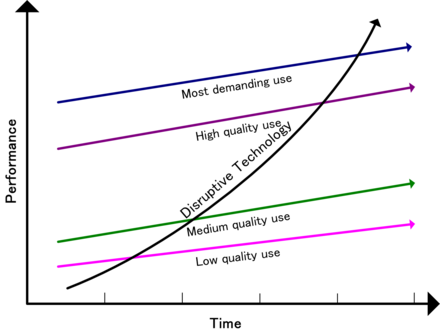

Disruption

Again, like in the spring, the theme focused on DISRUPTION. The word disruption can give you an uncomfortable feeling when you are not in power. It is more fun to disrupt than to be disrupted, as I mentioned in my spring presentation. Read The week after PLM Roadmap & PDT Spring 2021.

In his keynote speech Peter Bilello (CIMdata) kicked off with: The Critical Dozen: 12 familiar, evolving trends and enablers of digital transformation that you cannot or should not live without.

You can see them on the slide below:

I believe many of them should be familiar to you as these themes have been “in the air” already for quite some time. Vendors pick them up first, and slowly companies start to investigate them when relevant. You will find many of them back in my recent series: The road to model-based and connected PLM, where I explored the topics that would cross your path on that journey.

Like Peter said: “For most of the topics you cannot pick and choose as they are all connected.”

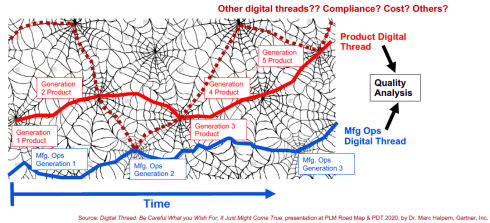

Another interesting observation was that we are moving more and more away from the concept of related structures (digital thread) towards connected datasets (digital web). Marc Halpern first introduced this topic at the 2020 conference, and it has become an excellent image to frame what we should imagine in a connected world.

Digital web also has to do with the rise of the graph database, mentioned by Peter Bilello as a potentially disruptive technology during the fireside chat. Relational databases can be seen as rigid, associated with PLM structures. On the other hand, graph databases can be associated with flexible relations between different types of data – the image of the digital web.
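For readers who want a feel for what “flexible relations between different types of data” means, here is a small Python sketch of a digital web as a graph of typed links. It is not a graph database and not taken from any presentation: the item identifiers, relation names, and the `build_graph` and `impacted` helpers are all made up for illustration. It shows how a change to one item can be traced across requirements, parts, processes, and documents with one generic traversal instead of a fixed structure per data type.

```python
from collections import deque
from typing import Dict, List, Set, Tuple

# Typed links between different kinds of data (all names are made up).
edges: List[Tuple[str, str, str]] = [
    ("REQ-7", "satisfied_by", "PART-100"),
    ("PART-100", "used_in", "ASSY-1"),
    ("PART-100", "made_by", "PROCESS-5"),
    ("ASSY-1", "serviced_by", "MANUAL-3"),
]

Graph = Dict[str, List[Tuple[str, str]]]


def build_graph(edge_list: List[Tuple[str, str, str]]) -> Graph:
    """Index the links by their source item."""
    graph: Graph = {}
    for source, relation, target in edge_list:
        graph.setdefault(source, []).append((relation, target))
    return graph


def impacted(graph: Graph, start: str) -> List[str]:
    """Breadth-first walk: everything reachable from the changed item."""
    seen: Set[str] = {start}
    queue = deque([start])
    order: List[str] = []
    while queue:
        node = queue.popleft()
        for _relation, target in graph.get(node, []):
            if target not in seen:
                seen.add(target)
                order.append(target)
                queue.append(target)
    return order


graph = build_graph(edges)
affected = impacted(graph, "PART-100")
```

Adding a new kind of relation here is just one more tuple in the list. In a rigid relational structure, the same extension would typically require a schema change.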

Where Peter was mainly telling WHAT was happening, two presentations caught my attention because of the HOW.

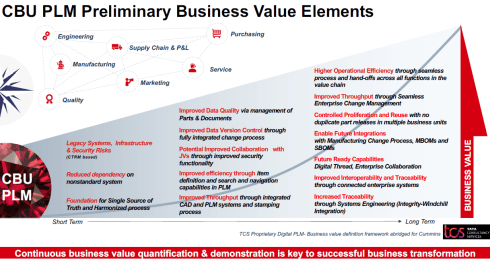

First of all, Dr. Rodney Ewing’s (Cummins) session, A Balanced Strategy to Reap Continuous Business Value from Digital PLM, was a great story of a transformational project. It kept the continuous delivery of business value in mind while moving to the connected enterprise.

As Rodney mentioned, the contribution of TCS was crucial here, which I can imagine. It is hard for a company to understand what is happening in the outside (PLM) world and apply it to its own business. Their transformation roadmap is an excellent example of keeping the long-term vision in mind while delivering value during the transformation.

Talking about the right partner and synergy, the second presentation I liked in this context of disruption was Ian Quest’s presentation (Quick Release): Open-source Disruption in Support of Audacious Goals. As a sponsor of the conference, they had ten minutes to pitch their area of expertise.

After Ian’s presentation, focused on audacious goals (for non-English natives translated as “brave” goals), there was only one word that stuck in my mind: pragmatic.

Instead of discussions about the complexity, Ian gave examples of where a pragmatic data-centric approach could lead to great benefits, as you can see from one of the illustrated benefits below:

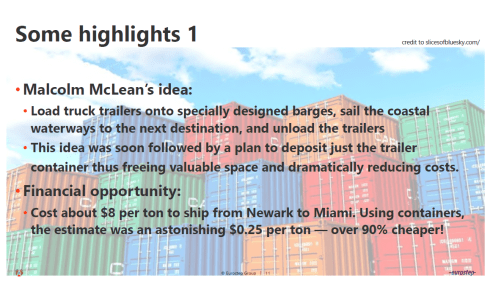

Standards

A characteristic topic of this conference is that we always talk about standards. Torbjörn Holm (Eurostep) gave an excellent overview of where standards have led to significant benefits. For example, the containerization of goods has dramatically improved transportation of goods (we all benefit) while killing proprietary means of transport (trains, type of ships, type of unloading). See the image below:

Torbjörn rightfully expanded this story to the current situation in the construction industry and the challenges for asset operators. Unfortunately, in these practices, many content suppliers remain focused on their unique capabilities, neglecting the demand for interoperability across the whole value chain.

It is a topic Marc Halpern also mentioned last year as an outcome of their Gartner PLM benefits survey. Gartner’s findings:

Time to Market is not so much improved by using PLM as the inefficient interaction with suppliers is the impediment.

Like transport before containerization, the exchange of information is not standardized or designed for digital exchange. Torbjörn believes that more and more companies will insist on exchange standards like CFIHOS, an ISO 15926-derived exchange standard in the process industry. It is a user-driven standard, the best kind of standard.

In this context, the presentation from Kenny Swope (Boeing) and Jean Yves Delaunay (Airbus), The Business Value of Standards-based Information Interoperability for Aerospace & Defense, illustrated this fact.

Although its members work for competitors, the aerospace industry understands the criticality of standards to become more efficient and less vendor-dependent. In the aerospace & defense group, they discuss these themes. Last year’s Fall 2020 sessions showed the results. You can read their publications here.

The A&D PLM action group uses the following framework when evaluating standards – as you can see on the image below:

The result – and this is a combined exercise of many participating experts from the field; this is their recommendation:

To conclude:

People often complain, in a framing promoted by proprietary data format vendors, that standards lead to a rigid environment, blocking agility.

In reality, standards allow companies to be more agile as the (proprietary) data flow is less of an issue. Remember the containerization example.

Sustainability and System Thinking

This conference has always been known for its attention to the circular economy and green thinking. In the past, these topics might have been considered disconnected from our PLM practices; now, they have become a part of everyone’s mission.

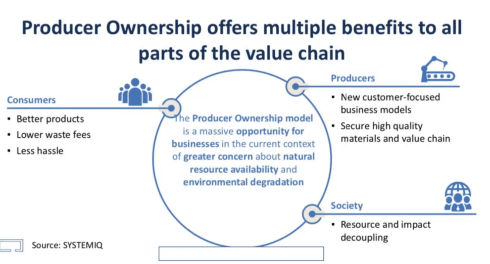

Two presentations stood out on this topic for me. First, Ken Webster’s keynote speech, In the future, you will own nothing and you will be happy, was a significant overview of how we as consumers are currently disconnected from the circular economy. His plea, as shown below, for making manufacturers responsible for the legal ownership of the materials in the products they deliver would impact consumer behavior.

Product as a Service (PaaS) and new ways to provide a service are becoming essential. For example, buildings can act as power stations, as they are a place to collect solar or wind energy.

His thoughts are aligned with what is happening in Europe related to the European Green Deal (not in his presentation). There is a push for a PaaS model for all products as this would be an excellent stimulant for the circular economy. PaaS combined with a Digital Product Passport – more on that next year.

Making upgrades to your products has less impact on the environment than creating new products to sell (and creating waste from the old product). Ken Webster made an interesting statement about changing the economy: do we want to own products, or do we want to benefit from the product and leave the legal ownership to the manufacturer?

This is a topic I discussed at the PLM Roadmap & PDT Conference Spring 2021 – look here at slide 11.

Patrick Hillberg’s presentation, Rising to the challenge of engineering and optimizing . . . what?, was the one closest to my heart. We discussed Sustainability and Systems Thinking with Patrick in our PLM Global Green Alliance, being pretty aligned on this topic. Patrick started by explaining the difference between Systems Engineering and Systems Thinking. Looking at the product go-to-market of an organization involves more than the traditional V-model. Economic pressure and culture will push people to deviate from the ideal technological plan due to other priorities.

Expanding on this observation, Patrick stated that there are limits to growth, a topic discussed by many people involved in the sustainable economy. Economic growth is impossible on a limited planet, and we have to take more dimensions into account. Patrick gave some examples of that, including issues related to the infamous Boeing 737 Max example.

For Patrick, the COVID-pandemic is the end of the old 2nd Industrial Revolution and a push for a new Fourth Industrial Revolution, which is not only technical, as the slide below indicates.

With Patrick, I believe we are at a decisive moment to disrupt ourselves, reconsider many things we do and are used to doing. Even for PLM practitioners, this is a new path to go.

Data

There were two presentations related to digitization and the shift from document-based to a data-driven approach.

First, there was Greg Weaver (Gulfstream) with his presentation Indexing Content – Finding Your Needle in the Haystack. Greg explained that by indexing existing document-based information and combining it with a specific dashboard, they could provide fast access to information that otherwise would have been hidden in many document or even paper archives.
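
To make the indexing idea more concrete, here is a minimal sketch of an inverted index over document text. It is not Gulfstream's solution, which was not described at this level of detail; the document identifiers, the sample texts, and the function names are all hypothetical.

```python
import re
from collections import defaultdict


def build_index(documents):
    """Map every lowercase word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in re.findall(r"[a-z0-9]+", text.lower()):
            index[word].add(doc_id)
    return index


def search(index, query):
    """Return the ids of documents that contain all words of the query."""
    words = re.findall(r"[a-z0-9]+", query.lower())
    if not words:
        return set()
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result


# Hypothetical archive content, for illustration only.
archive = {
    "DOC-001": "Hydraulic pump maintenance manual",
    "DOC-002": "Landing gear hydraulic schematic",
    "DOC-003": "Cabin interior drawing",
}
index = build_index(archive)
print(search(index, "hydraulic pump"))
```

The index only tells you where words occur. Whether the document it points to is the valid revision remains a configuration management question.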

It was a pragmatic solution, making me feel nostalgic seeing the SmarTeam profile cards. It was an excellent example of moving to a digital enterprise, and Gulfstream has always been a front runner on this topic.

Warning: Don’t use this by default at home (your company). The data in a regulated industry like Aerospace is expected to be of high quality due to the configuration management processes in place. If your company does not have a strong CM practice, the retrieved data might be inaccurate.

Martijn Dullaart (ASML)’s presentation The Next disruption, please….. was the next step into the future. With his statement “No CM = No Trust,” he made an essential point for data-driven environments.

There is a need for Configuration Management, and I touched on this topic in my last post: The road to model-based and connected PLM (part 9 – CM).

Martijn’s presentation can also be found on his blog here, and I encourage you to read it (saving me copy & paste text). It was interesting to see that Martijn improved his CM pyramid, as you can see, more discipline and activity-oriented instead of a system view. With Martijn and others, I will elaborate on this topic soon.

Conclusion

This has been an extremely long post, and thanks for reading until the end. Many interesting topics were presented at the conference. I was less excited this time because many of these topics are triggers for a discussion. Innovation comes from meeting people with different backgrounds. In a live conference, you would meet during the break or during the famous dinner. How can we ensure we follow up on all this interesting information?

Your thoughts? Contact me for a Corona Friday discussion.

To prevent software geeks from getting curious about the title: in this context, ALM means Asset Lifecycle Management. In 2008 I was active for SmarTeam, promoting PLM concepts relevant for Asset Lifecycle Management. The focus was on PLM being complementary to asset operation management (EAM – Enterprise Asset Management and MRO – Maintenance, Repair and Overhaul).

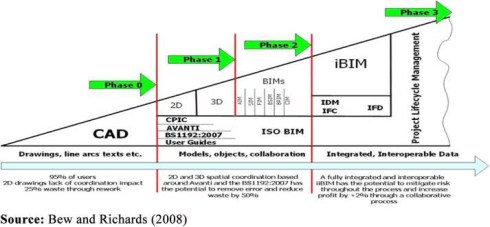

This topic has become relevant for me in the past two months, having discussed and seen (PDT) the concepts of a model-based approach for assets and constructions. PLM, ALM, and BIM converge conceptually. Every year I give a one-day update from the field for students doing a master’s in PLM & BIM on top of their engineering/architectural background. Five years ago, there was no mention of BIM; now the share of BIM-oriented students has become significant. For me it is always great to see young students willing to learn PLM or BIM on top of their own skillset. Read more about this particular Master class in French when you click on the logo to the left.

In 2012 I started to explain PLM benefits to EPC companies (Engineering Procurement Construction), targeting a more profitable and efficient delivery of their constructions (oil platform, plant, building, infrastructure). The simplified reasoning behind using PLM was related to more efficient, higher-quality multidisciplinary collaboration, reducing costly fixes during construction, and smoothing the intensive process of data handover.

More and more in the process industry, standards, like ISO 15926 (Process Industry) and ISO 19650 (BIM – mainly in the UK), became crucial. At that time, it was difficult to convince companies to focus on the horizontal-integrated process instead of dedicated, disconnected tools. Meanwhile, this has changed, thanks to the Digital Twin hype. Let’s have a look.

PLM and ALM

The initial value of using PLM concepts complementary to MRO systems came from the fact that MRO systems mainly focus on plant operations. You could compare these systems with ERP systems for manufacturing companies, focusing on execution and continuous operation. Scheduled maintenance and inspections are also driven by the MRO system. Typical MRO systems are Maximo and SAP PM. PLM could deliver configuration management, linking the design intent to the physical implementation, and therefore provide higher data quality, visibility, and traceability of the asset history.

In 2010, I shared these concepts in two posts: Asset Lifecycle Management using a PLM-system and PLM for Asset Lifecycle Management and Asset Development, based on lessons learned with some (nuclear) plant owner/operators. They started to discover the need for configuration management to ensure data quality for operations. In 2010-2014 the business case for using PLM complementary to MRO was data quality and therefore reduced downtime when executing large maintenance programs (dependencies between the individual projects were not visible without PLM).

In MRO-systems, like in ERP-systems, the data for execution is based on information coming from various engineering sources – specifications, PFDs, P&IDs. Questions owner/operators ask themselves are:

- What are the designed operational settings?

- Are the asset parameters currently running as designed?

- What is the optimized maintenance period?

- Can we stretch maintenance intervals?

- Can we reduce inspections?

- Can we reduce downtime for maintenance and overhaul?

- What about predictive maintenance?

Most of these questions are answered by experts who use their tacit knowledge and experience to give the best answers so far. And when the answers were wrong, they were accepted as new learning points. Next time we won’t make this mistake, and the experts become even more knowledgeable.

Now, these questions could be answered if you can model your asset in a virtual environment. In the virtual world, you would use simulation models, logical models, and 3D models to describe the asset. This is where Model-Based Systems Engineering practices are used. However, these models need to be calibrated against reality. That is where IoT and Asset Operation Monitoring come in, connecting physical behavior with virtually predicted behavior. You can read more about this relationship in my post: Will MBSE the new PLM instead of IoT?
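
As a minimal sketch of the question "Are the asset parameters currently running as designed?", the snippet below compares averaged sensor readings with a designed operating window. The parameter names, the values, and the `DesignSetting` structure are assumptions for illustration, not taken from a real plant or a specific IoT platform.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DesignSetting:
    """Designed operating window for one asset parameter (hypothetical schema)."""
    parameter: str
    low: float
    high: float


def out_of_design(settings, readings):
    """Return parameters whose average measured value is outside the design window.

    `readings` maps a parameter name to a list of measured values.
    Parameters without readings are skipped rather than flagged.
    """
    deviations = {}
    for setting in settings:
        values = readings.get(setting.parameter)
        if not values:
            continue
        average = mean(values)
        if not (setting.low <= average <= setting.high):
            deviations[setting.parameter] = average
    return deviations


# Illustrative values only.
design = [
    DesignSetting("pump_pressure_bar", 4.0, 6.0),
    DesignSetting("bearing_temp_c", 20.0, 80.0),
]
measured = {
    "pump_pressure_bar": [5.1, 5.3, 5.2],
    "bearing_temp_c": [85.0, 88.0, 91.0],
}
print(out_of_design(design, measured))
```

A real digital twin would replace the fixed window with the output of a simulation model, but the principle of comparing designed and measured behavior stays the same.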

PLM and BIM

In 2014 I started to discuss PLM concepts with EPC companies (Engineering, Procurement, and Construction), mainly in the Oil & Gas industry. Here excellent asset development tools (AVEVA, Intergraph, Bentley) are the standard, as the purpose of an EPC company is to deliver a plant or platform. Each software tool has its purpose, and there is no lifecycle strategy. The value PLM could bring was a program overview (complementary to Primavera), standardization, multidisciplinary coordination, and visibility across projects to capture knowledge.

Most of the time, the EPC companies did not see the value of optimizing themselves, as this was accepted as part of the process, even though their productivity and their cost of poor quality (fixing during construction/commissioning) were absurd (10-20 % of the project budget). Cultural change – think longer instead of fix later – was hard to explain. In the end, the EPC was not responsible for operations, so why bother that much?

My blog posts PLM for all Industries and 2014 – the year that the construction industry did not discover PLM illustrate the challenge at that time. None of the EPCs and construction companies recognized that improving collaboration based on information continuity (not data-driven yet) could bring significant benefits, despite their relatively low profit margin (1-3 % is considered excellent). Breaking the silos is difficult too.

Two recent trends, however, changed the status quo that existed.

First of all, more and more, the owner/operator does not want to be responsible for the maintenance and operations of the asset. The typical EPC companies have now become DBO companies (Design, Build and Operate). This requires lifecycle thinking from these companies, as most of the costs of an asset occur during its maintenance and operation phase.

Advanced Thinking (read: (Model-Based) Systems Engineering) can help these companies to shift their focus to a more sustainable design of the asset for the future and get rewarded for that. In the old EPC model, the target was “just” to deliver as specified.

A second significant trend is the availability of cloud infrastructure for the construction world. A cloud infrastructure does not require considerable investment from the stakeholders in a construction project. By introducing BIM in a common data environment (CDE), an infrastructure comparable to PLM is created, and the Maintenance-and-Operate stakeholder is likely eager to have the full virtual definition here for the future.

Read more about BIM and CDE for example, here: CDE – strategic BIM process tool.

Of course, technology and standards are there to collaborate. Now it is up to the stakeholders involved to develop new skills for collaboration (learn or hire) and implement them through new ways of working. A learning process can never be pushed by a big-bang, so make sure your company operates in two modes while learning.

As I mentioned, the Maintenance-and-Operate stakeholders or, in traditional cases, the Owner/Operators are incredibly interested in a well-defined virtual model of the asset. This allows them to analyze and simulate the implementation of fixes and enhancements for the future with an optimum result. Again, we are talking about a digital twin of the asset here.

Conclusion

Even though the digital twin is at the top of the Gartner Hype Cycle, it has already become a vital principle to implement, in particular for substantial, critical assets. As these are precious assets, minor inefficiencies in data continuity can still be afforded while learning. From the moment companies have established digital continuity between their virtual and physical assets, the Digital Twin concept can also be profitable (and required) for other industries, in particular when these companies want to deliver their products as a service.

Note: I have been talking a lot this year about the challenges of digital transformation applied to PLM in particular. During PI PLMx London 2020 on February 3 and 4, I will lead a Think Tank session related to the challenge of connecting your PLM transformation to your executives’ vision (and budget). See you there?

This is for the moment the last post about the difference between files and a data-oriented approach. This time I will focus on the need for open exchange standards and the relation to proprietary systems. In my first post, I explained that a data-centric approach can bring many business benefits and pointed to background information for those who want to learn more in detail. In my second post, I gave the example of dealing with specifications.

It demonstrated that the real value of a data-centric approach comes at the moment the information changes over time. For a specification that is right the first time and never changes, there is less value to win with a data-centric approach. But then, aren’t we still dreaming that we do everything right the first time?

The specification example was based on dealing with text documents (sometimes called 1D information). The same benefits are valid for diagrams, schematics (2D information) and CAD models (3D information).

1D,2D,3D …..

The challenge for a data-oriented approach is that information needs to be stored in data elements in a database, independent of an individual file format. For text, this might be easy to comprehend. Text elements are relatively simple to understand. Still, the OpenDocument standard for Office documents relies in the background on a lot of technical know-how and experience to make it widely acceptable. For 2D and 3D information this is less obvious, as this is the domain of the CAD vendors.

CAD vendors have various reasons not to store their information in a neutral format.

- First of all, and most important for their business, a neutral format would reduce the dependency on their products. Other vendors could work with these formats too, therefore reducing the potential market capture. You could say that in a certain manner the Autodesk 2D format DXF (and even DWG) has become a neutral format for 2D data, as many other vendors have applications that read and write back information in the DXF data format. So far DXF is stored in a file, but you could also store DXF data inside a database and make it available as elements.

- This brings us to the second reason why using neutral data formats is not that evident for CAD vendors. It reduces their flexibility to change the format and optimize it for maximal performance. Commercially, the significant, immediate disadvantage of working in a neutral format is that it has not been designed for the particular needs of an individual application, and therefore any “intelligent” manipulations on the data are hard to achieve.
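
The idea of storing drawing data as elements in a database instead of in a file can be sketched as follows. This is an illustration only: the table layout and the entity records are hypothetical and heavily simplified, and real DXF has many more entity types and attributes than this sketch covers.

```python
import sqlite3

# Hypothetical line entities: (kind, layer, x1, y1, x2, y2).
entities = [
    ("LINE", "walls", 0.0, 0.0, 10.0, 0.0),
    ("LINE", "walls", 10.0, 0.0, 10.0, 5.0),
    ("LINE", "pipes", 2.0, 1.0, 8.0, 1.0),
]

conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE entity (
           id INTEGER PRIMARY KEY,
           kind TEXT NOT NULL,
           layer TEXT NOT NULL,
           x1 REAL, y1 REAL, x2 REAL, y2 REAL
       )"""
)
conn.executemany(
    "INSERT INTO entity (kind, layer, x1, y1, x2, y2) VALUES (?, ?, ?, ?, ?, ?)",
    entities,
)


def entities_on_layer(connection, layer):
    """Fetch individual drawing elements without opening or parsing a file."""
    rows = connection.execute(
        "SELECT kind, x1, y1, x2, y2 FROM entity WHERE layer = ? ORDER BY id",
        (layer,),
    )
    return rows.fetchall()


print(entities_on_layer(conn, "pipes"))
```

Once the elements live in a database, each one can be queried, linked, and changed individually, which is the point of the data-centric approach.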

The same reasoning can be applied to 3D data, where different neutral formats exist (IGES, STEP, …. ). It is very difficult to identify a common 3D standard without losing many benefits that an individual 3D CAD format currently brings. For example, CATIA handles 3D CAD data in a completely different way than Creo does, and differently again compared to NX, SolidWorks, Solid Edge and Inventor, even though some of them might use the same CAD kernel.

However, it is not only about the geometry anymore; the shapes represent virtual objects that have metadata describing the objects. In addition, other related information exists, not necessarily coming from the design world, like tasks (planning), parts (physical), suppliers, resources and more.

PLM, ERP, systems and single source of truth

This brings us into the world of data management, in my world mainly PLM systems and ERP systems. An ERP system is already a data-centric application: the BOM is already available as metadata, as are all the scheduling and the interaction with resources, suppliers and financial transactions. Still, ERP systems store a lot of related documents and drawings, containing content that does not match their data model.

PLM systems have gradually become more and more data-centric, as their origin was around engineering data, mostly stored in files. In a data-centric approach, there is the challenge of exchanging data between a PLM system and an ERP system. Usually there is a need to share information between the two systems, mainly the items. Different definitions of an item on the PLM and ERP side make it hard to exchange information from one system to the other. That is why there are so many discussions around PLM and ERP integration and the BOM.
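
A small sketch may illustrate why different item definitions make the exchange hard. Both record types and the mapping rules below are hypothetical and not taken from any specific PLM or ERP product. They only show that identifiers, units, and field conventions need explicit translation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlmItem:
    """Item as an engineering system might define it (hypothetical)."""
    number: str
    revision: str
    description: str
    mass_kg: float


@dataclass(frozen=True)
class ErpItem:
    """Item as a logistics system might define it (hypothetical)."""
    material_id: str
    description: str
    weight_g: int


def plm_to_erp(item: PlmItem) -> ErpItem:
    """Translate one PLM item into the assumed ERP definition.

    Assumed rules: the ERP key combines number and revision, descriptions
    are uppercase and limited to 40 characters, and weight is in grams.
    """
    return ErpItem(
        material_id=f"{item.number}-{item.revision}",
        description=item.description[:40].upper(),
        weight_g=round(item.mass_kg * 1000),
    )


bracket = PlmItem("100-234", "B", "Bracket, steel", 1.25)
print(plm_to_erp(bracket))
```

Every rule in such a mapping is a decision that someone has to own and maintain, and a shared data definition would make most of these rules unnecessary.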

In the modern data-centric approach, however, we should think less and less in systems and more and more in business processes performed on actual data elements. This requires a company-wide, actually an enterprise-wide or industry-wide, data definition of all information that is relevant for the business processes. This leads into Master Data Management, the new required skill for enterprise solution architects.

The data-centric approach creates the impression that you can achieve a single source of the truth, as all objects are stored uniquely in a database. SAP solves the problem by stating everything fits in their single database. In my opinion this is more of a black hole approach: everything gets inside, but even light cannot escape. Usability and reuse of information that was stored with the intention not to be found is the big challenge here.

Other PLM and ERP vendors have different approaches. Some choose a service bus architecture, where applications in the background link and synchronize common data elements from each application. As a result, there is some redundancy, but everything is connected. More and more PLM vendors focus on building a platform of connected data elements on top of which applications run, like the 3DExperience platform from Dassault Systèmes.
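
The service bus idea can be sketched with a tiny in-memory publish/subscribe mechanism. This is not how any particular vendor implements it; the `ServiceBus` class, the topic name, and the two dictionaries standing in for a PLM and an ERP system are assumptions for illustration.

```python
from collections import defaultdict


class ServiceBus:
    """Minimal in-memory publish/subscribe bus (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        for handler in self._subscribers[topic]:
            handler(message)


# Each application keeps its own copy of the shared data element.
plm_items = {}
erp_items = {}


def update_erp(message):
    """ERP-side handler: store the redundant copy of the released item."""
    erp_items[message["number"]] = {"description": message["description"]}


bus = ServiceBus()
bus.subscribe("item.released", update_erp)


def release_in_plm(number, description):
    """Release an item in PLM and announce it on the bus."""
    plm_items[number] = {"description": description, "state": "released"}
    bus.publish("item.released", {"number": number, "description": description})


release_in_plm("100-234", "Bracket, steel")
print(erp_items)
```

The item now exists in both stores, which is the redundancy mentioned above, but the two copies stay connected through the bus.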

As users we are more and more used to platforms, as Google and Apple already provide these platforms in the cloud for common use on our smartphones. The large number of apps run on shared data elements (contacts, locations …) and store additional proprietary data.

Platforms, Networks and standards

And here we enter an interesting area of discussion. I think it is a given that a single database concept is a utopia. Therefore, it will be all about how systems and platforms communicate with each other to provide in the end the right information to the user. The systems and platforms need to be data-centric as we learned from the discussion around the document (file centric) or data-centric approach.

In this domain, several companies have already been active for years. Datamation from Dr. Kais Al-Timimi in the UK is such a company. Kais is a veteran in the PLM and data modeling industry, and they provide a platform for data-centric collaboration. This quote from one of his presentations illustrates that we share the same vision:

“……. the root cause of all interoperability and data challenges is the need to transform data between systems using different, and often incompatible, data models.

It is fundamentally different from the current Application Centric Approach, in that data is SHARED, and therefore, ‘NOT OWNED’ by the applications that create it.

This means in a Data Centric Approach data can deliver MORE VALUE, as it is readily sharable and reusable by multiple applications. In addition, it removes the overhead of having to build and maintain non-value-added processes, e.g. to move data between applications.”

Another company in the same domain is Eurostep, which also focuses on business collaboration in various industries. Eurostep has been working with various industry standards, like AP203/214, PLCS and AP233. Eurostep has developed their Share-A-space platform to enable data-centric collaboration.

This type of data collaboration is crucial for all industries. While the aerospace and automotive industries are probably the most mature on this topic, the process industry and construction industry are currently also focusing on discovering data standards and collaboration models (ISO 15926 / BIM). It will probably be the innovators in these industries that clear the path for others. For sure it will not come from the software vendors, as I discussed before.

Conclusion

If you have reached this line, it means the topic has been interesting enough for you to read in depth. In the past three posts, covering the future trend, an example and the data modeling background, I have tried to describe what is happening in a simplified manner.

If you really want to dive into the PLM for the future, I recommend you visit the upcoming PDT 2014 conference in Paris on October 14 and 15. Here experts from different industries will present and discuss the future PLM platform and its benefits. I hope to meet you there.

Some more to read:

https://us.sogeti.com/wp-content/uploads/2014/04/PLM-Systems-White-Paper.pdf

“Confused? You won’t be after this episode of Soap.”

Who does not remember this tagline from the first official Soap series starting in 1977 and released in the Netherlands in 1979?

Every week the Campbells and the Tates entertained us with all the ingredients of a real soap: murder, infidelity, alien abduction, criminality, homosexuality and more.

The episode always ended with a set of questions, leaving you in suspense for a week, hoping the next episode would give you the answers.

For those who do not remember the series or those who never saw it because they were too young, this was the mother of all Soaps.

What has it to do with PLM?

Soap has to do with strange people that do weird things (I do not want to be more specific). Recently I noticed that this is happening even in the PLM blogger’s world. Two of my favorite blogs demonstrated something of this weird behavior.

First, Steve Ammann, in his Zero Wait-State blog post A PLM junkie at sea point-solutions versus comprehensive, mentioned sailing from Ventura, CA to Cabo San Lucas, Mexico on a 35-foot sailboat and thinking about PLM during his night shift. My favorite quote:

Besides dealing with a couple of visits from Mexican coast guard patrol boats hunting for suspected drug runners, I had time alone to think about my work in the PLM industry and specifically how people make decisions about what type of software system or systems they choose for managing product development information. Yes only a PLM “junkie” would think about PLM on a sailing trip and maybe this is why the Mexican coast guard was suspicious.

Second, Oleg, in his doomsday blog post The End of PLM Communism, was thinking about PLM all weekend. My favorite quote:

I’ve been thinking about PLM implementations over the weekend and some perspective on PLM concepts. In addition to that, I had some healthy debates over the weekend with my friends online about ideas of centralization and decentralization. All together made me think about potential roots and future paths in PLM projects.

It demonstrates that the best thinking is done during out-of-office time and in casual locations. From my long cycling tours at the weekend, I know this is true.

I must confess that I have PLM thoughts during cycling.

Perhaps the best thinking happens outside an office?

I leave the follow up on this observation to my favorite Dutch psychologist Diederik Stapel, who apparently is out of office too.

Now back to serious PLM

Both posts touch the topic of a single comprehensive solution versus best-of-breed solutions. Steve is very clear in his post. He believes that in the long term a single comprehensive solution serves companies better, although user performance (usability) is still an issue to consider. He provides guidance in making the decision for either a point solution or an integrated solution.

And I am aligned with what Steve is proposing.

Oleg is coming from a different background and in his current position he believes more in a distributed or network approach. He looks at PLM vendors/implementations and their centralized approach through the eyes of someone who knows the former Soviet Union way of thinking: “Centralize and control”.

The association with communism was probably not the best choice, judging by the comments. Still, it makes you think: as the former Soviet Union does not exist anymore, what about former PLM implementations and the future? According to Oleg, PLM implementations should be more focused on distributed systems (in the cloud?), working and interacting together, connecting data and processes.

And I am aligned with what Oleg is proposing.

Confused? You won’t be after reading my recent experience.

I have been involved in the discussion around the best possible solution for an EPC contractor (Engineering Procurement Construction) in the Oil & Gas industry. The characteristic of their business is different from standard manufacturing companies. EPC contractors provide services for an owner/operator of a plant and they are selected because of their knowledge, their price, their price, their price, quality and time to deliver.

This means an EPC contractor is focusing on execution, making sure they have the best tools for each discipline, and this is the way they are organized and used to working. The downside of this approach is that everyone is working on their own island and there is no knowledge capitalization or sharing of information. As a result, each solution is unique, which brings a higher risk of errors and fixes required during construction. And the knowledge is in the heads of experienced people … and they retire at a certain moment.

So this EPC contractor wanted to build an integrated system, where all disciplines are connected and share information where relevant. In the Oil & Gas industry, ISO 15926 is the standard. This standard is relatively mature and can serve as the neutral exchange standard for information between disciplines. The ideal world for best-in-class tools communicating with each other, or not?

Imagine there are 6 discipline tools, an engineering environment optimized for plant engineering, a project management environment, an execution environment connecting suppliers and materials, a delivery environment assuring the content of a project is delivered in the right stages and finally a knowledge environment, capitalizing lessons learned, standards and best practices.

This results in 6 tools and 12 interfaces to a common service bus connecting these tools: 12 interfaces, because each application needs one interface to send information to the service bus and one to receive information from it. Each tool will hold redundant data for its own execution.

What happens if a PLM provider could offer three of these tools on a common platform? This would result in 4 tools to install and only 8 interfaces. The functionality in the common PLM system does not require data redundancy but shares common information and will therefore provide better performance in a cross-discipline scenario.
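The arithmetic behind these numbers can be sketched in a few lines of Python. This is only an illustration of the counting above, assuming each tool needs exactly two interfaces (one to send, one to receive) and that the tools offered on a common platform count as a single tool towards the service bus; the function names are made up for this example.

```python
def bus_interfaces(tool_count: int, interfaces_per_tool: int = 2) -> int:
    """Interfaces needed when every tool talks only to a shared service bus.

    Assumption: one 'send' and one 'receive' interface per tool.
    """
    return tool_count * interfaces_per_tool


def tools_after_consolidation(tool_count: int, tools_on_platform: int) -> int:
    """Tools left to install when several tools are replaced by one platform."""
    return tool_count - tools_on_platform + 1


# Best-of-breed scenario: 6 separate tools on the service bus.
best_of_breed_interfaces = bus_interfaces(6)

# PLM platform scenario: 3 of the 6 tools are offered on one common platform.
remaining_tools = tools_after_consolidation(6, 3)
platform_interfaces = bus_interfaces(remaining_tools)

print(best_of_breed_interfaces, remaining_tools, platform_interfaces)
```

Every extra tool moved onto the common platform removes two more interfaces in this simple model, which is the cost side of the trade-off discussed next.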

In the ultimate world all tools will be on one platform, providing the best performance and support for this EPC contractor. However, this is a utopia. It is almost impossible to have a 100 % optimized system for a group of independent companies working together. Suppliers will not give up their environment and own IP to embed it in a customer’s ideal environment. So there is always a compromise to find between the best integrated platform (optimal performance, reduced cost of interfaces and cost of ownership) and the best connected environment (tools connected through open standards).

And this is why both Steve and Oleg have a viewpoint that makes sense. Depending on the performance of the tools and the interaction with the supplier network the PLM platform can provide the majority of functionality. If you are a market dominating OEM you might even reach 100 % coverage for your own purpose, although the modern society is more about connecting information where possible.

MY CONCLUSION after reading both posts:

- Oleg tries to provoke, and like a soap, you might end up confused after each episode.

- Steve in his post gives common-sense guidance, useful if you spend time digesting it, not a soap.

Now I hope you are no longer confused and wish you all a successful and meaningful 2013. The PLM soap will continue in alphabetical order:

- Will Aras survive 21-12-2012 and support the Next generation ?

- Will Autodesk get off the cloud or have a coming out ?

- Will Dassault get more Experienced ?

- Will Oracle PLM customers understand it is not a database ?

- Will PTC get out of the CAD jail and receive $ 200 ?

- Will SAP PLM be really 3D and user friendly ?

- Will Siemens PLM become a DIN or ISO standard ?

See the next episodes of my PLM blog in 2013

In the past months, I have talked and worked with various companies on the topic of Asset Lifecycle Management (ALM) based on a PLM system. Conceptually it is very strong, yet so far only a few companies have implemented this approach, as PLM systems have not been used much outside the classical engineering world.

Why use a PLM system?

Using a PLM system to manage all asset-related information (asset parameters, inventory, documents, locations, lifecycle status) in a single system assures the owner/operator that a ‘single version of the truth’ starts to exist. See also one of my older posts about ALM to understand the details.

The beauty lies in the fact that this single version of the truth concept combines the world of as-built for operators and the world of as-defined / as-planned for preparing changes. Instead of individual silos the ALM system provides all information, of course filtered in such a way that a user only sees information related to the user’s role in the system.

The challenge for PLM vendors is to keep the implementation simple, as PLM in its core industries was initially about managing complexity. Now the target is to keep it extremely simple and easy to use for the various user roles, while staying away from heavy customizations, to deliver the best Return on Investment.

Having a single version of the truth provides the company with a lot of benefits to enhance operations. Imagine you find information and from its status you know immediately whether it is the latest version and whether other versions exist. In the current owner/operator world, information is often stored and duplicated in many different systems, and finding the information in one system does not mean that this is the right information. I am sure the upcoming event from IDC Manufacturing Insights will also contribute to these findings.

It is clear that historically this situation has been created by the non-intelligent interaction with the EPC contractors building or changing the plant. The EPC contractors use intelligent engineering software, like AVEVA, Bentley, Autodesk and others, but still during hand-over we provide dumb documents: paper-based, TIFF, PDF or some vendor-specific formats which will become unreadable in the upcoming years. For long-term data security this is often considered the only way, as neutral standards like ISO 15926 still require additional vision and knowledge from the owner/operator to implement them.

Now back to the discussions…

In many discussions with potential customers the conversation went in the same direction:

“How to get the management excited and motivated to invest in this vision? The concept is excellent but applying it to our organization would lead to extra work and costs without immediate visibility of the benefits!”

This is an argument I partly discussed in one of my previous posts: PLM, CM and ALM not sexy. And this seems to be the major issue in Western Europe and the US. Business is monitored and measured for the short term, at most with a plan for the next 4 – 5 years. Nobody is rewarded for a long-term vision, and when something severe happens, the current person in power will either get the blame or have to excuse himself.

As a Dutch inhabitant, I am still proud of what our former Dutch government decided and did after the flooding in 1953. The Dutch invested a lot of money and brain power into securing the inhabitants behind the coastline in a project called the Delta Works. This was an example of vision instead of shareholder value. After the project was finished in the eighties, there was no longer a risk of severe flooding, and the lessons learned from that time gave the Dutch the knowledge to support other nations at risk of flooding. I am happy that in 1953 the government was not in the mood to optimize their bonus (an unknown word at that time).

Back to Asset Lifecycle Management ….