In the last two weeks, three events led to this post.

First, I read John Stark’s recent book Products2019. A must-read for anyone who wants to understand the full reach of product lifecycle related activities. See my recent post: Products2019, a must-read if you are new to PLM

Afterwards, I talked with John, discussing the lack of knowledge and teaching of PLM, not to be confused with PLM capabilities and features.

Second, I participated in an exciting PI DX USA 2020 event. Some of the sessions and most of the roundtables provided insights to me and, hopefully, many other participants. You can get an impression in the post: The Weekend after PI DX 2020 USA.

A small disappointment in that event was the closing session with six vendors, as I wrote. I know that when you put a group of vendors in the arena, it will be about scoring points instead of finding alignment. Still, criticism does not mean blaming, and I am always open to a dialogue. For that reason, I am grateful for their sponsorship and contribution.

Oleg Shilovitsky mentioned cleverly that this statement is a contradiction.

“How can you accuse PLM vendors of having a limited view on PLM and thanking them for their contribution?”

I hope the above explanation says it all, combined with the fact that I grew up in a Dutch culture of not hiding friction, meanwhile being respectful to others.

We cannot simplify PLM by just a better tool or technology or by 3D for everybody. Many more people and processes are involved in product lifecycle management, and a real conference would need to include them; however, many of them will not sponsor events.

It is well illustrated in John Stark’s book. Many disciplines are involved in the product lifecycle. Therefore, if you only focus on what you can do with your tool, it will lead to an incomplete understanding.

If your tool is a hammer, you hope to see nails everywhere around you to demonstrate your value.

The third event was a LinkedIn post from John Stark – 16 groups needing Product Lifecycle Knowledge, which for me was a logical follow-up to the previous two events. I promised John to go through these 16 groups and provide my thoughts.

Please read his post first as I will not rewrite what has been said by John already.

CEOs and CTOs

John suggested that they should read his book, which might take more than eight hours. Most of the time, CEOs and CTOs do not read this type of book with so many details, so this is probably mission impossible.

They want to keep up with the significant trends and need to think about future business (model).

New digital and technical capabilities allow companies to move from a linear, coordinated business towards a resilient, connected business. This requires exploring future business models and working methods by experimenting in real life, not in a Proof of Concept. Creating a learning culture and allowing experiments to fail is crucial, as you only learn by failing.

CDO, CIOs and Digital Transformation Executives

They are the crucial people to help the business imagine what digital technologies can do. They should educate the board and the business teams about the power of having reliable, real-time data available for everyone connected. Instead of standardizing on systems and optimizing the silos, they should assist and lead in building a new infrastructure for connected services: end-to-end flows delivered on connected platforms.

These concepts won’t be realized soon. However, doing nothing is a big risk, as the traditional business will decline in a competitive environment. Time to act.

Departmental Managers

These are the people that should worry about their job in the long term. Their current mission might be to optimize their department within its own Profit & Loss budget. The future is about optimizing the information flow for the whole value chain, including suppliers and customers.

I wrote about it in “The Middle Management Dilemma.” Departmental Managers should become team leaders who inspire and support their team members instead of controlling the numbers.

Product Managers

This is a crucial role for the future, assuming a product manager is not only responsible for the marketing or development side of the product but also gets responsibility for understanding what happens with the product during production and sales performance. Understanding the full lifecycle performance and cost should be their mission, supported by a digital infrastructure.

Product Developers

They should read the book Products2019 to be aware there is so much related to their work. From this understanding, a product developer should ask the question:

“What can I do better to serve my internal and external customers?”

This question will not arise in a hierarchical organization where people are controlled by managers whose mission is to optimize their silo. Product Developers should be trained and coached to operate in a broader context, which should be part of your company’s mission. Too many people complain about the usability of their authoring and data management systems without a holistic understanding of why you need change processes and configuration management.

Product Lifecycle Management (PLM) deployers

Here I have a small concern that this might be read as “PLM-system users.” However, it should be clear that we mean people using product data at any moment along the product lifecycle, not necessarily in a single system.

This is again related to your company’s management culture. In the ideal world, people work with a purpose and get informed on how their contribution fits the company’s strategy and execution.

Unfortunately, in most hierarchical organizations, the strategy and total overview get lost, and people become measured resources.

New Hires and others

John continues with five other groups within the organization. I will not comment on them, as the answers are similar to the ones above – it is about organization and culture.

Educators and Students

This topic is very close to my heart, and one of the reasons I continue blogging about PLM practices. There is not enough attention to product development methodology or processes. Engineers can get many years of education in specific domains, like product design principles, available tools and technologies, performing physical and logical simulations.

Not much time is spent on teaching current best practices and business models for product lifecycle management.

Check in your country how many vendor-independent, methodology-oriented training courses you can find. Perhaps the only consistent organization I know is CIMdata, where the challenge is that they deliver training to companies after students have graduated. It would be great if educational institutes would embed serious time for product lifecycle management topics in their curriculum. The challenge, of course, is the time and budget needed to create materials and, next, prioritizing this topic on the overall agenda.

I am happy to participate in a Specialized Master education program aimed at the Products and Buildings Digital Engineering Manager (INGENUM). This program, organized by Arts Et Metiers in France, helps create the overview for understanding PLM and BIM. It is taught in French, as before COVID-19 this was an on-site training course in Paris.

Hopefully, there are more institutes offering PLM education – feel free to add them in the comments of this post.

Consultants, Integrators and Software Company Employees

Of course, it would be nice if everyone in these groups understood the total flow and processes within an organization and how they relate to each other. Too often, I have seen experts in a specific domain, for example a 3D CAD system, who have no clue about revisioning, the relation of CAD to the BOM, or the fundamentals of configuration management.

Consultants, Integrators and Software Company Employees have their own challenges, as their business model often looks for specialized skills they can sell to their clients, while broader, general knowledge comes from on-the-job experience.

And if you spend three years working full-time on a single project, or perhaps on three projects, your broader knowledge does not grow fast. You might become the hammer that sees nails everywhere.

For that reason, I recommend everyone in my ecosystem to invest personal time in reading about related topics of interest. Read LinkedIn-posts from others and learn to differentiate between marketing messages and people willing to share experiences. Don’t waste your time on the marketing messages; instead, react to and participate in the other discussions. A “Like” is not enough. Ask questions or add your insights.

In the context of my personal learning, I want to mention that I participated in the DigitalTwin-conference in the Netherlands this week. Unfortunately, due to the partial lockdown, it was mainly a virtual event.

I got several new insights that I will share with you soon. The event illustrated that, although Digital Twin as a buzzword might be hype, several of the participants shared examples of where they applied or plan to apply Digital Twin concepts. A great touch with reality.

Another upcoming conference, starting next week, is the PLM Roadmap 2020 – PDT conference. The theme, Digital Thread—the PLM Professionals’ Path to Delivering Innovation, Efficiency, and Quality, is not a marketing theme, as you can learn from the agenda. Step by step, we are learning here from each other.

Conclusion

John Stark started with the question of who needs Product Lifecycle Knowledge. In general, knowledge is power, and it does not come for free; you gain it through consultancy, reading, or training. Related to Product Lifecycle Management, everyone must understand the bigger picture: executives, because they need to steer the company in the right direction; everyone else, to streamline the company and enjoy working in a profitable environment where you contribute and can even inspire others.

An organization is like a human body; you cannot have individual cells or organs that optimize themselves only – we have a name for that disease. Want to learn more? Read this poem: Who should be the boss?

It was a pleasure to participate in the PI DX USA conference last week for several reasons. First, because Marketkey was able to organize the event in the same manner as before: presentations, networking meetings, and roundtable discussions. Virtual, of course, but in the same spirit. Secondly, the 3-day event took place in the late afternoon for Europe and lasted 4 to 5 hours per day, allowing a larger audience to learn from each other. Before, we had the European and American viewpoints separately; now we had the chance to discuss and listen together. A single version of the truth!

A few highlights of the sessions that I attended. There was enough to choose from if you look at the agenda from these three days.

Creating a Digital Enterprise: What are the Challenges and Where to Start?

The conference started with a panel discussion led by David Sherburne. The three panelists came from entirely different industries: Jaswinder Walia, CIO Engineering, GE Aviation; Erik Olson, VP Product Innovation and Development, Crocs; and Samuli Savo, SVP Product Management & Innovation, Stora Enso. It was interesting to see the commonalities and differences between these companies, all working towards a digital enterprise.

David’s last question was about getting advice from these gentlemen.

What mistakes to avoid and what to share?

Jaswinder: The mistake of doing too much analysis paralyzed the organization. Sometimes you need to move ahead and adapt during the journey – do not wait. Sometimes there is too much focus on the quality of a business solution and not enough attention to the flow of information.

Erik: COVID-19 was THE success. It pushed people to work with digital tools. They immediately had proof points, delivering a deal within 6 weeks.

Samuli: The success is in funding ideas. Samuli had a more extended session on this topic during the event. Do not invest in long-term projects – visible success is needed in a few months, not in 1 year.

I probably liked these three pieces of advice so much because they match what I am advising companies that are moving from a traditional PLM-approach towards a more model-based approach. Instead of waiting until others master this topic, start learning (small) and make sure you make progress. Learning is crucial for a digital transformation in the PLM-domain.

What is Stopping Manufacturers from Implementing Industry 4.0

This roundtable session was structured by Gavin Hill and Jonathan Bray, both from the AMRC (Advanced Manufacturing Research Centre), who gave several insights and examples from what AMRC has been doing as research in the context of Industry 4.0.

A roundtable is supposed to be an interactive session, which would have been a challenge as I noticed 49 people subscribed to this session. However, during the session, it became clear there was a significant silent majority.

Gavin and Jonathan had a lot to share. When the time came to interact with the audience, it was mostly other vendors talking about their Industry 4.0 vision or capabilities.

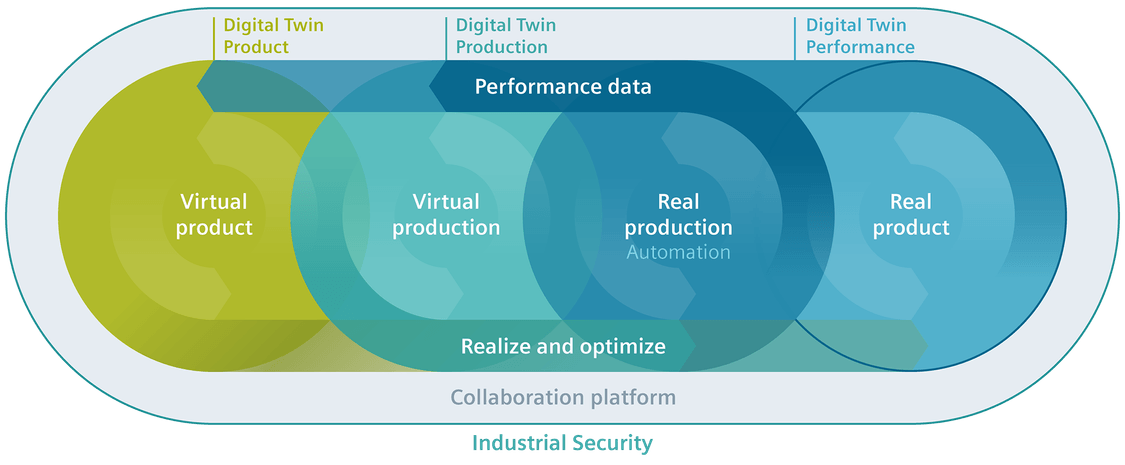

Vendors are perhaps more than ten years ahead with their vision, as CIMdata’s image on the left states. When you implement all these beautiful concepts, you will discover many frustrating gaps, as your company’s existing processes, people, and skills are not that easy to change.

Smart Manufacturing: Simulating Workflows to Drive Efficiency and Productivity

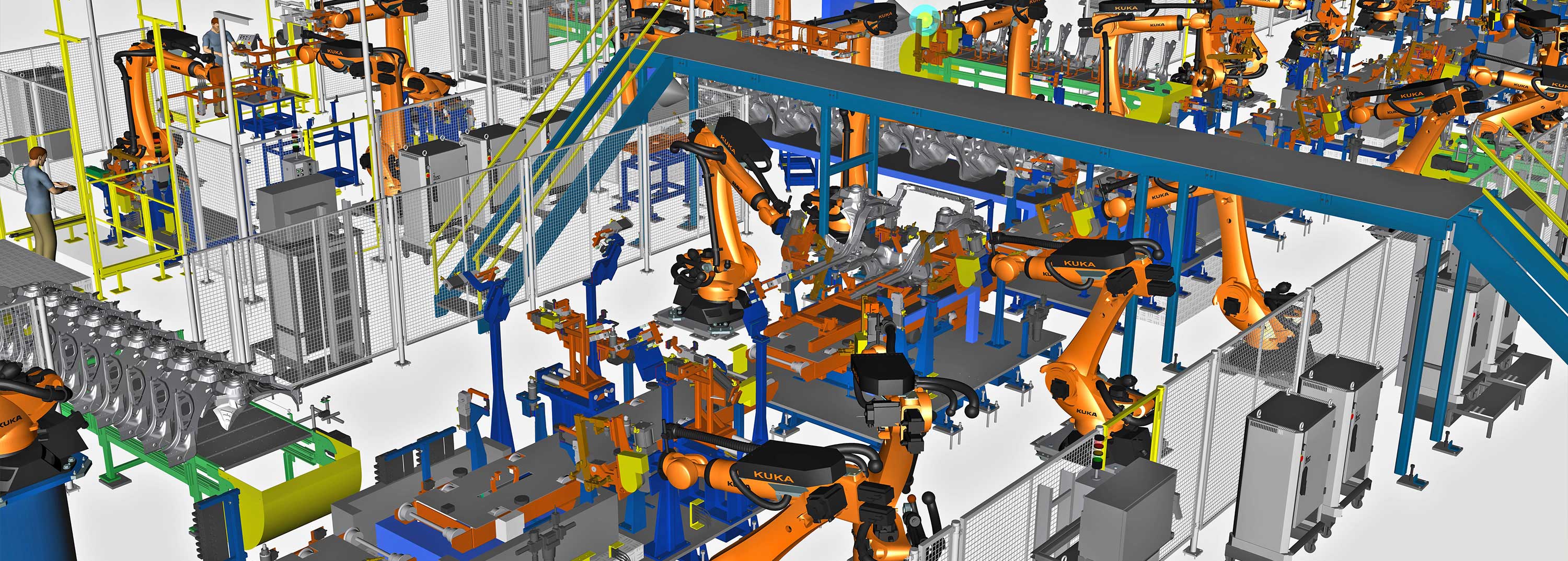

A company that has been active in Smart Manufacturing is AGCO, who has been presenting several times their future strategy and lessons learned at PI.

See Susan Lauda’s presentation in The weekend after PLMx Hamburg 2018

In this session, Andreas Frank and Dominik Hammerl shared how AGCO utilizes line balancing simulation to identify bottlenecks and create a productive, efficient workflow as one of the projects within their Smart Factory strategy.

Their current solution introduced a new tool that had fast user adoption. The big elephant in the room remains connecting all these tools, having a flow of consistent data between all enterprise systems.

No problem at this time, as I heard in most of the sessions that I attended: stop analyzing and solving all the details upfront, start doing and learning, and keep the ultimate vision in mind.

Transforming to a Software-Centric Business Model: How the Need for Data is Changing Business

This was an exciting session, showing digital transformation in action. Subramanian Kunchithapatham is VP Engineering of Sensormatic Solutions (Johnson Controls), which focuses on brick-and-mortar retail shops.

These shops have had to evolve as online shopping grows. The shift of focus towards customer experience in the shop requires these businesses to adapt. By using a digital twin concept for shop behavior and operations, Sensormatic can now sell software solutions that improve their customers’ performance, as you can see from the image below.

To What Degree Do We Need To Integrate PLM, ERP and MES?

This roundtable session, excellently moderated by Jan Johansson, Senior Director Digital Transformation at Terma A/S, was the type of roundtable you would like to participate in. I think the theme is relevant for all of us.

Statements varied from “ERP is our only system of truth as here we manage our financial execution” to “We should include CRM, CPQ and all other TLAs inside an enterprise – the connected enterprise.”

My observation was that many of us are still thinking in systems: an ERP-system, a PLM-system. We talk about “owning data” instead of “being accountable” for data in that context.

Another observation was to check who is responsible for PLM in your company. If it is engineering, probably your PLM-system is considered an engineering tool, not an infrastructure that enables product data to be available along the product lifecycle.

Another topic was how to deal with legacy data, a challenge in the aerospace industry: store data in neutral formats, or select a preferred vendor-related format and stick to it.

A great roundtable that hopefully inspired the participants to explore some of the options discussed or to connect and learn more from each other’s experiences.

Overcoming Data Management Challenges with AI

Nicely complementary to the previous roundtable was the session moderated by Mo (Muhannad) Alomari, AI Hub Lead at Rolls Royce plc. As an introduction, Mo dazzled us with the amount of data and knowledge related to gas turbines, which is impossible for human beings to comprehend or access without the support of Artificial Intelligence.

Mo also brought the knowledge graph to our attention through a video about the Google Knowledge Graph. We discussed this concept and its applicability to the PLM-domain. For sure, technology can bring high value for discovering information. However, human interpretation will still be required to filter out incorrect or unwanted associations. I think we all observe the challenges introduced by the “knowledge” algorithms on social media. Instead of building your knowledge, they try to drag you into even more absurd “facts.”

Mo also shared how, through AI, they are setting up data conversion practices. As you can imagine, a lot of Rolls Royce legacy data came from the era of paper and scanned drawings. Which text on a drawing is meaningful? Is the text a remark or an official annotation? AI-based best practices are not yet affordable for mainstream companies.

I believe we are all looking forward to learning from the best and bad practices of these frontrunners. As the group was small, it was an interesting discussion and learning session that you only can have by participating actively.

Embracing Digital in Face of Pandemic Disruption

I want to close my highlights by pointing to the final panel discussion. As the theme was to “hear from experts who have been guiding customers through digital transformation projects before COVID-19 and supporting their clients throughout the crisis,” you would expect an expert discussion.

Indeed, the first part illustrated the trend that COVID-19 accelerated the focus on an inclusive and flexible supply chain. Where traditional PLM-systems perhaps had a massive engineering focus, most panelists now report a shift of focus to the supply chain. The point of gravity has shifted.

Then the discussion shifted, as the newcomers in PLM started to claim that they do not have an upgrade issue thanks to their cloud offering. And when Paul Powers started to pitch that upgrades should be as easy as smartphone upgrades, and that BOM updates no longer need people because we have machine learning, it reminded me of my 2015 PDT presentation.

In The Perfect Storm or a Fatal Tsunami session (here on Slideshare), I predicted that AI and machine learning would remove many traditional PLM-related processes in the long term. However, the future solutions must be robust, not just a demo.

The discussion drifted toward “openness” and “PLM is dying out.” Again, here you could see the vendors’ fixation to talk about a single tool, not about a business strategy.

A statement like “PLM sucks” does not help the strategy. It shows that these vendors do not understand the PLM domain and prefer to create their own terminology, cornering PLM in the mechanical domain to be different. I will not go into the “PLM sucks” discussion, as I already mentioned this acronym at the PI 2016 event in Munich (slideshare).

However, we should be grateful that these companies sponsored this event. They imagine the (their) ideal future, and thanks to their contribution, we were able to have this event with fruitful discussions. Therefore, my thanks to all the sponsors who made this event happen. The challenge is always to imagine the future and then have a realistic path to get there on time.

Conclusion

It was exciting to participate in this PI DX event. The Marketkey-team has transposed the conference concept to a virtual event, very close to the physical event. In particular, well-moderated roundtable sessions based on Teams are the big differentiator for me compared to other virtual events I have seen.

As I expect COVID-19 will not disappear next week, I look forward to the next event with such interaction.

This time it is again about learning. Last week, I read John Stark’s book: Products2019: A project to map and blueprint the flow and management of products across the product lifecycle: Ideation; Definition; Realisation; Support of Use; Retirement and Recycling. John, a well-known PLM consultant and writer of academic books related to PLM, wrote this book during his COVID-19 lockdown. The challenge with PLM (books) is that they are, in a way, boring from the outside. Remember my post: How come PLM and CM are boring? (reprise)

This time John wrapped the “boring” part into a story related to Jane from Somerset, who, as part of her MBA studies, is performing a research project for Josef Mayer Maschinenfabrik. The project is to describe for the newly appointed CEO what happens with the company’s products all along the lifecycle.

A story with a cliffhanger:

What happened to Newt from Cleveland?

Seven years in seven weeks

Poor Jane: in seven weeks, she interviews people at three sites, two in Germany and one in France, and she does over a hundred interviews on her own. I realized that, through my relation with SmarTeam at that time, it probably took me seven years to get in front of all these stakeholders in a company.

I had much more fun most of the time, as you can see below. My engagements were teamwork, where you had some additional social relief after work. Jane even works at the weekends.

However, there are also many similarities, such as her daily rhythm on working days. Gasthaus Adler reflects many of the typical guesthouses that I have visited. Guests staying there with a laptop were signs of the new world. Like Jane, I enjoyed the weissbier and noticed that overhearing other guests is sometimes not good for their company’s reputation. Many personal and human experiences are wrapped into the storyline.

Spoiler: Tarzan meets Jane!

Cultural differences

The book also nicely illustrates the cultural differences between countries (Germany/France/US) and even between regions (North & South). Just check the breakfast at your location to see it.

Although most of the people interviewed by Jane contributed to her research, she also meets people who, for personal or political reasons, do not cooperate.

Having worked worldwide, including in Asian countries, I learned that understanding people and culture is crucial for successful PLM engagements.

John did an excellent job of merging cultural and human behavior into the book. I am sure we share many similar experiences, as neither this book nor my blog posts mention particular tools. It is about the people and the processes.

Topics to learn

You will learn that 3D CAD is not the most important topic, as perhaps many traditional vendor-related PDM consultants might think.

Portfolio Management is a topic that is well addressed. In my opinion, it should be addressed in every PLM roadmap, as this is where the business goals get connected to the products.

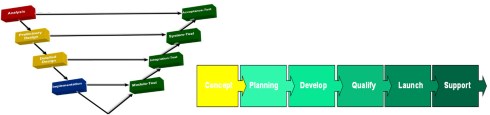

New Product Introduction, a stage-gate governance process, and the importance of Modularity are also topics that pop up in several cases.

The need for innovation, the Industry 4.0 and AI (Artificial Intelligence) buzz, the world of software development and the “War for Talent” can all be found in the book.

And I was happy that even product Master Data Management was addressed. In my opinion, not enough companies realize that a data-driven future requires data quality and data governance. I wrote about this topic last year: PLM and PIM – the complementary value in a digital enterprise.

There are fantastic technology terms, like APIs, microservices, Low Code platforms. They all rely on reliable and sharable data.

What’s next

Products2019 is written as the starting point for a sequel. In this book, you quickly learn all the aspects of a linear product lifecycle, as the image below shows.

I see an opportunity for Products2020 (or later). What is the roadmap for a company in the future?

How can companies become more data-driven and more agile in their go-to-market strategy, as software increasingly defines the product’s capabilities?

How do they move from a linear, siloed approach towards a horizontal flow of information that is market-driven and agile?

Perhaps we will learn what happened to Newt from Cleveland?

Meanwhile, we have to keep on learning to build the future.

My learning continues this week with PI DX USA 2020. Usually, this is a conference I would not attend, as traveling to the USA would have too much impact on my budget and time. Now I can hopefully learn and get inspired, and you can do the same! Feel free to apply for a free registration if you are a qualified end-user: check here.

And there is more to learn, already mentioned in my previous post:

- November 4th, the DigitalTwin Conference in the Netherlands: a one-day conference in the Central European time zone, mainly virtual, with a small local audience.

- November 17-18-19, the CIMdata PLM Road Map & PDT Fall 2020 conference: entirely virtual with short Q&A sessions, targeting both the European and American time zones.

Conclusion

John Stark wrote a great book to understand what is currently in most people’s heads in mid-size manufacturing companies. If you are relatively new to PLM, or if you have only been active in PDM, read it: it is affordable! With my series Learning from the past, I also shared twenty years of experience, more as a quick walkthrough with a more specialized view on some aspects of PLM. Keep on learning!

After the series about “Learning from the past,” it is time to start looking toward the future. I learned from several discussions that I probably work most of the time with advanced companies. I believe this should motivate companies that lag behind to look into the future even more.

If you look into the future for your company, you need new or better business outcomes. That should be the driver for your company. A company does not need PLM or a Digital Twin. A company might want to reduce its time to market and improve collaboration between all stakeholders. These objectives can be realized by different ways of working and an IT infrastructure to allow these processes to become digital and connected.

That is the “game”. Coming back to the future of PLM: we do not need a discussion about definitions; I leave this to the academics and vendors. We will see that the same applies to the concept of a Digital Twin.

My statement: The digital twin is not new. Everybody can have their own digital twin as long as you interpret the definition differently. Does this sound like the PLM definition?

The definition

I like to follow the Gartner definition:

A digital twin is a digital representation of a real-world entity or system. The implementation of a digital twin is an encapsulated software object or model that mirrors a unique physical object, process, organization, person, or other abstraction. Data from multiple digital twins can be aggregated for a composite view across a number of real-world entities, such as a power plant or a city, and their related processes.

As you can see, this is not a narrow definition. Now let us look at the different types of interpretation.
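
To make the Gartner definition a bit more tangible, here is a minimal sketch, assuming a digital twin is modeled as a plain object that encapsulates the state of one unique physical entity, plus a helper that aggregates many twins into a composite view. The names `DigitalTwin`, `composite_view` and the `output_mw` attribute are hypothetical illustrations, not part of any vendor product or of Gartner's text.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class DigitalTwin:
    """Hypothetical software object mirroring one unique real-world entity."""

    twin_id: str  # identifies the unique physical object, e.g. a serial number
    kind: str  # what is mirrored: "turbine", "pump", "person", ...
    state: dict = field(default_factory=dict)

    def update(self, reading: dict) -> None:
        # Encapsulation: data from the physical side only enters through this method.
        self.state.update(reading)


def composite_view(twins: list, key: str) -> dict:
    """Aggregate one attribute across many twins, e.g. all turbines in a power plant."""
    values = [t.state[key] for t in twins if key in t.state]
    return {
        "count": len(values),
        "total": sum(values),
        "average": mean(values) if values else None,
    }


# Usage: two turbine twins aggregated into a plant-level view.
plant = [DigitalTwin("T-001", "turbine"), DigitalTwin("T-002", "turbine")]
plant[0].update({"output_mw": 2.5})
plant[1].update({"output_mw": 3.5})
view = composite_view(plant, "output_mw")
```

The same object could mirror a process or a person; only the content of `state` changes, which is exactly why the definition is so broad.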

Single-purpose siloed Digital Twins

- Simple – data only

One of the most straightforward applications of a digital twin is, for example, my Garmin Connect environment. My device registers performance parameters (speed, cadence, power, heart rate, location) when cycling. Then, after every trip, I can analyze my performance. I can see changes in my overall performance and compare my performance with others in my category (weight, age, sex).

Based on that, I can decide if I want to improve my performance. My personal business goal is to maintain and improve my overall performance, knowing I cannot stop aging by upgrading my body.
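
A data-only twin like this boils down to storing measurements per trip and comparing them over time and against a peer group. The sketch below is a hypothetical illustration, not the Garmin Connect API: the `Ride` fields, the power-per-heart-rate `efficiency` indicator and the peer values are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Ride:
    date: str  # ISO date, so string sorting equals chronological sorting
    avg_power_w: float  # average power over the ride, in watts
    avg_hr_bpm: float  # average heart rate, in beats per minute


def efficiency(ride: Ride) -> float:
    # Crude indicator: watts delivered per beat-per-minute of heart rate.
    return ride.avg_power_w / ride.avg_hr_bpm


def trend(rides: list, window: int = 2) -> float:
    """Mean efficiency of the latest `window` rides minus that of the earlier rides."""
    ordered = sorted(rides, key=lambda r: r.date)
    earlier, recent = ordered[:-window], ordered[-window:]
    if not earlier:
        raise ValueError("need more rides than the window size")
    return mean(map(efficiency, recent)) - mean(map(efficiency, earlier))


def percentile(value: float, peers: list) -> float:
    """Percentage of riders in the same category scoring below `value`."""
    return 100.0 * sum(p < value for p in peers) / len(peers)


rides = [
    Ride("2020-09-01", 180, 150),
    Ride("2020-09-08", 185, 150),
    Ride("2020-09-15", 190, 150),
    Ride("2020-09-22", 195, 150),
    Ride("2020-09-29", 210, 150),
]
```

A positive `trend(rides)` means the recent rides were more efficient than the earlier ones; the business decision (train more or not) stays with the rider.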

On November 4th, 2020, I am participating in the (almost virtual) Digital Twin conference organized by Bits&Chips in the Netherlands. In the context of human performance, I look forward to Natal van Riel’s presentation: Towards the metabolic digital twin – for sure, this direction is not simple. Natal is a full professor at the Technical University in Eindhoven, the “smart city” in the Netherlands.

- Medium – data and operating models

Many connected devices in the world use the same principle. An airplane engine, an industrial robot, a wind turbine, a medical device and a train carriage all track their performance through this connection between the physical and the virtual, based on some form of digital connectivity.

The business case here is also monitoring performance, predicting maintenance, and upgrading the product when needed.

This is the domain of Asset Lifecycle Management, a practice that has existed for decades. Based on financial and performance models, the optimal balance between maintaining and overhauling has to be found. Repairs are disruptive and can be extremely costly. A manufacturing site that cannot produce can cost millions per day. Connecting data between the physical and the virtual model allows us to have real-time insights and be proactive. It becomes a digital twin.
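
The balance between maintaining and running on can be illustrated with a simple expected-cost comparison. This is a minimal sketch under strong assumptions: the failure probability `p_failure` is assumed to come from a model fed by the real-time data, and all cost figures are hypothetical; real asset management models are considerably richer.

```python
def expected_cost_of_waiting(p_failure: float, downtime_days: float,
                             cost_per_day: float, repair_cost: float) -> float:
    # Risk-weighted cost of running until the next maintenance window:
    # probability of failure times the cost of an unplanned stop.
    return p_failure * (downtime_days * cost_per_day + repair_cost)


def decide(p_failure: float, planned_cost: float, downtime_days: float,
           cost_per_day: float, repair_cost: float) -> str:
    """Return 'maintain now' when planned maintenance is cheaper than the expected failure cost."""
    waiting = expected_cost_of_waiting(p_failure, downtime_days, cost_per_day, repair_cost)
    return "maintain now" if planned_cost < waiting else "keep running"


# Hypothetical plant: 1 million per day of lost production, 2 days unplanned downtime.
early_warning = decide(0.10, 50_000, 2, 1_000_000, 200_000)  # expected 220,000
healthy = decide(0.01, 50_000, 2, 1_000_000, 200_000)  # expected 22,000
```

The value of the connected twin is that `p_failure` is updated from live data instead of fixed maintenance intervals, so the decision can be revisited continuously.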

- Advanced – data and connected 3D model

The digital twin we see the most in marketing videos is a virtual twin, using a 3D representation for understanding and navigation. The 3D representation provides a Virtual Reality (VR) environment with connected data. When pointing at the virtual components, information might appear, or some animation might take place.

Building such a virtual representation is a significant effort; therefore, there needs to be a serious business case.

The simplest business case is to use the virtual twin for training purposes. A flight simulator provides a virtual environment and behavior as if you are flying in a physical airplane; the behavior model behind the simulator should match the real behavior as closely as possible. However, as it is a model, it will never be 100% reality and requires updates when new findings or product changes appear.

A virtual model of a platform or plant can be used for training on Standard Operating Procedures (SOPs). In the physical world, there is no place or time to conduct such training. Here the complexity might be lower. There is a 3D Model; however, serious updates can only be expected after a major maintenance or overhaul activity.

These practices are not new either and are used in places where physical training cannot be done.

More challenging is the Augmented Reality (AR) use case. Here the virtual model, most of the time a lightweight 3D Model, connects to real-time data coming from other sources. For example, AR can be used when an engineer has to service a machine. The AR environment might project actual data from the machine and indicate service points and service procedures.

The positive side of the business case is clear for such an opportunity: service engineers always work with the right information in a real-time context. The main obstacles to implementing AR in practice are access to the data, the presentation of the data, and keeping the data in the AR environment matching reality.
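
The "keeping the data matching reality" part can be sketched as an overlay that joins service points on the 3D model with live readings and flags readings that are too old. This is a hypothetical illustration: `ServicePoint`, `build_overlay`, the `(value, timestamp)` feed format and the 30-second freshness limit are assumptions, not any AR product's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ServicePoint:
    component_id: str  # id of the component in the lightweight 3D model
    procedure: str  # service procedure to display at this point


def build_overlay(points, live_data, now, max_age=timedelta(seconds=30)):
    """Combine service points with live readings; live_data maps id -> (value, timestamp)."""
    overlay = []
    for point in points:
        reading = live_data.get(point.component_id)
        if reading is None:
            value, status = None, "no data"
        else:
            value, timestamp = reading
            # Old readings are flagged so the engineer does not trust them blindly.
            status = "live" if now - timestamp <= max_age else "stale"
        overlay.append({"component": point.component_id, "procedure": point.procedure,
                        "value": value, "status": status})
    return overlay


# Usage with a fixed clock and a hypothetical data feed.
now = datetime(2020, 11, 1, 12, 0, 0)
points = [ServicePoint("pump-1", "replace seal"),
          ServicePoint("valve-2", "check torque"),
          ServicePoint("motor-3", "lubricate")]
feed = {"pump-1": (4.2, now - timedelta(seconds=5)),
        "valve-2": (1.0, now - timedelta(minutes=5))}
overlay = build_overlay(points, feed, now)
```

Here `pump-1` is shown as live, `valve-2` as stale and `motor-3` has no data at all, which is the kind of gap that makes AR hard in practice.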

And although there are 3D Models in use, they are, to my knowledge, always created in silos, not yet connected to their design sources. Have a look at the Digital Twin conference from Bits&Chips, as mentioned before.

Several of the cases mentioned above will be discussed here. The conference’s target is to share real cases concluded by Q & A sessions, crucial for a virtual event.

Connected Virtual Twins along the product lifecycle

So far, we have been discussing the virtual twin concept, where we connect a product/system/person in the physical world to a virtual model. Now let us zoom in on the virtual twins relevant for the early parts of the product lifecycle: the manufacturing twin and the development twin. This image from Siemens illustrates the concept:

On slides, they show a completely integrated framework, which is the future vision. Let us first zoom in on the individual connected twins.

The digital production twin

This is the area of virtual manufacturing and creating a virtual model of the manufacturing plant. Virtual manufacturing planning is not a new topic. DELMIA (Dassault Systèmes) and Tecnomatix (Siemens) have been offering virtual manufacturing planning solutions for a long time.

At that time, the business case was based on the fact that the definition of a manufacturing plant and process done virtually allows you to optimize the plant before investing in physical assets.

This saves money, as there is no costly prototype phase to optimize production. In a virtual world, you can perform many trade-off studies without extra costs. That was the past (and, for many companies, still the current situation).

With the need to be more flexible in manufacturing to address individual customer orders without increasing the overhead of delivering these customer-specific solutions, there is a need for a configurable plant that can produce these individual products (batch size 1).

This is where the virtual plant model comes into the picture again. Instead of having a virtual model to define the ultimate physical plant, now the virtual model remains an active model to propose and configure the production process for each of these individual products in the physical plant.

This is partly what Industry 4.0 is about. Using a model-based approach to configure the plant and its assets in a connected manner. The digital production twin drives the execution of the physical plant. The factory has to change from a static factory to a dynamic “smart” factory.
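
A much simplified way to picture the production twin proposing the process per product is a plant model that derives an operation sequence from the options of a single customer order. The base routing, the option names and the `configure_routing` function are hypothetical; a real Industry 4.0 setup would also consider resources, capacity and timing.

```python
# Hypothetical plant model: a base routing plus the operations each option adds.
BASE_ROUTING = ["cut frame", "weld frame", "paint", "final assembly", "test"]
OPTION_OPERATIONS = {
    # option -> list of (base step it follows, extra operation)
    "electric_drive": [("final assembly", "install e-motor")],
    "extra_sensor": [("final assembly", "mount sensor"), ("test", "calibrate sensor")],
}


def configure_routing(options: set) -> list:
    """Derive the operation sequence for one customer order (batch size 1)."""
    unknown = options - set(OPTION_OPERATIONS)
    if unknown:
        # The plant model cannot produce what the order asks for.
        raise ValueError(f"unsupported options: {sorted(unknown)}")
    routing = []
    for step in BASE_ROUTING:
        routing.append(step)
        for option in sorted(options):  # sorted for a deterministic sequence
            for after_step, operation in OPTION_OPERATIONS[option]:
                if after_step == step:
                    routing.append(operation)
    return routing
```

For example, `configure_routing({"extra_sensor"})` inserts "mount sensor" after final assembly and "calibrate sensor" after test. The approach only works when the product data itself is configurable, which is the challenge described next.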

In the domain of Industry 4.0, companies are reporting progress. However, in my experience, the main challenge is still that the product source data is not yet built in a model-based, configurable manner and therefore requires manual rework. This is the area of Model-Based Definition, and I have written about this aspect several times. Latest post: Model-Based: Connecting Engineering and Manufacturing

The business case for this type of digital twin, of course, is to be able to deliver customer-specific products with extremely competitive speed and at reduced cost compared to standard products. It could be your company’s survival strategy. Although it is hard to predict the future, as we see from COVID-19, it is still crucial to anticipate it instead of waiting.

The digital development twin

Before a product gets manufactured, there is a product development process. In the past, products were purely mechanical with some electronic components. Nowadays, many companies actually manufacture systems, as the software controlling the product plays a significant role. In this context, model-based systems engineering is the upcoming approach to defining and testing a system virtually before committing to the physical world.

Model-Based Systems Engineering can define a single complex product and perform all kinds of analyses on the system even before there is a physical system in place. I will explain more about model-based systems engineering in future posts. In this context, I want to stress that having a model-based system engineering environment combined with modularity (do not confuse it with model-based) is a solid foundation for dealing with unique custom products. Solutions can be configured and validated against their requirements already during the engineering phase.
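
The combination of modularity and requirement validation can be sketched as a catalog of modules plus requirements expressed as checks on a configuration. This is a toy illustration, not an MBSE tool or SysML: the module catalog, the three requirements and the `validate` function are hypothetical.

```python
# Hypothetical module catalog: each module carries the attributes the requirements need.
MODULES = {
    "motor_s": {"type": "motor", "power_kw": 5, "mass_kg": 40},
    "motor_l": {"type": "motor", "power_kw": 11, "mass_kg": 70},
    "frame_std": {"type": "frame", "mass_kg": 30},
    "frame_hd": {"type": "frame", "mass_kg": 45},
}

# Requirements expressed as named checks on the summarized configuration.
REQUIREMENTS = {
    "one motor and one frame": lambda s: s["types"] == ["frame", "motor"],
    "at least 8 kW": lambda s: s["power_kw"] >= 8,
    "total mass at most 110 kg": lambda s: s["mass_kg"] <= 110,
}


def summarize(configuration: list) -> dict:
    modules = [MODULES[name] for name in configuration]
    return {
        "types": sorted(m["type"] for m in modules),
        "power_kw": sum(m.get("power_kw", 0) for m in modules),
        "mass_kg": sum(m["mass_kg"] for m in modules),
    }


def validate(configuration: list, requirements: dict = REQUIREMENTS) -> list:
    """Return the names of the requirements the configuration violates."""
    summary = summarize(configuration)
    return [name for name, check in requirements.items() if not check(summary)]
```

Each custom order is then a new combination of validated modules that is checked during engineering, long before anything physical exists.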

The business case for the digital development twin is easy to make. Shorter time to market, improved and validated quality, and reduced engineering hours and costs compared to traditional ways of working. To achieve these results, for sure, you need to change your ways of working and the tools you are using. So it won’t be that easy!

For those interested in Industry 4.0 and the Model-Based Systems Engineering approach, join me at the upcoming PLM Road Map 2020 and PDT 2020 conference on 17-18-19 November. As you can see from the agenda, there is a lot of attention to the Digital Twin and Model-Based approaches.

Three digital half-days, with hopefully a lot to learn while keeping our feet on the ground. In particular, I am looking forward to Marc Halpern’s keynote speech: Digital Thread: Be Careful What you Wish For, It Just Might Come True

Conclusion

It has been very noisy on the internet related to product features and technologies, probably due to COVID-19 and therefore disrupted interactions between all of us – vendors, implementers and companies trying to adjust their future. The Digital Twin concept is an excellent framing for a concept that everyone can relate to. Choose your business case and then look for the best matching twin.

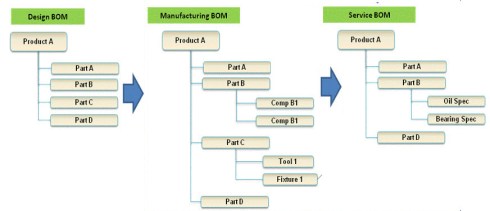

In the previous seven posts, learning from the past to understand the future, we have seen the evolution from manual 2D drawing handling to the emergence of ERP and CAD, followed by data management systems (PDM/PLM) and methodology (EBOM/MBOM) to create an infrastructure for product data from concept towards manufacturing.

Before discussing the extension to the SBOM-concept, I first want to discuss Engineering Change Management and Configuration Management.

ECM and CM – are they the same?

Often when you talk with people in my PLM bubble, the terms Change Management and Configuration Management are mixed or not well understood.

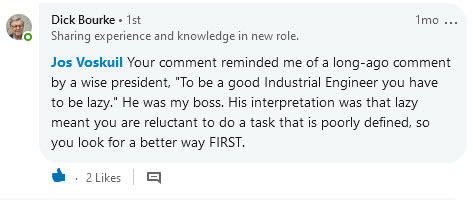

When talking about Change Management, we should clearly distinguish between OCM (Organizational Change Management) and ECM (Engineering Change Management). In this post, I will focus on Engineering Change Management (ECM).

When talking about Configuration Management, we also find two interpretations.

The first one is a methodology describing technically how to build, in the most efficient way, connected data structures in your PLM/CAD-environment that represent all product variations. This technology varies per PLM/CAD-vendor, and therefore I will not discuss it here. The other interpretation of Configuration Management is described on Wikipedia as follows:

Configuration management (CM) is a systems engineering process for establishing and maintaining consistency of a product’s performance, functional, and physical attributes with its requirements, design, and operational information throughout its life.

This is the area I will focus on this time.

And as if great minds think alike and are synchronized, I was happy to see Martijn Dullaart’s recent blog post, referring to a poll and a follow-up article on CM.

Here Martijn touches precisely on the topic I address in this post. I recommend that you first read his post: Configuration Management done right = Product-Centric, and then continue with the rest of this article.

Engineering Change Management

Initially, engineering change management was a departmental activity performed by engineering to manage the changes in a product’s definition. Other stakeholders are often consulted when preparing a change, which can be minor (affecting, for example, only engineering) or major (affecting engineering and manufacturing).

The way engineering change management has been implemented varies a lot. Over time, companies all around the world have defined their own change methodologies, and there is a lot of commonality between these approaches. However, terminology such as revision, version, major change and minor change might all vary.

I described the generic approach for engineering change processes in my blog post: ECR / ECO for Dummies from 2010.

The fact that companies have defined their own engineering change processes is not an issue when it works and is done manually. The real challenge came with PDM/PLM-systems that need to provide support for engineering change management.

Do you leave the methodology 100 % open, or do you provide business logic?

I have seen implementations where an engineer with a right-click could release an assembly without any constraints. Related drawings might not exist, parts in the assembly are not released, and more. To obtain a reliable engineering change management process, the company had to customize the PLM-system to its desired behavior.

An excellent exercise for a system integrator, as there was always a discussion with end-users who did not want to be restricted in case of an emergency (“we will complete the definition later” / “too many clicks” / “do I have to approve 100 parts?”). In many cases, the system integrator kept on customizing the system to adapt to all wishes. Often, the engineering change methodology on paper turned out to be incomplete or contradictory when the company tried to digitize the processes.

For that reason, the PLM-vendors that aim to provide Out-Of-The-Box solutions have been trying to predefine certain behaviors in their systems. For example, you cannot release a part when its specifications (drawings/documents) are not released. Or, you cannot update a released assembly without creating a new revision.
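
The two example rules can be expressed as a small piece of business logic. This is a minimal sketch, assuming a simple two-state lifecycle ("in work", "released") and a letter-based revision scheme; the `Item`, `release` and `change` names are hypothetical and not taken from any PLM vendor's data model.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    number: str
    revision: str = "A"
    state: str = "in work"  # "in work" or "released"
    specs: list = field(default_factory=list)  # drawings / documents (Item objects)
    children: list = field(default_factory=list)  # parts of an assembly (Item objects)


class ReleaseError(Exception):
    pass


def release(item: Item) -> None:
    # Rule 1: no release while any specification or child part is unreleased.
    blocking = [d.number for d in item.specs + item.children if d.state != "released"]
    if blocking:
        raise ReleaseError(f"{item.number} blocked by unreleased {blocking}")
    item.state = "released"


def change(item: Item) -> Item:
    """Rule 2: a released item is never edited in place; a change creates a new revision."""
    if item.state != "released":
        return item  # still in work: edit directly
    next_revision = chr(ord(item.revision) + 1)  # simplistic A -> B -> C scheme
    return Item(item.number, next_revision, "in work", list(item.specs), list(item.children))
```

Releasing a part whose drawing is still in work raises `ReleaseError`; once the drawing is released, the part can follow, and any later change produces revision B while revision A stays released. Each of these checks is exactly the kind of "too many clicks" restriction end-users tend to push back on.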

These rules speed up the implementation; however, they require more OCM (Organizational Change Management), as naming and methodology probably have to change within the company. This is the continuous battle in PLM-implementations, in particular where the company has a strong legacy or lacks business understanding when implementing PLM.

There is an excellent webcast in this context on Minerva PLM TV – How to Increase IT Project Success with Organizational Change Management.

Configuration Management

When we talk about configuration management, we have to think about managing the consistency of product data along the whole product lifecycle, as we have seen from the Wiki-definition before.

Configuration management existed long before we had IT-systems. Therefore, configuration management is more a collection of activities (see diagram above) to ensure that information is consistent for any given product. Consistent during design, where requirements match product capabilities. Consistent with manufacturing, where the manufacturing process is based on the correct engineering specifications. And consistent with operations, meaning that we have the full definition of the product in the field, the As-Built, in correct relation to its engineering and manufacturing definition.
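
The three kinds of consistency can be illustrated with a toy check across requirements, EBOM, MBOM and As-Built data. This is a simplified sketch, not the CM2 methodology or any tool's behavior: the dictionary layouts, the `check_consistency` function and the example parts are hypothetical.

```python
def check_consistency(requirements: set, ebom: dict, mbom: dict, as_built: dict) -> list:
    """Return consistency issues across design, manufacturing and operations."""
    issues = []
    # Design: every requirement should be satisfied by at least one engineering part.
    covered = set().union(*(part["satisfies"] for part in ebom.values()))
    for req in sorted(requirements - covered):
        issues.append(f"design: {req} is not satisfied by any part")
    # Manufacturing: the MBOM must reference the current engineering revisions.
    for number, rev in sorted(mbom.items()):
        if number not in ebom:
            issues.append(f"manufacturing: {number} is not in the engineering definition")
        elif ebom[number]["rev"] != rev:
            issues.append(f"manufacturing: {number} uses rev {rev}, "
                          f"engineering is at rev {ebom[number]['rev']}")
    # Operations: the As-Built must match what manufacturing planned to build.
    for number, rev in sorted(as_built.items()):
        if mbom.get(number) != rev:
            issues.append(f"operations: {number} built as rev {rev}, planned rev {mbom.get(number)}")
    return issues


# Hypothetical engineering definition: part -> current revision and satisfied requirements.
ebom = {"P1": {"rev": "B", "satisfies": {"R1"}},
        "P2": {"rev": "A", "satisfies": {"R2"}}}
```

With a consistent data set the function returns an empty list; an MBOM still pointing to an old revision immediately shows up both as a manufacturing issue and, downstream, as an operations issue.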

This consistency is crucial for products where the cost of an error can have a massive impact on the manufacturer. The first industries that invested heavily in configuration management were the Aerospace and Defense industries. Configuration management is needed in these industries as the products are usually complex, and failure can have a fatal impact on the company. Combined with many regulatory constraints, managing the configuration of a product and the impact of changes is a discipline on its own.

Other industries have also introduced configuration management nowadays. The nuclear power industry and the pharmaceutical industry use configuration management as part of their regulatory compliance. The automotive industry requires configuration management partly for compliance, but mainly driven by quality targets. An accident or a recall can be costly for a car manufacturer. Other manufacturing companies all have their own configuration management strategies, mainly depending on their own risk assessment. Configuration management is a proactive discipline: it costs money, time, people and potentially tools to implement it. In my experience, many of these companies try to do “some” configuration management, always hoping that a real disaster will not happen (or cannot happen). Proper configuration management allows you to perform reliable impact analysis for any change (image above).

What happens in the field?

When introducing PLM in mid-market companies, the dream was often that with the new PLM-system, configuration management would be there too.

Management believes the tools will fix the issue.

Because configuration management partly deals with a structured approach to managing changes, it has always been confused with engineering change management. Modern PLM-systems all have an impact analysis capability. However, most of the time, this impact analysis only reaches the content that is in the PLM-system. Configuration management goes further, covering the consistency of product information beyond the boundaries of the PLM-system.

If you think configuration management is crucial for your company, start by educating yourself before implementing anything in a tool. There are several places where you can learn all about configuration management.

- Probably the best-known organization is IpX (Institute for Process Excellence), teaching the CM2 methodology. Have a look here: CM2 certification and courses

- Closely related to IpX, Martijn Dullaart shares his thoughts coming from the field as Lead Architect for Enterprise Configuration Management at ASML (one of the Dutch crown jewels) in his blog: MDUX

- CMstat, a configuration and data management solution provider, provides educational posts from their perspective. Have a look at their posts, for example, PLM or PDM or CM

- If you want a quick overview of configuration management in general, targeted at the mid-market, have a look at this (outdated) course: Training for Small and Medium Enterprises on CONFIGURATION MANAGEMENT. Good for self-study to get an understanding of the domain.

To summarize

In regulated industries, Configuration Management and PLM are a must to ensure compliance and quality. Configuration management and (engineering) change management are, first of all, required methodologies that guarantee the quality of your products. The more complex your products are, the higher the need for change and configuration management.

PLM-systems require embedded engineering change management, which is part of the PDM domain. Many users do not like performing engineering change management in a system, as it feels like overhead: too much administration or too many mouse clicks.

So far, there is no golden egg that performs engineering change management automatically. In a data-driven environment, algorithms might speed up change management processes. Still, human decisions remain necessary.
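To illustrate where the human decision sits, here is a minimal sketch of a simplified change process as a state machine. The states, the `TRANSITIONS` table and the rule that approval or rejection requires a named decision-maker are assumptions for illustration, not the workflow of any particular PLM-system or of a specific methodology such as CM2.

```python
from enum import Enum
from typing import Optional


class ChangeState(Enum):
    REQUESTED = "requested"
    ANALYZED = "analyzed"
    APPROVED = "approved"
    REJECTED = "rejected"
    IMPLEMENTED = "implemented"


# Allowed transitions in this simplified, hypothetical change process
TRANSITIONS = {
    ChangeState.REQUESTED: {ChangeState.ANALYZED},
    ChangeState.ANALYZED: {ChangeState.APPROVED, ChangeState.REJECTED},
    ChangeState.APPROVED: {ChangeState.IMPLEMENTED},
    ChangeState.REJECTED: set(),
    ChangeState.IMPLEMENTED: set(),
}

# States that represent a human decision
DECISIONS = {ChangeState.APPROVED, ChangeState.REJECTED}


class EngineeringChange:
    def __init__(self, change_id: str) -> None:
        self.change_id = change_id
        self.state = ChangeState.REQUESTED
        self.decided_by: Optional[str] = None

    def move_to(self, target: ChangeState, decided_by: Optional[str] = None) -> None:
        """Advance the change, refusing skipped steps and anonymous decisions."""
        if target not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.change_id}: {self.state.value} -> {target.value} is not allowed")
        if target in DECISIONS:
            if not decided_by:
                raise ValueError(f"{self.change_id}: {target.value} requires a named decision-maker")
            self.decided_by = decided_by
        self.state = target


ec = EngineeringChange("EC-001")
ec.move_to(ChangeState.ANALYZED)  # impact analysis done
ec.move_to(ChangeState.APPROVED, decided_by="change board")  # the human decision
ec.move_to(ChangeState.IMPLEMENTED)
print(ec.state, ec.decided_by)
```

Algorithms could support the analysis step, but in this sketch the approval still cannot happen without a person.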

The same applies to configuration management. Having a PLM-system that connects all the data from concept, design and manufacturing in a single environment does not mean you are performing configuration management. You need to have processes in place, and their importance will vary depending on your product and industry.

Conclusion

In the first seven posts, we discussed the design and engineering practices, from CAD to EBOM, ending with the MBOM. Engineering Change Management and, in particular, Configuration Management are methodologies to ensure the consistency of data along the product lifecycle. These methodologies are connected and need to be fit for the future – more on this when we move to modern model-based approaches.

Closing note:

While finishing this blog post today, I read Jan Bosch’s post: Why you should not align. Jan touches on the same topic that I try to describe in my series Learning from the Past ….., as my intention is to make us aware that by holding on to practices from the past, we are blocking our future. I highly recommend reading his post. A quote:

The problem is, of course, that every time you resist change, you get a bit behind. You accumulate some business, process and technical debt. You become a little less “fitting” to the environment in which you’re operating

In the series learning from the past to understand the future, we have almost reached the current state of PLM before digitization became visible. In the last post, I introduced the value of having the MBOM preparation inside a PLM-system, so manufacturing engineering can benefit from early visibility and richer product context when preparing the manufacturing process.

Does everyone need an MBOM?

It is essential to realize that you do not need an EBOM and a separate MBOM when your primary process is Engineer-to-Order (ETO). The target of ETO is to deliver a unique customer product with no time to lose. Therefore, engineering can design with the manufacturing process in mind.

The need for an MBOM comes when:

- You are selling a specific product over a longer period of time. In that case, the engineering definition needs to depend as little as possible on supplier-specific parts.

- You are delivering your portfolio based on modules. Modules need to remain stable for as long as possible, and therefore independent of where they are manufactured and of supplier-specific parts. The better you can define your modules, the more customers you can reach over time.

- You have multiple manufacturing locations around the world, allowing you to source locally and manufacture based on local, plant-specific resources. I described these options in the previous post.
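The three cases share one pattern: a stable, supplier-independent EBOM from which each plant derives its own MBOM. The sketch below assumes a deliberately flat model (lists of part numbers, no quantities or structure); `derive_mbom`, the sourcing tables and all part numbers are hypothetical.

```python
def derive_mbom(ebom: list[str], local_sourcing: dict[str, str], plant_items: list[str]) -> list[str]:
    """Derive a plant-specific MBOM from a supplier-independent EBOM.

    Each generic EBOM part is replaced by the part this plant sources locally
    (keeping the generic part when there is no local alternative), and
    manufacturing-only items such as packaging or consumables are appended.
    The EBOM itself is never modified.
    """
    mbom = [local_sourcing.get(part, part) for part in ebom]
    return mbom + plant_items


# Invented example: one stable EBOM, two plants with different suppliers
ebom = ["motor-generic", "housing-100", "cable-2m"]
mbom_nl = derive_mbom(ebom, {"motor-generic": "motor-supplierA-55"}, ["packaging-EU"])
mbom_cn = derive_mbom(
    ebom,
    {"motor-generic": "motor-supplierB-12", "cable-2m": "cable-local-2m"},
    ["packaging-ASIA", "glue-K3"],
)
print(mbom_nl)
print(mbom_cn)
```

Because supplier choices live only in the plant-specific mapping, a supplier change in one plant leaves the EBOM and the other plants untouched.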

The challenge for all companies that want to move from ETO to BTO/CTO (Build-to-Order/Configure-to-Order) is that they need to change their methodology, building for the future while supporting the past. How to deal with the existing legacy and installed base typically needs to be analyzed per company.

Configurable EBOM and MBOM

In some previous posts, I mentioned that it is efficient to have a configurable EBOM. This means that various options and variants are managed in the same EBOM-structure, which can be filtered based on configuration parameters (date effectivity/version identifier/time baseline). A configurable EBOM is often called a 150% EBOM.
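A minimal sketch of such filtering, using date effectivity plus option codes as the configuration parameters. The `EbomLine` structure, the option codes and the half-open effectivity interval are assumptions for illustration; version identifiers and time baselines are left out.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class EbomLine:
    part: str
    effective_from: date
    effective_to: Optional[date] = None  # None means still effective
    options: frozenset[str] = frozenset()  # empty means used in every variant


def filter_ebom(lines: list[EbomLine], selected_options: set[str], on: date) -> list[str]:
    """Resolve a 150% EBOM into the parts of one product configuration.

    A line is kept when it is effective on the given date (effective_to is
    exclusive) and all of its option codes are in the customer's selection.
    """
    result = []
    for line in lines:
        in_effect = line.effective_from <= on and (line.effective_to is None or on < line.effective_to)
        if in_effect and line.options <= selected_options:
            result.append(line.part)
    return result


# Invented 150% EBOM: two motor options and a seat that changes in 2021
ebom_150 = [
    EbomLine("frame-A", date(2020, 1, 1)),
    EbomLine("motor-std", date(2020, 1, 1), options=frozenset({"STD"})),
    EbomLine("motor-turbo", date(2020, 1, 1), options=frozenset({"TURBO"})),
    EbomLine("seat-v1", date(2020, 1, 1), effective_to=date(2021, 1, 1)),
    EbomLine("seat-v2", date(2021, 1, 1)),
]
turbo_2021 = filter_ebom(ebom_150, {"TURBO"}, date(2021, 6, 1))
std_2020 = filter_ebom(ebom_150, {"STD"}, date(2020, 6, 1))
print(turbo_2021)
print(std_2020)
```

One structure holds all variants and revisions over time; each filter call yields a single buildable configuration.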

The MBOM can also be configurable, as a manufacturing plant might have largely common manufacturing steps for different product variants. By using the same process and filtering the MBOM, you manufacture the specific product version. In that case, we can talk about a 120% MBOM.
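The same filtering idea applies on the manufacturing side. In this minimal sketch, assumed for illustration, each MBOM line is tied to the process step in which it is consumed and is either common or restricted to certain variants; `MbomLine`, `resolve_mbom`, the variant codes and the step numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MbomLine:
    step: int  # process step in which the part is consumed
    part: str
    variants: frozenset[str] = frozenset()  # empty means common to all variants


def resolve_mbom(lines: list[MbomLine], variant: str) -> list[tuple[int, str]]:
    """Filter a configurable (120%) MBOM for one variant, ordered by process step.

    Common lines are always kept; variant-specific lines only when they apply.
    """
    selected = [line for line in lines if not line.variants or variant in line.variants]
    return [(line.step, line.part) for line in sorted(selected, key=lambda line: line.step)]


# Invented example: one shared process with mostly common steps
mbom_120 = [
    MbomLine(10, "frame-A"),
    MbomLine(20, "motor-std", frozenset({"STD"})),
    MbomLine(20, "motor-turbo", frozenset({"TURBO"})),
    MbomLine(30, "cooling-kit", frozenset({"TURBO"})),
    MbomLine(40, "packaging"),
]
print(resolve_mbom(mbom_120, "STD"))
print(resolve_mbom(mbom_120, "TURBO"))
```

Compared with the EBOM, the variant dimension here is narrower: the plant only distinguishes the variants its shared process actually builds.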

Note: the freedom of configuration in the EBOM is generally higher than the options in the configurable MBOM.

The real business change for EBOM/MBOM

So far, we have discussed the EBOM/MBOM methodology. It is essential to realize that this methodology only brings value when the organization adapts to benefit from the new possibilities.

One of the recurring errors in PLM implementations is that users of the system get an extended job scope without being given extra time to perform these activities. Meanwhile, other people downstream might benefit from these activities; however, they will not complain. I realized this already in 2009, when I described such a case: Where is my PLM ROI, Mr. Voskuil?

Now let us look at the recommended business changes when implementing an EBOM/MBOM-strategy:

- Working in a single, shared environment for engineering and manufacturing preparation is the first step to take.

Working in a PLM-system is not a problem for engineers who are used to the complexity of a PDM-system. For manufacturing engineers, however, a PLM-environment will be completely new. Manufacturing engineers might prepare their bill of process first in Excel and ultimately enter the complete details in their ERP-system. ERP-systems are not known for their user-friendliness; however, their interfaces are often so rigid that it is not difficult to master the process. Excel, on the other hand, is extremely flexible but not connected to anything else.

And now, this new PLM-system requires people to work in a more user-friendly environment with limited freedom. This is a significant shift in working methodology, which means manufacturing engineers need to be trained and supported over several months. Changing habits and keeping people motivated takes energy and time. In reality, where is the budget for these activities? See my 2016 post: PLM and Cultural Change Management – too expensive?

- From sequential to concurrent

Once your manufacturing engineers are able to work in a PLM-environment, they can start the manufacturing definition before the engineering definition is released. With the information available in their own environment, manufacturing engineers can participate in design reviews. They can validate critical manufacturing steps and discuss with engineers potential changes that will reduce the complexity or cost of manufacturing. As these changes are made before the product is released, the cost of change is much lower. Moreover, having engineering and manufacturing work partially in parallel reduces time to market.

One of the leading business drivers for many companies is bringing new products or enhancements to the market faster. Bringing engineering and manufacturing preparation together also means that the PLM-system can no longer be an engineering tool under the responsibility of the engineering department.

The responsibility for PLM needs to sit at a higher level in the organization to ensure well-balanced choices. A higher level in the organization automatically means more attention to business benefits and less attention to functions and features.

From technology to methodology – interface issues?

The whole EBOM/MBOM-discussion has often turned into a discussion about a PLM-system versus an ERP-system. The discussion then shifted to how these two systems could work together, moving the focus to the complexity of interfaces instead of the logical flow of information.

At an earlier PI event in München in 2016, I led a focus group on the interaction between PLM and ERP. The discussion was not about technology; it was all about the logical flow of information, from initial creation towards formal usage in a product definition (EBOM/MBOM).

What became clear from this workshop and other customer engagements is that people are often locked in their siloed way of thinking. Proposed information flows are based on system capabilities, not on the ideal flow of information. This is often the reason why a PLM/ERP-interface becomes complicated and expensive. System integrators do not want to push for organizational change; they prefer to develop an interface that adheres to the current customer expectations.

SAP has always promoted that they do not need an interface between engineering and manufacturing, as their data management starts from the EBOM. They forgot to mention that they have a difficult time managing (and almost no intention to manage) the early ideation and design phase. As a Dutch SAP country manager once told me: “Engineers are resources that do not want to be managed.” This remark says it all about the mindset of ERP.

Looking back at successful PLM-implementations, I can tell that the PLM-ERP interface has never been a technical issue once the methodology is transparent. The foundation is that a company agrees on the logical data flow from ideation through engineering towards design.
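Once the logical flow is agreed, the interface logic itself can stay simple. As a minimal sketch, assume a hypothetical agreement that an item is handed over to ERP only when it and everything below it are released; `ready_for_erp`, the status values and the product structure are illustrative, not a description of any PLM or ERP product.

```python
def ready_for_erp(item: str, children: dict[str, list[str]], status: dict[str, str]) -> bool:
    """Return True when an item and its entire substructure are released.

    Under the hypothetical agreed flow, only such items move from PLM
    (ideation, design, preparation) to ERP (planning, purchasing, production).
    """
    if status.get(item) != "released":
        return False
    return all(ready_for_erp(child, children, status) for child in children.get(item, []))


# Invented example structure and lifecycle states
children = {"product-X": ["frame-A", "motor-turbo"], "motor-turbo": ["rotor-7"]}
status = {"product-X": "released", "frame-A": "released", "motor-turbo": "released", "rotor-7": "in_work"}

print(ready_for_erp("product-X", children, status))  # rotor-7 is still in work
status["rotor-7"] = "released"
print(ready_for_erp("product-X", children, status))
```

The rule encodes an organizational agreement, not a technical trick; with a different agreement, only this one function would change.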