The summer holidays are over, and with the PLM Global Green Alliance, we are glad to continue with our series: PLM and Sustainability, where we interview PLM-related software vendors, talking about their sustainability mission and offering.

We talked with SAP, Autodesk, and Dassault Systèmes. This week we spoke with Sustaira, and soon we will talk with Aras. Sustaira, an independent Siemens partner, is the provider of a sustainability platform based on Mendix.

SUSTAIRA

The interview with Vincent de la Mar, founder and CEO of Sustaira, was quite different from the previous interviews. In the earlier interviews, we talked with people driving sustainability in their company and software portfolio. Now with Sustaira, we were talking with a relatively new company with a single focus on sustainability.

Sustaira provides an open platform targeting purely sustainability by offering relevant apps and infrastructure based on Mendix.

Listen to the interview and discover the differences and the potential for you.

Slides shown during the interview and additional company information: Sustaira Overview 2022.

What we have learned

Using the proven technology of the Mendix platform allows you to build a data-driven platform focused on sustainability for your company.

As I wrote in my post PLM and Sustainability, there is a need to be data-driven and connected to federated data sources to obtain accurate data.

This is a technology challenge. Sustaira, as a young company, has taken up this challenge and provides various sustainability-related apps on its platform. At the same time, these apps remain adaptable to your organization.

Secondly, I like the concept that although Mendix is part of the Siemens portfolio, you do not need to have Siemens PLM installed. The openness of the Sustaira platform allows you to implement it in your organization independent of your PLM infrastructure.

The final observation – the rule of people, process, and technology – is still valid. To implement Sustaira in an efficient and valuable manner, you need to be clear about your objectives and sustainability targets within the organization. And these targets should be more detailed than the corporate statement in the annual report.

Want to learn more?

To learn more about Sustaira and the wide variety of offerings, you can explore any of these helpful links:

- First, here is a short video introducing Sustaira

- With this link, anyone can sign up for the free version of the Sustaira platform and begin exploring today!

- Lastly, for additional information, demos, downloadable content, and more, head over to the Sustaira Content Hub.

Conclusion

It was interesting to learn about Sustaira and how they started with a proven technology platform (Mendix) to build their sustainability platform. Being sustainable involves using trusted data and calculations to understand the environmental impact at every lifecycle stage.

Again we can state that the technology is there. Now it is up to companies to act and connect the relevant data sources to underpin and improve their sustainability efforts.

In the last weeks, I had several discussions related to sustainability. What can companies do to become sustainable and prove it? Unfortunately, there is a lot of greenwashing at this moment.

Look at this post: 10 Companies and Corporations Called Out For Greenwashing.

Therefore, I thought about the practical steps a company should take to prepare for a sustainable future, as the change will not happen overnight. It reminds me of the path towards a digital, model-based enterprise (my other passion). In my post Why Model-Based definition is important for all, I mentioned that MBD (Model-Based Definition) could be considered the first stepping-stone toward a Model-Based enterprise.

The analogy for Material Compliance came after an Aras webinar I watched a month ago. The webinar How PLM Paves the Way for Sustainability, with Insensia (an Aras implementer), demonstrates how material compliance is the first step toward sustainable product development.

Let’s understand why

The first steps

Currently, companies that deliver solutions mostly focus only on economic gains. The projects or products they sell need to be profitable and competitive, which makes sense if you want a future.

And this would not have changed if awareness of the climate impact had not emerged.

First, CFCs and hazardous materials led to new regulations. Next, global agreements to fight climate change (the Paris Agreement and more to come) have led and will lead to regulations that change how products are developed. All companies will have to change their product development and delivery models when this becomes a global mandate.

A required change is likely going to happen. In Europe, the Green Deal is making steady progress. However, what will happen in the US remains a mystery, as even its Supreme Court is becoming a political entity against sustainability (money first).

Still, compliance with regulations will be required if a company wants to operate in a global market.

What is Material Compliance?

In 2002, the European Union published a directive to restrict hazardous substances in materials. The directive, known as RoHS (Restriction of Hazardous Substances), was mainly related to electronic components. In the first directive, six hazardous materials were restricted.

The most infamous are cadmium (Cd), lead (Pb), and mercury (Hg). From 2006, all products on the EU market had to be RoHS compliant, and since 2011 RoHS compliance has been connected to the CE marking of products sold in the European market.

In 2015, four additional chemical substances were added: phthalates, mostly used to soften PVC, which can also affect the immune system. Meanwhile, other countries have introduced similar RoHS regulations; therefore, we can see it as a global restriction. Read more here: The RoHS guide.

Consumers buying RoHS-compliant products can now be assured that none of the restricted substances exceeds its threshold value in the product. The challenge for the manufacturer is to go through each component of the MBOM to understand whether it contains one of the ten restricted substances and, if so, in which quantity.

Therefore, manufacturers need to obtain this information from each relevant supplier in the form of a RoHS declaration.
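
To make the per-component check concrete, here is a minimal Python sketch, assuming each supplier declaration lists substance masses per homogeneous material. The limits are the RoHS maximum concentration values (0.1 % by weight in a homogeneous material, 0.01 % for cadmium); the class and function names are hypothetical and not taken from any PLM system.

```python
from dataclasses import dataclass

# RoHS maximum concentration values, as a fraction of the weight of a
# homogeneous material: 0.1 % for nine substances, 0.01 % for cadmium.
ROHS_LIMITS = {
    "Pb": 0.001, "Hg": 0.001, "Cd": 0.0001, "Cr(VI)": 0.001,
    "PBB": 0.001, "PBDE": 0.001,
    "DEHP": 0.001, "BBP": 0.001, "DBP": 0.001, "DIBP": 0.001,
}


@dataclass
class HomogeneousMaterial:
    """One material from a (hypothetical) supplier declaration."""
    name: str
    mass_g: float
    substances_g: dict[str, float]  # declared substance -> mass in grams


def rohs_violations(material: HomogeneousMaterial) -> list[str]:
    """Return the restricted substances whose concentration exceeds the limit."""
    exceeded = []
    for substance, mass in material.substances_g.items():
        limit = ROHS_LIMITS.get(substance)  # None for unrestricted substances
        if limit is not None and mass / material.mass_g > limit:
            exceeded.append(substance)
    return exceeded


solder = HomogeneousMaterial("solder", 10.0, {"Pb": 0.005, "Sn": 9.9})
coating = HomogeneousMaterial("coating", 10.0, {"Cd": 0.002})
print(rohs_violations(solder))   # []
print(rohs_violations(coating))  # ['Cd']
```

In Step 1 below, an employee performed exactly this comparison by hand, from a PDF declaration, for every part.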

Besides RoHS, additional regulations protect the environment and the consumer. For example, REACH (Registration, Evaluation, Authorization and Restriction of Chemicals) compliance deals with the regulations created to improve the environment and protect human health. In addition, REACH addresses the risks associated with chemicals and promotes alternative methods for the hazard assessment of substances.

The compliance process in four steps

Material compliance is, most of all, the job of engineers. Therefore, around 2005, some of my customers started to add RoHS support to their PLM environment.

Step 1

The image below shows the simple implementation: the PDF form from the supplier was linked to the (M)BOM part.

An employee had to manually add the substances to a table and ensure the threshold values were not reached. But, of course, there was already a selection of preferred manufacturer parts during the engineering phase. Therefore, RoHS compliance was almost guaranteed when releasing the EBOM.

But this process could be done more cleverly.

Step 2

So the next step was that manufacturers started to extend their PLM data model with additional attributes for RoHS compliance. Again, this could be done cleverly, or extremely generically by adding the attributes to all parts.

So now, when receiving the material declaration, a person just has to add the substance values to the part attributes. Then, through either standard functionality or customization, a compliance report can be generated for the (M)BOM. This already saves some work.
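
Once compliance is stored as a part attribute, the (M)BOM report becomes a simple tree walk. The sketch below assumes a hypothetical `rohs_compliant` attribute that stays `None` until a declaration has been entered, and it evaluates only leaf parts, on the assumption that an assembly's status follows from its components.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Part:
    number: str
    # Hypothetical RoHS attribute: None means no declaration entered yet.
    rohs_compliant: Optional[bool] = None
    children: list["Part"] = field(default_factory=list)


def compliance_report(root: Part) -> dict[str, list[str]]:
    """Walk the BOM tree and sort every leaf part into a report bucket."""
    report: dict[str, list[str]] = {
        "compliant": [], "non_compliant": [], "missing_declaration": []
    }
    stack = [root]
    while stack:
        part = stack.pop()
        if part.children:  # assembly: its status follows from its components
            stack.extend(part.children)
        elif part.rohs_compliant is None:
            report["missing_declaration"].append(part.number)
        elif part.rohs_compliant:
            report["compliant"].append(part.number)
        else:
            report["non_compliant"].append(part.number)
    return report


bom = Part("A-100", children=[
    Part("R-1", True),
    Part("SUB-2", children=[Part("C-7", False), Part("U-3")]),
])
print(compliance_report(bom))
```

A release check could, for example, block the (M)BOM while either the non-compliant or the missing-declaration list is non-empty.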

Step 3

The next step was to give the suppliers direct access to these attributes and push them to do the work.

This reduces the overhead for the manufacturer again, because only the supplier needs to do the job for its customer.

Step 4

In step 4, we see a truly connected environment, where information is stored only once, referenced by manufacturers, and kept up to date by the part suppliers.

Who will host the RoHS database? From some of my customer projects, I recall IHS as a data provider, and judging from their website, they seem to be in this business.
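
The difference between step 3 and step 4 is copying versus referencing. The minimal sketch below assumes a hypothetical shared registry with one declaration record per manufacturer part number: the supplier revises it once, and every manufacturer BOM that references the part sees the update. It does not model any real data provider's service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Declaration:
    mpn: str              # manufacturer part number
    rohs_compliant: bool
    revision: int


class DeclarationRegistry:
    """Hypothetical shared store: one record per part, owned by the supplier."""

    def __init__(self) -> None:
        self._records: dict[str, Declaration] = {}

    def publish(self, mpn: str, rohs_compliant: bool) -> Declaration:
        # Supplier side: create or revise the single record for this part.
        previous = self._records.get(mpn)
        revision = previous.revision + 1 if previous else 1
        record = Declaration(mpn, rohs_compliant, revision)
        self._records[mpn] = record
        return record

    def lookup(self, mpn: str) -> Optional[Declaration]:
        return self._records.get(mpn)


class ManufacturerBom:
    """Customer side: stores references (MPNs) only, never copied values."""

    def __init__(self, registry: DeclarationRegistry, mpns: list[str]) -> None:
        self.registry = registry
        self.mpns = mpns

    def open_items(self) -> list[str]:
        # Resolve each reference at query time; a missing record is open too.
        items = []
        for mpn in self.mpns:
            record = self.registry.lookup(mpn)
            if record is None or not record.rohs_compliant:
                items.append(mpn)
        return items


registry = DeclarationRegistry()
registry.publish("MPN-1", rohs_compliant=False)
bom_a = ManufacturerBom(registry, ["MPN-1", "MPN-2"])
bom_b = ManufacturerBom(registry, ["MPN-1"])
registry.publish("MPN-1", rohs_compliant=True)  # supplier updates once
print(bom_a.open_items(), bom_b.open_items())
```

Because the BOMs hold only references, both manufacturers see the supplier's revision without anyone re-entering data.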

Where is your company at this moment?

Having seen the four stepping-stones leading towards efficient RoHS compliance, you see the challenge of moving from a document-driven approach to a data-driven approach.

Now let’s look into the future. Concepts like Life Cycle Assessment (LCA) or a Digital Product Passport (DPP) will require a fully connected approach.

Where is your company at this moment? Have you reached RoHS compliance step 3 or 4? It is a first step toward learning to work in a connected, data-driven way.

Life Cycle Assessment – the ultimate target

A lifecycle assessment, or lifecycle analysis (two times LCA again), is a methodology to assess the environmental impact of a product (or solution) throughout its whole lifecycle: material sourcing, manufacturing, transportation, usage, service, and decommissioning. And by assessing, we mean in a clear, verifiable, and shareable manner, not just guessing.
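
The bookkeeping behind "clear, verifiable, and shareable" can be sketched as follows, assuming a single impact indicator (for example kg CO2e) computed as activity amount times impact factor per lifecycle stage, with a recorded source for every factor. A real LCA (for example following ISO 14040/14044) covers several impact categories and draws factors from databases such as ecoinvent; the numbers below are placeholders, not real emission factors.

```python
from dataclasses import dataclass

STAGES = ("material sourcing", "manufacturing", "transportation",
          "usage", "service", "decommissioning")


@dataclass(frozen=True)
class Activity:
    stage: str
    amount: float   # activity quantity, e.g. kg of material, kWh, tonne-km
    factor: float   # impact per unit of amount, e.g. kg CO2e per kWh
    source: str     # reference for the factor, so the result is verifiable


def assess(activities: list[Activity]) -> dict[str, float]:
    """Sum amount x factor per lifecycle stage (single impact indicator)."""
    totals = {stage: 0.0 for stage in STAGES}
    for activity in activities:
        if activity.stage not in totals:
            raise ValueError(f"unknown lifecycle stage: {activity.stage}")
        totals[activity.stage] += activity.amount * activity.factor
    return totals


# Placeholder numbers for illustration only, not real emission factors.
inventory = [
    Activity("material sourcing", 2.0, 3.0, "placeholder factor A"),
    Activity("usage", 100.0, 0.5, "placeholder factor B"),
]
totals = assess(inventory)
print(totals, sum(totals.values()))
```

Keeping the `source` next to each factor is what makes the outcome auditable; without connected data sources, every factor and amount has to be maintained by hand.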

Traditional engineering education does not teach these skills, although LCA is not new, as this 10-year-old YouTube video from Autodesk illustrates:

What is new is the global understanding that we are reaching the limits of what our planet can endure and that we must act now. Upcoming international regulations will enforce life cycle analysis reporting for manufacturers and service providers. This will happen gradually.

Meanwhile, we all should work on a circular economy, the major framework for a sustainable planet.

I wrote about these combined topics in my post SYSTEMS THINKING – a must-have skill in the 21st century.

Life Cycle Analysis – Digital Twin – Digitization

The big elephant in the room is that introducing LCA in your company has a lot to do with the digitization of your company. Keeping assessment data in documents requires too much human effort to maintain it at the right quality. These costs are not affordable if your competitor is more efficient.

For the analysis part, a model-based, data-driven infrastructure is the most efficient way to run virtual analysis, using digital twin concepts at each stage of the product lifecycle.

Virtual models for design, manufacturing, and operations allow your company to make trade-off studies at low cost before committing to the physical world. 80% of the environmental impact of a product comes from decisions made in the virtual world.

Once you have your digital twins for each phase of the product lifecycle, you can benchmark your models with data reported from the physical world. All these interactions can be found in the beautiful Boeing diamond below, which I discussed before in A digital twin for everybody.
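
Benchmarking a twin against field data can start as simply as comparing predicted and reported values. The sketch below assumes both sides are available as named quantities and flags those whose relative deviation exceeds a tolerance; the function name, quantity names, and the 10 % default tolerance are illustrative assumptions.

```python
def benchmark(predicted: dict[str, float], measured: dict[str, float],
              rel_tolerance: float = 0.1) -> dict[str, float]:
    """Return the relative deviation of each quantity outside the tolerance."""
    flagged = {}
    for name, model_value in predicted.items():
        if name not in measured:
            continue  # no field data reported for this quantity yet
        observed = measured[name]
        # Fall back to the absolute deviation when the model predicts zero.
        scale = abs(model_value) or 1.0
        deviation = abs(observed - model_value) / scale
        if deviation > rel_tolerance:
            flagged[name] = deviation
    return flagged


twin = {"energy_kwh": 100.0, "temperature_c": 40.0}
field_data = {"energy_kwh": 125.0, "temperature_c": 41.0}
print(benchmark(twin, field_data))  # {'energy_kwh': 0.25}
```

A flagged quantity signals that either the model or the physical product needs attention, which is the feedback loop the diamond visualizes.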

Conclusion

Efficient and sustainable life cycle assessment and analysis will come from connected information sources. The old document-driven paradigm is too costly and too slow to maintain, in particular because, with LCA, the scope is not a subset of your product but your full product and its full lifecycle. LCA is another stepping-stone towards the near future. Where are you?

Stepping-stone 1: From Model-Based Definition to an efficient Model-Based, Data-driven Enterprise

Stepping-stone 2: From RoHS compliance to an efficient and sustainable Model-Based, data-driven enterprise.

A month ago, I wrote It is time for BLM – PLM is not dead, which triggered the discussion I had anticipated. It is practically impossible to change an established acronym. Like CRM and ERP, the term PLM is there to stay.

However, it was also interesting to see that people acknowledge that PLM should have a business scope and deserves a place at the board level.

The importance of PLM at business level is well illustrated by the discussion related to this LinkedIn post from Matthias Ahrens referring to the CIMdata roadmap conference CEO discussion.

My favorite quote:

“Now it’s ‘lifecycle management,’ not just EDM or PDM or whatever they call it. Lifecycle management is no longer just about coming up with new stuff. We’re seeing more excitement and passion in our customers, and I think this is why.”

But it is not that simple

This is a perfect message for PLM vendors to justify their broad portfolio. However, as they do not focus so much on new methodologies and organizational change, their messages remain at the marketing level.

In the field, there is more and more awareness that PLM has a dual role. Just when I planned to write a post on this topic, Adam Keating, CEO and founder of CoLab, wrote the post System of Record meet System of Engagement.

Read the post and the comments on LinkedIn. Adam points to PLM as a System of Engagement, meaning an environment where the actual work is done all the time. The challenge I see for CoLab, like other modern platforms, e.g., OpenBOM, is how it can become an established solution within an organization. Their challenge is that they are positioned within the engineering scope.

I believe for these solutions to become established in a broader customer base, we must realize that there is a need for a System of Record AND System(s) of Engagement.

In my discussions related to digital transformation in the PLM domain, I addressed them as separate, incompatible environments.

See the image below:

Now let’s have a closer look at both of them

What is a System of Record?

For me, PLM has always been the System of Record for product information. In the coordinated approach, engineers worked in their own systems. At a certain moment in the process, they needed to publish shareable information, a document (e.g., PDF) or a BOM table (e.g., Excel). The PLM system would support New Product Introduction processes and Release and Change processes, and it would be the single point of reference for product data.

The reason I use the bin-image is that companies, most of the time, do not have an advanced information-sharing policy. If the information is in the bin, the experts will find it. Others might recreate the same information elsewhere, due to a lack of awareness.

Most of the time, engineers did not like PLM systems because of the integrations with their tools. Suddenly they were losing a lot of freedom due to check-in / check-out, naming conventions, attributes, and more. Current PLM systems are good for a relatively stable product, but what happens when the product has a lot of parallel iterations (hardware & software, for example)? How do you deal with Work In Progress?

Last week I visited the startup company PAL-V in the context of the Dutch PDM Platform. As you can see from the image, PAL-V is working on the world’s first Flying Car Production Model. Their challenge is to be certified for flying (here, the focus is on the design) and to be certified for driving (here, the focus is on manufacturing reliability/quality).

During the PDM platform session, they showed their current Windchill implementation, which focused on managing and providing evidence for certification. For this type of company, the System of Record is crucial.

Their (mainly) SolidWorks users are trained to work in a controlled environment. The Aerospace and Automotive industries have started this way, which we can see reflected in current PLM systems.

And to finish with a PLM buzzword: modern systems of record provide a digital thread.

What is a System of Engagement?

The characteristic of a system of engagement is that it supports the user in real time. This could be an environment for work in progress. More importantly, however, all future concepts from MBSE, Industry 4.0, and Digital Twins rely on connected and real-time data.

As I previously mentioned, Digital Twins do not run on documents; they run on reliable data.

A system of engagement is an environment where different disciplines work together, using models and datasets. I described such an environment in my series The road to model-based and connected PLM. The System of Engagement environment must be user-friendly enough for these experts to work in.

Due to the different targets of a system of engagement, I believe we have to talk about Systems of Engagement, as there will be several engagement models on a connected (federated) set of data.

Yousef Hooshmand shared the Daimler paper “From a Monolithic PLM Landscape to a Federated Domain and Data Mesh” in that context. It is highly recommended reading if you are interested in a potential future PLM infrastructure.
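
The principle of several engagement models on one federated set of data can be sketched in a few lines: each domain keeps owning its records, and a view across domains is composed at query time instead of being copied into one monolith. This is not the architecture from the Daimler paper; the domain names and stores below are hypothetical stand-ins for separate systems.

```python
from typing import Any, Callable, Optional

# A lookup returns the record a domain owns for an item, or None.
Lookup = Callable[[str], Optional[dict[str, Any]]]


def federated_view(item_id: str,
                   domains: dict[str, Lookup]) -> dict[str, dict[str, Any]]:
    """Compose a view at query time; every record stays in its own domain."""
    view: dict[str, dict[str, Any]] = {}
    for domain, lookup in domains.items():
        record = lookup(item_id)
        if record is not None:
            view[domain] = record
    return view


# Hypothetical domain stores, standing in for separate systems.
requirements = {"P-1": {"max_mass_kg": 5}}
mbom = {"P-1": {"plant": "Plant A", "routing": "R-10"}}
service: dict[str, dict[str, Any]] = {}  # no service record yet for P-1

view = federated_view("P-1", {
    "requirements": requirements.get,
    "manufacturing": mbom.get,
    "service": service.get,
})
print(view)
```

In practice, each lookup would be a call to another system's API; the hard part is agreeing on the shared identifiers that make such a join possible.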

Let’s look at two typical Systems of Engagement without going into depth.

The MBSE System of Engagement

In this environment, systems engineering is performed in a connected manner, building connected artifacts that should be available in real-time, allowing engineers to perform analysis and simulations to construct the optimal virtual solution before committing to physical solutions.

It is an iterative environment.

The MBSE space will also be the place where sustainability needs to start. Environmental impact, the planet as a stakeholder, should be added to the engineering process. Life Cycle Assessment (LCA) defining the process and material choices will be fed by external data sources, for example, managed by ecoinvent, Higg and others to come. It is a new, emerging market.

The Digital Twin

In any phase of the product lifecycle, we can consider a digital twin, a virtual data-driven environment to analyze, define and optimize a product or a process. For example, we can have a digital twin for manufacturing, fulfilling the Industry 4.0 dreams.

We can have a digital twin for operation, analyzing, monitoring, and optimizing a physical product in the field. These digital twins will only work if they use connected and federated data from multiple sources. Otherwise, the operating costs for such a digital twin will be too high (due to the inefficiency of keeping the data accurate).

In the end, you would like to have these digital twins running in a connected manner. To visualize the high-level concept, I like Boeing’s diamond presented by Don Farr at the PDT conference in 2018 – Image below:

Combined with the Daimler paper “From a Monolithic PLM Landscape to a Federated Domain and Data Mesh” or the latest post from Oleg Shilovitsky, How PLM Can Build Ontologies?, we can start to imagine a Systems of Engagement infrastructure.

You need both

And now the unwanted message for companies – you need both: a system of record and potentially one or more systems of engagement. A System of Record will remain as long as we are not all connected in a blockchain manner. So we will keep producing reports, certificates, and baselines to share information with others.

It looks like the Gartner bimodal approach.

An example: if you manage your product requirements in your PLM system as objects connected to your product portfolio, you can still generate a product specification document to share with a supplier, a development partner, or a certification company.
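
Generating such a document from connected objects can be sketched as below, assuming each requirement is a record linked to a product and carrying a release status. The `Requirement` fields and the plain-text layout are hypothetical; a real PLM system would apply its own templates and baselining rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str
    text: str
    product: str     # link to the product in the portfolio
    released: bool   # only released requirements go into a shared document


def specification(product: str, requirements: list[Requirement]) -> str:
    """Render the released requirements of one product as a document."""
    selected = sorted(
        (r for r in requirements if r.product == product and r.released),
        key=lambda r: r.req_id,
    )
    lines = [f"Product specification: {product}", ""]
    lines += [f"{r.req_id}: {r.text}" for r in selected]
    return "\n".join(lines)


reqs = [
    Requirement("REQ-2", "Mass below 5 kg", "PX-1", True),
    Requirement("REQ-1", "Operating range -20 to 50 degrees C", "PX-1", True),
    Requirement("REQ-3", "Draft: IP67 sealing", "PX-1", False),
    Requirement("REQ-9", "Belongs to another product", "PY-2", True),
]
print(specification("PX-1", reqs))
```

The document is a snapshot for sharing, while the connected requirement objects remain the source of truth.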

So do not throw away your current System of Record. Instead, imagine which types of Systems of Engagement your company needs. Most Systems of Engagement might look like a siloed solution; however, remember they are designed for the real-time collaboration of a certain community – designers, engineers, operators, etc.

The real challenge will be connecting them efficiently with your System of Record backbone, preferably using standard interface protocols.

The Hybrid Approach

For those of you following my digital transformation story related to PLM, this is the point where the McKinsey report from 2017 becomes relevant again.

Conclusion

The concepts are evolving and maturing for a digital enterprise using a System of Record and one or more Systems of Engagement. Early adopters are now needed to demonstrate these concepts and to agree on standards and solution-specific needs. It is time to experiment (fast). Where are you in this process of learning?

While preparing my presentation for the Dutch Model-Based Definition solutions event, I reflected on my experiences discussing Model-Based Definition, particularly in traditional industries. In the Aerospace & Defense and Automotive industries, Model-Based Definition has become the standard. However, other industries face big challenges in adopting this approach. In this post, I want to share my observations and clarify why it is important.

What is a Model-Based Definition?

The Wiki-definition for Model-Based Definition is not bad:

Model-based definition (MBD), sometimes called digital product definition (DPD), is the practice of using 3D models (such as solid models, 3D PMI and associated metadata) within 3D CAD software to define (provide specifications for) individual components and product assemblies. The types of information included are geometric dimensioning and tolerancing (GD&T), component level materials, assembly level bills of materials, engineering configurations, design intent, etc.

By contrast, other methodologies have historically required the accompanying use of 2D engineering drawings to provide such details.

When I started to write about Model-Based Definition in 2016, the concept of a connected enterprise was not discussed. At that time, MBD mainly enhanced data sharing between engineering, manufacturing, and suppliers. The 3D PMI is a data package for information exchange between these stakeholders.

The main difference is that the 3D Model is the main information carrier, connected to 2D manufacturing views and other relevant data, all connected in this package.

MBD – the benefits

There is no need to write a blog post related to the benefits of MBD. With some research, you find enough reasons. The most important benefits of MBD are:

- the information is both human-readable and machine-readable, allowing the implementation of Smart Manufacturing / Industry 4.0 concepts

- the information relies on processes and data and is no longer dependent on human interpretation. This leads to better quality and less error-fixing late in the process.

- MBD information is a building block for the digital enterprise. If you cannot master this concept, forget the benefits of MBSE and Virtual Twins. These concepts don’t run on documents.
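To illustrate what "human-readable and machine-readable" can mean for a single annotation, here is a minimal Python sketch. The `ToleranceAnnotation` class and its fields are hypothetical and cover only a plain +/- size tolerance; real MBD packages carry full GD&T semantics in standard formats such as STEP AP242.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToleranceAnnotation:
    """Hypothetical, simplified PMI annotation: a nominal size with a +/- tolerance."""
    feature_id: str      # model feature the annotation is attached to
    nominal_mm: float    # nominal dimension in millimetres
    upper_tol_mm: float  # allowed deviation above nominal
    lower_tol_mm: float  # allowed deviation below nominal (positive number)

    def label(self) -> str:
        """Human-readable form, as it might be shown in a 3D view."""
        return f"{self.feature_id}: {self.nominal_mm} +{self.upper_tol_mm}/-{self.lower_tol_mm} mm"

    def accepts(self, measured_mm: float) -> bool:
        """Machine-readable use: check a measurement without human interpretation."""
        low = self.nominal_mm - self.lower_tol_mm
        high = self.nominal_mm + self.upper_tol_mm
        return low <= measured_mm <= high


hole = ToleranceAnnotation("HOLE-01", 10.0, 0.05, 0.02)
print(hole.label())         # HOLE-01: 10.0 +0.05/-0.02 mm
print(hole.accepts(10.03))  # True
print(hole.accepts(9.97))   # False
```

The same record serves both audiences: a person reads the label, while an inspection or NC program can evaluate `accepts` directly.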

To help you discover the benefits of MBD described by others – have a look here:

- What is MBD, and what are its benefits?

- MBD Efficiencies for Small Manufacturers

- 5 reasons to use MBD

- 10 reasons why everyone is moving away from traditional 2D drawings

MBD as a stepping stone to the future

When you implement model-based definition practices in your organization and connect with your eco-system, you learn what it means to work in a connected manner. Even with a limited scope, you already discover that working in a connected manner is not the same as mandating everyone to use the same systems or tools. Instead, it is about new ways of working (skills & people), combined with exchange standards (which ones to follow).

Where MBD is part of the bigger model-based enterprise, the same principles apply to connecting upstream information (Model-Based Systems Engineering) and downstream information (IoT-based operation and service models).

Oleg Shilovitsky addresses the same need from a data point of view in his recent blog: PLM Strategy For Post COVID Time. He makes an important point about the Digital Thread:

Digital Thread is one of my favorite topics because it is leading directly to the topic of connected data and services in global manufacturing networks.

I agree with that statement, as the digital thread, like MBD, is another stepping stone to organizing information in a connected manner, even beyond the scope of engineering-manufacturing interaction. However, the Digital Thread is an intermediate step toward a fully data-driven and model-based enterprise.

To master all these new ways of working, it is crucial for the management of manufacturing companies, both OEMs and their suppliers, to initiate learning programs. Not as a Proof of Concept but as a real-life, growing activity.

Why is MBD not yet common practice?

If you look at the success of MBD in Aerospace & Defense and Automotive, one of the main reasons was the push from the OEMs to align their suppliers. They even dictated CAD systems and versions to enable smooth and efficient collaboration.

In other industries, there were not so many giant OEMs that could dictate their supply chain. Often, the OEM itself was not even ready for MBD. Therefore, the common excuse was: we cannot push our suppliers to work differently, so let’s keep working as well as possible (the old way plus some automation).

Besides the technical changes, MBD also had a business impact. Where the traditional 2D-Drawing was the contractual and leading information carrier, now the annotated 3D Model has to become the contractual agreement. This is much more complex than browsing through (paper) documents; now, you need an application to open up the content and select the right view(s) or datasets.

In the interaction between engineering and manufacturing, you could hear statements like:

you can use the 3D Model for your NC programming, but be aware the 2D drawing is leading. We cannot guarantee consistency between them.

In particular, this is a business change affecting the relationship between an OEM and its suppliers. And we know business changes do not happen overnight.

Smaller suppliers might even refuse to work on a Model-Based definition, as it is considered an extra overhead they do not benefit from.

This is especially true when they work with various OEMs, each of which might have its own preferred MBD package content based on its intended usage. Standards exist; however, OEMs often push for their preferred proprietary format.

It is about an orchestrated change.

Implementing MBD in your company, like PLM, is challenging because people need to be aligned and trained on new ways of working. In particular, this creates resistance at the end-user level.

Similar to the introduction of mainstream CAD (AutoCAD in the eighties) and mainstream 3D CAD (Solidworks in the late nineties), it requires new processes, trained people, and matching tools.

I am aware of learning materials coming from the US, but not so much from European or Asian thought leaders. Feel free to add other relevant resources for the readers in this post’s comments. Have a look and talk with:

Action Engineering with their OSCAR initiative: Bringing MBD Within Reach. I spoke with Jennifer Herron, founder of Action Engineering, a year ago about MBD and OSCAR in my blog post: PLM and Model-Based Definition.

Another interesting company to follow is Capvidia. A good blog post to start with is MBD model-based definition in the 21st century.

The future

What you will discover from these two companies is that they focus on the connected flow of information between companies while anticipating that each stakeholder might have their preferred (traditional) PLM environment. It is about data federation.
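To make "data federation" a bit more concrete, here is a minimal Python sketch, assuming each stakeholder keeps its own system and exposes a small query interface. `PartSource`, `InMemorySource`, `federated_lookup` and the part data are hypothetical illustrations, not an API from Action Engineering, Capvidia or any PLM vendor.

```python
from typing import Dict, List, Optional, Protocol

Record = Dict[str, str]


class PartSource(Protocol):
    """What each stakeholder's system is assumed to expose (hypothetical interface)."""
    name: str

    def get_part(self, part_id: str) -> Optional[Record]: ...


class InMemorySource:
    """Stand-in for one stakeholder's own (traditional) PLM or PDM system."""

    def __init__(self, name: str, parts: Dict[str, Record]) -> None:
        self.name = name
        self._parts = parts

    def get_part(self, part_id: str) -> Optional[Record]:
        return self._parts.get(part_id)


def federated_lookup(part_id: str, sources: List[PartSource]) -> Dict[str, Record]:
    """Ask every source for the part and combine the answers, keyed by source name.

    The data stays where it lives; nothing is copied into a central master system.
    """
    answers: Dict[str, Record] = {}
    for source in sources:
        record = source.get_part(part_id)
        if record is not None:
            answers[source.name] = record
    return answers


oem = InMemorySource("OEM-PLM", {"P-100": {"revision": "C", "status": "Released"}})
supplier = InMemorySource("Supplier-PDM", {"P-100": {"material": "AlMg3"}})
print(federated_lookup("P-100", [oem, supplier]))
```

The design choice is that agreement is needed only on the interface and the identifiers, not on a single shared tool.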

The future of a connected enterprise is even more complex. So I was excited to see and download Yousef Hooshmand’s paper: “From a Monolithic PLM Landscape to a Federated Domain and Data Mesh”.

Yousef and some of his colleagues report on their PLM modernization project at Mercedes-Benz AG, aimed at transforming a monolithic PLM landscape into a federated Domain and Data Mesh.

This paper provides a lot of structured thinking about the concepts I try to explain to my audience in everyday language; see my series The road to model-based and connected PLM.

This paper goes much deeper and is a must-read and a must-discuss for those interested – perhaps an opportunity for new startups and a threat to traditional PLM vendors.

Conclusion

Vellum drawings are almost gone now; we have electronic 2D drawings. Model-based definition has demonstrated its benefits for the interaction between engineering, manufacturing & suppliers. Still, many industries are struggling with this approach due to the process & people changes needed. If you are not able or willing to implement a model-based definition approach, be worried about the future. Eco-systems will only run efficiently (and survive) when their information exchange is based on data and models. Start learning now.

Once in a while, the discussion pops up whether, given the changes in technology and business scope, we should still talk about PLM. John Stark and others have been making the point that PLM should become a profession.

In a way, I like the vagueness of the definition and the fact that the PLM profession is not written in stone. There is an ongoing change, and who wants to be certified for the past or framed to the past?

However, most people, particularly at the C-level, consider PLM something complex, costly, and related to engineering. Partly, this has to do with the early introduction of PLM, which was only a little more advanced than PDM.

The focus and capabilities made engineering teams happy by giving them more access to their data. But unfortunately, that did not work, as engineers are not looking for more control.

Old (current) PLM

Therefore, I would like to suggest that when we talk about PLM, we frame it as Product Lifecycle Data Management (the definition). A PLM infrastructure or system should be considered the System of Record, ensuring product data is archived to be used for manufacturing, service, and proving compliance with regulations.

In a modern way, the digital thread results from building such an infrastructure with related artifacts. The digital thread is a somewhat slow-moving environment, connecting the various as-xxx structures (As-Designed, As-Planned, As-Manufactured, etc.). Looking at the different PLM vendor images (the Aras example above), I consider the digital thread a fancy name for traceability.

I discussed the topic of Digital Thread in 2018: Document Management or Digital Thread. One of the observations was that few people talk about the quality of the relations when providing traceability between artifacts.
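Traceability with attention to the quality of the relations can be sketched as a small graph in which every link carries a status. This is a minimal Python sketch under stated assumptions: the `TraceLink` and `DigitalThread` names and the "valid"/"suspect" flag are hypothetical, similar in spirit to the suspect-link flags some requirements-management tools use. A real PLM system would also version the artifacts and the links themselves.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TraceLink:
    source: str            # e.g. an As-Designed item
    target: str            # e.g. the As-Planned item derived from it
    status: str = "valid"  # hypothetical quality flag: "valid" or "suspect"


class DigitalThread:
    """Minimal traceability graph between lifecycle artifacts (hypothetical model)."""

    def __init__(self) -> None:
        self._links: Dict[str, List[TraceLink]] = {}

    def link(self, source: str, target: str) -> None:
        self._links.setdefault(source, []).append(TraceLink(source, target))

    def mark_changed(self, item: str) -> None:
        """A changed item makes its direct outgoing relations untrustworthy until reviewed."""
        for lk in self._links.get(item, []):
            lk.status = "suspect"

    def downstream(self, start: str) -> List[str]:
        """All artifacts reachable from `start`, found by depth-first traversal."""
        seen, stack, order = set(), [start], []
        while stack:
            item = stack.pop()
            for lk in self._links.get(item, []):
                if lk.target not in seen:
                    seen.add(lk.target)
                    order.append(lk.target)
                    stack.append(lk.target)
        return order

    def suspect_links(self) -> List[TraceLink]:
        return [lk for links in self._links.values() for lk in links if lk.status == "suspect"]


thread = DigitalThread()
thread.link("As-Designed:BRK-1", "As-Planned:BRK-1")
thread.link("As-Planned:BRK-1", "As-Manufactured:SN-001")
thread.mark_changed("As-Designed:BRK-1")
print(thread.downstream("As-Designed:BRK-1"))
print([(lk.source, lk.target) for lk in thread.suspect_links()])
```

Marking a link as suspect rather than deleting it keeps the thread intact while signalling that a downstream structure needs review.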

The quality of traceability is relevant for traditional Configuration Management (CM). Traditional CM has been framed, like PLM, to be engineering-centric.

Both PLM and CM need to become enterprise activities – perhaps unified.

Read my blog post on the future of Configuration Management and the discussion with Martijn Dullaart, Lisa Fenwick and Maxim Gravel.

New digital PLM

In my posts, I talked about modern PLM. I described it as data-driven, often in relation to a model-based approach. As a result of the data-driven approach, a digital PLM environment can be connected to processes outside the engineering domain. I wrote a series of posts on the potential of such a new PLM infrastructure (The road to model-based and connected PLM).

Digital PLM, if implemented correctly, could serve people along the full product lifecycle, from marketing/portfolio management to service and, if relevant, decommissioning. An even bigger challenge is connecting eco-systems to the same infrastructure: suppliers & partners in particular, but also customers. This is the new platform paradigm.

Some years ago, people stated IoT is the new PLM (IoT is the new PLM – PTC 2017). Or MBSE is the foundation for a new PLM (Will MBSE be the new PLM instead of IoT? A discussion @ PLM Roadmap conference 2018).

Even Digital Transformation was mentioned at that time. I don’t believe Digital Transformation points to a domain; it is more an ongoing process that most companies have to go through. And because the term is so commonly used, it has become too vague for the specifics of our domain. I liked Monica Schnitger‘s LinkedIn post: Digital Transformation? Let’s talk. There is enough to talk about; we have to learn and be more specific.

What is the difference?

The challenge is that we need more in-depth thinking about what a “digitally transformed” company would look like. What would be the impact on their business, their IT infrastructure, and their organization and people? As I discussed with Oleg Shilovitsky, a data-driven approach does not necessarily mean simplification.

I just finished recording a podcast with Nina Dar while writing this post. She is even more active than I am in the domain of PLM and strategic leadership toward a digital and sustainable future. You can find the pre-announcement of our podcast here (it was great fun to talk), and I will share the result here later too.

What is clear to me is that a future data-driven environment behaves like a System of Engagement. In this environment, you can simulate assumptions and verify and qualify trade-offs in real time. Not only product behavior: you can also simulate and analyze behaviors all along the lifecycle, supporting business decisions.

This is where I position the digital twin. Modern PLM infrastructures are connected to the business in real time. PLM will still have its system-of-record needs; however, the real value will come from real-time collaboration.

The traditional PLM consultant should transform into a business consultant who understands technology. Historically, it was the other way around, which created friction in companies.

Starting from the business needs

In my interactions with customers, the focus is no longer on traditional PLM; we discuss business scenarios where the company will benefit from a data-driven approach. You will not obtain significant benefits if you just implement your serial processes again in a digital PLM infrastructure.

Efficiency gains are often in the single digits, whereas new ways of working can result in double-digit benefits or new opportunities.

Besides the traditional pressure on companies to remain competitive, there is now an additional driver, which I discussed in my previous post, the Innovation Dilemma. To survive on our planet, we, and therefore also companies, need to switch to sustainable products and business models.

This is a push for innovation; however, it requires a coordinated, end-to-end change within companies.

Be the change

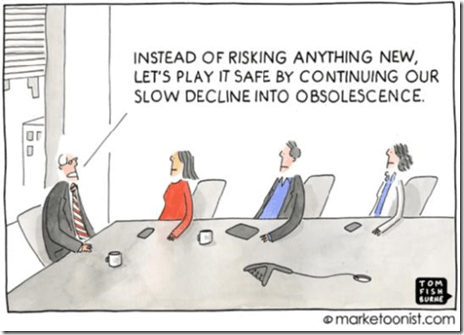

An interesting read is this article by Jan Bosch, which I read this morning: Resistance to Change. Read it, as it makes so much sense, but we need more than sense – we need people to get involved. My favorite quote from the article:

“The reasonable man adapts himself to the world; the unreasonable one persists in trying to adapt the world to himself. Therefore, all progress depends on the unreasonable man”.

Conclusion

PLM consultants should retrain themselves in Systems Thinking and start from the business. PLM technology alone is no longer enough to support companies in their (digital/sustainable) transformation. Therefore, I would like to introduce BLM (Business Lifecycle Management) as the new TLA.

However, BLM has already been framed as Black Lives Matter. I agree with that, extending it to ALM (All Lives Matter).

What do you think: should we leave the comfortable term PLM behind us for a new frame?

In February, the PLM Global Green Alliance published our first interview discussing the relationship between PLM and Sustainability with the main vendors. We talked with Darren West from SAP.

You can find the interview here: PLM and Sustainability: talking with SAP. We spoke with Darren about SAP’s Responsible Design and Production module, which allows companies to understand their environmental and economic impact by calculating fees and taxes, and to implement measures to reduce regulatory costs. The high reliance on accurate data was one of the topics in our discussion.

In March, we interviewed Zoé Bezpalko and Jon den Hartog from Autodesk. Besides Autodesk’s impressive sustainability program, we discussed Autodesk’s BIM technology helping the construction industry to become greener and their Generative Design solution to support the designer in making better material usage or reuse decisions.

The discussion ended with a look at Life Cycle Assessment tools that support the engineer in making sustainable decisions.

In my last blog post, the Innovation Dilemma, I explored the challenges of a Life Cycle Assessment. As it appears, it is not about just installing a tool. The concepts of a data-driven PLM infrastructure and digital twins are strong prerequisites for this transformation, combined with the Inner Development Goals (IDGs).

The IDGs describe the human attitude needed alongside the Sustainable Development Goals.

Therefore, last week we were happy to talk with Florence Verzelen, Executive Vice President Industry, Marketing & Sustainability, and Xavier Adam, Worldwide Sustainability Senior Manager, from Dassault Systemes. We discussed Dassault Systemes’ business sustainability goals and product offerings based on the 3DEXPERIENCE platform.

Have a look at the discussion below:

The slides shown in the recording can be found HERE.

What I learned

For many years, Dassault Systemes’ purpose has been to help their customers imagine sustainable innovations capable of harmonizing product, nature, and life. This statement is now slowly bubbling up in other companies too. Dassault Systemes has set a clear and interesting target for itself for 2025: in that year, two-thirds of its sales should come from solutions that make its customers more sustainable.

Their Eco-design solution is one of the first offerings to reach this objective. Their Life Cycle Assessment solution can govern your (virtual) product design on multiple criteria, not only greenhouse gas emissions. It will be interesting to follow up on this topic to see how companies make the change internally by relying on data and virtual twins of a product or a manufacturing process.

Want to learn more?

- Our Sustainability Commitment

- Unleashing Sustainable Innovation (a page full of resources)

- Virtual Twin Experiences

- Life Cycle Assessment Solution on the 3DEXPERIENCE Platform to Transform the Sustainable Innovation Process

Conclusion

80% of the environmental impact of products is decided during the design phase. A Life Cycle Assessment solution combined with a virtual product model, the virtual design twin, allows you to decide on trade-offs in the virtual space before committing to the physical solution. A data-driven, closed loop between design, engineering, manufacturing and operations, based on accurate data, is the envisioned infrastructure for a sustainable future.

Yes, it is not a typo. Clayton Christensen’s famous book The Innovator’s Dilemma (1997) discussed how new technologies cause great firms to fail. This was the challenge two decades ago: existing prominent companies could quickly become obsolete as new technologies bypassed them.

The examples are well known. To mention a few: DEC (Digital Equipment Corporation), Kodak, and Nokia.

Why the innovation dilemma?

This decade, the challenge is different. All companies are forced to become more sustainable in the next ten years, pushed either by global regulations or by their customers’ demands. Besides the priority of reducing greenhouse gas emissions, there is also the need to transform our society from a linear, continuous-growth economy into a circular doughnut economy.

The circular economy makes the creation, the usage and the reuse of our products more complex as the challenge is to reduce the need for raw materials and avoid landfills.

The doughnut economy makes the values of an economy more complex, as it is not only about money and growth; human and environmental factors should also be considered.

To manage this complexity, I wrote SYSTEMS THINKING – a must-have skill in the 21st century, focusing on the logical part of the brain. In my follow-up post, Systems Thinking: a second thought, I looked at the human challenge. Our brain is not rational and wants to think fast to solve direct threats. Therefore, we have to overcome our old brains to make progress.

Nina Dar shared an interesting and thought-provoking perspective in this discussion with the video below. The 17 Sustainable Development Goals (SDGs) describe what needs to be done. However, we also need the Inner Development Goals (IDGs) and the human side to connect. Watch the movie:

Our society needs to change and innovate; however, we cannot. The Innovation Dilemma.

The future is data-driven and digital

What is clear to me is that companies developing products and services have only one way to move forward: becoming data-driven and digital.

Why data-driven and digital?

Let’s look at something companies might already practice: REACH (Registration, Evaluation, Authorization and Restriction of Chemicals). This European regulation, in force since 2007, aims to protect human health and the environment by communicating information on chemicals up and down the supply chain. This should ensure that manufacturers, importers, and their customers are aware of information relating to the health and safety of the products supplied.

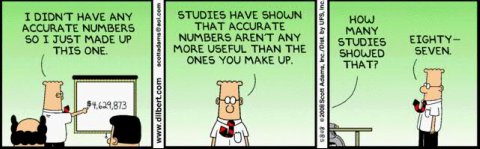

The execution of the regulation still suffers because most of the reporting and evaluation of chemicals is done manually. Suppliers report their chemicals in documents, and companies report the total of chemicals in their summary reports. Finally, authorities have to go through these reports.

Although the scope of REACH is limited, the manual effort for end-to-end reporting is relatively high. In addition, skilled workers are needed to do the job because reporting is done in a document-based manner.
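The manual, document-based roll-up described above is, at its core, an aggregation over the bill of materials. Here is a minimal Python sketch, assuming suppliers deliver their declarations as data instead of documents. The `Component` type, the declaration format and the substance names are hypothetical, and the 0.1% weight-by-weight threshold is used only as an illustrative parameter: under REACH, that threshold for substances of very high concern is applied per article, not to the assembled product as this simplification does.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Component:
    part_id: str
    mass_g: float
    # Supplier declaration: substance name -> mass in grams (hypothetical format)
    substances_g: Dict[str, float] = field(default_factory=dict)


def roll_up(components: List[Component]) -> Dict[str, float]:
    """Sum the declared substance masses over all components of one product."""
    totals: Dict[str, float] = {}
    for comp in components:
        for substance, grams in comp.substances_g.items():
            totals[substance] = totals.get(substance, 0.0) + grams
    return totals


def above_threshold(components: List[Component], threshold: float = 0.001) -> Dict[str, float]:
    """Substances whose mass share of the whole product exceeds `threshold` (0.001 = 0.1 % w/w)."""
    total_mass = sum(c.mass_g for c in components)
    shares = {s: g / total_mass for s, g in roll_up(components).items()}
    return {s: share for s, share in shares.items() if share > threshold}


housing = Component("HSG-10", 800.0, {"Substance-A": 0.5})
cable = Component("CBL-20", 200.0, {"Substance-A": 0.25, "Substance-B": 1.5})
print(roll_up([housing, cable]))          # {'Substance-A': 0.75, 'Substance-B': 1.5}
print(above_threshold([housing, cable]))  # only Substance-B exceeds 0.1 %
```

Once declarations are data, the summary report becomes a query that can be rerun after every design or supplier change.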

Life Cycle Assessments (LCA)

While you might think REACH is relatively simple, the real new challenge for companies is the need to perform Life Cycle Assessments for their products. The Wikipedia definition of LCA says:

Life cycle assessment or LCA (also known as life cycle analysis) is a methodology for assessing environmental impacts associated with all the stages of the life cycle of a commercial product, process, or service. For instance, in the case of a manufactured product, environmental impacts are assessed from raw material extraction and processing (cradle), through the product’s manufacture, distribution and use, to the recycling or final disposal of the materials composing it (grave)
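The cradle-to-grave idea can be sketched as a sum over life cycle stages of activity amount times emission factor. This is a minimal Python sketch with invented numbers: the emission factors, activity names and the two design variants are hypothetical, and it covers a single impact category (climate change, in kg CO2e), whereas a real LCA uses curated inventory databases and assesses several impact categories.

```python
from typing import Dict, List, Tuple

# Hypothetical emission factors in kg CO2e per unit of activity (illustration only).
EMISSION_FACTORS: Dict[str, float] = {
    "aluminium_kg": 8.0,
    "steel_kg": 2.0,
    "electricity_kwh": 0.5,
    "truck_tkm": 0.125,
}

# Life cycle stage -> list of (activity, amount) pairs
Inventory = Dict[str, List[Tuple[str, float]]]


def footprint_per_stage(inventory: Inventory) -> Dict[str, float]:
    """Climate impact (kg CO2e) of each stage: sum of amount x emission factor."""
    return {
        stage: sum(amount * EMISSION_FACTORS[activity] for activity, amount in activities)
        for stage, activities in inventory.items()
    }


def total_footprint(inventory: Inventory) -> float:
    return sum(footprint_per_stage(inventory).values())


# Two virtual design variants of the same passive part, compared before anything is built.
variant_a: Inventory = {
    "raw materials": [("aluminium_kg", 1.0)],
    "manufacturing": [("electricity_kwh", 4.0)],
    "distribution": [("truck_tkm", 8.0)],
    "use": [],  # passive part: no use-phase energy in this example
    "end of life": [("truck_tkm", 2.0)],
}
variant_b: Inventory = {
    "raw materials": [("steel_kg", 2.5)],
    "manufacturing": [("electricity_kwh", 6.0)],
    "distribution": [("truck_tkm", 16.0)],
    "use": [],
    "end of life": [("truck_tkm", 4.0)],
}
print(footprint_per_stage(variant_a))
print(total_footprint(variant_a), total_footprint(variant_b))
```

Keeping the result per stage, not only as a total, shows where a design decision shifts the impact, for example from raw materials to transport.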

This will be a shift in the way companies need to define products. Much more thinking and analysis are required in the early design phases. Before committing to a physical solution, engineers and manufacturing engineers need to simulate and calculate the impact of their design decisions in the virtual world.

This is where the digital twin of the design and the digital twin of the manufacturing process become relevant. And remember: Digital Twins do not run on documents – you need connected data and various types of models to calculate and estimate the environmental impact.

LCA done in a document-based manner will make your company too slow and expensive.

I described this needed transformation in my series from last year: The road to model-based and connected PLM – nine posts exploring the technology and concept of a model-based, data-driven PLM infrastructure.

Digital Product Passport (DPP)

The European Commission has published an action plan for the circular economy, one of the most important building blocks of the European Green Deal. One of the defined measures is the gradual introduction of a Digital Product Passport (DPP). As the quality of an LCA depends on high-quality, trustworthy information about products and materials, the DPP aims to make circular-economy metrics reliable.

This will be a long journey. If you want to catch a glimpse of the complexity, read this Medium article about the DPP for batteries: The digital product passport and its technical implementation.
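The core difference between a passport and a report is that the passport is a machine-readable record that can be exchanged and checked automatically. Here is a minimal Python sketch of such a record with a JSON round trip. The field names, identifiers and values are hypothetical and heavily simplified; they are not the EU data model or the battery passport specification.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class MaterialShare:
    material: str
    recycled_content_pct: float  # share of recycled material, in percent


@dataclass
class ProductPassport:
    """Hypothetical, simplified passport record (not the official EU schema)."""
    passport_id: str
    product_model: str
    manufacturer: str
    carbon_footprint_kg_co2e: float
    materials: List[MaterialShare] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() also converts the nested MaterialShare objects into dicts
        return json.dumps(asdict(self), sort_keys=True)

    @staticmethod
    def from_json(text: str) -> "ProductPassport":
        data = json.loads(text)
        data["materials"] = [MaterialShare(**m) for m in data["materials"]]
        return ProductPassport(**data)


passport = ProductPassport(
    passport_id="DPP-0001",
    product_model="Battery pack X",
    manufacturer="Example Cells Ltd",
    carbon_footprint_kg_co2e=62.5,
    materials=[MaterialShare("cobalt", 16.0), MaterialShare("lithium", 6.0)],
)
text = passport.to_json()
restored = ProductPassport.from_json(text)
print(restored == passport)  # True
```

A lossless round trip is the minimum requirement if every actor in the supply chain is to read and extend the same record.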

The innovation dilemma

Suppose you agree with my conclusion that companies need to change their current product or service development to a data-driven and model-based approach. In that case, the question will come up: where to start?

Becoming data-driven and model-based is, of course, not the business driver. However, this change is needed to perform Life Cycle Assessments and comply with current and future regulations while remaining competitive.

A document-driven approach is a dead-end.

Now let’s look at the real dilemmas by comparing a startup (clean sheet / no legacy) and an existing enterprise (experience with the past/legacy). Is there a winning approach?

The Startup

Having lived in Israel – the nation where almost everyone is a startup – and having worked with startups over the past 10 years, I always get inspired by the energy of the people in startup companies. Most of the time, they have a unique value proposition, and they want to be visible on the market as soon as possible.

This approach is the opposite of systems thinking. It is often a very linear process to deliver this value proposition without exploring the side effects of such an approach.

For example, the new “green” transportation hype. Many cities have been flooded with “green” scooters and electric bikes promoting transportation as a service. The idea behind this concept is that citizens no longer need to own polluting motorbikes or cars, as means of transportation will be shared. Therefore, the city will be cleaner and greener.

However, these “green” vehicles are often designed in the traditional linear way. Is there a repair plan or a plan to recycle the batteries? Are the materials used reused? Most of the time, not. Please, if you have examples contradicting my observations, let me know. I would like to hear good news.

When startup companies start to scale, they need experts to help them grow the company. Often these experts are seasoned people, perhaps close to retirement. They will share their experience and what they know best from the past: traditional linear thinking.

As a result, even though startup companies can start with a clean sheet, their focus on delivering the product or service blocks further thinking. Instead, the seasoned experts will drive the company towards ways of working they know from the past.

Out of curiosity: Do you know or work in a startup that has started with a data-driven and model-based vision from scratch? Please add the name of this company in the comments, and let’s learn how they did it.

The Existing company

Working in an established company is like being on board a big tanker. Changing its direction takes a clear eye on the target and the navigation skills to get there. Unfortunately, most of the time, these changes take years, as it is impossible to switch the PLM infrastructure and people's skills within a short time.

Moving from the bimodal approach of 2015 to a hybrid approach for companies, inspired by the 2017 McKinsey article Toward an integrated technology operating model, I concluded that the hybrid approach is probably the best way to ensure that change will happen. In this approach – see image – the organization keeps running on its document-driven PLM infrastructure. This infrastructure becomes the system of record, which is no different from what PLM currently is in most companies.

In parallel, you start with small groups of people who focus on a new product or a new service. Using the model-based approach, they work in a data-driven way, completely independent of the big enterprise. Their environment can be considered the future system of engagement.

The data-driven approach allows all disciplines to work in a connected, real-time manner. Mastering the new ways of working is usually the task of younger employees who are digital natives. These teams can be complemented by experienced workers who act as coaches. These coaches will not work in the new environment themselves; instead, they bring business knowledge to the team.

People cannot work in two modes, but organizations can. As you can see from the McKinsey chart, the digital teams will get bigger and more important for the core business over time. In parallel, when their data usage grows, more and more data integration will occur between the two operation modes. Therefore, the old PLM infrastructure can remain a System of Record and serve as a support backbone for the new systems of engagement.

The Innovation Dilemma conclusion

The upcoming ten years will push organizations to innovate their ways of working to become sustainable and competitive. As discussed before, they must learn to work in a data-driven, connected manner. Both startups and existing enterprises have challenges – they need to overcome the “thinking fast and acting slow” mindset. Do you see the change in your company?

Note: Before publishing this post, I read this interesting and complementary post from Jan Bosch: Boost your digitalization: instrumentation.

It is in the air – grab it.

In the past four weeks, I have been writing about the various aspects related to PLM Education. First, starting from my bookshelf, zooming in on the strategic angle with CIMdata (Part 1).

Next, I was looking at the educational angle and motivational angle with Share PLM (Part 2).

Last time, I explored with John Stark the more academic view of PLM education. How do you – students and others – learn and explore the full context of PLM (Part 3)?

Now I am talking with Dave Slawson from Quick Release_, exploring their onboarding and educational program as a consultancy firm.

How do they ensure their consultants bring added value to PLM-related activities, and can we learn something from that for our own practices?

Quick Release

Dave, can you tell us something more about Quick Release, further abbreviated to QR, and your role in the organization?

Quick Release is a specialist PDM and PLM consultancy working primarily in the automotive sector in Europe, North America, and Australia. Robust data management and clear reporting of complex subjects are essential.

Our sole focus is connecting the data silos within our clients' organizations, reducing program or build delays through effective change management.

I am QR’s head of Learning and Development, and I’ve been with the company since late 2014.

I’ve always had a passion for developing people and giving them a platform to push themselves to realize their potential. QR wants to build talent from within instead of just hiring experienced people.

However, with our rapid growth, it became necessary to have dedicated full-time resources for faster onboarding and upskilling of our employees, combined with an ongoing development strategy and its execution.

QR's Learning & Development approach

Let’s focus on Learning & Development internally at QR first. What type of effort and time does it take to onboard a new employee, and what is their learning program?

We have a six-month onboarding program for new employees. Most starters join one of our “boot camps”, a three-week intensive program where a cohort of between 6 and 14 new starters receive classroom-style sessions led by our subject matter experts.

During this program, new starters learn about technical PDM and PLM as well as high-performance business skills that will help them deliver excellence for our clients and feel confident in their work.

While the teams spend a lot of time with the program coordinator, we also bring in our various Subject Matter Experts (SMEs) to ensure the highest quality and variety in these sessions. Some of these sessions are delivered by our founders and directors.

As a business, we believe in investing senior leadership time to ensure quality training and give our team members access to the highest levels of the company.

Since the Covid-19 pandemic started, we have moved our training program primarily to distance learning. However, some sessions are in person, with new starters attending workshops in our regional offices. Our sessions focus on engagement and “doing” instead of just watching a presentation. New starters have told us that the sessions are just as enjoyable via distance learning.

Following boot camp, team members start work on their client projects, supported by a Project Manager and a mentor. During this period, their mentor helps them use on-the-job experience to build up their technical knowledge on top of their boot camp learning. The mentor is also there to help them cope with what we know is a steep learning curve. Towards the end of the six-month program, each new starter carries out a self-evaluation designed to help them recognize their achievements to date and identify areas of focus for ongoing personal development.

We gather feedback from the trainers and trainees throughout the onboarding programs, ensuring that the former is shared with their mentors to help with coaching.

The latter is used to help us continuously improve our offering. Our trainers are subject matter experts, but we encourage them to evolve their content and approach based on feedback.

The learning journey

Some might say you only learn on the job – how do you relate to this statement? Where does QR education take place? Can you make a statement on ROI for Learning & Development?

It is important to always be curious about your work. We encourage our team members to challenge themselves to learn new things and dig deeper. Indeed, constant curiosity is one of our core values. We encourage people to challenge the status quo, challenge themselves, and adopt a growth mindset through all development and feedback cycles.

The learning curve in PDM and PLM can be steep; therefore, we must give people the tools and feedback that they can use to grow. At QR, this starts with our onboarding program and flows into an employee’s full career with us. In addition, at the end of every quarter, team members receive performance feedback from their managers, which feeds into their development target setting.

We have a wealth of internal resources to support development, from structured training materials to our internally compiled PDM Wiki and our suite of development “playbooks” (curated learning journeys catering to a range of learning styles).

On-the-job learning is critically important. So after the boot camp, we put our team members straight into projects to make sure they apply and build on their baseline knowledge through real-world experience. Still, they are supported with formal training and ongoing access to development resources.

Regarding Return on Investment, while it is impossible to give a specific number, we would say that quality training is invaluable to our clients and to us. In seven years, the company has grown from 60 to 300 employees, and it now operates on three other continents, which illustrates that our clients trust the quality of how we train our consultants!

We also carried out internal studies on the long-term retention of team members relative to onboarding quality. These studies show that team members who experience a more controlled and structured onboarding program are generally more successful in their roles.

Investing in education?

I understood some of your customers also want to understand PLM processes better and ask for education from your side. Would the investment in education be similar? Would they be able to afford such an effort?

Making a long-term and tangible impact for our clients is the core foundation of what QR are trying to achieve. We do not want to come in to resolve a problem, only for it to resurface once we’ve left. Nor do we want to do work that our clients could easily hire someone to do themselves.