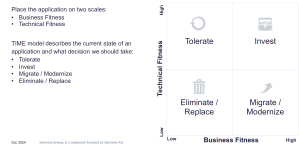

After a summer holiday in the south of Greece, it is time to resume my activities. The south of Crete is largely an analogue environment, far from any digital hype.

Tempted by LinkedIn posts, I noticed the summer was full of memories, with Martin Eigner sharing 40 years of PLM experience, Oleg Shilovitsky sharing 30 years of PDM Evolution, and Michael Finocchiaro publishing posts on PLM vendors, CAD kernels, and more.

So where do I stand? While digesting all these historical experiences, I reflected on what we can learn from them and what we didn’t learn from them.

It started with technology.

From 1990 to 1999, I worked with mid-market companies, where data management was the most significant challenge. The introduction of MS Windows made data management more user-friendly, evolving from drawing management systems with version and status management capabilities.

Who remembers Automanager Workflow from Cyco, before SmarTeam came on the market?

For that reason, in the early days, PDM was an IT job. As the PDM system primarily dealt with engineering data, it was relatively easy to implement as an organizational change process. We transitioned from analogue to electronic in the department.

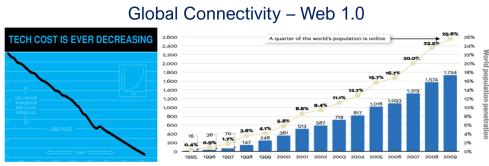

Connecting with other systems, particularly ERP, was a serious IT job and a financial challenge. The rapid decline in the cost of IT components, combined with the rapid growth of global connectivity, has created new opportunities for collaboration.

As part of the Dassault/IBM/SmarTeam organization, I explained and taught these new capabilities worldwide.

In 2008, my VirtualDutchman blog and coaching journey began, evolving from explanations of technology to modern methodologies, which led to organizational change and expectation management – skills not traditionally associated with IT.

Then came digital transformation

Growing connectivity, smartphones, and Web 2.0 technology led to more PLM-like discussions. PLM vendors expanded their scope and developed capabilities beyond mechanical engineering.

The expansion of capabilities was also the moment when the confusion about the term PLM reached its peak: a PLM strategy or a PLM system?

At the time, they were largely considered the same in discussions and advertisements.

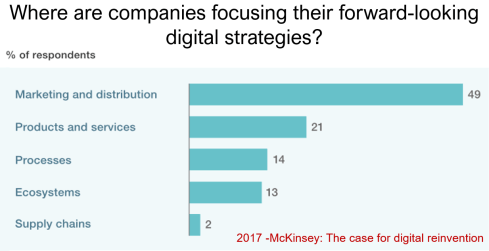

Meanwhile, digital transformation was occurring at the marketing and sales levels – companies invested in direct communication with their customers through the web.

Meanwhile, the internal ways of working for R&D, engineering, and manufacturing did not change significantly. Still, they were following linear processes, and despite the existence of 3D CAD, the 2D drawing remained the primary carrier of legal information between engineering, manufacturing, and suppliers.

Note: the option where the most benefits could be achieved – connected supply chains – had the lowest focus in 2017 – something that would change with COVID-19.

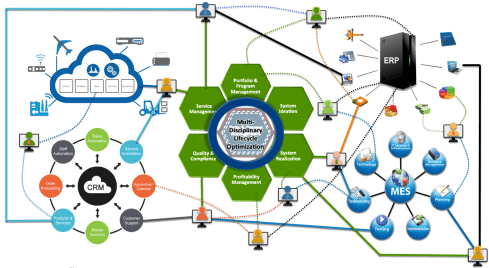

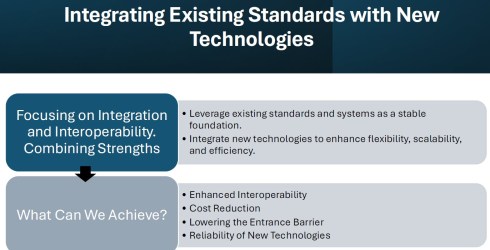

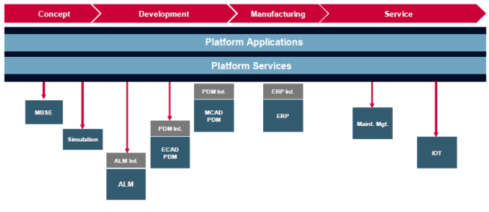

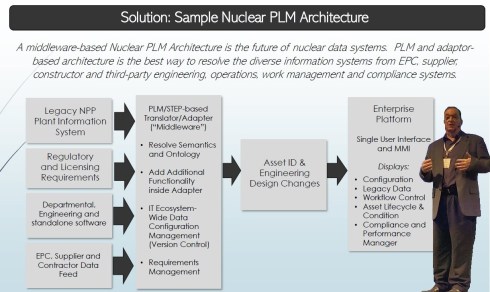

Fundamental digital transformation in the PLM domain occurred gradually. ARAS came with its overlay approach (the platform), connecting various disciplines and enterprise systems. In contrast, Dassault Systèmes introduced its 3DEXPERIENCE platform, utilizing its own software brands as platform components.

Most PLM vendors rapidly countered Aras’ overlay approach with their low-code offerings based on Mendix, ThingWorx or Netvibes, to enable data flows beyond the traditional PDM scope. The Coordinated Digital Thread was born.

The good news is that PLM has now clearly become a strategy based on a federated system infrastructure. The single PLM system no longer exists, although many of us still use the term ‘PLM system’ to refer to the main component of a PLM infrastructure – the System of Record.

Moving to a federated PLM infrastructure is already a challenge for companies, not because of the available technology, but first of all because of the legacy data and, closely related to that, legacy processes and people skills.

Legacy is creating the inertia, not technology!

Next came the cloud – SaaS

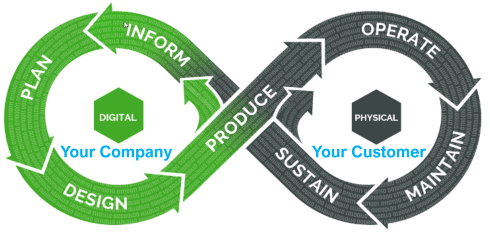

With the availability of cloud solutions that support real-time interactions between stakeholders, either within an enterprise or in a value chain, a new paradigm has emerged: the connected enterprise.

A connected enterprise no longer needs interfaces to transfer data from one system to another.

Instead, with apps and dashboards, combined data from different online sources is presented in a single, user-friendly working environment – a combination of the Systems of Record with the new environments, the Systems of Engagement.

The technology used to create dashboards and apps is based on modern data-driven technologies and principles (ontologies, graph databases, and the semantic web). The Connected Digital Thread was born.

However, legacy systems play an essential role again, as some systems of engagement can be implemented in a complementary manner to the systems of record, allowing companies to work within an integrated technology model.

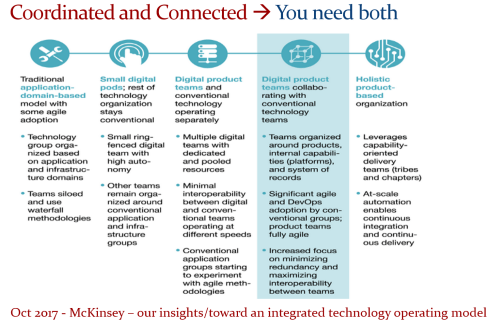

People will work in a particular mode, either coordinated or connected, but organizations can operate in both modes simultaneously. A story I have been sharing a lot – it is not about migrations but about an evolutionary approach towards an integrated technology model.

At this point, it becomes essential that business objectives drive the implementation of a PLM infrastructure. Of course, you hear me say we should start from the business; however, the big difference now is that a company should coordinate the technologies, systems, and tools it acquires to avoid isolated islands of information.

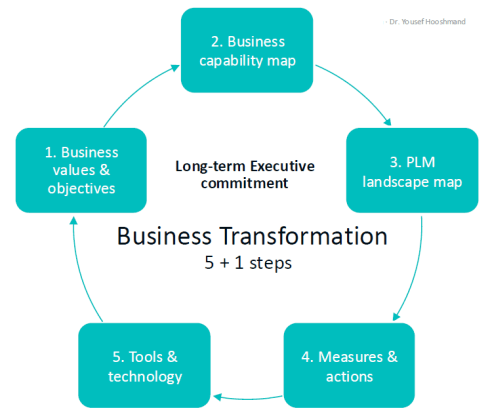

Follow Yousef Hooshmand’s 5 + 1 business transformation steps.

An open SaaS infrastructure enables a company to let data flow almost in real-time. There is a lot of discussion related to data quality and governance, and if you have missed it, please read these three articles I created together with Rob Ferrone, the original Product Data PLuMber:

- Data Quality and Data Governance – A hype? (part 1)

- Data Quality and Data Governance – the WHY and HOW (part 2)

- Building the Future: Data Quality and Governance in the Digital Age (part 3)

There are some great insights in this dialogue and the associated LinkedIn comments.

Despite the increasing availability of technology, it is the legacy of people, processes, and culture that is hindering progress.

Rob Ferrone had a shocking lightbulb moment 😲 in our discussion about the future of PLM, where the participants – see below – answered a question related to the importance of technology in our PLM domain – shocking also for me.

My thumb was up because modern technology matters! The question inspired Oleg Shilovitsky to write a whole blog post on this topic. If you’re truly shocked, read his post. I agree with its content: the question is too simple to answer with a thumbs up or down.

As technology has become more accessible than before, you no longer need an IT department to establish a PLM infrastructure. And then indeed, the people and process side needs and deserves much more attention.

And now there is AI

If you haven’t read anything about AI recently, you must be living in an isolated location. Regardless of the business discussions you are following, it is all about the potential of AI.

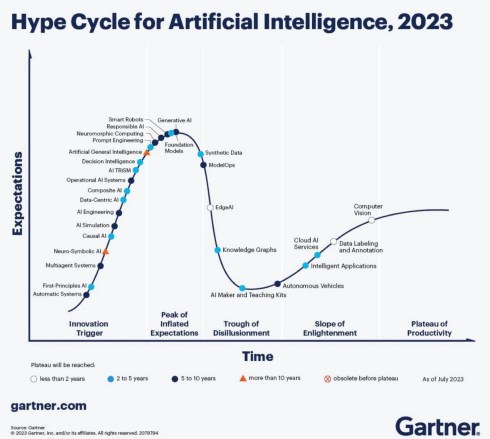

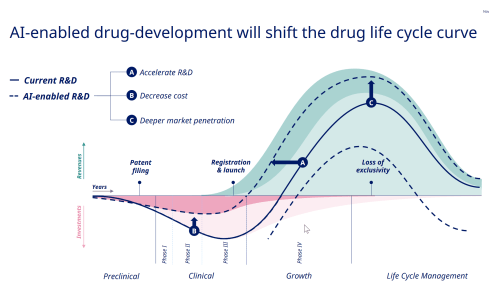

Although AI is not a new concept, the fact that various AI capabilities have now reached the end-user level is what drives the hype. Currently, I believe we are at the peak of the hype.

Last week, I participated in an interesting discussion in the series The Future of PLM, moderated by Michael Finocchiaro, this time talking with the analysts. Click on the link to see Michael’s excellent summary and to access the recording of the event.

It was an interesting discussion for a little more than an hour, and the majority of our discussion was about the potential impact of AI on businesses. First, the impact AI can have on the traditional work of an analyst and next, the effects on the PLM domain.

I believe we agreed that AI at this moment is mainly providing higher user efficiency and performance, very much aligned with the interesting research I have been reading in the MIT NANDA report titled The GenAI Divide: State of AI in Business 2025.

The report’s interesting findings included high adoption of tools but low transformation. Despite significant investment in Generative AI (GenAI), most organizations are not achieving meaningful business transformation.

- 95% of organizations report zero return on GenAI investments.

- Only 5% of integrated AI pilots generate millions in value.

- 80% of organizations have explored or piloted tools like ChatGPT, but these primarily enhance individual productivity.

- 60% of organizations evaluated enterprise-grade systems, but only 20% reached the pilot stage, and just 5% reached production.

- Key barriers include brittle workflows, a lack of contextual learning, and operational misalignment.

Therefore, the question is – Is current AI the next bubble?

In 2014, I wrote about the lack of digital transformation in the PLM domain, and two images (below) from a report by The Economist could be used again. The report can be found here: The Onrushing Wave.

Click on the image to read the 2013 predictions.

I realized that my current job, as a recreational therapist and firefighter at the time, was not at risk, and that some of the predictions from 10 years ago had become a reality. Who is still bothered by telemarketers or retail salespersons?

However, many of the AI symptoms mentioned in the MIT NANDA report are similar to the hype surrounding digital transformation.

The only reservation I have now – will it take a decade before we understand and demonstrate the value of AI, or are we accelerating?

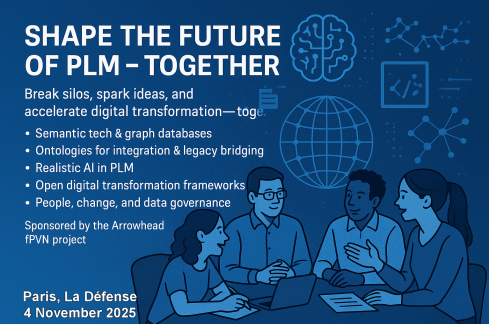

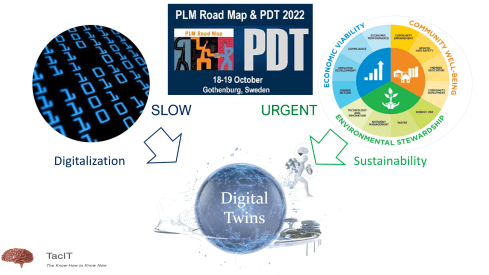

In this context, the upcoming PLM Roadmap/PDT Europe conference on 5 – 6 November will be interesting, as here we will discuss reality.

For those of you interested in more, there is a (free) workshop the day before the conference, where we will discuss with some thought leaders and experts from various companies what the future of PLM could look like, based on standards, AI tools and more. Click on the image below the conclusion.

Conclusion

The summertime was a nice moment to reflect, inspired by others in my network. What is clear is that there is a shift from technology towards people and change. The rapid expansion of AI tools, along with connected technologies, has created an overwhelming array of possibilities. Now it is time for business leadership to understand them and utilize them for significant business improvement, where the fear is that substantial change will always be slowed down by organizational inertia.

In the past three weeks, between some short holidays, I had a discussion with Rob Ferrone, who you might know as

“The original product Data PLuMber”.

Our discussion resulted in this concluding post and these two previous posts:

If you haven’t read them before, please take a moment to review them, to understand the flow of our dialogue and to get a full, holistic view of the WHY, WHAT and HOW of data quality and data governance.

A foundation required for any type of modern digital enterprise, with or without AI.

A first feedback round

Rob, I was curious whether there were any interesting comments from the readers that enhanced your understanding. For me, Benedict Smith’s point in the discussion thread was an interesting one.

From this reaction, I would like to quote:

To suggest it’s merely a lack of discipline is to ignore the evidence. We have some of the most disciplined engineers in the world. The problem isn’t the people; it’s the architecture they are forced to inhabit.

My contention is that we have been trying to solve a reasoning problem with record-keeping tools. We need to stop just polishing the records and start architecting for the reasoning. The “what” will only ever be consistently correct when the “why” finally has a home. 😎

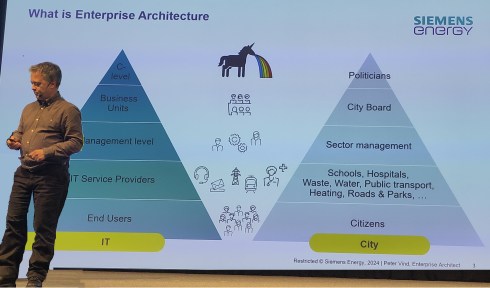

Here, I realized that the challenge is not only about moving From Coordinated to Coordinated and Connected, but also that our existing record-keeping mindset drives the old way of thinking about data. In the long term, this will be a dead end.

What did you notice?

Jos, indeed, Benedict’s point is great to have in mind for the future and in addition, I also liked the comment from Yousef Hooshmand, where he explains that a data-driven approach with a much higher data granularity automatically leads to a higher quality – I would quote Yousef:

The current landscapes are largely application-centric and not data-centric, so data is often treated as a second or even third-class citizen.

In contrast, a modern federated and semantic architecture is inherently data-centric. This shift naturally leads to better data quality with significantly less overhead. Just as important, data ownership becomes clearly defined and aligned with business responsibilities.

Take “weight” as a simple example: we often deal with “Target Weight,” “Calculated Weight,” and “Measured Weight.” In a federated, semantic setup, these attributes reside in the systems where their respective data owners (typically the business users) work daily, and are semantically linked in the background.
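
To make Yousef’s weight example a bit more tangible, here is a minimal Python sketch. It is only an illustration under my own assumptions: the system names, owner roles, part number and values are hypothetical, and a real federated, semantic setup would link the attributes through an ontology or graph rather than an in-memory list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeightRecord:
    part_id: str
    kind: str        # "target", "calculated" or "measured"
    value_kg: float
    system: str      # hypothetical system where the owner maintains the value
    owner: str       # hypothetical business role accountable for the value


# Each record stays in its own source system; nothing is copied or migrated.
RECORDS = [
    WeightRecord("P-100", "target", 12.0, "requirements-tool", "systems engineer"),
    WeightRecord("P-100", "calculated", 12.4, "cad-pdm", "design engineer"),
    WeightRecord("P-100", "measured", 12.9, "quality-lab", "quality engineer"),
]


def linked_weights(part_id, records):
    """Return all weights of one part keyed by kind (the 'semantic link')."""
    return {r.kind: r for r in records if r.part_id == part_id}


def weight_deviation(part_id, records, kind="measured"):
    """Relative deviation of one weight kind from the target weight."""
    weights = linked_weights(part_id, records)
    target = weights["target"].value_kg
    return (weights[kind].value_kg - target) / target
```

The point of the sketch is that the three weights are never merged into one attribute: each keeps its own owner and source system, and the comparison happens on top of the link.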

I believe the interesting part of this discussion is that people are thinking about data-driven concepts as a foundation for the paradigm, shifting from systems of record/systems of engagement to systems of reasoning. Additionally, I see how Yousef applies a data-centric approach in his current enterprise, laying the foundation for systems of reasoning.

What’s next?

Rob, your recommendations do not include a transformation, but rather an evolution to become better and more efficient – the typical work of a Product PLuMber, I would say. How about redesigning the way we work?

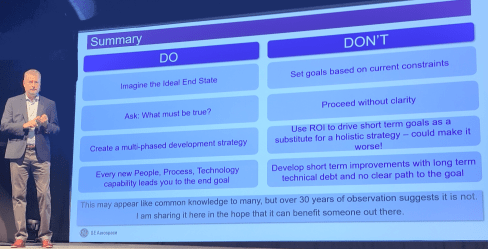

Bold visions and ideas are essential catalysts for transformations, but I’ve found that the execution of significant, strategic initiatives is often the failure mode.

One of my favourite quotes is:

“A complex system that works is invariably found to have evolved from a simple system that worked.”

John Gall, Systemantics (1975)

For example, I advocate this approach when establishing Digital Threads.

It’s easy to imagine a Digital Thread, but building one that’s sustainable and delivers measurable value is a far more formidable challenge.

Therefore, my take on Digital Thread as a Service is not about a plug-and-play Digital Thread, but the Service of creating valuable Digital Threads.

You achieve the solution by first making the Thread work and progressively ‘leaving a trail of construction’.

The caveat is that this can’t happen in isolation; it must be aligned with a data strategy, a set of principles, and a roadmap that are grounded in the organization’s strategic business imperatives.

Your answer relates a lot to Steef Klein’s comment when he discussed: “Industry 4.0: Define your Digital Thread ML-related roadmap – Carefully select your digital innovation steps.” You can read Steef’s full comment here: Your architectural Industry 4.0 future.

First, I liked the example value cases presented by Steef. They’re a reminder that all these technology-enabled strategies, whether PLM, Digital Thread, or otherwise, are just means to an end. That end is usually growth or financial performance (and hopefully, one day, people too).

It is a bit like Lego, however. You can’t build imaginative but robust solutions unless there is underlying compatibility and interoperability.

It would be a wobbly castle made from a mix of Playmobil, Duplo, Lego and wood blocks (you can tell I have been doing childcare this summer – click on the image to see the details).

As the lines blur between products, services, and even companies themselves, effective collaboration increasingly depends on a shared data language, one that can be understood not just by people, but by the microservices and machines driving automation across ecosystems.

Discussing the future?

For those interested in this discussion, I would like to point to the upcoming PLM Roadmap/PDT Europe 2025 conference on November 5th and 6th in Paris, where some of the thought leaders in these concepts will be presenting or attending. The detailed agenda is expected to be published after the summer holidays.

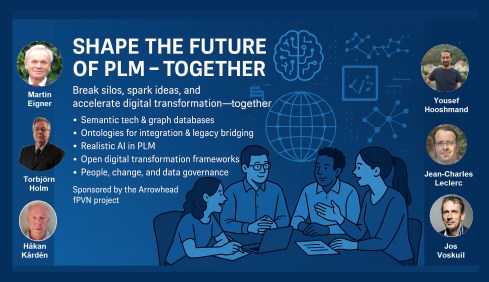

However, this conference also created the opportunity to have a pre-conference workshop, where Håkan Kårdén and I wanted to have an interactive discussion with some of these thought leaders and practitioners from the field.

Sponsored by the Arrowhead fPVN project, we were able to book a room at the conference venue in the afternoon of November 4th. You can find the announcement and more details of the workshop here in Håkan’s post: Shape the Future of PLM – Together.

Last year at the PLM Roadmap PDT Europe conference in Gothenburg, I saw a presentation of the Arrowhead fPVN project. You can read more here: The long week after the PLM Roadmap/PDT Europe 2024 conference.

And, as you can see from the acknowledged participants below, we want to discuss and understand more concepts and their applications – and for sure, the application of AI concepts will be part of the discussion.

Mark the date and this workshop in your agenda if you are able and willing to contribute. After the summer holidays, we will develop a more detailed agenda about the concepts to be discussed. Stay tuned to our LinkedIn feed at the end of August/beginning of September.

And the people?

Rob, we just came from a human-centric PLM conference in Jerez – the Share PLM 2025 summit – where are the humans in this data-driven world?

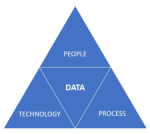

You can’t have a data-driven strategy in isolation. A business operating system comprises the coordinated interaction of people, processes, systems, and data, aligned to the lifecycle of products and services. Strategies should be defined at each layer, for instance, whether the system landscape is federated or monolithic, with each strategy reinforcing and aligning with the broader operating system vision.

In terms of the people layer, a data strategy is only as good as the people who shape, feed, and use it. Systems don’t generate clean data; people do. If users aren’t trained, motivated, or measured on quality, the strategy falls apart.

Data needs to be an integral, essential and valuable part of the product or service. Individuals become both consumers and producers of data, expected to input clean data, interpret dashboards, and act on insights. In a business where people collaborate across boundaries, ask questions, and share insight, data becomes a competitive asset.

There are risks, however: a system-driven approach can clash with local flexibility and agility.

People who previously operated on instinct or informal processes may now need to justify actions with data. And if the data is poor or the outputs feel misaligned, people will quickly disengage, reverting to offline workarounds or intuition.

Here it is critical that leaders truly believe in the value and set the tone. Because it is rare to have everyone in the business care about the data as passionately as they do about the prime function of their unique role (e.g. designer), there need to be product data professionals in the mix – people who care, notice what’s wrong, and know how to fix it across silos.

Conclusion

- Our discussions on data quality and governance revealed a crucial insight: this is not a technical journey, but a human one. While the industry is shifting from systems of record to systems of reasoning, many organizations are still trapped in record-keeping mindsets and fragmented architectures. Better tools alone won’t fix the issue—we need better ownership, strategy, and engagement.

- True data quality isn’t about being perfect; it’s about the right maturity, at the right time, for the right decisions. Governance, too, isn’t a checkbox—it’s a foundation for trust and continuity. The transition to a data-centric way of working is evolutionary, not revolutionary—requiring people who understand the business, care about the data, and can work across silos.

The takeaway? Start small, build value early, and align people, processes, and systems under a shared strategy. And if you’re serious about your company’s data, join the dialogue in Paris this November.

In my first discussion with Rob Ferrone, the original Product Data PLuMber, we discussed the necessary foundation for implementing a Digital Thread or leveraging AI capabilities beyond the hype. This is important because all these concepts require data quality and data governance as essential elements.

If you missed part 1, here is the link: Data Quality and Data Governance – A hype?

Rob, did you receive any feedback related to part 1? I spoke with a company that emphasized the importance of data quality; however, they were more interested in applying plasters, as they consider a broader approach too disruptive to their current business. Do you see similar situations?

Honestly, not much feedback. Data Governance isn’t as sexy or exciting as discussions on Designing, Engineering, Manufacturing, or PLM Technology. HOWEVER, as the saying goes, all roads lead to Rome, and all Digital Engineering discussions ultimately lead to data.

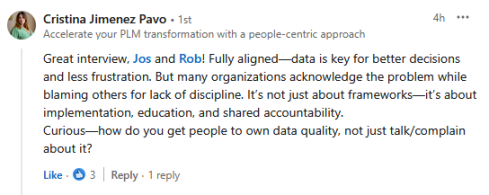

Cristina Jimenez Pavo’s comment illustrates that the question is in the air:

Everyone knows that it should be better; high-performing businesses have good data governance, but most people don’t know how to systematically and sustainably improve their data quality. It’s hard and not glamorous (for most), so people tend to focus on buying new systems, which they believe will magically resolve their underlying issues.

Data governance as a strategy

Thanks for the clarification. I imagine it is similar to Configuration Management, i.e., with different needs per industry. I have seen ISO 8000 in the aerospace industry, but it has not spread further to other businesses. What about data governance as a strategy, similar to CM?

That’s a great idea. Do you mind if I steal it?

If you ask any PLM or ERP vendor, they’ll claim to have a master product data governance template for every industry. While the core principles—ownership, control, quality, traceability, and change management, as in Configuration Management—are consistent, their application must vary based on the industry context, data types, and business priorities.

Designing effective data governance involves tailoring foundational elements, including data stewardship, standards, lineage, metadata, glossaries, and quality rules. These elements must reflect the realities of operations, striking a balance between trade-offs such as speed versus rigor or openness versus control.
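
As a small illustration of what “quality rules” with a named steward could look like in practice, here is a minimal Python sketch. The rule names, steward roles and item fields are hypothetical and not taken from any specific PLM or ERP product; the idea is only that rules are explicit, owned by someone, and measurable.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class QualityRule:
    name: str
    steward: str                    # hypothetical role accountable for this rule
    check: Callable[[dict], bool]   # returns True when the item passes


# Example rules on a simple item record (field names are assumptions).
RULES = [
    QualityRule(
        "weight is positive",
        "design engineer",
        lambda item: isinstance(item.get("weight_kg"), (int, float))
        and item["weight_kg"] > 0,
    ),
    QualityRule(
        "unit of measure is set",
        "BoM specialist",
        lambda item: bool(item.get("uom")),
    ),
]


def failed_rules(item, rules):
    """Return the rules an item violates, so the steward knows what to fix."""
    return [rule for rule in rules if not rule.check(item)]


def quality_score(items, rules):
    """Share of passed checks across all items and rules (1.0 if nothing to check)."""
    results = [rule.check(item) for item in items for rule in rules]
    return sum(results) / len(results) if results else 1.0
```

A score like this is only a starting point; which rules matter, and how strict they should be, depends on the industry context and the trade-offs mentioned above.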

The challenge is that both configuration management (CM) and data governance often suffer from a perception problem, being viewed as abstract or compliance-heavy. In truth, they must be practical, embedded in daily workflows, and treated as dynamic systems central to business operations, rather than static documents.

Think of it like the difference between stepping on a scale versus using a smartwatch that tracks your weight, heart rate, and activity, schedules workouts, suggests meals, and aligns with your goals.

Governance should function the same way:

responsive, integrated, and outcome-driven.

Who is responsible for data quality?

I have seen companies simplifying data quality as an enhancement step for everyone in the organization, like a “You have to be more accurate” message, similar perhaps to configuration management. Here we touch people and organizational change. How do you make improving data quality happen beyond the wish?

In most companies, managing product data is a responsibility shared among all employees. But increasingly complex systems and processes are not designed around people, making the work challenging, unpleasant, and often poorly executed.

I like to quote Larry English – The Father of Information Quality:

“Information producers will create information only to the quality level for which they are trained, measured and held accountable.”

A common reaction is to add data “police” or transactional administrators, who unintentionally create more noise or burden those generating the data.

The real solution lies in embedding capable, proactive individuals throughout the product lifecycle who care about data quality as much as others care about the product itself. It was the topic I discussed at the 2025 Share PLM Summit in Jerez (Rob Ferrone – Bill O-Materials), also presented in part 1 of our discussion.

These data professionals collaborate closely with designers, engineers, procurement, manufacturing, supply chain, maintenance, and repair teams. They take ownership of data quality in systems, without relieving engineers of their responsibility for the accuracy of source data.

Some data, like component weight, is best owned by engineers, while others—such as BoM structure—may be better managed by system specialists. The emphasis should be on giving data professionals precise requirements and the authority to deliver.

They not only understand what good data looks like in their domain but also appreciate the needs of adjacent teams. This results in improved data quality across the business, not just within silos. They also work with IT and process teams to manage system changes and lead continuous improvement efforts.

The real challenge is finding leaders with the vision and drive to implement this approach.

The costs or benefits associated with good or poor data quality

At the peak of interest in being data-driven, large consulting firms published numerous studies and analyses, proving that data-driven companies achieve better results than their data-averse competitors. Have you seen situations where the business case for improving “product data” quality has led to noticeable business benefits, and if so, in what range? Double digit, single digit?

Improving data quality in isolation delivers limited value. Data quality is a means to an end. To realise real benefits, you must not only know how to improve it, but also how to utilise high-quality data in conjunction with other levers to drive improved business outcomes.

I built a company whose premise was that good-quality product data flowing efficiently throughout the business delivered dividends due to improved business performance. We grew because we delivered results that outweighed our fees.

Last year’s turnover was €35M, so even with a conservatively estimated average in-year ROI of 3:1, the company delivered over €100M of cost savings or additional revenue per year to clients, with the majority of these benefits being sustainable.
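The arithmetic behind that estimate can be written out as a quick check. This is only a sketch: it assumes that the turnover equals the fees clients paid in the year, and the variable names are illustrative.

```python
# Back-of-the-envelope check of the figures quoted above.
# Assumption: last year's turnover equals the total fees paid by clients.
turnover_eur = 35_000_000  # fees paid by clients in the year
roi_ratio = 3              # conservative in-year ROI of 3:1

client_benefit_eur = turnover_eur * roi_ratio
print(f"Estimated client benefit: €{client_benefit_eur / 1e6:.0f}M")
```

Under these assumptions the result is €105M, which is where the "over €100M" comes from.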

There is also the potential to unlock new value and business models through data-driven innovation.

For example, connecting disparate product data sources into a unified view and taking steps to sustainably improve data quality enables faster, more accurate, and easier collaboration between OEMs, fleet operators, spare parts providers, workshops, and product users, which leads to a new value proposition around minimizing painful operational downtime.

AI and Data Quality

Currently, we are seeing numerous concepts emerge where AI, particularly AI agents, can be highly valuable for PLM. However, we also know that in legacy environments, the overall quality of data is poor. How do you envision AI supporting PLM processes, and where should you start? Or has it already started?

It’s like mining for rare elements—sifting through massive amounts of legacy data to find the diamonds. Is it worth the effort, especially when diamonds can now be manufactured? AI certainly makes the task faster and easier. Interestingly, Elon Musk recently announced plans to use AI to rewrite legacy data and create a new, high-quality knowledge base. This suggests a potential market for trusted, validated, and industry-specific legacy training data.

Will OEMs sell it as valuable IP, or will it be made open source like Tesla’s patents?

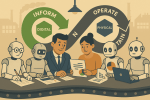

AI also offers enormous potential for data quality and governance. From live monitoring to proactive guidance, adopting this approach will become a much easier business strategy. One can imagine AI forming the core of a company’s Digital Thread—no longer requiring rigidly hardwired systems and data flows, but instead intelligently comparing team data and flagging misalignments.

That said, data alignment remains complex, as discrepancies can be valid depending on context.
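To make the idea of "comparing team data and flagging misalignments" a bit more tangible, here is a minimal sketch in Python. It is a plain rule-based comparison, not AI, and everything in it is hypothetical: the part numbers, the attribute names, and the `ACCEPTED_DIFFERENCES` set that stands in for discrepancies that are valid in context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mismatch:
    part_number: str
    attribute: str
    plm_value: object
    erp_value: object


# Hypothetical exceptions: (part number, attribute) pairs where a difference
# between the two systems is known and accepted in context.
ACCEPTED_DIFFERENCES = {("P-200", "unit")}


def find_mismatches(plm, erp, accepted=ACCEPTED_DIFFERENCES):
    """Compare attributes of parts present in both systems and flag differences."""
    mismatches = []
    for part_number in sorted(plm.keys() & erp.keys()):
        plm_attrs, erp_attrs = plm[part_number], erp[part_number]
        for attribute in sorted(plm_attrs.keys() & erp_attrs.keys()):
            if plm_attrs[attribute] == erp_attrs[attribute]:
                continue  # aligned, nothing to report
            if (part_number, attribute) in accepted:
                continue  # a valid discrepancy in this context
            mismatches.append(
                Mismatch(part_number, attribute,
                         plm_attrs[attribute], erp_attrs[attribute])
            )
    return mismatches


# Illustrative data only.
plm_data = {
    "P-100": {"revision": "B", "weight_kg": 1.2},
    "P-200": {"revision": "A", "unit": "each"},
}
erp_data = {
    "P-100": {"revision": "A", "weight_kg": 1.2},
    "P-200": {"revision": "A", "unit": "box"},
}

issues = find_mismatches(plm_data, erp_data)
for issue in issues:
    print(issue)
```

Only the revision difference on P-100 is reported; the unit difference on P-200 is treated as valid. In practice, deciding which discrepancies belong on the accepted list is exactly the hard, context-dependent part.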

A practical starting point?

Data Quality as a Service. My former company, Quick Release, is piloting an AI-enabled service focused on EBoM to MBoM alignment. It combines a data quality platform with expert knowledge, collecting metadata from PLM, ERP, MES, and other systems to map engineering data models.

Experts define quality rules (completeness, consistency, relationship integrity), and AI enables automated anomaly detection. Initially, humans triage issues, but over time, as trust in AI grows, more of the process can be automated. Eventually, no oversight may be needed; alerts could be sent directly to those empowered to act, whether human or AI.
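As an illustration of what expert-defined rules could look like before any AI is involved, here is a small sketch of the three rule types mentioned above. The data structures, field names, and part numbers are assumptions made for the example and do not describe the Quick Release platform.

```python
def check_completeness(parts, required=("description", "weight_kg")):
    """Completeness: flag parts missing a required attribute."""
    return [
        f"{pn}: missing {field}"
        for pn, attrs in sorted(parts.items())
        for field in required
        if attrs.get(field) in (None, "")
    ]


def check_consistency(ebom, mbom):
    """Consistency: flag parts whose quantity differs between EBoM and MBoM."""
    return [
        f"{pn}: EBoM qty {ebom[pn]} != MBoM qty {mbom[pn]}"
        for pn in sorted(ebom.keys() & mbom.keys())
        if ebom[pn] != mbom[pn]
    ]


def check_relationship_integrity(ebom, mbom, parts):
    """Relationship integrity: flag BoM lines that reference an unknown part."""
    referenced = ebom.keys() | mbom.keys()
    return [f"{pn}: not in part master" for pn in sorted(referenced - parts.keys())]


# Illustrative data only: a part master plus flat EBoM/MBoM quantity lists.
parts = {
    "P-100": {"description": "Bracket", "weight_kg": 0.4},
    "P-200": {"description": "Bolt M8", "weight_kg": None},
}
ebom = {"P-100": 1, "P-200": 4}
mbom = {"P-100": 1, "P-200": 6, "P-300": 1}

findings = (
    check_completeness(parts)
    + check_consistency(ebom, mbom)
    + check_relationship_integrity(ebom, mbom, parts)
)
for finding in findings:
    print(finding)
```

Each finding would go to a human for triage at first. A quantity difference between EBoM and MBoM, for example, can be perfectly legitimate, which is why the rules only flag issues and do not correct them.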

Summary

We hope the discussions in parts 1 and 2 helped you understand where to begin. It doesn’t need to stay theoretical or feel unachievable.

- The first step is simple: recognise product data as an asset that powers performance, not just admin. Then treat it accordingly.

- You don’t need a 5-year roadmap or a board-approved strategy before you begin. Start by identifying the product data that supports your most critical workflows, the stuff that breaks things when it’s wrong or missing. Work out what “good enough” looks like for that data at each phase of the lifecycle. Then look around your business: who owns it, who touches it, and who cares when it fails?

- From there, establish the roles, rules, and routines that help this data improve over time, even if it’s manual and messy to begin with. Add tooling where it helps.

- Use quality KPIs that reflect the business, not the system. Focus your governance efforts where there’s friction, waste, or rework.

- And where are you already getting value? Lock it in. Scale what works.

Conclusion

It’s not about perfection or policies; it’s about momentum and value. Data quality is a lever. Data governance is how you pull it.

Just start pulling, and then get serious with your AI applications!

Are you attending the PLM Roadmap/PDT Europe 2025 conference on November 5th & 6th in Paris, La Defense?

There is an opportunity to discuss the future of PLM in a workshop before the event.

More information will be shared soon; please mark November 4th in the afternoon on your agenda.

In the last two weeks, I have had mixed discussions related to PLM, where I realized there are two different ways people can look at PLM. Is implementing PLM capabilities driven by a cost-benefit analysis and a business case? Or is implementing PLM capabilities driven by a strategy providing business value for a company?

Most companies I am working with focus on the first option – there needs to be a business case.

This observation is a pleasant passageway into a broader discussion started by Rob Ferrone recently with his article Money for nothing and PLM for free. He explains the PDM cost of doing business, which goes beyond the software’s cost. Often, companies consider the other expenses inescapable.

At the same time, Benedict Smith wrote some visionary posts about the potential power of an AI-driven PLM strategy, the most recent article being PLM augmentation – Panning for Gold.

It is a visionary article about what is possible in the PLM space (if there were no legacy ☹), based on Robust Reasoning and how you could even start with LLM Augmentation for PLM “Micro-Tasks”.

Interestingly, the articles from both Rob and Benedict were supported by AI-generated images – I believe this is the future: Creating an AI image of the message you have in mind.

When you have digested their articles, it is time to dive deeper into the different perspectives of value and costs for PLM.

From a system to a strategy

The biggest obstacle I have discovered is that people relate PLM to a system or, even worse, to an engineering tool. This 20-year-old misunderstanding probably comes from the fact that in the past, implementing PLM was more an IT activity – providing the best support for engineers and their data – than a business-driven set of capabilities needed to support the product lifecycle.

The System approach

Traditional organizations are siloed, and from the start, PLM has faced the challenge of supporting product information shared throughout the whole lifecycle. No discipline had a natural incentive to invest in sharing: every discipline has its own P&L, and sharing comes with a cost.

At the management level, the financial data coming from the ERP system drives the business. ERP systems are transactional and can provide real-time data about the company’s performance. C-level management wants to be sure they can see what is happening, so there is a massive focus on implementing the best ERP system.

In some cases, I noticed that the investment in ERP was twenty times more than the PLM investment.

Why would you invest in PLM? Although the ERP engine will slow down without proper PLM, the complexity of PLM compared to ERP is a reason for management to look at the costs, as the PLM benefits are hard to grasp and depend on so much more than just execution.

See also my old 2015 article: How do you measure collaboration?

As I mentioned, the Cost of Non-Quality – too many iterations, time lost searching, material scrap, manufacturing delays, or customer complaints – is often considered an inescapable part of doing business (like everyone else); it happens all the time.

The strategy approach

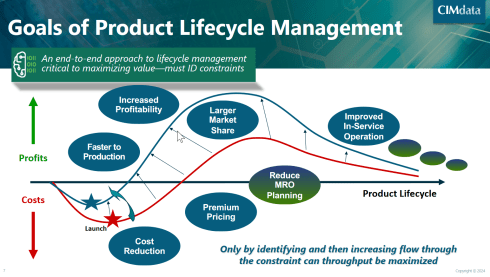

It is clear that when we accept the modern definition of PLM, we should be considering product lifecycle management as the management of the product lifecycle (as Patrick Hillberg says eloquently in our Share PLM podcast – see the image at the bottom of this post, too).

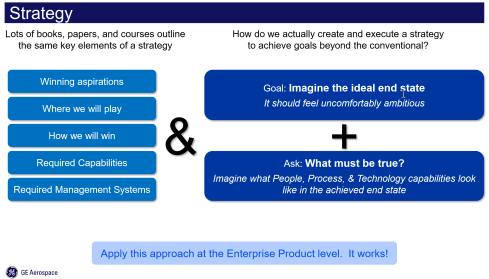

When you implement a strategy, it is evident that there should be a long(er) term vision behind it, which can be challenging for companies. Also, please read my previous article: The importance of a (PLM) vision.

I cannot believe that the importance of a data-driven approach, although perhaps not fully understood, is not being discussed at many strategic board meetings. A data-driven approach is needed to implement a digital thread as the foundation for enhanced business models based on digital twins and to ensure data quality and governance supporting AI initiatives.

It is a process I have been preaching: From Coordinated to Coordinated and Connected.

We can be sure that at the board level, strategy discussions should be about value creation, not about reducing costs or avoiding risks as the future strategy.

Understanding the (PLM) value

The biggest challenge for companies is to understand how to modernize their PLM infrastructure to bring value.

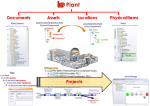

* Step 1 is obvious. Stop considering PLM as a system with capabilities; instead, investigate how you transform your infrastructure from a collection of systems and (document) interfaces towards a federated infrastructure of connected tools.

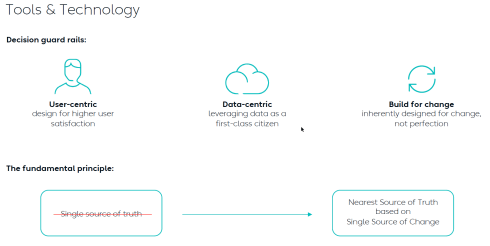

Note: the paradigm shift from a Single Source of Truth (in my system) towards a Nearest Source of Truth and a Single Source of Change.

* Step 2 is education. A data-driven approach creates new opportunities and impacts how companies should run their business. Different skills are needed, and other organizational structures are required, from disciplines working in siloes to hybrid organizations where people can work in domain-driven environments (the Systems of Record) and product-centric teams (the System of Engagement). AI tools and capabilities will likely create an effortless flow of information within the enterprise.

* Step 3 is building a compelling story to implement the vision. Implementing new ways of working based on new technical capabilities also requires organizational change. If your organization keeps working in the same way, you might gain some percentage of efficiency improvements.

The real benefits come from doing things differently, and technology allows you to do it differently. However, this requires people to work differently, too, and overlooking this is the most common mistake in transformational projects.

Companies understand the WHY and WHAT but leave the HOW to the middle management.

People are squeezed into an ideal performance without taking them on the journey. For that reason, it is essential to build a compelling story that motivates individuals to join the transformation. Assisting companies in building compelling story lines is one of the areas where I specialize.

Feel free to contact me to explore the opportunity for your business.

It is not the technology!

With the upcoming availability of AI tools, implementing a PLM strategy will no longer depend on how IT understands the technology, the systems and the interfaces needed.

As Yousef Hooshmand’s image above describes, a federated infrastructure of connected (SaaS) solutions will enable companies to focus on accurate data (priority #1) and people creating and using accurate data (priority #1). As you can see, people and data in modern PLM are the highest priority.

Therefore, I look forward to participating in the upcoming Share PLM Summit on 27-28 May in Jerez.

It will be a breakthrough: where traditional PLM conferences focus on technology and best practices, this conference will focus on how we can involve and motivate people. Regardless of which industry you are active in, it is a universal topic for any company that wants to transform.

Conclusion

Returning to this article’s introduction, modern PLM is an opportunity to transform the business and make it future-proof. It certainly needs to be done, now or in the near future. Therefore, PLM initiatives should be considered from the value point of view first instead of focusing on the costs. How well are you connected to your management’s vision to make PLM a value discussion?

Enjoy the podcast – several topics discussed relate to this post.

Four years ago, I wrote a series of posts with the common theme: The road to model-based and connected PLM. I discussed the various aspects of model-based and the transition from considering PLM as a system towards considering PLM as a strategy to implement a connected infrastructure.

Since then, a lot has happened. The terminology of Digital Twin and Digital Thread has become better understood. The difference between Coordinated and Connected ways of working has become more apparent. Spoiler: You need both ways. And at this moment, Artificial Intelligence (AI) has become a new hype.

Many current discussions in the PLM domain are about structures and data connectivity, Bills of Materials (BOM), or Bills of Information (BOI), combined with the new term Digital Thread as a Service (DTaaS) introduced by Oleg Shilovitsky and Rob Ferrone. Here, we envision a digitally connected enterprise, based on connected services.

A lot can be explored in this direction; also relevant is Lionel Grealou’s article on Engineering.com, RIP SaaS, long live AI-as-a-service, and the follow-up discussions related to this topic. I chimed in with Data, Processes and AI.

However, we also need to focus on the term model-based or model-driven. When we talk about models currently, Large Language Models (LLM) are the hype, and when you are working in the design space, 3D CAD models might be your first association.

There is still confusion in the PLM domain: what do we mean by model-based, and where are we progressing with working model-based?

A topic I want to explore in this post.

It is not only Model-Based Definition (MBD)

Before I started The Road to Model-Based series, there was already the misunderstanding that model-based means 3D CAD model-based. See my post from that time: Model-Based – the confusion.

Model-Based Definition (MBD) is an excellent first step in understanding information continuity, in this case primarily between engineering and manufacturing, where the annotated model is used as the source for manufacturing.

In this way, there is no need for separate 2D drawings with manufacturing details, reducing the extra need to keep the engineering and manufacturing information in sync and, in addition, reducing the chance of misinterpretations.

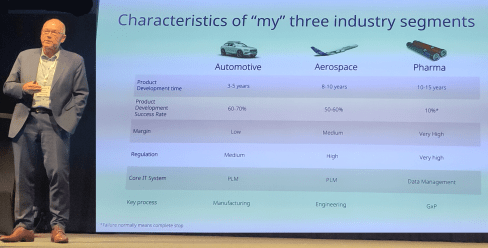

MBD is a common practice in aerospace and particularly in the automotive industry. Other industries are struggling to introduce MBD, either because the OEM is not ready or willing to share information in a different format than 3D + 2D drawings, or because their suppliers consider MBD too complex compared to their current document-driven approach.

In its current practice, we must remember that MBD is part of a coordinated approach.

Companies exchange technical data packages based on potential MBD standards (ASME Y14.47 / ISO 16792, but also JT and 3D PDF). It is not yet part of the connected enterprise, but it connects engineering and manufacturing using the 3D Model as the core information carrier.

As I wrote, learning to work with MBD is a stepping stone in understanding a modern model-based and data-driven enterprise. See my 2022 post: Why Model-based Definition is important for us all.

To conclude on MBD, Model-based definition is a crucial practice to improve collaboration between engineering, manufacturing, and suppliers, and it might be parallel to collaborative BOM structures.

And it is transformational as the following benefits are reported through ChatGPT:

- Up to 30% faster in product development cycles due to reduced need for 2D drawings and fewer design iterations. Boeing reported a 50% reduction in engineering change requests by using MBD.

- Companies using MBD see a 20–50% reduction in manufacturing errors caused by misinterpretations of 2D drawings. Caterpillar reported a 30% improvement in first-pass yield due to better communication between design and manufacturing teams.

- MBD can reduce product launch time by 20–50% by eliminating bottlenecks related to traditional drawings and manual data entry.

- 20–30% reduction in documentation costs by eliminating or reducing 2D drawings. Up to 60% savings on rework and scrap costs by reducing errors and inconsistencies.

Over five years, Lockheed Martin achieved a $300 million cost savings by implementing MBD across parts of its supply chain.

MBSE is not a silo.

For many people, Model-Based Systems Engineering (MBSE) seems to be something not relevant to their business, or a discipline for a small group of specialists who conduct systems engineering practices, not in the traditional document-driven V-shape approach but in an iterative process following the V-shape, while using models to predict and verify assumptions.

And what is its value when connected to a PLM environment?

A quick heads up – what is a model

A model is a simplified representation of a system, process, or concept used to understand, predict, or optimize real-world phenomena. Models can be mathematical, computational, or conceptual.

We need models to:

- Simplify Complexity – Break down intricate systems into manageable components and focus on the main components.

- Make Predictions – Forecast outcomes in science, engineering, and economics by simulating behavior – Large Language Models, Machine Learning.

- Optimize Decisions – Improve efficiency in various fields like AI, finance, and logistics by running simulations and finding the best virtual solution to apply.

- Test Hypotheses – Evaluate scenarios without real-world risks or costs, for example, a virtual crash test.

It is important to realize that models are only as accurate as the data elements they run on. Every modeling practice has a certain need for base data, be it measurements, formulas, or statistics.
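A tiny example may help to see how a model depends on its base data. The sketch below uses the textbook constant-deceleration formula for braking distance, chosen purely for illustration; the speed and friction values are assumptions and do not come from any of the referenced sources.

```python
G = 9.81  # gravitational acceleration in m/s^2


def braking_distance_m(speed_m_s: float, friction_coefficient: float) -> float:
    """Idealised stopping distance: v^2 / (2 * mu * g)."""
    return speed_m_s ** 2 / (2 * friction_coefficient * G)


speed = 27.8  # roughly 100 km/h, in m/s

# Same model, two versions of the base data: the second friction value
# is the first one measured 10% too low.
nominal = braking_distance_m(speed, friction_coefficient=0.70)
degraded = braking_distance_m(speed, friction_coefficient=0.63)

relative_error = (degraded - nominal) / nominal
print(f"Nominal prediction:  {nominal:.1f} m")
print(f"With 10% input error: {degraded:.1f} m ({relative_error:.1%} off)")
```

The formula is unchanged in both runs; only the input differs, and the prediction shifts by about 11%. The same holds for far more complex simulations: the model cannot compensate for weak base data.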

I watched and listened to the interesting podcast below, where Jonathan Scott and Pat Coulehan discuss this topic: Bridging MBSE and PLM: Overcoming Challenges in Digital Engineering. If you have time – watch it to grasp the challenges.

The challenge in an MBSE environment is that it is not a single tool with a single version of the truth; it is merely a federated environment of shared datasets that are interpreted by modeling applications to understand and define the behavior of a product.

In addition, an interesting article from Nicolas Figay might help you understand the value for a broader audience. Read his article: MBSE: Beyond Diagrams – Unlocking Model Intelligence for Computer-Aided Engineering.

Ultimately, and this is the agreement I found at many PLM conferences, we agree that MBSE practices are the foundation for downstream processes and operations.

We need a data-driven modeling environment to implement Digital Twins, which can span multiple systems and diagrams.

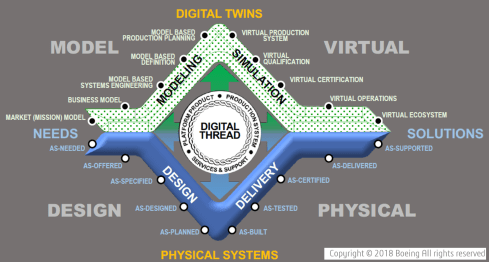

In this context, I like the Boeing diamond presented by Don Farr at the 2018 PLM Roadmap EMEA conference. It is a model view of a system, where between the virtual and the physical flow, we will have data flowing through a digital thread.

Where this image describes a model-based, data-driven infrastructure to deliver a solution, we can, in addition, apply the DevOps approach to the bigger picture for solutions in operation, as depicted by the PTC image below.

Model-based: the foundation of the digital twins

To conclude on MBSE, I hope it is clear why I promote considering MBSE not only as the environment to conceptualize a solution but also as the foundation for a digital enterprise where information is connected through digital threads and AI models (**new**).

The data borders between traditional system domains will disappear. The Single Source of Change and Nearest Source of Truth paradigm, and this post, The Big Blocks of Future Lifecycle Management, from Prof. Dr. Jörg Fischer, are all about data domains.

However, having accessible data using all kinds of modern data sources and tools is necessary to build digital twins – either to simulate and predict a physical solution or to analyze a physical solution and, based on the analysis, either adjust the solution or improve your virtual simulations.

Digital Twins at any stage of the product life cycle are crucial to developing and maintaining sustainable solutions, as I discussed in previous lectures. See the image below:

Conclusion

Data quality and architecture are the future of a modern digital enterprise – the building blocks. And there is a lot of discussion related to Artificial Intelligence. This will only work when we master the methodology and practices related to a data-driven and sustainable approach using models. MBD is not new and MBSE is perhaps still new; both are building blocks for a model-based approach. Where are you in your lifecycle?

Most times in this PLM and Sustainability series, Klaus Brettschneider and Jos Voskuil from the PLM Green Global Alliance core team speak with PLM related vendors or service partners.

This year we have been speaking with Transition Technologies PSC, Configit, aPriori, Makersite and the PLM Vendors PTC, Siemens and SAP.

Where the first group of companies provided complementary software offerings to support sustainability – “the fourth dimension”– the PLM vendors focused more on the solutions within their portfolio.

This time we spoke with CIMPA PLM services, a company supporting its customers with PLM and Sustainability challenges, offering end-to-end support.

What makes them special is that they are also core partner of the PLM Global Green Alliance, where they moderate the Circular Economy theme – read their introduction here: PLM and Circular Economy.

CIMPA PLM services

We spoke with Pierre DAVID and Mahdi BESBES from CIMPA PLM services. Pierre is an environmental engineer and Mahdi is a consulting manager focusing on parts/components traceability in the context of sustainability and a circular economy. Many of the activities described by Pierre and Mahdi were related to the aerospace industry.

We had an enjoyable and in-depth discussion of sustainability, as the aerospace industry is well-advanced in traceability during the upstream design processes. Good digital traceability is an excellent foundation to extend for sustainability purposes.

CSRD, LCA, DPP, AI and more

A bunch of abbreviations you will have to learn. We went through the need for a data-driven PLM infrastructure to support sustainability initiatives, like Life Cycle Assessments and more. We zoomed in on the current Corporate Sustainability Reporting Directive (CSRD), highlighting the challenges with the CSRD guidelines and how to connect the strategy (why we do the CSRD) to its execution (providing reports and KPIs that make sense to individuals).

In addition, we discussed the importance of using the proper methodology and databases for lifecycle assessments. Looking forward, we discussed the potential of AI and the value of the Digital Product Passport for products in service.

Enjoy the 37-minute discussion, and you are always welcome to comment or start a discussion with us.

What we learned

- Sustainability initiatives are quite mature in the aerospace industry, and thanks to its inherent focus on traceability, this industry is leading in methodology and best practices.

- The various challenges with the CSRD – standardization, strategy and execution.

- The importance of the right databases when performing lifecycle analysis.

- CIMPA is working on how AI can be used for assessing environmental impacts and on the value of the Digital Product Passport for products in service to extend its traceability.

Want to learn more?

Here are some links related to the topics discussed in our meeting:

- CIMPA’s theme page on the PLM Green website: PLM and Circular Economy

- CIMPA’s commitments towards A sustainable, human and guiding approach

- Sopra Steria, CIMPA’s parent company: INSIDE #8 magazine

Conclusion

The discussion was insightful, given the advanced environment in which CIMPA consultants operate compared to other manufacturing industries. Our dialogue offered valuable lessons from the aerospace industry that others can draw on to advance and better understand their sustainability initiatives.

Recently, I noticed I reduced my blogging activities as many topics have already been discussed and repeatedly published without new content.

With the rise of Gen AI and ChatGPT, I believe my PLM feeds are flooded with AI-generated blog posts.

The ChatGPT option

Most companies are not frontrunners in using extremely modern PLM concepts, so you can type risk-free questions and get common-sense answers.

I just tried these five questions:

- Why do we need an MBOM in PLM, and which industries benefit the most?

- What is the difference between a PLM system and a PLM strategy?

- Why do so many PLM projects fail?

- Why do so many ERP projects fail?

- What are the changes and benefits of a model-based approach to product lifecycle management?

Note: Questions 3 and 4 have very similar causes and impacts, although slightly different, which is to be expected given the scope of the domain.

All these questions provided enough information for a blog post based on the answer. This illustrates that if you are writing about current best practices in the field – stop writing – the knowledge is there.

PLM in real life

Recently, I had several discussions about which skills a PLM expert should have or which topics a PLM project should address.

PLM for the individual

For the individual, there are often certifications to obtain. Roger Tempest has been fighting for PLM professional recognition through certification – a challenge due to the broad scope and possibilities. Read more about Roger’s work in this post: PLM is complex (and we have to accept it?)

PLM vendors and system integrators often certify their staff or resellers to guarantee the quality of their solution delivery. Potential topics will be missed as they do not fulfill the vendor’s or integrator’s business purpose.

Asking ChatGPT about the required skills for a PLM expert, these were the top 5 answers:

- Technical skills

- Domain Knowledge

- Analytical and Problem-Solving Skills

- Interpersonal and Management Skills

- Strategic Thinking

It was interesting to see the order proposed by ChatGPT. First the tools (technology), then the processes (domain knowledge / analytical thinking), and last the people and business (strategy and interpersonal and management skills). It is hard to find all these skills in a single person.

Although we want people to be that broad in their skills, job offerings are mainly looking for the expert in one domain, be it strategy, communication, industry or technology. To get an impression of the skills, read my PLM and Education concluding blog post.

Now, let’s see what it means for an organization.

PLM for the organization

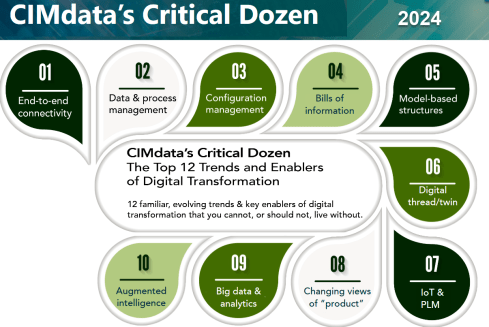

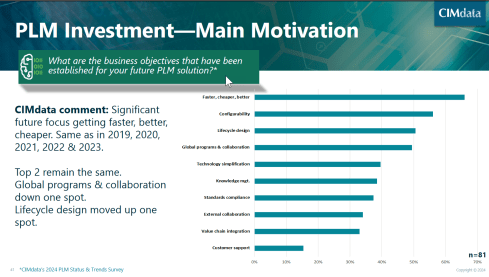

In this area, one of the most consistent frameworks I have seen over time is CIMdata’s Critical Dozen. It refers less to skills and more to the trends and enablers a company should invest in – educating people and building skills – to support a successful digital transformation in the PLM domain.

Oleg Shilovitsky’s recent blog post, The 12 “P”s of PLM Explained by Role: How to Make PLM More Than Just a Buzzword, describes in an AI manner the various aspects of the term PLM, using 12 P-words, reacting to Lionel Grealou’s post: Making PLM Great Again.

The challenge I see with these types of posts is: “OK, what to do now? Where to start?”

I believe there is common agreement on where to start in the first place.

Everything starts from having a purpose and a vision. And this vision should be supported by a motivating story about the WHY that inspires everyone.

It is teamwork to define such a strategy, communicate it through a compelling story and make it personal. An excellent book to read is Make it personal by Dr. Cara Antoine – click on the image to discover the content and find my review of why I believe this book is so compelling.

An important reason why we have to make transformations personal is that we are dealing first of all with human beings. And human beings are driven by emotions first, even before reason kicks in. We see it everywhere and, unfortunately, also in politics.

The HOW from real-life

This question cannot be answered by external PLM vendors, consultants or system integrators. Forget the Out-of-the-Box templates or the industry best practices (from the past), but start from your company’s culture and vision, introducing step-by-step new technologies, ways of working and business models to move towards the company’s vision target.

Building the HOW is not an easy journey, and to illustrate the variety of skills needed to be successful, I worked with Share PLM on their Series 2 podcast. You can find the complete overview here. There is one more to come to conclude this year.

Our focus was to speak only with PLM experts from the field, understanding their day-to-day challenges with a focus on HOW they did it and WHAT they learned.

And this is what we learned:

Unveiling FLSmidth’s Industrial Equipment PLM Transformation: From Projects to Products

It was our first episode of Series 2, and we spoke with Johan Mikkelä, Head of the PLM Solution Architecture at FLSmidth.

FLSmidth provides the global mining and cement industries with equipment and services, which is very much an ETO business moving towards CTO.

We discussed their Industrial Equipment PLM Transformation and the impact it has made.

Start With People: ABB’s Engineering Approach to Digital Transformation

We spoke with Issam Darraj, who shared his thoughts on human-centric digitalization. Issam talks us through ABB’s engineering perspective on driving transformation and discusses the importance of focusing on your people. Our favorite quote:

To grow, you need to focus on your people. If your people are happy, you will automatically grow. If your people are unhappy, they will leave you or work against you.

Enabling change: Exploring the human side of digital transformations

We spoke with Antonio Casaschi as he shared his thoughts on the human side of digital transformation. When discussing the PLM expert, he agrees it is difficult. Our favorite part here:

“I see a PLM expert as someone with a lot of experience in organizational change management. Of course, maybe people with a different background can see a PLM expert with someone with a lot of knowledge of how you develop products, all the best practices around products, etc. We first need to agree on what a PLM expert is, and then we can agree on how you become an expert in such a domain.”

Revolutionizing PLM: Insights from Yousef Hooshmand

With Dr. Yousef Hooshmand, author of the paper From a Monolithic PLM Landscape to a Federated Domain and Data Mesh, who has over 15 years of experience in the PLM domain and is currently PLM Lead at NIO, we discussed the complexity of digital transformation in the PLM domain and how to deal with legacy while implementing a user-centric, data-driven future.

My favorite quote: The End of Single Source of Truth, now it is about The nearest Source of Truth and Single Source of Change.

Steadfast Consistency: Delving into Configuration Management with Martijn Dullaart

Martijn Dullaart, the man behind the blog MDUX: The Future of CM and author of the book The Essential Guide to Part Re-Identification: Unleash the Power of Interchangeability and Traceability, has been active in both the PLM and CM domains. With Martijn, we discussed the similarities and differences between PLM and CM and why organizations need to be educated on the topic of CM.

The ROI of Digitalization: A Deep Dive into Business Value with Susanna Maëntausta

With Susanna Maëntausta, we discussed how to implement PLM in non-traditional manufacturing industries, such as the chemical and pharmaceutical industries.

Susanna teaches us to ensure PLM projects are value-driven, connecting business objectives and KPIs to the implementation and execution steps in the field. Susanna is highly skilled in connecting people at any level of the organization.

Narratives of Change: Grundfos Transformation Tales with Björn Axling