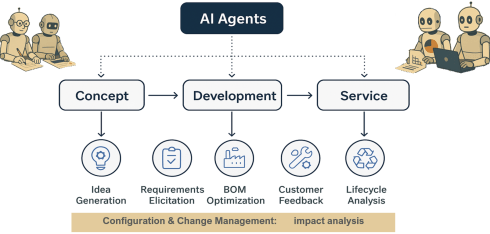

Recently, I have been reading some interesting posts beyond all the technical discussions related to PLM and AI. Is PLM becoming obsolete? Are we heading to a new type of infrastructure based on MCP agents? Are these agents an example of new ways of collaboration?

Collaboration – it pops up everywhere!

Here is a quote from the article that triggered me:

The **number one reason** organizations deploy MBSE is not simulation or architecture development. It is **enhanced collaboration and communication** – at 67%. But here is the uncomfortable part.

Only 24% reported actually achieving collaboration as a business outcome. That is a 43-point gap between intent and result. Traceability is even worse — 48% deploy MBSE for it, 9% say they have realized it.

What if the problem is not that MBSE fails to deliver collaboration – but that most organizations **never define what better collaboration looks like** in measurable terms?

Chad Jackson’s article aligns with many other discussions I have had with companies related to PLM (and MBSE) – it inspired me to focus this time on collaboration.

How do we measure collaboration?

My 2015 blog post has the same title: How do you measure collaboration? The post was written at a time when PLM collaboration had to compete with ERP execution stories. Often, engineering collaboration was considered an inefficient process to be fixed in the future, according to some ERP vendors.

ERP always had a strong voice at the management level—boxes on an org chart, reporting lines, clear ownership and KPIs flowing upward. You could see how the company was performing.

From the management side, accountability flows downward. The architecture of the organization mirrors the architecture of the product, and the architecture of the product mirrors the architecture of the organization.

We have known this for decades; it is Conway’s Law. Yet we are still surprised when silos emerge exactly where we designed them.

The Management Dilemma

In many of my engagements, the company’s management often struggles to understand the value of collaboration because there is no direct line between collaboration and immediate performance. Revenue can be measured. Cycle times can be measured. Defects can be measured. Even employee turnover can be measured.

But collaboration? What is the KPI?

It is a fair question. If something cannot be quantified, it becomes subjective and depends on gut feelings. And if it cannot be tied directly to quarterly results, it often becomes optional.

The problem is not that collaboration has no impact on performance – look at the introduction of email in companies. Did your company make a business case for that?

Still, it improved collaboration a lot, and sometimes it became a burden with all the CC-messages and epistles exchanged.

Collaboration has an impact, deeply and systematically. But its impact is indirect, delayed, and distributed. It reduces friction, can improve shared understanding and prevent expensive rework.

The return on investment on collaboration is real, but it does not show up as a clean, linear metric.

For a hierarchical and linearly structured organization, horizontal collaboration is often hard to “sell.”

Back to Conway’s Law

Organizational structure shapes communication patterns. Communication patterns shape systems.

If your organization is vertical, your product will be vertical. If your incentives are local, your decisions will be local. If your teams are isolated, your solutions will be fragmented.

You cannot expect horizontal behavior from a vertically optimized structure without friction.

Disconnected collaboration initiatives fail because they try to overlay horizontal tools on top of vertical incentives.

Attempts like a new collaboration platform or using shared workspace technology to incentivize collaboration are examples of this approach.

But the underlying structure remains untouched. People are still measured on local performance. Budgets are still allocated per department. Promotions still reward vertical success.

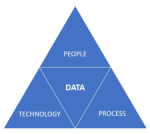

First question to ask in your company: Who is responsible for your PLM/collaboration infrastructure for non-transactional information?

Most likely, it is in the IT or Engineering silo, rarely on a higher organizational level.

And then we are surprised when collaboration stalls?

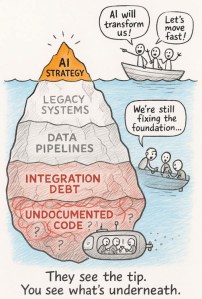

The Myth of the Tool

Whenever collaboration becomes a pain, people look for IT tools as a cure.

“We need better platforms.”

“We need integrated systems.”

and now:

“We need AI – the AI agents will do the collaboration for us.”

Tools matter, but they are amplifiers. They amplify existing behavior. They do not create it. While finalizing this article, I saw this post from Dr. Sebastian Wernicke coming in, containing this quote:

Agents are software. Maturity is culture. And culture, inconveniently, doesn’t come with an install package.

If trust is low, a collaboration platform becomes a battlefield. If incentives are misaligned, shared dashboards become weapons. If fear dominates, transparency becomes a threat.

Collaboration is not a software problem. It is a human problem. Which brings us to something that is rarely discussed in boardrooms: the intrinsic motivation of employees.

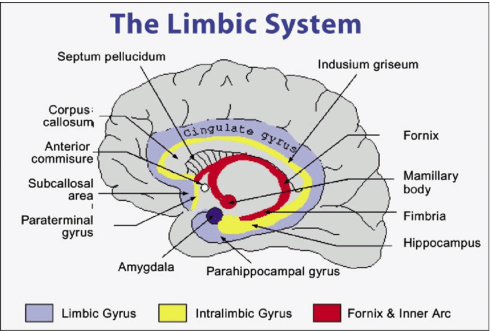

The Limbic Brain Is Always There

Beneath the rational layer of strategy and planning sits something older: the limbic system. The part of us that cares about belonging, safety, recognition, autonomy, and purpose.

Collaboration thrives when the limbic brain’s needs are met. It collapses when they are threatened.

- If people feel unsafe, they protect information!

- If they feel undervalued, they withdraw effort!

- If they feel controlled, they resist alignment!

You cannot mandate collaboration if the emotional system of the organization is defensive.

The question is not “How do we force collaboration?”

The question is “How do we create conditions where collaboration feels natural?”

And that requires leaders to connect to the human, not just to the role or an artificial intelligence solution. They should be inspired by this iconic image from Share PLM:

Besides a difficult-to-quantify ROI, there is another reason why collaboration struggles to gain executive traction: it rarely creates immediate success.

It prevents future failure, and we humans in general do not prioritize prevention; think of our environmental, financial, and potentially even health behavior. Although prevention has the lowest cost, most of the time fixing the damage afterwards lies in our nature.

For companies, it is easier to celebrate the hero who fixes a late-stage integration disaster than the quiet team that prevented it months earlier through cross-functional dialogue.

For me, the firefighters are the biggest challenge to successfully implementing a PLM infrastructure. The image to the left comes from a 2014 presentation discussing potential resistance to a successful PLM implementation.

In vertical systems, firefighting is visible. Prevention is silent and therefore collaboration activities feel like a cost center rather than a strategic lever.

Where to Push, Where to Invest?

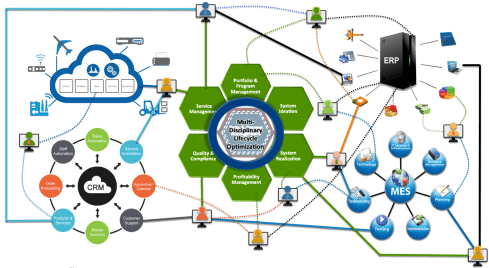

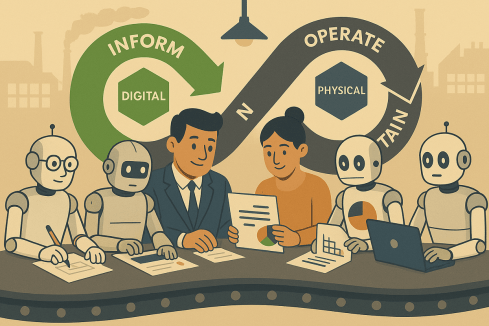

If you cannot directly measure collaboration, where should you push? Not in tools alone, slogans or one-off workshops. Invest in shared experiences.

When people meet outside their vertical silos, something subtle shifts. They see faces instead of functions. They understand constraints instead of assuming incompetence. They replace narratives with conversations.

Note: shared experiences are not the same as the planned online web meetings that became popular during and after COVID. Those follow a rigid regime of collaboration enforcement, back-to-back in many companies, and most of the time lack the typical “coffee machine” experiences.

Also, when looking at events where people share experiences, there is a difference between a traditional vertical PLM/CM/IT/ERP conference, where specialists focus on one discipline, and a human-centric conference, where people share their experiences in an organization.

The Share PLM Summit in May last year was an eye-opener for me. Starting from the human perspective brought a lot of energy and willingness to discuss various insights – collaboration at its best.

Events, summits, workshops—when done well—create human connection. They remind participants that behind every deliverable sits a person trying to do meaningful work.

The focus on the human perspective is not soft. It is strategic because collaboration is not primarily about information exchange. It is about relationship quality and trust.

The Real Question

The question is not whether collaboration is valuable. The question is whether we are willing to adjust our vertical incentives to make it possible.

Because collaboration is not free. It requires time. It requires emotional energy. It requires psychological safety. It sometimes requires giving up local control for global benefit.

In systems terms, it requires shifting from local optimization to whole-system optimization.

That is uncomfortable.

But if our products are complex, interconnected, and rapidly evolving—as most are today—then vertical thinking alone is no longer sufficient. The world has become horizontal, even if our org charts have not.

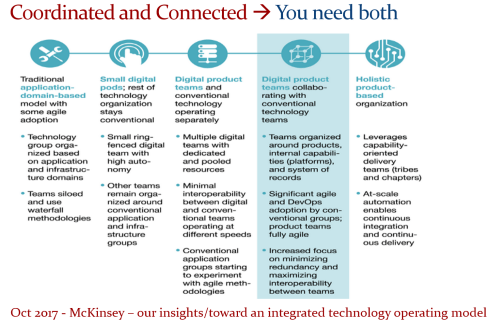

And perhaps the real challenge is not how to measure collaboration, but how to design organizations where collaboration is no longer something we need to sell at all. An article from McKinsey might inspire you here for this transition – for me, it did: Toward an integrated technology operating model.

Beyond AI

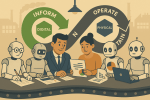

While everyone talks and writes about AI, I do not believe AI will solve the collaboration issue. For sure, AI collaboration with agents will increase personal and organizational effectiveness, but it never touches our limbic brain, the irreplaceable part that makes us typically human and unique.

There will always be a need for that, unless we become numb and addicted to the AI environments. There are various studies popping up on how AI “untrains” our brain muscles, reduces patience and deep thinking. Finding a new human balance is crucial.

Conclusion

Triggered by Chad Jackson’s post about MBSE and collaboration, I took the time to deep-dive into the aspects of collaboration in the PLM domain. How do you manage collaboration?

Come and share your experiences at the upcoming Share PLM 2026 summit from 19-20 May in Jerez. The title of my keynote: Are Humans Still Resources? Agentic AI and the Future of Work and PLM.

This blog post is especially written for our PLM Green Global Alliance LinkedIn members – a message from a “boomer” to the next generation of PLM enthusiasts.

If you belong to that next generation, please read until the end and share your thoughts.

With last week’s announcement from the US government that it no longer treats greenhouse gas emissions as a threat to the planet or climate, we see a push to remove regulations that keep companies from continuing or expanding business without considering the broader consequences for other countries and future generations.

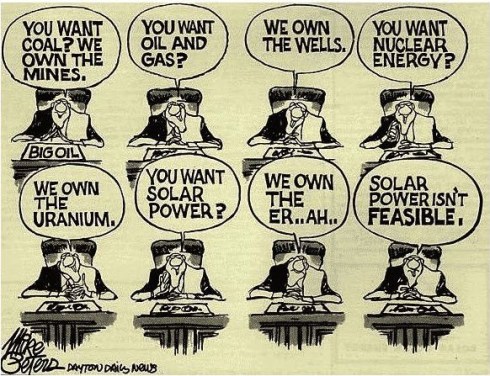

It feels like a short-term, greedy decision, largely influenced by those who benefit from fossil-carbon economies. Decisions like this make the energy transition harder, because the path of least resistance is always the easiest to follow.

Transitions are never simple. But when science is ignored, data is removed, and opinions replace facts, we are no longer supporting a transition — we are actively working against it.

My Story

When I started working in the PLM domain in 1999, climate change already existed in the background of society. The 1972 Limits to Growth report by the Club of Rome had created waves long before, encouraging some people to rethink business and lifestyle choices.

For me, however, it stayed outside my daily focus. I was at the beginning of my career, excited about the new challenges.

It is also important to note that connecting to the internet with a 28k modem was the standard. It was a world without social media constantly reminding us of global issues.

I enjoyed my role as the “Flying Dutchman,” travelling around the world to support PLM implementations and discussions. Flying was simply part of the job. Real communication meant being in the same room; early phone and video calls were expensive, awkward, and often ineffective. PLM was — and still is — a human business.

Back then, the effects of carbon emissions and global warming felt distant, almost abstract. Only around 2014 did the conversation become more mainstream for me, helped by social media, before algorithms and bots began driving polarization.

In 2015, while writing about PLM and global warming, I realized something that still resonates today: even when we understand change is needed, we often stick to familiar habits, because investments in the future rarely deliver immediate ROI for ourselves or our shareholders.

The PLM Green Global Alliance

When Rich McFall approached me in 2019 with the idea of creating an alliance where people and companies could share ideas and experiences around sustainability in the PLM domain, I was immediately interested — for two reasons.

- First, there was a certain sense of responsibility related to my past activities as the Flying Dutchman. Not guilt — life is about learning and gaining insight — but awareness that I needed to change, even if the past could not be changed.

- Second, and more importantly, the PLM Green Global Alliance offered a way to contribute. It gave me a reason to act — for personal peace of mind and for future generations. Not only for my children or grandchildren, but for all those who will share this planet with them.

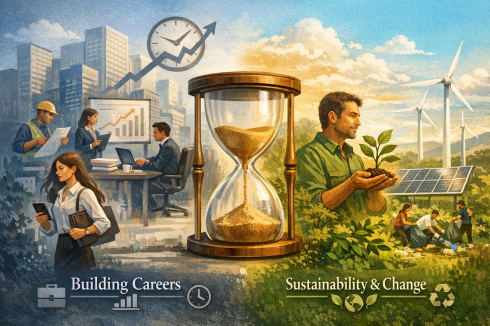

In the first years of the PGGA, we saw strong engagement from younger professionals. Over time, however, we noticed that career priorities often came first — which is understandable.

Like me at the start of my career, many focus first on building their future. Career and sustainability can coexist, but investing extra time in long-term change is not easy when daily responsibilities already demand so much.

Your Chance to Work on the Future

The real challenge lies with those willing to go the extra mile — staying focused on today’s business while also investing energy in the long-term future.

At the same time, I understand that not everyone is in a position to speak out or dedicate time to sustainability initiatives. Circumstances differ. For many, current responsibilities leave little space for additional commitments.

Still, for those willing to join us, we have two requests to better understand your expectations.

Two weeks ago, I connected with our 40 newest members of the PLM Green Global Alliance. We are now close to 1,600 members — up from around 1,500 in September 2025, as mentioned in Working on the Long Term.

That post was a gentle call to action. Seeing our PGGA membership continue to grow is encouraging — and naturally raises a question:

1. What motivates people to join the PGGA LinkedIn group?

So far, only a small number of the recent new members have completed the survey that was sent specifically to them to explore changing priorities. Due to the low response, we extended the invitation to all members. We are curious about your expectations – and quietly hopeful about your involvement.

If you haven’t filled in the survey yet, please click here and share your feedback. The survey is anonymous unless you choose to leave your details for follow-up. We will share the results in approximately 2 weeks from now.

2. Design for Sustainability – your contribution?

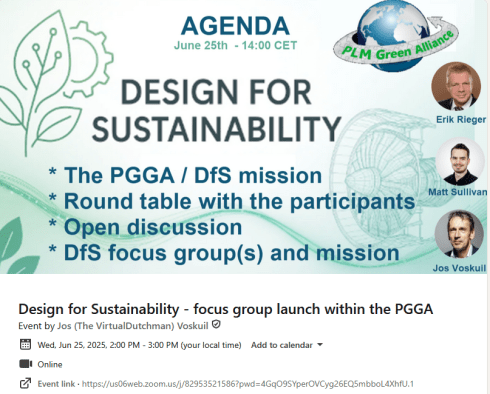

Last year, Erik Rieger and Matthew Sullivan launched a new workgroup within the PLM Green Global Alliance focused on Design for Sustainability. While the initial energy was strong, changes in personal priorities meant the team could not continue at the pace they hoped. As many new members have joined since last May, we decided to relaunch the initiative.

If you are interested in contributing to the revival of Design for Sustainability, please take five minutes to complete the short survey. Your input will help shape the direction of the DfS working group and frame future discussions.

Note: If you are worried about clicking on the links for the survey, you can always contact us directly (in private) to share your ambition.

Conclusion

The outside world often pushes us to focus only on daily business. In some places, there is even active pressure to avoid long-term sustainability investments. Remember that pressure often comes from those invested in keeping the current system unchanged.

If you care about the future — your generation and those that follow — stay engaged. Small actions by millions of people can create meaningful change.

We look forward to your input and participation.

— says the boomer who still cares 😉

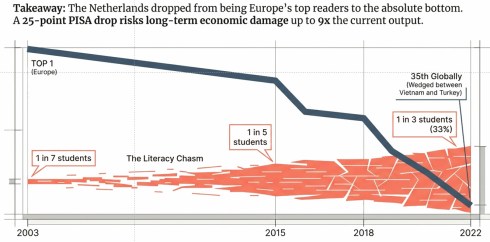

Last week, I wrote about the first day of the crowded PLM Roadmap/PDT Europe conference.

You can still read my post here in case you missed it: A very long week after PLM Roadmap / PDT Europe 2025

My conclusion from that post was that day 1 was a challenging day if you are a newbie in the domain of PLM and data-driven practices. We discussed and learned about relevant standards that support a digital enterprise, as well as the need for ontologies and semantic models to give data meaning and serve as a foundation for potential AI tools and use cases.

This post will focus on the other aspects of product lifecycle management – the evolving methodologies and the human side.

Note: I try to avoid the abbreviation PLM, as many of us in the field associate PLM with a system. For me, the system is more of an IT solution, whereas the strategy and practices are best named product lifecycle management.

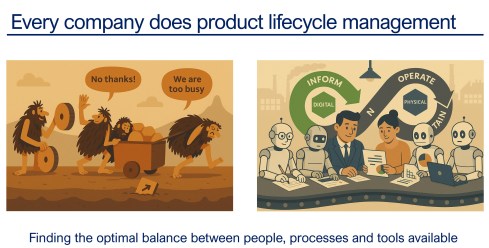

And as a reminder, I used the image above in other conversations. Every company does product lifecycle management; only the number of people, their processes, or their tools might differ. As Peter Bilello mentioned in his opening speech, the products are why the company exists.

Unlocking Efficiency with Model-Based Definition

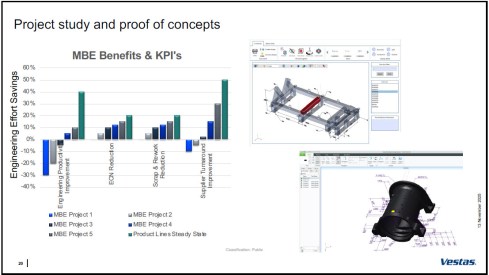

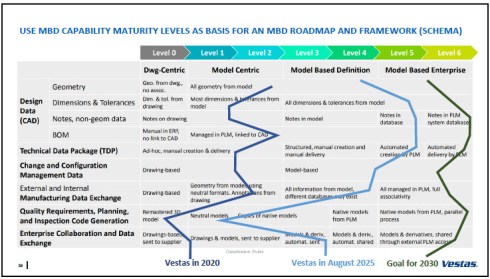

Day 2 started energetically with Dennys Gomes’ keynote, which introduced model-based definition (MBD) at Vestas, a world-leading OEM for wind turbines.

Personally, I consider MBD as one of the stepping stones to learning and mastering a model-based enterprise, although do not be confused by the term “model”. In MBD, we use the 3D CAD model as the source to manage and support a data-driven connection among engineering, manufacturing, and suppliers. The business benefits are clear, as reported by companies that follow this approach.

However, it also involves changes in technology, methodology, skills, and even contractual relations.

Dennys started by sharing the analysis they conducted on the amount of information in current manufacturing drawings. The image below shows that only the information marked in green was used, so the time and effort spent creating the rest was wasted.

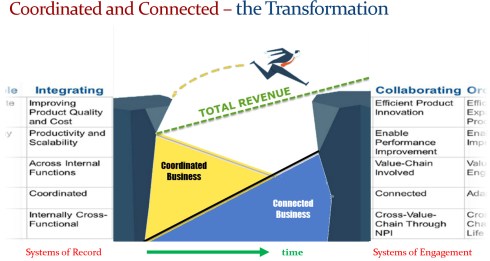

It was an opportunity to explore model-based definition, and the team ran several pilots to learn how to handle MBD, improve their skills, methodologies, and tool usage. As mentioned before, it is a profound change to move from coordinated to connected ways of working; it does not happen by simply installing a new tool.

The image above shows the learning phases and the ultimate benefits accomplished. Besides moving to a model-based definition of the information, Dennys mentioned they used the opportunity to simplify and automate the generation of the information.

Vestas is on a clear path, and it is interesting to see their ambition in the MBD roadmap below.

An inspirational story, hopefully motivating other companies to make this first step to a model-based enterprise. Perhaps difficult at the beginning from the people’s perspective, but as a business, it is a profitable and required direction.

Bridging The Gap Between IT and Business

It was a great pleasure to listen again to Peter Vind from Siemens Energy, who first explained to the audience how to position the role of an enterprise architect in a company compared to society. He mentioned he has to deal with the unicorns at the C-level, who, like politicians in a city, sometimes have the most “innovative” ideas – can they be realized?

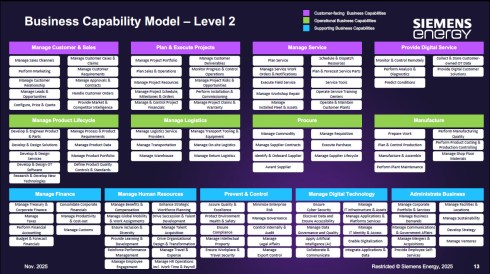

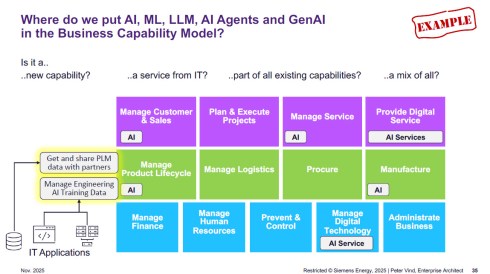

To answer these questions, Peter referred to the Business Capability Model (BCM) he uses as an Enterprise Architect.

Business Capabilities define ‘what’ a company needs to do to execute its strategy, are structured into logical clusters, and should be the foundation for the enterprise, on which both IT and business can come to a common approach.

The detailed image above is worth studying if you are interested in the levels and the mappings of the capabilities. The BCM approach was beneficial when the company became disconnected from Siemens AG, enabling it to rationalize its application portfolio.

Next, Peter zoomed in on some of the examples of how a BCM and structured application portfolio management can help to rationalize the AI hype/demand – where is it applicable, where does AI have impact – and as he illustrated, it is not that simple. With the BCM, you have a base for further analysis.

Other future-relevant topics he shared included how to address the introduction of the digital product passport and how the BCM methodology supports the shift in business models toward a modern “Power-as-a-Service” model.

He concluded that having a Business Capability Model gives you a stable foundation for managing your enterprise architecture now and into the future. The BCM complements other methodologies that connect business strategy to (IT) execution. See also my 2024 post: Don’t use the P** word! – 5 lessons learned.

Holistic PLM in Action

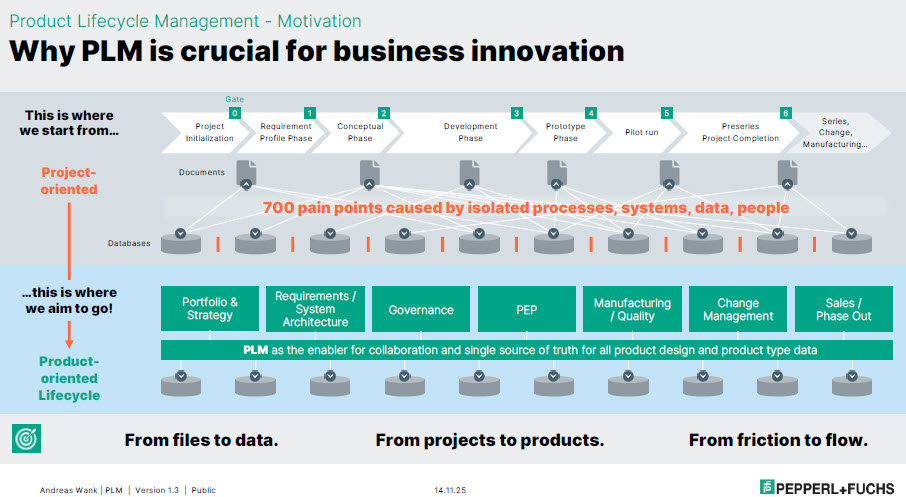

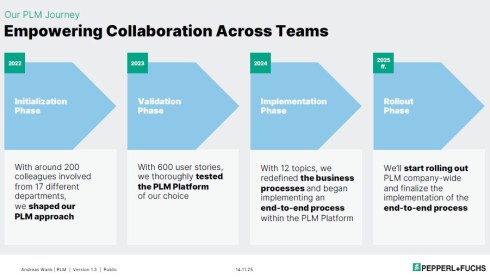

For companies struggling with their digital transformation in the PLM domain, Andreas Wank, Head of Smart Innovation at Pepperl+Fuchs SE, shared his journey so far. All the essential aspects of such a transformation were mentioned. Pepperl+Fuchs has a portfolio of approximately 15,000 products that combine hardware and software.

It started with the WHY. With such a massive portfolio, business innovation is under pressure without a PLM infrastructure. Too many changes, fragmented data, no single source of truth, and siloed ways of working lead to much rework, errors, and iterations that keep the company busy while missing the global value drivers.

Next, the journey!

The above image is an excellent way to communicate the why, what, and how to a broader audience. All the main messages are in the image, which helps people align with them.

The first phase of the project, creating digital continuity, is also an excellent example of digital transformation in traditional document-driven enterprises. Moving from files to data aligns with the From Coordinated to Connected theme.

Next, the focus was to describe these new ways of working with all stakeholders involved before starting the selection and implementation of PLM tools. This approach is so crucial, as one of my big lessons learned from the past is: “Never start a PLM implementation in R&D.”

If you start in R&D, the priority shifts away from the easy flow of data between all stakeholders; it becomes an R&D System that others will have to live with.

You never get a second first impression!

Pepperl+Fuchs spends a long time validating its PLM selection – something you might only see in privately owned companies that are not driven by shareholder demands, but take the time to prepare and understand their next move.

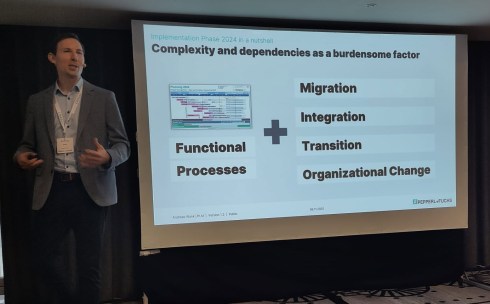

As Andreas also explained, it is not only about the functional processes. As the image shows, migration (often the elephant in the room) and integration with the other enterprise systems also need to be considered. And all of this is combined with managing the transition and the necessary organizational change.

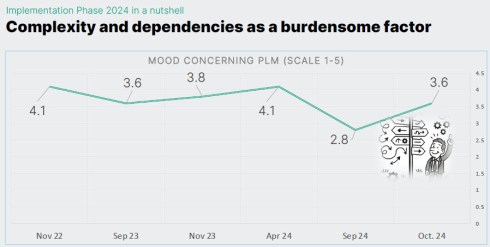

Andreas shared some best practices illustrating the focus on the transition and human aspects. They have implemented a regular survey to measure the PLM mood in the company. And when the mood dropped sharply on Sept 24, from 4.1 to 2.8 on a scale of 1 to 5, it was time to act.

They used one week at a separate location, where 30 of his colleagues worked on the reported issues in one room, leading to 70 decisions that week. And the result was measurable, as shown in the image below.

Andreas’s story was such a perfect fit for the discussions we have in the Share PLM podcast series that we asked him to tell it in more detail, also for those who have missed it. Subscribe and stay tuned for the podcast, coming soon.

Trust, Small Changes, and Transformation

Ashwath Sooriyanarayanan and Sofia Lindgren, both active at the corporate level in the PLM domain at Assa Abloy, came with an interesting story about their PLM lessons learned.

To understand their story, it is essential to understand that Assa Abloy is a special company, as the image below explains. With over 1000 sites, 200 production facilities, and, last year, a new acquisition every two weeks on average, it is hard to standardize the company from a central corporate organization.

However, this is precisely what Assa Abloy has been trying to do over the past few years. Working towards a single PLM system with generic processes for all, while spending a lot of time integrating and migrating data from the different entities, became a mission impossible.

To increase user acceptance, they fell into the trap of customizing the system ever more to meet many user demands. A dead end, as many other companies have probably experienced.

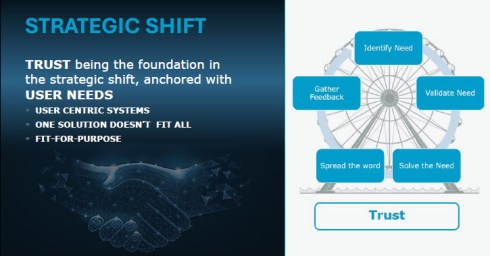

And then they came with a strategic shift. Instead of holding on to the past and the money invested in technology, they shifted to the human side.

The PLM group became a trusted organisation supporting the individual entities. Instead of telling them what to do (Top-Down), they talked with the local business and provided standardized PLM knowledge and capabilities where needed (Bottom-Up).

This “modular” approach made the PLM group the trusted partner of the individual business. A unique approach, making us realize that the human aspect remains part of implementing PLM.

Humans cannot be transformed

Given the length of this blog post, I will not spend too much text on my closing presentation at the conference. After a technical start on DAY 1, we gradually moved to broader, human-related topics in the latter part.

You can find my presentation here on SlideShare as usual, and perhaps the best summary from my session was given in this post from Paul Comis. Enjoy his conclusion.

Conclusion

Two and a half intensive days in Paris again at the PLM Roadmap / PDT Europe conference, where some of the crucial aspects of PLM were shared in detail. The value of the conference lies in the stories and discussions with the participants. Slides alone do not provide enough education. You need to be curious and active to discover the best perspective.

For those celebrating: Wishing you a wonderful Thanksgiving!

After a summer holiday in the south of Greece, it is time to resume my activities. The south of Crete is largely an analogue environment, far from any digital hype.

Tempted by LinkedIn posts, I noticed the summer was full of memories, with Martin Eigner sharing 40 years of PLM experience, Oleg Shilovitsky sharing 30 years of PDM Evolution, and Michael Finocchiaro publishing posts on PLM vendors, CAD kernels, and more.

So where do I stand? While digesting all these historical experiences, I reflected on what we can learn from them and what we didn’t learn from them.

It started with technology.

From 1990 to 1999, I worked with mid-market companies, where data management was the most significant challenge. The introduction of MS Windows made data management more user-friendly, evolving from drawing management systems with version and status management capabilities.

Who remembers Automanager Workflow from Cyco, before SmarTeam came on the market?

For that reason, in the early days, PDM was an IT job. As the PDM system primarily dealt with engineering data, it was relatively easy to implement as an organizational change process. We transitioned from analogue to electronic in the department.

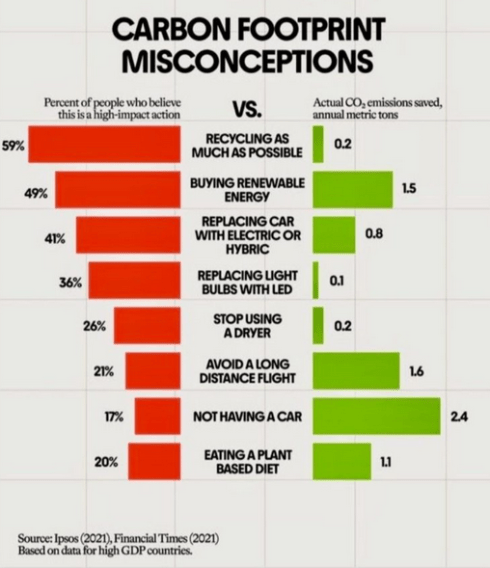

Connecting with other systems, particularly ERP, was a serious IT job and a financial challenge. The rapid decline in the cost of IT components, combined with the rapid growth of global connectivity, has created new opportunities for collaboration.

As part of the Dassault/IBM/SmarTeam organization, I explained and taught these new capabilities worldwide.

In 2008, my VirtualDutchman blog and coaching journey began, evolving from explanations of technology to modern methodologies, which led to organizational change and expectation management – skills not traditionally associated with IT.

Then came digital transformation

With growing connectivity, smartphones and Web 2.0 technology have led to more PLM-like discussions. PLM vendors expanded their scope and developed capabilities beyond mechanical engineering.

The expansion of capabilities was also the moment when the confusion about the term PLM reached its peak: a PLM strategy or a PLM system?

At the time, they were largely considered the same in discussions and advertisements.

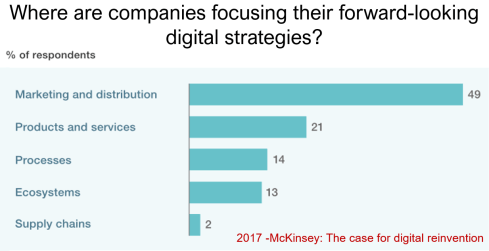

Meanwhile, digital transformation was occurring at the marketing and sales levels – companies invested in direct communication with their customers through the web.

Meanwhile, the internal ways of working for R&D, engineering, and manufacturing did not change significantly. Still, they were following linear processes, and despite the existence of 3D CAD, the 2D drawing remained the primary carrier of legal information between engineering, manufacturing, and suppliers.

Note: the option where the most benefits could be achieved – connected supply chains – had the lowest focus in 2017 – something that would change with COVID-19.

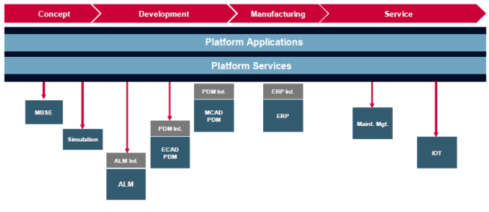

Fundamental digital transformation in the PLM domain occurred gradually. Aras came with its overlay approach (the platform), connecting various disciplines and enterprise systems. In contrast, Dassault Systèmes introduced its 3DEXPERIENCE platform, utilizing its own software brands as platform components.

Most PLM vendors rapidly countered Aras’ overlay approach with their low-code offerings based on Mendix, ThingWorx or Netvibes, to enable data flows beyond the traditional PDM scope. The Coordinated Digital Thread was born.

The good news is that PLM has now clearly become a strategy based on a federated system infrastructure. The single PLM system no longer exists, although many of us still use the term ‘PLM system’ to refer to the main component of a PLM infrastructure – the System of Record.

Moving to a federated PLM infrastructure is already a challenge for companies, not because of the available technology, but first of all because of the legacy data and, closely related to that, legacy processes and people skills.

Legacy is creating the inertia, not technology!

Next came the cloud – SaaS

With the availability of cloud solutions that support real-time interactions between stakeholders, either within an enterprise or in a value chain, a new paradigm has emerged: the connected enterprise.

A connected enterprise no longer needs interfaces to transfer data from one system to another.

Instead, with apps and dashboards, combined data from different online sources is presented in a single, user-friendly working environment – a combination of the Systems of Record with the new environments, the Systems of Engagement.

The technology used to create dashboards and apps is based on modern data-driven technologies and principles (ontologies, graph databases, and the semantic web). The Connected Digital Thread was born.

However, legacy systems play an essential role again, as some systems of engagement can be implemented in a complementary manner to the systems of record, allowing companies to work within an integrated technology model.

People will work in a particular mode, either coordinated or connected, but organizations can operate in both modes simultaneously. A story I have been sharing a lot – it is not about migrations but about an evolutionary approach towards an integrated technology model.

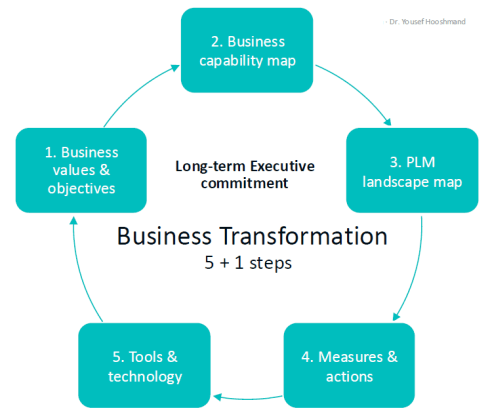

At this point, it becomes essential that business objectives drive the implementation of a PLM infrastructure. Of course, you hear me say we should start from the business; however, the big difference now is that a company should coordinate the technologies, systems, and tools it acquires to avoid isolated islands of information.

Follow Yousef Hooshmand’s 5 + 1 business transformation steps.

An open SaaS infrastructure enables a company to let data flow almost in real-time. There is a lot of discussion related to data quality and governance, and if you have missed it, please read these three articles I created together with Rob Ferrone, the original Product Data PLuMber:

- Data Quality and Data Governance – A hype? (part 1)

- Data Quality and Data Governance – the WHY and HOW (part 2)

- Building the Future: Data Quality and Governance in the Digital Age (part 3)

There are some great insights in this dialogue and the associated LinkedIn comments.

Despite the increasing availability of technology, it is the legacy of people, processes, and culture that is hindering progress.

Rob Ferrone had a shocking lightbulb moment 😲 in our discussion about the future of PLM, where the participants – see below – answered a question related to the importance of technology in our PLM domain – shocking also for me.

My thumb was up because modern technology matters! The question inspired Oleg Shilovitsky to write a whole blog post on this topic. If you’re truly shocked, read his post, where I agree with the content; the question is too simple to answer with a thumbs up/down.

As technology has become more accessible than before, you no longer need an IT department to establish a PLM infrastructure. And then indeed, the people and process side needs and deserves much more attention.

And now there is AI

If you haven’t read anything about AI recently, you must be living in an isolated location. Regardless of the business discussions you are following, it is all about the potential of AI.

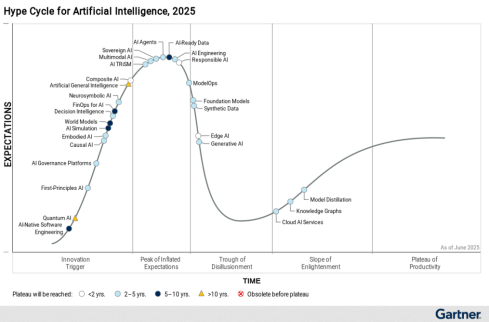

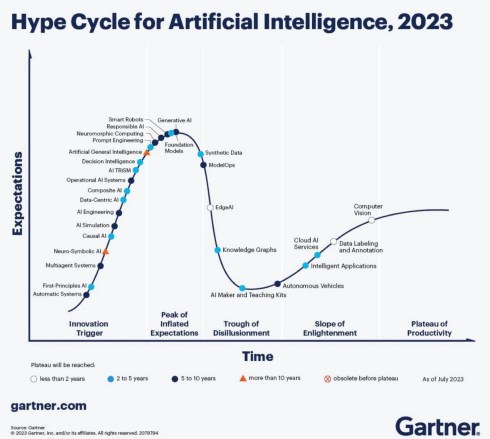

Although AI is not a new concept, the fact that various AI capabilities have now reached the end-user level is what drives the hype. Currently, I believe we are at the peak of the hype.

Last week, I participated in an interesting discussion in the series The Future of PLM, moderated by Michael Finocchiaro, this time talking with the analysts. Click on the link to see Michael’s excellent summary and access the recording of the event.

It was an interesting discussion for a little more than an hour, and the majority of our discussion was about the potential impact of AI on businesses. First, the impact AI can have on the traditional work of an analyst and next, the effects on the PLM domain.

I believe we agreed that AI at this moment is mainly providing higher user efficiency and performance, very much aligned with the interesting research I have been reading in the MIT NANDA report with the title The GenAI Divide: STATE OF AI IN BUSINESS 2025.

The report’s interesting findings included high adoption of tools but low transformation. Despite significant investment in Generative AI (GenAI), most organizations are not achieving meaningful business transformation.

- 95% of organizations report zero return on GenAI investments.

- Only 5% of integrated AI pilots generate millions in value.

- 80% of organizations have explored or piloted tools like ChatGPT, but these primarily enhance individual productivity.

- 60% of organizations evaluated enterprise-grade systems, but only 20% reached the pilot stage, and just 5% reached production.

- Key barriers include brittle workflows, a lack of contextual learning, and operational misalignment.
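The drop-off in the enterprise-grade figures above can be made explicit with a little arithmetic. The sketch below is only illustrative: it assumes that the 60%, 20% and 5% figures are all shares of the total surveyed population (not of the previous stage), and the names `funnel` and `stage_conversion` are hypothetical.

```python
# Shares of all surveyed organizations, as quoted in the list above.
# Assumption: each percentage is relative to all organizations,
# not to the organizations in the previous stage.
funnel = [("evaluated", 60), ("pilot", 20), ("production", 5)]


def stage_conversion(stages):
    """Return the percentage of each stage that reaches the next stage."""
    return {
        f"{name_a}->{name_b}": round(100 * share_b / share_a, 1)
        for (name_a, share_a), (name_b, share_b) in zip(stages, stages[1:])
    }


rates = stage_conversion(funnel)

# Share of evaluating organizations that end up in production.
overall = round(100 * funnel[-1][1] / funnel[0][1], 1)

print(rates)
print(overall)
```

Under that assumption, roughly one in three evaluations becomes a pilot, one in four pilots reaches production, and about one in twelve evaluations makes it all the way.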

Therefore, the question is – Is current AI the next bubble?

In 2014, I wrote about the lack of digital transformation in the PLM domain, and two images (below) from a report by The Economist could be used again. The report can be found here: The Onrushing Wave.

Click on the image to read the 2013 predictions.

I realized that my current job, as a recreational therapist and firefighter at the time, was not at risk, and that some of the predictions from 10 years ago had become a reality. Who is still bothered by telemarketers or retail salespersons?

However, many of the AI symptoms mentioned in the MIT NANDA report are similar to the hype surrounding digital transformation.

The only reservation I have now – will it take a decade before we understand and demonstrate the value of AI, or are we accelerating?

In this context, the upcoming PLM Roadmap/PDT Europe conference on 5 – 6 November will be interesting, as here we will discuss reality.

For those of you interested in more, there is a (free) workshop the day before the conference, where we will discuss with some thought leaders and experts from various companies what the future of PLM could look like – based on standards, AI tools and more. Click on the image below the conclusion.

Conclusion

The summertime was a nice moment to reflect, inspired by others in my network. What is clear is that there is a shift from technology towards people and change. The rapid expansion of AI tools, along with connected technologies, has created an overwhelming array of possibilities. Now it is time for business leadership to understand them and utilize them for significant business improvement, where the fear is that substantial change will always be slowed down by organizational inertia.

In the past three weeks, between some short holidays, I had a discussion with Rob Ferrone, who you might know as

“The original product Data PLuMber”.

Our discussion resulted in this concluding post and these two previous posts:

If you haven’t read them before, please take a moment to review them, to understand the flow of our dialogue and to get a full, holistic view of the WHY, WHAT and HOW of data quality and data governance.

A foundation required for any type of modern digital enterprise, with or without AI.

A first feedback round

Rob, I was curious whether there were any interesting comments from the readers that enhanced your understanding. For me, Benedict Smith’s point in the discussion thread was an interesting one.

From this reaction, I like to quote:

To suggest it’s merely a lack of discipline is to ignore the evidence. We have some of the most disciplined engineers in the world. The problem isn’t the people; it’s the architecture they are forced to inhabit.

My contention is that we have been trying to solve a reasoning problem with record-keeping tools. We need to stop just polishing the records and start architecting for the reasoning. The “what” will only ever be consistently correct when the “why” finally has a home. 😎

Here, I realized that the challenge is not only about moving From Coordinated to Coordinated and Connected, but also that our existing record-keeping mindset drives the old way of thinking about data. In the long term, this will be a dead end.

What did you notice?

Jos, indeed, Benedict’s point is great to have in mind for the future and in addition, I also liked the comment from Yousef Hooshmand, where he explains that a data-driven approach with a much higher data granularity automatically leads to a higher quality – I would quote Yousef:

The current landscapes are largely application-centric and not data-centric, so data is often treated as a second or even third-class citizen.

In contrast, a modern federated and semantic architecture is inherently data-centric. This shift naturally leads to better data quality with significantly less overhead. Just as important, data ownership becomes clearly defined and aligned with business responsibilities.

Take “weight” as a simple example: we often deal with “Target Weight,” “Calculated Weight,” and “Measured Weight.” In a federated, semantic setup, these attributes reside in the systems where their respective data owners (typically the business users) work daily, and are semantically linked in the background.
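To make the “weight” example a bit more tangible, here is a minimal sketch of three weight attributes that stay in their own systems and are linked through a shared part identifier. Everything in it is hypothetical: the system names, owner roles, part identifier and values are assumptions for illustration only, and a real federated, semantic setup would use proper ontologies and links rather than a Python dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeightAttribute:
    system: str      # hypothetical system where the value lives
    owner: str       # hypothetical business role owning the value
    kind: str        # "target", "calculated" or "measured"
    value_kg: float


# Hypothetical semantic links: each attribute stays in its own system
# and is only linked to the shared part concept in the background.
LINKS = {
    "part:bracket-001": [
        WeightAttribute("requirements-tool", "product manager", "target", 2.50),
        WeightAttribute("cad-pdm", "design engineer", "calculated", 2.62),
        WeightAttribute("mes", "quality engineer", "measured", 2.71),
    ]
}


def weights_for(part_id):
    """Collect the linked weight attributes of a part, keyed by kind."""
    return {attr.kind: attr for attr in LINKS.get(part_id, [])}


def deviation_from_target(part_id, kind):
    """Difference in kg between the given weight kind and the target weight."""
    weights = weights_for(part_id)
    return round(weights[kind].value_kg - weights["target"].value_kg, 3)


print(deviation_from_target("part:bracket-001", "measured"))
```

The point of the sketch is that each value has exactly one owner and one home, while a consumer can still compare them through the link without copying data between systems.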

I believe the interesting part of this discussion is that people are thinking about data-driven concepts as a foundation for the paradigm, shifting from systems of record/systems of engagement to systems of reasoning. Additionally, I see how Yousef applies a data-centric approach in his current enterprise, laying the foundation for systems of reasoning.

What’s next?

Rob, your recommendations do not include a transformation, but rather an evolution to become better and more efficient – the typical work of a Product PLuMber, I would say. How about redesigning the way we work?

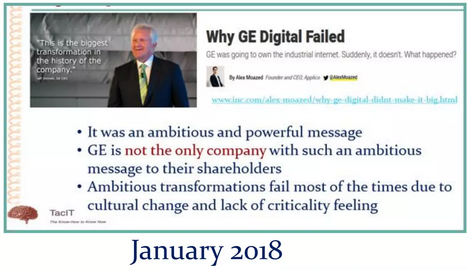

Bold visions and ideas are essential catalysts for transformations, but I’ve found that the execution of significant, strategic initiatives is often the failure mode.

One of my favourite quotes is:

“A complex system that works is invariably found to have evolved from a simple system that worked.”

John Gall, Systemantics (1975)

For example, I advocate this approach when establishing Digital Threads.

It’s easy to imagine a Digital Thread, but building one that’s sustainable and delivers measurable value is a far more formidable challenge.

Therefore, my take on Digital Thread as a Service is not about a plug-and-play Digital Thread, but the Service of creating valuable Digital Threads.

You achieve the solution by first making the Thread work and progressively ‘leaving a trail of construction’.

The caveat is that this can’t happen in isolation; it must be aligned with a data strategy, a set of principles, and a roadmap that are grounded in the organization’s strategic business imperatives.

Your answer relates a lot to Steef Klein’s comment when he discussed: “Industry 4.0: Define your Digital Thread ML-related roadmap – Carefully select your digital innovation steps.” You can read Steef’s full comment here: Your architectural Industry 4.0 future.

First, I liked the example value cases presented by Steef. They’re a reminder that all these technology-enabled strategies, whether PLM, Digital Thread, or otherwise, are just means to an end. That end is usually growth or financial performance (and hopefully, one day, people too).

It is a bit like Lego, however. You can’t build imaginative but robust solutions unless there is underlying compatibility and interoperability.

It would be a wobbly castle made from a mix of Playmobil, Duplo, Lego and wood blocks (you can tell I have been doing childcare this summer – click on the image to see the details).

As the lines blur between products, services, and even companies themselves, effective collaboration increasingly depends on a shared data language, one that can be understood not just by people, but by the microservices and machines driving automation across ecosystems.

Discussing the future?

For those interested in this discussion, I would like to point to the upcoming PLM Roadmap/PDT Europe 2025 conference on November 5th and 6th in Paris, where some of the thought leaders in these concepts will be presenting or attending. The detailed agenda is expected to be published after the summer holidays.

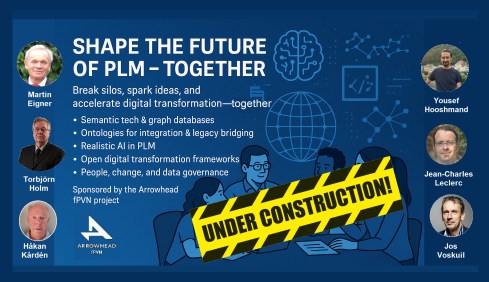

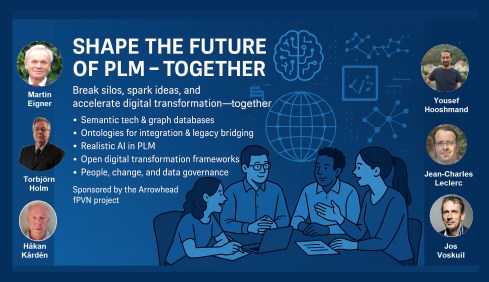

However, this conference also created the opportunity to have a pre-conference workshop, where Håkan Kårdén and I wanted to have an interactive discussion with some of these thought leaders and practitioners from the field.

Sponsored by the Arrowhead fPVN project, we were able to book a room at the conference venue in the afternoon of November 4th. You can find the announcement and more details of the workshop here in Håkan’s post: Shape the Future of PLM – Together.

Last year at the PLM Roadmap PDT Europe conference in Gothenburg, I saw a presentation of the Arrowhead fPVN project. You can read more here: The long week after the PLM Roadmap/PDT Europe 2024 conference.

And, as you can see from the acknowledged participants below, we want to discuss and understand more concepts and their applications – and for sure, the application of AI concepts will be part of the discussion.

Mark the date and this workshop in your agenda if you are able and willing to contribute. After the summer holidays, we will develop a more detailed agenda about the concepts to be discussed. Stay tuned to our LinkedIn feed at the end of August/beginning of September.

And the people?

Rob, we just came from a human-centric PLM conference in Jerez – the Share PLM 2025 summit – where are the humans in this data-driven world?

You can’t have a data-driven strategy in isolation. A business operating system comprises the coordinated interaction of people, processes, systems, and data, aligned to the lifecycle of products and services. Strategies should be defined at each layer, for instance, whether the system landscape is federated or monolithic, with each strategy reinforcing and aligning with the broader operating system vision.

In terms of the people layer, a data strategy is only as good as the people who shape, feed, and use it. Systems don’t generate clean data; people do. If users aren’t trained, motivated, or measured on quality, the strategy falls apart.

Data needs to be an integral, essential and valuable part of the product or service. Individuals become both consumers and producers of data, expected to input clean data, interpret dashboards, and act on insights. In a business where people collaborate across boundaries, ask questions, and share insight, data becomes a competitive asset.

There are risks, however: a system-driven approach can clash with local flexibility and agility.

People who previously operated on instinct or informal processes may now need to justify actions with data. And if the data is poor or the outputs feel misaligned, people will quickly disengage, reverting to offline workarounds or intuition.

Here it is critical that leaders truly believe in the value and set the tone. And because it is rare to have everyone in the business care about the data as passionately as they do about the prime function of their unique role (e.g. designer), there need to be product data professionals in the mix – people who care, notice what’s wrong, and know how to fix it across silos.

Conclusion

- Our discussions on data quality and governance revealed a crucial insight: this is not a technical journey, but a human one. While the industry is shifting from systems of record to systems of reasoning, many organizations are still trapped in record-keeping mindsets and fragmented architectures. Better tools alone won’t fix the issue—we need better ownership, strategy, and engagement.

- True data quality isn’t about being perfect; it’s about the right maturity, at the right time, for the right decisions. Governance, too, isn’t a checkbox—it’s a foundation for trust and continuity. The transition to a data-centric way of working is evolutionary, not revolutionary—requiring people who understand the business, care about the data, and can work across silos.

The takeaway? Start small, build value early, and align people, processes, and systems under a shared strategy. And if you’re serious about your company’s data, join the dialogue in Paris this November.

In my first discussion with Rob Ferrone, the original Product Data PLuMber, we discussed the necessary foundation for implementing a Digital Thread or leveraging AI capabilities beyond the hype. This is important because all these concepts require data quality and data governance as essential elements.

If you missed part 1, here is the link: Data Quality and Data Governance – A hype?

Rob, did you receive any feedback related to part 1? I spoke with a company that emphasized the importance of data quality; however, they were more interested in applying plasters, as they consider a broader approach too disruptive to their current business. Do you see similar situations?

Honestly, not much feedback. Data Governance isn’t as sexy or exciting as discussions on Designing, Engineering, Manufacturing, or PLM Technology. HOWEVER, as the saying goes, all roads lead to Rome, and all Digital Engineering discussions ultimately lead to data.

Cristina Jimenez Pavo’s comment illustrates that the question is in the air:

Everyone knows that it should be better; high-performing businesses have good data governance, but most people don’t know how to systematically and sustainably improve their data quality. It’s hard and not glamorous (for most), so people tend to focus on buying new systems, which they believe will magically resolve their underlying issues.

Data governance as a strategy

Thanks for the clarification. I imagine it is similar to Configuration Management, i.e., with different needs per industry. I have seen ISO 8000 in the aerospace industry, but it has not spread further to other businesses. What about data governance as a strategy, similar to CM?

That’s a great idea. Do you mind if I steal it?

If you ask any PLM or ERP vendor, they’ll claim to have a master product data governance template for every industry. While the core principles—ownership, control, quality, traceability, and change management, as in Configuration Management—are consistent, their application must vary based on the industry context, data types, and business priorities.

Designing effective data governance involves tailoring foundational elements, including data stewardship, standards, lineage, metadata, glossaries, and quality rules. These elements must reflect the realities of operations, striking a balance between trade-offs such as speed versus rigor or openness versus control.

The challenge is that both configuration management (CM) and data governance often suffer from a perception problem, being viewed as abstract or compliance-heavy. In truth, they must be practical, embedded in daily workflows, and treated as dynamic systems central to business operations, rather than static documents.

Think of it like the difference between stepping on a scale versus using a smartwatch that tracks your weight, heart rate, and activity, schedules workouts, suggests meals, and aligns with your goals.

Governance should function the same way:

responsive, integrated, and outcome-driven.

Who is responsible for data quality?

I have seen companies simplifying data quality as an enhancement step for everyone in the organization, like a “You have to be more accurate” message, similar perhaps to configuration management. Here we touch people and organizational change. How do you make improving data quality happen beyond the wish?

In most companies, managing product data is a responsibility shared among all employees. But increasingly complex systems and processes are not designed around people, making the work challenging, unpleasant, and often poorly executed.

I like to quote Larry English – The Father of Information Quality:

“Information producers will create information only to the quality level for which they are trained, measured and held accountable.”

A common reaction is to add data “police” or transactional administrators, who unintentionally create more noise or burden those generating the data.

The real solution lies in embedding capable, proactive individuals throughout the product lifecycle who care about data quality as much as others care about the product itself. This was the topic I discussed at the 2025 Share PLM summit in Jerez (Rob Ferrone – Bill O-Materials), which was also presented in part 1 of our discussion.

These data professionals collaborate closely with designers, engineers, procurement, manufacturing, supply chain, maintenance, and repair teams. They take ownership of data quality in systems, without relieving engineers of their responsibility for the accuracy of source data.

Some data, like component weight, is best owned by engineers, while others—such as BoM structure—may be better managed by system specialists. The emphasis should be on giving data professionals precise requirements and the authority to deliver.

They not only understand what good data looks like in their domain but also appreciate the needs of adjacent teams. This results in improved data quality across the business, not just within silos. They also work with IT and process teams to manage system changes and lead continuous improvement efforts.

The real challenge is finding leaders with the vision and drive to implement this approach.

The costs or benefits associated with good or poor data quality

At the peak of interest in being data-driven, large consulting firms published numerous studies and analyses, proving that data-driven companies achieve better results than their data-averse competitors. Have you seen situations where the business case for improving “product data” quality has led to noticeable business benefits, and if so, in what range? Double digit, single digit?

Improving data quality in isolation delivers limited value. Data quality is a means to an end. To realise real benefits, you must not only know how to improve it, but also how to utilise high-quality data in conjunction with other levers to drive improved business outcomes.

I built a company whose premise was that good-quality product data flowing efficiently throughout the business delivered dividends due to improved business performance. We grew because we delivered results that outweighed our fees.

Last year’s turnover was €35M, so even with a conservatively estimated average in-year ROI of 3:1, the company delivered over €100M of cost savings or additional revenue per year to clients, with the majority of these benefits being sustainable.

There is also the potential to unlock new value and business models through data-driven innovation.

For example, connecting disparate product data sources into a unified view and taking steps to sustainably improve data quality enables faster, more accurate, and easier collaboration between OEMs, fleet operators, spare parts providers, workshops, and product users, which leads to a new value proposition around minimizing painful operational downtime.

AI and Data Quality

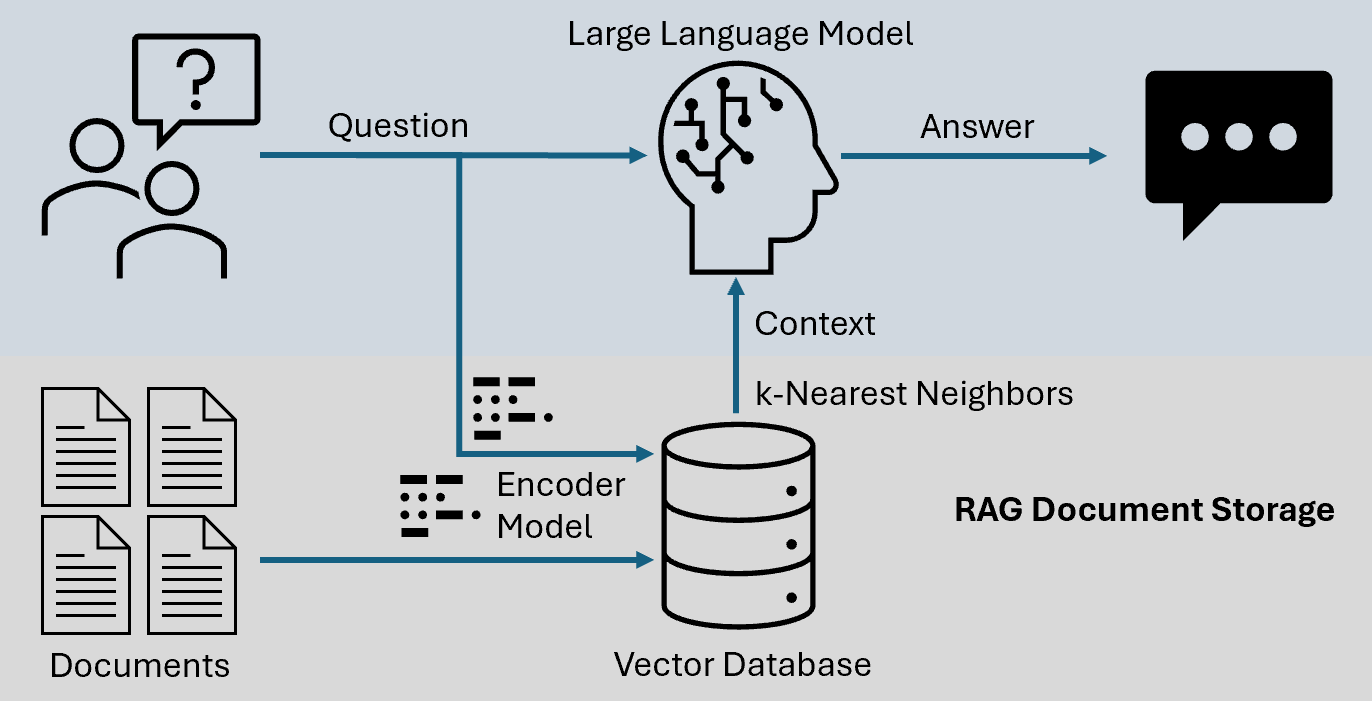

Currently, we are seeing numerous concepts emerge where AI, particularly AI agents, can be highly valuable for PLM. However, we also know that in legacy environments, the overall quality of data is poor. How do you envision AI supporting PLM processes, and where should you start? Or has it already started?

It’s like mining for rare elements—sifting through massive amounts of legacy data to find the diamonds. Is it worth the effort, especially when diamonds can now be manufactured? AI certainly makes the task faster and easier. Interestingly, Elon Musk recently announced plans to use AI to rewrite legacy data and create a new, high-quality knowledge base. This suggests a potential market for trusted, validated, and industry-specific legacy training data.

Will OEMs sell it as valuable IP, or will it be made open source like Tesla’s patents?

AI also offers enormous potential for data quality and governance. From live monitoring to proactive guidance, adopting this approach will become a much easier business strategy. One can imagine AI forming the core of a company’s Digital Thread—no longer requiring rigidly hardwired systems and data flows, but instead intelligently comparing team data and flagging misalignments.

That said, data alignment remains complex, as discrepancies can be valid depending on context.

A practical starting point?

Data Quality as a Service. My former company, Quick Release, is piloting an AI-enabled service focused on EBoM to MBoM alignment. It combines a data quality platform with expert knowledge, collecting metadata from PLM, ERP, MES, and other systems to map engineering data models.

Experts define quality rules (completeness, consistency, relationship integrity), and AI enables automated anomaly detection. Initially, humans triage issues, but over time, as trust in AI grows, more of the process can be automated. Eventually, no oversight may be needed; alerts could be sent directly to those empowered to act, whether human or AI.
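To make the three rule types concrete, here is a minimal sketch in Python. It is not how the Quick Release service works; the `Part` record, its field names, and the sample part numbers are all assumptions made up for illustration, and the checks are plain hand-written rules rather than AI-based anomaly detection.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Part:
    """Hypothetical, simplified BoM line; all field names are assumptions."""
    number: str
    description: str
    weight_kg: Optional[float]
    parent: Optional[str]  # part number of the parent assembly, None at top level


def check_completeness(parts):
    """Completeness: every part needs a description and a weight."""
    return [f"{p.number}: missing weight or description"
            for p in parts
            if not p.description or p.weight_kg is None]


def check_relationships(parts):
    """Relationship integrity: each parent reference must point to a known part."""
    known = {p.number for p in parts}
    return [f"{p.number}: unknown parent {p.parent}"
            for p in parts
            if p.parent is not None and p.parent not in known]


def check_consistency(ebom, mbom):
    """Consistency: a part in both BoMs should carry the same weight."""
    mbom_by_number = {p.number: p for p in mbom}
    issues = []
    for p in ebom:
        other = mbom_by_number.get(p.number)
        if other is None:
            issues.append(f"{p.number}: in EBoM but not in MBoM")
        elif p.weight_kg != other.weight_kg:
            issues.append(f"{p.number}: weight differs between EBoM and MBoM")
    return issues


def run_checks(ebom, mbom):
    """Collect all findings into one list for a human reviewer to triage."""
    return (check_completeness(ebom)
            + check_relationships(ebom)
            + check_consistency(ebom, mbom))


# Made-up sample data, purely illustrative.
ebom = [
    Part("A-100", "Pump assembly", 12.5, None),
    Part("P-200", "Housing", 7.0, "A-100"),
    Part("P-300", "", None, "A-999"),
]
mbom = [
    Part("A-100", "Pump assembly", 12.5, None),
    Part("P-200", "Housing", 7.4, "A-100"),
]

issues = run_checks(ebom, mbom)
for issue in issues:
    print(issue)
```

Note that a finding such as "in EBoM but not in MBoM" may be perfectly valid in context, which is why the sketch only produces a triage list instead of correcting anything automatically.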

Summary

We hope the discussions in parts 1 and 2 helped you understand where to begin. It doesn’t need to stay theoretical or feel unachievable.

- The first step is simple: recognise product data as an asset that powers performance, not just admin. Then treat it accordingly.

- You don’t need a 5-year roadmap or a board-approved strategy before you begin. Start by identifying the product data that supports your most critical workflows, the stuff that breaks things when it’s wrong or missing. Work out what “good enough” looks like for that data at each phase of the lifecycle. Then look around your business: who owns it, who touches it, and who cares when it fails?

- From there, establish the roles, rules, and routines that help this data improve over time, even if it’s manual and messy to begin with. Add tooling where it helps.

- Use quality KPIs that reflect the business, not the system. Focus your governance efforts where there’s friction, waste, or rework.

- And where are you already getting value? Lock it in. Scale what works.

Conclusion

It’s not about perfection or policies; it’s about momentum and value. Data quality is a lever. Data governance is how you pull it.

Just start pulling, and then get serious with your AI applications!

Are you attending the PLM Roadmap/PDT Europe 2025 conference on November 5th & 6th in Paris, La Defense?

There is an opportunity to discuss the future of PLM in a workshop before the event.

More information will be shared soon; please mark November 4th in the afternoon on your agenda.

In recent months, I’ve noticed a decline in momentum around sustainability discussions, both in my professional network and personal life. With current global crises—like the Middle East conflict and the erosion of democratic institutions—dominating our attention, long-term topics like sustainability seem to have taken a back seat.

But don’t stop reading yet—there is good news, though we’ll start with the bad.

The Convenient Truth

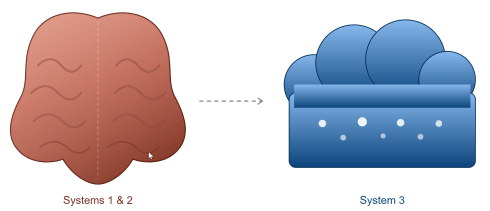

Human behavior is primarily emotional, a lesson that is also valuable in the PLM domain and was discussed during the Share PLM summit. As Share PLM notes in their change management approach, we rely on our “gator brain”, our limbic system; compare System 1 and System 2 in Thinking, Fast and Slow. Faced with uncomfortable truths, we often seek out comforting alternatives.

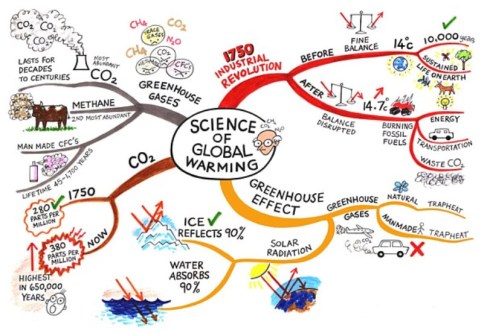

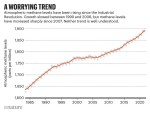

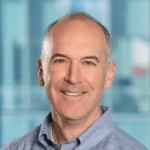

The film Don’t Look Up humorously captures this tendency. It mirrors real-life responses to climate change: “CO₂ levels were high before, so it’s nothing new.” Yet the data tells a different story. For 800,000 years, CO₂ ranged between 170–300 ppm. Today’s level is ~420 ppm—an unprecedented spike in just 150 years as illustrated below.

Frustratingly, some of this scientific data is no longer prominently published. The narrative has become inconvenient, particularly for the fossil fuel industry.

Persistent Myths

Then there is the pseudo-scientific claim that fossil fuels are infinite because the Earth’s core continually generates them. The Abiogenic Petroleum Origin theory is a fringe theory, sometimes revived from old Soviet science, and lacks credible evidence. See image below.

Oil remains a finite, biologically sourced resource. Yet such myths persist, often supported by overly complex jargon designed to impress rather than inform.

The Dissonance of Daily Life

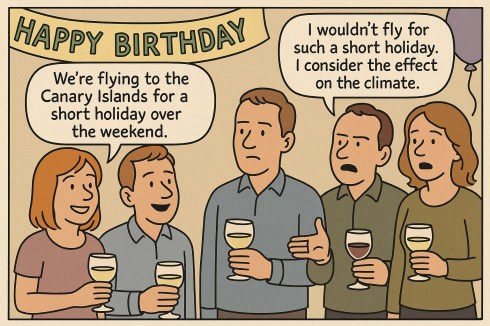

At a recent birthday party, a young couple casually mentioned flying to the Canary Islands for a weekend. When someone objected on climate grounds, they simply replied, “But the climate is so nice there!”

“Great climate on the Canary Islands”

This reflects a common divide among young people—some are deeply concerned about the climate, while many prioritize enjoying life now. And that’s understandable. The sustainability transition is hard because it challenges our comfort, habits, and current economic models.

The Cost of Transition