For those who have followed my blog over the years, it must be clear that I advocate for a digital enterprise, explaining the benefits of a data-driven approach where possible. In the past month an old topic with new insights came to my attention: intelligent Part Numbers, yes or no? Or do we mean Product Numbers?

What’s the difference between a Part and a Product?

In a PLM data model, you need to support both Parts and Products, and there is a significant difference between these two types of business objects. A Product is an object facing the outside world, which can be company (B2B) or consumer (B2C) related. Examples of B2C products are the Apple iPhone 8, the famous IKEA Billy, or my Garmin 810 and my Dell OptiPlex 3050 MFXX8. Examples of B2B products are the ABB synchronous motor AMZ 2500 and the FESTO standard cylinder DSBG. Products have a name, and if there are variants of the product, they also have an additional identifier.

A Part represents a physical object that can be purchased or manufactured. A combination of Parts appears in a BOM. If these Parts are not yet resolved for manufacturing, this BOM might be the Engineering BOM or a generic Manufacturing BOM. If the Parts are resolved for a specific manufacturing plant, we talk about the MBOM.

I have discussed the relation between Parts and Products in an earlier post, Products, BOMs and Parts, which was a follow-up on my LinkedIn post, the importance of a PLM data model. Although both posts were written more than two years ago, the content is still valid. In the upcoming year, I will address this topic of products further, including software and services moving to solutions / experiences.

Intelligent number for Parts?

As parts are company-internal business objects, I would like to state that if the company is serious about becoming a digital enterprise, parts should have meaningless unique identifiers. Unique identifiers are the link between discipline-specific or application-specific data sets. See, for example, the image below, where I imagined attribute sets for a part based on engineering and manufacturing data sets.

Apart from the unique ID, there might be a common set of attributes that will be exposed in every connected system. For example, a description, a classification and one or more status attributes might be needed.

Note 1: A revision number is not needed when you create a new unique ID for every new version of the part. This practice is already common in the electronics industry. In the old mechanical domain, we are used to having revisions, in particular for make parts, based on Form-Fit-Function rules.

Note 2: The description might be generated automatically based on a concatenation of some key attributes.
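To make the idea a bit more tangible, here is a minimal Python sketch of a part with a meaningless unique ID that links an engineering and a manufacturing data set, with the description generated from key attributes as in Note 2. All names and values (`Part`, `engineering_data`, `manufacturing_data`, the bolt attributes, the supplier) are invented for illustration and do not come from any particular PLM or ERP system.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Part:
    # Meaningless unique identifier: it encodes no classification or revision.
    part_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    # Common attributes that every connected system may expose.
    classification: str = ""
    status: str = "In Work"
    key_attributes: dict = field(default_factory=dict)

    @property
    def description(self) -> str:
        # Note 2: the description is a concatenation of some key attributes.
        return " ".join(str(value) for value in self.key_attributes.values())


# Discipline-specific data sets (hypothetical), linked only through the part ID.
engineering_data = {}
manufacturing_data = {}

bolt = Part(
    classification="Fastener",
    key_attributes={"type": "Hex bolt", "thread": "M8", "length": "40 mm"},
)
engineering_data[bolt.part_id] = {"material": "Steel 8.8", "mass_kg": 0.02}
manufacturing_data[bolt.part_id] = {"supplier": "ACME", "lead_time_days": 14}


def part_view(part: Part) -> dict:
    """Combine the common attributes with whatever each discipline stores."""
    return {
        "id": part.part_id,
        "description": part.description,
        "classification": part.classification,
        "status": part.status,
        "engineering": engineering_data.get(part.part_id, {}),
        "manufacturing": manufacturing_data.get(part.part_id, {}),
    }


print(part_view(bolt))
```

The point of the sketch is that nothing depends on how the ID looks. Any format works as long as it is unique for the enterprise, which is also why old meaningful numbers can stay in place.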

Of course, if you are aiming for a full digital enterprise, and I think you should, do not waste time fixing the past. In some situations, I learned that an external consultant had recommended that the company rename its old meaningful part numbers to the new non-intelligent part numbering scheme. There are two mistakes here. First, renumbering is too costly, as all referenced information would have to be updated. Second, as long as the old part numbers are unique IDs for the enterprise, there is no need to change them. The connectivity of information should not depend on how the unique ID is formatted.

Read more if you want here: The impact of Non-Intelligent Part Numbers

Intelligent numbers for Products?

If the world were 100 % digital and connected, we could work with non-intelligent product numbers. However, this is a stage beyond my current imagination. For products we will still need a number that customers can refer to when they communicate with their supplier / vendor or service provider. For many high-tech products the product name and type might be enough. When I talk about the Samsung S5 G900F 16G, the vendor knows which kind of configuration I am referring to. Still, it is important to realize that behind these specifications, different MBOMs might exist due to different manufacturing locations or times.

However, when I refer to the IKEA Billy, there are too many options to describe the right one easily and consistently in words. Therefore you will find a part number on the website, e.g. 002.638.50. This unique ID connects directly to a single sellable configuration. Behind this unique ID, different MBOMs might also exist, for the same reason as for the Samsung telephone. The number is a connection to the sales configuration and should not be too complicated, as people need to be able to read and recognize it when they go to a warehouse.
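As a rough sketch of this relation, the Python snippet below maps one readable product number to a single sales configuration, while several MBOMs sit behind it, one per manufacturing plant. Only the number 002.638.50 comes from the text above. The plant names, option values, part IDs and the helper `resolve_mbom` are placeholders I made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SalesConfiguration:
    product_number: str  # readable number used by customers
    name: str
    options: tuple       # placeholder option values, not real product data


@dataclass(frozen=True)
class Mbom:
    plant: str           # hypothetical plant code
    part_ids: tuple      # meaningless part IDs resolved for that plant


# One product number points to exactly one sellable configuration.
catalog = {
    "002.638.50": SalesConfiguration(
        "002.638.50", "Billy", ("colour-x", "size-y")
    ),
}

# Behind the same product number, each plant may have its own MBOM.
mboms_by_product = {
    "002.638.50": [
        Mbom("PLANT-A", ("a3f1", "9c2e")),
        Mbom("PLANT-B", ("a3f1", "77b0")),
    ],
}


def resolve_mbom(product_number: str, plant: str) -> Mbom:
    """Return the MBOM used by the given plant for this product number."""
    for mbom in mboms_by_product.get(product_number, []):
        if mbom.plant == plant:
            return mbom
    raise LookupError(f"No MBOM for {product_number} at {plant}")


print(catalog["002.638.50"].name)
print(resolve_mbom("002.638.50", "PLANT-B").part_ids)
```

The customer only ever sees the product number. Which MBOM was used to build the item is an internal matter, resolved through the meaningless part IDs.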

Conclusion

There is a big difference between Product and Part numbers because of the intended scope of these business objects. Parts will soon exist in connected, digital enterprises and therefore do not need any meaningful number anymore. Products need to be identified by consumers anywhere around the world, not yet able or willing to have a digital connection with their vendors. Therefore smaller and understandable numbers will remain needed to support exact communication between consumer and vendor.

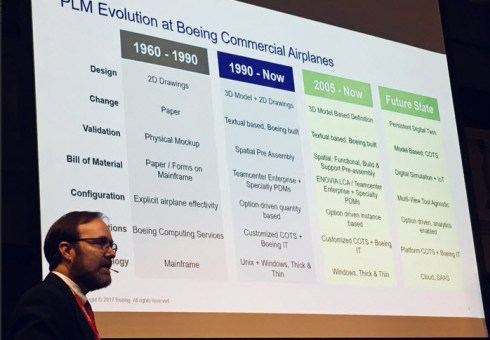

Approximately four years ago, new concepts related to digitalization for PLM became more evident. How could digital continuity connect the various disciplines around the product lifecycle and therefore provide end-to-end visibility and traceability? When speaking of end-to-end visibility, companies mostly talked about the way they designed and delivered products; visibility of what was happening mostly stopped after manufacturing. The diagram to the left, showing a typical Build To Order organization, illustrates the classical way of thinking. There is an R&D team working on Innovation, typically a few engineers, while most of the engineers work in Sales Engineering and Manufacturing Preparation to define and deliver a customer-specific order. In theory, once the order is delivered, none of the engineers will be further involved, and it is up to the Service Department to react to what is happening in the field.

Modern business is about having customer or market involvement in the whole lifecycle of the product. And as products become more and more a combination of hardware and software, it is the software that allows the manufacturer to provide incremental innovation to their products. However, to innovate in a manner that matches or even exceeds customer demands, information from the outside world needs to travel as fast as possible through an organization. If this is done in isolated systems and documents, the journey will be cumbersome and too slow to allow a company to act fast enough. Here digitization comes in, making information directly available as data elements instead of documents with their own file formats and systems to author them. The ultimate dream is a digital enterprise where data “flows”, advocated already by some manufacturing companies for several years.

This post is a rewrite of an article I wrote on LinkedIn two years ago, modified to reflect my current understanding. If you follow my blog, in particular the posts about the business change needed to transform a company into a data-driven digital enterprise, you will know that one of the characteristics of digital is the real-time availability of information. This has an impact on everyone working in such an organization. My conversations are in the context of PLM (Product Lifecycle Management); however, I assume my observations are valid for other domains too.

And here we started the discussion. “Why do you want to escalate to a manager?” Escalation will only give more disruption and stress for the persons involved. Isn’t the person qualified enough to decide what is important?

The ultimate conclusion of our discussion was: implementing a modern PLM environment brings, first of all, almost 100 % visibility, the single version of the truth. This new capability breaks down silos; a department cannot hide activities behind its departmental wall anymore. Digital PLM allows horizontal multidisciplinary collaboration without the need to go through the management hierarchy.

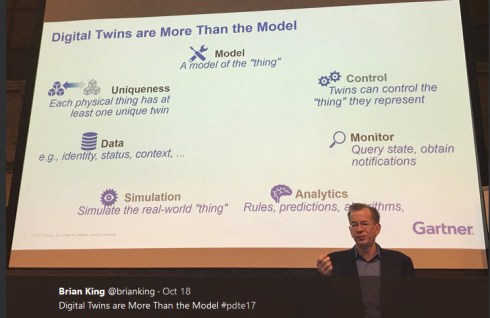

Last week I posted my first review of the PDT Europe conference. You can read the details here:

Now back to the conference. Day 2 started with a remote session from Simon Floyd. Simon is Microsoft’s Managing Director for Manufacturing Industry Architecture Enterprise Services and a frequent speaker at PDT. Simon shared with us Microsoft’s viewpoint of a Digital Twin, the strategy to implement a Digital Twin, the maturity status of several of their reference customers and the areas these companies are focusing on. From these customers it was clear that most companies focused on retrieving data related to maintenance, providing analytics and historical data. Futuristic scenarios, like using the digital twin for augmented reality or design validation, appeared to get less attention. As I discussed in the earlier post, this matches my observations: creating a digital thread between products in operation is considered a quick win, whereas establishing an end-to-end relationship between products in operation and their design requires many steps to fix. Read my post:

Sustainability and the circular economy have been a theme at PDT for some years now too. In his keynote speech, Torbjörn Holm from Eurostep took us through the global megatrends (Hay group 2030) and the technology trends (Gartner 2018) and mapped out that technology would be a good enabler to discuss several of the global trends.

Rebecca Ihrfors, CIO of the Swedish Defence Materiel Administration (FMV), shared her plans for transforming the IT landscape to harmonize the existing environments and to become a broker between industry and the armed forces (FM). As many of the assets now come with their own data sets and PDM/PLM environments, keeping up all these proprietary environments is too expensive and too fragmented. FMV wants to harmonize the data they retrieve from industry and the way they offer it to the armed forces in a secure way. There is a need for standards and interoperability.

Principle 1 The bimodal strategy as the image shows.

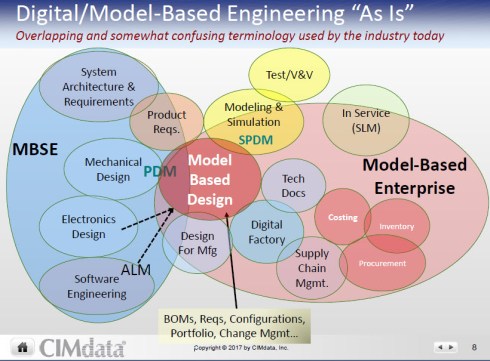

Peter Bilello from CIMdata kicked off by bringing some structure to the various Model-Based areas and the Digital Thread. Peter started by mentioning that technology is the least important issue, as organizational culture, changing processes and adapting people's skills are more critical factors for a successful adoption of modern PLM. This would repeatedly be confirmed by other speakers during the conference.

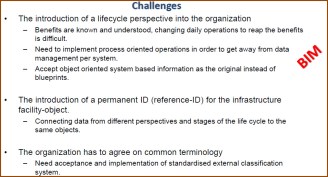

In the first presentation, Väino Tarandi, professor in IT in Construction at KTH Sweden, presented his findings related to BIM and GIS in the context of the lifecycle, a test bed where PLCS meets IFC. This was interesting to me, as I have been involved in BIM Level 3 discussions in the UK, which was already an operational challenge for stakeholders in the construction industry and is now extended with the concept of the lifecycle. So far these projects are at the academic level, and I am still waiting for companies to push and discover the full benefits of an integrated approach.

Outotec’s presentation on managing the installed base and unlocking service opportunities, given by Sami Grönstrand and Helena Guiterrez, was entertaining, easy to digest and well-paced. Without being academic, they explained the challenges of a company with existing systems in place moving towards the concepts of a digital twin, and the related data management and quality issues. Their practical example illustrated that a clear target, such as better understanding a customer-specific environment in order to sell better services, can be achieved by rational thinking and doing, a typical Finnish approach. All of this included the “bi-modal approach” and people change management.

Martin Eigner took us in high-speed mode through his vision and experience working in a bi-modular approach with Aras to support legacy environments and a modern federated layer to support the complexity of a digital enterprise where the system architecture is leading. I will share more details on these concepts in my next post, as during day 2 of PDT Europe both Marc Halpern and I spoke on this topic, and I will combine it all in a more extended story.

As I am preparing my presentation for the upcoming

Classical ECO/ECR processes can become highly automated when the data is reliable and the company’s strategy is captured in rules. In a data-driven environment, there will be much more granular data that requires some kind of approval status. We cannot do this manually anymore, as it would kill the company: too expensive and too slow. Hence the need for algorithms.
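A small Python sketch may illustrate what "strategy captured in rules" could look like for a change request. It is only an assumption of how such a check might be shaped: the `ChangeRequest` fields, the cost threshold of 1000 and the three rules are hypothetical and not taken from any real ECO/ECR implementation.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    part_id: str
    cost_impact: float                # estimated cost of the change
    affects_form_fit_function: bool   # True if Form-Fit-Function changes
    data_complete: bool               # True if all required data is present


# Company strategy captured as named rules (all hypothetical examples).
RULES = [
    ("data is complete", lambda cr: cr.data_complete),
    ("cost impact below threshold", lambda cr: cr.cost_impact <= 1000.0),
    ("form, fit and function unchanged",
     lambda cr: not cr.affects_form_fit_function),
]


def evaluate(cr: ChangeRequest):
    """Auto-approve when every rule passes, otherwise list the failed rules."""
    failed = [name for name, rule in RULES if not rule(cr)]
    if not failed:
        return ("auto-approved", [])
    return ("needs review", failed)


print(evaluate(ChangeRequest("a3f1", 250.0, False, True)))
print(evaluate(ChangeRequest("9c2e", 5000.0, True, True)))
```

Only the requests that fail a rule end up with a person, together with the reason why. That keeps the experts involved where they are really needed, while the bulk of granular approvals runs without manual steps.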

If you were to start an MDM initiative in your company today and look for providers of MDM solutions, you would discover that their value is based on technology capabilities: bringing data together from different enterprise systems in the way the customer thinks it should be organized. It is more a toolkit approach than an industry approach. And in cases where there is an industry approach, it is rarely related to manufacturing companies. Remember my observation from 2015: manufacturing companies do not have MDM activities related to engineering/manufacturing because it is too complicated, too diverse, with too many documents instead of data.

Once data is able to flow, there will be another discussion: Who is responsible for which attributes. Bjørn Fidjeland from

At this moment there are two approaches to implementing PLM. The most common practice is item-centric, while model-centric will potentially be the best practice for the future. Perhaps your company is still using a method from the previous century called drawing-centric. In that case, you should read this post with even more attention, as there are opportunities to improve.

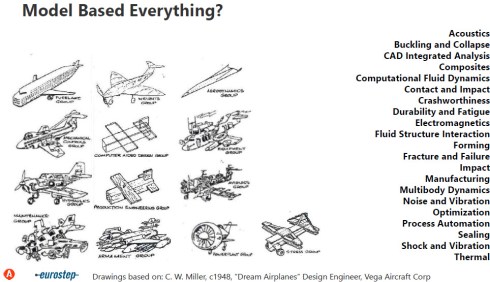

However, in the beginning, the model can still be a functional or logical model. In particular, for complex products, model-based systems engineering might be the base for defining the solution. Actually, when we talk about products that interact with the outside world through software, we tend to call them systems. This explains why model-based systems engineering is more and more becoming a recommended approach to make sure the product works as expected, fulfills all the needs for the product and creates a foundation for incremental innovation without starting from scratch.

Once we are able to control this collection of managed data, concepts such as the digital twin or even the virtual twin can be exploited, linking data to a single instance in the field.

During my summer holidays, I read some fantastic books to relax the brain.

Further down the book, Tom becomes a little grumpy and starts to complain about the Internet, Google and even about Wikipedia. These information resources so often provide fake or skin-deep information, which is not scientifically proven by experts. It reminded me of a conference that I attended in the early nineties of the previous century. An engineering society had organized this conference to discuss the issue that finite element analysis was becoming more and more available to laymen. The affordable simulation software would be used by untrained engineers, and they would make the wrong decisions. Constructions would fall down, machines would fail. Looking back now, we can see that the liberation of finite element analysis has led to more usage of simulation technology, providing better products, and when really needed, experts are still involved.

However, what is a PLM expert? Recently I wrote a post sharing the observation that a lot of PLM product-focused or IT-focused discussions miss the point of education (see

July and August are the months in which privileged people go on holiday. Depending on where you live and work, it can be a long weekend or a long month. I plan to give my PLM-twisted brain a break for two weeks. I am not sure if it will happen, as Greek beaches have always inspired philosophers. What do you think about “PLM on the beach”?

I believe we are all getting immune to the term “Digital Transformation” (11.400.000 hits on Google today). I have talked about digital transformation in the context of PLM many times too. Change is happening. The classic ways of working were based on documents, a container of information, captured on paper (very classical) or captured in a file (still current).

What we have learned from innovative companies is that a data-driven approach, where more granular information is stored uniquely as data objects instead of document containers, brings huge benefits. Information objects can be shared where relevant along the product lifecycle, and without the overhead of people creating and converting documents, the stakeholders become empowered, as they can retrieve all information objects they desire (if allowed). We call this the digital thread.

Companies are now learning how to manage the dependencies between these two domains, as consistency of requirements and features of the products is required. Due to the fast pace of software changes, it is almost impossible to connect everything to the PLM product definition. PLM Vendors are working on concepts to connect PLM and ALM through different approaches. Other companies might believe that their software process is crucial and that the mechanical product becomes a commodity. Could you build a product innovation platform starting from the software platform, as some of the old industry giants believe?

Does history repeat itself even in the PLM domain? Over the last week, I have read various blog posts related to PLM and Small and Medium Enterprises (SME). A good summary of these thoughts can be found in Oleg Shilovitsky’s post:

In 2006, Oleg and I worked at SmarTeam, where we defined and built a “Core PLM” solution targeting mid-market companies. This core PLM solution, called SmarTeam Engineering Express (SNE), contained both pre-configured CAD integrations and BOM practices (EBOM-MBOM). Combined with documented best practices, pre-configured methodology, and workflows, this environment could be implemented relatively quickly (if the implementer did not want to earn extra money on services).

Interestingly enough, SmarTeam’s enterprise customers requested the same capabilities. It makes you realize there is no unique difference in PLM for mid-market companies and large enterprises. I believe the major difference is due to education, the company’s culture and where the PLM decision is made. Let’s explore

These new hires are normally not educated on standard PLM concepts like ECR, ECO, Configuration Management, and PLM-ERP best practices (EBOM/MBOM). In an engineering study, these practices and processes are not considered critical, as they are about collaboration and not about skills. The PLM capabilities engineering students learn are the basic functionalities they need to master when working with their (CAD) tools.

Of course, you can educate yourself on PLM. CIMdata is well known for its training program; John Stark and others can educate you on PLM. Have a look at this interesting new startup

Large enterprises usually have staff with a strategic task to work on standardized and optimized processes for the future. These people will discover and have time to be educated on the values of PLM, supported by strategic advisors that know the value of PLM. In these companies, the decisions made for PLM are top-down decisions. Usability, functions/features and costs are usually crucial for bottom-u
