After a summer holiday in the south of Greece, it is time to resume my activities. The south of Crete is largely an analogue environment, far from any digital hype.

Tempted by LinkedIn posts, I noticed the summer was full of memories, with Martin Eigner sharing 40 years of PLM experience, Oleg Shilovitsky sharing 30 years of PDM Evolution, and Michael Finochario publishing posts on PLM vendors, CAD kernels, and more.

So where do I stand? While digesting all these historical experiences, I reflected on what we can learn from them and what we didn’t learn from them.

It started with technology.

From 1990 to 1999, I worked with mid-market companies, where data management was the most significant challenge. The introduction of MS Windows made data management more user-friendly, evolving from drawing management systems with version and status management capabilities.

Who remembers Automanager Workflow from Cyco, before SmarTeam came on the market?

For that reason, in the early days, PDM was an IT job. As the PDM system primarily dealt with engineering data, it was relatively easy to implement as an organizational change process. We transitioned from analogue to electronic in the department.

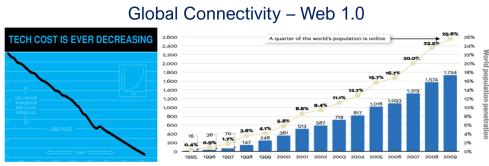

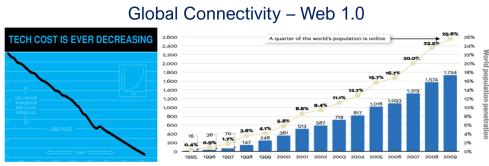

Connecting with other systems, particularly ERP, was a serious IT job and a financial challenge. The rapid decline in the cost of IT components, combined with the rapid growth of global connectivity, has created new opportunities for collaboration.

As part of the Dassault/IBM/SmarTeam organization, I explained and taught these new capabilities worldwide.

In 2008, my VirtualDutchman blog and coaching journey began, evolving from explanations of technology to modern methodologies, which led to organizational change and expectation management – skills not traditionally associated with IT.

Then came digital transformation

With growing connectivity, smartphones and Web 2.0 technology have led to more PLM-like discussions. PLM vendors expanded their scope and developed capabilities beyond mechanical engineering.

The expansion of capabilities was also the moment when the confusion about the term PLM reached its peak: a PLM strategy or a PLM system?

At the time, they were largely considered the same in discussions and advertisements.

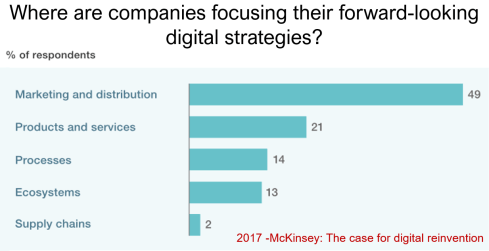

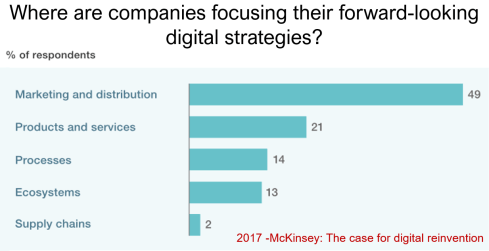

Meanwhile, digital transformation was occurring at the marketing and sales levels – companies invested in direct communication with their customers through the web.

Meanwhile, the internal ways of working for R&D, engineering, and manufacturing did not change significantly. Still, they were following linear processes, and despite the existence of 3D CAD, the 2D drawing remained the primary carrier of legal information between engineering, manufacturing, and suppliers.

Note: the option where the most benefits could be achieved – connected supply chains – had the lowest focus in 2017 – something that would change with COVID-19.

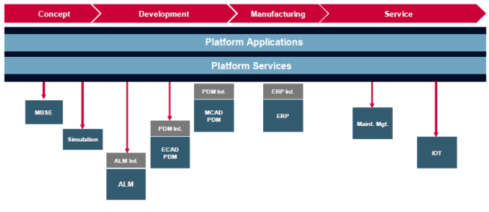

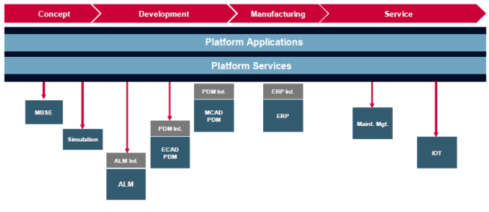

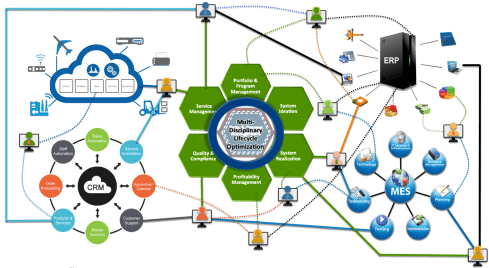

Fundamental digital transformation in the PLM domain occurred gradually. Aras came with its overlay approach (the platform), connecting various disciplines and enterprise systems. In contrast, Dassault Systèmes introduced its 3DEXPERIENCE platform, utilizing its own software brands as platform components.

The Aras overlay approach

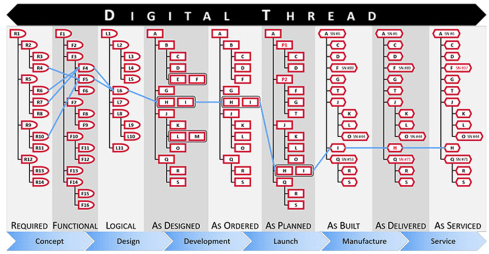

Most PLM vendors rapidly countered Aras’ overlay approach with their low-code offerings based on Mendix, ThingWorx or Netvibes, to enable data flows beyond the traditional PDM scope. The Coordinated Digital Thread was born.

The good news is that PLM has now clearly become a strategy based on a federated system infrastructure. The single PLM system no longer exists, although many of us still use the term ‘PLM system’ to refer to the main component of a PLM infrastructure – the System of Record.

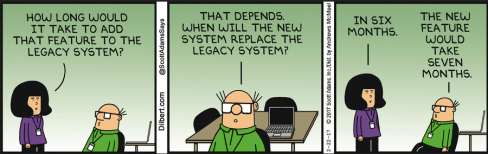

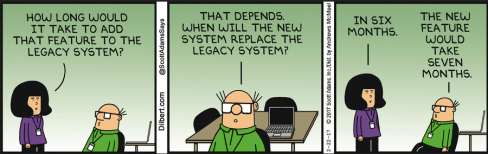

Moving to a federated PLM infrastructure is already a challenge for companies, not because of the available technology, but first of all because of the legacy data and, closely related to that, legacy processes and people skills.

Legacy is creating the inertia, not technology!

Next came the cloud – SaaS

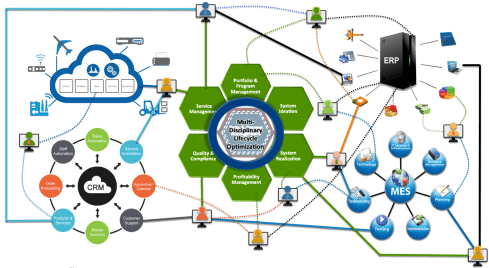

With the availability of cloud solutions that support real-time interactions between stakeholders, either within an enterprise or in a value chain, a new paradigm has emerged: the connected enterprise.

A connected enterprise no longer needs interfaces to transfer data from one system to another.

Instead, with apps and dashboards, combined data from different online sources is presented in a single, user-friendly working environment – a combination of the Systems of Record with the new environments, the Systems of Engagement.

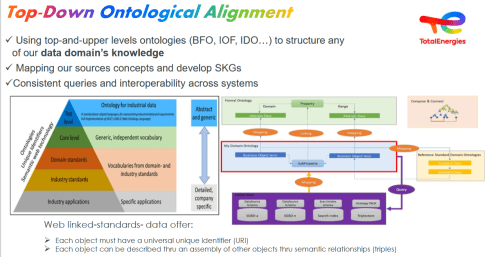

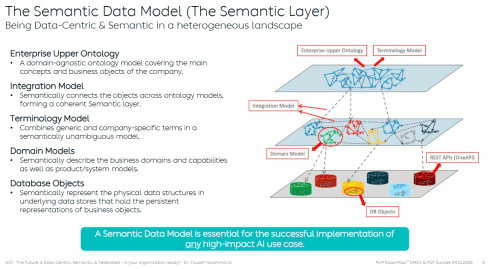

The technology used to create dashboards and apps is based on modern data-driven technologies and principles (ontologies, graph databases, and the semantic web). The Connected Digital Thread was born.
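To make the graph idea a bit more tangible, here is a minimal sketch in Python of how connected datasets could be traversed once they are stored as typed links instead of being copied between systems. All identifiers (REQ-12, PART-100, the relation names) are hypothetical and only illustrate the principle; a real implementation would rely on a graph database and an agreed ontology rather than an in-memory list.

```python
from collections import deque

# Hypothetical typed links between datasets living in different systems.
# Each entry reads: (source item, relation, target item).
LINKS = [
    ("REQ-12", "satisfied_by", "PART-100"),
    ("PART-100", "manufactured_by", "PROC-7"),
    ("PART-100", "supplied_by", "SUP-3"),
    ("PROC-7", "executed_at", "PLANT-A"),
]


def build_graph(links):
    """Turn the list of links into an adjacency map keyed by source item."""
    graph = {}
    for source, relation, target in links:
        graph.setdefault(source, []).append((relation, target))
    return graph


def trace(graph, start):
    """Breadth-first walk: every item reachable from the start item."""
    seen = [start]
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for _relation, target in graph.get(node, []):
            if target not in seen:
                seen.append(target)
                queue.append(target)
    return seen


thread = trace(build_graph(LINKS), "REQ-12")
print(thread)
```

The point of the sketch is that the question "what is connected to this requirement?" is answered by following links at query time, not by transferring data through interfaces.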

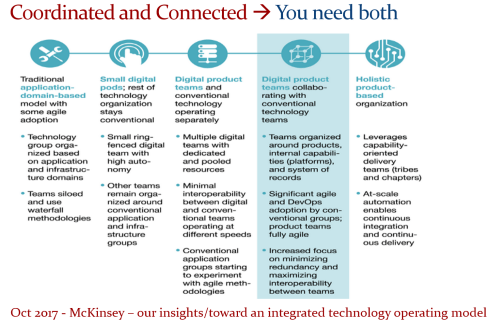

However, legacy systems play an essential role again, as some systems of engagement can be implemented in a complementary manner to the systems of record, allowing companies to work within an integrated technology model.

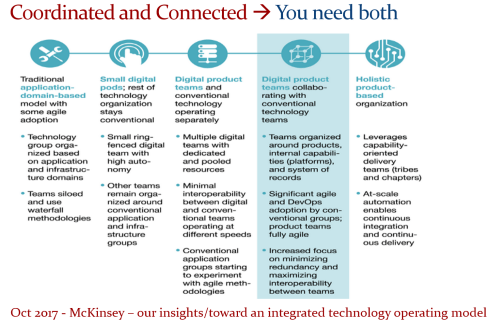

People will work in a particular mode, either coordinated or connected, but organizations can operate in both modes simultaneously. A story I have been sharing a lot – it is not about migrations but about an evolutionary approach towards an integrated technology model.

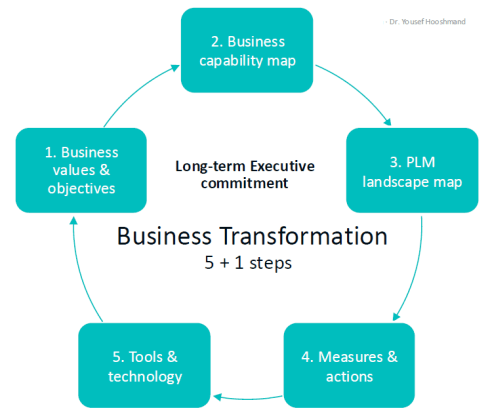

At this point, it becomes essential that business objectives drive the implementation of a PLM infrastructure. Of course, you hear me say we should start from the business; however, the big difference now is that a company should coordinate the technologies, systems, and tools it acquires to avoid isolated islands of information.

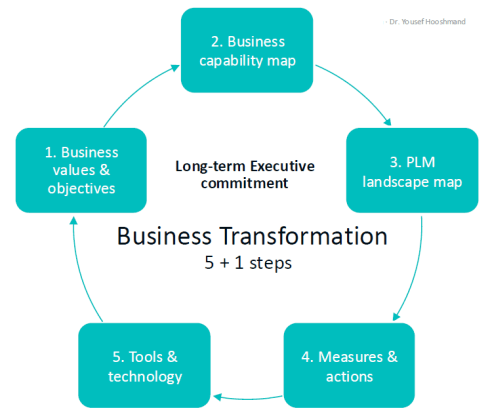

Follow Yousef Hooshmand‘s 5 + 1 business transformation steps.

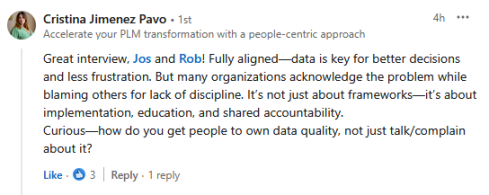

An open SaaS infrastructure enables a company to let data flow almost in real-time. There is a lot of discussion related to data quality and governance, and if you have missed it, please read these three articles I created together with Rob Feronne, the product Digital PLuMber:

There are some great insights in this dialogue and the associated LinkedIn comments.

Despite the increasing availability of technology, it is the legacy of people, processes, and culture that is hindering progress.

Rob Feronne had a shocking lightbulb moment 😲 in our discussion about the future of PLM, where the participants – see below – answered a question related to the importance of technology in our PLM domain – shocking also for me.

My thumb was up because modern technology matters! The question inspired Oleg Shilovitsky to write a whole blog post on this topic. If you’re truly shocked, read his post, where I agree with the content; the question is too simple to answer with a thumbs up/down.

As technology has become more accessible than before, you no longer need an IT department to establish a PLM infrastructure. And then indeed, the people and process side needs and deserves much more attention.

And now there is AI

If you haven’t read anything about AI recently, you must be living in an isolated location. Regardless of the business discussions you are following, it is all about the potential of AI.

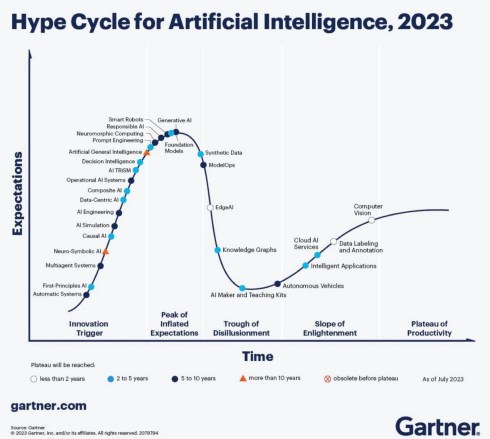

Although AI is not a new concept, the fact that various AI capabilities have now reached the end-user level is what drives the hype. Currently, I believe we are at the peak of the hype.

Last week, I participated in an interesting discussion in the series: The Future of PLM moderated by Michael Finochario, this time talking with the analysts. Click on the link to see Michael’s excellent summary and access to the recording of the event.

It was an interesting discussion for a little more than an hour, and the majority of our discussion was about the potential impact of AI on businesses. First, the impact AI can have on the traditional work of an analyst and next, the effects on the PLM domain.

I believe we agreed that AI at this moment is mainly providing higher user efficiency and performance, very much aligned with the interesting research I have been reading in the MIT NANDA report with the title The GenAI Divide: STATE OF AI IN BUSINESS 2025.

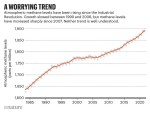

The report’s interesting findings included high adoption of tools but low transformation. Despite significant investment in Generative AI (GenAI), most organizations are not achieving meaningful business transformation.

- 95% of organizations report zero return on GenAI investments.

- Only 5% of integrated AI pilots generate millions in value.

- 80% of organizations have explored or piloted tools like ChatGPT, but these primarily enhance individual productivity.

- 60% of organizations evaluated enterprise-grade systems, but only 20% reached the pilot stage, and just 5% reached production.

- Key barriers include brittle workflows, a lack of contextual learning, and operational misalignment.
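The drop-off for enterprise-grade systems becomes clearer when the quoted percentages are turned into stage-to-stage conversion rates. The small Python sketch below does that arithmetic; it assumes the three percentages refer to the same population of organizations, which is my reading of the summary and not something verified against the report itself.

```python
# Percentages of organizations, taken from the bullet list above.
# Assumption: all three figures refer to the same survey population.
funnel = {"evaluated": 60, "piloted": 20, "in_production": 5}


def conversion(from_stage, to_stage):
    """Share of organizations at from_stage that also reached to_stage."""
    return funnel[to_stage] / funnel[from_stage]


eval_to_pilot = conversion("evaluated", "piloted")
pilot_to_prod = conversion("piloted", "in_production")
overall = conversion("evaluated", "in_production")

print(f"evaluation -> pilot:      {eval_to_pilot:.0%}")
print(f"pilot -> production:      {pilot_to_prod:.0%}")
print(f"evaluation -> production: {overall:.1%}")
```

Under that assumption, roughly one in three evaluations becomes a pilot, one in four pilots reaches production, and about one in twelve evaluations ends up in production.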

Therefore, the question is – Is current AI the next bubble?

In 2014, I wrote about the lack of digital transformation in the PLM domain, and two images (below) from a report by The Economist could be used again. The report can be found here: The Onrushing Wave.

Click on the image to read the 2013 predictions.

I realized that my job at the time, as a recreational therapist and firefighter, was not at risk, and that some of the predictions from 10 years ago had become a reality. Who is still bothered by telemarketers or retail salespersons?

However, many of the AI symptoms mentioned in the MIT NANDA report are similar to the hype surrounding digital transformation.

The only reservation I have now – will it take a decade before we understand and demonstrate the value of AI, or are we accelerating?

In this context, the upcoming PLM Roadmap/PDT Europe conference on 5 – 6 November will be interesting, as here we will discuss reality.

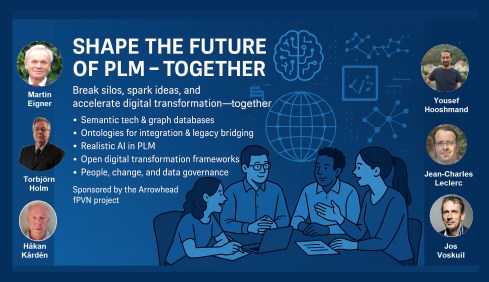

For those of you interested in more, there is a (free) workshop the day before the conference, where we will discuss with some thought leaders and experts from various companies what the future of PLM could look like – based on standards, AI tools and more. Click on the image below the conclusion.

Conclusion

The summertime was a nice moment to reflect, inspired by others in my network. What is clear is that there is a shift from technology towards people and change. The rapid expansion of AI tools, along with connected technologies, has created an overwhelming array of possibilities. Now it is time for business leadership to understand them and utilize them for significant business improvement, where the fear is that substantial change will always be slowed down by organizational inertia.

On November 11th, we celebrated our 5th anniversary of the PLM Green Global Alliance (PGGA) with a webinar where ♻️ Jos Voskuil (me) interviewed the five other PGGA core team members about developments and experiences in their focus domain, potentially allowing for a broader discussion.

Probably, November 11th was not the best day for broad attendance, and therefore, we hope that the recording of this webinar will allow you to connect and comment on this post.

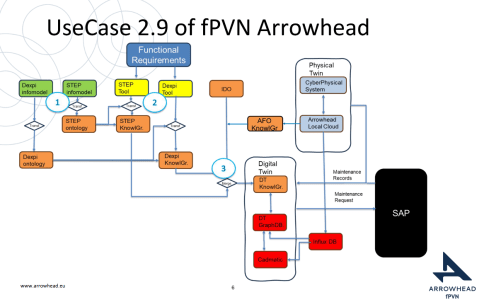

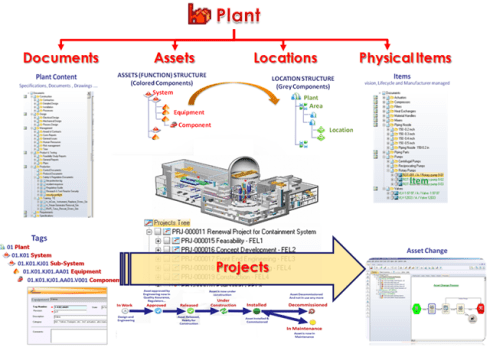

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as

With all these upcoming events, I did not have the time to focus on a new blog post; however, luckily, in the

As the year ends, I decided to take my crystal ball to see what would happen with PLM in the future. It felt like a virtual experience, and this is what I saw:

Over the last month, I have been actively engaged in the field; however, unfortunately, I have not been able to respond to all the interesting and sometimes humorous posts in my LinkedIn stream.

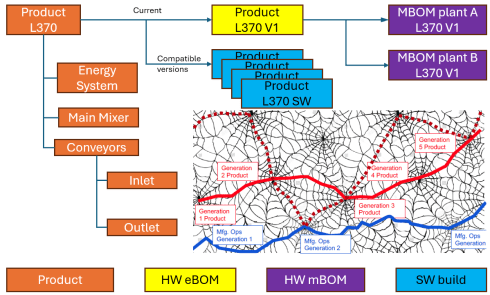

Initially, the Bill of Materials (BOM) existed only in ERP systems to support manufacturing. Together with the Bill of Process (BOP), it formed the heart of production execution. Without a BOM in ERP, product delivery would fail.

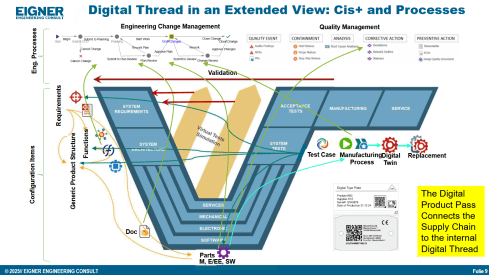

However, is the sBOM the real solution or only a theme pushed by BOM/PLM vendors to keep everything within their system? So far, this represents a linear hardware delivery model, with BOM structures tied to local ERP systems.

As I mentioned earlier, during the Dutch PLM platform discussion, we had an interesting debate that began with the question of how to manage and service a product during operation. Here, we reach a new level of PLM – not only delivering products as efficiently as possible, but also maintaining them in the field – often for many years.

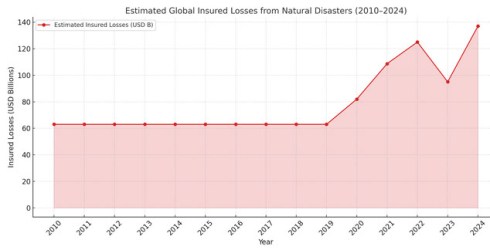

Although scientists engaged in a discussion about the scientific evidence, there were no significant economic forces behind the scenes influencing the scientific research.

We look forward to having

The tools for generative design, life cycle assessment, and, of course, digital twins for the various lifecycle phases can help companies to develop and manufacture more sustainable products.

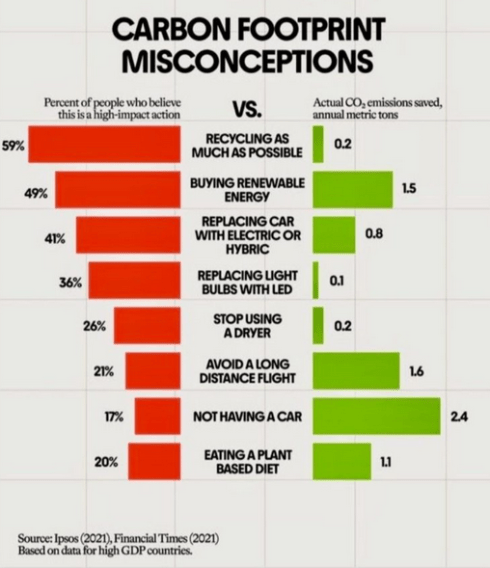

Part of this challenge is the lack of education among top management, who are primarily focused on efficiency gains rather than adopting new approaches or mitigating risk.

However, first and foremost, the most critical factor in driving sustainability within organizations is the people. Where companies are challenged in creating a green image, including the introduction of the Chief Sustainability Officer (CSO), there has always been resistance from existing business leaders, who prioritize money and profitability.

And recently, we saw the

In the past three weeks, between some short holidays, I had a discussion with

Rob, I was curious whether there were any interesting comments from the readers that enhanced your understanding. For me,

It’s easy to imagine a Digital Thread, but building one that’s sustainable and delivers measurable value is a far more formidable challenge.

Mark the date and this workshop in your agenda if you are able and willing to contribute. After the summer holidays, we will develop a more detailed agenda about the concepts to be discussed. Stay tuned to our LinkedIn feed at the end of August/beginning of September.

Data needs to be an integral, essential and valuable part of the product or service. Individuals become both consumers and producers of data, expected to input clean data, interpret dashboards, and act on insights. In a business where people collaborate across boundaries, ask questions, and share insight, data becomes a competitive asset.

Here it is critical that leaders truly believe in the value and set the tone, and because it is rare to have everyone in the business care about the data as passionately as they do about the prime function of their unique role (e.g. designer);

Rob, did you receive any feedback related to part 1? I spoke with a company that emphasized the importance of data quality; however, they were more interested in applying plasters, as they consider a broader approach too disruptive to their current business. Do you see similar situations?

Honestly, not much feedback. Data Governance isn’t as sexy or exciting as discussions on Designing, Engineering, Manufacturing, or PLM Technology. HOWEVER, as the saying goes, all roads lead to Rome, and all Digital Engineering discussions ultimately lead to data.

Designing effective data governance involves tailoring foundational elements, including data stewardship, standards, lineage, metadata, glossaries, and quality rules. These elements must reflect the realities of operations, striking a balance between trade-offs such as speed versus rigor or openness versus control.

AI also offers enormous potential for data quality and governance. From live monitoring to proactive guidance, adopting this approach will become a much easier business strategy. One can imagine AI forming the core of a company’s Digital Thread—no longer requiring rigidly hardwired systems and data flows, but instead intelligently comparing team data and flagging misalignments.

Experts define quality rules (completeness, consistency, relationship integrity), and AI enables automated anomaly detection. Initially, humans triage issues, but over time, as trust in AI grows, more of the process can be automated. Eventually, no oversight may be needed; alerts could be sent directly to those empowered to act, whether human or AI.
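As an illustration of the first step in that progression, the sketch below shows in Python what expert-defined rules for completeness, consistency, and relationship integrity could look like before any AI is involved. The `Part` fields, the allowed units, and the sample part numbers are all hypothetical; the rules are deliberately simple hand-written checks, not anomaly detection, and the resulting list is what a human would triage in the early phase.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of units the experts agreed on (consistency rule).
ALLOWED_UNITS = {"pcs", "kg", "m"}


@dataclass
class Part:
    number: str
    description: str
    unit: str
    parent: Optional[str]  # part number of the parent item, if any


def check_parts(parts):
    """Apply the three rule types and return a list of issues to triage."""
    known_numbers = {p.number for p in parts}
    issues = []
    for p in parts:
        # Completeness: a description must be filled in.
        if not p.description.strip():
            issues.append((p.number, "completeness", "missing description"))
        # Consistency: the unit must come from the agreed list.
        if p.unit not in ALLOWED_UNITS:
            issues.append((p.number, "consistency", f"unknown unit '{p.unit}'"))
        # Relationship integrity: a referenced parent must exist.
        if p.parent is not None and p.parent not in known_numbers:
            issues.append(
                (p.number, "relationship integrity", f"parent '{p.parent}' not found")
            )
    return issues


parts = [
    Part("A-100", "Pump assembly", "pcs", None),
    Part("A-110", "", "pcs", "A-100"),
    Part("A-120", "Seal", "each", "A-100"),
    Part("A-130", "Bolt M8", "pcs", "A-999"),
]

issues = check_parts(parts)
for issue in issues:
    print(issue)
```

In this picture, the AI contribution would sit on top of such rules: spotting patterns the experts did not write down and, as trust grows, routing the issues directly to whoever is empowered to act.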
