In my previous post, The PLM blame game, I briefly mentioned that there are two delivery models for PLM. The first is based on a PLM system that contains predefined business logic and functionality and promotes using the system out-of-the-box (OOTB) as much as possible, which tends to make it somewhat rigid. In the second, the PLM capabilities are developed on top of a customizable infrastructure, which provides more flexibility. I believe this topic has been debated for more than 15 years without a decisive conclusion. Therefore, I will take you through the pros and cons of both approaches, illustrated by examples from the field.

PLM started as a toolkit

The initial cPDM/PLM systems were toolkits for several reasons. In the early days, scalable connectivity was either unavailable or far too expensive for a standard collaboration approach. Engineering information, mostly design files, needed to be shared globally in an efficient manner, and the PLM backbone was often a centralized repository for CAD data. Bill of Materials handling in PLM was usually basic, as manufacturing relied on either the ERP system (mostly in Aerospace/Defense) or home-grown BOM systems (in Automotive).

Depending on the company's business needs, the target was to connect as many engineering data sources as possible to the PLM backbone. PLM originated in engineering and is still considered by many people an engineering solution. Connectivity required interfaces and integrations to be developed at a time when application integration frameworks were primitive and complicated. This made PLM implementations complex and expensive, so only the large automotive and aerospace/defense companies could afford to invest in such systems, and they paid a lot of tuition fees to achieve results. Many of these environments are still operational because they became too risky to touch, as I described in my post The PLM Migration Dilemma.

The birth of OOTB

Around the year 2000, the first OOTB PLM systems appeared. One of them was Agile (later acquired by Oracle), which focused on the high-tech and medical industries. Instead of document management, Agile focused on bringing the BOM from engineering to manufacturing through a relatively fixed scenario, which made it fast to implement and fast to validate. The last point in particular is crucial in regulated medical environments.

At that time, I was working with SmarTeam on developing templates for various industries with a similar mindset: a predefined template would lead to faster implementations and therefore lower implementation costs. The challenge with SmarTeam, however, was that it was very easy to customize, as it was based on Microsoft technology and offered wizards for data modeling and UI design.

This ease of customization did not help OOTB delivery, because SmarTeam was implemented through Value Added Resellers whose main revenue came from providing services to their customers. It was easy for them to reprogram the concepts of the templates and use the result as their own unique selling points towards a customer. A similar situation is happening now with Aras: the primary implementation skills sit with the implementing companies, and their revenue does not come from software (maintenance).

As a result, each implementer considers the other implementers competitors, and none of them is willing to give up their IP to the software company.

SmarTeam resellers were not eager to give their IP back to SmarTeam to have it embedded in the product, as that would reduce their unique selling points. I assume the same is currently happening in the Aras channel: it might be called Open Source, but what is shared is probably only the high-level infrastructure.

Around 2006, many of the main PLM vendors had their own mid-market offerings, and at that time I contributed to SmarTeam Engineering Express, a preconfigured solution that could be implemented rapidly if you wanted.

Although SmarTeam Engineering Express was an excellent sales tool, the resellers that implemented the software began customizing the environment as fast as possible in their own preferred manner, for two reasons: first, the customer usually had different current practices, and second, the money came from services. So why say No to a customer if you can say Yes?

OOTB and modules

Initially, the leading PLM vendors' mid-market templates were not aimed only at the mid-market. All companies wanted a standardized PLM system with as few customizations as possible. For the PLM vendors, this meant packaging their functionality into modules: sometimes addressing industry-specific capabilities, sometimes interfaces (CAD and ERP integrations), and sometimes generic governance capabilities like portfolio management, project management, and change management.

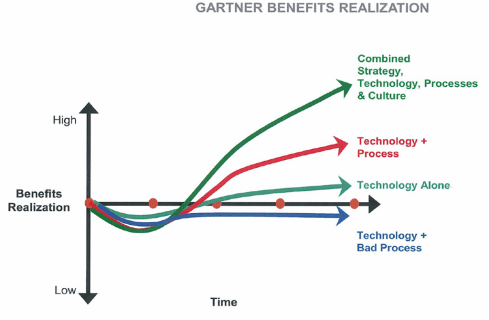

The principle behind the modules was that they needed to deliver data model capabilities combined with business logic/behavior; otherwise, the module would add little value. And this causes a challenge: the more business logic a module delivers, the more the implementing company needs to adapt to generic practices. This requires business change management, as people need to be motivated to work differently. And who is eager to make people work differently? Almost nobody, as it is an intensive coaching job that cannot be done by the vendors (they sell software), and often cannot be done by the implementers (they lack the broad set of skills needed) or by the companies themselves (they do not have the free resources for it). These are precisely the principles behind the PLM Blame Game.

OOTB modularity advantages

The first advantage of modularity in PLM software is that you only buy the software pieces you really need. However, most companies do not see PLM as a journey: they agree on a starting budget, and then every module that was not identified upfront becomes a cost issue. The main reason is that the implementation teams focus on delivering capabilities at that stage, not on providing value-based metrics.

The second potential advantage of PLM modularity is that the modules are supposed to complement each other, as they should have been developed in the context of each other. In reality, this is not always the case. Yes, the modules fit nicely on a single PowerPoint slide; in practice, however, they are often separate systems with minimal integration with the core. Still, the advantage is that the PLM software provider becomes responsible for the upgradability and extensibility of the provided functionality, which is a serious point to consider.

The third advantage of the OOTB modular approach is that it forces the PLM vendor to invest in your industry and in capabilities needed in the future, for example, digital twins, AR/VR, and model-based ways of working. Skeptics might say PLM vendors create problems to solve that do not exist yet; optimists might say they invest in imagining the future, which can only happen by trial and error. In a digital enterprise, the motto is: think big, start small, fail fast, and scale quickly.

OOTB modularity disadvantages

Most of the disadvantages of OOTB modularity are advantages of the toolkit approach and are therefore discussed in the next section. One downside of the OOTB modular approach is the disconnect between the people developing the modules and the implementers in the field. Modules are often developed based on the experiences of a few leading (large) customers, whereas most of the usage in the field is at smaller companies where people have multiple roles, the typical SMB situation. SMB implementations are often invisible at the PLM vendor R&D level, as they are hidden behind the Value Added Reseller network and/or usually too small to be noticed.

Toolkit advantages

The most significant advantage of a PLM toolkit approach is that the implementation can be a journey. You start with a clear business need, for example, in modern PLM, creating a digital thread, and once this is achieved, you dive deeper into the areas of the lifecycle that require improvement. In addition, adding functionality does not bring the extra cost of a new module; the license cost is linked only to the number of users.

However, if the development of additional functionality becomes massive, you run the risk that the low license costs are cancelled out by development costs.

The second advantage of a PLM toolkit approach is that the implementer and the users build a closer relationship while delivering capabilities, which gives a higher chance of acceptance: the implementer builds what the customer is asking for.

However, as Henry Ford is famously quoted: if I had asked my customers what they wanted, they would have asked for faster horses.

Toolkit considerations

There are several points where a PLM toolkit can be an advantage but also a disadvantage, depending very much on the characteristics of your company and your implementation team. Let’s review some of them:

Innovative: a toolkit does not immediately provide an innovative way of working. The toolkit may have an infrastructure to deliver innovative capabilities, even as small demonstrations, but the implementation and the methodology for this innovative way of working need to come from either your company’s resources or your implementer’s skills.

Uniqueness: with a toolkit approach, you can build a unique PLM infrastructure that makes you more competitive than others, as you do not share your IP and best practices. This approach can be valid if you truly have a competitive plan here. Otherwise, the risk is that you are creating a legacy for your company that will slow you down later.

Performance: this is a crucial topic if you want to scale your solution to the enterprise level. In the past, I spent a lot of time analyzing and supporting SmarTeam implementers and template developers in optimizing their solutions. Choosing the right algorithms and making the right data modeling choices are crucial.

Sometimes I encountered situations where the customer blamed SmarTeam because it allowed such customizations; you can read about one example in an old LinkedIn post: The importance of a PLM data model.
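To make the point about algorithms and data modeling a little more concrete, here is a minimal Python sketch. It is not taken from SmarTeam or any other PLM product: the `FakeRepository` class, the BOM data and the two explosion functions are hypothetical, and database round trips are only simulated by a counter. It compares a BOM explosion that queries the repository once per visited item with one that fetches all links once and indexes them by parent.

```python
from collections import defaultdict

# Hypothetical BOM links (parent, child, quantity); not a real PLM data model.
BOM_LINKS = [
    ("A", "B", 2), ("A", "C", 1),
    ("B", "D", 4), ("B", "E", 1),
    ("C", "D", 2),
]


class FakeRepository:
    """Hypothetical stand-in for a PLM database; counts round trips instead of doing I/O."""

    def __init__(self, links):
        self._links = links
        self.queries = 0

    def children_of(self, parent):
        # One simulated round trip per call, as a naive customization might do.
        self.queries += 1
        return [(child, qty) for p, child, qty in self._links if p == parent]

    def all_links(self):
        # One simulated round trip that returns every link at once.
        self.queries += 1
        return list(self._links)


def explode_naive(repo, root, qty=1, totals=None):
    """Recursive explosion: one query for every item visited, including repeats."""
    totals = defaultdict(int) if totals is None else totals
    for child, q in repo.children_of(root):
        totals[child] += qty * q
        explode_naive(repo, child, qty * q, totals)
    return totals


def explode_indexed(repo, root):
    """Fetch all links once, index them by parent, then traverse in memory."""
    index = defaultdict(list)
    for parent, child, q in repo.all_links():
        index[parent].append((child, q))
    totals = defaultdict(int)
    stack = [(root, 1)]
    while stack:
        item, qty = stack.pop()
        for child, q in index[item]:
            totals[child] += qty * q
            stack.append((child, qty * q))
    return totals


naive_repo = FakeRepository(BOM_LINKS)
indexed_repo = FakeRepository(BOM_LINKS)
naive = explode_naive(naive_repo, "A")
indexed = explode_indexed(indexed_repo, "A")
print(naive == indexed)                          # True
print(naive_repo.queries, indexed_repo.queries)  # 6 1
```

Both functions return the same total quantities, but the naive version needs a round trip for every item it visits, including each repeated visit to a shared part such as `D`. With thousands of items and real network latency per query, an early design choice like this can decide whether a customized solution scales.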

Experience: when you plan to implement PLM “big” with a toolkit approach, experience becomes crucial, as the initial design decisions and scope are significant for future extensions and maintainability. Beautiful implementations can become a burden after five years when design decisions were not documented or analyzed. Having experience yourself, or an experienced partner or coach, can help you in these situations. In general, it is rare for a company to have experienced PLM implementers internally, as implementing PLM is not its core business. Experienced PLM implementers vary in size and skills, so make the right choice.

Conclusion

After writing this post, I still cannot give a final verdict on which approach is best. Personally, I like the PLM toolkit approach, as I have been working in the PLM domain for twenty years, seeing and experiencing good and best practices. The OOTB approach represents many of these best practices and is therefore a safe path to follow. The points that remain open are who the people involved are and what your business model is. No matter which option you choose, it needs to be a coherent end-to-end approach.

PLM implementations within the same industry might look alike, but they often vary significantly because of existing practices, which will not change just because of the tool. So there is a need for customization or configuration.

Strategic partners should be the ones supporting business change management, as they are likely to have experience with other companies. Unfortunately, these companies do not have significant PLM skills, as the PLM domain is just a tiny subset of the overall business strategy.
