Recently, I have been reading some interesting posts beyond all the technical discussions related to PLM and AI. Is PLM becoming obsolete? Are we heading to a new type of infrastructure based on MCP agents? Are these agents an example of new ways of collaboration?

Collaboration – it pops up everywhere!

Here is a quote from the article that triggered me:

The 𝐧𝐮𝐦𝐛𝐞𝐫 𝐨𝐧𝐞 𝐫𝐞𝐚𝐬𝐨𝐧 organizations deploy MBSE is not simulation or architecture development. It is 𝐞𝐧𝐡𝐚𝐧𝐜𝐞𝐝 𝐜𝐨𝐥𝐥𝐚𝐛𝐨𝐫𝐚𝐭𝐢𝐨𝐧 𝐚𝐧𝐝 𝐜𝐨𝐦𝐦𝐮𝐧𝐢𝐜𝐚𝐭𝐢𝐨𝐧 — at 67%. But here is the uncomfortable part.

Only 24% reported actually achieving collaboration as a business outcome. That is a 43-point gap between intent and result. Traceability is even worse — 48% deploy MBSE for it, 9% say they have realized it.

What if the problem is not that MBSE fails to deliver collaboration — but that most organizations 𝐧𝐞𝐯𝐞𝐫 𝐝𝐞𝐟𝐢𝐧𝐞 𝐰𝐡𝐚𝐭 𝐛𝐞𝐭𝐭𝐞𝐫 𝐜𝐨𝐥𝐥𝐚𝐛𝐨𝐫𝐚𝐭𝐢𝐨𝐧 𝐥𝐨𝐨𝐤𝐬 𝐥𝐢𝐤𝐞 in measurable terms?

Chad Jackson’s article aligns with many other discussions I had with companies related to PLM (and MBSE) – it inspired me to focus this time on collaboration.
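
The gaps in the quote are simple differences in percentage points. A minimal Python sketch, using only the four figures from the quote (the dictionary and function names are my own, chosen for illustration), makes the arithmetic explicit:

```python
# Survey figures as quoted above: share of organizations that deploy MBSE
# for a goal (intent) versus share that report achieving it (result).
survey = {
    "collaboration": {"intent": 67, "result": 24},
    "traceability": {"intent": 48, "result": 9},
}


def gap_in_points(figures):
    """Return the intent-minus-result gap in percentage points."""
    return figures["intent"] - figures["result"]


gaps = {goal: gap_in_points(figures) for goal, figures in survey.items()}

for goal, gap in gaps.items():
    print(f"{goal}: {gap}-point gap between intent and result")
```

The traceability gap works out to 39 points. That is smaller than the collaboration gap in absolute terms, but only about one in five organizations aiming for traceability reports achieving it, versus roughly one in three for collaboration, which is presumably why the quote calls it "even worse."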

How do we measure collaboration?

My 2015 blog post has the same title: How do you measure collaboration? The post was written at a time when PLM collaboration had to compete with ERP execution stories. Often, engineering collaboration was considered an inefficient process to be fixed in the future, according to some ERP vendors.

ERP always had a strong voice at the management level—boxes on an org chart, reporting lines, clear ownership and KPIs flowing upward. You could see how the company was performing.

From the management side, accountability flows downward. The architecture of the organization mirrors the architecture of the product, and the architecture of the product mirrors the architecture of the organization.

We have known this for decades; it is Conway’s Law. Yet we are still surprised when silos emerge exactly where we designed them.

The Management Dilemma

In many of my engagements, the company’s management often struggles to understand the value of collaboration because there is no direct line between collaboration and immediate performance. Revenue can be measured. Cycle times can be measured. Defects can be measured. Even employee turnover can be measured.

But collaboration? What is the KPI?

It is a fair question. If something cannot be quantified, it becomes subjective and depends on gut feelings. And if it cannot be tied directly to quarterly results, it often becomes optional.

The problem is not that collaboration has no impact on performance – look at the introduction of email in companies. Did your company make a business case for that?

Still, it improved collaboration a lot, and sometimes it became a burden with all the CC-messages and epistles exchanged.

Collaboration has an impact, deeply and systematically. But its impact is indirect, delayed, and distributed. It reduces friction, can improve shared understanding and prevent expensive rework.

The return on investment on collaboration is real, but it does not show up as a clean, linear metric.

For a hierarchical and linearly structured organization, horizontal collaboration is often hard to “sell.”

Back to Conway’s Law

Organizational structure shapes communication patterns. Communication patterns shape systems.

If your organization is vertical, your product will be vertical. If your incentives are local, your decisions will be local. If your teams are isolated, your solutions will be fragmented.

You cannot expect horizontal behavior from a vertically optimized structure without friction.

Disconnected collaboration initiatives fail because they try to overlay horizontal tools on top of vertical incentives.

Attempts like a new collaboration platform or using shared workspace technology to incentivize collaboration are examples of this approach.

But the underlying structure remains untouched. People are still measured on local performance. Budgets are still allocated per department. Promotions still reward vertical success.

First question to ask in your company: Who is responsible for your PLM/collaboration infrastructure for non-transactional information?

Most likely, it is in the IT or Engineering silo, rarely on a higher organizational level.

And then we are surprised when collaboration stalls?

The Myth of the Tool

Whenever collaboration becomes a pain, people look for IT tools as a cure.

“We need better platforms.”

“We need integrated systems.”

and now:

“We need AI – the AI agents will do the collaboration for us.”

Tools matter, but they are amplifiers. They amplify existing behavior. They do not create it. While finalizing this article, I saw this post from Dr. Sebastian Wernicke coming in, containing this quote:

Agents are software. Maturity is culture. And culture, inconveniently, doesn’t come with an install package.

If trust is low, a collaboration platform becomes a battlefield. If incentives are misaligned, shared dashboards become weapons. If fear dominates, transparency becomes a threat.

Collaboration is not a software problem. It is a human problem. Which brings us to something that is rarely discussed in boardrooms: the intrinsic motivation of its employees.

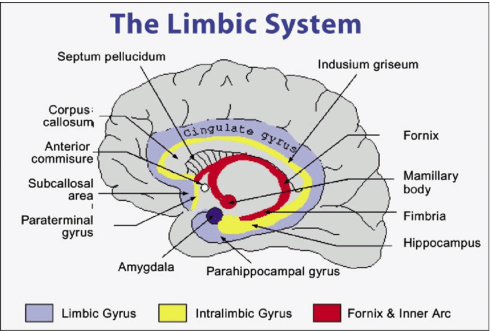

The Limbic Brain Is Always There

Beneath the rational layer of strategy and planning sits something older: the limbic system. The part of us that cares about belonging, safety, recognition, autonomy, and purpose.

Collaboration thrives when the limbic brain’s needs are met. It collapses when they are threatened.

- If people feel unsafe, they protect information!

- If they feel undervalued, they withdraw effort!

- If they feel controlled, they resist alignment!

You cannot mandate collaboration if the emotional system of the organization is defensive.

The question is not “How do we force collaboration?”

The question is “How do we create conditions where collaboration feels natural?”

And that requires leaders to connect to the human, not just to the role or an artificial intelligence solution. They should be inspired by this iconic image from Share PLM:

Besides a difficult-to-quantify ROI, there is another reason why collaboration struggles to gain executive traction: it rarely creates immediate success.

It prevents future failure, and we humans, in general, do not prioritize prevention; think of our environmental, financial and potentially even health behavior. Although prevention has the lowest cost, fixing the damage afterwards is, most of the time, what lies in our nature.

For companies, it is easier to celebrate the hero who fixes a late-stage integration disaster than the quiet team that prevented it months earlier through cross-functional dialogue.

For me, the firefighters are the biggest challenge to successfully implementing a PLM infrastructure. The image to the left comes from a 2014 presentation when discussing potential resistance to a successful PLM implementation.

In vertical systems, firefighting is visible. Prevention is silent and therefore collaboration activities feel like a cost center rather than a strategic lever.

Where to Push, Where to Invest?

If you cannot directly measure collaboration, where should you push? Not in tools alone, slogans or one-off workshops. Invest in shared experiences.

When people meet outside their vertical silos, something subtle shifts. They see faces instead of functions. They understand constraints instead of assuming incompetence. They replace narratives with conversations.

Note: shared experiences are not the same as the planned online web meetings that became popular during and after COVID. Those follow a rigid regime of enforced collaboration, back-to-back in many companies, and most of the time lack the typical “coffee machine” experiences.

Also, when looking at events where people share experiences, there is a difference between a traditional vertical PLM/CM/IT/ERP conference, where specialists focus on one discipline, and a human-centric conference, where people share their experiences in an organization.

The Share PLM Summit in May last year was an eye-opener for me. Starting from the human perspective brought a lot of energy and willingness to discuss various insights – collaboration at its best.

Events, summits, workshops—when done well—create human connection. They remind participants that behind every deliverable sits a person trying to do meaningful work.

The focus on the human perspective is not soft. It is strategic because collaboration is not primarily about information exchange. It is about relationship quality and trust.

The Real Question

The question is not whether collaboration is valuable. The question is whether we are willing to adjust our vertical incentives to make it possible.

Because collaboration is not free, it requires time. It requires emotional energy. It requires psychological safety. It sometimes requires giving up local control for global benefit.

In systems terms, it requires shifting from local optimization to whole-system optimization.

That is uncomfortable.

But if our products are complex, interconnected, and rapidly evolving—as most are today—then vertical thinking alone is no longer sufficient. The world has become horizontal, even if our org charts have not.

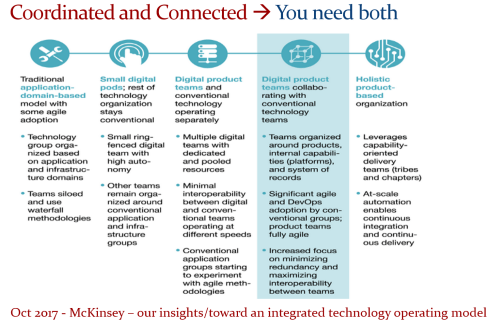

And perhaps the real challenge is not how to measure collaboration, but how to design organizations where collaboration is no longer something we need to sell at all. An article from McKinsey might inspire you here for this transition – for me, it did: Toward an integrated technology operating model.

Beyond AI

While everyone talks and writes about AI, I do not believe AI will solve the collaboration issue. For sure, AI collaboration with agents will increase personal and organizational effectiveness, but it never touches our limbic brain, the irreplaceable part that makes us typically human and unique.

There will always be a need for that, unless we become numb and addicted to the AI environments. There are various studies popping up on how AI “untrains” our brain muscles, reduces patience and deep thinking. Finding a new human balance is crucial.

Conclusion

Triggered by Chad Jackson’s post about MBSE and collaboration, I took the time to deep-dive into the aspects of collaboration in the PLM domain. How do you manage collaboration?

Come and share your experiences at the upcoming Share PLM 2026 summit on 19-20 May in Jerez. The title of my keynote: Are Humans Still Resources? Agentic AI and the Future of Work and PLM.

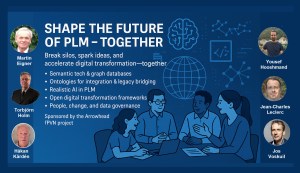

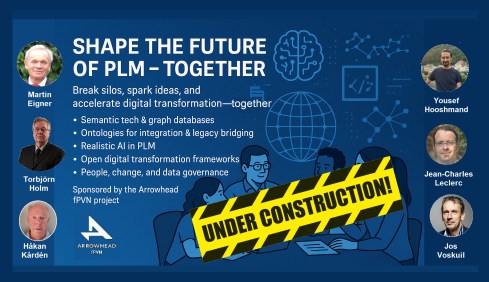

Together with Håkan Kårdén, we had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please, click on the image for more details.

The meeting had two primary purposes.

- Firstly, we aimed to create an environment where these concepts could be discussed and presented to a broader audience, comprising academics, industrial professionals, and software developers. The group’s feedback could serve as a benchmark for them.

- The second goal was to bring people together and create a networking opportunity, either during the PLM Roadmap/PDT Europe conference, the day after, or through meetings established after this workshop.

Personally, it was a great pleasure to meet some people in person whose LinkedIn articles I had admired and read.

The meeting was sponsored by the Arrowhead fPVN project, a project I discussed in a previous blog post related to the PLM Roadmap/PDT Europe 2024 conference last year. Together with the speakers, we have begun working on a more in-depth paper that describes the similarities and the lessons learned that are relevant. This activity will take some time.

Therefore, this post only includes the abstracts from the speakers and links to their presentations. It concludes with a few observations from some attendees.

Reasoning Machines: Semantic Integration in Cyber-Physical Environments

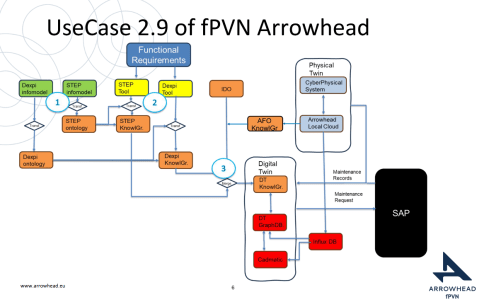

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network.

The presentation outlines the phases of the product and production lifecycle, including requirements, specification, design, build-up, handover, and operations. It raises a question about unifying these phases and their associated technologies and standards, emphasizing that the most extended phase, which involves operation, maintenance, failure, and evolution until retirement, should be the primary focus.

It also discusses seamless integration, outlining a partial list of standards and technologies categorized into three sections: “Modelling & Representation Standards,” “Communication & Integration Protocols,” and “Architectural & Security Standards.” Each section contains a table listing various technology standards, their purposes, and references. Additionally, the presentation includes a “Conceptual Layer Mapping” table that details the different layers (Knowledge, Service, Communication, Security, and Data), along with examples, functions, and references.

The presentation outlines an approach for utilizing semantic technologies to ensure interoperability across heterogeneous datasets throughout a product’s lifecycle. Key strategies include using OWL 2 DL for semantic consistency, aligning domain-specific knowledge graphs with ISO 23726-3, applying W3C Alignment techniques, and leveraging Arrowhead’s microservice-based architecture and Framework Ontology for scalable and interoperable system integration.

The software architecture used, comprising three main sections (“Functional Requirements,” “Physical Twin,” and “Digital Twin”), each containing various interconnected components, will be presented. Today, the architecture includes several Knowledge Graphs (KGs): a DEXPI KG, a STEP (ISO 10303) KG, an Arrowhead Framework KG and, in progress, the CFIHOS Semantics Ontology, all aligned.
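
To make the idea of aligned knowledge graphs a little more concrete, here is a minimal sketch in plain Python. It is not the Arrowhead implementation: the identifiers (`dexpi:Pump-P101`, `step:Product-4711`), the predicate names and the alignment table are all hypothetical, and a real setup would use OWL/RDF tooling rather than tuples. It only shows how an explicit alignment lets a query follow one asset across two graphs.

```python
# Two tiny "knowledge graphs" as sets of (subject, predicate, object) triples.
# All identifiers and predicates are made up for illustration.
dexpi_kg = {
    ("dexpi:Pump-P101", "hasTag", "P-101"),
    ("dexpi:Pump-P101", "connectedTo", "dexpi:Pipe-L12"),
}
step_kg = {
    ("step:Product-4711", "hasName", "Centrifugal pump"),
    ("step:Product-4711", "hasRevision", "B"),
}

# Alignment: pairs of identifiers asserted to denote the same real-world asset.
alignment = {("dexpi:Pump-P101", "step:Product-4711")}


def equivalents(node):
    """Return the node plus every identifier aligned with it (one hop)."""
    same = {node}
    for left, right in alignment:
        if left == node:
            same.add(right)
        elif right == node:
            same.add(left)
    return same


def describe(node, *graphs):
    """Collect all (predicate, object) facts about a node across graphs."""
    names = equivalents(node)
    facts = set()
    for graph in graphs:
        for subject, predicate, obj in graph:
            if subject in names:
                facts.add((predicate, obj))
    return facts


facts = describe("dexpi:Pump-P101", dexpi_kg, step_kg)
for predicate, obj in sorted(facts):
    print(predicate, "->", obj)
```

Without the alignment entry, the same query would return only the two DEXPI-side facts; with it, the revision and name held on the STEP side become reachable from the plant tag.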

👉The presentation: W3C Major standard interoperability_Paris

Beyond Handover: Building Lifecycle-Ready Semantic Interoperability

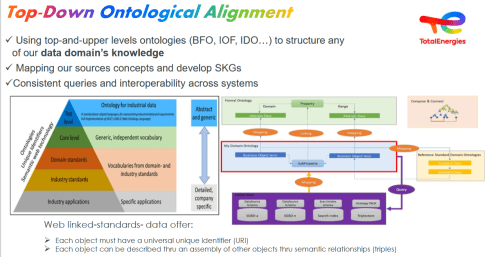

Jean-Charles Leclerc argued that industrial data standards must evolve beyond the narrow scope of handover and static interoperability. To truly support digital transformation, they must embrace lifecycle semantics or, at the very least, be designed for future extensibility.

This shift enables technical objects and models to be reused, orchestrated, and enriched across internal and external processes, unlocking value for all stakeholders and managing the temporal evolution of properties throughout the lifecycle. A key enabler is the “pattern of change”, a dynamic framework that connects data, knowledge, and processes over time. It allows semantic models to reflect how things evolve, not just how they are delivered.

By grounding semantic knowledge graphs (SKGs) in such rigorous logic and aligning them with W3C standards, we ensure they are both robust and adaptable. This approach supports sustainable knowledge management across domains and disciplines, bridging engineering, operations, and applications.

Ultimately, it’s not just about technology; it’s about governance.

Being Sustainab’OWL (Web Ontology Language) by Design! means building semantic ecosystems that are reliable, scalable, and lifecycle-ready by nature.

Additional Insight: From Static Models to Living Knowledge

To transition from static information to living knowledge, organizations must reassess how they model and manage technical data. Lifecycle-ready interoperability means enabling continuous alignment between evolving assets, processes, and systems. This requires not only semantic precision but also a governance framework that supports change, traceability, and reuse, turning standards into operational levers rather than compliance checkboxes.
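
One way to picture "managing the temporal evolution of properties" is a property whose values carry a validity date, so that a question can be answered as of any point in the lifecycle. The sketch below is my own illustration in Python, not the "pattern of change" framework itself; the class name, the property and the dates are hypothetical.

```python
from bisect import bisect_right
from datetime import date


class TimedProperty:
    """A property whose value changes over time (hypothetical illustration)."""

    def __init__(self):
        self._dates = []   # sorted list of dates from which a value is valid
        self._values = []  # value valid from the date at the same index

    def set(self, valid_from, value):
        """Record that `value` applies from `valid_from` onwards."""
        index = bisect_right(self._dates, valid_from)
        self._dates.insert(index, valid_from)
        self._values.insert(index, value)

    def as_of(self, when):
        """Return the value valid on `when`, or None if none was set yet."""
        index = bisect_right(self._dates, when)
        return self._values[index - 1] if index else None


# Made-up example: the design pressure of a vessel across lifecycle phases.
pressure = TimedProperty()
pressure.set(date(2020, 1, 15), "16 bar (as designed)")
pressure.set(date(2023, 6, 1), "12 bar (derated after inspection)")

print(pressure.as_of(date(2019, 1, 1)))
print(pressure.as_of(date(2021, 3, 1)))
print(pressure.as_of(date(2024, 1, 1)))
```

A static handover model would keep only one of these values; keeping the history is what allows the model to reflect how the asset evolved, not just how it was delivered.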

👉The presentation: Beyond Handover – Building Lifecycle Ready Semantic Interoperability

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

How can we achieve our vision of PLM – the Single Source of Truth?

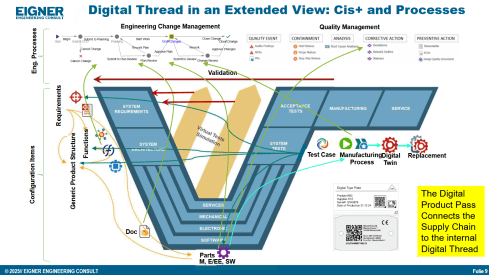

Martin Eigner stated that Product Lifecycle Management (PLM) has long promised to serve as the Single Source of Truth for organizations striving to manage product data, processes, and knowledge across their entire value chain. Yet, realizing this vision remains a complex challenge.

Achieving a unified PLM environment requires more than just implementing advanced software systems—it demands cultural alignment, organizational commitment, and seamless integration of diverse technologies. Central to this vision is data consistency: ensuring that stakeholders across engineering, manufacturing, supply chain, and service have access to accurate, up-to-date, and contextualized information along the Product Lifecycle. This involves breaking down silos, harmonizing data models, and establishing governance frameworks that enforce standards without limiting flexibility.

Emerging technologies and methodologies, such as Extended Digital Thread, Digital Twins, cloud-based platforms, and Artificial Intelligence, offer new opportunities to enhance collaboration and integrated data management.

However, their success depends on strong change management and a shared understanding of PLM as a strategic enabler rather than a purely technical solution. By fostering cross-functional collaboration, investing in interoperability, and adopting scalable architectures, organizations can move closer to a trustworthy single source of truth. Ultimately, realizing the vision of PLM requires striking a balance between innovation and discipline—ensuring trust in data while empowering agility in product development and lifecycle management.

👉The presentation: Martin – Workshop PLM Future 04_10_25

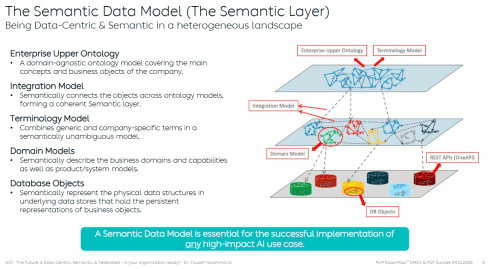

The Future is Data-Centric, Semantic, and Federated … Is your organization ready?

Yousef Hooshmand, who is currently working at NIO as PLM & R&D Toolchain Lead Architect, discussed the must-have relations between a data-centric approach, semantic models and a federated environment as the image below illustrates:

Why This Matters for the Future?

- Engineering is under unprecedented pressure: products are becoming increasingly complex, customers are demanding personalization, and development cycles must be accelerated to meet these demands. Traditional, siloed methods can no longer keep up.

- The way forward is a data-centric, semantic, and federated approach that transforms overwhelming complexity into actionable insights, reduces weeks of impact analysis to minutes, and connects fragmented silos to create a resilient ecosystem.

- This is not just an evolution, but a fundamental shift that will define the future of systems engineering. Is your organization ready to embrace it?
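
The claim that connected data can shrink impact analysis from weeks to minutes rests on a simple mechanism: once dependencies are explicit links rather than knowledge spread across silos, finding everything affected by a change is a graph traversal. A minimal sketch in Python, with hypothetical item names and a made-up dependency map (not taken from the presentation):

```python
from collections import deque

# Made-up dependency links: each key is used by (impacts) the listed items.
impacts = {
    "REQ-12 braking distance": ["SYS-3 brake system"],
    "SYS-3 brake system": ["PART-88 caliper", "SW-5 ABS controller"],
    "PART-88 caliper": ["TEST-41 bench test"],
    "SW-5 ABS controller": ["TEST-41 bench test", "DOC-7 service manual"],
}


def impacted_by(changed_item):
    """Breadth-first search for every item downstream of a change."""
    seen = set()
    queue = deque([changed_item])
    order = []
    while queue:
        item = queue.popleft()
        for dependent in impacts.get(item, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order


affected = impacted_by("REQ-12 braking distance")
for item in affected:
    print(item)
```

In a federated setup, these links would live in different systems, and the hard part is agreeing on shared semantics so that they can be followed at all; the traversal itself is the easy part.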

👉The presentation: The Future is Data-Centric, Semantic, and Federated.

Some first impressions

👉 Bhanu Prakash Ila from Tata Consultancy Services – you can find his original comment in this LinkedIn post

Key points:

- Traditional PLM architectures struggle with the fundamental challenge of managing increasingly complex relationships between product data, process information, and enterprise systems.

- Ontology-Based Semantic Models – The Way Forward for PLM Digital Thread Integration: Ontology-based semantic models address this by providing explicit, machine-interpretable representations of domain knowledge that capture both concepts and their relationships. These lay the foundations for AI-related capabilities.

It’s clear that as AI, semantic technologies, and data intelligence mature, the way we think and talk about PLM must evolve too – from system-centric to value-driven, from managing data to enabling knowledge and decisions.

A quick & temporary conclusion

Typically, I conclude my blog posts with a summary. However, this time the conclusion is not there yet. There is work to be done to align concepts and understand for which industry they are most applicable. Using standards or avoiding standards as they move too slowly for the business is a point of ongoing discussion. The takeaway for everyone in the workshop was that data without context has no value. Ontologies, semantic models and domain-specific methodologies are mandatory for modern data-driven enterprises. You cannot avoid this learning path by just installing a graph database.

In my previous post, “My PLM Bookshelf,” on LinkedIn, I shared some of the books that influenced my thinking related to PLM. As you can see in the LinkedIn comments, other people added their recommendations for PLM-related books to get inspired or more knowledgeable.

While reading a book is a personal activity, I now want to share with you how to get educated about PLM in a more interactive manner. In this post, I talk with Peter Bilello, President & CEO of CIMdata. If you are active in PLM and haven’t heard about CIMdata, there is more to learn on their website HERE. Now let us focus on Education.

CIMdata

Peter, knowing CIMdata from its research, which is valid for the whole PLM community, I am curious to learn what typical kind of training CIMdata provides to its customers.

Jos, throughout much of CIMdata’s existence, we have delivered educational content to the global PLM industry. With a core business tenet of knowledge transfer, we began offering a rich set of PLM-related tutorials at our North American and pan-European conferences starting in the early 1990s.

Since then, we have expanded our offering to include a comprehensive set of assessment-based certificate programs in a broader PLM sense, covering, for example, systems engineering and digital transformation-related topics. In total, we offer more than 30 half-day classes, all of which can be delivered in person as a custom configuration for a specific client and through public virtual-live or in-person classes. We have certified more than 1,000 PLM professionals since the introduction of this PLM Leadership offering in 2009.

Based on our experience, we recommend that an organization’s professional education strategy and plans address the organization’s specific processes and enabling technologies. This will help ensure that it drives the appropriate and consistent operations of its processes and technologies.

For that purpose, we expanded our consulting offering to include a comprehensive and strategic digital skills transformation framework. This framework provides an organization with a roadmap that can define the skills an organization’s employees need to possess to ensure a successful digital transformation.

In turn, this framework can be used as an efficient tool for the organization’s HR department to define its training and job progression programs that align with its overall transformation.

The success of training

We are both promoting the importance of education to our customers. Can you share with us an example where Education really made a difference? Can we talk about ROI in the context of training?

Jos, I fully agree. Over the years, we have learned that education and training are often minimized (i.e., sub-optimized). This is unfortunate and has usually led to failed or partially successful implementations.

In our view, both education and training are needed, along with strong organizational change management (OCM) and a quality assurance program during and after the implementation.

In our terms, education deals with the “WHY” and training with the “HOW”. Why do we need to change? Why do we need to do things differently? And then “HOW” to use new tools within the new processes.

We have seen far too many failed implementations where sub-optimized decisions were made due to a lack of understanding (i.e., a clear lack of education). We have also witnessed training and education being done too early or too late.

This leads to a reduced Return on Investment (ROI).

Therefore, a well-defined skills transformation framework is critical for any company that wants to grow and thrive in the digital world. Finally, a skills transformation framework needs to be tied directly to an organization’s digital implementation roadmap and structure, state of the process, and technology maturity to maximize success.

Training for every size of the company?

When CIMdata conducts PLM training, is there a difference, for example, when working with a big global enterprise or a small and medium enterprise?

You might think the complexity might be similar; however, the amount of internal knowledge might differ. So how are you dealing with that?

We basically find that the amount of training/education required mostly depends on the implementation scope, meaning the scope of the proposed digital transformation and the current maturity level of the impacted user community.

It is important to measure the current maturity and establish appropriate metrics to measure the success of the training (e.g., are people, once trained, using the tools correctly).

CIMdata has created a three-part PLM maturity model that allows an organization to understand its current PLM-related organizational, process, and technology maturity.

The PLM maturity model provides an important baseline for identifying and/or developing the appropriate courses for execution.

This also allows us, when we are supporting the definition of a digital skills transformation framework, to understand how the level of internal knowledge might differ within and between departments, sites, and disciplines. All of this helps define an organization-specific action plan, no matter the organization’s size.

Where is CIMdata training different?

Most of the time, PLM implementers offer training too for their prospects or customers. So, where is CIMdata training different?

For this, it is important to differentiate between education and training. So, CIMdata provides education (the why) and training and education strategy development and planning.

We don’t provide training on how to use a specific software tool. We believe that is best left to the systems integrator or software provider.

While some implementation partners can develop training plans and educational strategies, they often fall short in helping an organization to effectively transform its user community. Here we believe training specialists are better suited.

Digital Transformation and PLM

One of my favorite topics is the impact of digitization in the area of product development. CIMdata introduced the Product Innovation Platform concept to differentiate from traditional PDM/PLM. Who needs to get educated to understand such a transformation, and what does CIMdata contribute to this understanding?

We often start with describing the difference between digitalization and digitization. This is crucial to be understood by an organization’s management team. In addition, management must understand that digitalization is an enterprise initiative.

It isn’t just about product development, sales, or enabling a new service experience. It is about maximizing a company’s ROI in applying and leveraging digital as needed throughout the organization. The only way an organization can do this successfully is by taking an end-to-end approach.

The Product Innovation Platform is focused on end-to-end product lifecycle management. Therefore, it must work within the context of other enterprise processes that are focused on the business’s resources (i.e., people, facilities, and finances) and on its transactions (e.g., purchasing, paying, and hiring).

As a result, an organization must understand the interdependencies among these domains. If they don’t, they will ultimately sub-optimize their investment. It is these and other important topics that CIMdata describes and communicates in its education offering.

More than Education?

As a former teacher, I know that a one-time education, a good book or slide deck, is not enough to get educated. How does CIMdata provide a learning path or coaching path to their customers?

Jos, I fully agree. Sustainability of a change and/or improved way of working (i.e., long-term sustainability) is key to true and maximized ROI. Here I am referring to the sustainability of the transformation, which can take years.

With this, organizational change management (OCM) is required. OCM must be an integral part of a digital transformation program and be embedded into a program’s strategy, execution, and long-term usage. That means training, education, communication, and reward systems all have to be managed and executed on an ongoing basis.

For example, OCM must be executed alongside an organization’s digital skills transformation program. Our OCM services focus on strategic planning and execution support. We have found that most companies understand the importance of OCM but often don’t fully follow through on it.

A model-based future?

During the CIMdata Roadmap & PDT conferences, we have often discussed the importance of Model-Based Systems Engineering methodology as a foundation of a model-based enterprise. What do you see? Is it only the big Aerospace and Defense companies that can afford this learning journey, or should other industries also invest? And if yes, how should they start?

Jos, here I need to step back for a minute. All companies have to deal with increasing complexity for their organization, supply chain, products, and more.

So, to optimize its business, an organization must understand and employ systems thinking and system optimization concepts. Unfortunately, most people think of MBSE as an engineering discipline. This is unfortunate because engineering is only one of the systems of systems that an organization needs to optimize across its end-to-end value streams.

The reality is that all companies can benefit from MBSE, as long as they consider optimization across their specific disciplines, in the context of their products and services and where they exist within their value chain.

MBSE is not just for Aerospace and Defense companies. Still, a lot can be learned from what has already been done there. Also, leading automotive companies are implementing and using MBSE to design and optimize semi- and highly automated vehicles (i.e., systems of systems).

The starting point is understanding your systems of systems environment and where bottlenecks exist.

There should be no doubt that education is needed on MBSE and on how MBSE supports the organization’s Model-Based Enterprise requirements.

Published work from the CIMdata-administered A&D PLM Action Group can be helpful, as can various MBE and systems engineering maturity models, such as the one that CIMdata utilizes in its consulting work.

Want to learn more?

Thanks, Peter, for sharing your insights. Are there any specific links you want to provide to get educated on the topics discussed? Perhaps some books to read or conferences to visit?

Jos, as you already mentioned:

- The CIMdata Roadmap & PDT conferences have provided a wealth of insight into this market for more than 25 years. [Jos: Search for my blog posts starting with the text: “The weekend after ….”]

- In addition, there are several blogs, like yours, that are worth following, and websites, like CIMdata’s pages for education or other resources, which are filled with downloadable reading material.

- Additionally, there are many user conferences from PLM solution providers and third-party conferences, such as those hosted by the MarketKey organization in the UK.

These conferences have taken place in Europe and North America for several years. Information exchange and formal training and education are offered in many events. Additionally, they provide an excellent opportunity for networking and professional collaboration.

What I learned

Talking with Peter made me again aware of a few things. First, it is important to differentiate between education and training. Where education is a continuous process, training is an activity that must take place at the right time. Unfortunately, we often mix those two terms and believe that people are educated after having followed a training.

Secondly, investing in education is as crucial as investing in hard- or software. As Peter mentioned:

We often start with describing the difference between digitalization and digitization. This is crucial to be understood by an organization’s management team. In addition, management must understand that digitalization is an enterprise initiative.

System Thinking is not just an engineering term; it will be a mandate for managing a company, a product and even a planet into the future.

Conclusion

This time a quote from Albert Einstein, supporting my PLM coaching intentions:

“Education is not the learning of facts

but the training of the mind to think.”

In my last post, I zoomed in on a preferred technical architecture for the future digital enterprise, drawing the conclusion that aiming for a single connected environment is a mission impossible. Instead, information will be stored in different platforms, both domain-oriented (PLM, ERP, CRM, MES, IoT) and value chain oriented (OEM, Supplier, Marketplace, Supply Chain hub).

In part 3, I posted seven statements that I will be discussing in this series. In this post, I will zoom in on point 2:

Data-driven does not mean we do not need any documents anymore. Read electronic files for documents. Likely, document sets will still be the interface to non-connected entities, suppliers, and regulatory bodies. These document sets can be considered a configuration baseline.

System of Record and System of Engagement

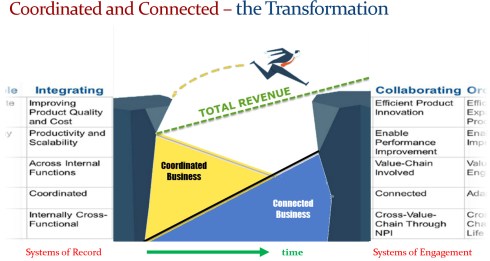

In the image below, a slide from 2016, I show a simplified view when discussing the difference between the current, coordinated approach and the future, connected approach. This picture might create the wrong impression that there are two different worlds – either you are document-driven, or you are data-driven.

In the follow-up to this presentation, I explained that companies need both environments in the future. The most efficient way of working for operations will be the infrastructure on the right side, the platform-based approach using connected information.

For traceability and disconnected information exchanges, the left side will be there for many years to come. Systems of Record are needed for data exchange with disconnected suppliers and disconnected regulatory bodies, and they are probably crucial for configuration management.

The System of Record will probably remain as a capability in every platform or cross-section of platform information. The Systems of Engagement will be the configured real-time environment for anyone involved in active company processes: not only ERP or MES, but all execution.

Introducing SysML and SML

This summer, I received a copy of Martin Eigner’s System Lifecycle Management book, which I am currently reading in my spare moments. I have always enjoyed Martin’s presentations. In many ways, we share similar ideas. Given his profession, Martin has spent more time than I have on the academic aspects of the product and system lifecycle. On the other hand, I have always been in the field, observing and trying to make sense of what I see and learn in a coherent approach. I am halfway through the book now, and I will certainly come back to it when I have finished.

A first impression: A great and interesting book for all. Martin and I share the same history of data management. Read all about this in his second chapter: Forty Years of Product Data Management

From PDM via PLM to SysLM is a chapter that everyone should read if they haven’t lived it themselves. It helps you to understand the past (learning from the past to understand the future). When I finish this series about the model-based and connected approach for products and systems, Martin’s book will be highly complementary given the content he describes.

There is one point on which I am looking forward to feedback from the readers of this blog.

Should we, in our everyday language, better differentiate between Product Lifecycle Management (PLM) and System Lifecycle Management (SysLM)?

In some customer situations, I talk on purpose about System Lifecycle Management to create the awareness that the company’s offering is more than an electro/mechanical product. Or ultimately, in a more circular economy, would we use the term Solution Lifecycle Management as not only hardware and software might be part of the value proposition?

Martin consistently uses the abbreviation SysLM, where I would prefer the TLA SLM. The problem we both have is that neither abbreviation is unique or explicit enough. SysLM creates confusion with SysML (for dyslexic people or fast readers). SLM already has many other, less valuable meanings: Simulation Lifecycle Management, Service Lifecycle Management or Software Lifecycle Management.

For the moment, I will use the abbreviation SLM, leaving open whether it stands for System Lifecycle Management or Solution Lifecycle Management.

How to implement both approaches?

In the long term, I predict that more than 80 percent of the activities related to SLM will take place in a data-driven, model-based environment due to the changing content of the solutions offered by companies.

A solution will be based on hardware, the solid part of the solution, for which we could apply a BOM-centric approach. We can see the BOM-centric approach in most current PLM implementations. It is the logical result of optimizing the product lifecycle management processes in a coordinated manner.

However, the most dynamic part of the solution will be covered by software and services. Changing software or services related to a solution has completely different dynamics than a hardware product.

Software and services implementations are associated with a data-driven, model-based approach.

The management of solutions, therefore, needs to be done in a connected manner. Using the BOM-centric approach to manage software and services would create a Kafkaesque overhead.

Depending on your company’s value proposition to the market, the challenge will be to find the right balance. For example, when you keep on selling “disconnected” hardware, there is probably no need to change your internal PLM processes that much.

However, when you are moving to a “connected” business model providing solutions (connected systems / Outcome-based services), you need to introduce new ways of working with a different go-to-market mindset. No longer linear, but iterative.

A McKinsey concept that I have promoted several times illustrates a potential path. Note that the article was written not with a PLM mindset but with a business mindset.

What about Configuration Management?

The different datasets defining a solution also challenge traditional configuration management processes. Configuration Management (CM) is well established in the aerospace & defense industry. In theory, proper configuration management should be the target of every industry to guarantee an appropriate performance, reduced risk and cost of fixing issues.

The challenge, however, is that configuration management processes are not designed to manage systems or solutions, where dynamic updates can be applied whether or not done by the customer.

This is a topic to solve for the modern Connected Car (system) or Connected Car Sharing (solution).

For that reason, I am inquisitive to learn more from Martijn Dullaart’s presentation at the upcoming PLM Roadmap/PDT conference. The title of his session: The next disruption please …

In his abstract for this session, Martijn writes:

From Paper to Digital Files brought many benefits but did not fundamentally impact how Configuration Management was and still is done. The process to go digital was accelerated because of the Covid-19 Pandemic. Forced to work remotely was the disruption that was needed to push everyone to go digital. But a bigger disruption to CM has already arrived. Going model-based will require us to reexamine why we need CM and how to apply it in a model-based environment. Where, from a Configuration Management perspective, a digital file still in many ways behaves like a paper document, a model is something different. What is the deliverable? How do you manage change in models? How do you manage ownership? How should CM adopt MBx, and what requirements to support CM should be considered in the successful implementation of MBx? It’s time to start unraveling these questions in search of answers.

One of the ideas I am currently exploring is that we need a new layer on top of the current configuration management processes extending the validation to software and services. For example, instead of describing every validated configuration, a company might implement the regular configuration management processes for its hardware.

Next, the systems or solutions in the field will report (or validate) their configuration against validation rules. A topic that requires a long discussion and more than this blog post, potentially a full conference.
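To make the idea of field systems checking themselves against validation rules a bit more tangible, here is a minimal Python sketch. Everything in it is an assumption for illustration only: the names `ReportedConfiguration`, `ValidationRule`, `min_version`, `service_requires` and `violated_rules`, the component and service names, and the version thresholds are hypothetical and do not come from any CM standard or product. The hardware baseline is simply carried along as an identifier, on the assumption that it is still released through the regular configuration management process.

```python
from dataclasses import dataclass
from typing import Callable


def version_tuple(version: str) -> tuple[int, ...]:
    """Turn a dotted version such as '2.10.1' into (2, 10, 1) for comparison."""
    return tuple(int(part) for part in version.split("."))


@dataclass(frozen=True)
class ReportedConfiguration:
    """What a system in the field reports about itself (hypothetical shape)."""
    hardware_baseline: str               # released through regular CM
    software_versions: dict[str, str]    # component name -> installed version
    active_services: frozenset[str]      # services currently enabled


@dataclass(frozen=True)
class ValidationRule:
    """A named predicate that a reported configuration must satisfy."""
    name: str
    check: Callable[[ReportedConfiguration], bool]


def min_version(component: str, minimum: str) -> Callable[[ReportedConfiguration], bool]:
    """Rule body: the component is installed at or above the minimum version."""
    def check(config: ReportedConfiguration) -> bool:
        installed = config.software_versions.get(component)
        return installed is not None and version_tuple(installed) >= version_tuple(minimum)
    return check


def service_requires(service: str, component: str, minimum: str) -> Callable[[ReportedConfiguration], bool]:
    """Rule body: if the service is active, the component must meet the minimum."""
    requirement = min_version(component, minimum)
    def check(config: ReportedConfiguration) -> bool:
        return service not in config.active_services or requirement(config)
    return check


def violated_rules(config: ReportedConfiguration, rules: list[ValidationRule]) -> list[str]:
    """Return the names of all rules the reported configuration does not satisfy."""
    return [rule.name for rule in rules if not rule.check(config)]


# Hypothetical rule set: a few rules instead of a list of every valid combination.
RULES = [
    ValidationRule("brake-controller >= 2.1", min_version("brake-controller", "2.1")),
    ValidationRule(
        "remote-parking needs sensor-fusion >= 3.0",
        service_requires("remote-parking", "sensor-fusion", "3.0"),
    ),
]

# Hypothetical report from one connected car in the field.
car = ReportedConfiguration(
    hardware_baseline="HW-B",
    software_versions={"brake-controller": "2.10.0", "sensor-fusion": "2.9"},
    active_services=frozenset({"remote-parking"}),
)

print(violated_rules(car, RULES))
# prints ['remote-parking needs sensor-fusion >= 3.0']
```

The point of the sketch is the shift in what gets managed: a small set of rules is maintained and versioned, and each system in the field is checked against them, rather than every allowed hardware, software and service combination being described up front.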

Therefore, I am looking forward to participating in the CIMdata/PDT FALL conference and picking up the discussions towards a data-driven, model-based future with the attendees. Besides CM, there are several other topics of great interest for the future. Have a look at the agenda here.

Conclusion

A data-driven and model-based infrastructure still needs to be combined with a coordinated, document-driven infrastructure. Where the focus will be depends on your company’s value proposition.

If we discuss hardware products, we should think PLM. When you deliver systems, you should perhaps talk SysLM (or SLM). And maybe it is time to define Solution Lifecycle Management as the term for the future.

Please, share your thoughts in the comments.

After the first episode of “The PLM Doctor is IN“, this time a question from Helena Gutierrez. Helena is one of the founders of SharePLM, a young and dynamic company focusing on providing education services based on your company’s needs, instead of leaving it to function-feature training.

I might come back to this topic later this year in the context of PLM and complementary domains/services.

Now sit back and enjoy.

Note: Due to a technical mistake, Helena’s facial expressions might give you a “CNN-like” impression, as the recording of her doctor visit was too short to cover the full response.

PLM and Startups – is this a good match?

Relevant links discussed in this video

Marc Halpern (Gartner): The PLM maturity table

VirtualDutchman: Digital PLM requires a Model-Based Enterprise

Conclusion

I hope you enjoyed the answer and look forward to your questions and comments. Let me know if you want to be an actor in one of the episodes.

The main rule: A single open question that is puzzling you related to PLM.
