My previous post introducing the concept of connected platforms created some positive feedback and some interesting questions. For example, the question from Maxime Gravel:

My previous post introducing the concept of connected platforms created some positive feedback and some interesting questions. For example, the question from Maxime Gravel:

Thank you, Jos, for the great blog. Where do you see Change Management tool fit in this new Platform ecosystem?

is one of the questions I try to understand too. You can see my short comment in the comments here. However, while discussing with other experts in the CM-domain, we should paint the path forward. Because if we cannot solve this type of question, the value of connected platforms will be disputable.

It is essential to realize that a digital transformation in the PLM domain is challenging. No company or vendor has the perfect blueprint available to provide an end-to-end answer for a connected enterprise. In addition, I assume it will take 10 – 20 years till we will be familiar with the concepts.

It is essential to realize that a digital transformation in the PLM domain is challenging. No company or vendor has the perfect blueprint available to provide an end-to-end answer for a connected enterprise. In addition, I assume it will take 10 – 20 years till we will be familiar with the concepts.

It takes a generation to move from drawings to 3D CAD. It will take another generation to move from a document-driven, linear process to data-driven, real-time collaboration in an iterative manner. Perhaps we can move faster, as the Automotive, Aerospace & Defense, and Industrial Equipment industries are not the most innovative industries at this time. Other industries or startups might lead us faster into the future.

Although I prefer discussing methodology, I believe before moving into that area, I need to clarify some more technical points before moving forward. My apologies for writing it in such a simple manner. This information should be accessible for the majority of readers.

Although I prefer discussing methodology, I believe before moving into that area, I need to clarify some more technical points before moving forward. My apologies for writing it in such a simple manner. This information should be accessible for the majority of readers.

What means data-driven?

I often mention a data-driven environment, but what do I mean precisely by that. For me, a data-driven environment means that all information is stored in a dataset that contains a single aspect of information in a standardized manner, so it becomes accessible by outside tools.

I often mention a data-driven environment, but what do I mean precisely by that. For me, a data-driven environment means that all information is stored in a dataset that contains a single aspect of information in a standardized manner, so it becomes accessible by outside tools.

A document is not a dataset, as often it includes a collection of datasets. Most of the time, the information it is exposed to is not standardized in such a manner a tool can read and interpret the exact content. We will see that a dataset needs an identifier, a classification, and a status.

An identifier to be able to create a connection between other datasets – traceability or, in modern words, a digital thread.

An identifier to be able to create a connection between other datasets – traceability or, in modern words, a digital thread.

A classification as the classification identifier will determine the type of information the dataset contains and potential a set of mandatory attributes

A status to understand if the dataset is stable or still in work.

Examples of a data-driven approach – the item

The most common dataset in the PLM world is probably the item (or part) in a Bill of Material. The identifier is the item number (ID + revision if revisions are used). Next, the classification will tell you the type of part it is.

The most common dataset in the PLM world is probably the item (or part) in a Bill of Material. The identifier is the item number (ID + revision if revisions are used). Next, the classification will tell you the type of part it is.

Part classification can be a topic on its own, and every industry has its taxonomy.

Finally, the status is used to identify if the dataset is shareable in the context of other information (released, in work, obsolete), allowing tools to expose only relevant information.

In a data-driven manner, a part can occur in several Bill of Materials – an example of a single definition consumed in other places.

In a data-driven manner, a part can occur in several Bill of Materials – an example of a single definition consumed in other places.

When the part information changes, the accountable person has to analyze the relations to the part, which is easy in a data-driven environment. It is normal to find this functionality in a PDM or ERP system.

When the part would change in a document-driven environment, the effort is much higher.

When the part would change in a document-driven environment, the effort is much higher.

First, all documents need to be identified where this part occurs. Then the impact of change needs to be managed in document versions, which will lead to other related changes if you want to keep the information correct.

Examples of a data-driven approach – the requirement

Another example illustrating the benefits of a data-driven approach is implementing requirements management, where requirements become individual datasets. Often a product specification can contain hundreds of requirements, addressing the needs of different stakeholders.

Another example illustrating the benefits of a data-driven approach is implementing requirements management, where requirements become individual datasets. Often a product specification can contain hundreds of requirements, addressing the needs of different stakeholders.

In addition, several combinations of requirements need to be handled by other disciplines, mechanical, electrical, software, quality and legal, for example.

As requirements need to be analyzed and ranked, a specification document would never be frozen. Trade-off analysis might lead to dropping or changing a single requirement. It is almost impossible to manage this all in a document, although many companies use Excel. The disadvantages of Excel are known, in particular in a dynamic environment.

As requirements need to be analyzed and ranked, a specification document would never be frozen. Trade-off analysis might lead to dropping or changing a single requirement. It is almost impossible to manage this all in a document, although many companies use Excel. The disadvantages of Excel are known, in particular in a dynamic environment.

The advantage of managing requirements as datasets is that they can be grouped. So, for example, they can be pushed to a supplier (as a specification).

Or requirements could be linked to test criteria and test cases, without the need to manage documents and make sure you work with them last updated document.

![]() As you will see, also requirements need to have an Identifier (to manage digital relations), a classification (to allow grouping) and a status (in work / released /dropped)

As you will see, also requirements need to have an Identifier (to manage digital relations), a classification (to allow grouping) and a status (in work / released /dropped)

Data-driven and Models – the 3D CAD model

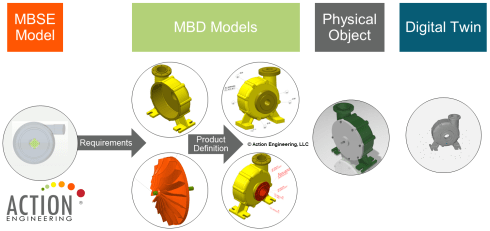

When I launched my series related to the model-based approach in 2018, the first comments I got came from people who believed that model-based equals the usage of 3D CAD models – see Model-based – the confusion. 3D Models are indeed an essential part of a model-based infrastructure, as the 3D model provides an unambiguous definition of the physical product. Just look at how most vendors depict the aspects of a virtual product using 3D (wireframe) models.

Although we use a 3D representation at each product lifecycle stage, most companies do not have a digital continuity for the 3D representation. Design models are often too heavy for visualization and field services support. The connection between engineering and manufacturing is usually based on drawings instead of annotated models.

I wrote about modern PLM and Model-Based Definition, supported by Jennifer Herron from Action Engineering – read the post PLM and Model-Based Definition here.

If your company wants to master a data-driven approach, this is one of the most accessible learning areas. You will discover that connecting engineering and manufacturing requires new technology, new ways of working and much more coordination between stakeholders.

Implementing Model-Based Definition is not an easy process. However, it is probably one of the best steps to get your digital transformation moving. The benefits of connected information between engineering and manufacturing have been discussed in the blog post PLM and Model-Based Definition

Essential to realize all these exciting capabilities linked to Industry 4.0 require a data-driven, model-based connection between engineering and manufacturing.

Essential to realize all these exciting capabilities linked to Industry 4.0 require a data-driven, model-based connection between engineering and manufacturing.

If this is not the case, the projected game-changers will not occur as they become too costly.

Data-driven and mathematical models

To manage complexity, we have learned that we have to describe the behavior in models to make logical decisions. This can be done in an abstract model, purely based on mathematical equations and relations. For example, suppose you look at climate models, weather models or COVID infections models.

In that case, we see they all lead to discussions from so-called experts that believe a model should be 100 % correct and any exception shows the model is wrong.

It is not that the model is wrong; the expectations are false.

For less complex systems and products, we also use models in the engineering domain. For example, logical models and behavior models are all descriptive models that allow people to analyze the behavior of a product.

For example, how software code impacts the product’s behavior. Usually, we speak about systems when software is involved, as the software will interact with the outside world.

For example, how software code impacts the product’s behavior. Usually, we speak about systems when software is involved, as the software will interact with the outside world.

There can be many models related to a product, and if you want to get an impression, look at this page from the SEBoK wiki: Types of Models. The current challenge is to keep the relations between these models by sharing parameters.

The sharable parameters then again should be datasets in a data-driven environment. Using standardized diagrams, like SysML or UML, enables the used objects in the diagram to become datasets.

The sharable parameters then again should be datasets in a data-driven environment. Using standardized diagrams, like SysML or UML, enables the used objects in the diagram to become datasets.

I will not dive further into the modeling details as I want to remain at a high level.

Essential to realize digital models should connect to a data-driven infrastructure by sharing relevant datasets.

What does data-driven imply?

I want to conclude this time with some statements to elaborate on further in upcoming posts and discussions

- Data-driven does not imply there needs to be a single environment, a single database that contains all information. Like I mentioned in my previous post, it will be about managing connected datasets in a federated manner. It is not anymore about owned the data; it is about access to reliable data.

- Data-driven does not mean we do not need any documents anymore. Read electronic files for documents. Likely, document sets will still be the interface to non-connected entities, suppliers, and regulatory bodies. These document sets can be considered a configuration baseline.

- Data-driven means that we need to manage data in a much more granular manner. We have to look different at data ownership. It becomes more data accountability per role as the data can be used and consumed throughout the product lifecycle.

- Data-driven means that you need to have an enterprise architecture, data governance and a master data management (MDM) approach. So far, the traditional PLM vendors have not been active in the MDM domain as they believe their proprietary data model is leading. Read also this interesting McKinsey article: How enterprise architects need to evolve to survive in a digital world

- A model-based approach with connected datasets seems to be the way forward. Managing data in documents will become inefficient as they cannot contribute to any digital accelerator, like applying algorithms. Artificial Intelligence relies on direct access to qualified data.

- I don’t believe in Low-Code platforms that provide ad-hoc solutions on demand. The ultimate result after several years might be again a new type of spaghetti. On the other hand, standardized interfaces and protocols will probably deliver higher, long-term benefits. Remember: Low code: A promising trend or a Pandora’s Box?

- Configuration Management requires a new approach. The current methodology is very much based on hardware products with labor-intensive change management. However, the world of software products has different configuration management and change procedure. Therefore, we need to merge them in a single framework. Unfortunately, this cannot be the BOM framework due to the dynamics in software changes. An interesting starting point for discussion can be found here: Configuration management of industrial products in PDM/PLM

Conclusion

Again, a long post, slowly moving into the future with many questions and points to discuss. Each of the seven points above could be a topic for another blog post, a further discussion and debate.

After my summer holiday break in August, I will follow up. I hope you will join me in this journey by commenting and contributing with your experiences and knowledge.

Discover more from Jos Voskuil's Weblog

Subscribe to get the latest posts sent to your email.

1 comment

Comments feed for this article

August 2, 2021 at 2:16 pm

jfvanoss

Jos,

Good blog article but I think your implications on data driven PLM leaves out some important points:

From the Greives Product Lifecycle Management book (which I highly recommend; https://www.amazon.com/Product-Lifecycle-Management-Generation-Thinking/dp/0071452303) one of the key things is Data singularity particularly in a MBD environment. I agree with your premise that there not be a single environment nor a single model but one does need to take care that the data or parameters are singular with derivatives as a dependency. The other 5 characteristics of PLM should also be considered:

Correspondence

Cohesion

Traceability

Reflectivity

Cued availability

The other concept that should be explored is as one goes toward MBD, a key aspect of this is to have the machines be able to read the models as opposed to just humans (as with the drawing based paradigm); this is also the basis of the digital twin. MBD is going to have people consider what is the configuration management rule for each attribute or feature of the model. In a Drawing based paradigm anything on a drawing followed one set of rules. with MBD things like a change in intellectual property or trade compliance legends would not require the same level of review, approval, or oversight; as a dimension or tolerance change. MBD will drive a dissection and mixed application of CM rules on aspects of the models.

Jim van Oss

jfvanoss@gmail.com

Thanks for your points, Jim, and I am fully aware that data-driven PLM has many points to consider. At this moment, I limit myself to writing a max of 1500 words per blog post, and still, there is so much more to say. Also, do not consider everything that I write as the ultimate truth. I am aware that we need to learn a lot to realize a future data-driven PLM environment. I am very much aware that my thinking is biased based on what I hear and see around me, not sure that this is the future. Perhaps in the future, a system would report its configuration. Through rules, we will be able to decide if upgrades are possible. There is so much more to explore, and I would be happy to discuss and learn your insights related to MBD.

Best regards, Jos

LikeLike