Every now and then the message pops up that there is a problem with PLM because it is not simple. Most of these messages come from new software vendors challenging the incumbents, others from frustrated people who tried to implement PLM directly and failed. The first group believes that technology will kill the complexity; the second group usually blames the vendors or implementers for the complexity, never themselves. Let’s zoom in on both types:

The Vendor pitch

Two weeks ago Oleg Shilovitsky published: Why complexity is killing PLM and what are future trajectories and opportunities? His post contained some quotes from my earlier posts (thanks for that, Oleg 🙂). Read the article, and you will understand that Oleg believes in technology, ending with the following conclusion/thoughts:

What is my conclusion? It is a time for new PLM leadership that can be built of transparency, trust and new technologies helping to build new intelligent and affordable systems for future manufacturing business. The old mantra about complex PLM should go away. Otherwise, PLM will shrink, retire and die. Just my thoughts…

It is a heartbreaking statement. I would claim every business uses these words to the outside world. Transparency as far as possible, as you do not want to throw your strategy on the table unless you are a philanthropist (or too wealthy to care).

Without trust, no long-term relationship can exist, and yes new technology can make some difference, but is current technology making PLM complex?

Vendors like Aras, Arena, FusePLM, Propel PLM all claim the new PLM space with modern technology – without strong support for 3D CAD/Model-Based approaches, as this creates complications. Other companies like OpenBOM, OnShape, and more are providing a piece of the contemporary PLM-puzzle.

Companies using their capabilities have to solve their PLM strategy/architecture themselves. Having worked for SmarTeam, the market leader in easy client-server PLM, I learned that an excellent first impression helps to sell PLM, but only to departments; it does not scale to the larger enterprise. Why?

PLM is about sharing (and connecting)

Let’s start with the most simplistic view of PLM. PLM is about sharing information along all the lifecycle phases. Current practices are based on a coordinated approach using documents/files. The future is about sharing information through connected data. My recent post: The Challenges of a connected ecosystem for PLM zooms in on these details.

Can sharing be solved by technology? Let’s look at the most common way of information sharing we currently use: email. Initially, everyone was excited, fast and easy! Long live technology!

Email and communities

Now companies start to realize that email did not solve the problems of sharing. Messages with half of the company in CC, long, unstructured stories, hidden, local archives with crucial information all have led to unproductive situations. Every person shares based on guidelines, personal (best) practices or instinct. And this is only office communication.

Product lifecycle management data and practices are many times more complicated, in particular if we talk about a modern connected product based on hardware and software, managed through the whole lifecycle – here customers expect quality.

I will change my opinion about PLM simplicity as soon as a reasonable, scalable solution for the email problem exists that solves the chaos.

Some companies thought that moving email to (social) communities would be the logical next step; see Why Atos Origin Is Striving To Be A Zero-Email Company. This was in 2011, and digital communities have reduced the number of emails.

Communities on LinkedIn flourished in the beginning; however, now they are also filled with a large amount of ambiguous content and irrelevant puzzles. These platforms, too, became polluted. The main reason: the concept of communities is again implemented as technology, easy to publish anything (read my blog 🙂 ) but not necessarily combined with an attitude change.

Learning to share – business transformation

Traditional PLM and modern data-driven PLM both have the challenge to offer an infrastructure that will be accepted by the end-users, supporting sharing and collaboration guaranteeing at the end that products have the right quality and the company remains profitable.

Versions, revisions, configuration management, and change management are a must to control cost and quality. All kinds of practices the end-user hates, who “just wants to do his/her job.”

(2010 post: PLM, CM and ALM – not sexy 😦 )

And this is precisely the challenge of PLM. The job to do is not an isolated activity. If you want your data to be reused or to remain discoverable five or ten years from now, there is extra work to do and thinking needed. Engineers are often under pressure to deliver their designs with enough quality for the next step. Investing time in enriching the information for downstream or future use is considered a waste of time, as the engineering department is not rewarded for that. Actually, the feeling is that productivity is dropping due to “extra” work.

The critical mindset needed for PLM is to redefine the job of individuals. Instead of optimizing the work for individuals, a company needs to focus on the optimized value streams. What is the best moment to spend time on data quality and enrichment? Once the data is created or further downstream when it is required?

Changing this behavior is called business transformation, as it requires a redesign of processes and responsibilities. PLM implementations always have a transformational aspect if done right.

The tool will perform the business transformation

At the PLM Innovation Munich 2012 conference, Autodesk announced their cloud-based PLM 360 solution. One of their lead customers explained to the audience that within two weeks they were up and running. The audience was in shock – see the image to the left. You can find the full presentation here on SlideShare: The PLM Identity Crisis

Easy configuration, even sitting at the airport, is a typical PLM Vendor-marketing sentence.

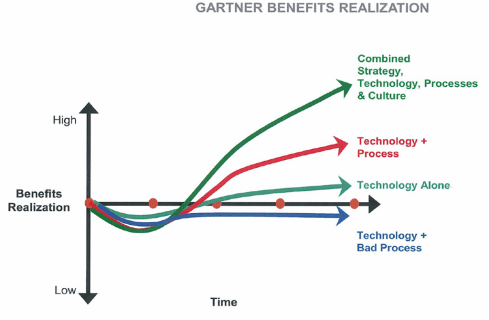

Too many PLM implementations have created frustration as the management believed the PLM-tools would transform the business. However, without a proper top-down redesign of the business, this is asking for failure.

The good news is that many past PLM implementations haven’t entirely failed because they have been implemented close to the existing processes, not really creating the value PLM could offer. They maintained the silos in a coordinated way. Similar to email – the PLM-system may give a technology boost, but five to ten years later the conclusion comes that fundamentally data quality is poor for downstream usage as it was not part of the easy scope.

Who does Change Management?

It is clear PLM-vendors make a living from selling software. They will not talk about the required Change Management as it complicates the deal. Change Management is a complex topic as it requires a combination of a vision and a restructuring of (a part of) the organization. It is impossible to outsource change management – as a company you need to own your strategy and change. You can hire strategy consultants and coaches, but it is a costly exercise if you do not own your transformation.

Therefore, it remains a “soft” topic depending on your company’s management and culture. The longer your company exists, the more challenging change management will be, as we can see from big American / European enterprises, where the individual opinion is strongest, compared to upcoming Asian companies (with less legacy).

Change Management in the context of digital transformation becomes even more critical as, for sure, existing processes and ways of working no longer apply for a digital and connected enterprise.

There is so much learning and rethinking to do for businesses before we can reap all the benefits PLM Vendors are showing. Go to the upcoming Hannover Messe in Germany, you will be impressed by what is (technically) possible – Artificial Intelligence / Virtual Twins and VR/AR. Next, ask around and look for companies that have been able to implement these capabilities and have transformed their business. I will be happy to visit them all.

Conclusions

PLM will never be pure as it requires individuals to work in a sharing mode, which does not come naturally. Digital transformation, where the sharing of information becomes connecting information, requires even more orchestration and less individualism. Culture and being able to create a vision and strategy related to sharing will be crucial – the technology is there.

“Technology for its own sake is a common trap. Don’t build your roadmap as a series of technology projects. Technology is only part of the story in digital transformation and often the least challenging one.”

(from “Leading Digital: Turning Technology into Business Transformation (English Edition)” by George Westerman, Didier Bonnet, Andrew McAfee)

In this post, I will explain the story behind my presentation at PI PLMx London. You can read my review of the event here: “The weekend after ……” and you can find my slides on SlideShare: HERE.

For me, this presentation is a conclusion of a thought process and collection of built-up experiences in the past three to five years, related to the challenges digital transformation is creating for PLM and what makes it hard to go through compared to other enterprise business domains. So here we go:

Digital transformation or disruption?

Slides 2 (top image) through 5 deal with the common challenges of business transformation. In nature, the transformation from a Caterpillar (old linear business) to a Butterfly (modern, agile, flexible) has the cocoon stage, where the transformation happens. In business, unfortunately, companies cannot afford a cocoon phase; it needs to be a parallel change.

Human beings are not good at change (slides 3 & 4), and the risk is that a new technology or a new business model will disrupt your business if you are too confident – see examples from the past. The disruption theory introduced by Clayton Christensen in his book The Innovator’s Dilemma is an excellent explanation of how this can happen. Some of my thoughts are in The Innovator’s dilemma and generation change (2015)

Although I know some PLM vendors consider themselves disruptors, I give them no chance in the PLM domain. The main reason: the existing PLM systems are so closely tied to the data they manage that switching from one PLM system to a more modern PLM system does not pay off. The data models are so diverse that it is better to stay with the existing environment.

What is clear for modern digital businesses is that if you could start from scratch or with almost no legacy, you can move forward faster than the rest. But only if supported by strong leadership, an (understandable) vision and relentless execution.

The impression of evolution

Marc Halpern’s slide presented at PDT 2015 is one of my favorite slides, as it maps business maturity to various characteristics of an organization, including the technologies used.

Slides 7 through 18 zoom in on the terms Coordinated and Connected and the implications they have for data, people and business. I have written about Coordinated and Connected recently: Coordinated or Connected (2018)

A coordinated approach: Delivering the right information at the right moment in the proper context is what current PLM implementations try to achieve, allowing people to use their own tools/systems as long as they deliver their information (documents/files) at the right moment as part of the lifecycle/delivery process. Very linear and not too complicated to implement, you would expect. However, it is difficult! Here we already see the challenge of just aligning a company to implement a horizontal flow of data. Usability of the PLM backbone and optimized silo thinking are the main inhibitors.

A connected approach: Providing actual information for anyone connected in any context. The slide on the left shows the mental picture we need to have for a digital enterprise. Information coming from various platforms needs to be shareable and connected in real-time, leading, in particular for PLM, to a switch from document-based deliverables to models and parameters that are connected.

Slide 15 has examples of some models. A data-driven approach creates different responsibilities as it is not about ownership anymore but about accountability.

The image above gives my PLM-twisted vision of the five core platforms for an enterprise. The number FIVE is interesting, as David Sherburne just published his Five Platforms that Enable Digital Transformation and in 2016 Gartner identified Five domains for the digital platform – more IT-twisted? But remember the purpose of digital transformation is: FIVE!

From Coordinated to Connected is Digital Transformation

Slides 19 through 27 further elaborate on the fact that for PLM there is no evolutionary approach possible, going from a Coordinated technology towards a Connected technology.

For three reasons: different types of data (document vs. database elements), different people (working in a connected environment requires modern digital skills) and different processes (the standard methods for mechanical-oriented PLM practices do not match the processes needed to deliver systems (hardware & software) with an incremental delivery process).

Due to the incompatibility of the data, more and more companies discover that a single PLM-instance cannot support both modes – staying with your existing document-oriented PLM-system does not give the capabilities needed for a model-driven approach. Migrating the data from a traditional PLM-environment towards a modern data-driven environment does not bring any value. The majority of the coordinated data is neither complete nor of the right quality to be used in a data-driven environment. Note: in a data-driven environment you do not have people interpreting the data – the data should be correct for automation / algorithms.

The overlay approach, mentioned several times in various PLM-blogs, is an intermediate solution. It provides traceability and visibility between different data sources (PLM, ALM, ERP, SCM, …). However, it does not make the information in these systems more accessible.

So the ultimate conclusion is: You need both approaches, and you need to learn to work in a hybrid environment!

What can various stakeholders do?

For the management of your company, it is crucial they understand the full impact of digital transformation. It is not about a sexy customer website, a service platform or Virtual Reality/Augmented Reality case for the shop floor or services. When these capabilities are created disconnected from the source (PLM), they will deliver inconsistencies in the long-term. The new digital baby becomes another silo in the organization. Real digital transformation comes from an end-to-end vision and implementation. The result of this end-to-end vision will be the understanding that there is a duality in data, in particular for the PLM domain.

Besides the technicalities, when going through a digital transformation, it is crucial for the management to share their vision in such a way that it becomes a motivational story, a myth, for all employees. As Yuval Harari, writer of the book Sapiens, suggested, we (Homo Sapiens) need an abstract story, a myth, to align a larger group of people to achieve a common abstract goal. I discussed this topic in my posts: PLM as a myth? (2017) and PLM – measurable or a myth?

Finally, the beauty of new digital businesses is that they are connected and can be monitored in real-time. That implies you can check the results continuously and adjust – scale or fail!

Consultants and strategists in a company should also take the responsibility to educate the management. And when advising on less transformational steps, like efficiency improvements: make sure you learn and understand model-based approaches and push for data governance initiatives. This will at least narrow the gap between coordinated and connected environments.

This was about strategy – now about execution:

For PLM vendors and implementers, understanding the incompatibility of data between the two PLM practices – coordinated and connected – will lead to different business models. Where traditionally a new PLM vendor started with a rip-and-replace of the earlier environment – no added value – now it is about starting a new parallel environment. This implies no more big replacement deals, but rather a long-term, strategic and parallel journey. For PLM vendors, being able to offer these two modes in parallel is crucial, as it will allow them to keep their customer base and grow. If they choose coordinated or connected only, a competitor will for sure work in parallel.

For PLM users: an organization should understand that they are its most valuable resource, while realizing these people cannot make a drastic change in their behavior. People will adapt within their capabilities, but do not expect a person who grew up in the traditional ways of working (linear / analogue) to become a successful worker in the new mode (agile / digital). Their value lies in transferring their skills and coaching new employees, but do not let them work in two modes. And when it comes to education: permanent education is crucial and should be scheduled – it is not about one or two trainings per year. If the perfect training existed, why do students go to school for several years? Why not give them the perfect PowerPoint twice a year?

Conclusions

I believe after three years of blogging about this theme I have made my point. Let’s observe and learn from what is happening in the field – I remain curious about, and focused on, proof points and new insights. This year I hope to share with you new ideas related to digital practices in all industries, of course all associated with the human side of what we once started to call PLM.

Note: Oleg Shilovitsky just published an interesting post this weekend: Why complexity is killing PLM and what are future trajectories and opportunities? Enough food for discussion. One point: the fact that consumers want simplicity does not mean PLM will become simple. Working in the context of other information is the challenge, and that is human behavior – team players are good at anticipating – big egos are not. To be continued…….

I was happy to take part in the PI PLMx London event last week. It was here and in the same hotel that this conference saw the light in 2011 – you can see my blog post from that event here: PLM and Innovation @ PLMINNOVATION 2011.

At that time, it was the first vendor-independent PLM conference after a long time, and it brought a lot of new people together to discuss their experience with PLM. Looking at the audience at that time, many of the companies that were there came back over the years, confirming the value this conference has brought to their PLM journey.

Similar to the PDT conference(s) – just announced for this year last week – here – the number of participants is diminishing.

Main hypotheses:

- the PLM-definition has become too vague. Going to a PLM conference does not guarantee that you will see the type of PLM discussions you expect?

- the average person is now much better informed about PLM thanks to the internet and social media (blogs, webinars, etc.). Therefore, the value retrieved from the PLM conference is not big enough any more?

- Digital Transformation is absorbing all the budget and attention downstream in the organization, so the need for and awareness of modern PLM no longer reach the attention of the management. E.g., a digital twin is sexier to discuss than PLM?

What do you think about the above three hypotheses – 1,2 and/or 3?

Back to the conference. The discussion related to PLM has changed over the past nine years. As I presented at PI from the beginning in 2011, here are the nine titles from my sessions:

2011 PLM – The missing link

2012 Making the case for PLM

2013 PLM loves Innovation

2014 PLM is changing

2015 The challenge of PLM upgrades

2016 The PLM identity crisis

2017 Digital Transformation affects PLM

2018 PLM transformation alongside Digitization

2019 The challenges of a connected Ecosystem for PLM

Where the focus started with justifying PLM, as well as a supporting infrastructure, to bring Innovation to the market, the first changes became visible in 2014. PLM was changing as more data-driven vendors appeared with new and modern (metadata) concepts and cloud, creating the discussion about what would be the next upgrade challenge.

The identity crisis reflected the introduction of software development / management combined with traditional (mechanical) PLM – how to deal with systems? Where are the best practices?

Then from 2017 on until now Digital Transformation and the impact on PLM and an organization became the themes to discuss – and we are not ready yet!

Now some of the highlights from the conference. As there were parallel sessions, I had to divide my attention – you can see the full agenda here:

How to Build Critical Architecture Models for the New Digital Economy

The conference started with a refreshing presentation from David Sherburne (Carestream) explaining their journey towards a digital economy. According to David, the main reason behind digitization is to save time, as he quoted Harvey Mackay, an American businessman and journalist:

Time is free, but it is priceless. You cannot own it, but you can use it. You can’t keep it, but you can spend it. Once you have lost it, you never can get it back

I tend to agree with this simplification as it makes the story easy to explain to everyone in your company. Probably I would add to that story that saving time also means less money spent on intermediate resources in a company, therefore, creating a two-sided competitive advantage.

David stated that today’s digital transformation is more about business change than technology and here I wholeheartedly agree. Once you can master the flow of data in your company, you can change and adapt your company’s business processes to be better connected to the customer and therefore deliver the value they expect (increases your competitive advantage).

Having new technology in place does not help you unless you change the way you work.

David introduced a new acronym ILM (Integrated Lifecycle Management) and I am sure some people will jump on this acronym.

David’s presentation contained an interesting view from the business-architectural point of view. An excellent start for the conference where various dimensions of digital transformation and PLM were explored.

Integrated PLM in the Chemical industry

Another interesting session was from Susanna Mäentausta (Kemira oy) with the title: “Increased speed to market, decreased risk of non-compliance through integrated PLM in Chemical industry.” I selected her session as from my past involvement with the process industry, I noticed that PLM adoption is very low in the process industry. Understanding Why and How they implemented PLM was interesting for me. Her PLM vision slide says it all:

There were two points that I liked a lot from her presentation, as I can confirm they are crucial.

- Although there was a justification for the implementation of PLM, there was no ROI calculation done upfront. I think this is crucial: as a company, you know you need to invest in PLM to stay competitive. Making an ROI-story is just consoling people with artificial numbers – success and numbers depend on the implementation, and Susanna confirmed that step 1 delivered enough value to be confident.

- There was end-to-end governance and a communication plan in place. Compared to PLM projects I know, this was done very extensively – full engagement of key users and on-going feedback – communicate, communicate, communicate. How often do we forget this in PLM projects?

Extracting More Value of PLM in an Engineer-to-Order Business

Sami Grönstrand & Helena Gutierrez presented as an experienced duo (they were active in PI PLMx Hamburg/Berlin before) – their current status and mission for PLM @ Outotec. As the title suggests, it was about how to extract more value from PLM in an Engineer-to-Order business.

What I liked is how they simplified their PLM targets from a complex landscape into three story-lines.

If you jump into all the details where PLM is contributing to your business, it might get too complicated for the audience involved. Therefore, they aligned their work around three value messages:

- Boosting sales, by focusing on modularization and encouraging the use of a product configurator, instead of developing a customer-specific solution every time.

- Accelerating project deliverables, again reaping the benefits of modularization, creating libraries and training the workforce in using this new environment (otherwise no use of new capabilities). The resulting reduction in engineering hours was quite significant.

- Creating New Business Models, by connecting all data using a joint plant structure with related equipment. By linking these data elements, an end-to-end digital continuity was established to support advanced service and support business models.

My conclusion from this session was again that if you want to motivate people on a PLM-journey it is not about the technical details, it is about the business benefits that drive these new ways of working.

Managing Product Variation in a Configure-To-Order Business

In the context of the previous session from Outotec, Björn Wilhemsson’s session addressed much the same topic: how to create as much variation as possible in your customer offering, while internally keeping the number of variants and parts manageable.

Björn, Alfa Laval’s OnePLM Programme Director, explained in detail the strategy they implemented to address these challenges. His presentation was very educational and could serve as a lesson for many of us related to product portfolio management and modularization.

Björn explained in detail the six measures to control variation, starting from a model-strategy / roadmap (thinking first), followed by building a modularized product architecture, and controlling and limiting the number of variants during your New Product Development process. Next, as Alfa Laval is in a Configure-To-Order business, Björn described the implementation of order-based and automated addition of pre-approved variants (not every variant needs to exist in detail before selling it), followed by the controlled introduction of additional variants and continuous analysis of quoted and sold variants (the power of a digital portfolio), as his summary slide shows below:

There are some analogies to discover between his mission and a PLM implementation. It is all about having the total picture in mind. Plan and plan, prepare step-by-step in detail and rely on teamwork – it is not a solo journey – and it is about reaching a top (deliverable phase) in the most efficient way.

The differences: PLM does not need world records, you need to go with the pace an organization can digest and understand. Although the initial PLM climate during implementation might be chilling too, I do not believe you have to suffer temperatures below 50 degrees Celsius.

During the morning, I was involved in several meetings and was therefore unfortunately unable to see some of the interesting sessions at that time. Hopefully they will be available later on PI.TV for review, as slides alone do not tell the full story. Although there are experts that can conclude and comment after seeing a single slide. You can read it here in my blog buddy Oleg Shilovitsky’s post: PLM Buzzword Detox. I think oversimplification is exactly creating the current problem we have in this world – people without knowledge become louder and more sure about their opinion compared to knowledgeable people who have spent time to understand the matter.

Have a look at the Dunning-Kruger effect here (if you take the time to understand).

PLM: Enabling the Future of a Smart and Connected Ecosystem

Peter Bilello from CIMdata shared his observations and guidance related to the current ongoing digital business revolution that is taking place thanks to internet and IoT technologies. It will fundamentally transform how people will work and interact between themselves and with machines. Survival in business will depend on how companies create Smart and Connected Ecosystems. Peter showed a slide from the 2015 World Economic Forum (below) which is still relevant:

Probably, depending on your business, some of these waves might have touched your organization already. What is clear is that the market leaders here will benefit the most – the ones owning a smart and connected ecosystem will be the winners shortly.

Next, Peter explained why PLM, and in particular the Product Innovation Platform, is crucial for a smart and connected enterprise. Shiny capabilities like a digital twin, the link between virtual and real, or virtual & augmented reality can only be achieved affordably and competitively if you invest in making the source digitally connected. The scope of a product innovation platform is much broader than traditional PLM. Also, the way information is stored differs – moving from documents (files) towards data (elements in a database). I fully agree with Peter’s opinion here that PLM is conceptually the Killer App for a Smart & Connected Ecosystem and this notion is spreading.

A recent article from Forbes in the category Leadership, Is Your Company Ready For Digital Product Life Cycle Management?, shows there is awareness. It is still very basic, and people are still confused about the difference between an electronic file (digital too?) and a digital definition of information.

The main point to remember here: Digital information can be accessed directly through a programming interface (API/Service) without the need to open a container (document) and search for this piece of information.
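
To make this point concrete, here is a minimal Python sketch under stated assumptions. The part number, the attribute names and the `get_attribute` helper are hypothetical; they only stand in for whatever API or service a real platform would offer.

```python
import re

# Document-style storage: the value is buried in free text inside a container.
document_text = """Specification sheet P-1001
Material: stainless steel
Mass: 2.4 kg
"""


def mass_from_document(text: str) -> float:
    """Open the container and search it for the piece of information."""
    match = re.search(r"Mass:\s*([\d.]+)\s*kg", text)
    if match is None:
        raise ValueError("mass not found in document")
    return float(match.group(1))


# Data-style storage: every piece of information is an addressable element.
part_data = {"P-1001": {"material": "stainless steel", "mass_kg": 2.4}}


def get_attribute(part_id: str, name: str):
    """Hypothetical stand-in for an API/service call (an assumption, not a real PLM API)."""
    return part_data[part_id][name]


mass_doc = mass_from_document(document_text)   # requires parsing knowledge of the layout
mass_api = get_attribute("P-1001", "mass_kg")  # direct access, no container to open
```

Both routes return the same value here, but the first one breaks as soon as the layout of the document changes, while the second one does not depend on any layout at all.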

Peter then zoomed in on some topics that companies need to investigate to reach a smart & connected ecosystem: Security (still a question hardly addressed in IoT/Digital Twin demos), and Standards and Interoperability (you cannot connect in all proprietary formats economically and sustainably). There are a lot of points to consider, and I want to close with Peter’s slide illustrating where most companies are in reality:

The Challenges of a Connected Ecosystem for PLM

You can already download my slides from SlideShare here: The Challenges of a Connected Ecosystem for PLM. I will explain my presentation in an upcoming blog post, as slides without a story might lead to the wrong interpretation, and we have already reached 2000 words. A few words to come.

How to Run a PLM Project Using the Agile Manifesto

Andrew Lodge, head of Engineering Systems at JCB, explained how applying the agile mindset to a PLM project can lead to the faster and more accurate results needed by the business. I am a full supporter of this approach: having worked in long, waterfall-type PLM implementations, I saw there was always the big crash and user dissatisfaction at the final delivery. Keeping the business involved at every step seems to be the solution. The issue I discovered here is that an agile implementation requires a lot of people, in particular from the business, to be heavily involved. Some companies do not understand this need and drop or reduce the business contribution to the minimum, killing the value of an agile approach.

Concluding

For me coming back to London for the PI PLMx event was very motivational. Where the past two, three conferences before in Germany might have led to little progress per year, this year, thanks to new attendees and inspiration, it became for me a vivid event, hopefully growing shortly. Networking and listening to your peers in business remains crucial to digest it all.

I promised to write some more down-to-earth posts this year related to current PLM practices. If you are eager for my ideas for the PLM future, come to PI PLMx in London on Feb 4th and 5th. Or later when reading the upcoming summary: The Weekend after PI PLMx London 2019.

ECO/ECR for Dummies was the most active post over the past five years. BOM-related posts, mainly related to the MBOM are second: Where is the MBOM? (2007) or The Importance of a PLM data model: EBOM – MBOM (2015) are the most popular posts.

This post is an update on current BOM practices: for most companies, these are the current focus while moving from CAD-centric to Item-centric.

Definitions

The EBOM (Engineering BOM) is a structure that reflects the engineering definition of a product, constructed using assemblies (functional groups of information) and parts (mechanical/electrical or software builds – specified by various specifications: 3D CAD, 2D CAD / Schematics or specifications). The EBOM reflects the full definition of a product as it should appear in the real world and is used inside an organization to align information coming from different disciplines.

Note: This differs from the past, where initially the EBOM often was considered as a structure derived from the Mechanical Part definition.

The MBOM (Manufacturing BOM) is a structure that reflects the way the product is manufactured. The levels in the MBOM reflect the processing steps needed to build the product. Based on the same EBOM, different MBOMs can exist: a company might decide that in one of its manufacturing plants it only assembles the product, whereas in another plant it has the tools to manufacture some of the parts itself, so the MBOM there will be different. See the example below:

In case your company delivers products to their customers, either in a B2B or B2C model, the communication towards the customer is based on a product ID, which could be the customer part number (in case of B2B) or a catalog part number. The EBOM/MBOM for this product might evolve over time as long as the product specifications remain the same and are met.
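
The relation between one EBOM and several plant-specific MBOMs can be sketched in a few lines of Python. This is only an illustration: the part numbers, the two plants and the `BomLine` / `leaf_parts` names are made up for this example and do not come from any PLM system.

```python
from dataclasses import dataclass, field


@dataclass
class BomLine:
    part_number: str
    quantity: float = 1
    children: list["BomLine"] = field(default_factory=list)


def leaf_parts(line: BomLine) -> set[str]:
    """Collect the part numbers at the bottom of a structure."""
    if not line.children:
        return {line.part_number}
    found: set[str] = set()
    for child in line.children:
        found |= leaf_parts(child)
    return found


# Engineering definition: what the product consists of.
ebom = BomLine("PUMP-100", children=[
    BomLine("HOUSING-10"), BomLine("IMPELLER-20"), BomLine("MOTOR-30"),
])

# Plant A only assembles: an extra level reflects the assembly step.
mbom_plant_a = BomLine("PUMP-100", children=[
    BomLine("SUBASSY-A1", children=[BomLine("HOUSING-10"), BomLine("IMPELLER-20")]),
    BomLine("MOTOR-30"),
])

# Plant B manufactures the housing itself, so it consumes a casting instead.
mbom_plant_b = BomLine("PUMP-100", children=[
    BomLine("HOUSING-10", children=[BomLine("CASTING-11")]),
    BomLine("IMPELLER-20"),
    BomLine("MOTOR-30"),
])
```

All three structures carry the same top-level product ID, which is what the customer sees. Plant A consumes exactly the parts the EBOM names, while plant B consumes a casting instead of a finished housing.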

Does every company need an EBOM and MBOM?

Historically most companies have worked with a single BOM definition, due to the following reasons:

- In an Engineering-To-Order or Build-to-Print process, the company’s target is to manufacture a product at a single site – most of the time one product for ETO, or one definition for many products in BTP. This means the BOM definition is already done with manufacturing resources and suppliers in mind.

- The PLM (or PDM) system only contained the engineering definition. Manufacturing engineers build the manufacturing definition manually in the local ERP-system by typing part numbers again and structuring the BOM according to the manufacturing process for that plant.

Where the first point makes sense, there might still be situations where a separate EBOM and MBOM are beneficial, most of the time when the Build-to-Print activity is long-lasting and allows the company to optimize the manufacturing process without affecting the engineering definition.

The second point describes a situation you will find at many companies, as historically the ERP-system was the only enterprise IT-system, and this is where part numbers were registered.

Creating a PLM-ERP connection is usually the last activity a company does: first optimize the silos, and only then think about how to connect them (unfortunately). The different structures on the left and right lead to a lot of complex programming by services-eager PLM implementers.

Meanwhile, PLM-systems have evolved in the past 15 years, and a full BOM-centric approach from EBOM to MBOM is supported, although every PLM vendor will have its own way of solving digital connectivity between BOM-items.

You should consider an EBOM / MBOM approach in the following situations:

- In case you have a modular product, the definition of the EBOM and MBOM can be managed at the module level. As modules might be manufactured at different locations, only the MBOM of the module may vary per plant. Therefore, there are fewer dependencies on the EBOM or the total EBOM of a product.

- In case a company has multiple manufacturing plants or is aiming to outsource manufacturing, the PLM-system is the only central place where all product knowledge can reside and be pushed to the local ERPs when needed for potential localization. Localization means, for me, using parts that are sourced in the region, still based on the part specification coming from the EBOM.

Are EBOM and MBOM parts different?

There is no real academic answer to this question. Let’s see some of the options, and you can decide yourself.

An EBOM part is specified by documents – this can be a document, a 3D model combined with 2D drawings or a 3D annotated model. In case this EBOM part is a standard part, the specification may come from a part manufacturer through their catalog.

As this part manufacturer will not be the only one manufacturing the standard part, you will see that this EBOM-part potentially has several MBOM-parts to be resolved when manufacturing is planned. The terms Approved Manufacturer List (AML), defined by Engineering, and Approved Vendor List (AVL), based on the AML but controlled by Purchasing, apply here.
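
As a rough illustration of how an AML and an AVL relate, the Python sketch below resolves one EBOM-part into candidate manufacturer parts. The part numbers and manufacturer names are invented, and treating the AVL as a simple filter on the AML is a simplifying assumption; real systems will model this in more detail.

```python
# AML: defined by Engineering. One EBOM-part maps to several manufacturer parts
# that all meet the specification. (All names below are hypothetical.)
aml = {
    "E-BOLT-M8": [
        ("Maker A", "A-8812"),
        ("Maker B", "B-M8-30"),
        ("Maker C", "C-0042"),
    ],
}

# Controlled by Purchasing: manufacturers the company is willing to buy from.
approved_vendors = {"Maker A", "Maker C"}


def avl(ebom_part: str) -> list[tuple[str, str]]:
    """AVL here = the AML entries that Purchasing has also approved."""
    return [(maker, pn) for maker, pn in aml[ebom_part] if maker in approved_vendors]


candidates = avl("E-BOLT-M8")
```

When manufacturing is planned, only the entries in `candidates` remain as possible MBOM-parts for this EBOM-part.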

Now consider the case where the EBOM-part is not a standard part. In that case, there are two options:

- The EBOM-part holds only the specifications and the EBOM-part will be linked to one or more MBOM-parts (depending on manufacturing locations). In case the part is outsourced, your company might not even be interested in the MBOM-part, as it is up to the supplier to manufacture it according to specifications.

- The EBOM-part describes a specification but does not exist as an individual item. For example, a 1.2-meter hydraulic tube, or the part should be painted (not specified in the EBOM with quantity) or cut out of steel with a certain thickness. In the MBOM you would find the materials in detailed sizes and related operations.

Do we need an EBOM and MBOM structure?

In the traditional PLM implementations, we often see the evolution of BOM-like structures along the enterprise: Concept-BOM, Engineering BOM, Manufacturing BOM, Service BOM, and others. Each structure could consist of individual objects in the PLM-system. This is an easy to understand approach, where the challenge is to keep these structures up-to-date and synced with the right information.

See below an old image from Dassault Systemes ENOVIA in 2014:

Another more granular approach is to have connected data sets that all have their unique properties: by combining some of them you have the EBOM, and by combining other elements, you have the MBOM. This approach is used in data platforms where the target is to have a “single version of the truth” for each piece of information, and you create an EBOM or MBOM-view with related functionality through apps. My blog-buddy Oleg Shilovitsky from OpenBOM has been promoting this approach for several years – have a look here.
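
A minimal Python sketch of this granular idea, under stated assumptions: there is one pool of relations, each stored once and tagged with the views it belongs to, and an EBOM or MBOM is computed on request. The part numbers, the tagging scheme and the `bom_view` function are hypothetical and not taken from any particular platform.

```python
# One pool of relations; each relation is stored once and tagged with its views.
relations = [
    # (parent, child, quantity, views this relation belongs to)
    ("PUMP-100", "HOUSING-10", 1, {"EBOM", "MBOM"}),
    ("PUMP-100", "MOTOR-30", 1, {"EBOM", "MBOM"}),
    ("HOUSING-10", "CASTING-11", 1, {"MBOM"}),
]


def bom_view(root: str, view: str) -> list[tuple[int, str, int]]:
    """Compute an indented structure (depth, part, quantity) for one view."""
    lines: list[tuple[int, str, int]] = []

    def walk(parent: str, depth: int) -> None:
        for rel_parent, child, qty, views in relations:
            if rel_parent == parent and view in views:
                lines.append((depth, child, qty))
                walk(child, depth + 1)

    walk(root, 1)
    return lines


ebom_view = bom_view("PUMP-100", "EBOM")
mbom_view = bom_view("PUMP-100", "MBOM")
```

Nothing is copied or synced between the two views: a change to a shared relation shows up in both the next time they are computed.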

So the answer to the question of whether you need multiple BOM structures is:

Probably YES currently but not anymore in the future once you are data-driven.

Ultimately we want to be data-driven to allow extremely efficient data handling and speed of operations (no more conversions / no more syncing to be controlled by human resources – highly automated and intelligent is the target).

Conclusion

Even this post is just showing the tip of the iceberg for a full EBOM-MBOM discussion. It is clear that this discussion now is more mature and understood by most PLM-consultants as compared to 5 – 10 years ago.

It is a necessary step to understand the future, which I hope to explain during the upcoming PI PLMx event in London or whenever we meet.

Happy New Year to all of you. It has been a period of reflection – first disconnected from PLM and then again slowly getting my PLM-twisted brain back to work. And although my mind is already running in a “What’s next mode,” I would like to share with you first some of the lessons learned from last year.

Model-Based confusion

Most of the topics related to model-based approaches, from systems engineering, through engineering and manufacturing, leading to digital twins, are very futuristic; an end-to-end model-driven enterprise is not in reach for most of us. There are several reasons for that:

- Not every company has this need or has discovered the need to have digital continuity and almost real-time availability of information end-to-end. As a result, there are still slow businesses.

- Understanding the needs for a model-driven enterprise takes time and effort. There are no available solutions on the market yet, so there is no baseline available to discuss and compare. For sure, solutions will be a combination of connected capabilities based on model-driven paradigms. It will take time to develop and understand the benefits.

- Model-Based approaches are not just a marketing invention, as some disbelievers suggest. In particular, in Asia, where people deal with less legacy (and skepticism) than our western engineering/manufacturing world, companies embrace these concepts faster. One more time, if you didn’t look at Zhang Xin Guo’s keynote speech at the INCOSE annual symposium last year, watch the speech below and learn to understand the needed paradigm change. Is it a coincidence that a Chinese speaker gives the best explanation so far?

Technology hype?

The (Industrial) Internet of Things, Machine Learning, Artificial Intelligence, Augmented Reality, Virtual Reality, and Digital Twin appear in the news. But, most of the time, they are associated with tremendous opportunities, changing business models and potentially high profits. For example, read this quote from Jim Heppelmann after the acquisition of Frustum:

“PTC is pushing the boundaries of innovation with this acquisition,” said Jim Heppelmann, president and CEO, PTC. “Creo is core to PTC’s overall strategy, and the embedded capabilities from ANSYS and, later, Frustum will elevate Creo to a leading position in the world of design and simulation. With breakthrough new technologies such as AR/VR, high-performance computing, IoT, AI, and additive manufacturing entering the picture, the CAD industry is going through a renaissance period, and PTC is committed to leading the way.”

Of course, vendors have to sell their capabilities; the major challenge for companies is to make a valuable business from all these capabilities (if possible). In that context, I was happy to read one of Joe Barkai’s recent posts: When the Party is Over — Industrial Internet of Things Industry Snapshot and Predictions, where he puts the IIoT hype in a business perspective.

Can technology change business?

If you look back over the past 50 years, various changes in technology have affected the world of PLM. This assumes the current definition of PLM could already have existed in 1970, even without a PLM system – PLM was invented in 1999. First, there was the journey from drawing boards to electronic drawings and 3D models. Next, global connectivity and an affordable infrastructure boosted product development collaboration. Still, many organizations work in a siloed way, inside the organization and outside with their suppliers. Still, the 2D Drawing is the legal information carrier, combined with additional specification documents. Changing the paradigm to a connected 3D Model is already possible; however, it is not pushed or implemented by manufacturing companies, as it requires a change in ways of working (people) and related processes.

A business change has to come from the top of an organization, which is the challenge for PLM. What is the value of collaboration? How do you measure innovation capabilities? How do you impact time to market?

Time to market might be understood at the board level. There is an existing NPI (New Product Introduction) process in place, and by analyzing the process, fixing bottlenecks, an efficiency gain can be achieved. Every innovation goes through the same process. This is where traditional PLM plays a significant role as it is partly measurable.

But how to improve collaboration or innovation speed?

At the board level, often numbers rule and based on numbers, decisions are taken. This mindset often leads to narrow thinking. Adjustments are made by prioritizing one topic above the other. Complex topics like the needed change of business and business models are not really understood and passed to the middle management (who will kill it to guarantee the status quo).

Organizations need a myth!

One of the significant challenges for PLM is that there is no end-to-end belief in its purpose. According to Yuval Noah Harari in his book Sapiens, the primary reason Homo sapiens became the dominant species on earth is that they can collaborate in large groups driven by abstract themes. It can be religion. A lot is done on behalf of faith. It can also be a charismatic CEO who can create the myth – think about Steve Jobs, Elon Musk.

Organizations that want to go through a digital transformation need to create their myth, and delivering product/business innovation through new ways of working should be part of that. I wrote about myths in the context of PLM in the past: PLM as a myth? I believe the domain of PLM is still too small for a myth. However, it is a crucial part of digital transformation.

In the upcoming year, I will focus again on how organizations can benefit from new technologies. But, again, not focusing on WHAT is possible, but mainly on WHY and HOW – all based on the myth that we can do so much better as organizations when innovation and customer focus are part of everyone’s empowered mind.
