You are currently browsing the monthly archive for November 2025.

Last week, I wrote about the first day of the crowded PLM Roadmap/PDT Europe conference.

Last week, I wrote about the first day of the crowded PLM Roadmap/PDT Europe conference.

You can still read my post here in case you missed it: A very long week after PLM Roadmap / PDT Europe 2025

My conclusion from that post was that day 1 was a challenging day if you are a newbie in the domain of PLM and data-driven practices. We discussed and learned about relevant standards that support a digital enterprise, as well as the need for ontologies and semantic models to give data meaning and serve as a foundation for potential AI tools and use cases.

My conclusion from that post was that day 1 was a challenging day if you are a newbie in the domain of PLM and data-driven practices. We discussed and learned about relevant standards that support a digital enterprise, as well as the need for ontologies and semantic models to give data meaning and serve as a foundation for potential AI tools and use cases.

This post will focus on the other aspects of product lifecycle management – the evolving methodologies and the human side.

Note: I try to avoid the abbreviation PLM, as many of us in the field associate PLM with a system, where, for me, the system is more of an IT solution, where the strategy and practices are best named as product lifecycle management.

Note: I try to avoid the abbreviation PLM, as many of us in the field associate PLM with a system, where, for me, the system is more of an IT solution, where the strategy and practices are best named as product lifecycle management.

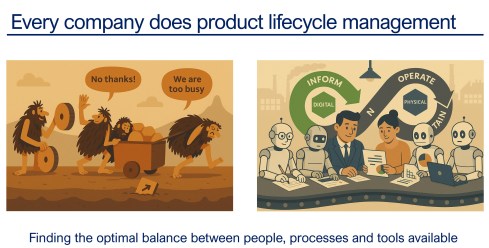

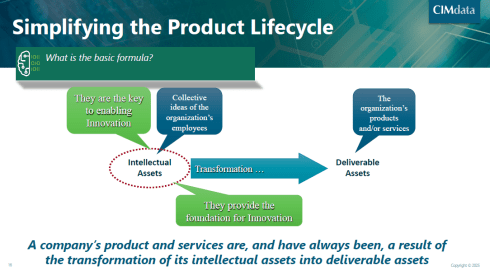

And as a reminder, I used the image above in other conversations. Every company does product lifecycle management; only the number of people, their processes, or their tools might differ. As Peter Billelo mentioned in his opening speech, the products are why the company exists.

Unlocking Efficiency with Model-Based Definition

![]() Day 2 started energetically with Dennys Gomes‘ keynote, which introduced model-based definition (MBD) at Vestas, a world-leading OEM for wind turbines.

Day 2 started energetically with Dennys Gomes‘ keynote, which introduced model-based definition (MBD) at Vestas, a world-leading OEM for wind turbines.

Personally, I consider MBD as one of the stepping stones to learning and mastering a model-based enterprise, although do not be confused by the term “model”. In MBD, we use the 3D CAD model as the source to manage and support a data-driven connection among engineering, manufacturing, and suppliers. The business benefits are clear, as reported by companies that follow this approach.

However, it also involves changes in technology, methodology, skills, and even contractual relations.

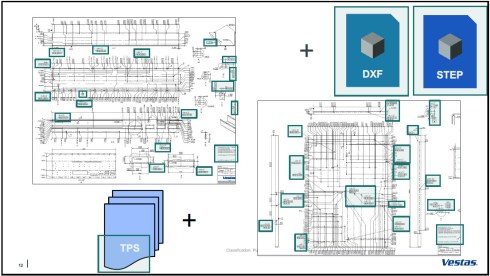

Dennys started sharing the analysis they conducted on the amount of information in current manufacturing drawings. The image below shows that only the green marker information was used, so the time and effort spent creating the drawings were wasted.

It was an opportunity to explore model-based definition, and the team ran several pilots to learn how to handle MBD, improve their skills, methodologies, and tool usage. As mentioned before, it is a profound change to move from coordinated to connected ways of working; it does not happen by simply installing a new tool.

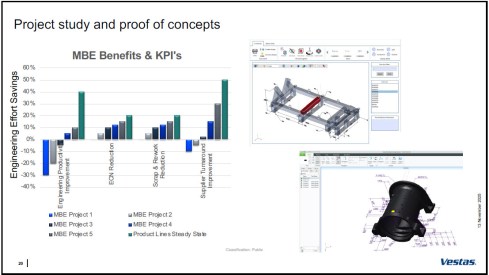

The image above shows the learning phases and the ultimate benefits accomplished. Besides moving to a model-based definition of the information, Dennys mentioned they used the opportunity to simplify and automate the generation of the information.

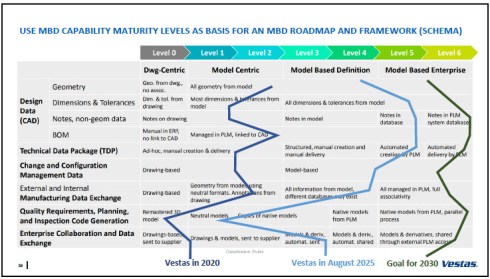

Vestas is on a clear path, and it is interesting to see their ambition in the MBD roadmap below.

An inspirational story, hopefully motivating other companies to make this first step to a model-based enterprise. Perhaps difficult at the beginning from the people’s perspective, but as a business, it is a profitable and required direction.

Bridging The Gap Between IT and Business

It was a great pleasure to listen again to Peter Vind from Siemens Energy, who first explained to the audience how to position the role of an enterprise architect in a company compared to society. He mentioned he has to deal with the unicorns at the C-level, who, like politicians in a city, sometimes have the most “innovative” ideas – can they be realized?

It was a great pleasure to listen again to Peter Vind from Siemens Energy, who first explained to the audience how to position the role of an enterprise architect in a company compared to society. He mentioned he has to deal with the unicorns at the C-level, who, like politicians in a city, sometimes have the most “innovative” ideas – can they be realized?

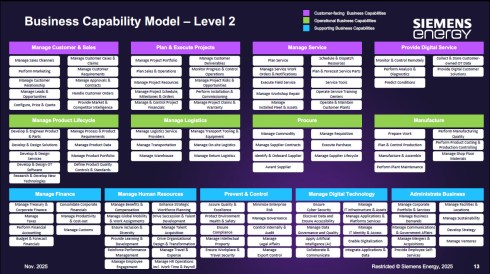

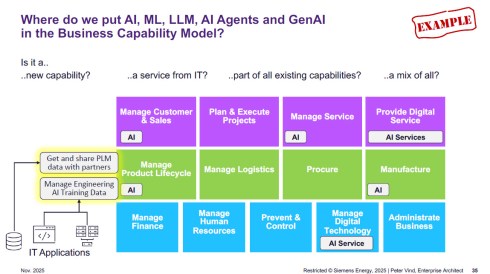

To answer these questions, Peter is referring to the Business Capability Model (BCM) he uses as an Enterprise Architect.

Business Capabilities define ‘what’ a company needs to do to execute its strategy, are structured into logical clusters, and should be the foundation for the enterprise, on which both IT and business can come to a common approach.

The detailed image above is worth studying if you are interested in the levels and the mappings of the capabilities. The BCM approach was beneficial when the company became disconnected from Siemens AG, enabling it to rationalize its application portfolio.

Next, Peter zoomed in on some of the examples of how a BCM and structured application portfolio management can help to rationalize the AI hype/demand – where is it applicable, where does AI have impact – and as he illustrated, it is not that simple. With the BCM, you have a base for further analysis.

Other future-relevant topics he shared included how to address the introduction of the digital product passport and how the BCM methodology supports the shift in business models toward a modern “Power-as-a-Service” model.

He concludes that having a Business Capability Model gives you a stable foundation for managing your enterprise architecture now and into the future. The BCM complements other methodologies that connect business strategy to (IT) execution. See also my 2024 post: Don’t use the P** word! – 5 lessons learned.

He concludes that having a Business Capability Model gives you a stable foundation for managing your enterprise architecture now and into the future. The BCM complements other methodologies that connect business strategy to (IT) execution. See also my 2024 post: Don’t use the P** word! – 5 lessons learned.

Holistic PLM in Action.

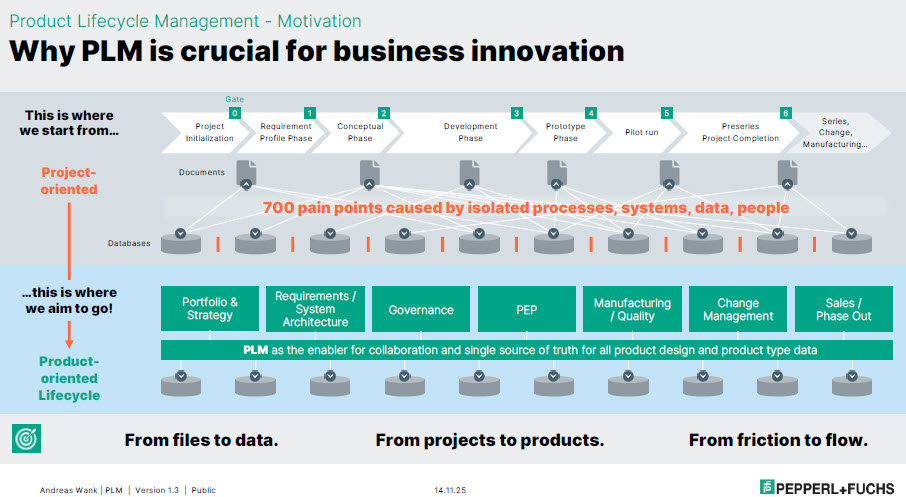

or companies struggling with their digital transformation in the PLM domain, Andreas Wank, Head of Smart Innovation at Pepperl+Fuchs SE, shared his journey so far. All the essential aspects of such a transformation were mentioned. Pepperl+Fuchs has a portfolio of approximately 15,000 products that combine hardware and software.

or companies struggling with their digital transformation in the PLM domain, Andreas Wank, Head of Smart Innovation at Pepperl+Fuchs SE, shared his journey so far. All the essential aspects of such a transformation were mentioned. Pepperl+Fuchs has a portfolio of approximately 15,000 products that combine hardware and software.

It started with the WHY. With such a massive portfolio, business innovation is under pressure without a PLM infrastructure. Too many changes, fragmented data, no single source of truth, and siloed ways of working lead to much rework, errors, and iterations that keep the company busy while missing the global value drivers.

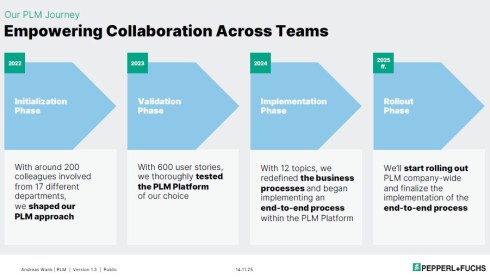

Next, the journey!

The above image is an excellent way to communicate the why, what, and how to a broader audience. All the main messages are in the image, which helps people align with them.

The first phase of the project, creating digital continuity, is also an excellent example of digital transformation in traditional document-driven enterprises. From files to data align with the From Coordinated To Connected theme.

Next, the focus was to describe these new ways of working with all stakeholders involved before starting the selection and implementation of PLM tools. This approach is so crucial, as one of my big lessons learned from the past is: “Never start a PLM implementation in R&D.”

If you start in R&D, the priority shifts away from the easy flow of data between all stakeholders; it becomes an R&D System that others will have to live with.

You never get a second, first impression!

Pepperl+Fuchs spends a long time validating its PLM selection – something you might only see in privately owned companies that are not driven by shareholder demands, but take the time to prepare and understand their next move.

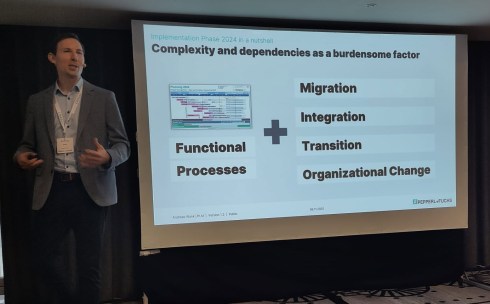

As Andreas also explained, it is not only about the functional processes. As the image shows, migration (often the elephant in the room) and integration with the other enterprise systems also need to be considered. And all of this is combined with managing the transition and the necessary organizational change.

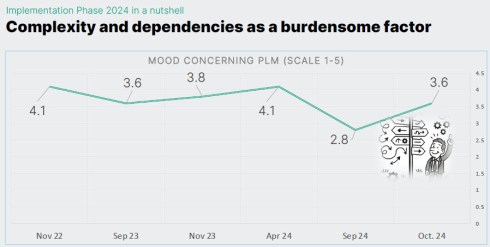

Andreas shared some best practices illustrating the focus on the transition and human aspects. They have implemented a regular survey to measure the PLM mood in the company. And when the mood went radical down on Sept 24, from 4.1 to 2.8 on a scale of 1 to 5, it was time to act.

They used one week at a separate location, where 30 of his colleagues worked on the reported issues in one room, leading to 70 decisions that week. And the result was measurable, as shown in the image below.

Andreas’s story was such a perfect fit for the discussions we have in the Share PLM podcast series that we asked him to tell it in more detail, also for those who have missed it. Subscribe and stay tuned for the podcast, coming soon.

Andreas’s story was such a perfect fit for the discussions we have in the Share PLM podcast series that we asked him to tell it in more detail, also for those who have missed it. Subscribe and stay tuned for the podcast, coming soon.

Trust, Small Changes, and Transformation.

Ashwath Sooriyanarayanan and Sofia Lindgren, both active at the corporate level in the PLM domain at Assa Abloy, came with an interesting story about their PLM lessons learned.

Ashwath Sooriyanarayanan and Sofia Lindgren, both active at the corporate level in the PLM domain at Assa Abloy, came with an interesting story about their PLM lessons learned.

To understand their story, it is essential to comprehend Assa Abloy as a special company, as the image below explains. With over 1000 sites, 200 production facilities, and, last year, on average every two weeks, a new acquisition, it is hard to standardize the company, driven by a corporate organization.

However, this was precisely what Assa Abloy has been trying to do over the past few years. Working towards a single PLM system, with generic processes for all, spending a lot of time integrating and migrating data from the different entities became a mission impossible.

To increase user acceptance, they fell into the trap of customizing the system ever more to meet many user demands. A dead end, as many other companies have probably experienced similarly.

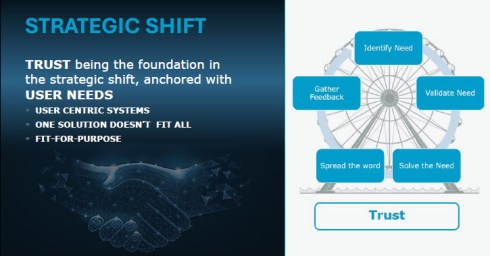

And then they came with a strategic shift. Instead of holding on to the past and the money invested in technology, they shifted to the human side.

The PLM group became a trusted organisation supporting the individual entities. Instead of telling them what to do (Top-Down), they talked with the local business and provided standardized PLM knowledge and capabilities where needed (Bottom-Up).

This “modular” approach made the PLM group the trusted partner of the individual business. A unique approach, making us realize that the human aspect remains part of implementing PLM

Humans cannot be transformed

Given the length of this blog post, I will not spend too much text on my closing presentation at the conference. After a technical start on DAY 1, we gradually moved to broader, human-related topics in the latter part.

Given the length of this blog post, I will not spend too much text on my closing presentation at the conference. After a technical start on DAY 1, we gradually moved to broader, human-related topics in the latter part.

You can find my presentation here on SlideShare as usual, and perhaps the best summary from my session was given in this post from Paul Comis. Enjoy his conclusion.

Conclusion

Two and a half intensive days in Paris again at the PLM Roadmap / PDT Europe conference, where some of the crucial aspects of PLM were shared in detail. The value of the conference lies in the stories and discussions with the participants. Only slides do not provide enough education. You need to be curious and active to discover the best perspective.

For those celebrating: Wishing you a wonderful Thanksgiving!

For those of you following my blog over the years, there is, every time after the PLM Roadmap PDT Europe conference, one or two blog posts, where the first starts with “The weekend after ….”

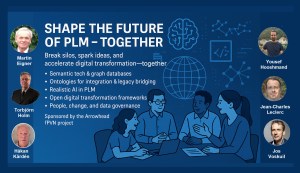

This time, November has been a hectic week for me, with first this engaging workshop “Shape the future of PLM – together” – you can read about it in my blog post or the latest post from Arrowhead fPVN, the sponsor of the workshop.

This time, November has been a hectic week for me, with first this engaging workshop “Shape the future of PLM – together” – you can read about it in my blog post or the latest post from Arrowhead fPVN, the sponsor of the workshop.

Last week, I celebrated with the core team from the PLM Green Global Alliance our 5th anniversary, during which we discussed sustainability in action. The term sustainability is currently under the radar, but if you want to learn what is happening, read this post with a link to the webinar recording.

Last week, I celebrated with the core team from the PLM Green Global Alliance our 5th anniversary, during which we discussed sustainability in action. The term sustainability is currently under the radar, but if you want to learn what is happening, read this post with a link to the webinar recording.

Last week, I was also active at the PTC/User Benelux conference, where I had many interesting discussions about PTC’s strategy and portfolio. A big and well-organized event in the town where I grew up in the world of teaching and data management.

Last week, I was also active at the PTC/User Benelux conference, where I had many interesting discussions about PTC’s strategy and portfolio. A big and well-organized event in the town where I grew up in the world of teaching and data management.

And now it is time for the PLM roadmap / PDT conference review

The conference

The conference is my favorite technical conference 😉 for learning what is happening in the field. Over the years, we have seen reports from the Aerospace & Defense PLM Action Groups, which systematically work on various themes related to a digital enterprise. The usage of standards, MBSE, Supplier Collaboration, Digital Thread & Digital Twin are all topics discussed.

The conference is my favorite technical conference 😉 for learning what is happening in the field. Over the years, we have seen reports from the Aerospace & Defense PLM Action Groups, which systematically work on various themes related to a digital enterprise. The usage of standards, MBSE, Supplier Collaboration, Digital Thread & Digital Twin are all topics discussed.

This time, the conference was sold out with 150+ attendees, just fitting in the conference space, and the two-day program started with a challenging day 1 of advanced topics, and on day 2 we saw more company experiences.

Combined with the traditional dinner in the middle, it was again a great networking event to charge the brain. We still need the brain besides AI. Some of the highlights of day 1 in this post.

Combined with the traditional dinner in the middle, it was again a great networking event to charge the brain. We still need the brain besides AI. Some of the highlights of day 1 in this post.

PLM’s Integral Role in Digital Transformation

As usual, Peter Bilello, CIMdata’s President & CEO, kicked off the conference, and his message has not changed over the years. PLM should be understood as a strategic, enterprise-wide approach that manages intellectual assets and connects the entire product lifecycle.

As usual, Peter Bilello, CIMdata’s President & CEO, kicked off the conference, and his message has not changed over the years. PLM should be understood as a strategic, enterprise-wide approach that manages intellectual assets and connects the entire product lifecycle.

I like the image below explaining the WHY behind product lifecycle management.

It enables end-to-end digitalization, supports digital threads and twins, and provides the backbone for data governance, analytics, AI, and skills transformation.

Peter walked us briefly through CIMdata’s Critical Dozen (a YouTube recording is available here), all of which are relevant to the scope of digital transformation. Without strong PLM foundations and governance, digital transformation efforts will fail.

The Digital Thread as the Foundation of the Omniverse

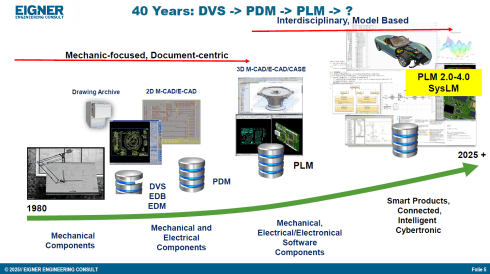

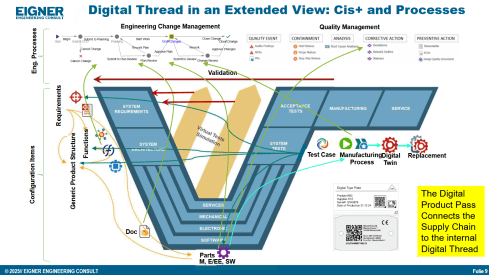

Prof. Dr.-Ing. Martin Eigner, well known for his lifetime passion and vision in product lifecycle management (PDM and PLM tools & methodology), shared insights from his 40-year journey, highlighting the growing complexity and ever-increasing fragmentation of customer solution landscapes.

Prof. Dr.-Ing. Martin Eigner, well known for his lifetime passion and vision in product lifecycle management (PDM and PLM tools & methodology), shared insights from his 40-year journey, highlighting the growing complexity and ever-increasing fragmentation of customer solution landscapes.

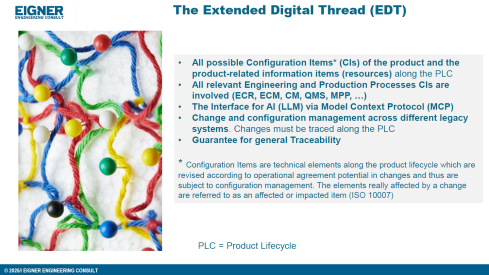

In his current eco-system, ERP (read SAP) is playing a significant role as an execution platform, complemented by PDM or ECTR capabilities. Few of his customers go for the broad PLM systems, and therefore, he stresses the importance of the so-called Extended Digital Thread.

Prof Eigner describes the EDT more precisely as an overlaying infrastructure implemented by a graph database that serves as a performant knowledge graph of the enterprise.

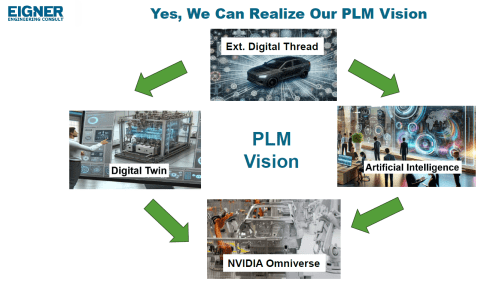

The EDT serves as the foundation for AI-driven applications, supporting impact analysis, change management, and natural-language interaction with product data. The presentation also provides a detailed view of Digital Twin concepts, ranging from component to system and process twins, and demonstrates how twins enhance predictive maintenance, sustainability, and process optimization.

Combined with the NVIDIA Omniverse as the next step toward immersive, real-time collaboration and simulation, enabling virtual factories and physics-accurate visualization. The outlook emphasizes that combining EDT, Digital Twin, AI, and Omniverse moves the industry closer to the original PLM vision: a unified, consistent Single Source of Truth 😮that boosts innovation, efficiency, and ROI.

![]() For me, hearing and reading the term Single Source of Truth still creates discomfort with reality and humanity, so we still have something to discuss.

For me, hearing and reading the term Single Source of Truth still creates discomfort with reality and humanity, so we still have something to discuss.

Semantic Digital Thread for Enhanced Systems Engineering in a Federated PLM Landscape

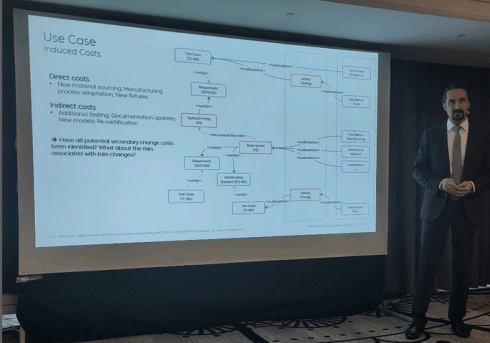

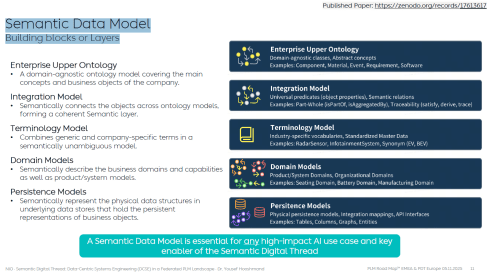

Dr. Yousef Hooshmand‘s presentation was a great continuation of the Extended Digital Thread theme discussed by Dr. Martin Eigner. Where the core of Martin’s EDT is based on traceability between artifacts and processes throughout the lifecycle, Yousef introduced a (for me) totally new concept: starting with managing and structuring the data to manage the knowledge, rather than starting from the models and tools to understand the knowledge.

Dr. Yousef Hooshmand‘s presentation was a great continuation of the Extended Digital Thread theme discussed by Dr. Martin Eigner. Where the core of Martin’s EDT is based on traceability between artifacts and processes throughout the lifecycle, Yousef introduced a (for me) totally new concept: starting with managing and structuring the data to manage the knowledge, rather than starting from the models and tools to understand the knowledge.

It is a fundamentally different approach to addressing the same problem of complexity. During our pre-conference workshop “Shape the future of PLM – together,” I already got a bit familiar with this approach, and Yousef’s recently released paper provides all the details.

All the relevant information can be found in his recent LinkedIn post here.

In his presentation during the conference, Yousef illustrated the value and applicability of the Semantic Digital Thread approach by presenting it in an automotive use case: Impact Analysis and Cost Estimation (image above)

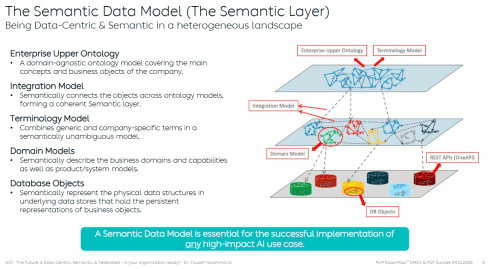

To understand the Semantic Digital Thread, it is essential to understand the Semantic Data Model and its building blocks or layers, as illustrated in the image below:

In addition, such an infrastructure is ideal for AI applications and avoids vendor- or tool lock-in, providing a significant long-term advantage.

I am sure it will take time for us to digest the content if you are entering the domain of a data-driven enterprise (the connected approach) instead of a document-driven enterprise (the coordinated approach).

However, as many of the other presentations on day 1 also stated: “data without context is worthless – then they become just bits and bytes.” For advanced and future scenarios, you cannot avoid working with ontologies, semantic models and graph databases.

However, as many of the other presentations on day 1 also stated: “data without context is worthless – then they become just bits and bytes.” For advanced and future scenarios, you cannot avoid working with ontologies, semantic models and graph databases.

Where is your company on the path to becoming more data-driven?

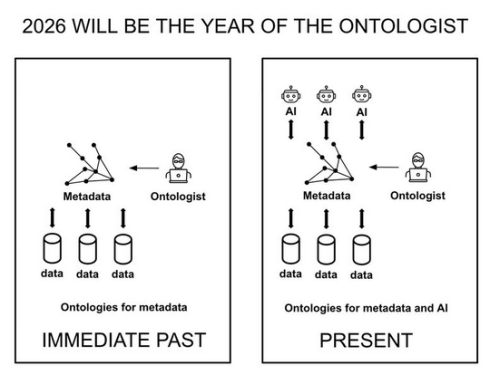

Note: I just saw this post and the image above, which emphasizes the importance of the relationship between ontologies and the application of AI agents.

Evaluation of SysML v2 for use in Collaborative MBSE between OEMs and Suppliers

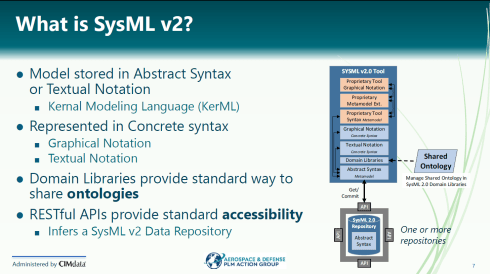

It was interesting to hear Chris Watkins’ speech, which presented the findings from the AD PLM Action Group MBSE Collaboration Working Group on digital collaboration based on SysML v2.

It was interesting to hear Chris Watkins’ speech, which presented the findings from the AD PLM Action Group MBSE Collaboration Working Group on digital collaboration based on SysML v2.

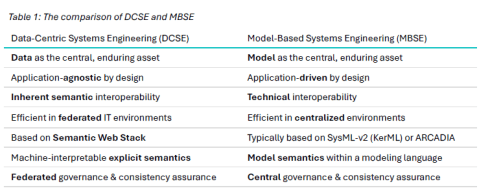

The topic they research is that currently there are no common methods and standards for exchanging digital model-based requirements and architecture deliverables for the design, procurement, and acceptance of aerospace systems equipment across the industry.

The action group explored the value of SysML v2 for data-driven collaboration between OEMs and suppliers, particularly in the early concept phases.

Chris started with a brief explanation of what SysXML v2 is – image below:

As the image illustrates, SysML v2-ready tools allow people to work in their proprietary interfaces while sharing results in common, defined structures and ontologies.

When analyzing various collaboration scenarios, one of the main challenges remained managing changes, the required ontologies, and working in a shared IT environment.

👉You can read the full report here: AD PAG reports: Model-Based Systems Engineering.

An interesting point of discussion here is that, in the report, participants note that, despite calling out significant gaps and concerns, a substantial majority of the industry indicated that their MBSE solution provider is a good partner. At the same time, only a small minority expressed a negative view.

Would Data-Centric Systems Engineering change the discussion? See table 1 below from Yousef’s paper:

An illustration that there was enough food for discussion during the conference.

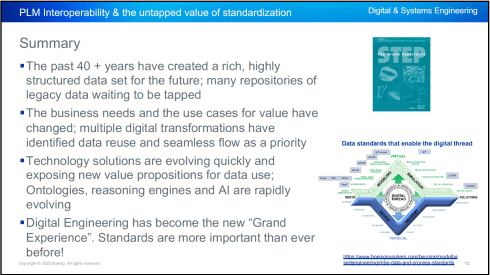

PLM Interoperability and the Untapped Value of 40 Years in Standardization

In the context of collaboration, two sessions fit together perfectly.

First, Kenny Swope from Boeing. Kenny is a longtime Boeing engineering leader and global industrial-data standards expert who oversees enterprise interoperability efforts, chairs ISO/TC 184/SC 4, and mentors youth in technology through 4-H and FIRST programs.

First, Kenny Swope from Boeing. Kenny is a longtime Boeing engineering leader and global industrial-data standards expert who oversees enterprise interoperability efforts, chairs ISO/TC 184/SC 4, and mentors youth in technology through 4-H and FIRST programs.

Kenny shared that over the past 40+ years, the understanding and value of this approach have become increasingly apparent, especially as organizations move toward a digital enterprise. In a digital enterprise, these standards are needed for efficient interoperability between various stakeholders. And the next session was an example of this.

Unlocking Enterprise Knowledge

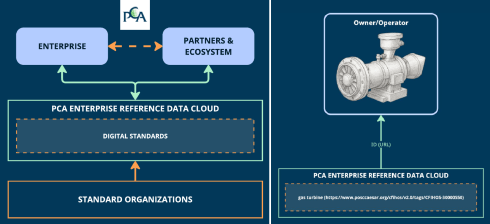

Fredrik Anthonisen, the CTO of the POSC Caesar Association (PCA), started his story about the potential value of efficient standard use.

Fredrik Anthonisen, the CTO of the POSC Caesar Association (PCA), started his story about the potential value of efficient standard use.

According to a Siemens report, “The true costs of downtime” a $1,4 trillion is lost to unplanned downtime.

The root cause is that, most of the time, the information needed to support the MRO activity is inaccessible or incomplete.

Making data available using standards can provide part of the answer, but static documents and slow consensus processes can’t keep up with the pace of change.

Therefore, PCA established the PCA enterprise reference data cloud, where all stakeholders in enterprise collaboration can relate their data to digital exposed standards, as the left side of the image shows.

Fredrik shared a use case (on the right side of the image) as an example. Also, he mentioned that the process for defining and making the digital reference data available to participants is ongoing. The reference data needs to become the trusted resource for the participants to monetize the benefits.

Summary

Day 1 had many more interesting and advanced concepts related to standards and the potential usage of AI.

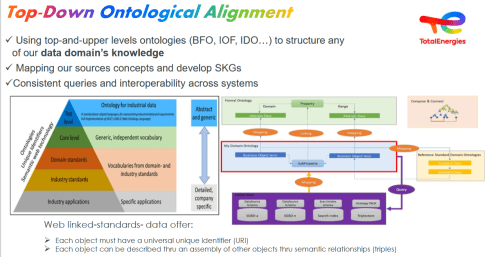

Jean-Charles Leclerc, Head of Innovation & Standards at TotalEnergies, in his session, “Bringing Meaning Back To Data,” elaborated on the need to provide data in the context of the domain for which it is intended, rather than “indexed” LLM data.

Jean-Charles Leclerc, Head of Innovation & Standards at TotalEnergies, in his session, “Bringing Meaning Back To Data,” elaborated on the need to provide data in the context of the domain for which it is intended, rather than “indexed” LLM data.

Very much aligned with Yousef’s statement that there is a need to apply semantic technologies, and especially ontologies, to turn the data into knowledge.

More details can also be found in the “Shape the future of PLM – together” post, where Jean-Charles was one of the leading voices.

The panel discussion at the end of day 1 was free of people jumping on the hype. Yes, benefits are envisioned across the product lifecycle management domain, but to be valuable, the foundation needs to be more structured than it has been in the past.

The panel discussion at the end of day 1 was free of people jumping on the hype. Yes, benefits are envisioned across the product lifecycle management domain, but to be valuable, the foundation needs to be more structured than it has been in the past.

“Reliable AI comes from a foundation that supports knowledge in its domain context.”

Conclusion

For the casual user, day 1 was tough – digital transformation in the product lifecycle domain requires skills that might not yet exist in smaller organizations. Understanding the need for ontologies (generic/domain-specific) and semantic models is essential to benefit from what AI can bring – a challenging and enjoyable journey to follow!

On November 11th, we celebrated our 5th anniversary of the PLM Green Global Alliance (PGGA) with a webinar where ♻️ Jos Voskuil (me) interviewed the five other PGGA core team members about developments and experiences in their focus domain, potentially allowing for a broader discussion.

On November 11th, we celebrated our 5th anniversary of the PLM Green Global Alliance (PGGA) with a webinar where ♻️ Jos Voskuil (me) interviewed the five other PGGA core team members about developments and experiences in their focus domain, potentially allowing for a broader discussion.

In our discussion, we focused on the trends and future directions of the PLM Green Global Alliance, emphasizing the intersection of Product Lifecycle Management (PLM) and sustainability.

Probably, November 11th was not the best day for broad attendance, and therefore, we hope that the recording of this webinar will allow you to connect and comment on this post.

Probably, November 11th was not the best day for broad attendance, and therefore, we hope that the recording of this webinar will allow you to connect and comment on this post.

Enjoy the discussion – watch it, or listen to it, as this time we did not share any visuals in the debate. Still, we hope to get your reflections and feedback on the interview related to the LinkedIn post.

The discussion centered on the trends and future directions of the PLM Green Global Alliance, with a focus on the intersection of Product Lifecycle Management (PLM) and sustainability.

Short Summary

♻️ Rich McFall shared his motivations for founding the alliance, highlighting the need for a platform that connects individuals committed to sustainability and addresses the previously limited discourse on PLM’s role in promoting environmental responsibility. He noted a significant variance in vendor engagement with sustainability, indicating that while some companies are proactive, others remain hesitant.

The conversation delved into the growing awareness and capabilities of how to perform a Life Cycle Assessment (LCA) with ♻️ Klaus Brettschneider, followed by the importance of integrating sustainability into PLM strategies, with ♻️ Mark Reisig discussing the ongoing energy transition and the growing investments in green technologies, particularly in China and Europe.

♻️ Evgeniya Burimskaya raised concerns about implementing circular economy principles in the aerospace industry, emphasizing the necessity of lifecycle analysis and the upcoming digital product passport requirements. The dialogue also touched on the Design for Sustainability initiative, led by ♻️ Erik Rieger, which aims to embed sustainability into the product design phase, necessitating a cultural shift in engineering education to prioritize sustainability.

Conclusion

We concluded with understanding the urgent realities of climate change, but also advocating for an optimistic mindset in the face of challenges – it is perhaps not as bad as it seems in the new media. There are significant investments in green energy, serving as a beacon of hope, which encourage people to remain committed to collaborative efforts in advancing sustainable practices.

We agreed on the long-term nature of behavioral change within organizations and the role of the Green Alliance in fostering this transformation, concluding with a positive outlook on the potential for future generations to drive necessary changes in sustainability.

Together with Håkan Kårdén, we had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please, click on the image for more details.

Together with Håkan Kårdén, we had the pleasure of bringing together 32 passionate professionals on November 4th to explore the future of PLM (Product Lifecycle Management) and ALM (Asset Lifecycle Management), inspired by insights from four leading thinkers in the field. Please, click on the image for more details.

The meeting had two primary purposes.

- Firstly, we aimed to create an environment where these concepts could be discussed and presented to a broader audience, comprising academics, industrial professionals, and software developers. The group’s feedback could serve as a benchmark for them.

- The second goal was to bring people together and create a networking opportunity, either during the PLM Roadmap/PDT Europe conference, the day after, or through meetings established after this workshop.

Personally, it was a great pleasure to meet some people in person whose LinkedIn articles I had admired and read.

The meeting was sponsored by the Arrowhead fPVN project, a project I discussed in a previous blog post related to the PLM Roadmap/PDT Europe 2024 conference last year. Together with the speakers, we have begun working on a more in-depth paper that describes the similarities and the lessons learned that are relevant. This activity will take some time.

Therefore, this post only includes the abstracts from the speakers and links to their presentations. It concludes with a few observations from some attendees.

Reasoning Machines: Semantic Integration in Cyber-Physical Environments

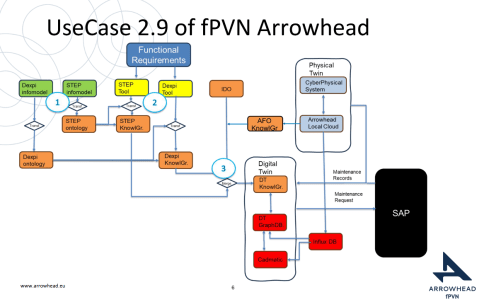

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network

Torbjörn Holm / Jan van Deventer: The presentation discussed the transition from requirements to handover and operations, emphasizing the role of knowledge graphs in unifying standards and technologies for a flexible product value network

The presentation outlines the phases of the product and production lifecycle, including requirements, specification, design, build-up, handover, and operations. It raises a question about unifying these phases and their associated technologies and standards, emphasizing that the most extended phase, which involves operation, maintenance, failure, and evolution until retirement, should be the primary focus.

It also discusses seamless integration, outlining a partial list of standards and technologies categorized into three sections: “Modelling & Representation Standards,” “Communication & Integration Protocols,” and “Architectural & Security Standards.” Each section contains a table listing various technology standards, their purposes, and references. Additionally, the presentation includes a “Conceptual Layer Mapping” table that details the different layers (Knowledge, Service, Communication, Security, and Data), along with examples, functions, and references.

The presentation outlines an approach for utilizing semantic technologies to ensure interoperability across heterogeneous datasets throughout a product’s lifecycle. Key strategies include using OWL 2 DL for semantic consistency, aligning domain-specific knowledge graphs with ISO 23726-3, applying W3C Alignment techniques, and leveraging Arrowhead’s microservice-based architecture and Framework Ontology for scalable and interoperable system integration.

The utilized software architecture system, including three main sections: “Functional Requirements,” “Physical Twin,” and “Digital Twin,” each containing various interconnected components, will be presented. The Architecture includes today several Knowledge Graphs (KG): A DEXPI KG, A STEP (ISO 10303) KG, An Arrowhead Framework KG and under work the CFIHOS Semantics Ontology, all aligned.

👉The presentation: W3C Major standard interoperability_Paris

Beyond Handover: Building Lifecycle-Ready Semantic Interoperability

Jean-Charles Leclerc argued that Industrial data standards must evolve beyond the narrow scope of handover and static interoperability. To truly support digital transformation, they must embrace lifecycle semantics or, at the very least, be designed for future extensibility.

This shift enables technical objects and models to be reused, orchestrated, and enriched across internal and external processes, unlocking value for all stakeholders and managing the temporal evolution of properties throughout the lifecycle. A key enabler is the “pattern of change”, a dynamic framework that connects data, knowledge, and processes over time. It allows semantic models to reflect how things evolve, not just how they are delivered.

By grounding semantic knowledge graphs (SKGs) in such rigorous logic and aligning them with W3C standards, we ensure they are both robust and adaptable. This approach supports sustainable knowledge management across domains and disciplines, bridging engineering, operations, and applications.

Ultimately, it’s not just about technology; it’s about governance.

Being Sustainab’OWL (Web Ontology Language) by Design! means building semantic ecosystems that are reliable, scalable, and lifecycle-ready by nature.

Additional Insight: From Static Models to Living Knowledge

To transition from static information to living knowledge, organizations must reassess how they model and manage technical data. Lifecycle-ready interoperability means enabling continuous alignment between evolving assets, processes, and systems. This requires not only semantic precision but also a governance framework that supports change, traceability, and reuse, turning standards into operational levers rather than compliance checkboxes.

👉The presentation: Beyond Handover – Building Lifecycle Ready Semantic Interoperability

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

The first two presentations had a lot in common as they both come from the Asset Lifecycle Management domain and focus on an infrastructure to support assets over a long lifetime. This is particularly visible in the usage and references to standards such as DEXPI, STEP, and CFIHOS, which are typical for this domain.

How can we achieve our vision of PLM – the Single Source of Truth?

Martin Eigner stated that Product Lifecycle Management (PLM) has long promised to serve as the Single Source of Truth for organizations striving to manage product data, processes, and knowledge across their entire value chain. Yet, realizing this vision remains a complex challenge.

Achieving a unified PLM environment requires more than just implementing advanced software systems—it demands cultural alignment, organizational commitment, and seamless integration of diverse technologies. Central to this vision is data consistency: ensuring that stakeholders across engineering, manufacturing, supply chain, and service have access to accurate, up-to-date, and contextualized information along the Product Lifecycle. This involves breaking down silos, harmonizing data models, and establishing governance frameworks that enforce standards without limiting flexibility.

Emerging technologies and methodologies, such as Extended Digital Thread, Digital Twins, cloud-based platforms, and Artificial Intelligence, offer new opportunities to enhance collaboration and integrated data management.

However, their success depends on strong change management and a shared understanding of PLM as a strategic enabler rather than a purely technical solution. By fostering cross-functional collaboration, investing in interoperability, and adopting scalable architectures, organizations can move closer to a trustworthy single source of truth. Ultimately, realizing the vision of PLM requires striking a balance between innovation and discipline—ensuring trust in data while empowering agility in product development and lifecycle management.

👉The presentation: Martin – Workshop PLM Future 04_10_25

The Future is Data-Centric, Semantic, and Federated … Is your organization ready?

Yousef Hooshmand, who is currently working at NIO as PLM & R&D Toolchain Lead Architect, discussed the must-have relations between a data-centric approach, semantic models and a federated environment as the image below illustrates:

Why This Matters for the Future?

- Engineering is under unprecedented pressure: products are becoming increasingly complex, customers are demanding personalization, and development cycles must be accelerated to meet these demands. Traditional, siloed methods can no longer keep up.

- The way forward is a data-centric, semantic, and federated approach that transforms overwhelming complexity into actionable insights, reduces weeks of impact analysis to minutes, and connects fragmented silos to create a resilient ecosystem.

- This is not just an evolution, but a fundamental shift that will define the future of systems engineering. Is your organization ready to embrace it?

👉The presentation: The Future is Data-Centric, Semantic, and Federated.

Some of first impressions

👉 Bhanu Prakash Ila from Tata Consultancy Services– you can find his original comment in this LinkedIn post

Key points:

- Traditional PLM architectures struggle with the fundamental challenge of managing increasingly complex relationships between product data, process information, and enterprise systems.

- Ontology-Based Semantic Models – The Way Forward for PLM Digital Thread Integration: Ontology-based semantic models address this by providing explicit, machine-interpretable representations of domain knowledge that capture both concepts and their relationships. These lay the foundations for AI-related capabilities.

It’s clear that as AI, semantic technologies, and data intelligence mature, the way we think and talk about PLM must evolve too – from system-centric to value-driven, from managing data to enabling knowledge and decisions.

A quick & temporary conclusion

Typically, I conclude my blog posts with a summary. However, this time the conclusion is not there yet. There is work to be done to align concepts and understand for which industry they are most applicable. Using standards or avoiding standards as they move too slowly for the business is a point of ongoing discussion. The takeaway for everyone in the workshop was that data without context has no value. Ontologies, semantic models and domain-specific methodologies are mandatory for modern data-driven enterprises. You cannot avoid this learning path by just installing a graph database.

Recently, we initiated the Design for Sustainability workgroup, an initiative from two of our PGGA members, Erik Rieger and Matthew Sullivan. You can find a recording of the kick-off here on our YouTube channel.

Recently, we initiated the Design for Sustainability workgroup, an initiative from two of our PGGA members, Erik Rieger and Matthew Sullivan. You can find a recording of the kick-off here on our YouTube channel.

Thanks to the launch of the Design for Sustainability workgroup, we were introduced to Dr. Elvira Rakova, founder and CEO of the startup company Direktin.

Her mission is to build the Digital Ecosystem of engineering tools and simulation for Compressed Air Systems. As typical PLM professionals with a focus on product design, we were curious to learn about developments in the manufacturing space. And it was an interesting discussion, almost a lecture.

Compressed air and Direktin

Dr. Elvira Rakova has been working with compressed air in manufacturing plants for several years, during which she has observed the inefficiency of how compressed air is utilized in these facilities. It is an available resource for all kinds of machines in the plant, often overdimensioned and a significant source of wasted energy.

Dr. Elvira Rakova has been working with compressed air in manufacturing plants for several years, during which she has observed the inefficiency of how compressed air is utilized in these facilities. It is an available resource for all kinds of machines in the plant, often overdimensioned and a significant source of wasted energy.

To address this waste of energy, linked to CO2 emissions, she started her company to help companies scale, dimension, and analyse their compressed air usage. A mix of software and consultancy to make manufacturing processes using compressed air responsible for less carbon emissions, and for the plant owners, saving significant money related to energy usage.

For us, it was an educational discussion, and we recommend that you watch or listen to the next 36 minutes

What I learned

- The use of compressed air and its energy/environmental impact were like dark matter to me.

I never noticed it when visiting customers as a significant source to become more sustainable. - Although the topic of compressed air seems easy to understand, its usage and impact are all tough to address quickly and easily, due to legacy in plants, lack of visibility on compressed air (energy usage) and needs and standardization among the providers of machinery.

- The need for data analysis is crucial in addressing the reporting challenges of Scope 3 emissions, and it is also increasingly important as part of the Digital Product Passport data to be provided. Companies must invest in the digitalization of their plants to better analyze and improve energy usage, such as in the case of compressed air.

- In the end, we concluded that for sustainability, it is all about digital partnerships connecting the design world and the manufacturing world and for that reason, Elvira is personally motivated to join and support the Design for Sustainability workgroup

Want to learn more?

- Another educational webinar: Design Review Culture and Sustainability

- Explore the Direktin website to learn more

Conclusions

The PLM Green Global Alliance is not only about designing products; we have also seen lifecycle assessments for manufacturing, as discussed with Makersite and aPriori. These companies focused more on traditional operations in a manufacturing plant. Through our lecture/discussion on the use of compressed air in manufacturing plants, we identified a new domain that requires attention.

Interesting reflection, Jos. In my experience, the situation you describe is very recognizable. At the company where I work, sustainability…

[…] (The following post from PLM Green Global Alliance cofounder Jos Voskuil first appeared in his European PLM-focused blog HERE.) […]

[…] recent discussions in the PLM ecosystem, including PSC Transition Technologies (EcoPLM), CIMPA PLM services (LCA), and the Design for…

Jos, all interesting and relevant. There are additional elements to be mentioned and Ontologies seem to be one of the…

Jos, as usual, you've provided a buffet of "food for thought". Where do you see AI being trained by a…