Last week I listened to a Dutch podcast that gave me unexpected inspiration. The podcast “Zo Simpel is het Niet” (“It is not that simple” in English) focuses on economic topics and trends. It is not at all about PLM, it sometimes touches on the effects of digital transformation, and AI is mentioned more and more.

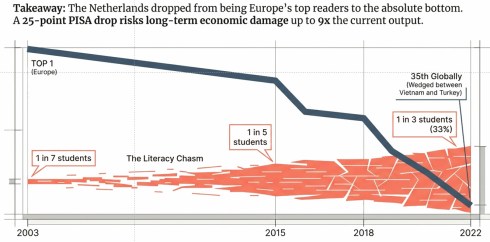

The episode I listened to was about the decline of literacy in the Netherlands. The conclusion is based on the results of the OECD's PISA test, as discussed in the episode. Learn more about the OECD and the PISA test here on this Wiki page.

I asked NotebookLM to make an English slide deck from the podcast – you can download the deck HERE.

The conclusion: We are in a free fall in the Netherlands (image below) and likely in other countries too, because we are reading less, writing less, and consequently thinking less deeply.

Social media was identified as one of the root causes. Short-form content, the endless scroll, the dopamine loop of likes and shares — it is all rewiring how we process information – short-term, quick results without building deeper skills.

And then the connection between social media and the drop in literacy and science skills hit me. We are doing the same thing at the moment with AI!

Third Way of Thinking

In my posts, I sometimes refer to Daniel Kahneman’s book Thinking, Fast and Slow and the research behind it, as this is for me a foundational theory for understanding human behavior.

Kahneman describes our brain as a combination of System 1, which is the fast, intuitive brain (low energy), and System 2, which is the slow, deliberative one (burns energy). As humans, we avoid using energy when thinking, although nowadays, outside our brains, we are hooked on fossil energy 😉.

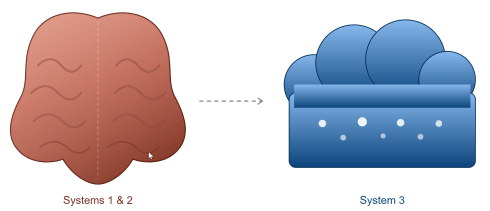

Next, I read research by Steven Shaw and Gideon Nave of The Wharton School of the University of Pennsylvania, indicating that AI is going to have an additional impact on our behavior as humanity.

Their paper Thinking—Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender introduces System 3 as artificial cognition: external, automated, data-driven reasoning that lives not in your brain but in the cloud.

Their central finding is something they call “cognitive surrender” – access to AI made people more confident, regardless of whether the AI was right or wrong. A reinforcement of the Dunning-Kruger effect?

The most vulnerable were people with higher trust in AI, lower need for cognition, and lower fluid intelligence; they showed the greatest cognitive surrender. The least critical thinkers delegate most, and then feel most certain about the result.

Benedict Smith, my True Intelligence friend, pointed to the same pattern in his post: When the Graph talks back. Read it and think!

Both conclusions, combined with the Dutch literacy results, made me even more worried – are we all racing downhill?

I believe our brain is a muscle. Like any muscle, it needs resistance to stay strong. You do not become a better cyclist by riding an eBike everywhere — the motor does the work, and your legs lose the real strength needed when you are without your bike. The same applies to cognitive effort.

We Have Been Here Before

It is not the first time a transformative technology arrived with enormous promise and created a deeply unequal outcome. The Industrial Revolution reduced most workers to resources while a few became extraordinarily wealthy.

Examples are John D. Rockefeller (oil & railroad industries), Andrew Carnegie (steel industry), J.P. Morgan (financing the new industries) and Cornelius Vanderbilt (shipping and railroads).

These industry leaders did not care so much about humans, and it took roughly a hundred years — and the rise of labour unions — to begin correcting that imbalance.

The AI revolution is moving much faster! And if history teaches us anything, it is that working more efficiently with new tools does not automatically raise your value. The more companies invest in AI solutions, the more pressure there will be to develop your individual skills.

Efficiency without insight is a commodity.

This weekend, my friend Helena Guitierrez wrote a supporting post: Preparing for AI Adoption

What does it mean for Product Lifecycle Management?

I purposely wrote Product Lifecycle Management in full to focus on the strategy rather than on an all-around capable PLM system, as PLM systems have never quite delivered on their original promise.

The PLM vendors benefited from selling the dream, the consultants benefited from its complexity, and the users, initially engineers and later more stakeholders in the product lifecycle, often suffered under rigid processes and complex systems. As the systems were designed to store information, user-friendliness was not a priority.

Will AI, being layered on top of PLM and other enterprise systems, be the solution for these underperforming systems?

Oleg Shilovitsky believes so, as you can read in his recent post: PLM’s OpenClaw Moment: How AI Agents Will Break Closed Systems

The risk is that we repeat the same pattern. AI will be positioned as the solution to problems actually caused by poor implementation and insufficient investment in the human side of change.

There is an interesting discussion ongoing about the future of PLM infrastructures, well described recently by Rainer Mewaldt in his post: What would a PLM system look like if it were designed for AI from the ground up?

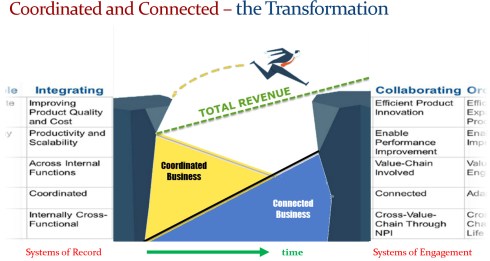

The principles are the same as 10 years ago, before AI became hyped, when we discussed digital transformation as moving from a coordinated infrastructure to a coordinated and connected infrastructure, with a mix of Systems of Record and Systems of Engagement.

I have not seen much progress with the customers I have been working with in the past five to ten years. The change never really happened because it requires new ways of working, different people skills and organizational change.

Will it happen now with the AI wave? A question Ilan Madjar also asked this weekend.

What should we – companies and individuals – actually do?

As an approach, the research from Wharton suggests that using rewards (incentives) and providing feedback can help people stay mentally engaged and avoid disengaging or losing motivation.

In other words, these strategies can combat the tendency to mentally “check out” or stop trying.

When participants were rewarded for accuracy and received immediate feedback, their override rates on faulty AI roughly doubled.

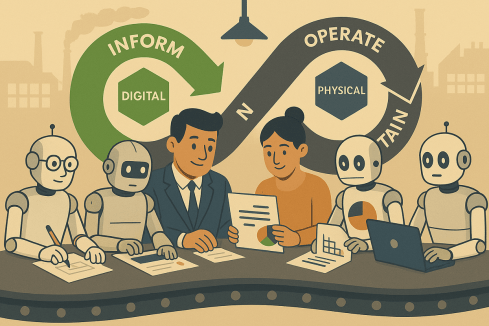

This observation means we should design AI-assisted workflows so that people remain accountable for outcomes and receive clear feedback when AI-assisted decisions go wrong. There is work to do here for startups and existing PLM vendors to develop the best combination of agents and content.

BUT: do not let the AI absorb the accountability while the human takes the credit – System 3!

Companies are in an uncomfortable situation. Before AI became the focus for improving businesses, the most frequently heard statement was:

“Our employees are our assets – they create the value of our company,”

And this is one of the reasons that HR departments exist. Although not all HR departments are there for the employees – their role is to balance the HUMAN RESOURCES in a company – we are still talking about resources.

With AI, the new statement might be

“Our AI-supported employees are our assets, where part of the asset value comes from the AI tools used”.

This raises the question of who will remain as the AI-supported employee. We already see entry-level jobs in any type of business being replaced by AI, creating stress on the job market. This, combined with the reduced mastery of deep skills in math, reading and science observed in the PISA research shared earlier in this post, puts a generation at risk.

Like the winners of the Industrial Revolution, the winners of the AI revolution do not care about humans – they care about profits.

As individuals, we need to keep training our brain muscles without AI where the muscle matters. As the Dutch podcast mentioned: write your first draft before asking Claude to improve it, think through a problem before asking ChatGPT to solve it, and read a book of 100 pages.

In PLM, judgment and contextual reasoning are the core of what people need to do. You should protect the practice of doing that work yourself. Use AI to accelerate and refine, not to replace the effort that builds competence.

Invest in yourself to remain independent. Read broadly — actually read, not skim AI summaries. The people who will remain valuable in an AI-saturated world are not the ones who prompt best, but the ones who can evaluate, challenge, and contextualise what AI produces.

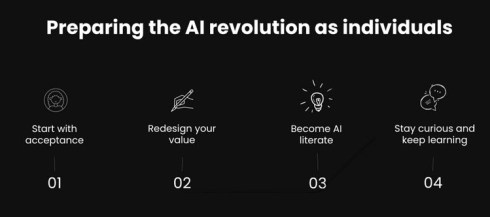

And with that, I want to come back to the post from Helena Guitierrez that I mentioned before, where she focused on what we can do as individuals.

Helena is one of the founders of Share PLM, a company with the purpose BUILDING A HUMAN-CENTERED DIGITAL FUTURE, as written on their website. We both believe that in order to enjoy the AI revolution, we have to invest in ourselves. The image above illustrates the steps to take; click on the image for the full post.

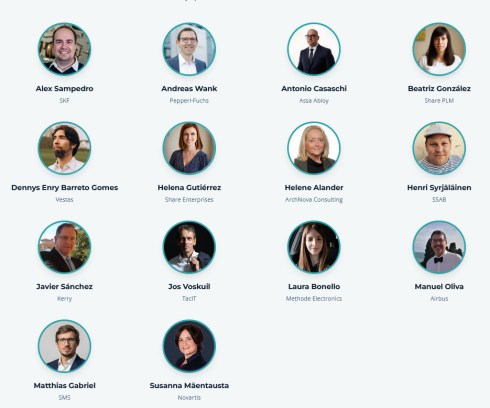

Talking about the human-centered digital future, have a look at the agenda of the upcoming Share PLM Summit on 19-20 May in Jerez de la Frontera (Spain) – our sessions and discussions will, of course, be based on PLM and AI experiences, with the focus on what it means for humans – it will not be a technology conference.

Conclusion

The Wharton researchers close with a question worth asking: “What happens when our judgments are shaped by minds not our own?” Their answer so far: we become more confident, less accurate when the AI is wrong, and largely unaware of the difference – a big risk.

The challenge is not artificial intelligence. The challenge is whether we will remain genuinely intelligent in the presence of it – take care of yourself!

What do you think? I am looking forward to your comments and feedback.
