Let me start with a confession: as a kid, I was a classic nerd, drawn to soccer and exact sciences. Math and physics weren’t just subjects—they were my playground.

During my education to become a teacher in Physics and Mathematics, I discovered something even more captivating: programming. It started with my first Apple IIe, where I tackled the challenge of programming with limited memory using machine language, analogue/digital interfaces, Pascal, and C.

Later, I turned to Visual Basic and C++, writing programs to simulate math scenarios, automate AutoCAD tasks, and eventually develop solutions on top of SmarTeam.

It was not just work; it was how I relaxed and structured my thinking. Would we now call it vibe coding?

The upside of this experience? Technical and physical concepts never intimidated me – they helped me to see the bigger picture. I was wired to think deeply, patiently, and persistently—skills that have stayed with me ever since.

The switch to human

But then I got involved in training and mediating in PLM implementations, where I discovered that technical skills were needed, but understanding human behavior (not software), communication and PLM methodology skills were more important.

Many implementations at that time stalled: everyone started with great enthusiasm, until the results failed to materialize. The solution was not as expected, too unstable or not possible. And from the users’ point of view, it was too complex and frustrating. You can read one of my experiences from that time: Where is my ROI. Mister Voskuil

One of those many interesting discussions

But the budget was often finished, and the enthusiasm was gone. One of my favorite quotes at that time was:

“You never get a second first impression.”

indicating that from the start, you need to anticipate user acceptance, avoid a big bang approach, and understand and agree on the big picture before diving into the details.

How many of you have been in this situation?

Although most people in the PLM community agree that human behavior can make or break a PLM implementation, most discussions still focus on tools and technologies.

Organizational Change Management is often considered too soft to address, particularly in so-called result-driven organizations. Shut up and do the work!

Recently, some PLM software vendors mentioned OCM as an important activity, sometimes even provided by them. Their business model is to sell as many software licenses as possible, and therefore, they promise best-case scenarios and coverage of business scenarios.

Would you buy your PLM software from a company that says:

“Our software is great; however, you also need to address a business change program.”

Or would you buy from: “We are a market leader in your business, and thousands of users are currently working happily with our software.”

I believe, based on my experience as a PLM coach, that every PLM implementation should be a people and business discussion first – preferably sponsored at C-level – before jumping to solutions.

The challenge is that a human-centric approach depends on people and is therefore often hard to scale: it is a people business, not a software tools business.

Digital Transformation is failing

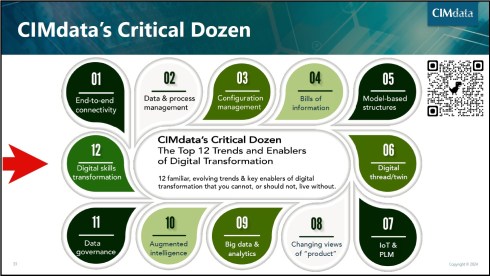

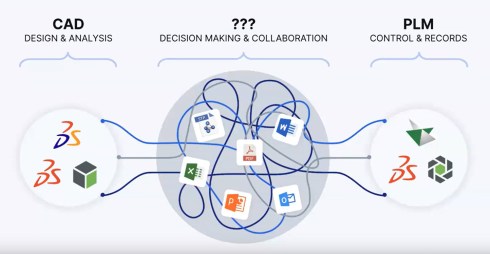

While preparing for the upcoming Share PLM summit in Jerez on May 19-20, I was looking back at why real digital transformation in the PLM domain is still failing – we keep on working mostly in a linear document-driven operating model.

My opinion at this moment: For existing organizations, the move from coordinated to coordinated and connected is too complex for humans.

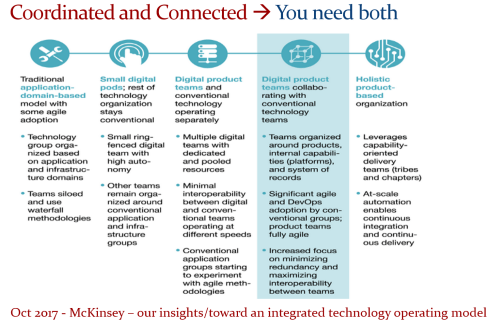

Despite a great white paper from McKinsey on how organisations could move away from a linear, often document-driven organisation to an organisation working in multidisciplinary product teams, there is no real progress in most organisations.

Changing the organizational structure appears to be very difficult. This relates to Conway’s Law, which states that the systems an organization builds mirror its own structure, and that makes it hard to determine where to start.

Not starting means not failing. And failing is the worst thing you can do at the C-level.

And now there is “product memory.”

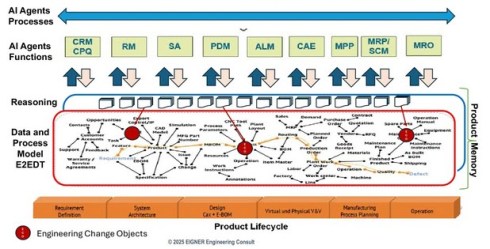

Is “product memory”, based on an agentic AI layer and an underlying ontology, the next big thing after the connected digital enterprise? Initially formulated by Benedict Smith and later translated to a more PLM-specific scope by Martin Eigner and Oleg Shilovitsky, the concept tries to combine the (boring) systems of record data with all the reasoning and decision-making – that’s where the knowledge is sitting.

You can follow the thought experiments when reading the True Intelligence newsletters from the start.

A theme that came up also in other “the future of PLM” discussions was that traditional PLM only stores the results of a development and delivery process, but the reasoning is missing.

In my opinion, Colab Software was one of the first startups complementary to PLM, with a focus on capturing the discussions and decisions during a design review, as the older image below shows. Colab Software is now much more advanced, with an AI-supported infrastructure.

Still, the image shows the value: the reasoning captured from the communication between different stakeholders in the product development process during design reviews.

More in the traditional PLM domain, Martin and Oleg started developing the concept of an agentic AI enterprise driven by a graph-based layer on top of existing enterprise systems, as Martin’s image below illustrates.

Where Oleg stays (for me) more in the traditional PLM enterprise world, e.g., in his post Product Memory Architecture: How PLM Loses Engineering Knowledge and What Comes Next, Martin zoomed in on his day-to-day customer base in Germany when writing his post The Actual Concept of Product Memory based on a Digital Thread, with a vision for the upcoming 5 years.

In addition, less PLM-focused but very data-driven, Jan Bosch wrote a complementary post on his blog about the agentic AI approach: From Copilot to Colleague – the rise of agentic AI.

An interesting quote from this post, valid for us all:

Agent systems require investment in data architecture, workflow mapping, governance frameworks and operational monitoring. Those investments compound. The organization that has deployed agents across its revenue cycle, supply chain and finance operations simultaneously develops deep operational expertise in running agentic systems, which is itself a form of competitive advantage.

And while finalizing this post, there was an interesting discussion related to product memory at The Future of PLM: Introducing Product Memory, organized by Fino, also known as Michael Finocchiaro.

As a “techie,” I was able to enjoy and follow the discussion about a future infrastructure related to product knowledge. The term “product memory” seems a little overhyped, as if information that is not directly accessible through agents is a cause of failure. The big elephant in the room is where and how to start.

Enjoy the dialogue here:

What about a product memory trauma?

In the past, when discussing knowledge graphs, I already posed the question:

“How can knowledge graphs unlearn?”

In the techie world, there was always a hypothetical response to this question, but will it happen in a product memory environment where not everything is 100 percent exact and correct? Patrick Hillberg, one of the few PLM teachers, can educate you all about seemingly small mistakes with a big impact.

During the product memory discussion, I heard a statement that only validated data is allowed to be part of the memory.

Has anyone thought about how utopian this statement is?

The ambitious statement that product memory would lead to a single source of truth is, for me, also a utopia. 100 percent correct data does not exist, nor will 100 percent accurate decisions exist. It will be the most likely truth for the moment.

Now compare this with the human brain: when a serious accident happens, the person involved might be left with a trauma. Then you need a psychiatrist to fix the trauma, meaning creating other memory constructs – rewiring the brain.

While watching this interesting dialogue with Rob Ferrone (the original Product Data PLuMber) about how Quick Release became a significant consultancy firm with a pragmatic focus on making the data flow (old image below), I had a new thought.

With his entrepreneurial skills, Rob might be able to start a new company soon, fixing product memory traumas – as data governance becomes a commodity.

Will the product data plumber become the first product memory shrink?

Conclusion

We are experiencing a fast-moving convergence on future PLM concepts, where the image from Martin Eigner nicely represents a possible architecture based on “product memory”. The challenge I see is whether we will be able to implement such an architecture in a way that is reliable and supported by humans. Because humans still have their old hardware, the limbic brain, which will try to escape from the perfect world with a single source of truth – they like their truth.

This was 2025 – this year, same atmosphere, more experienced & bigger and more to discuss.

Join us here (18) – 19 & 20 May in Jerez
